How the DevOps model will build the new remote workforce

Most importantly, the humans managing systems ultimately determined the

company's capacity to adapt to the pandemic. "We recognized that … the systems

may need to scale, and we may need to make changes to meet a new global

demand, [but] that is much different than how our peers, the people we care

about and work with, are going to be impacted by this," Heckman said. Thus,

the SRE team's role was not just to watch systems and shore up their

reliability, but also to manage communications with other employees, Heckman

said, "not only so they had the current context of what we were thinking,

suggesting and where we were headed, but also to give them some confidence

that the system around them would be fine." Similar principles must be applied

to manage the human impact of a longer-term shift to remote work, said Jaime

Woo, co-founder of Incident Labs, an SRE training and consulting firm, in a

separate presentation at the SRE from Home event. "The answer is not 'just be

stronger,'" for humans during such a transition, any more than it is for

individual components in a distributed computing system under unusual traffic

load, Woo said.

From Defense to Offense: Giving CISOs Their Due

CISOs are now in a position where they must — somehow — reinvent how they work

and how they are perceived within their organizations. Historically, they have

been the company's risk-averse first line of defense against cyberattacks, and

have been viewed as such. But this state of affairs needs to evolve. "CISOs

cannot afford to be seen as blockers of innovation; they must be

problem-solvers," says Kris Lovejoy, EY Global Advisory Cybersecurity Leader,

in EY's report. "The way we've organized cybersecurity is as a

backward-looking function, when it is capable of being a forward-looking,

value-added function. When cybersecurity speaks the language of business, it

takes that critical first step of both hearing and being understood. It starts

to demonstrate value because it can directly tie business drivers to what

cybersecurity is doing to enable them, justifying its spend and

effectiveness." But do current CISOs have the right skills and experience to

work in this new way and serve in a more proactive and forward-thinking role?

That's an open question, and the answer will probably demand a new breed of

CISO whose job is not driven mainly by threat abatement and compliance.

Want an IT job? Look outside the tech industry

It's always been true that most software was written for use, not sale.

Companies might buy their ERP software from SAP and office productivity software

from Microsoft, but they were writing all sorts of software to manage their

supply chain, take care of employees, and more. What wasn't true then, but is

definitely true now, is just how much of that software spend is now focused on

company-defining initiatives, rather than back-office software meant to keep

the lights on. Small wonder, then, that in the past year companies have

posted nearly one million jobs in the US, according to data from Burning Glass,

which scours job postings. That number is expected to increase by more than

30% over the next few years, with non-tech IT jobs set to boom at a 50% faster

clip than IT jobs within tech. As for who is hiring, though tech companies top

the list (arguably one of them isn't really a tech company), the rest of the

top 10 are decidedly non-tech. Digging into the Burning Glass report, and

moving beyond software developer jobs, specifically, and into the broader

category of IT, generally, Professional Services, Manufacturing, and Financial

Services account for roughly half of all IT openings outside tech.

Data protection critical to keeping customers coming back for more

Despite the growing advancements on the data protection front, 51 percent of

consumers surveyed said they are still not comfortable sharing their personal

information. One-third of respondents said they are most concerned about it

being stolen in a breach, with another 26 percent worried about it being

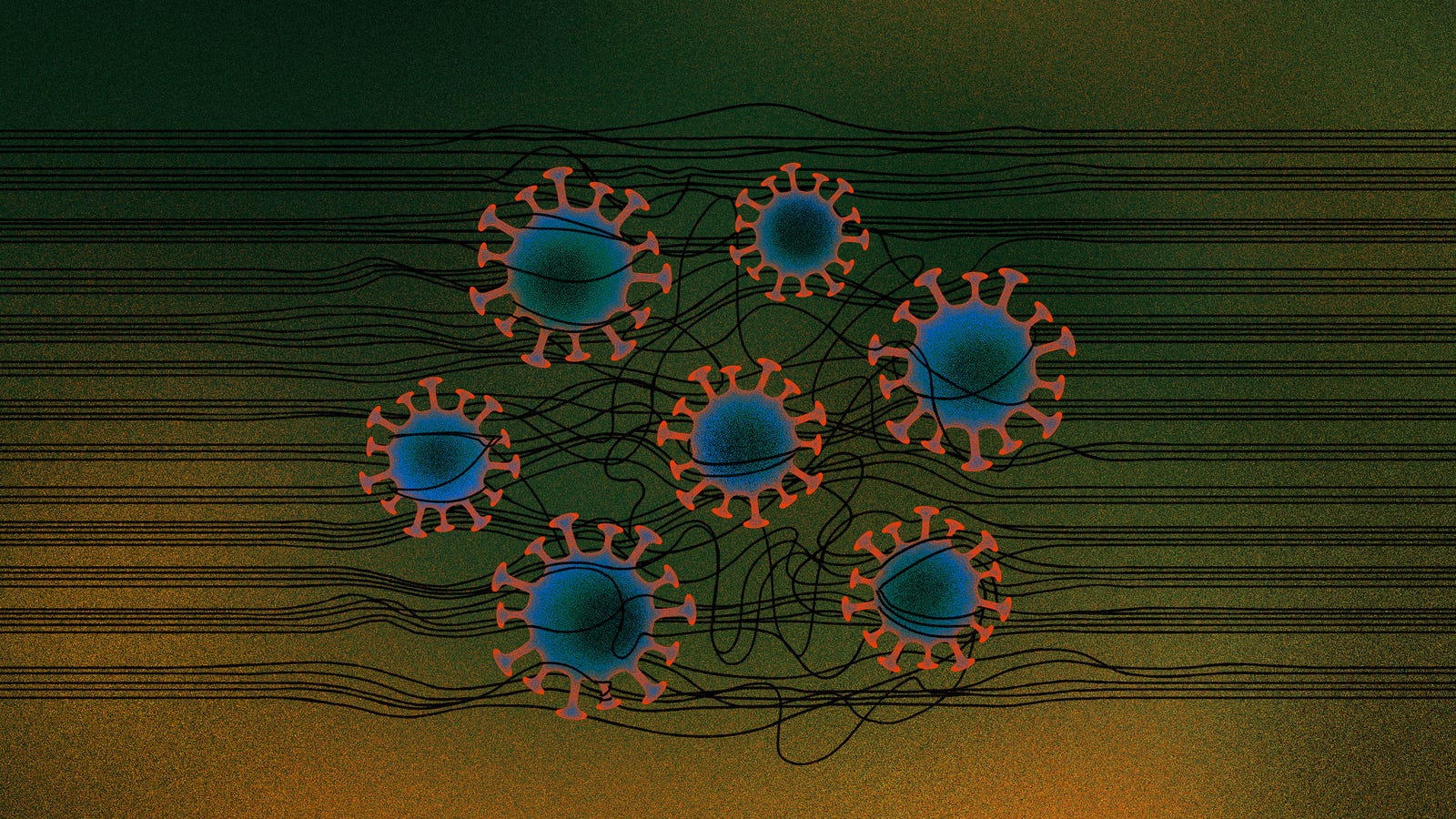

shared with a third party. In the midst of the growing pandemic, COVID-19

tracking, tracing, containment and research depend on citizens opting in to

share their personal data. However, the research shows that consumers are not

interested in sharing their information. When specifically asked about sharing

healthcare data, only 27 percent would share health data for healthcare

advancements and research. Another 21 percent of consumers surveyed would

share health data for contact tracing purposes. As data becomes more valuable

to combat the pandemic, companies must provide consumers with more background

and reasoning as to why they’re collecting data – and how they plan to protect

it. ... As the debate grows louder across the nation, 73 percent of

consumers think that there should be more government oversight at the federal

and/or state/local levels.

The power of open source during a pandemic

The world needs to shift the way it's approaching problems and continue

locating solutions the open source way. Individually, this might mean becoming

connection-oriented problem-solvers. We need people able to think communally,

communicate asynchronously, and consider innovation iteratively. We're seeing

that organizations need to consider technologists less as tradespeople

who build systems and more as experts in global collaboration, people who can

make future-proof decisions on everything from data structures to personnel

processes. Now is the time to start building new paradigms for global

collaboration and find unifying solutions to our shared problems, and one key

element to doing this successfully is our ability to work together across

sectors. A global pandemic needs the public sector, the private sector, and

the nonprofit world to collaborate, each bringing its own expertise to a

common, shared platform. ... The private sector plays a key role in building

this method of collaboration by building a robust social innovation strategy

that aligns with the collective problems affecting us all. This pandemic is a

great example of collective global issues affecting every business around the

world, and this is the reason why shared platforms and effective collaboration

will be key moving forward.

Why Digital Transformation Always Needs To Start With Customers First

A fascinating point regarding Deloitte Insights’ research is the correlation

it uncovered between an organization’s digital transformation maturity and the

benefits it gains in efficiency, revenue growth, product/service quality,

customer satisfaction and employee engagement. They found a hierarchy of

pivots successful enterprises make to keep pursuing more agile, adaptive

organizational structures combined with business model adaptability, all

driven by customer-driven innovation. The most digitally mature organizations

can adopt new frameworks that prioritize market responsiveness and

customer-centricity, and they have an analytics- and data-driven culture with

actionable insights embedded in their DNA. The two highest-payoff areas for accelerating

digital maturity and achieving its many benefits are mastering data and

creating more intelligent workflows. Deloitte Insights’ research team looked

at the seven most effective digital pivots enterprises can make to become more

digitally mature. The pivots that paid off the best as measured by revenue,

margin, customer satisfaction, product/service quality and employee engagement

combined data mastery and improving intelligent workflows.

A searingly honest tech CEO tells the truth about working from home

Morris believes that everyone has what she calls their "Covid Acceptance

Curve." But no two employees' curves are likely to be alike. "Many possible

solutions for one employee are actually counter-indicated for others," she

says. "Think of your team as an overlapping series of waves, each strand

representing a person and their curve. You could try to slot in a single

solution across 'strands,' but it will inevitably miss so many marks, reaching

people too late, too early or with something that isn't even relevant to

them." Some might imagine that one of the particular failings of tech

leadership is the temptation to treat all employees with one broad free lunch.

There, that should please everyone. Now, says Morris of her employees: "Some

are experimenting with how to juggle work and homeschooling, some are

struggling with crippling isolation, some have been impacted by Covid

personally, others are facing anxiety of so many kinds." I wonder whether it

was always this way, but leaders didn't care so much. Each employee has always

been burdened with their own practical and emotional issues not directly

related to work. Now, though, it's the physical distance and the constant,

lonely staring at screens that intensify difficulties -- and leadership's

ability to anticipate or even understand them.

How does open source thrive in a cloud world? "Incredible amounts of trust," says a Grafana VC

Cloud gives enterprises a "get-out-of-the-burden-of-maintaining-open-source

free" card, but savvy engineering teams still want open source so as to "not

lock themselves in and to not create a bunch of technical debt." How does open

source help to alleviate lock-in? Engineering teams can build "a very modular

system so that they can swap in and out components as technology improves,"

something that is "very hard to do with the turnkey cloud

service." That's the technical side of open source, but there's more to

it than that, Gupta noted. Referring to how Elastic ate away at Splunk's

installed base, Gupta said, "The biggest reason...is there is a deep amount of

developer love and appreciation and almost like an addiction to the [open

source] product." This developer love is deeper than just liking to use a

given technology: "You develop [it] by being able to feel it and understand

the open source technology and be part of a community." Is it impossible

to achieve this community love with a proprietary product? No, but "It's a lot

easier to build if you're open source." He went on, "When you're a black box

cloud service and you have an API, that's great. People like Twilio, but do

they love it?"

Is Low-Code Or No-Code Development Suitable For Your Startup App Idea?

Speed and adaptability are key ingredients in every product development phase

of a startup. Assume it will take you four months to create and launch the

first version of your product. You spoke with potential customers, gathered

and implemented their feedback to create the best solution you could build

based on the information you have. If those potential customers need your

solution, they will be looking forward to it. And if they committed

financially, they’re going to be even more eager to use it. The truth is that

in a competitive market where buyers have many options, eagerness and patience

are two different things. The customers may wish to use your product sooner

rather than later, but they will not wait for it. Even if they don’t have better

options today, they will figure out an alternative solution. Now assume you

launched your product, served the first customers and gathered some more

critical feedback. Your customers will not wait months for those changes, no

matter how important your product is for them. Speed and adaptability can make

or break a startup.

Tackle.io's Experience With Monitoring Tools That Support Serverless

Tackle runs microservices in managed containers on AWS Fargate, deploys its

front end on Amazon CloudFront, and uses Amazon DynamoDB for its database, Woods

says. “We’ve spent a lot of time making sure that our architecture is something

scalable and allows us to provide value to our customers without interruption,”

he says. Tackle’s clientele includes software and SaaS companies such as GitHub,

PagerDuty, New Relic, and HashiCorp. Despite the benefits, Woods says running

serverless can introduce such issues as trying to find obscure failures with

APIs. “Once you adopt serverless, you’ll have a chain of Lambda functions

calling each other,” he says. “You know that somewhere in that process was an

error. Tracing it is really difficult with the tools provided out of the box.”

Before adopting Sentry, Tackle spent a lot of engineering hours trying to

discover the root cause of problems, Woods says, such as why a notification was

not sent to a customer. “It might take half a day to get an answer on that.”

Tackle adopted Sentry’s technology initially to get back traces on such errors.

Woods says his company soon discovered Sentry also sends alerts for failures

Tackle was not aware of in its web app.
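
The tracing problem Woods describes, an error somewhere in a chain of functions calling each other, can be illustrated with a small sketch. This is not Tackle's code or Sentry's API. It is a minimal Python illustration that assumes each function passes a shared correlation ID along with its event, and that plain function calls stand in for real Lambda invocations. All names here (StepError, traced, handle_order, build_message, send_notification) are hypothetical.

```python
import uuid


class StepError(Exception):
    """Raised when a step in the chain fails; carries tracing context."""

    def __init__(self, step, correlation_id, cause):
        super().__init__(f"[{correlation_id}] step '{step}' failed: {cause}")
        self.step = step
        self.correlation_id = correlation_id


def traced(step):
    """Wrap a handler so a failure is tagged with the step name and correlation ID."""
    def decorator(handler):
        def wrapper(event):
            # Reuse the caller's ID if present; otherwise this is the first hop.
            event.setdefault("correlation_id", str(uuid.uuid4()))
            try:
                return handler(event)
            except StepError:
                raise  # already tagged by the step that actually failed
            except Exception as exc:
                raise StepError(step, event["correlation_id"], exc) from exc
        return wrapper
    return decorator


@traced("send_notification")
def send_notification(event):
    # Hypothetical failure: the customer record has no email address.
    if not event.get("email"):
        raise ValueError("customer has no email address")
    return {"status": "sent", "correlation_id": event["correlation_id"]}


@traced("build_message")
def build_message(event):
    # Forward the whole event, including correlation_id, to the next hop.
    return send_notification({**event, "body": "Your order shipped"})


@traced("handle_order")
def handle_order(event):
    return build_message(event)
```

Calling handle_order with an event that lacks an email raises a StepError naming send_notification as the failing step and carrying the same correlation ID that entered the chain. That is the kind of answer that, per Woods, could otherwise take half a day to find.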

Quote for the day:

"You can't lead anyone else further than you have gone yourself." -- Gene Mauch