Quote for the day:

"It is during our darkest moments that we must focus to see the light." -- Aristotle Onassis

Machine unlearning gets a practical privacy upgrade

Machine unlearning, which refers to strategies for removing the influence of
specific training data from a model, has emerged to fill the gap. But until now,
most approaches have either been slow and costly or fast but lacking formal
guarantees. A new framework called Efficient Unlearning with Privacy Guarantees
(EUPG) tries to solve both problems at once. Developed by researchers at the
Universitat Rovira i Virgili in Catalonia, EUPG offers a practical way to forget
data in machine learning models with provable privacy protections and a lower
computational cost. Rather than wait for a deletion request and then scramble to
rework a model, EUPG starts by preparing the model for unlearning from the
beginning. The idea is to first train on a version of the dataset that has been
transformed using a formal privacy model, either k-anonymity or differential
privacy. This “privacy-protected” model doesn’t memorize individual records, but
still captures useful patterns. ... The researchers acknowledge that extending
EUPG to large language models and other foundation models will require further
work, especially given the scale of the data and the complexity of the
architectures involved. They suggest that for such systems, it may be more
practical to apply privacy models directly to the model parameters during
training, rather than to the data beforehand.
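
To make the "transform the data first" step concrete, here is a minimal sketch of one common way to reach k-anonymity on a single numeric attribute: microaggregation, where each value is replaced by the mean of a group of at least k similar values. This is an illustration only, not the EUPG implementation; the function name `microaggregate` and the sample ages are assumptions made for the example.

```python
from statistics import mean


def microaggregate(values, k):
    """Replace each value with the mean of a group of at least k sorted neighbours.

    Illustrative only: a real pipeline would handle several attributes at once.
    """
    if k < 1 or len(values) < k:
        raise ValueError("need at least k values")
    # Indices of the records, ordered by the attribute being protected.
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start + k
        # Fold a short tail into the current group so no group is smaller than k.
        if len(order) - end < k:
            end = len(order)
        group = order[start:end]
        centroid = mean(values[i] for i in group)
        for i in group:
            result[i] = centroid
        start = end
    return result


# Hypothetical sample data: seven ages, protected with k = 3.
ages = [23, 25, 31, 35, 38, 52, 58]
protected = microaggregate(ages, k=3)
print(protected)
```

Every released value is shared by at least three records, so a model trained on `protected` sees group-level patterns rather than any one person's exact age.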

Emerging Cloaking-as-a-Service Offerings are Changing Phishing Landscape

Cloaking-as-a-service offerings – increasingly powered by AI – are “quietly
reshaping how phishing and fraud infrastructure operates, even if it hasn’t
yet hit mainstream headlines,” SlashNext’s Research Team wrote Thursday. “In
recent years, threat actors have begun leveraging the same advanced
traffic-filtering tools once used in shady online advertising, using
artificial intelligence and clever scripting to hide their malicious payloads
from security scanners and show them only to intended victims.” ... The newer
cloaking services offer advanced detection evasion techniques, such as
JavaScript fingerprinting, device and network profiling, machine learning
analysis and dynamic content swapping, and put them into user-friendly
platforms that hackers and anyone else can subscribe to, SlashNext researchers
wrote. “Cybercriminals are effectively treating their web infrastructure with
the same sophistication as their malware or phishing emails, investing in
AI-driven traffic filtering to protect their scams,” they wrote. “It’s an arms
race where cloaking services help attackers control who sees what online,
masking malicious activity and tailoring content per visitor in real time.
This increases the effectiveness of phishing sites, fraudulent downloads,
affiliate fraud schemes and spam campaigns, which can stay live longer and
snare more victims before being detected.”

You’re Not Imagining It: AI Is Already Taking Tech Jobs

It’s difficult to pinpoint the exact motivation behind job cuts at any given
company. The overall economic environment could also be a factor, marked by
uncertainties heightened by President Donald Trump’s erratic tariff plans.
Many companies also became bloated during the pandemic, and recent layoffs
could still be trying to correct for overhiring. According to one report
released earlier this month by the executive coaching firm Challenger, Gray
and Christmas, AI may be more of a scapegoat than a true culprit for layoffs:
Of more than 286,000 planned layoffs this year, only 20,000 were related to
automation, and of those, only 75 were explicitly attributed to artificial
intelligence, the firm found. Plus, it’s challenging to measure productivity
gains caused by AI, said Stanford’s Chen, because while not every employee may
have AI tools officially at their disposal at work, they do have unauthorized
consumer versions that they may be using for their jobs. While the technology
is beginning to take a toll on developers in the tech industry, it’s actually
“modestly” created more demand for engineers outside of tech, said Chen.
That’s because other sectors, like manufacturing, finance, and healthcare, are
adopting AI tools for the first time, so they are adding engineers to their
ranks in larger numbers than before, according to her research.
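
To put the Challenger figures in proportion, the shares can be worked out directly. The short calculation below uses only the three numbers quoted above. Because the total is given as "more than 286,000", the shares of all layoffs are upper bounds rather than exact values. The variable names are chosen for this example.

```python
# Figures quoted in the excerpt; the total is a lower bound ("more than").
planned_layoffs = 286_000
automation_related = 20_000
attributed_to_ai = 75

# Share of all planned layoffs tied to automation (at most this value).
automation_share = automation_related / planned_layoffs
# Share of automation-related layoffs explicitly attributed to AI.
ai_share_of_automation = attributed_to_ai / automation_related
# Share of all planned layoffs explicitly attributed to AI (at most this value).
ai_share_of_all = attributed_to_ai / planned_layoffs

print(f"automation / all layoffs: {automation_share:.2%}")
print(f"AI / automation layoffs:  {ai_share_of_automation:.3%}")
print(f"AI / all layoffs:         {ai_share_of_all:.4%}")
```

In round terms, automation accounts for about 7 percent of the planned layoffs, and layoffs explicitly attributed to AI come to under 0.03 percent of the total.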

The architecture of culture: People strategy in the hospitality industry

Rewards and recognitions are the visible tip of the iceberg, but culture sits
below the surface. And if there’s one thing that I’ve learned over the years,
it’s that culture only sticks when it’s felt, not just said. Not once a year,
but every single day. Hilton’s consistent recognition as a Great Place to
Work® globally and in India stems from our unwavering support and commitment
to helping people thrive, both personally and professionally. ... What has
sustained our culture through this growth is a focus on the everyday. It is
not big initiatives alone that shape how people feel at work, but the smaller,
consistent actions that build trust over time. Whether it is how a team huddle
is run, how feedback is received, or how farewells are handled, we treat each
moment as an opportunity to reinforce care and connection. ... Equally vital
is cultivating culturally agile, people-first leaders. South Asia’s diversity,
across language, faith, generation, and socio-economic background, demands
leadership that is both empathetic and inclusive. We’re working to embed this
cultural intelligence across the employee journey, from hiring and onboarding
to ongoing development and performance conversations, so that every team
member feels genuinely seen and supported.

Capturing carbon - Is DAC a perfect match for data centers?

The commercialization of DAC, however, faces several significant challenges. One
primary obstacle is navigating different compliance requirements across
jurisdictions. Certification standards vary significantly between regions like
Canada, the UK, and Europe, necessitating differing approaches in each
jurisdiction. However, while requiring adjustments, Chadwick argues that these
differences are not insurmountable and are merely part of the scaling process.
Beyond regulatory and deployment concerns, achieving cost reductions is a
significant challenge. DAC remains highly expensive, costing an average of $680
per ton to produce in 2024, according to Supercritical, a carbon removal
marketplace. In comparison, biochar has an average price of $165 per ton, and
enhanced rock weathering has an average price of $310 per ton. In addition, the
complexity of DAC means up-front costs are much higher than those of alternative
forms of carbon removal. An average DAC unit comprises air-intake manifolds,
absorption and desorption towers, liquid-handling tanks, and bespoke
site-specific engineering. DAC also requires significant amounts of power to
operate. Recent studies have shown that the energy consumption of fans in DAC
plants can range from 300 to 900 kWh per ton of CO2 captured, which represents
between 20 and 40 percent of total DAC system energy usage.
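
The cost and energy figures above lend themselves to a quick back-of-the-envelope check. The sketch below computes how many times more expensive DAC is than the two alternatives, and the loosest bounds on total system energy implied by the fan figures. The excerpt does not say how the fan-energy range pairs with the percentage range, so the energy bounds rest on an assumption and should be read as rough limits only. All variable names are chosen for this example.

```python
# Average 2024 prices per ton, as quoted from Supercritical in the excerpt.
dac_cost = 680
biochar_cost = 165
rock_weathering_cost = 310

# How many times more expensive DAC is than each alternative.
dac_vs_biochar = dac_cost / biochar_cost
dac_vs_rock_weathering = dac_cost / rock_weathering_cost

# Fan energy per ton of CO2 and its share of total system energy, as quoted.
fan_kwh_low, fan_kwh_high = 300, 900
fan_share_low, fan_share_high = 0.20, 0.40

# ASSUMPTION: the two ranges may pair in any combination, so take the widest
# possible interval for total energy per ton.
total_kwh_min = fan_kwh_low / fan_share_high
total_kwh_max = fan_kwh_high / fan_share_low

print(f"DAC vs biochar: {dac_vs_biochar:.2f}x")
print(f"DAC vs enhanced rock weathering: {dac_vs_rock_weathering:.2f}x")
print(f"Implied total energy: {total_kwh_min:.0f} to {total_kwh_max:.0f} kWh per ton")
```

On these numbers DAC costs roughly four times as much per ton as biochar and a little over twice as much as enhanced rock weathering.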

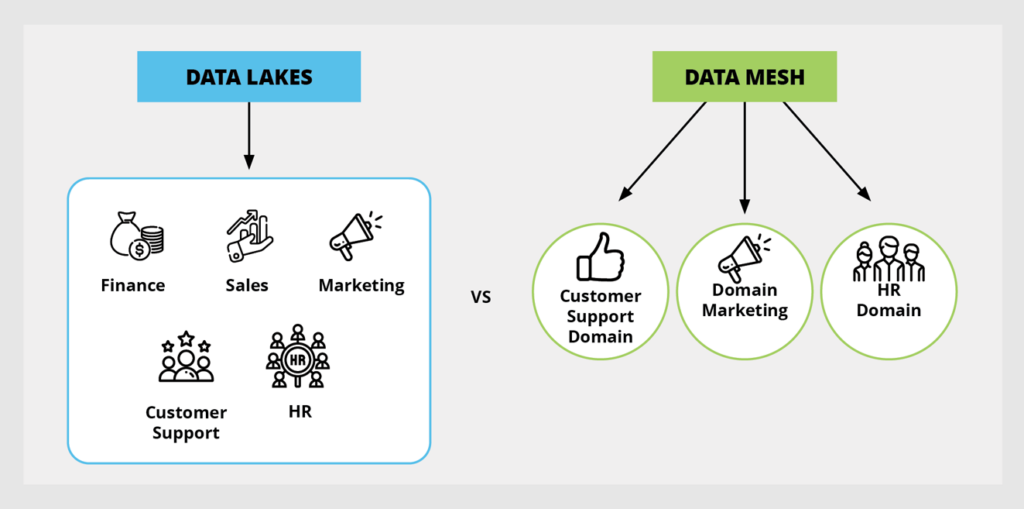

Rethinking Risk: The Role of Selective Retrieval in Data Lake Strategies

Selective retrieval works because it bridges the gap between data engineering
complexity and security usability. It gives teams options without asking them to
reinvent the wheel. It also avoids the need to bring in external tools during a
breach investigation, which can introduce latency, complexity, or worse, gaps in
the chain of custody. What’s compelling about this approach is that it doesn’t
require businesses to abandon existing tools or re-architect their
infrastructure. ... This model is especially relevant for mid-size IT teams who
want to cover their audit requirements, but don’t have a 24/7 security
operations center. It’s also useful in regulated sectors such as healthcare,
financial services, and manufacturing where data retention isn’t optional, but
real-time analysis for everything isn’t practical. ... Data volumes are
continuing to rise. As organizations face high costs and fatigue, those that
thrive will be the ones that treat storage and retrieval as distinct functions.
The ability to preserve signal without incurring ongoing noise costs will become
a critical enabler for everything from insider threat detection to regulatory
compliance. Selective retrieval isn’t just about saving money. It’s about
regaining control over data sprawl, aligning IT resources with actual risk, and
giving teams the tools they need to ask, and answer, better questions.

Manufactured Madness: How To Protect Yourself From Insane AIs

The core of the problem lies in a well-intentioned but flawed premise: that we
can and should micromanage an AI’s output to prevent any undesirable outcomes.
These “guardrails” are complex sets of rules and filters designed to stop the
model from generating hateful, biased, dangerous, or factually incorrect
information. In theory, this is a laudable goal. In practice, it has created a
generation of AIs that prioritize avoiding offense over providing truth. ...
Compounding the problem of forced outcomes is a crisis of quality. The data
these models are trained on is becoming increasingly polluted. In the early
days, models were trained on a vast, curated slice of the pre-AI internet. But
now, as AI-generated content inundates every corner of the web, new models are
being trained on the output of their predecessors. ... Given this landscape, the
burden of intellectual safety now falls squarely on the user. We can no longer
afford to treat AI-generated text with passive acceptance. We must become
active, critical consumers of its output. Protecting yourself requires a new
kind of digital literacy. First and foremost: Trust, but verify. Always. Never
take a factual claim from an AI at face value. Whether it’s a historical date, a
scientific fact, a legal citation, or a news summary, treat it as an unconfirmed
rumor until you have checked it against a primary source.

6 Key Lessons for Businesses that Collect and Use Consumer Data

Ensure your privacy notice properly discloses consumer rights, including the right to access, correct, and delete personal data stored and collected by businesses, and the right to opt out of the sale of personal data and targeted advertising. Mechanisms for exercising those rights must work properly, with a process in place to ensure a timely response to consumer requests. ... Another issue that the Connecticut AG raised was that the privacy notice was “largely unreadable.” While privacy notices address legal rights and obligations, you should avoid using excessive legal jargon to the extent possible and use clear, simple language to notify consumers about their rights and the mechanisms for exercising those rights. In addition, be as succinct as possible to help consumers locate the information they need to understand and exercise applicable rights. ... The AG provided guidance that under the CTDPA, if a business uses cookie banners to permit a consumer to opt out of some data processing, such as targeted advertising, the consumer must be provided with a symmetrical choice. In other words, it has to be as clear and as easy for the consumer to opt out of such use of their personal data as it would be to opt in. This includes making the options to accept all cookies and to reject all cookies visible on the screen at the same time and in the same color, font, and size.

How agentic AI Is reshaping execution across BFSI

Several BFSI firms are already deploying agentic models within targeted areas of
their operations. The results are visible in micro-interventions that improve
process flow and reduce manual load. Autonomous financial advisors, powered by
agentic logic, are now capable of not just reacting to user input, but
proactively monitoring markets, assessing customer portfolios, and recommending
real-time changes. In parallel, agentic systems are transforming customer
service by acting as intelligent finance assistants, guiding users through
complex processes such as mortgage applications or claims filing. ... For
Agentic AI to succeed, it must be integrated into operational strategy. This
begins by identifying workflows where progress depends on repetitive human
actions that follow predictable logic. These are often approval chains,
verifications, task handoffs, and follow-ups. Once identified, clear rules need
to be defined. What conditions trigger an action? When is escalation required?
What qualifies as a closed loop? The strength of an agentic system lies in its
ability to act with precision, but that depends on well-designed logic and
relevant signals. Data access is equally important. Agentic AI systems require
context. That means drawing from activity history, behavioural cues, workflow
states and timing patterns.
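
The three questions above (what triggers an action, when to escalate, what counts as a closed loop) map naturally onto a small rule structure. The following is a minimal sketch under stated assumptions, not a description of any vendor's system: the `Rule` class, the `step` function, the field names in the case dictionary, and the thresholds are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable

Case = dict  # hypothetical workflow state: history, counters, flags


@dataclass
class Rule:
    name: str
    trigger: Callable[[Case], bool]           # what conditions trigger an action?
    needs_escalation: Callable[[Case], bool]  # when is escalation required?
    is_closed: Callable[[Case], bool]         # what qualifies as a closed loop?


def step(rule: Rule, case: Case) -> str:
    """Decide the next move for one case: 'closed', 'wait', 'escalate' or 'act'."""
    if rule.is_closed(case):
        return "closed"
    if not rule.trigger(case):
        return "wait"
    if rule.needs_escalation(case):
        return "escalate"
    return "act"


# Hypothetical follow-up rule for a pending document request.
followup = Rule(
    name="document follow-up",
    trigger=lambda c: c["days_since_request"] >= 3,
    needs_escalation=lambda c: c["reminders_sent"] >= 2,
    is_closed=lambda c: c["documents_received"],
)

case = {"days_since_request": 4, "reminders_sent": 0, "documents_received": False}
print(step(followup, case))
```

Keeping the three conditions as separate, named predicates makes each rule easy to review and change without touching the logic that applies it.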

Open Source Is Too Important To Dilute

The unfortunate truth is that these criteria don’t apply in every use case.
We’ve seen vendors build traction with a truly open project. Then, worried about
monetization or competition, they relicense it under a “source-available” model
with restrictions, like “no commercial use” or “only if you’re not a
competitor.” But that’s not how open source works. Software today is deeply
interconnected. Every project, no matter how small or isolated, relies on
dependencies, which rely on other dependencies, all the way down the chain. A
license that restricts one link in that chain can break the whole thing. ...
Forks are how the OSS community defends itself. When HashiCorp relicensed
Terraform under the Business Source License (BSL), blocking competitors from
building on the tooling, the community launched OpenTofu, a fork under an
OSI-approved license, backed by major contributors and vendors. Redis’
transition away from Berkeley Software Distribution (BSD) to a proprietary
license was a business decision. But it left a hole, and the community forked
it. That fork became Valkey, a continuation of the project stewarded by the
people and platforms who relied on it most. ... The open source brand took
decades to build. It’s one of the most successful, trusted ideas in software
history. But it’s only trustworthy because it means something.
