Generative AI will turn cybercriminals into better con artists. AI will help attackers to craft well-written, convincing phishing emails and websites in different languages, enabling them to widen the nets of their campaigns across locales. We expect to see the quality of social engineering attacks improve, making lures more difficult for targets and security teams to spot. As a result, we may see an increase in the risks and harms associated with social engineering – from fraud to network intrusions. ... AI is driving the democratisation of technology by helping less skilled users to carry out more complex tasks more efficiently. But while AI improves organisations’ defensive capabilities, it also has the potential for helping malicious actors carry out attacks against lower system layers, namely firmware and hardware, where attack efforts have been on the rise in recent years. Historically, such attacks required extensive technical expertise, but AI is beginning to show promise to lower these barriers. This could lead to more efforts to exploit systems at the lower level, giving attackers a foothold below the operating system and the industry’s best software security defences.

Get the Value Out of Your Data

A robust data strategy should have clearly defined outcomes and measurements in place to trace the value it delivers. However, it is important to acknowledge the need for flexibility during the strategic and operational phases. Consequently, defining deliverables becomes crucial to ensure transparency in the delivery process. To achieve this, adopting a data product approach focused on iteratively delivering value to your organization is recommended. The evolution of DevOps, supported by cloud platform technology, has significantly improved the software engineering delivery process by automating development and operational routines. Now, we are witnessing a similar agile evolution in the data management area with the emergence of DataOps. DataOps aims to enhance the speed and quality of data delivery, foster collaboration between IT and business teams, and reduce the associated time and costs. By providing a unified view of data across the organization, DataOps enables faster and more confident data-driven decision-making while keeping data accurate, current, and secure. It automates and brings transparency to the measurements required for agile delivery through data product management.

Exposure to new workplace technologies linked to lower quality of life

Part of the problem is that IT workers need to stay updated with the newest tech trends and figure out how to use them at work, said Ryan Smith, founder of the tech firm QFunction, also unconnected with the study. The hard part is that new tech keeps coming in, and workers have to learn it, set it up, and help others use it quickly, he said. “With the rise of AI and machine learning and the uncertainty around it, being asked to come up to speed with it and how to best utilize it so quickly, all while having to support your other numerous IT tasks, is exhausting,” he added. “On top of this, the constant fear of layoffs in the job market forces IT workers to keep up with the latest technology trends in order to stay employable, which can negatively affect their quality of life.” ... “As IT has become the backbone of many businesses, that backbone is key to the businesses operations, and in most cases revenue,” he added. “That means it’s key to the business’s survival. IT teams now must be accessible 24 hours a day. In the face of a problem, they are expected to work 24 hours a day to resolve it. ...”

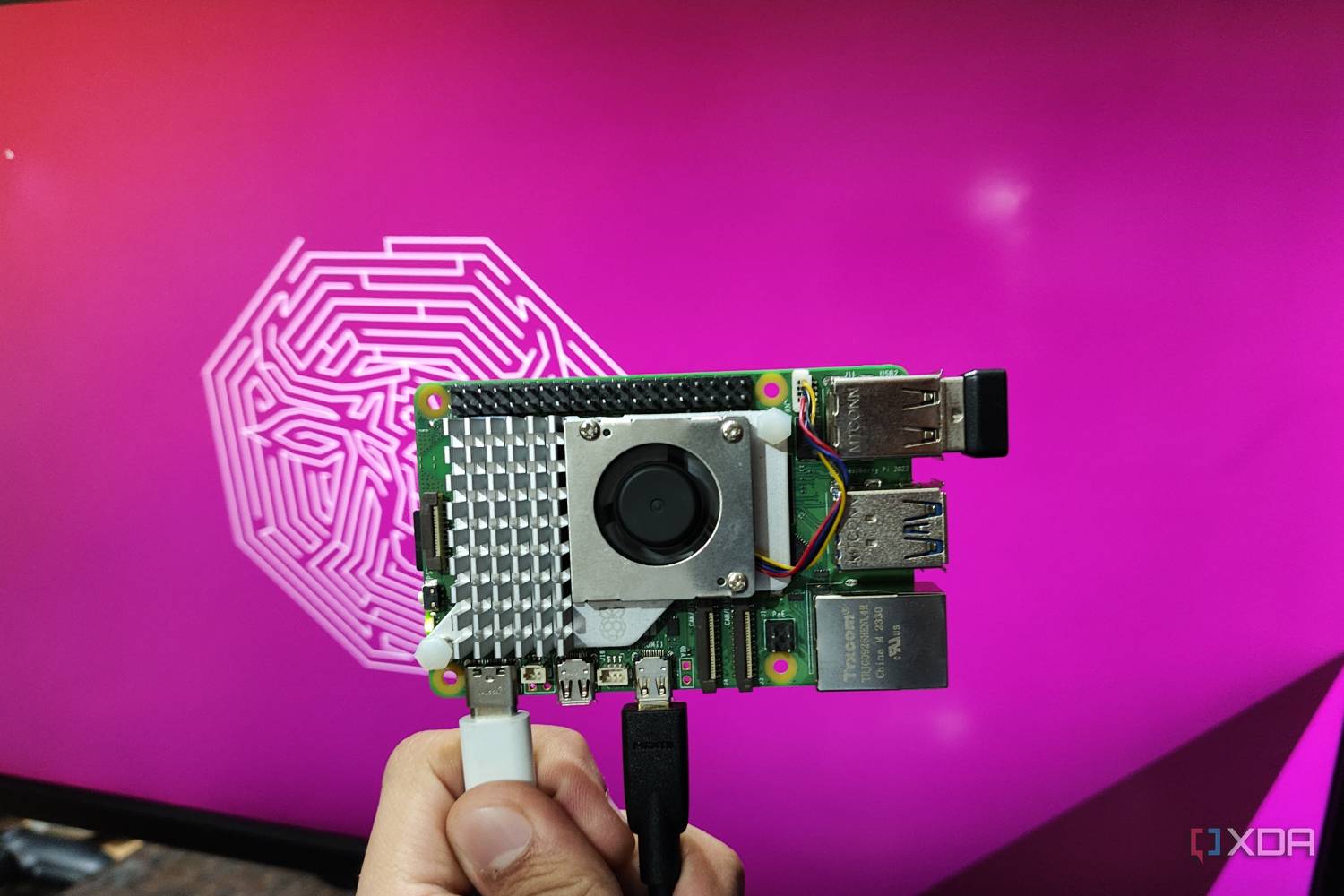

6 best operating systems for Raspberry Pi 5

Even though it has been nearly seven years since Microsoft debuted Windows on Arm, there has been a noticeable lack of Arm-powered laptops. The situation is even worse for SBCs like the Raspberry Pi, which aren’t even on Microsoft’s radar. Luckily, the talented team at the WoR project managed to find a way to install Windows 11 on Raspberry Pi boards. ... Finally, we have the Raspberry Pi OS, which has been developed specifically for the RPi boards. Since its debut in 2012, the Raspberry Pi OS (formerly Raspbian) has become the operating system of choice for many RPi board users. Since it was hand-crafted for the Raspberry Pi SBCs, it’s faster than Ubuntu and light years ahead of Windows 11 in terms of performance. Moreover, most projects tend to favor Raspberry Pi OS over the alternatives, so you may run into compatibility and stability issues if you use any other operating system to replicate the projects created by the lively Raspberry Pi community. You won’t be disappointed with the Raspberry Pi OS if you prefer a more minimalist UI. That said, despite including pretty much everything you need to make the most of your RPi SBC, the Raspberry Pi OS isn't as user-friendly as Ubuntu.

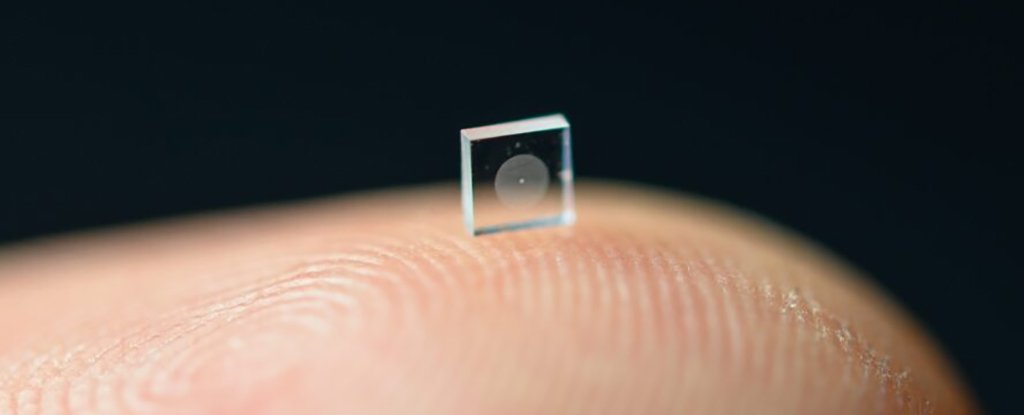

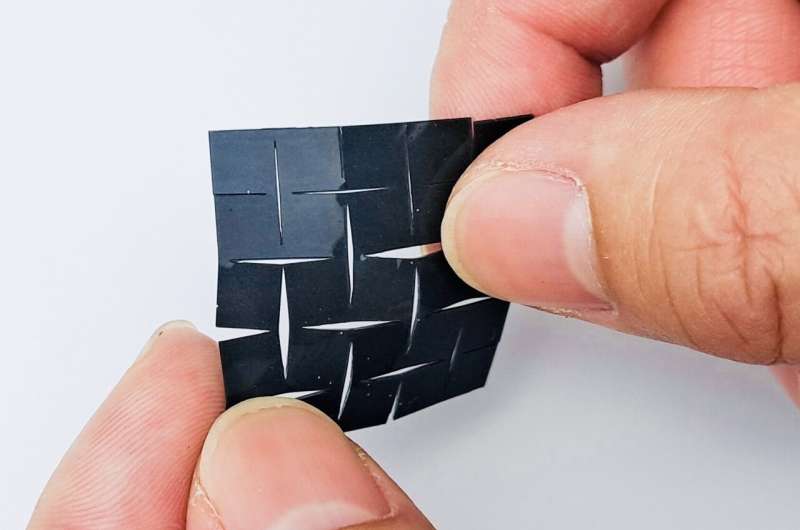

Speaking without vocal cords, thanks to a new AI-assisted wearable device

The breakthrough is the latest in Chen's efforts to help those with disabilities. His team previously developed a wearable glove capable of translating American Sign Language into English speech in real time to help users of ASL communicate with those who don't know how to sign. The tiny new patch-like device is made up of two components. One, a self-powered sensing component, detects and converts signals generated by muscle movements into high-fidelity, analyzable electrical signals; these electrical signals are then translated into speech signals using a machine-learning algorithm. The other, an actuation component, turns those speech signals into the desired voice expression. The two components each contain two layers: a layer of biocompatible silicone compound polydimethylsiloxane, or PDMS, with elastic properties, and a magnetic induction layer made of copper induction coils. Sandwiched between the two components is a fifth layer containing PDMS mixed with micromagnets, which generates a magnetic field. Utilizing a soft magnetoelastic sensing mechanism developed by Chen's team in 2021, the device is capable of detecting changes in the magnetic field when it is altered as a result of mechanical forces, in this case the movement of laryngeal muscles.

We can’t close the digital divide alone, says Cisco HR head as she discusses growth initiatives

At Cisco, we follow a strengths-based approach to learning and development, wherein our quarterly development discussions extend beyond performance evaluations to uplifting ourselves and our teams. We understand that a one-size-fits-all approach is inadequate. To best play to our employees' strengths, we have to be flexible, adaptable, and open to what works best for each individual and team. This lets us understand individual employees' unique learning needs and tailor personalised programs that span online courses, workshops, mentoring, and gamified experiences, catering to diverse learning styles. As a result, our employees are energized to pursue their passions, contributing their best selves to the workplace. Measuring the quality of work, internal movements, employee retention, patents, and innovation, along with engagement pulse assessments, allows us to gauge the effectiveness of our programs. When it comes to addressing the challenge of retaining talent, it's essential for HR leaders to consider a holistic approach.

Vector databases: Shiny object syndrome and the case of a missing unicorn

What’s up with vector databases, anyway? They’re all about information retrieval, but let’s be real, that’s nothing new, even though it may feel like it with all the hype around it. We’ve got SQL databases, NoSQL databases, full-text search apps and vector libraries already tackling that job. Sure, vector databases offer semantic retrieval, which is great, but SQL databases like SingleStore and Postgres (with the pgvector extension) can handle semantic retrieval too, all while providing standard DB features like ACID. Full-text search applications like Apache Solr, Elasticsearch and OpenSearch also rock the vector search scene, along with search products like Coveo, and bring some serious text-processing capabilities for hybrid searching. But here’s the thing about vector databases: They’re kind of stuck in the middle. ... It wasn’t that early either: Weaviate, Vespa and Milvus were already around with their vector DB offerings, and Elasticsearch, OpenSearch and Solr were ready around the same time. When technology isn’t your differentiator, opt for hype. Pinecone’s $100 million Series B funding was led by Andreessen Horowitz, which in many ways is living by the playbook it created for the boom times in tech.
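For readers wondering what "semantic retrieval" boils down to, whichever kind of store provides it: documents and queries are turned into vectors, and the store returns the vectors closest to the query. The sketch below is a minimal, brute-force illustration in plain Python, not how any of the products named above implement it. The three-dimensional embeddings, the document ids and the helper names `cosine_similarity` and `top_k` are all made up for the example; real systems use model-generated embeddings with hundreds of dimensions and approximate indexes rather than a full scan.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length, non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def top_k(query, documents, k=2):
    """Return the ids of the k documents most similar to the query vector."""
    scored = [
        (cosine_similarity(query, vector), doc_id)
        for doc_id, vector in documents.items()
    ]
    scored.sort(reverse=True)  # highest similarity first
    return [doc_id for _, doc_id in scored[:k]]


# Toy embeddings, invented for illustration only.
DOCUMENTS = {
    "pricing-faq": [0.9, 0.1, 0.0],
    "refund-policy": [0.7, 0.3, 0.1],
    "office-party": [0.0, 0.2, 0.9],
}

if __name__ == "__main__":
    print(top_k([0.8, 0.2, 0.0], DOCUMENTS))
```

Because the core operation is this simple, it is plausible for a general-purpose database or search engine to offer it as one feature among many, which is the article's point about vector databases being "stuck in the middle".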

The Role of Quantum Computing in Data Science

Despite its potential, the transition to quantum computing presents several significant challenges to overcome. Quantum computers are highly sensitive to their environment, with qubit states easily disturbed by external influences – a problem known as quantum decoherence. This sensitivity requires that quantum computers be kept in highly controlled conditions, which can be expensive and technologically demanding. Moreover, concerns about the future cost implications of quantum computing on software and services are emerging. Ultimately, the prices will be sky-high, and we might be forced to search for AWS alternatives, especially if they raise their prices due to the introduction of quantum features, as is the case with Microsoft banking everything on AI. This raises the question of how quantum computing will alter the prices and features of both consumer and enterprise software and services, further highlighting the need for a careful balance between innovation and accessibility. There’s also a steep learning curve for data scientists to adapt to quantum computing.

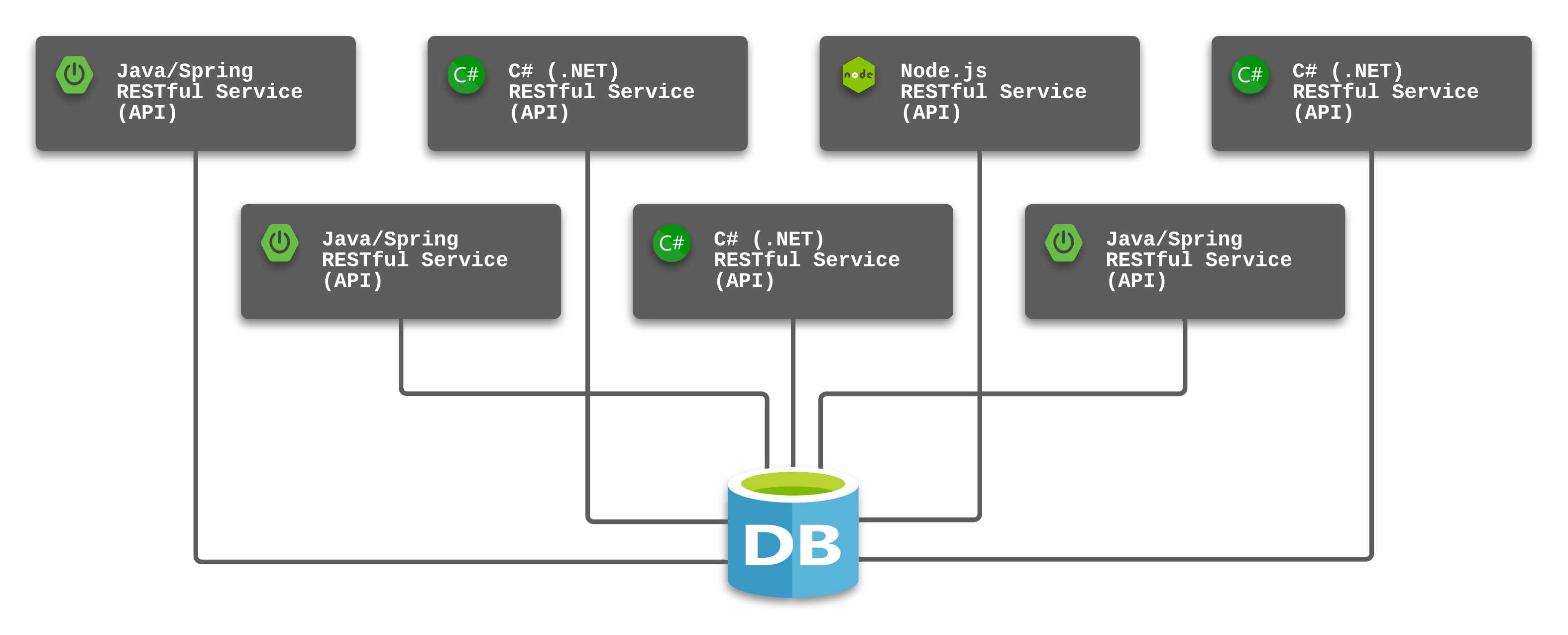

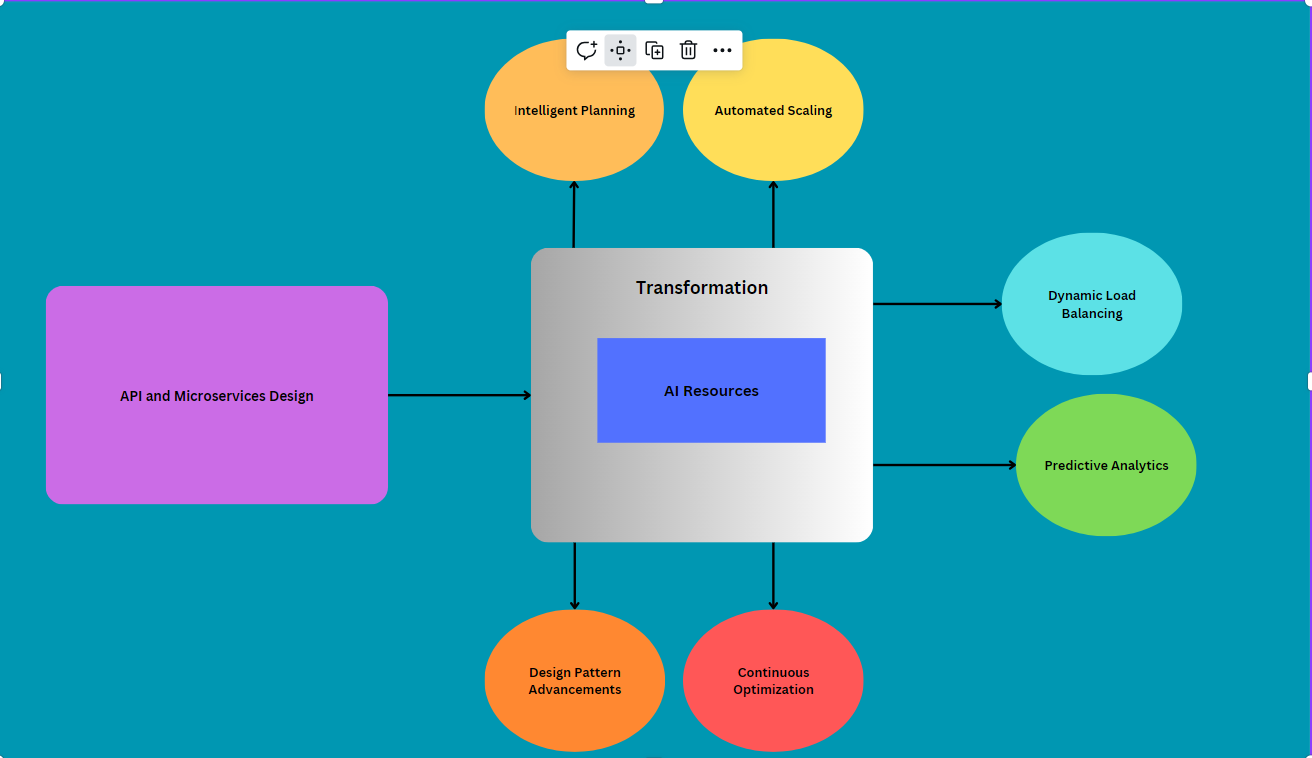

AI-Driven API and Microservice Architecture Design for Cloud

Implementing AI-based continuous optimization for APIs and microservices in Azure involves using artificial intelligence to dynamically improve performance, efficiency, and user experience over time. Here's how you can achieve continuous optimization with AI in Azure:

- Performance monitoring: Implement AI-powered monitoring tools to continuously track key performance metrics such as response times, error rates, and resource utilization for APIs and microservices in real time.
- Automated tuning: Utilize machine learning algorithms to analyze performance data and automatically adjust configuration settings, such as resource allocation, caching strategies, or database queries, to optimize performance.
- Dynamic scaling: Leverage AI-driven scaling mechanisms to adjust the number of instances hosting APIs and microservices based on real-time demand and predicted workload trends, ensuring efficient resource allocation and responsiveness.
- Cost optimization: Use AI algorithms to analyze cost patterns and resource utilization data to identify opportunities for cost savings, such as optimizing resource allocation, implementing serverless architectures, or leveraging reserved instances.
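To make the dynamic scaling idea concrete, here is a rough sketch of how a predicted workload could be turned into an instance count. It is not an Azure API and not the article's implementation: the function names `forecast_next` and `desired_instances`, the parameters and the limits are hypothetical, and a least-squares straight line stands in for whatever forecasting model a real system would use. It also assumes a non-empty list of recent requests-per-second samples.

```python
import math


def forecast_next(samples):
    """Extrapolate one step ahead with a least-squares straight line.

    A deliberately simple stand-in for a trained forecasting model.
    """
    n = len(samples)
    if n < 2:
        return float(samples[-1])
    mean_x = (n - 1) / 2
    mean_y = sum(samples) / n
    slope_num = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(samples))
    slope_den = sum((x - mean_x) ** 2 for x in range(n))
    slope = slope_num / slope_den
    # Evaluate the fitted line at x = n, the next time step.
    return mean_y + slope * (n - mean_x)


def desired_instances(recent_rps, rps_per_instance, min_instances=1, max_instances=20):
    """Pick an instance count for the predicted load, within fixed bounds.

    recent_rps: recent requests-per-second samples, oldest first (hypothetical input).
    rps_per_instance: load one instance is assumed to handle comfortably.
    """
    # Never plan for less than the load observed right now.
    predicted = max(forecast_next(recent_rps), recent_rps[-1])
    needed = math.ceil(predicted / rps_per_instance)
    return max(min_instances, min(max_instances, needed))


if __name__ == "__main__":
    print(desired_instances([100, 200, 300, 400], rps_per_instance=120))
```

The clamp to a minimum and maximum reflects a common design choice: a forecast can be wrong, so the scaler is kept within bounds that protect both availability and cost.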

4 ways AI is contributing to bias in the workplace

Generative AI tools are often used to screen and rank candidates, create resumes and cover letters, and summarize several files simultaneously. But AIs are only as good as the data they're trained on. GPT-3.5 was trained on massive amounts of widely available information online, including books, articles, and social media. This online data inevitably reflects societal inequities and historical biases, which the AI bot inherits and replicates to some degree. No one using AI should assume these tools are inherently objective because they're trained on large amounts of data from different sources. While generative AI bots can be useful, we should not underestimate the risk of bias in an automated hiring process -- and that reality is crucial for recruiters, HR professionals, and managers. Another study found racial bias is present in facial-recognition technologies that show lower accuracy rates for dark-skinned individuals. Something as simple as data for demographic distributions in ZIP codes being used to train AI models, for example, can result in decisions that disproportionately affect people from certain racial backgrounds.

Quote for the day:

"The most common way people give up their power is by thinking they don't have any." -- Alice Walker
