Quote for the day:

“The best architectures, requirements, and designs emerge from self‑organizing teams.” -- Martin Fowler

🎧 Listen to this digest on YouTube Music

▶ Play Audio DigestDuration: 23 mins • Perfect for listening on the go.

Why AI can’t match human creative work

This Computerworld article explores why AI-generated content struggles to

match the real effectiveness of human creativity, despite its overwhelming

volume in today's digital marketplace. Recent industry studies in advertising

and search engine optimization highlight a clear pattern: even when typical

audiences cannot consciously distinguish between human and machine outputs,

they consistently prefer human-created work. In advertising, human-made

campaigns perform significantly better in driving sales and boosting long-term

brand health because they can forge genuine emotional connections and break

new ground rather than simply remixing existing data. Similarly, comprehensive

data from web search results reveals that human-written articles

overwhelmingly secure top rankings compared to those entirely generated by

software algorithms. While automated tools have allowed an unprecedented flood

of synthetic blogs, music, videos, and social media posts into the mainstream,

this automated material rarely captures meaningful audience attention or real

engagement. For instance, although AI-produced episodes make up a very

substantial share of new podcast uploads, they currently account for less than

one percent of actual listening time. Ultimately, the author concludes that

while modern technology serves as a practical assistant for formatting,

outlining, or brainstorming, standalone human talent remains completely

indispensable for producing work that truly resonates, engages readers, and

achieves tangible long-term business results.

This Computerworld article explores why AI-generated content struggles to

match the real effectiveness of human creativity, despite its overwhelming

volume in today's digital marketplace. Recent industry studies in advertising

and search engine optimization highlight a clear pattern: even when typical

audiences cannot consciously distinguish between human and machine outputs,

they consistently prefer human-created work. In advertising, human-made

campaigns perform significantly better in driving sales and boosting long-term

brand health because they can forge genuine emotional connections and break

new ground rather than simply remixing existing data. Similarly, comprehensive

data from web search results reveals that human-written articles

overwhelmingly secure top rankings compared to those entirely generated by

software algorithms. While automated tools have allowed an unprecedented flood

of synthetic blogs, music, videos, and social media posts into the mainstream,

this automated material rarely captures meaningful audience attention or real

engagement. For instance, although AI-produced episodes make up a very

substantial share of new podcast uploads, they currently account for less than

one percent of actual listening time. Ultimately, the author concludes that

while modern technology serves as a practical assistant for formatting,

outlining, or brainstorming, standalone human talent remains completely

indispensable for producing work that truly resonates, engages readers, and

achieves tangible long-term business results.TSA seeks biometric identity management support

The Transportation Security Administration is looking for industry assistance

to modernize and maintain its internal identity management and background

check systems. Through a draft work statement issued by its Enrollment

Services and Vetting Programs office, the agency intends to upgrade how it

processes biographical and biometric information. This initiative does not

create new public-facing data collection routines; instead, it optimizes

existing programs that screen pilots, commercial flight students, maritime

personnel, hazardous materials drivers, and PreCheck applicants. A major focus

of this comprehensive update is moving away from traditional, one-time

background checks toward continuous, automated tracking. To do this, the

agency plans to expand its use of the Federal Bureau of Investigation's

recurrent vetting service and automate the evaluation of text-based criminal

records. Additionally, the project outlines plans to integrate existing

systems more deeply with Department of Homeland Security biometric databases

over the next three to five years. To improve data accuracy and operational

speed, the selected contractor will use data science tools, including basic

machine learning, to detect data anomalies and help staff review cases more

efficiently. The proposed contract includes a twelve-month base period

followed by four optional one-year extensions, with all services based at the

agency's Virginia headquarters.

The Transportation Security Administration is looking for industry assistance

to modernize and maintain its internal identity management and background

check systems. Through a draft work statement issued by its Enrollment

Services and Vetting Programs office, the agency intends to upgrade how it

processes biographical and biometric information. This initiative does not

create new public-facing data collection routines; instead, it optimizes

existing programs that screen pilots, commercial flight students, maritime

personnel, hazardous materials drivers, and PreCheck applicants. A major focus

of this comprehensive update is moving away from traditional, one-time

background checks toward continuous, automated tracking. To do this, the

agency plans to expand its use of the Federal Bureau of Investigation's

recurrent vetting service and automate the evaluation of text-based criminal

records. Additionally, the project outlines plans to integrate existing

systems more deeply with Department of Homeland Security biometric databases

over the next three to five years. To improve data accuracy and operational

speed, the selected contractor will use data science tools, including basic

machine learning, to detect data anomalies and help staff review cases more

efficiently. The proposed contract includes a twelve-month base period

followed by four optional one-year extensions, with all services based at the

agency's Virginia headquarters.

Why ‘human in the loop’ falls short – and what to do about it

In this SiliconANGLE column, Jason Bloomberg explains why the common practice

of keeping a human in the loop to oversee artificial intelligence operations

is deeply flawed. While tech companies often pitch human oversight as a safety

net against autonomous systems making mistakes, this method struggles to hold

up under real-world pressure. On an individual level, people tend to trust

automated systems too much, suffer from mental fatigue during repetitive

tasks, or simply wave approvals through without checking. In corporate groups,

it often leads to finger-pointing, blame-shifting, or superficial compliance.

Furthermore, software systems function in mere seconds, whereas human business

workflows require meetings and lengthy procedural delays, creating a massive

gap in actual response times. To fix these flaws, tech providers usually

suggest limiting software capabilities or building detailed tracking tools,

but these heavy-handed changes slow down operations and frustrate commercial

goals. Bloomberg suggests flipping the entire setup by focusing on automation

in the loop instead. Rather than forcing human workers to become cogs inside

an automated pipeline, software should exist purely to assist human day-to-day

operations. This perspective ensures people retain ultimate responsibility,

prevents software from making critical business decisions, and allows systems

to grow safely without overwhelming human operators or clashing with long-term

strategic plans.

In this SiliconANGLE column, Jason Bloomberg explains why the common practice

of keeping a human in the loop to oversee artificial intelligence operations

is deeply flawed. While tech companies often pitch human oversight as a safety

net against autonomous systems making mistakes, this method struggles to hold

up under real-world pressure. On an individual level, people tend to trust

automated systems too much, suffer from mental fatigue during repetitive

tasks, or simply wave approvals through without checking. In corporate groups,

it often leads to finger-pointing, blame-shifting, or superficial compliance.

Furthermore, software systems function in mere seconds, whereas human business

workflows require meetings and lengthy procedural delays, creating a massive

gap in actual response times. To fix these flaws, tech providers usually

suggest limiting software capabilities or building detailed tracking tools,

but these heavy-handed changes slow down operations and frustrate commercial

goals. Bloomberg suggests flipping the entire setup by focusing on automation

in the loop instead. Rather than forcing human workers to become cogs inside

an automated pipeline, software should exist purely to assist human day-to-day

operations. This perspective ensures people retain ultimate responsibility,

prevents software from making critical business decisions, and allows systems

to grow safely without overwhelming human operators or clashing with long-term

strategic plans.Why Moving Off the Cloud Is the Easy Part and What Comes Next Is Where Things Get Hard

6 critical security gaps every CISO must address

The CSO Online article highlights six essential security shortcomings that

corporate security leaders need to address. First, a narrow perspective

remains common; many leaders treat cybersecurity purely as a technical IT

issue instead of focusing on broader business resilience and downstream

operational continuity. Second, a noticeable lag exists between the swift

automation used by digital attackers and the slower, more traditional response

times of corporate defense teams. Similarly, security operations frequently

struggle to match the rapid pace of general business changes, adoptions, and

market expansions. Internal talent issues have also evolved significantly; the

primary challenge is no longer just finding enough individuals to hire, but

ensuring that current employees have the specific, updated skills required to

handle an evolving environment. This skills gap is heavily compounded by the

rapid growth of artificial intelligence, where top-down corporate initiatives

and unauthorized employee tools are vastly outstripping proper security

frameworks and oversight. Finally, aging tech infrastructure creates a

significant vulnerability, as out-of-date systems cannot support modern

security controls, leaving them exposed to easy exploitation. Rather than

attempting to block every single threat, professionals are advised to use

objective, risk-based prioritization to protect core company workflows and

preserve long-term stability.

The CSO Online article highlights six essential security shortcomings that

corporate security leaders need to address. First, a narrow perspective

remains common; many leaders treat cybersecurity purely as a technical IT

issue instead of focusing on broader business resilience and downstream

operational continuity. Second, a noticeable lag exists between the swift

automation used by digital attackers and the slower, more traditional response

times of corporate defense teams. Similarly, security operations frequently

struggle to match the rapid pace of general business changes, adoptions, and

market expansions. Internal talent issues have also evolved significantly; the

primary challenge is no longer just finding enough individuals to hire, but

ensuring that current employees have the specific, updated skills required to

handle an evolving environment. This skills gap is heavily compounded by the

rapid growth of artificial intelligence, where top-down corporate initiatives

and unauthorized employee tools are vastly outstripping proper security

frameworks and oversight. Finally, aging tech infrastructure creates a

significant vulnerability, as out-of-date systems cannot support modern

security controls, leaving them exposed to easy exploitation. Rather than

attempting to block every single threat, professionals are advised to use

objective, risk-based prioritization to protect core company workflows and

preserve long-term stability.The Pitfalls of Defaulting to a Single Database: Why "Good Enough" Isn't Always a Good Strategy

When building software systems, it is incredibly common for modern engineering teams to default to a single database because it feels familiar, comfortable, and entirely sufficient for early stage development. However, accepting a "good enough" data architecture often introduces severe technical challenges as an organization scales. Forcing highly diverse data workloads, such as rapid transactional processing, complex analytical reporting, and unstructured document storage, into one general purpose engine creates major performance bottlenecks. No single database system can optimally handle every distinct data requirement, which forces teams to make design compromises that ultimately drag down the performance of the entire platform. Furthermore, relying on a single shared repository creates a precarious single point of failure. If that central data layer experiences an unexpected outage or suffers a performance slowdown from a poorly optimized query, every connected application and service grinds to a sudden halt. This structural centralization tightly couples unrelated services, making future software changes cumbersome and risky. Instead of settling for a monolithic database structure out of convenience, organizations achieve far greater resilience by matching distinct operational tasks with appropriate, specialized storage technologies. Choosing targeted databases minimizes resource friction, streamlines backend infrastructure management, and ensures individual services remain completely independent and stable. The article examines how advanced artificial intelligence systems have

dismantled traditional timeline safety margins for enterprise cyber defense.

Historically, while AI could exploit known security flaws, it struggled to

identify them independently. However, the release of Anthropic’s Claude Mythos

Preview changed this dynamic by autonomously discovering thousands of zero-day

vulnerabilities across major operating systems and browsers at a minimal

compute cost. Consequently, the window between vulnerability disclosure and

real-world exploitation has collapsed to less than ten hours, rendering

traditional, calendar-based patching schedules obsolete. To address this risk,

security teams are advised to replace standard severity scoring with a more

dynamic, three-layer prioritization filter that integrates real-time

exploitation data from federal databases and predictive scoring systems.

Additionally, the proliferation of AI-driven developer platforms creates

massive security risks because a single compromised host can easily expose

high-value credentials across an entire corporate ecosystem. Because formal

safety and authorization standards are still years away from implementation,

organizations must move away from human-speed response intervals. Securing

modern networks requires implementing event-driven patching for core services,

conducting proactive asset discovery scans, and strictly auditing

authorization boundaries to match the accelerated operational speed of

automated adversaries.

The article examines how advanced artificial intelligence systems have

dismantled traditional timeline safety margins for enterprise cyber defense.

Historically, while AI could exploit known security flaws, it struggled to

identify them independently. However, the release of Anthropic’s Claude Mythos

Preview changed this dynamic by autonomously discovering thousands of zero-day

vulnerabilities across major operating systems and browsers at a minimal

compute cost. Consequently, the window between vulnerability disclosure and

real-world exploitation has collapsed to less than ten hours, rendering

traditional, calendar-based patching schedules obsolete. To address this risk,

security teams are advised to replace standard severity scoring with a more

dynamic, three-layer prioritization filter that integrates real-time

exploitation data from federal databases and predictive scoring systems.

Additionally, the proliferation of AI-driven developer platforms creates

massive security risks because a single compromised host can easily expose

high-value credentials across an entire corporate ecosystem. Because formal

safety and authorization standards are still years away from implementation,

organizations must move away from human-speed response intervals. Securing

modern networks requires implementing event-driven patching for core services,

conducting proactive asset discovery scans, and strictly auditing

authorization boundaries to match the accelerated operational speed of

automated adversaries.Why Data “Spring Cleaning” Is Critical for AI Execution

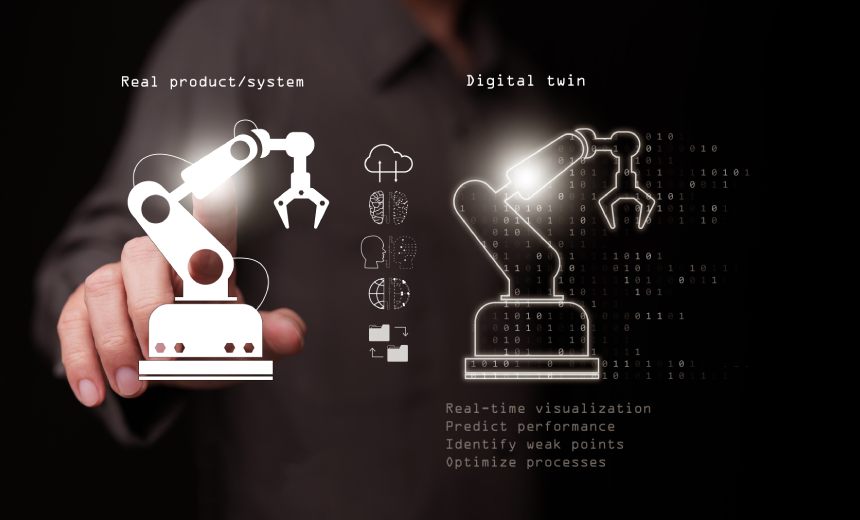

Digital Twins Are Broken, AI Might Finally Fix Them

For nearly two decades, digital twins struggled to live up to their initial

promises. Most companies used them merely as advanced visualization tools or

static engineering models that quickly became disconnected from the physical

equipment they represented. Building and maintaining these simulations was

highly expensive, and fragmented data across separate corporate departments

further limited their actual utility. However, the broader availability of

practical artificial intelligence is changing how factories and industrial

plants operate. By cleanly integrating live data feeds, modern digital twins

can continuously learn from everyday operational events, environmental shifts,

and machinery maintenance histories rather than remaining static. This shift

allows large companies to simulate factory updates and test potential facility

modifications safely without pausing active assembly lines. Beyond basic

mirroring, newer setups enable virtual models to accurately predict system

failures and automate adjustments directly back into real-world workflows.

This ongoing progression also encourages organizations to dismantle the

traditional divisions between their plant-floor operational systems and

standard corporate IT networks. Ultimately, these tools working together allow

manufacturers to bypass previous technical limitations. Instead of managing

passive digital replicas, businesses can now run responsive systems that

analyze data and optimize physical environments in real time, finally

capturing real value from their data investments.

For nearly two decades, digital twins struggled to live up to their initial

promises. Most companies used them merely as advanced visualization tools or

static engineering models that quickly became disconnected from the physical

equipment they represented. Building and maintaining these simulations was

highly expensive, and fragmented data across separate corporate departments

further limited their actual utility. However, the broader availability of

practical artificial intelligence is changing how factories and industrial

plants operate. By cleanly integrating live data feeds, modern digital twins

can continuously learn from everyday operational events, environmental shifts,

and machinery maintenance histories rather than remaining static. This shift

allows large companies to simulate factory updates and test potential facility

modifications safely without pausing active assembly lines. Beyond basic

mirroring, newer setups enable virtual models to accurately predict system

failures and automate adjustments directly back into real-world workflows.

This ongoing progression also encourages organizations to dismantle the

traditional divisions between their plant-floor operational systems and

standard corporate IT networks. Ultimately, these tools working together allow

manufacturers to bypass previous technical limitations. Instead of managing

passive digital replicas, businesses can now run responsive systems that

analyze data and optimize physical environments in real time, finally

capturing real value from their data investments.

/articles/schema-proliferation-problem/en/smallimage/schema-proliferation-problem-thumbnail-1779270222602.jpg)

/vnd/media/media_files/2026/04/21/from-pilots-to-platforms-2026-04-21-12-07-28.jpg)