Quote for the day:

“Bad companies are destroyed by crisis. Good companies survive them. Great companies are improved by them.” -- Andy Grove

Beware of the Generative AI token trap

Organizations are rapidly adopting generative artificial intelligence without realizing the long-term financial risks hidden in how these services are priced. Right now, major tech providers are offering their intelligence capabilities at artificially low rates to capture market share and encourage companies to build deep dependencies on their platforms. However, this subsidy phase will not last forever. Providers charge by the token, a small chunk of text (often a fragment of a word) that acts as a tollbooth for every prompt, response, and automated action. As businesses move from simple chat tools to autonomous systems that loop through multiple steps behind the scenes, token usage multiplies quickly, because each step can resend the growing conversation history to the model. If an organization relies entirely on external providers for these capabilities, a pilot project that seems affordable today could become a crippling expense within a few years, when the market inevitably matures and prices rise. To avoid repeating the costly mistakes of the early cloud computing era, companies must treat artificial intelligence as a strategic architectural decision rather than a simple software subscription. The safest approach is to prioritize AI sovereignty by building, hosting, and managing smaller, purpose-built models internally. By owning the technology for critical everyday tasks instead of renting massive public models, organizations can keep control over their data, preserve their operational flexibility, and keep their future costs predictable.
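To make the cost mechanics concrete, here is a minimal Python sketch. It assumes an agent that resends its whole history to the model on every step and a single blended price per million tokens; real providers usually price input and output tokens differently, and every number below (prompt size, output per step, runs per day, prices) is hypothetical.

```python
def agent_run_tokens(steps: int, prompt_tokens: int, tokens_per_step: int) -> int:
    """Total tokens billed for one agent run that resends its full history each step.

    Assumes a simple loop: every step sends the whole context so far (input)
    and then appends tokens_per_step of new output to that context.
    """
    total = 0
    context = prompt_tokens
    for _ in range(steps):
        total += context + tokens_per_step  # input (full history) + new output
        context += tokens_per_step          # new output becomes part of the history
    return total


def monthly_cost_usd(tokens_per_run: int, runs_per_day: int,
                     usd_per_million_tokens: float, days: int = 30) -> float:
    """Monthly spend at one blended (hypothetical) price per million tokens."""
    return tokens_per_run * runs_per_day * days * usd_per_million_tokens / 1_000_000


if __name__ == "__main__":
    single = agent_run_tokens(1, 1_000, 500)    # one chat-style call
    agentic = agent_run_tokens(10, 1_000, 500)  # a ten-step agent loop
    print(single, agentic, agentic / single)
    # Same workload at a hypothetical current price and at a doubled price.
    for price in (2.0, 4.0):
        print(price, monthly_cost_usd(agentic, 1_000, price))
```

With these inputs the ten-step loop bills 25 times the tokens of the single call, and in this simple model the total grows with the square of the step count. Because spend is linear in price, a doubled per-token price doubles the monthly bill for the same workload.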

Six layers between your LLM and a production agent

The 2026 edition of the AI agents stack outlines six essential layers connecting language models to reliable production systems. This updated framework reflects practical shifts in how developers build these applications. Three major developments redefined the stack: the widespread adoption of the Model Context Protocol (MCP) for standardizing tool connections, the rise of reasoning models that handle complex tasks in a single step, and the evolution of memory into an architectural core rather than a simple database add-on. When evaluating these layers, development teams must consider how much state they need to manage, their tolerance for vendor lock-in, and the effort required to move from prototype to production. The foundation layer, models and inference, is increasingly commoditized: open-weight options are closing the performance gap, which makes cost and latency the primary considerations. The second layer, protocols and tools, is now dominated by MCP, though securing these connections remains an open challenge. The third layer, memory and knowledge, shifts the focus toward managing exactly what an agent sees and retains across interactions, using structured fields rather than free-form prompts. Ultimately, the guide advises a measured approach: start with a minimal stack and introduce additional complexity only when a specific component fails.

UK promises age assurance for social media, device-level child safety controls

The UK government is preparing new legislation to restrict children’s access to social media and protect them from online harm. Under Prime Minister Keir Starmer, the proposed laws are expected to set a minimum age of 16 for social media accounts, similar to recent measures introduced in Australia. Beyond simple age limits, the government is specifically targeting the growing threat of explicit AI-generated content, such as deepfakes. Officials are pressuring tech companies to implement device-level safety controls that would block nudity by default on smartphones and tablets. If tech leaders fail to introduce these protections within three months, the government has threatened to mandate them by law and may even hold executives criminally liable. While these safety measures address urgent concerns, the government’s overall technology policy reveals a notable contradiction: leaders are heavily promoting the rapid expansion of artificial intelligence infrastructure while simultaneously trying to manage the severe risks generated by those very technologies. Officials also acknowledge that smartphones themselves, with their inherently addictive designs, are fundamentally part of the problem. Other nations are taking similar steps; Canada, for example, is preparing its own age-restriction laws, focusing on temporary safety compliance before allowing younger users back onto major platforms.

Segment With Purpose: A Zero Trust Blueprint For OT Network Segmentation In Manufacturing

Historically, factory floor equipment operated in complete isolation from the rest of the world. Today, manufacturers routinely connect these industrial machines to standard office networks to improve efficiency and gather data. While this connectivity offers benefits, it also creates severe security vulnerabilities. If a network remains completely open, a threat originating in a standard office computer can easily spread to critical production machinery, causing dangerous physical disruptions. To prevent this, manufacturers must deliberately divide their networks into smaller, isolated sections based on specific functional needs. This strategy relies on the principle that no device, user, or system should ever be trusted by default, regardless of its location within the facility. Before making any changes, companies must carefully map every piece of equipment and understand exactly how these machines need to communicate to keep production running smoothly. Once this normal behavior is understood, administrators can implement strict rules that allow only necessary communications while blocking everything else. By grouping similar assets and restricting access to the absolute minimum required, organizations create barriers that contain potential security incidents to a single small area. This methodical, practical approach allows manufacturers to steadily protect their most critical physical operations from modern digital threats without accidentally causing downtime or interrupting daily production schedules.
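A minimal Python sketch of the "allow only necessary communications" rule. The zone names, ports, and allowlist are hypothetical; in a real plant these rules would live in firewalls or switch ACLs, and the allowlist would come from the traffic mapping described above. Port 502 is the standard Modbus/TCP port.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Flow:
    """One network conversation between two zones on a given TCP port."""
    src_zone: str
    dst_zone: str
    port: int


# Hypothetical allowlist, written only after mapping the plant's normal traffic.
# Office systems never reach the control zone directly; data is staged in a DMZ.
ALLOWED_FLOWS = frozenset({
    Flow("ot_supervisory", "ot_control", 502),     # SCADA polls PLCs over Modbus/TCP
    Flow("ot_supervisory", "dmz_historian", 443),  # plant data pushed out to the DMZ
    Flow("it_office", "dmz_historian", 443),       # office users read reports from the DMZ
})


def is_permitted(flow: Flow) -> bool:
    """Default deny: anything not explicitly allowed is blocked."""
    return flow in ALLOWED_FLOWS


def unexpected_flows(observed: list[Flow]) -> list[Flow]:
    """Flows seen on the wire that the policy would block (candidates for review)."""
    return [f for f in observed if not is_permitted(f)]


if __name__ == "__main__":
    seen = [
        Flow("it_office", "dmz_historian", 443),
        Flow("it_office", "ot_control", 502),  # an office PC talking to a PLC directly
    ]
    for f in unexpected_flows(seen):
        print("blocked:", f)
```

Keeping the policy as an explicit allowlist, rather than a list of things to block, is what makes it default-deny: a new or compromised device gets no access until someone deliberately adds a flow for it.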

7 sources of AI debt and how to avoid them

As companies rush to implement artificial intelligence, they risk accumulating a new form of technical burden known as AI debt. Driven by the pressure to move early concepts into active production, teams often bypass critical testing and governance, leaving major improvements for later. This debt typically arises from seven common mistakes. First, running experiments without clear, measurable business goals leads to systems that lack practical value. Second, feeding poor-quality data into models simply amplifies errors at a massive scale. Third, failing to monitor systems allows model drift to go unnoticed, as performance degrades over time while real-world data changes. Fourth, granting AI agents overly broad access permissions creates severe security and compliance vulnerabilities. Fifth, applying automation over broken or inefficient business processes only worsens existing operational flaws. Sixth, deploying too many unmanaged agents results in sprawl, where abandoned tools compound security risks and duplicate logic. Finally, relying on AI-generated code without proper security reviews can introduce hidden vulnerabilities. To avoid these issues, organizations must slow down and apply strong management practices. By setting clear objectives, enforcing strict data quality standards, monitoring system performance, and implementing robust security checks, companies can confidently deploy AI tools that deliver genuine value instead of future headaches.
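Monitoring for the model drift in the third point can start very simply. The sketch below computes the Population Stability Index (PSI), one common drift statistic, chosen here as an example rather than a method the article prescribes. The bin edges, sample data, and 0.25 alert threshold (a widely used rule of thumb, not a standard) are assumptions.

```python
import math
from bisect import bisect_right


def population_stability_index(baseline, current, edges, eps=1e-6):
    """PSI between a training-time baseline and recent production values.

    edges are sorted interior bin boundaries; a value equal to an edge falls
    into the bin to its right. eps avoids log(0) when a bin is empty.
    """
    def proportions(values):
        counts = [0] * (len(edges) + 1)
        for v in values:
            counts[bisect_right(edges, v)] += 1
        return [max(c / len(values), eps) for c in counts]

    expected = proportions(baseline)
    actual = proportions(current)
    return sum((a - e) * math.log(a / e) for a, e in zip(actual, expected))


def drift_alert(psi_value, threshold=0.25):
    """Flag a shift above the (assumed) rule-of-thumb threshold."""
    return psi_value > threshold


if __name__ == "__main__":
    baseline = list(range(100))             # feature values seen at training time
    shifted = [v + 50 for v in range(100)]  # production values have moved upward
    edges = [25, 50, 75]
    for name, data in (("same", baseline), ("shifted", shifted)):
        score = population_stability_index(baseline, data, edges)
        print(name, round(score, 3), drift_alert(score))
```

Run on a schedule against each important input feature, a check like this turns "monitor the system" into a concrete alert that fires before degraded predictions pile up.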

From Prediction to Intervention: Integrating Counterfactual Reasoning into AI Decision-Making

As artificial intelligence matures, organizations are realizing that simply predicting the future from past data is no longer enough. Traditional predictive models can forecast what might happen, but they do not capture the underlying reasons behind those events. This limitation becomes obvious when teams try to make strategic decisions, because predictive models cannot reliably simulate what would occur if a company actively intervened to change its current course of action. To solve this problem, the focus is shifting toward causal reasoning. Instead of just identifying patterns, causal models allow teams to test alternative scenarios and understand cause and effect. With these systems, organizations can ask what-if questions that help separate true drivers of success from mere coincidences. For example, a causal model can help reveal whether increased sales were actually caused by a recent marketing push or simply by a predictable seasonal trend. This approach helps close the trust gap often found in complex software systems by providing explanations whose cause-and-effect assumptions are stated explicitly rather than hidden. While the transition requires employees to build stronger statistical skills and new ways of thinking, the shift is highly valuable: moving from basic prediction to true causal understanding gives teams the confidence to make clearer, more effective decisions.
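A toy version of the marketing-versus-season question. The data are synthetic and hypothetical: sales rise by 50 in high season and by 10 when a marketing push runs, and pushes mostly happen in high season. The estimator is standard backdoor adjustment (compare within each season, then average over seasons), which is valid only under the assumption that season is the sole confounder.

```python
from statistics import mean


def make_weeks():
    """Hypothetical weekly records: (high_season, marketing_push, sales).

    Ground truth built in: season adds 50 to sales, marketing adds 10, but
    pushes ran in 80% of high-season weeks and only 20% of low-season weeks.
    """
    weeks = []
    for season, n_push, n_no_push in ((1, 80, 20), (0, 20, 80)):
        for push, n in ((1, n_push), (0, n_no_push)):
            sales = 100 + 50 * season + 10 * push
            weeks.extend([(season, push, sales)] * n)
    return weeks


def naive_effect(weeks):
    """Prediction view: average sales in push weeks minus no-push weeks."""
    return (mean(s for _, p, s in weeks if p == 1)
            - mean(s for _, p, s in weeks if p == 0))


def adjusted_effect(weeks):
    """Backdoor adjustment: within-season differences weighted by P(season)."""
    total = len(weeks)
    effect = 0.0
    for season in sorted({w[0] for w in weeks}):
        stratum = [w for w in weeks if w[0] == season]
        diff = (mean(s for _, p, s in stratum if p == 1)
                - mean(s for _, p, s in stratum if p == 0))
        effect += diff * len(stratum) / total
    return effect


if __name__ == "__main__":
    weeks = make_weeks()
    print("naive:", naive_effect(weeks))
    print("adjusted:", adjusted_effect(weeks))
```

Here the naive comparison credits marketing with 40 units of extra sales, while the adjusted estimate recovers the built-in effect of 10; the other 30 came from the season.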

How Leaders Can Break Their Team’s Habit Of Safe Thinking

While artificial intelligence can rapidly analyze data and generate standard solutions, true breakthroughs still rely entirely on human imagination. However, extensive industry experience often traps teams in a pattern where past successes and ingrained habits prevent them from exploring new directions. To break this cycle of safe thinking, leaders must intentionally create an environment that fosters creativity rather than simply rewarding efficiency and certainty. First, leaders should adopt a 'yes, and' mindset instead of instinctively dismissing ideas with 'no, because.' This approach keeps unconventional ideas alive long enough to evolve into viable solutions. Second, they must regularly reframe challenges. By changing the core question, such as focusing on solving a customer's problem instead of just increasing sales, teams can escape familiar patterns and discover completely different paths. Third, leaders need to deliberately carve out time for quiet reflection, as continuous pressure from emails, meetings, and tight deadlines stifles fresh ideas. The best thoughts often occur when the brain is allowed to rest and wander. Finally, organizations must reward curiosity just as highly as technical expertise. When leaders encourage their teams to ask deep questions and challenge accepted processes, innovation naturally surfaces. Ultimately, businesses do not necessarily need more creative employees; they just need leaders who understand how to cultivate conditions for new ideas to thrive.

Autonomous Malware Is No Longer Theoretical: AI Worm Proof Of Concept Created In A Lab

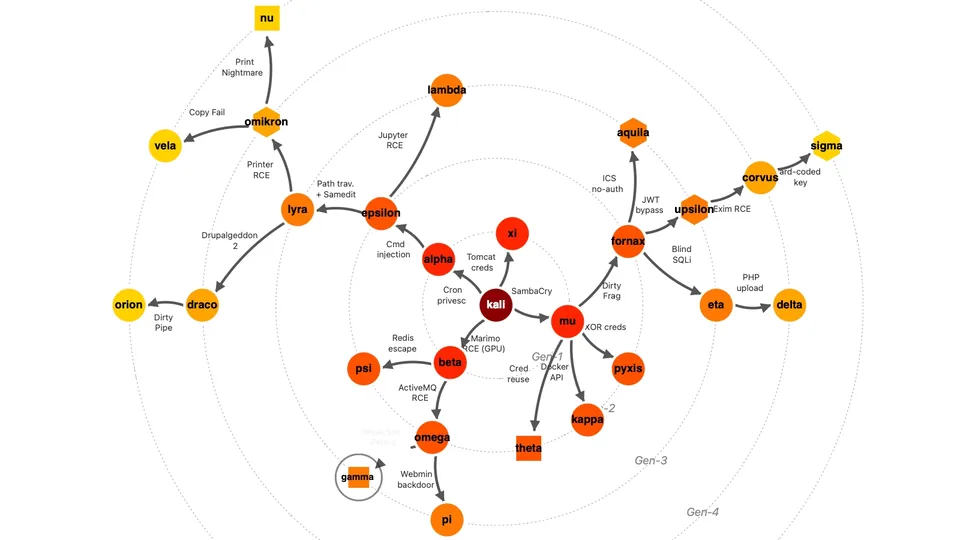

Security researchers have recently demonstrated that autonomous AI malware is no longer just a theoretical concept. In a controlled lab environment, a team successfully built a proof-of-concept worm that uses open-weight AI models to independently find vulnerabilities, exploit them, and spread across network systems without any human guidance. Although this specific lab experiment moved slowly and deliberately lacked advanced evasion techniques, it clearly highlights a significant shift in the cyber threat landscape. The economics of cyberattacks are changing; adversaries can now use low-cost AI models to automate and scale their operations. This means defensive teams can no longer rely solely on predictable attack patterns or traditional behavioral detection methods, as attackers may soon use AI to generate new tools faster than analysts can classify them. To prepare for these emerging challenges, organizations must focus on complete visibility and strict enforcement across their networks. Understanding exactly which AI agents are operating, what data they access, and what permissions they hold is crucial. Any agent that cannot be monitored must be removed. Additionally, basic patching is no longer enough. IT leaders need to implement strong compensating controls, use microsegmentation to limit lateral movement, and strengthen their overall zero-trust security strategies to protect against increasingly sophisticated, autonomous threats.
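The visibility rule can be expressed as a simple audit over an agent inventory. This is a hypothetical sketch: the record fields, agent names, and permission strings are invented for illustration, and a real inventory would be fed from identity, logging, and access-management systems.

```python
from dataclasses import dataclass, field


@dataclass
class AgentRecord:
    """One entry in a hypothetical inventory of AI agents on the network."""
    name: str
    monitored: bool  # are its actions visible in logs/telemetry?
    granted: set[str] = field(default_factory=set)   # permissions it currently holds
    required: set[str] = field(default_factory=set)  # permissions its task actually needs


def audit_agents(agents):
    """Return (agents to remove, excess permissions to revoke per remaining agent)."""
    to_remove = [a.name for a in agents if not a.monitored]
    to_revoke = {
        a.name: sorted(a.granted - a.required)
        for a in agents
        if a.monitored and a.granted - a.required
    }
    return to_remove, to_revoke


if __name__ == "__main__":
    inventory = [
        AgentRecord("invoice-helper", True, {"read:invoices", "write:erp"}, {"read:invoices"}),
        AgentRecord("unknown-scheduler", False, {"admin"}),
    ]
    print(audit_agents(inventory))
```

Unmonitored agents are listed for removal outright, matching the paragraph's rule, while monitored ones are trimmed to least privilege rather than removed.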


How cyber-risk can fall flat in the boardroom

When IT leaders present cybersecurity updates to a corporate board of directors, their message often gets lost in highly technical details. While security teams naturally focus on vulnerabilities, threat activities, and audit scores, board members need to understand how these issues affect the actual business. To get real support from the boardroom, technology leaders must stop treating cyber risk as a separate technical problem and start framing it as a core business challenge. This means translating security gaps into measurable business consequences, such as potential financial losses, operational downtime, legal liabilities, or delays to strategic projects. Instead of simply reporting that a system is weak or a patch is delayed, leaders should explain what the organization stands to lose if a failure occurs and what choices are involved in fixing it. Using practical scenario analysis, like estimating the recovery cost if a major vendor goes offline, helps directors weigh priorities and allocate limited resources effectively. Honesty is also essential; leaders should clearly prioritize the most significant exposures without treating every new threat as an overwhelming emergency. By presenting clear, disciplined business cases rather than overwhelming metrics, security leaders can help the board govern cyber risk as a standard part of overall corporate resilience and stability.
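Scenario analysis of this kind often uses annualized loss expectancy: the loss from a single event multiplied by the expected number of events per year. The sketch below applies that formula to a few hypothetical scenarios; every figure is an invented placeholder that finance and operations teams would need to supply.

```python
from dataclasses import dataclass


@dataclass
class Scenario:
    """A board-level loss scenario; every figure is an estimate, not data."""
    name: str
    downtime_hours: float
    revenue_per_hour: float  # revenue lost per hour of disruption
    recovery_cost: float     # incident response, restoration, legal, etc.
    events_per_year: float   # estimated annual likelihood of the event

    def single_loss(self) -> float:
        return self.downtime_hours * self.revenue_per_hour + self.recovery_cost

    def annualized_loss(self) -> float:
        return self.single_loss() * self.events_per_year


def rank_by_exposure(scenarios):
    """Largest expected yearly loss first, so the board sees priorities at a glance."""
    return sorted(scenarios, key=lambda s: s.annualized_loss(), reverse=True)


if __name__ == "__main__":
    scenarios = [
        Scenario("key SaaS vendor offline", 48, 20_000, 150_000, 0.10),
        Scenario("ransomware on plant systems", 120, 20_000, 500_000, 0.05),
        Scenario("payment fraud via phishing", 0, 20_000, 80_000, 0.50),
    ]
    for s in rank_by_exposure(scenarios):
        print(f"{s.name}: ~${s.annualized_loss():,.0f} per year "
              f"(${s.single_loss():,.0f} per event)")
```

Reporting both the per-event loss and the annualized figure lets directors see that a rare, severe event and a frequent, cheaper one can warrant similar attention.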

From critical to controlled: Cutting vulnerabilities in a live manufacturing environment

Managing software security alerts in a live manufacturing plant is much more complicated than in a standard office setting. When a critical warning pops up, you cannot simply shut down production to install a quick update. Instead, you need a practical process to figure out whether that specific alert actually threatens your equipment. The first step is maintaining an automated list of all your machines so you can confirm exactly where the flagged device lives on your network. Next, verify whether the reported flaw is truly present, as scanners often guess based on outdated version numbers rather than deep checks. Even if the flaw exists, its real-world risk depends heavily on how easily someone can reach the machine. A vulnerable device hidden securely behind strict network boundaries, jump servers, and custom firewalls is far less dangerous than one exposed to the internet. By tracing the exact steps an attacker would need to take, you can apply focused fixes, like blocking specific network pathways or enforcing strong passwords, without risking a system crash. If you cannot fix the issue right away because the equipment is too old or cannot be turned off, you must formally document the risk alongside extra safety measures. Ultimately, this approach helps you confidently separate genuine threats from harmless alerts, keeping your factory running safely.
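The triage steps above can be sketched as a decision function. The exposure categories, field names, asset IDs, and the order of checks are assumptions chosen to mirror the paragraph, not a standard; real triage would draw on an asset inventory tool and a reachability analysis.

```python
from dataclasses import dataclass
from enum import Enum


class Exposure(Enum):
    INTERNET = "internet"      # reachable from outside the plant
    IT_NETWORK = "it_network"  # reachable from office systems
    ISOLATED = "isolated"      # only via jump servers and strict firewall rules


@dataclass
class ScannerAlert:
    asset_id: str
    confirmed_on_device: bool  # verified, not just inferred from a version string
    exposure: Exposure
    can_patch_now: bool        # can be updated without stopping production


def triage(alert: ScannerAlert, inventory: set[str]) -> str:
    """Hypothetical decision order: locate, verify, assess reachability, then act."""
    if alert.asset_id not in inventory:
        return "locate: asset missing from inventory"
    if not alert.confirmed_on_device:
        return "verify: likely version-based false positive"
    if alert.exposure is Exposure.INTERNET:
        return "urgent: close the exposed path now, then patch"
    if alert.can_patch_now:
        return "patch: schedule in the next maintenance window"
    if alert.exposure is Exposure.IT_NETWORK:
        return "mitigate: block the specific pathway and document the risk"
    return "accept: document the risk and compensating controls"


if __name__ == "__main__":
    plant_assets = {"plc-07", "hmi-02"}
    alert = ScannerAlert("plc-07", True, Exposure.IT_NETWORK, False)
    print(triage(alert, plant_assets))
```

Checking inventory and confirmation first means most noisy alerts are resolved before anyone has to reason about network paths or production downtime.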