Experts Concerned by Signs of AI Bubble

"Capital continues to pour into the AI sector with very little attention being paid to company fundamentals," he wrote, "in a sure sign that when the music stops there will not be many chairs available." It's been a turbulent week for AI companies, highlighting what sometimes seems like unending investor appetite for new AI ventures. Case in point is Cohere, one of the many startups focusing on generative AI, which is reportedly in late-stage discussions that would value the venture at a whopping $5 billion. Then there's Microsoft, which has already made a $13 billion bet on OpenAI, as well as hiring most of the staff from AI startup Inflection AI earlier this month. The highly unusual deal — or "non-acquisition" — raised red flags among investors, leading to questions as to why Microsoft didn't simply buy the company. According to Windsor, companies "are rushing into anything that can be remotely associated with AI." Ominously, the analyst wasn't afraid to draw direct lines between the ongoing AI hype and previous failed hype cycles. "This is precisely what happened with the Internet in 1999, autonomous driving in 2017 and now generative AI in 2024," he wrote.

A New Way to Let AI Chatbots Converse All Day Without Crashing?

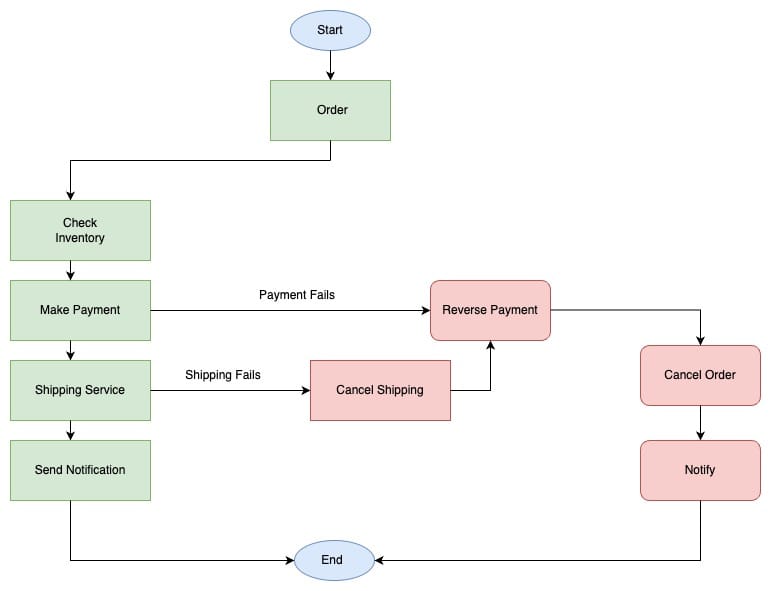

Typically, an AI chatbot writes new text based on text it has just seen, so it stores recent tokens in memory, called a KV Cache, to use later. The attention mechanism builds a grid that includes all tokens in the cache, an “attention map” that maps out how strongly each token, or word, relates to each other token. Understanding these relationships is one feature that enables large language models to generate human-like text. But when the cache gets very large, the attention map can become even more massive, which slows down computation. Also, if encoding content requires more tokens than the cache can hold, the model’s performance drops. For instance, one popular model can store 4,096 tokens, yet there are about 10,000 tokens in an academic paper. To get around these problems, researchers employ a “sliding cache” that bumps out the oldest tokens to add new tokens. However, the model’s performance often plummets as soon as that first token is evicted, rapidly reducing the quality of newly generated words. In this new paper, researchers realized that if they keep the first token in the sliding cache, the model will maintain its performance even when the cache size is exceeded.
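
The eviction policy described above can be sketched in a few lines. This is only a toy illustration, not the researchers' implementation: it stores token labels rather than real key/value tensors, and the class name `SinkSlidingCache` and its parameters `capacity` and `num_sink` are hypothetical names chosen for this example.

```python
from collections import deque


class SinkSlidingCache:
    """Toy token cache that pins the earliest tokens and slides the rest.

    Hypothetical sketch: a real KV cache holds per-layer key/value tensors,
    not token labels.
    """

    def __init__(self, capacity: int, num_sink: int = 1):
        if not 0 <= num_sink < capacity:
            raise ValueError("num_sink must be in [0, capacity)")
        self.num_sink = num_sink
        self.sink = []  # earliest tokens, never evicted
        # A deque with maxlen silently drops its oldest item when full.
        self.recent = deque(maxlen=capacity - num_sink)

    def append(self, token):
        if len(self.sink) < self.num_sink:
            self.sink.append(token)
        else:
            self.recent.append(token)

    def tokens(self):
        return self.sink + list(self.recent)


cache = SinkSlidingCache(capacity=4, num_sink=1)
plain = SinkSlidingCache(capacity=4, num_sink=0)  # ordinary sliding cache
for tok in ["t0", "t1", "t2", "t3", "t4", "t5"]:
    cache.append(tok)
    plain.append(tok)

print(cache.tokens())  # ['t0', 't3', 't4', 't5']
print(plain.tokens())  # ['t2', 't3', 't4', 't5']
```

With `num_sink=0` the cache behaves like the plain sliding cache, which loses the first token as soon as capacity is exceeded; with `num_sink=1` the first token survives every eviction.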

AI and compliance: The future of adverse media screening in FinTech

Unlike static databases, AI systems continuously learn and adapt from the data they process. This means that they become increasingly effective over time, able to discern patterns and flag potential risks with greater accuracy. This evolving intelligence is crucial for keeping pace with the sophisticated techniques employed by individuals or entities trying to circumvent financial regulations. Furthermore, the implementation of AI in adverse media screening fosters a more robust compliance framework. It empowers FinTech companies to preemptively address potential regulatory challenges by providing them with actionable insights. This proactive approach to compliance not only safeguards the institution but also ensures the integrity of the financial system at large. Despite the promising benefits, the integration of AI into adverse media screening is not without challenges. Concerns regarding data privacy, the potential for bias in AI algorithms, and the need for transparent methodologies are at the forefront of discussions among regulators and companies alike.

How to Get Tech-Debt on the Roadmap

Addressing technical debt is costly, emphasizing the need to view engineering efforts beyond the individual contributor level. Companies often use the revenue per employee metric as a gauge of the value each employee contributes towards the company's success. Quick calculations suggest that engineers, who typically constitute about 30-35% of a company's workforce, are expected to generate approximately one million dollars in revenue each through their efforts. ... Identifying technical debt is complex. It encompasses customer-facing features, such as new functionalities and bug fixes, as well as behind-the-scenes work like toolchains, testing, and compliance, which become apparent only when issues arise. Additionally, operational aspects like CI/CD processes, training, and incident response are crucial, non-code components of system management. ... Service Level Objectives (SLOs) stand out as a preferred tool for connecting technical metrics with business value, primarily because they encapsulate user experience metrics, offering a concrete way to demonstrate the impact of technical decisions on business outcomes.
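
One common way to turn an SLO into a number that a roadmap discussion can use is an error budget: the share of requests that may fail before the objective is missed. The excerpt does not describe this calculation, so the sketch below is an assumption layered on top of it; the function name `error_budget_remaining` and the sample figures are hypothetical.

```python
def error_budget_remaining(slo_target: float, total: int, failed: int) -> float:
    """Return the unspent fraction of the error budget (negative if overspent).

    slo_target is the required success ratio, e.g. 0.999 for "99.9% of
    requests succeed". Hypothetical helper for illustration only.
    """
    if not 0.0 < slo_target < 1.0:
        raise ValueError("slo_target must be strictly between 0 and 1")
    if total <= 0:
        raise ValueError("total must be positive")
    allowed_failures = (1.0 - slo_target) * total
    return 1.0 - failed / allowed_failures


# Assumed sample window: one million requests, 250 of them failed.
remaining = error_budget_remaining(slo_target=0.999, total=1_000_000, failed=250)
print(f"{remaining:.0%} of the error budget is left")

# A looser objective that has already been missed in its window.
overspent = error_budget_remaining(slo_target=0.99, total=1_000, failed=20)
print("budget overspent" if overspent < 0 else "within budget")
```

A shrinking or negative budget gives a user-facing argument for scheduling debt work, which is the kind of link between technical metrics and business outcomes the article attributes to SLOs.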

What are the Essential Skills for Cyber Security Professionals in 2024?

It perhaps goes without saying, but technical proficiency is key. It is essential to understand how networks function and to be able to secure them. This should include knowledge of firewalls, intrusion detection and prevention systems, VPNs and more. Coding and scripting are also crucial, with proficiency in languages such as Python, Java, or C++ invaluable for cybersecurity professionals. ... Coding skills can enable professionals to analyse, identify, and fix vulnerabilities in software and systems, essential when carrying out effective audits of security practices. They are also needed to evaluate new technology being integrated into the business and to implement controls that diminish any risk in its operation. ... There’s no escaping the fact that data in general is the lifeblood of modern business. As a result, every cyber security professional will benefit from learning data analysis skills. This does not mean becoming a data scientist, but upskilling in areas such as statistics that can have a profound impact on your job. At the very least you need to be able to understand what the data is telling you. Otherwise, you’re simply following what other people – usually in the data team – tell you.

Removing the hefty price tag: cloud storage without the climate cost

Firstly, businesses should consider location. This means picking a cloud storage provider that’s close to a power facility. This is because distance matters. If electricity travels a long way between generation and use, a percentage is lost. In addition, data centers located in underwater environments or cooler climates can reduce the energy required for cooling. Next, businesses should ask green providers about what they’re doing to minimize their environmental impact. For example, powering their operations with solar, wind, or biofuels reduces reliance on fossil fuels and so lowers GHG emissions. Some facilities will house large battery banks to store renewable energy and ensure a continuous, eco-friendly power supply. Last but certainly not least, technology offers a powerful avenue for enhancing the energy efficiency of cloud storage. Some providers have been investing in algorithms, software, and hardware designed to optimize energy use. For instance, introducing AI and machine learning algorithms or frequency scaling can drastically improve how data centers manage power consumption and cooling.

RESTful APIs: Essential Concepts for Developers

One of these principles is called statelessness, which emphasizes that each request from a client to the server must contain all the information necessary to understand and process the request. This includes the endpoint URI, the HTTP method, any headers, query parameters, authentication tokens, and session information. By eliminating the need for the server to store session state between requests, statelessness enhances scalability and reliability, making RESTful APIs ideal for distributed systems. Another core principle of REST architecture is the uniform interface, promoting simplicity and consistency in API design. This includes several key concepts, such as resource identification through URIs (endpoints), the use of standard HTTP methods like GET, POST, PUT, and DELETE for CRUD operations, and the adoption of standard media types for representing resources. ... RESTful API integration allows developers to leverage the functionalities of external APIs to enhance the capabilities of their applications. This includes accessing external data sources, performing specific operations, or integrating with third-party services.
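
As a rough illustration of statelessness, the sketch below builds a request that carries its own method, URI, query parameters, and credentials, so a server would need no stored session to process it. It uses only Python's standard library and sends nothing over the network; the host `api.example.com`, the `/orders/42` path, the token value, and the helper name `build_request` are all hypothetical.

```python
from urllib.parse import urlencode
from urllib.request import Request

BASE_URL = "https://api.example.com"  # hypothetical host, for illustration only


def build_request(method, path, token, params=None):
    """Build a self-contained request: everything the server needs is inside it."""
    query = f"?{urlencode(params)}" if params else ""
    return Request(
        url=f"{BASE_URL}{path}{query}",
        method=method,  # standard HTTP verb (uniform interface)
        headers={
            "Authorization": f"Bearer {token}",  # credentials travel with each call
            "Accept": "application/json",        # standard media type
        },
    )


# The request is only constructed and inspected here, never sent.
req = build_request("GET", "/orders/42", token="demo-token", params={"expand": "items"})
print(req.get_method(), req.full_url)
print(req.get_header("Authorization"))
```

Because no server-side session is assumed, any replica behind a load balancer could handle this request, which is where the scalability benefit described above comes from.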

The dawn of eco-friendly systems development

First, although we are good at writing software, we struggle to create software that optimally utilizes hardware resources. This leads to inefficiencies in power consumption. In the era of cloud computing, we view hardware resources as a massive pool of computing goodness readily available for the taking. No need to think about efficiency or optimization. Second, there has been no accountability for power consumption. Developers and devops engineers do not have access to metrics demonstrating poorly engineered software’s impact on hardware power consumption. In the cloud, this lack of insight is usually worse. Hardware inefficiency costs are hidden since the hardware does not need to be physically purchased; it’s on demand. Cloud finops may change this situation, but it hasn’t yet. Finally, we don’t train developers to write efficient code. A highly optimized application could be 500% more efficient in power consumption metrics than a nonoptimized one. I have watched this situation deteriorate over time. We had to write efficient code back in the day because the cost and availability of processors, storage, and memory were prohibitive and limited.

10 Essential Measures To Secure Access Credentials

Eliminating passwords should be every organization’s goal, but if that’s not a near-term possibility, implement advanced password policies that exceed traditional standards. Encourage the use of passphrases with a minimum length of 16 characters, incorporating complexity through variations in character types, as well as password changes every 90 days. Additionally, advocate for the use of password managers and passkeys, where possible, and educate end users on the dangers of reusing passwords across different services. ... Ensure the encryption of credentials using advanced cryptographic algorithms and manage encryption keys securely with hardware security modules or cloud-based key management services. Implement zero-trust architectures to safeguard encrypted data further. ... Implement least privilege access controls, which give users only the minimum level of access needed to do their jobs, and regularly audit permissions. Use just-in-time (JIT) and just-enough-access (JEA) principles to dynamically grant access as needed, reducing the attack surface by limiting excessive permissions.
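
The just-in-time idea can be sketched as a grant that names a single permission and expires on its own. This is a minimal in-memory illustration under assumed names (`Grant`, `JitAccess`, the `db:read` permission string); a production system would rely on an identity provider or privileged access management product rather than code like this.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class Grant:
    user: str
    permission: str       # one narrowly scoped permission (just enough access)
    expires_at: datetime  # the grant lapses automatically (just in time)


class JitAccess:
    """Hypothetical in-memory store of short-lived, single-permission grants."""

    def __init__(self):
        self._grants = []

    def grant(self, user, permission, minutes, now=None):
        now = now or datetime.now(timezone.utc)
        new_grant = Grant(user, permission, now + timedelta(minutes=minutes))
        self._grants.append(new_grant)
        return new_grant

    def is_allowed(self, user, permission, now=None):
        now = now or datetime.now(timezone.utc)
        return any(
            g.user == user and g.permission == permission and now < g.expires_at
            for g in self._grants
        )


# Fixed timestamps keep the example deterministic.
start = datetime(2024, 3, 30, 9, 0, tzinfo=timezone.utc)
access = JitAccess()
access.grant("alice", "db:read", minutes=30, now=start)

print(access.is_allowed("alice", "db:read", now=start + timedelta(minutes=10)))   # True
print(access.is_allowed("alice", "db:write", now=start + timedelta(minutes=10)))  # False
print(access.is_allowed("alice", "db:read", now=start + timedelta(minutes=45)))   # False
```

Denying by default and letting grants lapse means there are no standing permissions to forget about, which is how this pattern limits the attack surface.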

What car shoppers need to know about protecting their data

You can ask representatives at the dealership about a carmaker’s privacy policies and if you have the ability to opt-in or opt-out of data collection, data aggregation and data monetization (the selling of your data to third-party vendors), said Payton. Additionally, ask if you can be anonymized and not have the data aggregated under your name and your vehicle’s unique identifying number, she said. People at the dealership “might even point you towards talking to the service manager, who often has to deal with any repairs and any follow up and technical components,” said Drury. ... These days, many newer vehicles essentially have an onboard computer. If you don’t want to be tracked or have vehicle data collected and shared, you might find instructions in your owner’s manual on how to wipe out your personalized data and information from the onboard computer, said Payton. “That can be a great way if you are already in a car and you love the car, but you don’t like the data tracking,” she said. While you may not know if the data was already collected and sent out to third parties, you could do this on a periodic basis, she said.

Quote for the day:

"Thinking should become your capital asset, no matter whatever ups and downs you come across in your life." -- Dr. APJ Kalam