Quote for the day:

"You can only lead others where you yourself are willing to go." -- Lachlan McLean

CISOs must prove the business value of cyber — the right metrics can help

With a foundational enterprise risk management (ERM) program, and by aligning metrics to business priorities, cybersecurity leaders can ultimately prove the value of the cybersecurity function. Useful examples of metrics in business terms include maturity, compliance, risk, budget, business value streams, and the status of SecDevOps (shift-left) adoption, Oberlaender explains. But how does a cybersecurity expert learn what’s important to the business? ... “Boards are faced with complex matters such as impact on interest rates, tariffs, stock price volatility, supply chain issues, profitability, and acquisitions. Then the CISO enters the boardroom with their MITRE ATT&CK framework, patching metrics and NIST maturity models,” Hetner continues. “These metrics are not aligned to what the board is conditioned to reviewing.” ... Rather than just asking “are we secure?”, business leaders are asking what metrics their cyber teams are using to measure and quantify risk, and how they’re spending against those risks. For CISOs, this goes beyond measuring against frameworks such as NIST, listing a litany of security vulnerabilities they patched, or reporting their mean time to respond. “Instead, we can say, ‘This is our potential financial exposure’,” Nolen explains. “So now you’re talking dollars and cents rather than CVEs and technical scores that board members don’t care about. What they care about is the bottom line.”
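Nolen’s “dollars and cents” framing can be illustrated with annualized loss expectancy (ALE), a long-standing quantitative risk formula: single loss expectancy (asset value × exposure factor) multiplied by the expected number of incidents per year. The article does not say which quantification method Nolen uses; this Python sketch is one simple option, and every scenario and dollar figure in it is hypothetical.

```python
from dataclasses import dataclass


@dataclass
class RiskScenario:
    """A hypothetical loss scenario; all figures are illustrative assumptions."""
    name: str
    asset_value: float       # value at risk, in dollars
    exposure_factor: float   # fraction of asset value lost per incident (0..1)
    annual_rate: float       # expected incidents per year (ARO)

    @property
    def single_loss_expectancy(self) -> float:
        # SLE = asset value x exposure factor
        return self.asset_value * self.exposure_factor

    @property
    def annualized_loss_expectancy(self) -> float:
        # ALE = SLE x annualized rate of occurrence
        return self.single_loss_expectancy * self.annual_rate


def total_exposure(scenarios: list[RiskScenario]) -> float:
    """Sum ALE across scenarios to get one board-level dollar figure."""
    return sum(s.annualized_loss_expectancy for s in scenarios)


if __name__ == "__main__":
    # Hypothetical scenarios, not taken from the article.
    scenarios = [
        RiskScenario("Ransomware on ERP", 5_000_000, 0.4, 0.1),
        RiskScenario("Customer data breach", 8_000_000, 0.25, 0.05),
    ]
    for s in scenarios:
        print(f"{s.name}: ${s.annualized_loss_expectancy:,.0f} per year")
    print(f"Total annualized exposure: ${total_exposure(scenarios):,.0f}")
```

Summing ALE across scenarios yields a single annual dollar figure that a board can weigh against the cost of controls, which speaks directly to how the cyber budget is being spent against risk. Richer methods (for example, simulating ranges of losses rather than point estimates) follow the same idea.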

Feeding the AI beast, with some beauty

AI-driven growth is placing an unprecedented load on data centres worldwide, and India is poised to shoulder a large share of the additional electricity, real estate, and cooling burden created by rising AI demand. IEA estimates show this AI-driven demand accelerating rapidly. Under realistic scenarios, AI workloads alone could require on the order of 1–1.5 GW of continuous IT power in India by 2030, equivalent to roughly 8.8–13 TWh a year. This translates into a significant new draw on grids, water resources, and capital expenditure for cooling and power infrastructure. Recent analyses indicate that while AI’s share of data centre power today is in the single digits to low teens (per cent), it could climb to 20–40 per cent or more by 2030 in some scenarios, fundamentally reshaping the power-consumption profile of digital infrastructure. ... As data centres grow in scale, sustainability is becoming a competitive differentiator, and that’s where Life Cycle Assessments (LCAs) and Environmental Product Declarations (EPDs) play a critical role. An LCA is a systematic method for evaluating the total environmental impact of a product, process, or system across its entire life cycle. For a data centre, this spans both upstream (embodied) impacts, such as construction materials, IT equipment manufacturing, and cooling and power infrastructure including gensets, and operational impacts such as electricity consumption.

8 IT leadership tips for first-time CIOs

Generally speaking, the first three years can make or break your IT leadership career, given that digital leaders globally tend to stay at one company for just over that length of time on average, according to the 2025 Nash Squared Digital Leadership Report. CIOs looking to sidestep that statistic are taking intentional measures: ensuring they get early wins and, perhaps most importantly, not coming into their role with preconceived ideas about how to lead or assuming that what worked in a past job can be replicated. ... The CTO of staffing and recruiting firm Kelly says that “building momentum, finding ways to get quick wins from the low hanging fruit” will help build credibility with the leadership team. Then, you can parlay those into bigger wins and avoid spinning out, he says. ... While making connections and establishing relationships is critical, Lewis stresses the importance of not rushing to change things right away when you’re new to the job. “Let it set for a while,” he says. ... This is especially true of midsize and larger midsize organizations “where the clarity of strategy and clarity of what’s important … isn’t always well documented and well thought out,” Rosenbaum says. Knowing the maturity of your organization is really important, he says. “Some CIO roles are just about keeping the lights on, making sure security is good at a lower level. As the company starts to mature, they start thinking about technology as an enabler, and to that end, they start having maybe a more unified technology strategy.”

Drata’s VP of Data on Rethinking Data Ops for the AI Era: Crawl, Walk, Run — Then Sprint

While GenAI may be the shiny new tool, Solomon makes it clear that the foundational work around ingestion and transformation is far from trivial. “We live and die by making sure that all the data has been ingested in a fresh manner into the data warehouse,” he explains. He describes the “bread and butter” of the team: synchronizing thousands of MySQL databases from a single-tenant production architecture into the warehouse, closer to real time. “We do a lot of activities with regard to the CDC pipeline, which is just like driving terabytes of data per day.” But the data team isn’t working in isolation: go-to-market (GTM) executives return from conferences excited about GenAI. ... Rather than building fully fledged pipelines from day one, the team prioritizes quick feedback loops, using sandboxes, cloud notebooks, or Streamlit apps to test hypotheses. Once business impact is validated, the team gradually introduces cost tracking, governance, and scalability. If a stakeholder’s hypothesis lacks merit, there is no point in building complex data pipelines, governance frameworks, or cost-tracking systems. This shift in mindset, he explains, is something many data teams are grappling with today. Traditionally, data teams were trained to focus on building scalable, robust pipelines from day one, which often required significant upfront effort and led to cost inefficiencies and delays.

Model Context Protocol Servers: Build or Buy?

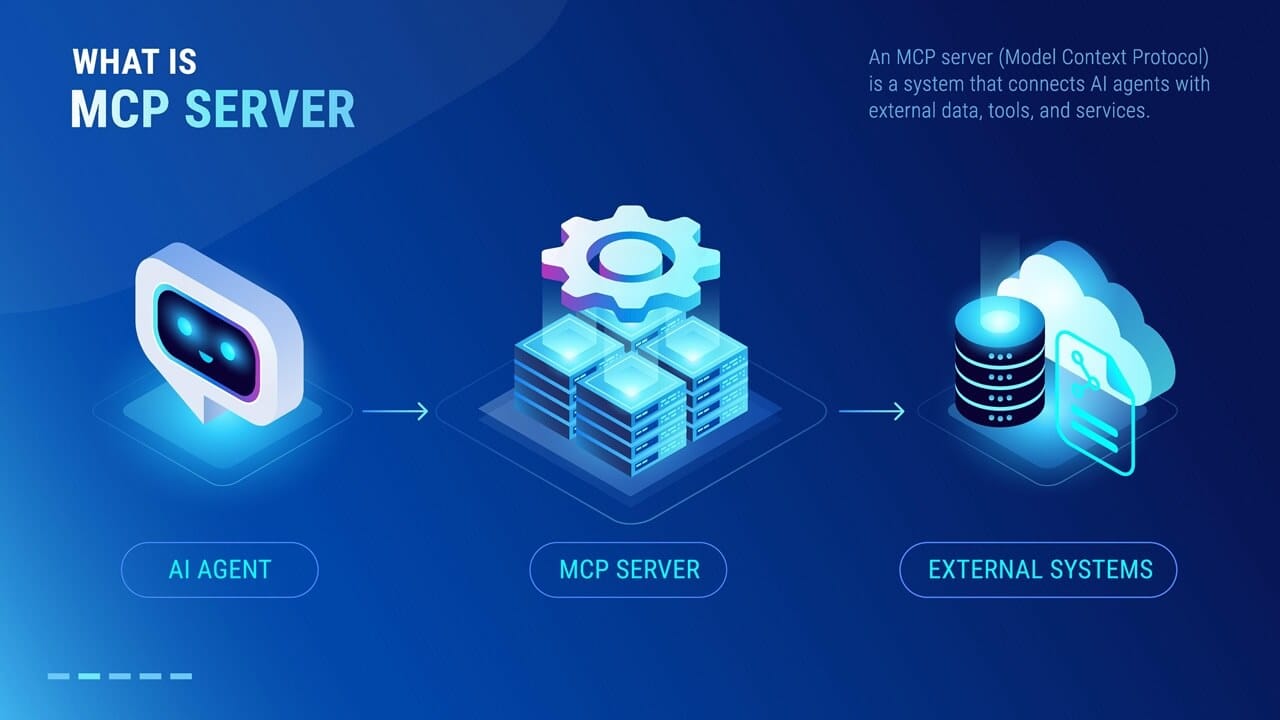

"The tension lies in whether you have the sustained capacity to keep pace with

protocols that are still being debated by their maintainers," said Rishi

Bhargava, co-founder at Descope, a customer and agentic IAM platform. "Are you

prepared to build the plane while it's flying, or would you rather upgrade a

finished plane mid-flight?" ... "From a business perspective, the build versus

buy decision for MCP servers boils down to strategic priorities and risk

appetite," Jain said. Building MCP servers in-house gives you "complete

control," but buying provides "speed, reliability, and lower operational

burden," he said. But others think there's no reason to rush your decision. ...

"Most companies shouldn't be doing either yet," he said, explaining that

companies should first focus on the specific business goals they are trying to

achieve, rather than on which existing applications they think should have AI

features added. "Build when you have an actual AI application that requires

custom data integration and you understand exactly what intelligence you're

trying to deploy. If you're simply connecting ChatGPT to your CRM, you don't

need MCP at all," Prywata said. ... "It is usually best to build [MCP servers]

in-house when compliance, performance tuning, or data sovereignty are key

priorities for the business," said Marcus McGehee, founder at The AI Consulting

Lab.
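To give a sense of what "building" involves: MCP messages use JSON-RPC 2.0, and a server advertises its tools through a `tools/list` method and executes them through `tools/call`. The Python sketch below is a deliberately minimal, hypothetical dispatcher for those two methods only. It omits the initialization handshake, transports, capability negotiation, argument validation, and authorization that a real server needs, and the `lookup_account` tool and its fake CRM data are invented for illustration. Because the specification is still evolving, check the current MCP spec before relying on any field names shown here.

```python
import json
from typing import Any, Callable


def lookup_account(account_id: str) -> str:
    """Hypothetical tool standing in for an internal system a custom server wraps."""
    fake_crm = {"42": "Acme Corp (enterprise tier)"}
    return fake_crm.get(account_id, "unknown account")


# Tool registry: name -> (description, JSON Schema for arguments, handler).
TOOLS: dict[str, tuple[str, dict, Callable[..., str]]] = {
    "lookup_account": (
        "Look up a customer account by ID",
        {
            "type": "object",
            "properties": {"account_id": {"type": "string"}},
            "required": ["account_id"],
        },
        lookup_account,
    ),
}


def _error(req_id: Any, code: int, message: str) -> str:
    return json.dumps({"jsonrpc": "2.0", "id": req_id,
                       "error": {"code": code, "message": message}})


def handle(raw: str) -> str:
    """Dispatch one JSON-RPC 2.0 request for tools/list or tools/call."""
    req = json.loads(raw)
    req_id = req.get("id")
    method = req.get("method")
    if method == "tools/list":
        result: Any = {"tools": [
            {"name": name, "description": desc, "inputSchema": schema}
            for name, (desc, schema, _) in TOOLS.items()
        ]}
    elif method == "tools/call":
        params = req.get("params", {})
        entry = TOOLS.get(params.get("name"))
        if entry is None:
            # -32602 is JSON-RPC's standard "invalid params" code.
            return _error(req_id, -32602, "unknown tool")
        text = entry[2](**params.get("arguments", {}))
        result = {"content": [{"type": "text", "text": text}]}
    else:
        # -32601 is JSON-RPC's standard "method not found" code.
        return _error(req_id, -32601, "method not found")
    return json.dumps({"jsonrpc": "2.0", "id": req_id, "result": result})


if __name__ == "__main__":
    print(handle('{"jsonrpc": "2.0", "id": 1, "method": "tools/list"}'))
    print(handle('{"jsonrpc": "2.0", "id": 2, "method": "tools/call", '
                 '"params": {"name": "lookup_account", "arguments": {"account_id": "42"}}}'))
```

Even this toy shows where the maintenance burden Bhargava describes comes from: every message shape above is defined by a protocol that is still changing, so an in-house server has to track the spec, whereas a bought server shifts that work to the vendor.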

Every CIO Fails; The Smart Ones Admit It

There's a "hero CIO" myth deeply rooted in our mindset: the idea that you're the person who makes technology work, no matter what. Admitting failure feels like admitting incompetence, especially in boardrooms where few understand the complexity of IT. Organizational incentives also discourage openness. Many companies punish failure more than they reward learning. I've seen talented CIOs denied promotion because of a single delayed project, even when their broader portfolio delivered value. When institutional memory focuses on what went wrong rather than what was learned, people stop taking risks. The second factor is C-suite politics. In some environments, transparency becomes ammunition: another team might use a project delay to justify requests for budget increases or to exert influence. And finally, CIOs worry about vendor perception: admitting setbacks could affect pricing, support, or their reputation with partners. ... Build your transparency muscle in peacetime, not when something is on fire. By the time a crisis hits, it's too late to establish credibility. Make transparency habitual. Share work in progress, not just results. Celebrate learning, not perfection. Run "pre-mortems" where you assume a project has failed and work backwards to identify what could go wrong. And when you make a mistake, own it publicly. The honesty earns you more trust than a polished explanation ever will.

6 proven lessons from the AI projects that broke before they scaled

An analysis of dozens of AI proofs of concept (PoCs), some of which sailed through to full production use and some of which didn’t, reveals six common pitfalls. Interestingly, it’s usually not the quality of the technology but misaligned goals, poor planning, or unrealistic expectations that cause failure. ... Define specific, measurable objectives upfront. Use SMART criteria. For example, aim for “reduce equipment downtime by 15% within six months” rather than a vague “make things better.” Document these goals and align stakeholders early to avoid scope creep. ... Invest in data quality over volume. Use tools like Pandas for preprocessing and Great Expectations for data validation to catch issues early. Conduct exploratory data analysis (EDA) with visualizations (for example, using Seaborn) to spot outliers or inconsistencies. Clean data is worth more than terabytes of garbage. ... Start simple. Use straightforward algorithms such as scikit-learn’s random forest or XGBoost to establish a baseline. Only scale to complex models, such as TensorFlow-based long short-term memory (LSTM) networks, if the problem demands it. Prioritize explainability with tools like SHAP to build trust with stakeholders. ... Plan for production from day one. Package models in Docker containers and deploy with Kubernetes for scalability. Use TensorFlow Serving or FastAPI for efficient inference. Monitor performance with Prometheus and Grafana to catch bottlenecks early. Test under realistic conditions to ensure reliability.
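To make the data-quality lesson concrete, here is a Python sketch of the kinds of checks a validation tool such as Great Expectations formalizes (required fields present, values in range, no duplicates), written with only the standard library so it runs anywhere. It is not Great Expectations’ API; the sensor schema (`machine_id`, `timestamp`, `temperature_c`) and the temperature bounds are hypothetical, loosely inspired by the article’s equipment-downtime example.

```python
from dataclasses import dataclass, field

REQUIRED = ("machine_id", "timestamp", "temperature_c")
TEMP_RANGE = (-40.0, 150.0)  # assumed plausible bounds for a sensor reading


@dataclass
class ValidationReport:
    failures: list[str] = field(default_factory=list)

    @property
    def ok(self) -> bool:
        return not self.failures


def validate_readings(rows: list[dict]) -> ValidationReport:
    """Apply expectation-style checks to sensor rows (hypothetical schema)."""
    report = ValidationReport()
    seen = set()
    lo, hi = TEMP_RANGE
    for i, row in enumerate(rows):
        # Expectation 1: required fields are present and not null.
        for col in REQUIRED:
            if row.get(col) is None:
                report.failures.append(f"row {i}: {col} is missing")
        # Expectation 2: temperature falls inside the assumed range.
        temp = row.get("temperature_c")
        if temp is not None and not (lo <= temp <= hi):
            report.failures.append(f"row {i}: temperature_c={temp} out of range")
        # Expectation 3: (machine_id, timestamp) is unique when both are present.
        key = (row.get("machine_id"), row.get("timestamp"))
        if None not in key:
            if key in seen:
                report.failures.append(f"row {i}: duplicate reading {key}")
            seen.add(key)
    return report


if __name__ == "__main__":
    rows = [
        {"machine_id": "m1", "timestamp": "2025-01-01T00:00", "temperature_c": 72.5},
        {"machine_id": "m1", "timestamp": "2025-01-01T00:00", "temperature_c": 900.0},
    ]
    for failure in validate_readings(rows).failures:
        print(failure)
```

Running checks like these before any modeling surfaces problems early, when they are cheapest to fix; a dedicated tool adds reusable expectation suites, documentation, and reporting on top of the same idea.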

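The “start simple” lesson comes down to a comparison: a more complex model earns its extra cost only if it measurably beats a simpler one. The Python sketch below shows that gate using only the standard library. In practice the simple predictions might come from a random forest and the complex ones from an LSTM, but here both are plain label lists. The majority-class floor and the 2-point accuracy threshold (`min_gain`) are illustrative assumptions, not recommendations from the article.

```python
from collections import Counter


def accuracy(y_true: list[int], y_pred: list[int]) -> float:
    """Fraction of predictions that match the true labels."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("need equal-length, non-empty label lists")
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def majority_baseline(y_train: list[int], n: int) -> list[int]:
    """Simplest possible floor: predict the most common training label n times."""
    most_common_label = Counter(y_train).most_common(1)[0][0]
    return [most_common_label] * n


def worth_scaling_up(
    y_true: list[int],
    simple_pred: list[int],
    complex_pred: list[int],
    min_gain: float = 0.02,  # hypothetical threshold: 2 accuracy points
) -> bool:
    """Adopt the complex model only if it beats the simple one by at least min_gain."""
    gain = accuracy(y_true, complex_pred) - accuracy(y_true, simple_pred)
    return gain >= min_gain


if __name__ == "__main__":
    y_test = [1, 0, 1, 1, 0, 1, 0, 1]
    floor = majority_baseline([1, 1, 0, 1], len(y_test))
    simple = [1, 0, 1, 0, 0, 1, 0, 1]     # e.g. from a random forest (hypothetical)
    complex_ = [1, 0, 1, 0, 0, 1, 0, 1]   # e.g. from an LSTM (hypothetical)
    print("floor accuracy:", accuracy(y_test, floor))
    print("simple model accuracy:", accuracy(y_test, simple))
    print("scale up?", worth_scaling_up(y_test, simple, complex_))
```

Accuracy is used only to keep the sketch short; the same gate works with whatever metric the SMART objective defines, such as downtime reduction.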
Andela CEO talks about the need for ‘borderless talent’ amid work visa limitation

Globally, three out of four IT employers say they lack the tech talent they need, and the outlook will only get more dire as AI creates demand for high-skilled specialists like data engineers, senior architects, and agentic orchestrators. Visa programs aren’t designed by the laws of supply and demand. They’re defined by policymakers and updated infrequently, so they’ll never truly be in sync with the needs of the labor market. ... Brilliant people exist around the world. It’s why employers want to sponsor people for H-1B visas. But hiring outside of those traditional pathways (to work with a brilliant machine learning engineer from Cairo or São Paulo, for example) is…a long, painful process that takes months and is inaccessible to them. They don’t know that they can find the right partner, someone who has sorted this all out, vetted talent, and developed compliance with global labor and tax laws. Once they understand that those partners exist, the global workforce becomes instantly accessible to them. ... Technical hiring still feels like a gamble, even though software development is, relatively speaking, packed with deterministic skills. There are two main problems. One is the data problem: there’s not enough reliable data about what a job actually requires and what a worker is capable of doing. Today, we rely on resumes and job descriptions.

The Overwhelm Epidemic: Why Resilience Begins with You

People have so much to do and not enough time. There’s nothing new about the phenomenon of not having enough time to do what needs to be done, but today it’s different. Today, it’s unique because this feeling of overwhelm has been continuously expanding since early 2020, when we experienced the pandemic. We’re being overwhelmed to an extent most people have no experience dealing with. For you in operational resilience, I believe self-care is more critical now than it has ever been. You are only able to help your clients and their systems be resilient to the extent that you are taking care of yourself and are resilient yourself. ... Most say something like, “I’m going to double down and focus on this. I’m going to work harder and spend as much time as needed, even if it means cutting into my already precious personal time.” They think working harder is the best approach, but here’s the thing: they are wrong.

When you are operating at high stress levels, introducing more stress by doubling down and working harder actually reduces your output. ... Bottom line, a thriving, elite mindset is the foundation of personal wellbeing and professional success.

Turning to positive psychology, Martin Seligman’s model of human flourishing is underpinned by 24 positive character strengths. While more research is still needed, the research to date has concluded that, of the 24, the best predictor of living a flourishing, thriving life is gratitude.
