Quote for the day:

"Thinking should become your capital asset, no matter whatever ups and downs you come across in your life." -- Dr. APJ Kalam

Eval engineering: The missing piece of agentic AI governance

In the SiliconANGLE article, Jason Bloomberg highlights eval engineering as a vital yet often overlooked component of agentic AI governance, one needed to keep increasingly powerful autonomous agents from malfunctioning. Using independent validator agents to monitor other AI agents is an ideal solution, but running these validator models in live production environments introduces significant latency and token consumption bottlenecks. To mitigate these constraints, eval engineering focuses on developing evaluation frameworks, often using large language models as judges, to test and observe AI workflows throughout their lifecycle. Startups tackle the production bottlenecks in different ways: Maxim AI and Confident AI employ out-of-band asynchronous pipelines and traffic sampling, whereas Arize AI relies on lightweight monitoring, and Conscium uses virtual simulations. Notably, Galileo AI addresses the efficiency dilemma with its ChainPoll methodology and Luna, a purpose-built, cost-effective evaluation model that allows full production sampling. Galileo's imminent acquisition by Cisco to join its Splunk division underscores the commercial importance of this discipline. Ultimately, the article emphasizes that as large language models mature, the industry must pivot toward solving these core cost and performance constraints, shifting the focus from merely making models better to making them faster and more affordable for scalable enterprise governance.
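The out-of-band, sampled evaluation pattern mentioned above can be illustrated with a minimal Python sketch. It is not taken from the article or from any vendor's product: the `OutOfBandEvaluator` class, the `should_sample` helper, and the keyword-based `judge` stub (standing in for a real LLM-as-judge call) are all hypothetical names chosen for illustration. The point is only that the request path enqueues a trace and returns, while scoring happens on a background worker.

```python
import hashlib
import queue
import threading


def should_sample(trace_id: str, rate: float) -> bool:
    """Deterministically select roughly `rate` of traces by hashing the trace ID."""
    digest = hashlib.sha256(trace_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big")  # integer in [0, 2**64)
    return bucket < int(rate * 2**64)


def judge(output: str) -> float:
    """Hypothetical stand-in for an LLM-as-judge call; returns a score in [0, 1]."""
    return 0.0 if "error" in output.lower() else 1.0


class OutOfBandEvaluator:
    """Scores sampled agent outputs on a background thread, off the request path."""

    def __init__(self, rate: float):
        self.rate = rate
        self.pending = queue.Queue()
        self.scores = {}
        worker = threading.Thread(target=self._run, daemon=True)
        worker.start()

    def observe(self, trace_id: str, output: str) -> None:
        """Called on the request path: only enqueues, never waits for the judge."""
        if should_sample(trace_id, self.rate):
            self.pending.put((trace_id, output))

    def _run(self) -> None:
        while True:
            trace_id, output = self.pending.get()
            self.scores[trace_id] = judge(output)
            self.pending.task_done()

    def drain(self) -> None:
        """Block until every queued trace has been scored (useful in tests)."""
        self.pending.join()
```

Lowering `rate` trades evaluation coverage for judge cost, which is the trade-off the sampling approaches accept; a cheaper judge is what would make a rate of 1.0 (full production sampling) affordable.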

In the SiliconANGLE article, Jason Bloomberg highlights eval engineering as a

vital yet often overlooked component of agentic AI governance required to keep

increasingly powerful autonomous agents from malfunctioning. While employing

independent validator agents to monitor other AI agents is an ideal solution,

implementing these validator models in live production environments introduces

significant latency and token consumption bottlenecks. To mitigate these

constraints, eval engineering focuses on developing framework evaluations,

often utilizing large language models as judges, to test and observe AI

workflows throughout their lifecycle. Startups tackle production bottlenecks

using diverse approaches: Maxim AI and Confident AI employ out of band

asynchronous pipelines and traffic sampling, whereas Arize AI relies on

lightweight monitoring, and Conscium utilizes virtual simulations. Notably,

Galileo AI addresses the efficiency dilemma with its ChainPoll methodology and

Luna, a purpose built, cost effective evaluation model that allows full

production sampling. Galileo's imminent acquisition by Cisco to join its

Splunk division underscores the commercial importance of this discipline.

Ultimately, the article emphasizes that as large language models mature, the

industry must pivot toward solving these core cost and performance

constraints, shifting the focus from merely making models better to rendering

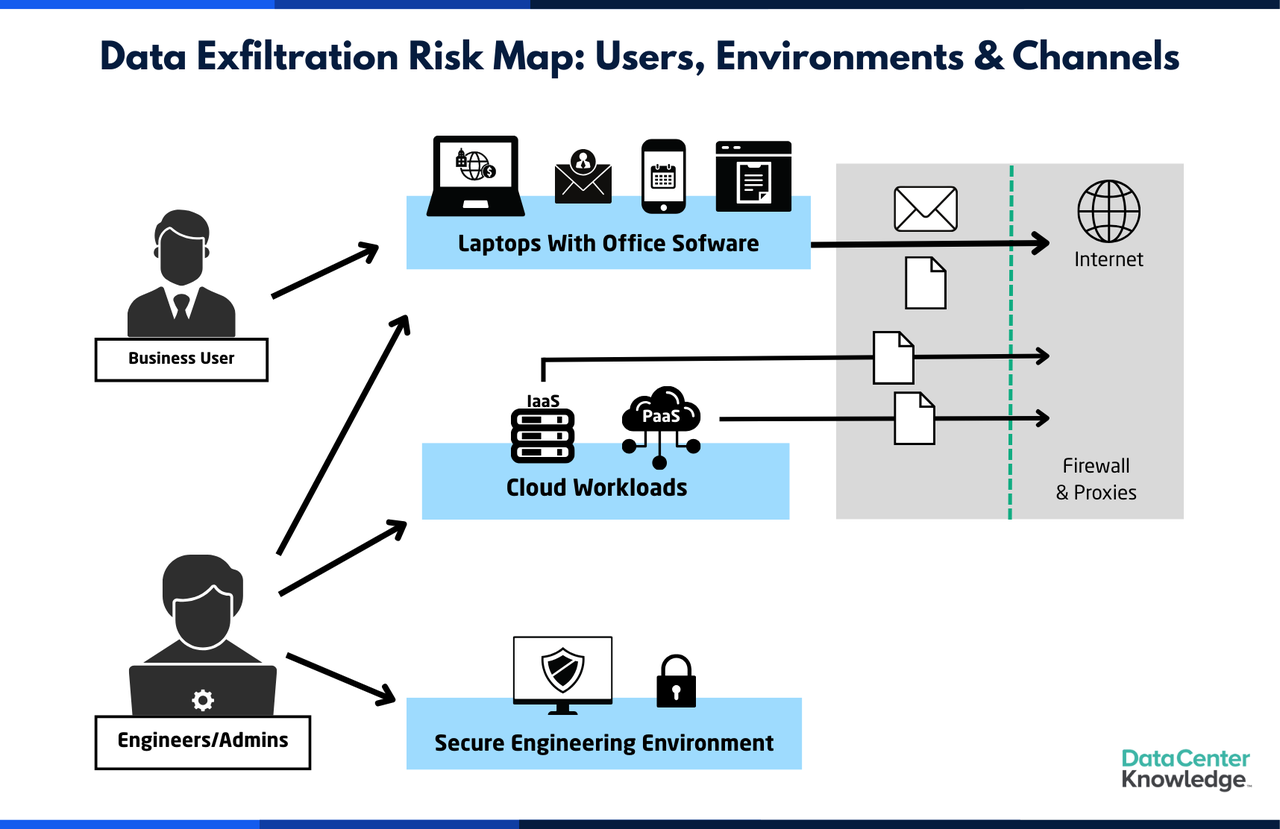

them faster and more affordable for scalable enterprise governance.Virtual vs. physical firewalls: A practical guide for modern networks

The article provides a comprehensive guide contrasting virtual and physical firewalls within modern, dynamic network architectures. Virtual firewalls are software-based security solutions running on shared compute infrastructure, including hypervisors, public cloud platforms, and container environments. They decouple security features from physical hardware, offering exceptional deployment agility, programmatic scaling, and crucial east-west visibility to inspect lateral traffic moving internally between workloads. However, because they are CPU-bound, they can experience performance bottlenecks during compute-intensive tasks like TLS inspection. Conversely, physical firewalls are dedicated hardware appliances built on purpose-built processors. Installed at fixed perimeters, local data centers, or branch offices, they deliver highly predictable, hardware-accelerated throughput for north-south traffic. They remain indispensable for air-gapped systems or strict data sovereignty regulations, though their fixed capacity requires longer procurement times. Ultimately, the article notes that neither solution is universally superior. Instead, most organizations benefit by blending both into a unified hybrid mesh architecture. This approach places physical hardware at high-bandwidth network boundaries while deploying virtual instances inside dynamic cloud environments. To prevent policy drift and dashboard fatigue, the text emphasizes using a centralized, single-pane management platform to streamline deployments, automate logging, and maintain consistent security outcomes across the entire global infrastructure.

Architectural patterns for graph-enhanced RAG: Moving beyond vector search in production

In this article, Daulet Amirkhanov explains that while traditional retrieval-augmented generation (RAG) effectively uses vector databases for unstructured semantic search, it often fails in complex enterprise domains because flattening data discards critical structural topologies. This structural limitation leads to model hallucinations during multi-hop reasoning tasks like tracing intricate supply chain disruptions. To overcome this context loss, the author introduces a graph-enhanced RAG architecture featuring a three-layer hybrid stack. First, structured entities and relationships are explicitly extracted at ingestion using LLMs or entity recognition. Next, this relational data is stored in graph databases like Neo4j, where vector embeddings serve as node properties. Finally, hybrid queries execute vector scans to locate entry points and traverse graph paths to gather context-rich information. This approach introduces a production latency tax of 200 to 500 milliseconds, which can be mitigated through semantic caching, and requires managing data dependencies via change data capture pipelines, but it ensures deterministic explainability. Ultimately, Amirkhanov provides an infrastructure framework advising organizations to deploy vector-only RAG for flat text and low-latency requirements, while upgrading to graph-enhanced RAG for highly regulated domains requiring multi-hop relationship mapping.
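The hybrid query step described above (a vector scan to find an entry point, then a graph traversal to collect related context) can be sketched in plain Python. This is a toy, in-memory illustration under stated assumptions, not the author's implementation and not Neo4j code: the `NODES` and `EDGES` data, the three-dimensional embeddings, and the `hybrid_retrieve` function are all invented for the example.

```python
import math
from collections import deque


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


# Hypothetical supply-chain graph: embeddings stored as node properties,
# typed relationships stored as an adjacency list.
NODES = {
    "Supplier B": [0.0, 1.0, 0.0],
    "Factory A": [1.0, 0.0, 0.0],
    "Port C": [0.0, 0.0, 1.0],
    "Retailer D": [0.7, 0.7, 0.0],
}
EDGES = {
    "Supplier B": [("SUPPLIES", "Factory A")],
    "Factory A": [("SHIPS_VIA", "Port C")],
    "Port C": [("DELIVERS_TO", "Retailer D")],
}


def hybrid_retrieve(query_vec, max_hops=2):
    """Vector scan for the entry node, then breadth-first traversal up to max_hops."""
    entry = max(NODES, key=lambda name: cosine(query_vec, NODES[name]))
    paths = []
    seen = {entry}
    frontier = deque([(entry, 0)])
    while frontier:
        node, depth = frontier.popleft()
        if depth == max_hops:
            continue  # hop budget exhausted along this branch
        for relation, target in EDGES.get(node, []):
            paths.append((node, relation, target))
            if target not in seen:
                seen.add(target)
                frontier.append((target, depth + 1))
    return entry, paths
```

Calling `hybrid_retrieve([0.1, 0.9, 0.0])` selects "Supplier B" as the entry point and returns the two-hop chain through "Factory A" to "Port C". The returned triples are explicit paths that can be handed to the model as context, which is where the explainability benefit comes from; the extra traversal is also where the added latency would come from in a real system.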

Designing Effective Meetings in Tech: From Time Wasters to Strategic Tools

The DZone article "Designing Effective Meetings in Tech: From Time Wasters to Strategic Tools" argues that engineering meetings should be deliberately redesigned into productive communication and decision-making systems rather than remain a routine source of organizational disruption. To that end, the text outlines five tactical principles aimed at technical leaders. First, organizers must establish a clear scope and explicit expected outcomes beforehand, avoiding ambiguous, open-ended calendar titles. Second, leaders should counter Parkinson's Law by defaulting to shorter, tightly constrained time slots, which forces participants to be intentional. Third, facilitators must redirect conversations away from trivial implementation details, preventing "bikeshedding" by treating the discussion like a focused, high-priority thread of execution. Fourth, preparation is mandatory: sharing technical artifacts like design proposals or Architecture Decision Records at least 24 hours in advance eliminates wasteful synchronous reading and shifts the group's focus to active decision-making. Finally, the author promotes thorough documentation as a scaling mechanism and a "cached artifact" that reduces organizational latency, turning blocking onboarding syncs into strategic collaborative sessions that improve long-term engineering workflow efficiency.

The Hidden Cost of Poor Training Data in Generative AI

The TDWI article highlights that while failed generative AI initiatives are frequently blamed on models, the true culprit is typically poor training data. In a generative AI context, data that is incomplete, mislabeled, biased, or outdated can train systems to be consistently wrong across all future interactions. This triggers a compounding financial and operational chain reaction, causing wasted compute, delayed product launches, legal exposure, and an erosion of enterprise confidence. Specifically, retraining an AI model after data failures can cost three to ten times the initial budget due to wasted GPU cycles, fresh audits, and restarted annotation pipelines. Enterprises often experience success during narrow pilots, only to watch models fail when introduced to messy, real-world production environments. Furthermore, regulatory frameworks like the EU AI Act, GDPR, and HIPAA mandate strict documentation and data traceability, which is far more expensive to build retroactively. To mitigate these hidden costs, organizations must shift their focus to pre-training data quality rather than post-training fixes. Key disciplines include running rigorous pre-training audits, intentionally designing training datasets to mirror real-world distributions, and embedding human validation at scale. Ultimately, prioritizing data integrity early prevents severe reputational risks and enables scalable enterprise AI success.

CtrlS Says AI Is Breaking Traditional Data Centre Assumptions

In an interview with Dataquest, Rahul Dhar of CtrlS explains that the surge in GPU-intensive AI workloads is fundamentally dismantling traditional data center architecture assumptions. While legacy facilities typically manage 5 to 15 kW per rack, modern AI clusters demand an unprecedented 80 to 150 kW or more, shifting industry bottlenecks from physical floor space to power density, cooling capacity, and interconnect efficiency. Consequently, the industry is bifurcating into conventional centers for general workloads and "AI factories" featuring power-first engineering, liquid cooling, and software orchestration. In India, this transition is amplified by the rapid evolution of Global Capability Centers into AI innovation hubs requiring ultra-low latency, GPU-dense environments, and sovereign data architectures. Furthermore, independent operators can successfully compete with dominant hyperscalers by prioritizing geographic proximity, specialized compliance, and localized edge infrastructure for latency-sensitive inference processing. Dhar projects a decisively hybrid future structured around an orchestrated AI fabric where large-scale training remains concentrated in hyperscale clouds while inference moves closer to end users. Ultimately, capital-intensive compute access, strategic grid energy availability, and robust infrastructure engineering, rather than human talent alone, are emerging as the primary bottlenecks shaping global technological innovation velocity over the next decade.

Why every organisation needs a minimum viable company strategy

The article highlights the growing necessity of a Minimum Viable Company (MVC) strategy to combat the prolonged, financially devastating operational disruptions caused by modern cyberattacks. Traditional disaster recovery methods often falter because they attempt to fully restore complex IT systems simultaneously, a tedious process that frequently leaves enterprises incapacitated for weeks or months. Conversely, an MVC strategy shifts focus toward identifying and sustaining only the leanest, most critical operational framework required to continue serving clients during an active crisis. Key areas prioritized typically include communications, identity access, and crucial supply chain or financial systems. Despite widespread recognition of its value, defining an MVC remains exceptionally challenging due to deep structural IT silos, systemic application dependencies, and complex hybrid environments. To operationalize an MVC strategy efficiently, experts recommend allocating a foundational baseline of roughly 20% of the company's production infrastructure (such as storage, compute power, and workload scope) and keeping it entirely immutable and air-gapped. Within this baseline, roughly 10% should be set aside as an isolated cleanroom environment for malware-free recovery. By preparing these parameters in advance and using modern recovery tools, businesses can recover essential functions within hours rather than weeks, dramatically reducing operational downtime and protecting market reputation.
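As a rough worked example of the sizing guidance above, the short Python sketch below computes the baseline and cleanroom allocations for a given production footprint. The `mvc_sizing` function and the 500 TB figure are hypothetical. It also assumes that the 10% cleanroom share is measured against the baseline rather than against total production; the summary leaves this ambiguous, so the percentage is exposed as a parameter.

```python
def mvc_sizing(production_capacity, baseline_pct=20, cleanroom_pct_of_baseline=10):
    """Return (baseline, cleanroom) capacity in the same unit as the input.

    baseline_pct: share of production kept immutable and air-gapped.
    cleanroom_pct_of_baseline: share of that baseline reserved as a cleanroom
    (assumption: measured against the baseline, not against total production).
    """
    baseline = production_capacity * baseline_pct / 100
    cleanroom = baseline * cleanroom_pct_of_baseline / 100
    return baseline, cleanroom


# Hypothetical footprint: 500 TB of production storage.
baseline_tb, cleanroom_tb = mvc_sizing(500)
print(f"Baseline: {baseline_tb} TB, cleanroom: {cleanroom_tb} TB")
```

Under these assumptions, a 500 TB estate would reserve 100 TB as the protected baseline, of which 10 TB forms the cleanroom. If the 10% is instead meant as a share of total production, passing `cleanroom_pct_of_baseline=50` gives the equivalent result.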

Can Laws Stop Deepfakes? South Korea Aims to Find Out

South Korea's local elections serve as a critical test bed for the efficacy of legislative frameworks aimed at curbing political AI deepfakes. The country is pioneering national regulation through two primary statutes: Article 82-8 of the Public Official Election Act, which bans realistic synthetic media for ninety days before an election under penalty of prison or substantial fines, and the AI Basic Act, which mandates explicit watermarks or disclosures on AI-generated content. Additionally, the National Police Agency uses a specialized deepfake detection tool to aid investigations. Despite these aggressive legal tools, experts warn that regulation acts only as a baseline defense due to a fundamental asymmetry in operational speed. Publicly available AI tools can generate and propagate convincing deepfakes globally in seconds via encrypted apps and direct messaging, while the judicial machinery required to detect, investigate, and remove content operates over days or weeks. Furthermore, foreign threat actors remain largely outside the reach of local prosecution. Ultimately, cybersecurity and election experts argue that laws must be reinforced by a multi-layered strategy that holds social media platforms accountable, implements robust content provenance standards, and promotes widespread voter media literacy to successfully mitigate the disruptive demand side of digital disinformation.

Four cutting-edge tools for spec-driven development

The InfoWorld article by Martin Heller highlights the shift from haphazard "vibe coding" to Spec-Driven Development (SDD), a structured methodology that keeps AI coding agents accurate and managed. While vibe coding might suffice for minor weekend hobby projects, it introduces major technical debt and obscure bugs in enterprise environments. In contrast, SDD acts as a formal contract and reliable source of truth by relying on concise, readable documents. The article details four advanced tools pioneering this approach: AWS's Kiro, Microsoft's Spec Kit, Tessl, and Zenflow. Kiro works as an IDE and CLI tool, generating structured markdown files to outline requirements, architecture, and agent steering. Microsoft's open-source Spec Kit uses special slash commands to manage project principles, requirements, and parallel execution. Tessl maintains agent alignment using a package registry with "tiles" that bundle coding workflows and rules. Finally, Zenflow orchestrates dynamic workflows via multiple autonomous agents, implementing automated test verification and cross-agent code reviews within isolated Git environments. Ultimately, the article concludes that working from specifications is vital for large refactoring efforts and enterprise software engineering, advising developers to evaluate their infrastructure and select the framework that best fits their orchestration, scalability, and workflow criteria.

