'Lemon Duck' Cryptominer Aims for Linux Systems

The malware uses the infected computer to replicate itself in a network and

then uses the contacts from the victim's Microsoft Outlook account to send

additional spam emails to more potential victims, the report notes. "People

are more likely to trust messages from people they know than from random

internet accounts," Rajesh Nataraj, a researcher with Sophos Labs, notes. The

malware contains code that generates email messages with dynamically added

malicious files and subject lines pulled up from its database with phrases

such as: "The Truth of COVID-19," "COVID-19 nCov Special info WHO" or "HEALTH

ADVISORY: CORONA VIRUS," according to the report. Researchers found that Lemon

Duck malware exploits the SMBGhost vulnerability found in versions 1903 and

1909 of the Windows 10 operating system. Exploiting this vulnerability allows

for remote code execution. Microsoft fixed this bug in March, but unpatched

systems remain at risk. The code used in Lemon Duck also leverages the

EternalBlue vulnerability in Windows to help the malware spread laterally

through enterprise networks.

Can AI Reimagine City Configuration and Automate Urban Planning?

While the concept of AI-enabled automated urban planning is appealing, the

researchers quickly encountered three challenges: how to quantify a land-use

configuration plan, how to develop a machine learning framework that can learn

the good and the bad of existing urban communities in terms of land-use

configuration policies, and how to evaluate the quality of the system’s

generated land-use configurations. The researchers began by formulating the

automated urban planning problem as a learning task on the configuration of

land-use given surrounding spatial contexts. They defined land-use

configuration as a longitude-latitude-channel tensor with the goal of

developing a framework that could automatically generate such tensors for

unplanned areas. The team developed an adversarial learning framework called

LUCGAN to generate effective land-use configurations by drawing on urban

geography, human mobility, and socioeconomic data. LUCGAN is designed to first

learn representations of the contexts of a virgin area and then generate an

ideal land-use configuration solution for the area.
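
To make the "longitude-latitude-channel tensor" idea concrete, here is a minimal sketch in plain Python. It is not the LUCGAN encoding: the channel names, the grid size, the sample points, and the choice to store per-cell counts of points of interest are all assumptions made for illustration.

```python
from typing import List, Tuple

# Hypothetical land-use channels; the excerpt does not list the real ones.
CHANNELS = ["residential", "commercial", "green_space"]


def cell_index(value: float, low: float, high: float, cells: int) -> int:
    """Map a coordinate to a grid cell index, clamping the upper edge."""
    frac = (value - low) / (high - low)
    return min(int(frac * cells), cells - 1)


def land_use_tensor(
    pois: List[Tuple[float, float, str]],
    lon_bounds: Tuple[float, float],
    lat_bounds: Tuple[float, float],
    cells: int,
) -> List[List[List[int]]]:
    """Count points of interest per (longitude cell, latitude cell, channel)."""
    tensor = [
        [[0] * len(CHANNELS) for _ in range(cells)] for _ in range(cells)
    ]
    for lon, lat, category in pois:
        i = cell_index(lon, lon_bounds[0], lon_bounds[1], cells)
        j = cell_index(lat, lat_bounds[0], lat_bounds[1], cells)
        tensor[i][j][CHANNELS.index(category)] += 1
    return tensor


# Made-up points of interest in a unit square: (longitude, latitude, category).
pois = [
    (0.1, 0.1, "residential"),
    (0.2, 0.3, "residential"),
    (0.9, 0.1, "commercial"),
    (0.6, 0.8, "green_space"),
]
tensor = land_use_tensor(pois, (0.0, 1.0), (0.0, 1.0), cells=2)
print(tensor[0][0])  # [2, 0, 0]
```

A generative model of the kind described would output a tensor of this shape for an unplanned area instead of counting existing points.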

AT&T Waxes 5G Edge for Enterprise With IBM

As enterprises increasingly shift to a hybrid-cloud model, IBM is working with

AT&T and other operators to allow businesses to deploy applications or

workloads wherever they see fit, Canepa said. “That includes now what we’re

highlighting here, the mobile edge environment that comes with this, the

emerging 5G world.” Because enterprises are no longer restricted to a single

cloud architecture on premises, they’re gaining access to a larger pool of

potential innovation sources, he explained. This extends to mobile network

operators’ infrastructure as well. “Up until this point, the networks inside

the telcos were very kind of structured environments, hardwired, specialized

equipment that was really good at what it did, but did a fairly limited set of

things,” Canepa said. “What we’re evolving to now is truly a hybrid-cloud

environment where that network itself becomes a platform. And then the ability

to extend that platform to the edge creates a whole new opportunity to create

new insights as a service, new applications, and solutions that can be

deployed in that environment.”

Databricks Delta Lake — Database on top of a Data Lake

The most challenging was the lack of database-like transactions in Big Data

frameworks. To cover for this missing functionality we had to develop several

routines that performed the necessary checks and measures. However, the process

was cumbersome, time-consuming and frankly error-prone. Another issue that used

to keep me awake at night was the dreaded Change Data Capture (CDC). Databases

have a convenient way of updating records and showing the latest state of the

record to the user. On the other hand, in Big Data we ingest data and store

them as files. Therefore, the daily delta ingestion may contain a combination

of newly inserted, updated or deleted data. This means we end up storing the

same row multiple times in the Data Lake. ... Developed by Databricks, Delta

Lake brings ACID transaction support for your data lakes for both batch and

streaming operations. Delta Lake is an open-source storage layer for big data

workloads over HDFS, AWS S3, Azure Data Lake Storage or Google Cloud Storage.

Delta Lake packs in a lot of cool features useful for Data Engineers.
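
The Change Data Capture problem described above can be sketched in a few lines of plain Python. This is not Delta Lake's API. It is only an illustration of the reconciliation that otherwise has to be hand-written when daily deltas land as files. The `Change` record, its field names, and the sample rows are hypothetical.

```python
from typing import Dict, Iterable, NamedTuple, Optional


class Change(NamedTuple):
    """One row from a daily delta file (hypothetical layout)."""
    key: int               # primary key of the source record
    op: str                # "insert", "update" or "delete"
    value: Optional[str]   # new value, None for deletes
    seq: int               # ingestion order, higher is newer


def latest_state(changes: Iterable[Change]) -> Dict[int, Optional[str]]:
    """Replay changes in ingestion order and keep only the newest row per key."""
    state: Dict[int, Optional[str]] = {}
    for change in sorted(changes, key=lambda c: c.seq):
        if change.op == "delete":
            state.pop(change.key, None)
        else:
            state[change.key] = change.value
    return state


# The same key appears several times, as it would across ingested files.
daily_deltas = [
    Change(1, "insert", "alice@old.example", 1),
    Change(2, "insert", "bob@example", 2),
    Change(1, "update", "alice@new.example", 3),
    Change(2, "delete", None, 4),
    Change(3, "insert", "carol@example", 5),
]
current = latest_state(daily_deltas)
print(current)  # {1: 'alice@new.example', 3: 'carol@example'}
```

Without transactions, a failure halfway through rewriting the result can leave readers with a partial view, which is the gap ACID support on the data lake is meant to close.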

Developing a scaling strategy for IoT

“One of the most often overlooked or under-budgeted issues of IoT scaling is

not the initial build out of the system which is typically well planned for,

but the long-term maintenance and support of what can quickly become a huge

network of devices that are often deployed in difficult-to-reach locations,”

he said. “That complexity requires a resilient network to ensure that all of

these IoT devices, connected via an aggregation point, can be securely managed

and updated to extend their lifespan. Where edge compute is necessary due to

the density of connected IoT devices, it is also advisable to provide

scalable, secure and highly reliable remote management for all the IoT network

infrastructure that provides a fast and predictable way to recover from

failures. An independent management network should provide a secure alternate

access path, including the ability to quickly re-deploy any software and/or

configs automatically onto connected equipment if they need to be re-built,

ideally without having to send an engineer to site. In general networking

terms, it is very important to ensure that the IoT gateways and edge compute

equipment stack is actively monitored and that it is designed with resiliency

in mind.”

Creating The Vision For Data Governance

The first step in every successful data governance effort is the establishment

of a common vision and mission for data and its governance across the

enterprise. The vision articulates the state the organization wishes to

achieve with data, and how data governance will foster reaching that state.

Through the skills of a specialist in data governance and using the techniques

of facilitation, the senior business team develops the enterprise’s vision for

data and its governance. All of the subsequent activities of any data

governance effort should be formed by this vision. Visioning offers the widest

possible participation for developing a long-range plan, especially in

enterprise-oriented areas such as data governance. It is democratic in its

search for disparate opinions from all stakeholders and directly involves a

cross-section of constituents from the enterprise. Developing a vision helps

avoid piecemeal and reactionary approaches to addressing problems. It accounts

for the relationship between issues, and how one problem’s solution may

generate other problems or have an impact on another area of the enterprise.

Developing a vision at the enterprise level allows the organization to create

a holistic approach to setting goals that will enable it to realize the

vision.

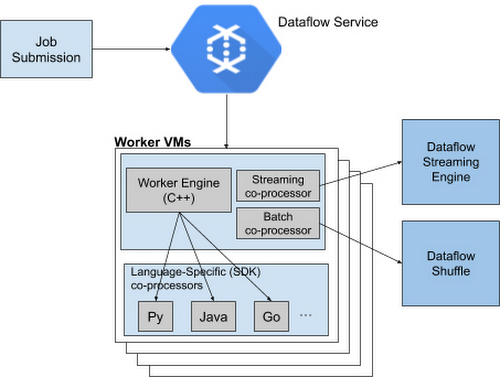

Google Announces a New, More Services-Based Architecture Called Runner V2 to Dataflow

Runner V2 has a more efficient and portable worker architecture rewritten in

C++, which is based on Apache Beam's new portability framework. Moreover,

Google packaged this framework together with Dataflow Shuffle for batch jobs

and Streaming Engine for streaming jobs, allowing them to provide a standard

feature set from now on across all language-specific SDKs, as well as share

bug fixes and performance improvements. The critical component in the

architecture is the worker Virtual Machines (VM), which run the entire

pipeline and have access to the various SDKs.... If features or transforms are

missing for a given language, they must be duplicated across various SDKs to

ensure parity; otherwise, there will be gaps in feature coverage and newer

SDKs like Apache Beam Go SDK will support fewer features and exhibit inferior

performance characteristics for some scenarios. Currently, Dataflow Runner V2

is available with Python streaming pipelines, and Google recommends that developers

test the new runner with current non-production workloads before

enabling it by default on all new pipelines.

DOJ Seeks to Recover Stolen Cryptocurrency

The cryptocurrency stolen from the two exchanges was later traded for other

types of virtual currency, such as bitcoin and tether, to launder the funds

and obscure their transaction path, the Justice Department says. The civil

lawsuit relates to a criminal case that the Justice Department brought against

two Chinese nationals for their alleged role in laundering $100 million in

cryptocurrency stolen from exchanges by North Korean hackers in 2018. The two

suspects, Tian Yinyin and Li Jiadong, are each charged with money laundering

conspiracy and operating an unlicensed money transmitting business. The two

also face sanctions from the U.S. Treasury Department. U.S. law enforcement

officials and intelligence agencies, including the Cybersecurity and

Infrastructure Security Agency, believe these types of crypto heists are

carried out by the Lazarus Group, a hacking collective also known as

Hidden Cobra. Earlier this week, CISA, the FBI and the U.S. Cyber Command

warned of an uptick in bank heists and cryptocurrency thefts since February by

a subgroup of the Lazarus Group called BeagleBoyz.

The increasing importance of data management

The goal of data management is to facilitate a holistic view of data and

enable users to access and derive optimal value from it—both data in motion

and at rest. Along with other data management solutions, DataOps leads to

measurably better business outcomes: boosted customer loyalty, revenue,

profit, and other benefits. The trouble with achieving these goals lies in

part in businesses not understanding how to translate the information they

hold into actionable outcomes. Once a business has toiled through all the information

it holds to unearth valuable insights, it can then enact changes or

implement efficiencies to yield returns. ... Data security is consistently

rated among the highest concerns and priorities of IT management and business

leaders alike. But we can’t say that technology is always the answer in

ensuring that data is securely and safely stored. A key challenge is getting

alignment across organizations on the classification of data by risk and on

how data should be stored and protected. That makes security a human issue;

the tech is often easy. Two-thirds of survey respondents report insufficient

data security, making data security an essential element of any discussion of

efficient data management.

What Companies are Disclosing About Cybersecurity Risk and Oversight

More boards are assigning cybersecurity oversight responsibilities to a

committee. Eighty-seven percent of companies this year have charged at least one

board-level committee with cybersecurity oversight, up from 82% last year and

74% in 2018. Audit committees remain the primary choice for those

responsibilities. This year 67% of boards assigned cybersecurity oversight to

the audit committee, up from 62% in 2019 and 59% in 2018. Last year we observed

a significant increase in boards assigning cybersecurity oversight to non-audit

committees, most often risk or technology committees (28% in 2019, up from 20%

in 2018), but that percentage dropped this year (26% in 2020). A minority of

boards, 7% overall, assigned cyber responsibilities to both the audit and a

non-audit committee. Among the boards assigning cybersecurity oversight

responsibilities to the audit committee, nearly two-thirds (65%) formalize those

responsibilities in the audit committee charter. Among the boards assigning such

responsibilities to non-audit committees, most (85%) include those

responsibilities in the charter.

Identification of director skills and expertise

Quote for the day:

"For true success ask yourself these four questions: Why? Why not? Why not me? Why not now?" -- James Allen
