Quote for the day:

"In my whole life, I have known no wise people who didn't read all the time, none, zero." -- Charlie Munger

How risk culture turns cyber teams predictive

Reactive teams don’t choose chaos. Chaos chooses them, one small compromise at a time. A rushed change goes in late on a Friday. A privileged account sticks around “temporarily” for months. A patch slips because the product has a deadline, and security feels like the polite guest at the table. A supplier gets fast-tracked, and nobody circles back. Each event seems manageable. Together, they create a pattern. The pattern is what burns you. Most teams drown in noise because they treat every alert as equally important and as security’s job alone. You never develop direction. You develop reflexes. ... We’ve seen teams with expensive tooling and miserable outcomes because engineers learned one lesson: “If I raise a risk, I’ll get punished, slowed down or ignored.” So they keep quiet, and you get surprised. We’ve also seen teams with average tooling but strong habits. They didn’t pretend risk was comfortable. They made it speakable. Speakable risk is the start of foresight, and foresight lets a team choose the right action, or the right inaction, to get the best result. ... Top teams collect near misses the way pilots collect flight data. Not for blame. For pattern. A near miss is the attacker who almost got in. The bad change that almost made it into production. The vendor who nearly exposed a secret. The credential that nearly shipped in code. Most organizations throw these away. “No harm done.” Ticket closed. Then harm arrives later, wearing the same outfit.
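
Treating near misses as data rather than closed tickets can be as simple as logging them without a blame field and counting how often each kind recurs. A minimal sketch; the `NearMiss` record, the category labels, and the threshold of three are hypothetical choices for illustration, not a method described in the article.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class NearMiss:
    """One 'no harm done' event. Deliberately has no field for who was at fault."""
    category: str   # hypothetical labels, e.g. "secret-in-code", "risky-change"
    summary: str


def recurring_patterns(log: list[NearMiss], threshold: int = 3) -> dict[str, int]:
    """Return categories seen at least `threshold` times, most frequent first."""
    counts = Counter(event.category for event in log)
    return {category: n for category, n in counts.most_common() if n >= threshold}


if __name__ == "__main__":
    log = [
        NearMiss("secret-in-code", "API key caught in code review"),
        NearMiss("risky-change", "Friday change rolled back before production"),
        NearMiss("secret-in-code", "password found in a config file before merge"),
        NearMiss("secret-in-code", "credential flagged by a pre-commit hook"),
    ]
    print(recurring_patterns(log))  # {'secret-in-code': 3}
```

A category that crosses the threshold is the same outfit showing up again: a prompt to fix the underlying habit or control instead of closing another ticket.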

Why CIOs are turning to digital twins to future-proof the supply chain

Digital twin models differ from traditional models in that they can run what-if scenarios, simulating outcomes from cause-and-effect relationships. Examples include a sudden increase in demand for a product over a short time frame, or a facility shutting down because of severe weather. The model then estimates how the event will affect inventory levels, shipping schedules, delivery dates, and even worker availability. This lets companies move decision-making away from reactive firefighting toward proactive planning. For a CIO, a digital twin model eliminates the historical siloing of supply chain data across the enterprise architecture. ... Although the value of digital twin technology is evident, scaling digital twins remains a significant challenge. Integrating data from multiple sources, including ERP, WMS, IoT, and partner systems, is a primary challenge for everyone. High-fidelity simulation requires substantial computing capacity, which in turn forces trade-offs between realism, performance, and cost. Digital twins also raise governance issues. As models drift, or are modified to reflect changes in the physical systems they represent, and as data streams continuously from cloud and edge environments, potential security vulnerabilities increase.
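
To make the what-if idea concrete, here is a minimal sketch of a cause-and-effect inventory model for a single facility; it is a toy, not a real digital twin platform. Everything in it is a hypothetical assumption for illustration: the `Scenario` and `simulate` names, the fixed daily restock, and the demand and shutdown numbers. A real twin would draw these inputs from live ERP, WMS, and IoT feeds.

```python
from dataclasses import dataclass, field


@dataclass
class Scenario:
    """A hypothetical what-if scenario for one facility over N days."""
    name: str
    daily_demand: list[int]                               # units ordered each day
    shutdown_days: set[int] = field(default_factory=set)  # days with no shipping or restocking


def simulate(scenario: Scenario, start_stock: int, daily_restock: int) -> dict:
    """Run a toy cause-and-effect inventory model, one day at a time.

    Illustrative assumptions: a fixed restock arrives on every operating day,
    nothing moves on shutdown days, and unfilled demand is carried forward as
    backlog that is added to the next operating day's orders.
    """
    stock, backlog = start_stock, 0
    stock_by_day, backlog_by_day = [], []
    for day, demand in enumerate(scenario.daily_demand):
        if day in scenario.shutdown_days:
            backlog += demand                # orders pile up; nothing ships or arrives
        else:
            stock += daily_restock
            owed = backlog + demand          # carried-over backlog plus today's orders
            shipped = min(stock, owed)
            stock -= shipped
            backlog = owed - shipped
        stock_by_day.append(stock)
        backlog_by_day.append(backlog)
    return {
        "scenario": scenario.name,
        "final_stock": stock,
        "final_backlog": backlog,
        "peak_backlog": max(backlog_by_day, default=0),
        "stock_by_day": stock_by_day,
    }


if __name__ == "__main__":
    week = [100] * 7
    scenarios = [
        Scenario("baseline", week),
        Scenario("storm closes facility on days 2-3", week, shutdown_days={2, 3}),
        Scenario("short demand spike", [100, 100, 300, 300, 100, 100, 100]),
    ]
    for s in scenarios:
        r = simulate(s, start_stock=200, daily_restock=100)
        print(r["scenario"], r["final_stock"], r["final_backlog"], r["peak_backlog"])
```

With these made-up numbers, the storm drains the safety stock and creates a backlog that clears once shipping resumes, while the two-day demand spike leaves a backlog that never clears, because restock capacity only matches baseline demand. Comparing runs like these side by side is the shift from firefighting to planning.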

Quantum computing is getting closer, but quantum-proof encryption remains elusive

“Everybody’s well into the belief that we’re within five years of this cryptocalypse,” says Blair Canavan, director of alliances for the PKI and PQC portfolio at Thales, a French multinational company that develops technologies for aerospace, defense, and digital security. “I see it and hear it in almost every circle.” Fortunately, quantum-safe encryption technology already exists. NIST selected its fifth quantum-safe encryption algorithm in early 2025. The recommended strategy is to build encryption systems that make it easy to swap out algorithms as existing ones become obsolete and new ones are invented. There is also regulatory pressure to act. ... CISA is due to release its PQC category list, which will establish PQC standards for data management, networking, and endpoint security. And early this year, the Trump administration is expected to release a six-pillar cybersecurity strategy document that includes post-quantum cryptography. But according to the Post Quantum Cryptography Coalition’s state of quantum migration report, when it comes to public standards, there is only one area with broad adoption of post-quantum encryption: TLS 1.3, and only in hybrid mode, which pairs a classical algorithm with a post-quantum one, rather than post-quantum encryption or signatures on their own. ... The single biggest driver for PQC adoption is contractual agreements with customers and partners, cited by 22% of respondents.
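
The advice to make algorithms easy to swap is usually called crypto-agility. Below is a minimal sketch of the pattern under stated assumptions: callers go through a small registry and a single policy setting rather than hard-coding an algorithm, and every output records which algorithm produced it, so older data stays verifiable after a switch. To keep it runnable with only the standard library, it uses HMAC over SHA-256 and SHA3-256 as stand-ins; these are classical primitives, not post-quantum algorithms, and the `ALGORITHMS`, `POLICY`, `sign`, and `verify` names are hypothetical.

```python
import hashlib
import hmac

# Hypothetical registry: algorithm identifier -> MAC function.
# HMAC-SHA256 and HMAC-SHA3-256 are classical stand-ins so the sketch runs
# with the standard library; they are NOT post-quantum schemes.
ALGORITHMS = {
    "hmac-sha256": lambda key, msg: hmac.new(key, msg, hashlib.sha256).digest(),
    "hmac-sha3-256": lambda key, msg: hmac.new(key, msg, hashlib.sha3_256).digest(),
}

# Hypothetical policy: the single place that names the current default.
POLICY = {"default": "hmac-sha256"}


def sign(key: bytes, msg: bytes) -> tuple[str, bytes]:
    """Tag a message with the policy's current algorithm and record which one was used."""
    alg = POLICY["default"]
    return alg, ALGORITHMS[alg](key, msg)


def verify(key: bytes, msg: bytes, alg: str, tag: bytes) -> bool:
    """Verify with the algorithm recorded alongside the tag, not the current default."""
    if alg not in ALGORITHMS:
        return False                                 # retired or unknown algorithm
    expected = ALGORITHMS[alg](key, msg)
    return hmac.compare_digest(expected, tag)        # constant-time comparison


if __name__ == "__main__":
    key, msg = b"demo-key", b"invoice 42"
    old_alg, old_tag = sign(key, msg)
    POLICY["default"] = "hmac-sha3-256"              # swap via policy; callers unchanged
    new_alg, new_tag = sign(key, msg)
    print(old_alg, verify(key, msg, old_alg, old_tag))  # hmac-sha256 True
    print(new_alg, verify(key, msg, new_alg, new_tag))  # hmac-sha3-256 True
```

In a real migration the registry entries would be a vetted library's implementations of the NIST-selected schemes, or hybrid combinations like those seen in TLS 1.3 deployments, but the design choice is the same: the algorithm is configuration, not code.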

From compliance to competitive edge: How tech leaders can turn data sovereignty into a business advantage

Data sovereignty, the principle that data is subject to the laws and governing structures of the nation in which it is collected, processed, or held, means it is now more important than ever to understand where your organization’s data comes from, and how and where it is stored. Understandably, that effort is often seen through the lens of regulation and penalties. If you don’t comply with GDPR, for example, you risk fines, reputational damage, and operational disruption. But the real conversation should be about the opportunities data sovereignty could bring, and that means looking beyond ticking boxes toward infrastructure and strategy. ... Complementing the hybrid hub-and-spoke model, distributed file systems synchronize data across multiple locations, either globally or only within the boundaries of specific jurisdictions. Instead of maintaining separate, siloed copies, these systems provide a consistent view of data wherever it is needed and help teams collaborate while keeping sensitive information within compliant zones. This reduces delays and duplication, so organizations can meet data sovereignty obligations without sacrificing agility or teamwork. Architecture and technology built for agility and collaboration are well placed to turn data sovereignty from a barrier into a strategic enabler, helping organizations stay compliant while preserving the speed and flexibility they need to adapt, compete, and grow.

Why digital transformation fails without an upskilled workforce

“Capability” isn’t simply knowing which buttons to click. It’s being able to troubleshoot when data doesn’t reconcile. It’s understanding how actions in the system cascade through downstream processes. It’s recognizing when something that’s technically possible in the system violates a business control. It’s making judgment calls when the system presents options that the training scenarios never covered. These capabilities can’t be developed in a three-day training session two weeks before go-live. They’re built through repeated practice, pattern recognition, feedback loops and reinforcement over time. ... When upskilling is delayed or treated superficially, specific operational risks emerge quickly. In the implementations I’ve supported, organizations routinely experience productivity declines of as much as 30% to 40% within the first 90 days after go-live if workforce capability hasn’t been adequately addressed. ... Start by asking your transformation team this question: “Show me the behavioral performance standards that define readiness for the roles, and show me the evidence that we’re meeting them.” If the answer is training completion dashboards, course evaluation scores or “we have a really good training vendor,” you have a problem. Next, spend time with actual end users: not power users, not super users, but the people who will do this work day in and day out.

How Infrastructure Is Reshaping the U.S.–China AI Race

Most of the early chapters of the global AI race were written in model releases. As LLMs became more widely adopted, labs in the U.S. moved fast. They had support from big cloud companies and investors. They trained larger models and chased better results. For a while, progress meant one thing: build bigger models, and get stronger output. That approach helped the U.S. move ahead at the frontier. However, China had other plans. Its progress may not have been as visible or flashy, but it quietly expanded AI research across universities and domestic companies and steadily introduced machine learning into various industries and public sector systems. ... At the same time, something happened in China that sent shockwaves around the world, including through tech companies in the West. DeepSeek burst out of nowhere and showed that AI model performance may not be as constrained by hardware as many of us thought. This completely reshaped assumptions about what it takes to compete in the AI race. Instead of depending on scale, Chinese teams increasingly focused on efficiency and practical deployment. Did powerful AI really need powerful hardware? Some experts suspected that DeepSeek’s developers were not being completely transparent about the methods used to build it. Still, there is no doubt that the emergence of DeepSeek created immense hype. ... There was no single turning point for the emergence of the infrastructure problem. Many things happened over time.

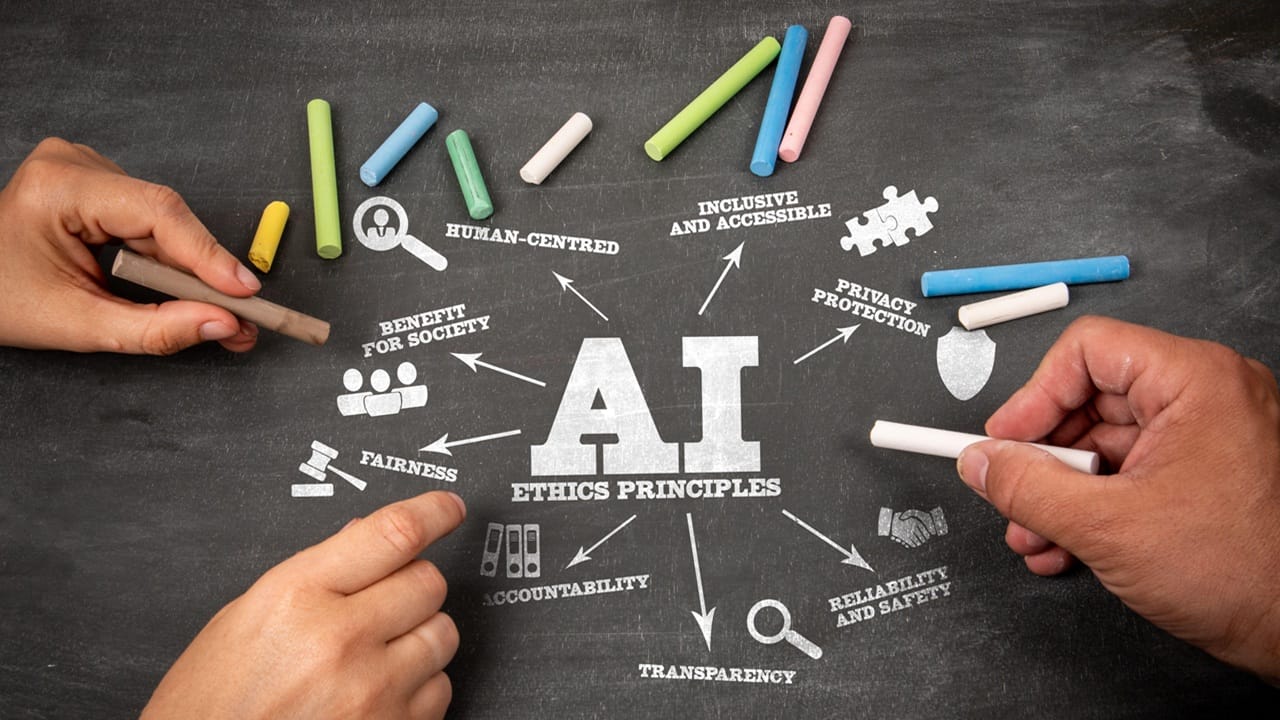

Why AI adoption keeps outrunning governance — and what to do about it

The first problem is structural. Governance was designed for centralized, slow-moving decisions. AI adoption is neither. Ericka Watson, CEO of consultancy Data Strategy Advisors and former chief privacy officer at Regeneron Pharmaceuticals, sees the same pattern across industries. “Companies still design governance as if decisions moved slowly and centrally,” she said. “But that’s not how AI is being adopted. Businesses are making decisions daily — using vendors, copilots, embedded AI features — while governance assumes someone will stop, fill out a form, and wait for approval.” That mismatch guarantees bypass. Even teams with good intentions route around governance because it doesn’t appear where work actually happens. ... “Classic governance was built for systems of record and known analytics pipelines,” he said. “That world is gone. Now you have systems creating systems — new data, new outputs, and much is done on the fly.” In that environment, point-in-time audits create false confidence. Output-focused controls miss where the real risk lives. ... Technology controls alone do not close the responsible-AI gap. Behavior matters more. Asha Palmer, SVP of Compliance Solutions at Skillsoft and a former US federal prosecutor, is often called in after AI incidents. She says the first uncomfortable truth leaders confront is that the outcome was predictable. “We knew this could happen,” she said. “The real question is: why didn’t we equip people to deal with it before it did?”

How AI Will ‘Surpass The Boldest Expectations’ Over The Next Decade And Why Partners Need To ‘Start Early’

The key to success in the AI era is delivering fast ROI and measurable productivity gains for clients. But integrating AI into enterprise workflows isn’t simple; it requires a deep understanding of how work gets done and seamless connection to existing systems of record. That’s where IBM and our partners excel: embedding intelligence into processes like procurement, HR, and operations, with the right guardrails for trust and compliance. We’re already seeing signs of progress. A telecom client using AI in customer service achieved a 25-point increase in its Net Promoter Score (NPS). In software development, AI tools are boosting developer productivity by 45 percent. And across finance and HR, AI is making processes more efficient, error-free, and fraud-resistant. ... Patience is key. We’re still in the early innings of enterprise AI adoption: the players are on the field, but the game is just beginning. If you’re not playing now, you’ll miss it entirely. The real risk isn’t underestimating AI; it’s failing to deploy it effectively. That means starting with low-risk, scalable use cases that deliver measurable results. We’re already seeing AI investments translate into real enterprise value, and that will accelerate in 2026. Over the next decade, AI will surpass today’s boldest expectations, driving a tenfold productivity revolution and long-term transformation. But the advantage will go to those who start early.

Five AI agent predictions for 2026: The year enterprises stop waiting and start winning

By mid-2026, the question won't be whether enterprises should embed AI agents in business processes; it will be what they're waiting for if they haven't already. DIY pilot projects will increasingly be viewed as a riskier alternative to embedded, pre-built capabilities that support day-to-day work. We're seeing the first wave of natively embedded agents in leading business applications across finance, HR, supply chain, and customer experience functions. ... Today's enterprise AI landscape is dominated by horizontal AI approaches: broad use cases that can be applied to common business processes and best practices. The next layer of intelligence, vertical AI, will help solve complex industry-specific problems, delivering additional P&L impact. This shift fundamentally changes how enterprises deploy AI. Vertical AI requires deep integration with workflows, business data, and domain knowledge, but its transformative power is undeniable. ... Advanced enterprises in 2026 will orchestrate agent teams that automatically apply business rules, maintain tight control over compliance, integrate seamlessly across their technology stack, and scale human expertise rather than replace it. This orchestration preserves institutional knowledge while dramatically multiplying its impact. Organizations that master multi-agent workflows will operate with fundamentally different economics than those managing point automation solutions.

How should AI agents consume external data?

Agents benefit from real-time information ranging from publicly accessible web data to integrated partner data. Useful external data might include product and inventory data, shipping status, customer behavior and history, job postings, scientific publications, news and opinions, competitive analysis, industry signals, or compliance updates, say the experts. With high-quality external data in hand, agents can act more effectively, handle more complex decision-making, and take part in complex, multi-party flows. ... According to Lenchner, the advantages of scraping are breadth, freshness, and independence. “You can reach the long tail of the public web, update continuously, and avoid single-vendor dependencies,” he says. Today’s scraping tools grant agents impressive control, too. “Agents connected to the live web can navigate dynamic sites, render JavaScript, scroll, click, paginate, and complete multi-step tasks with human-like behavior,” adds Lenchner. Scraping enables fast access to public data without negotiating partnership agreements or waiting for API approvals. It avoids the high per-call pricing models that often come with API integration, and sometimes it’s the only option, when formal integration points don’t exist. ... “Relying on official integrations can be positive because it offers high-quality, reliable data that is clean, structured, and predictable through a stable API contract,” says Informatica’s Pathak. “There is also legal protection, as they operate under clear terms of service, providing legal clarity and mitigating risk.”
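
The trade-off described here (breadth and freshness from scraping versus structure, reliability, and clearer terms from official integrations) can be expressed as a source-selection policy. A minimal sketch, assuming a hypothetical `DataSource` wrapper: the agent tries sources in priority order, official integrations first and scrapers as the fallback where no integration exists or it fails, and labels each result with its provenance so later steps can weigh it. The ordering policy, the names, and the placeholder fetch functions are illustrative assumptions, not real services; a production scraper would also have to respect site terms and robots.txt.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Result:
    """Data returned to the agent, tagged with where it came from."""
    value: dict
    provenance: str                           # e.g. "official_api" or "scraped"


@dataclass
class DataSource:
    """Hypothetical wrapper around one way of fetching external data."""
    kind: str                                 # e.g. "official_api" or "scraped"
    fetch: Callable[[str], Optional[dict]]    # returns None when it has no answer


def fetch_for_agent(query: str, sources: list[DataSource]) -> Optional[Result]:
    """Try sources in the given priority order and return the first usable answer.

    Assumed policy for this sketch: callers list official integrations first
    (structure, stable contracts) and scrapers after them (breadth, freshness).
    """
    for source in sources:
        try:
            value = source.fetch(query)
        except Exception:                     # treat a failing source like a missing one
            continue
        if value is not None:
            return Result(value=value, provenance=source.kind)
    return None


if __name__ == "__main__":
    def partner_api(query: str) -> Optional[dict]:
        # Placeholder: pretend the official partner API only covers shipment lookups.
        return {"status": "in transit"} if query.startswith("shipment:") else None

    def web_scraper(query: str) -> Optional[dict]:
        # Placeholder for a scraper that reaches the long tail of public pages.
        return {"snippet": f"public page mentioning {query!r}"}

    sources = [DataSource("official_api", partner_api), DataSource("scraped", web_scraper)]
    print(fetch_for_agent("shipment:123", sources))
    print(fetch_for_agent("competitor pricing", sources))
```

Keeping provenance on every result lets downstream steps, or a governance check, treat scraped data more cautiously than data that arrived through a contract.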
