Quote for the day:

“Wisdom equals knowledge plus courage. You have to not only know what to do and when to do it, but you have to also be brave enough to follow through.” -- Jarod Kintz

What happens when AI becomes the customer?

If the first point of contact is no longer a person but an AI agent, then

traditional tactics like branding, visual merchandising or website design will

have reduced impact. Instead, the focus will move to how easily machines can

find and understand product information. Retailers will need to ensure that

data, from specifications and availability to pricing and reviews, is accurate,

structured and optimised for AI discovery. Products will no longer be browsed by

humans but scanned and filtered by autonomous systems making selections on

someone else’s behalf. ... This trend is particularly strong among younger and

higher-income consumers. People under 35 are far more likely to use AI

throughout the buying process, particularly for everyday items like groceries,

toiletries and clothes. For this group, convenience matters. Many are

comfortable letting technology take over simple tasks, and when it comes to low

cost, low risk products, they’re happy for AI to handle the entire purchase. ...

These developments point to the rise of the agentic internet – a world in which

AI agents become the main way consumers interact with brands. As these tools

search, compare, buy and manage products on users’ behalf, they will reshape how

visibility, loyalty and influence work. Retailers have less than five years to

respond. That means investing in clean, structured product data, adapting

automation where it’s welcomed, and keeping the human touch where trust

matters.

The overlooked cyber risk in data centre cooling systems

Data centre operations are critically dependent on a complex ecosystem of OT

equipment, including HVAC and building management systems. As operators adopt

closed-loop and waterless cooling to improve efficiency, these systems are

increasingly tied into BMS and DCIM platforms. This expands the attack surface

of networks that were once more segmented. A compromise of these systems could

directly affect temperature, humidity or airflow, with clear implications for

the availability of services that critical infrastructure asset owners rely on.

... Resilience also depends on secure remote access, including multi-factor

authentication and controlled jump-host environments for vendors and third

parties. Finally, risk-based vulnerability management ensures that critical

assets are either patched, mitigated, or closely monitored for exploitation,

even where systems cannot easily be taken offline. Taken together, these

controls provide a framework for protecting data centre cooling and building

systems without slowing the drive for efficiency and innovation. ... As the UK

expands its data centre capacity to fuel AI ambitions and digital

transformation, cybersecurity must be designed into the physical systems that

keep those facilities stable. Cooling is not just an operational detail. It is a

potential target — and protecting it is essential to ensuring the sector’s

growth is sustainable, resilient, and secure.

Rethinking Regression Testing with Change-to-Test Mapping

Regression testing is essential to software quality, but in enterprise projects

it often becomes a bottleneck. Full regression suites may run for hours,

delaying feedback and slowing delivery. The problem is sharper in agile and

DevOps, where teams must release updates daily. ... The need for smarter

regression strategies is more urgent than ever. Modern software systems are no

longer monoliths; they are built from microservices, APIs, and distributed

components, each evolving quickly. Every code change can ripple across modules,

making full regressions increasingly impractical. At the same time, CI/CD costs

are rising sharply. Cloud pipelines scale easily but generate massive bills when

regression packs run repeatedly. ... The core idea is simple: “If only part of

the code changes, why not run only the tests covering that part?” Change-to-test

mapping links modified code to the relevant tests. Instead of running the entire

suite on every commit, the approach executes a targeted subset – while retaining

safeguards such as safety tests and fallback runs. What makes this approach

pragmatic is that it does not rely on building a “perfect” model of the system.

Instead, it uses lightweight signals – such as file changes, annotations, or

coverage data – to approximate the most relevant set of tests. Combined with

guardrails, this creates a balance: fast enough to keep up with modern delivery,

yet safe enough to trust in production-grade environments.
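
As a rough illustration of the idea described above, the sketch below selects tests from a list of changed files. The path patterns, test file names, and the `select_tests` helper are all hypothetical and not taken from the article; the mapping is hand-written here, whereas a real setup might derive it from annotations or coverage data.

```python
from fnmatch import fnmatchcase

# Hypothetical mapping from source-path patterns to the tests that cover them.
CHANGE_TO_TESTS = {
    "billing/*.py": {"tests/test_billing.py"},
    "orders/*.py": {"tests/test_orders.py", "tests/test_billing.py"},
}

# Guardrail 1: safety tests that run on every commit, whatever changed.
SAFETY_TESTS = {"tests/test_smoke.py"}

# Guardrail 2: the full suite, used as a fallback for unmapped changes.
FULL_SUITE = {
    "tests/test_billing.py",
    "tests/test_orders.py",
    "tests/test_search.py",
    "tests/test_smoke.py",
}


def select_tests(changed_files):
    """Return the set of test files to run for the given changed files."""
    selected = set(SAFETY_TESTS)
    for path in changed_files:
        matched = [
            tests
            for pattern, tests in CHANGE_TO_TESTS.items()
            if fnmatchcase(path, pattern)
        ]
        if not matched:
            # A change we cannot map is treated as risky: run everything.
            return set(FULL_SUITE)
        for tests in matched:
            selected |= tests
    return selected
```

Calling `select_tests(["billing/invoice.py"])` would pick the billing test plus the smoke test, while a change to an unmapped file such as `README.md` falls back to the full suite. Whether unmapped files should trigger a full run is a policy choice; this sketch simply takes the conservative option.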

Is A Human Touch Needed When Compliance Has Automation?

Even with technical issues, automation may highlight missing patches, but humans

are the ones who must prioritize fixes, coordinate remediation, and validate

that vulnerabilities are closed. Audits highlight this divide even more clearly.

Regulators rarely accept a data dump without explanation. Compliance officers

must be able to explain how controls work, why exceptions exist, and what is

being done to address them. Without human review, automated alerts risk creating

false positives, blind spots, or alert fatigue. Perhaps most critically,

over-dependence on automation can erode institutional knowledge, leaving teams

unprepared to interpret risk independently. ... By eliminating repetitive

evidence collection, teams gain the capacity to analyze training effectiveness,

scenario-plan future threats, and interpret regulatory changes. Automation

becomes not a replacement for people, but a multiplier of their impact. ...

Over-reliance on automation carries its own risks. A clean dashboard may mask

legacy systems still in production or system blind spots if a monitoring tool

goes down. Without active oversight, teams may not discover gaps until the next

audit. There’s also the danger of compliance becoming a “black box,” where staff

interact with dashboards but never learn how to evaluate risk themselves. CIOs

need to actively design against these vulnerabilities.

14 Challenges (And Solutions) Of Filling Fractional Leadership Roles

Filling a fractional leadership role is tough when companies underestimate the

expertise required to thrive in such a role. Fractional leaders need both

autonomy and seamless integration with key stakeholders. ... One challenge of

fractional leadership is grasping the company culture and processes with limited

time on site. Without that context, even the most skilled leader can struggle to

drive meaningful change or build credibility. ... Finding the right culture fit

for a fractional leadership role can be challenging. High-performing leadership

teams are tight-knit ecosystems, and breaking into them and fitting into their

culture can be daunting for a fractional leader. ... One

challenge is unrealistic expectations—wanting full-time availability at

part-time cost. The key is to define scope, decision rights and deliverables

upfront. Treat fractional leaders as strategic partners, not stopgaps. Clear

onboarding and aligned incentives are essential to driving value and trust. ...

A common hurdle with fractional roles is misaligned expectations—impact is

needed fast, but boundaries and authority aren’t always defined. The fix? Be

upfront: outline goals, decision-making limits and integration plans early so

leaders can add value quickly without friction.

Will the EU Designate AI Under the Digital Markets Act?

There are two main ways in which the DMA will be relevant for generative AI

services. First, a generative AI player may offer a core platform service and

meet the gatekeeper requirements of the DMA. Second, generative AI-powered

functionalities may be integrated or embedded in existing designated core

platform services and therefore be covered by the DMA obligations. Those

obligations apply in principle to the entire core platform service as

designated, including features that rely on generative AI. ... Cloud computing

is already listed as a core platform service under the DMA, and thus,

designating cloud services would be a much faster process than creating a new

core platform service category. Michelle Nie, a tech policy researcher formerly

with the Open Markets Institute, says the EU should designate cloud providers to

tackle the infrastructural advantages held by gatekeepers. Indeed, she has

previously written for Tech Policy Press that doing so “would help address

several competitive concerns like self-preferencing, using data from businesses

that rely on the cloud to compete against them, or disproportionate conditions

for termination of services.” ... Introducing contestability and fairness, the

stated goals of the DMA, into digital ecosystems increasingly relied on by

private and public institutions could not be more critical.

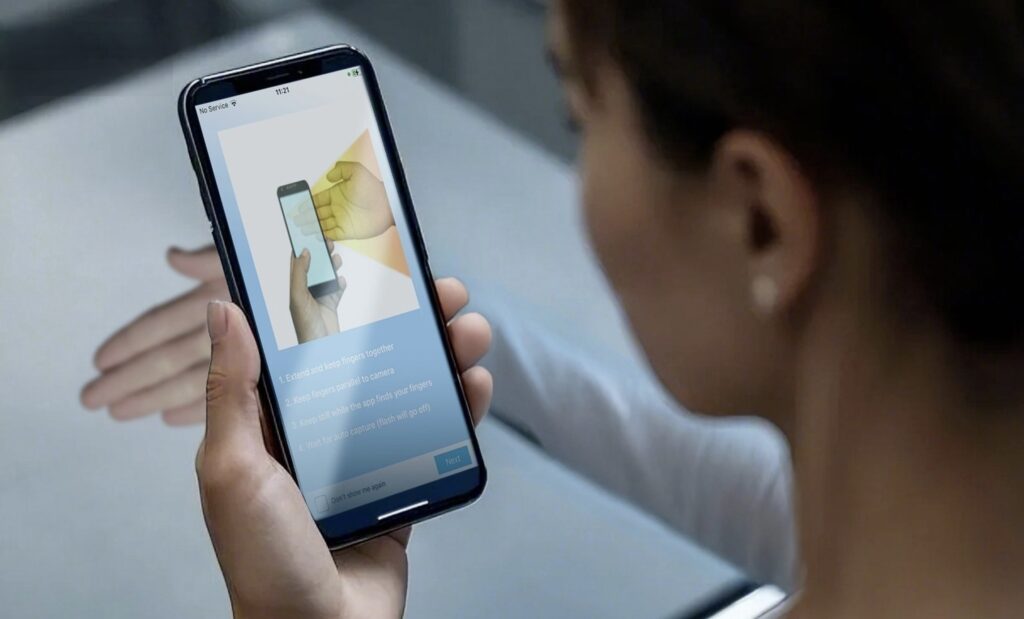

The Looming Authorization Crisis: Why Traditional IAM Fails Agentic AI

From copilots booking travel to intelligent agents updating systems and

coordinating with other bots, we’re stepping into a world where software can

reason, plan, and operate with increasing autonomy. This shift brings immense

promise and significant risk. The identity and access management (IAM)

infrastructures that we rely upon today were built for people and fixed service

accounts. They weren’t designed to manage self-directing, dynamic digital

agents. And yet that’s what Agentic AI demands. ... The road to a comprehensive

and internationally accessible Agentic AI IAM framework is a daunting task. The

rapid pace of AI development demands accelerated IAM security guidance,

especially for heavily regulated sectors. Continued research, continued

development of standards, and rigorous interoperability are required to prevent

fragmentation into incompatible identity silos. We must also address the ethical

issues, such as bias detection and mitigation in credentials, and offer

transparency and explainability of IAM decisions. ... The stakes are high.

Without a comprehensive plan for managing these agents—one that tracks who they

are, what they can perceive, and when their permissions expire—we risk disaster

by way of complexity and compromise. Identity remains the foundation of

enterprise security, and its scope must expand rapidly to shield the autonomous

revolution.
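
To make the three things the excerpt says must be tracked more concrete (who an agent is, what it can access, and when its permissions expire), here is a minimal sketch of a short-lived, scoped grant. The `AgentGrant` type, the scope strings, and the helper names are hypothetical illustrations, not part of any IAM product or standard.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class AgentGrant:
    """Hypothetical record of one agent's delegated permissions."""
    agent_id: str          # who the agent is
    scopes: frozenset      # what it is allowed to touch
    expires_at: datetime   # when the permissions lapse


def issue_grant(agent_id, scopes, ttl_minutes, now):
    """Create a grant that expires ttl_minutes after `now`."""
    return AgentGrant(
        agent_id=agent_id,
        scopes=frozenset(scopes),
        expires_at=now + timedelta(minutes=ttl_minutes),
    )


def is_allowed(grant, scope, now):
    """Allow an action only if the grant is unexpired and covers the scope."""
    return now < grant.expires_at and scope in grant.scopes


# Example: a travel-booking agent with a 15-minute, read-only calendar grant.
issued = datetime(2025, 1, 1, 12, 0, tzinfo=timezone.utc)
grant = issue_grant("travel-bot", {"calendar:read"}, ttl_minutes=15, now=issued)
```

Passing `now` explicitly keeps the check deterministic and easy to test. A production system would also need revocation, audit logging, and cryptographic binding of the grant to the agent, none of which this sketch attempts.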

How immutability tamed the Wild West

One of the first lessons that a new programmer should learn is that global

variables are a crime against all that is good and just. If a variable is passed

around like a football, and its state can change anywhere along the way, then

its state will change along the way. Naturally, this leads to hair pulling and

frustration. Global variables create coupling, and deep and broad coupling is

the true crime against the profession. At first, immutability seems kind of

crazy—why eliminate variables? Of course things need to change! How the heck am

I going to keep track of the number of items sold or the running total of an

order if I can’t change anything? ... The key to immutability is understanding

the notion of a pure function. A pure function is one that always returns the

same output for a given input. Pure functions are said to be deterministic, in

that the output is 100% predictable based on the input. In simpler terms, a pure

function is a function with no side effects. It will never change something

behind your back. ... Immutability doesn’t mean nothing changes; it means values

never change once created. You still “change” by rebinding a name to a new

value. The notion of a “before” and “after” state is critical if you want

features like undo, audit tracing, and other things that require a complete

history of state. Back in the day, GOSUB was a mind-expanding concept. It seems

so quaint today.
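
The running-total question raised above can be answered with a small sketch. The `Order` type and `add_item` function are hypothetical names chosen for illustration: a pure function returns a new value instead of mutating the old one, and keeping each value gives the "before" and "after" history for free.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Order:
    """Immutable snapshot of an order; fields cannot be reassigned."""
    items_sold: int = 0
    total_cents: int = 0


def add_item(order, price_cents):
    """Pure function: same inputs always give the same new Order."""
    return replace(
        order,
        items_sold=order.items_sold + 1,
        total_cents=order.total_cents + price_cents,
    )


# "Change" happens by building new values; old ones remain as history.
history = [Order()]
for price in (250, 1000):
    history.append(add_item(history[-1], price))

current = history[-1]    # Order(items_sold=2, total_cents=1250)
previous = history[-2]   # undo is just a step back in the list
```

Because `Order` is frozen, an attempt such as `current.total_cents = 0` raises `dataclasses.FrozenInstanceError`, so nothing can change the value behind your back.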

What Lessons Can We Learn from the Internet for AI/ML Evolution?

One of the defining principles of the Internet was to keep the core simple and

push the intelligence to the edge. The network and its host computers

simply delivered packets reliably without dictating or controlling applications.

That principle enabled the explosion of the Web, streaming, and countless other

services. In AI, similar principles should be considered. Instead of

centralizing everything in “one foundational model”, we should empower

distributed agents and edge intelligence. Core infrastructure should stay simple

and robust, enabling diverse use cases on top. ... One of the most important

lessons of all from the Internet is that there is no TCP/IP stack owned or

controlled by a single company or government. It was neutral governance that

created global trust and adoption. Institutions such as ICANN and the regional

Internet registries (RIRs) played a key role by managing domain names and IP

address assignments in an open and transparent way, ensuring that resources were

allocated fairly across geographies. This kind of neutral stewardship allowed

the Internet to remain interoperable and borderless. On the other hand, today’s

AI landscape is controlled by a handful of big-tech companies. To scale AI

responsibly, we will need similar global governance structures—an “IETF for AI,”

complemented by neutral registries that can manage shared resources such as

model identifiers, agent IDs, and coordination protocols.
