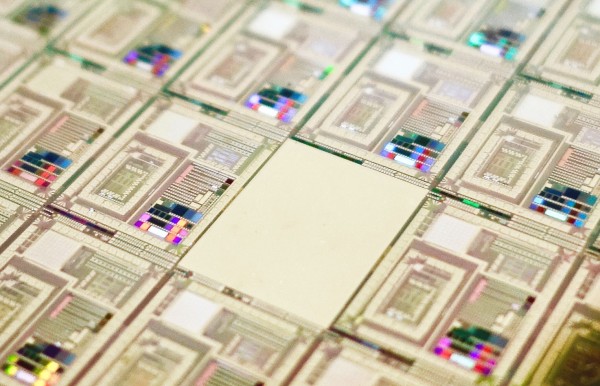

How a quantum computer could break 2048-bit RSA encryption in 8 hours

In 1994, Peter Shor showed that a sufficiently powerful quantum computer could factor large numbers with ease. Because RSA's security rests on the difficulty of factoring, the result sent shock waves through the security industry. Since then, quantum computers have been increasing in power. In 2012, physicists used a four-qubit quantum computer to factor 143. Then in 2014 they used a similar device to factor 56,153. It’s easy to imagine that at this rate of progress, quantum computers should soon be able to outperform the best classical ones. Not so. Quantum factoring turns out to be much harder in practice than might be expected, because noise becomes a significant problem for large quantum computers. The best current way to tackle noise is to use error-correcting codes, which themselves require a significant number of extra qubits. Taking this into account dramatically increases the resources required to factor 2048-bit numbers. In 2015, researchers estimated that a quantum computer would need a billion qubits to do the job reliably. That’s far more than the 70 qubits in today’s state-of-the-art quantum computers.
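Shor's algorithm splits factoring into one hard step, finding the period (order) r of a^x mod N, and cheap classical post-processing with greatest common divisors. The toy sketch below assumes small N and finds the order by brute force, which is exactly the step a quantum computer would speed up, so it offers no advantage over ordinary classical factoring. The function names are illustrative, not from any library.

```python
from math import gcd


def find_order(a: int, n: int) -> int:
    """Smallest r > 0 with a**r % n == 1, found by brute force.

    This is the step Shor's algorithm hands to the quantum computer;
    done classically it takes time exponential in the bit length of n.
    Assumes gcd(a, n) == 1, so such an r exists.
    """
    r, value = 1, a % n
    while value != 1:
        value = (value * a) % n
        r += 1
    return r


def shor_factor(n: int) -> tuple[int, int]:
    """Split an odd composite n using Shor's classical post-processing."""
    for a in range(2, n):
        g = gcd(a, n)
        if g > 1:
            # Lucky guess: a already shares a factor with n.
            return g, n // g
        r = find_order(a, n)
        if r % 2 == 1:
            continue  # odd order: try another base
        y = pow(a, r // 2, n)
        if y == n - 1:
            continue  # a^(r/2) = -1 (mod n) yields only trivial factors
        # y != 1 because r is minimal, so y - 1 shares a proper factor with n.
        p = gcd(y - 1, n)
        return p, n // p
    raise ValueError(f"no factor found for {n}")


print(shor_factor(143))    # (11, 13): the number from the 2012 experiment
print(shor_factor(56153))  # the number from the 2014 experiment
```

For 143 with base a = 2, the order is 60, and gcd(2^30 - 1, 143) = 11 recovers the factor 11.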

How Urbanhire is disrupting HR in Indonesia

Specifically, the hiring platform allows companies to post jobs across more than 50 portals, including Google, LinkedIn and Line, a freeware app which became Japan’s largest social network in 2013. Tapping into a pool of more than one million active jobseekers, the software-as-a-service (SaaS) platform follows a “data-driven hiring strategy”, aligning businesses to a four-step digital strategy of “source, assess, recruit and on-board”. Three years after launching, its key customers include global brands such as AIA, Zurich and The Body Shop, in addition to Indonesian organisations like Danamon, Pertamina and Djarum. “Indonesia is a fantastic opportunity given where it is at from a growth perspective,” Kamstra added. “As a tech entrepreneur, I love the fact that we can use business models that have been successful in more developed countries without a lot of the baggage that comes with historical tech implementations that are no longer sufficient. I love to use the telecom industry as an example. Indonesia was able to go from little infrastructure to a very modern one by not having gone through all the investment steps that countries like the US were forced to do as pioneers.”

Don't Miss These 10 Cybersecurity Blind Spots

When an employee is terminated, it’s important to shut down their access to all work-related accounts immediately. Ideally, automate as much of the account-termination process as possible, and make sure the process covers all accounts for all employees. This can be easier said than done, but it’s important to get a process or automated solution nailed down before a former employee’s access causes an unwanted breach. ... Any application that uses third-party software components, including open-source components, takes on the risk of potential vulnerabilities in those dependencies. These vulnerabilities should be identified, tracked and accounted for in the same way as those in every other software component. ... Service accounts are used by machines, and user accounts are used by humans. The trouble with service accounts is that they sometimes have access to many different systems, and their passwords aren’t always managed well. Poorly managed passwords make for easy compromise by attackers.
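One way to check that offboarding really covers every account is to reconcile each system's account list against the current HR roster. The sketch below assumes a hypothetical data layout (a set of active employee IDs and, per system, a mapping of usernames to owner IDs); in practice these would come from your directory and SaaS admin exports. Service accounts are given a named human owner here, so an orphaned service account surfaces the same way.

```python
def find_orphaned_accounts(
    active_employees: set[str],
    system_accounts: dict[str, dict[str, str]],
) -> dict[str, list[str]]:
    """Return, per system, usernames whose owner is no longer an active employee.

    system_accounts maps a system name to {username: owner_employee_id}.
    This layout is a hypothetical example, not any product's export format.
    """
    orphans: dict[str, list[str]] = {}
    for system, accounts in system_accounts.items():
        stale = sorted(
            user for user, owner in accounts.items() if owner not in active_employees
        )
        if stale:
            orphans[system] = stale
    return orphans


roster = {"e100", "e101"}  # e102 has left the company
accounts = {
    "email": {"alice": "e100", "bob": "e101", "carol": "e102"},
    "vpn": {"alice": "e100", "carol": "e102"},
    "crm": {"svc-crm-sync": "e101"},  # service account with a named human owner
}
print(find_orphaned_accounts(roster, accounts))
# {'email': ['carol'], 'vpn': ['carol']}
```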

Business needs to see infosec pros as trusted advisers

The first issue clouding communication between security professionals and the board or senior business leaders is the misconception that IT risk is separate from business risk. Nothing could be further from the truth, especially since in most organisations today the line between IT and business is hard to draw, because technology is the backbone of everything the business does. The second issue relates to how the message is packaged. Is the language full of technical jargon, or is it simple to understand and does it get the message across in business terms? Does it highlight the loss to the business in terms the board and senior business leaders understand? Take the example of business downtime that is required to apply a patch. Instead of talking about the technical threats and the outcomes of poor patching, security professionals would be more effective explaining it in terms of loss to the business, such as lost opportunities or losses from an attack that could occur because the system remains unpatched.
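A common way to express that trade-off in business terms is annualized loss expectancy (ALE), the expected yearly loss from a threat: ALE = SLE × ARO, where SLE is the single loss expectancy (the cost of one incident) and ARO is the annualized rate of occurrence. The sketch below compares ALE with the cost of patch downtime; every dollar amount and rate in it is a made-up placeholder, not a benchmark.

```python
def annualized_loss_expectancy(single_loss: float, annual_rate: float) -> float:
    """ALE = SLE x ARO: expected loss per year from one threat."""
    return single_loss * annual_rate


def downtime_cost(hours: float, loss_per_hour: float) -> float:
    """Business cost of taking a system offline, e.g. to apply a patch."""
    return hours * loss_per_hour


# Hypothetical figures, for illustration only.
patch_cost = downtime_cost(hours=4, loss_per_hour=10_000)
risk_if_unpatched = annualized_loss_expectancy(single_loss=500_000, annual_rate=0.25)

print(f"Patch downtime: ${patch_cost:,.0f}")
print(f"Expected yearly loss if unpatched: ${risk_if_unpatched:,.0f}")
if risk_if_unpatched > patch_cost:
    print("Patching costs the business less than leaving the system exposed.")
```

Framed this way, the board sees a comparison of two business losses rather than a list of technical threats.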

MongoDB CEO explains where the company has an edge over database giant Oracle

Cramer noted that Oracle, which has a market cap of nearly $195 billion, has recently bought back billions of dollars in stock and has a big war chest. Despite that, MongoDB's architecture sets the younger company apart, CEO Dev Ittycheria said. The firm's database is built for the modern world, he added. "[Oracle] built an architecture designed in the late '70s for the world then, and they just tried to make it better over time," he said. "We built an architecture designed for today's high-performance mobile cloud computing world." Ittycheria explained how MongoDB helped Cisco address an issue with an order management application through which it receives tens of billions of orders a year from different sales channels. The platform serves more than 14,000 customers, including some of the most "sophisticated, demanding customers in the world." The list ranges from big media to telecom to gaming media to financial services, he said. Start-ups are also building their businesses on MongoDB, Ittycheria said.

Fix your cloud security

Enterprises are either unwilling to use the right technology, or they don’t understand that the technology exists. The problem isn’t that the database lacks encryption; it’s that nobody has any idea how to turn on encryption in flight or at rest. Also at fault are the “it was not on-premises” folks out there. They cling to the argument that since some security feature was not part of the original on-premises system, it shouldn’t be needed in the cloud. The time to deal with security issues is when you move from on-premises to the public cloud. You need to spend at least a couple of weeks looking at identity and access management, encryption, auditing, proactive security, and more, and then evaluating their viability for your enterprise. Otherwise, you could miss the cloud security boat as you make the migration. In my opinion, this is the single most important step in migration. It allows you to reflect on what your security needs really are and how to meet them using cloud computing technology, which these days is better than anything you can find on-premises.
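A first pass at that encryption review can be as simple as listing every resource that lacks encryption at rest or in flight. This sketch assumes a hypothetical inventory format; a real inventory would be built from your cloud provider's configuration APIs or asset-management tooling, which are not shown here.

```python
from dataclasses import dataclass


@dataclass
class Resource:
    """One entry in a hypothetical cloud asset inventory."""
    name: str
    kind: str                # e.g. "database", "object-store"
    encrypted_at_rest: bool
    tls_in_transit: bool     # encryption "in flight"


def encryption_gaps(resources: list[Resource]) -> dict[str, list[str]]:
    """Map each resource name to the encryption settings it is missing."""
    gaps: dict[str, list[str]] = {}
    for res in resources:
        missing = []
        if not res.encrypted_at_rest:
            missing.append("at rest")
        if not res.tls_in_transit:
            missing.append("in flight")
        if missing:
            gaps[res.name] = missing
    return gaps


inventory = [
    Resource("orders-db", "database", encrypted_at_rest=False, tls_in_transit=True),
    Resource("reports", "object-store", encrypted_at_rest=True, tls_in_transit=True),
    Resource("legacy-db", "database", encrypted_at_rest=False, tls_in_transit=False),
]
print(encryption_gaps(inventory))
# {'orders-db': ['at rest'], 'legacy-db': ['at rest', 'in flight']}
```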

Can Apple compete on privacy?

Apple's privacy campaign has already had an impact in forcing the competition to pay closer attention to their disclosures and controls. It is unlikely to move the needle on market share, but Apple can only gain as awareness of the great data tradeoff of targeted advertising grows and missteps in executing it continue. It should also be more effective as a retention tool for anyone who has not already been locked into Apple's milieu through its self-reinforcing portfolio of devices and growing family of services. Furthermore, while the smartphone market is mature, whatever emerging platform challenges it will likely raise even more profound privacy concerns. Already, wearables measure our pulse and detect whether we've fallen, and the personal data that augmented reality gear could generate by measuring exactly what you're looking at could make smartphone-generated data seem crude by comparison. And there's another potential benefit to Apple's privacy campaign, one that the company has developed since it first stepped up its advocacy.

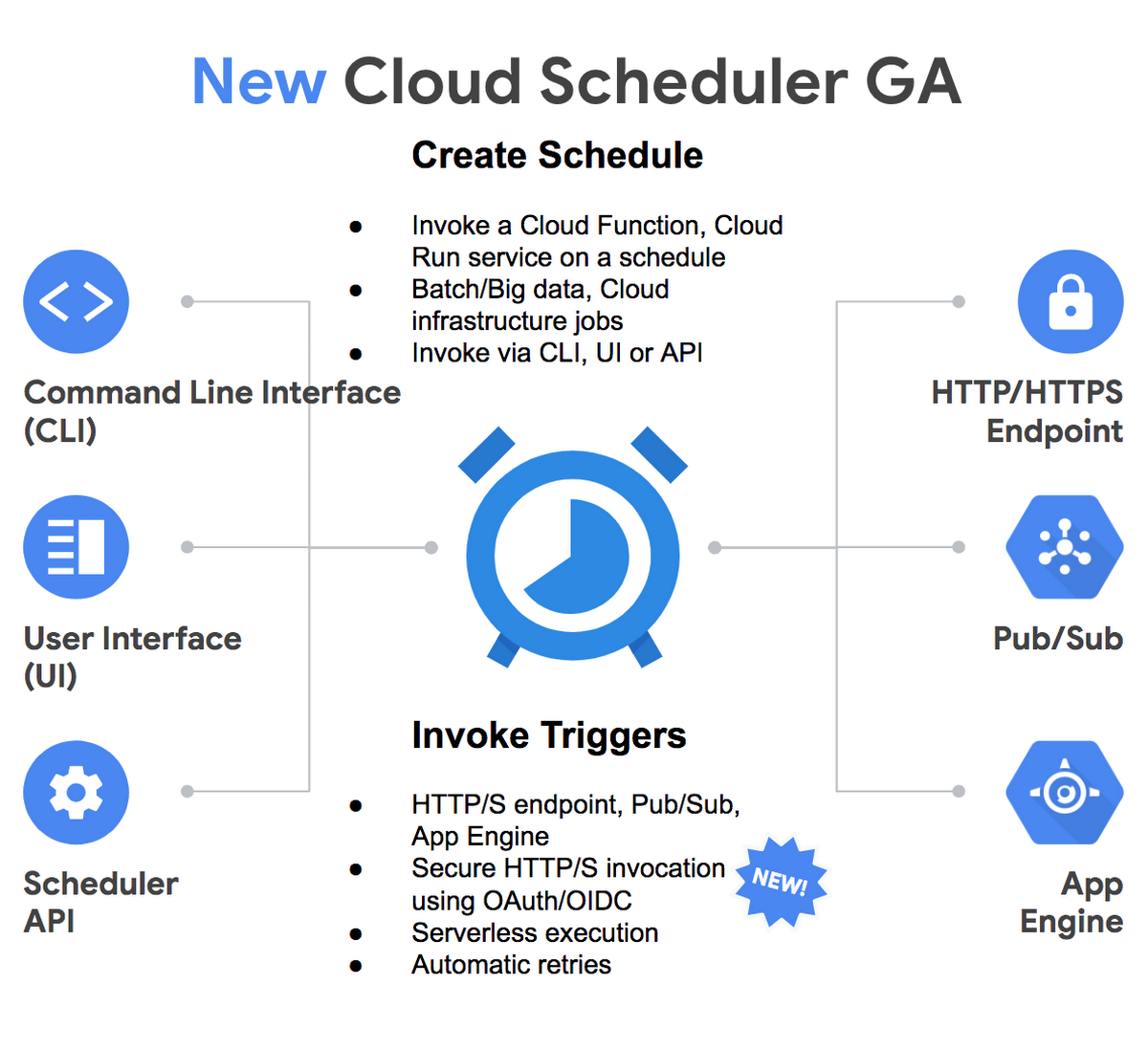

Serverless: applications only when you need them - no more, no less

Traditional IT architectures use a server infrastructure, whether on-premises or cloud-based, that requires managing the systems and services an application needs to function. The application must always be running, and the organization must spin up additional instances of it to handle more load, which tends to be resource-intensive. Serverless architecture instead has a third party provide the infrastructure, with the organization supplying only the application code, broken down into functions that the third party hosts. This allows the application to scale with function usage, and it is more cost-effective because the third party charges for how often the application uses each function rather than for keeping the application running all the time. ... Serverless computing is constrained by performance requirements, resource limits, and security concerns, but it excels at reducing compute costs. That said, where feasible, organizations should migrate to serverless infrastructure gradually, confirming that it can handle the application's requirements before phasing out the legacy infrastructure.
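The cost argument can be made concrete with a rough monthly model: an always-on deployment pays for instance-hours whether or not it is busy, while a function platform typically bills per request plus compute time, often expressed in GB-seconds. The prices and workload below are hypothetical placeholders, not any provider's current rates; substitute your own figures.

```python
HOURS_PER_MONTH = 730  # average hours in a month (8,760 / 12)


def always_on_cost(instances: int, hourly_rate: float) -> float:
    """Monthly cost of servers that run 24/7 regardless of load."""
    return instances * hourly_rate * HOURS_PER_MONTH


def serverless_cost(
    invocations: int,
    avg_duration_s: float,
    memory_gb: float,
    price_per_million_requests: float,
    price_per_gb_second: float,
) -> float:
    """Monthly cost when billed per request plus the memory-time actually used."""
    request_cost = invocations / 1_000_000 * price_per_million_requests
    compute_cost = invocations * avg_duration_s * memory_gb * price_per_gb_second
    return request_cost + compute_cost


# Hypothetical prices and workload, for illustration only.
servers = always_on_cost(instances=2, hourly_rate=0.10)
functions = serverless_cost(
    invocations=1_000_000,
    avg_duration_s=0.2,
    memory_gb=0.5,
    price_per_million_requests=0.20,
    price_per_gb_second=0.0000167,
)
print(f"always-on: ${servers:.2f}/month, serverless: ${functions:.2f}/month")
```

With these made-up numbers a modest workload is far cheaper on functions. Because the serverless bill grows linearly with invocations, a sustained high-volume workload can reverse the comparison, which is one more reason to migrate gradually and measure.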

Four Myths of Digital Transformation: What Only 8% of Companies Know

New research by Bain & Company finds that only 8% of global companies have been able to achieve their targeted business outcomes from their investments in digital technology. Put another way, more than 90% of companies are still struggling to deliver on the promise of a technology-enabled business model. What secret formula do the 8% deploy? Unsurprisingly, there are no shortcuts or silver bullets. But successful transformations do share some common themes. One of the most important is understanding that this is really a business transformation, supported by investments in new technology, not new technology in search of opportunities. Many executives pay lip service to this idea, but in practice they delegate too much responsibility to the tech team, hoping the business can watch from the sidelines. At the 8%, executive teams understand that the core of a digital transformation is a business transformation: changing how they engage customers across channels, simplifying business processes, and redesigning products or services.

“We need to up our game”—DHS cybersecurity director on Iran and ransomware

Iranian malicious activity and ransomware attacks both depend largely on exploiting the same sorts of security issues, and both rely on the same tactics: malicious attachments, stolen credentials, or brute-force credential attacks to gain a foothold on targeted networks, usually followed by readily available malware that uses those credentials to move across the network. When asked whether the recent ransomware attacks on cities across the US (including three recent attacks in Florida with dramatically larger ransom demands) indicated a new, more targeted set of campaigns against US local governments, DHS cybersecurity director Christopher Krebs said the attacks were likely not targeted, at least not initially. "I still think these [ransomware campaigns] are fairly expansive efforts, where [the attackers] are initially scanning, looking for certain vulnerabilities, and when they find one, that's when they start to target," he said. "Again, I'm not sure we have the information right now saying they were specifically targeted."
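Brute-force credential attacks usually leave a trail of failed logins from the same source. A minimal detection sketch, assuming a hypothetical log already parsed into (timestamp in seconds, source IP, success flag) tuples sorted by time, flags any source with too many failures inside a sliding time window; the threshold and window are arbitrary defaults to tune for your environment.

```python
from collections import defaultdict, deque


def flag_brute_force(
    events: list[tuple[float, str, bool]],
    max_failures: int = 5,
    window_s: float = 60.0,
) -> set[str]:
    """Return source IPs with >= max_failures failed logins within window_s seconds.

    events must be sorted by timestamp.
    """
    recent_failures: dict[str, deque] = defaultdict(deque)
    flagged: set[str] = set()
    for ts, source_ip, succeeded in events:
        if succeeded:
            continue
        window = recent_failures[source_ip]
        window.append(ts)
        # Drop failures that have slid out of the time window.
        while ts - window[0] > window_s:
            window.popleft()
        if len(window) >= max_failures:
            flagged.add(source_ip)
    return flagged


# Documentation-range IPs; five rapid failures from one source.
log = [(float(t), "203.0.113.7", False) for t in range(5)]
log += [(10.0, "198.51.100.4", False), (11.0, "198.51.100.4", True)]
log.sort()
print(flag_brute_force(log))  # {'203.0.113.7'}
```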

Quote for the day:

"Leaders stuck in old cow paths are destined to repeat the same mistakes. Change leaders recognize the need to avoid old paths, old ideas and old plans." -- Reed Markham

![Microsoft > OneDrive [Office 365]](https://images.idgesg.net/images/article/2019/02/cw_microsoft_office_365_onedrive-100787148-large.jpg)