Rethinking Testing in Production

With products becoming more interconnected, trying to accurately replicate

third-party APIs and integrations outside of production is close to

impossible. Trunk-based development, with its focus on continuous

integration and delivery, acknowledges the need for a paradigm shift. Feature

flags emerge as the proverbial Archimedes’ lever in this transformation, offering

a flexible and controlled approach to testing in production. Developers can now

gradually roll out features without disrupting the entire user base, mitigating

the risks associated with traditional testing methodologies. Feature flags

empower developers to enable a feature in production for themselves during the

development phase, allowing them to refine and perfect it before exposing it to

broader testing audiences. This progressive approach ensures that potential

issues are identified and addressed early in the development process. As the

feature matures, it can be selectively enabled for testing teams, engineering

groups or specific user segments, facilitating thorough validation at each step.

The logistical nightmare of maintaining identical environments is alleviated, as

testing in production becomes an integral part of the development workflow.
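The staged rollout described above (developer first, then testing or engineering groups, then a share of users) can be sketched as a small flag check. This is a minimal illustration, not any particular vendor's API: the `FeatureFlag` class, its field names and the hash-based bucketing are all assumptions made for the example.

```python
import hashlib
from dataclasses import dataclass, field


@dataclass
class FeatureFlag:
    """Hypothetical in-memory flag; a real system would load this from a flag service."""

    name: str
    enabled_users: set = field(default_factory=set)   # e.g. the developer building the feature
    enabled_groups: set = field(default_factory=set)  # e.g. "qa" or "engineering"
    rollout_percent: int = 0                          # share of everyone else, 0 to 100

    def is_enabled(self, user_id: str, groups=()) -> bool:
        # Stage 1: individually targeted users always see the feature.
        if user_id in self.enabled_users:
            return True
        # Stage 2: members of any targeted group see the feature.
        if self.enabled_groups.intersection(groups):
            return True
        # Stage 3: a stable percentage of all remaining users.
        return self._bucket(user_id) < self.rollout_percent

    def _bucket(self, user_id: str) -> int:
        # Hash the flag name and user id so each user lands in a fixed
        # bucket from 0 to 99 and keeps the same answer across requests.
        digest = hashlib.sha256(f"{self.name}:{user_id}".encode()).hexdigest()
        return int(digest, 16) % 100


flag = FeatureFlag(name="new-checkout", enabled_users={"dev-alice"}, enabled_groups={"qa"})
print(flag.is_enabled("dev-alice"))                  # targeted developer
print(flag.is_enabled("tester-bob", groups=["qa"]))  # targeted group
print(flag.is_enabled("customer-1"))                 # everyone else, 0% rollout
```

Raising `rollout_percent` step by step widens exposure without a redeploy, and setting it back to 0 withdraws the feature from general users while leaving it on for the targeted developers and groups.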

Enterprise Architecture in the Financial Realm

Enterprise architecture emerges as the North Star guiding banks through these

changes. Its role transcends being a mere operational construct; it becomes a

strategic enabler that harmonizes business and technology components. A

well-crafted enterprise architecture lays the foundation for adaptability and

resilience in the face of digital transformation. Enterprise architecture

manifests two key characteristics: unity and agility. The unity aspect

inherently provides an enterprise-level perspective, where business and IT

methodologies seamlessly intertwine, creating a cohesive flow of processes and

data. Conversely, agility in enterprise architecture construction involves

deconstruction and subsequent reconstruction, refining shared and reusable

business components, akin to assembling Lego bricks. ... Quantifying the success

of digital adaptation is crucial. Metrics should not solely focus on financial

outcomes but also on key performance indicators reflecting the effectiveness of

digital initiatives, customer satisfaction, and the agility of operational

models.

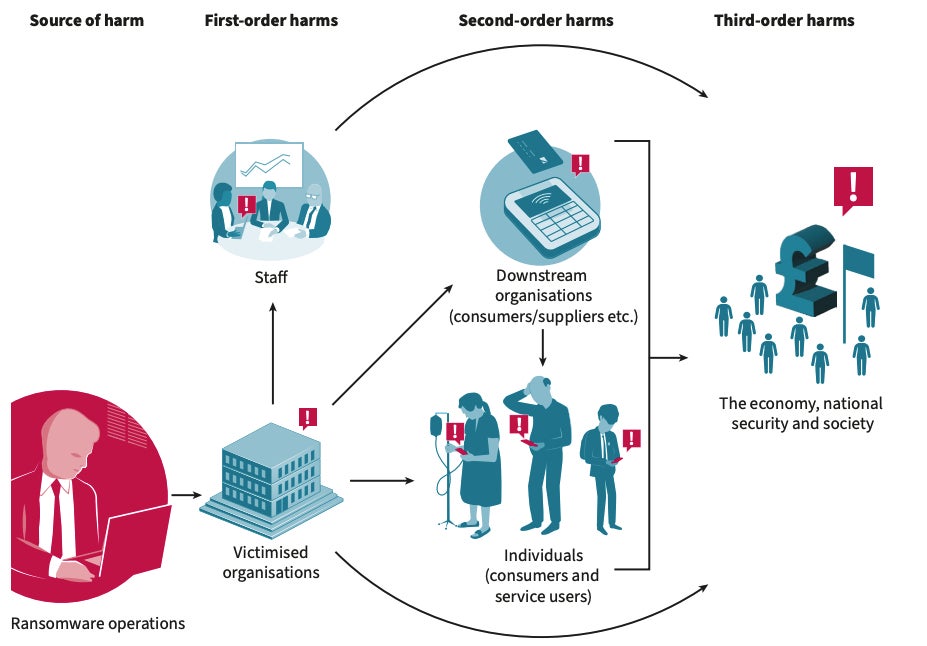

Cloud Security: Stay One Step Ahead of the Attackers

The relatively easy availability of cloud-based storage can lead to a data

sprawl that is uncontrolled and unmanageable. In many cases, data that must

be deleted or secured is left ungoverned, as organizations are not aware of

its existence. In April 2022, cloud data security firm Cyera found

unmanaged data store copies, snapshots and log data. The researchers from

this firm found that 60% of the data security issues present in cloud data

stores were due to unsecured sensitive data. The researchers further observed

that over 30% of scanned cloud data stores were ghost data, and more than 58%

of these ghost data stores contained sensitive or very sensitive data. ...

Despite best practices advised by cloud service providers, data breaches that

originate in the cloud have only increased. IBM’s annual Cost of a Data Breach

report, for example, highlights that 45% of studied breaches occurred in

the cloud. Also noteworthy, the 43% of reporting organizations that said they

are in the early stages of, or have not started, implementing security

practices to protect their cloud environments observed higher breach costs.

Five Questions That Determine Where AI Fits In Your Digital Transformation Strategy

Once you understand the why and the what, only then can you consider how your

organization can use insights from AI to better accomplish its goals. How will

your people respond, and how will they benefit? Today’s organizations have

multiple technology partners, and they may have many that are all saying they

can do AI. But how will your organization work with all those partners to make

an AI solution come together? Many organizations are developing AI policies to

define how it can be used. Having these guardrails ensures that your

organization is operating ethically, morally and legally when it comes to the

use of AI. ... It’s important to consider whether your organization is truly

ready for AI at an enterprise or divisional level before deciding to implement

AI at scale. Pilot projects can help you determine whether the implementation

is generating the intended results and better understand how end users will

interact with the processes. If you can't achieve customization and

personalization across the organization, AI initiatives will be much tougher

to implement.

A Dive into the Detail of the Financial Data Transparency Act’s Data Standards Requirements

The act is a major undertaking for regulators and regulated firms. It is also

an opportunity for the LEI, if selected, to move to another level in the US,

which has been slow to adopt the identifier, and significantly increase

numbers that will strengthen the Global LEI System. While industry experts

suggest regulators in scope of FDTA, collectively called Financial Stability

Oversight Council (FSOC) agencies, initially considered data standards

including the LEI and Financial Instrument Global Identifier published by

Bloomberg, they suggest the LEI is the best match for the regulation’s

requirements for “Covered agencies to establish ‘common identifiers’ for

information reported to covered regulatory agencies, which could include

transactions and financial products/instruments.” ... The selection and

implementation of a reporting taxonomy is more challenging as it will require

many of the regulators to abandon existing reporting practices often based on

PDFs, text and CSV files, and replace these with electronic reporting and

machine-readable tagging. XBRL fits the bill, say industry experts, although

there has been pushback from some agencies that see the unfunded requirement

for change as too great a burden.

Data Center Approach to Legacy Modernization: When is the Right Time?

Legacy systems can lead to inefficiencies in your business. If we take one of

the parameters mentioned above, such as cooling, one example of inefficiency

could lie within an old server that’s no longer of use but still turned on.

This could be placing unnecessary strain on your cooling, thus impacting your

environmental footprint. Legacy systems may no longer be the most appropriate

for your business, as newer technologies emerge that offer a more efficient

method of producing the same, or better, results. If you neglect this

technology, you might be giving your competitors an advantage which could be

costly for your business. ... A cyber-attack takes place every 39 seconds,

according to one report. This puts businesses at risk of losing or

compromising not only their intellectual property and assets but also their

customers’ data. This could put you at risk of damaging your reputation and

even facing regulatory fines. One of the best reasons to invest in digital

transformation is for the security of your business. Systems that no longer

receive updates can become a target of cyber-attacks and act as a

vulnerability within your technology infrastructure.

4 paths to sustainable AI

Hosting AI operations at a data center that uses renewable power is a

straightforward path to reduce carbon emissions, but it’s not without

tradeoffs. Online translation service DeepL runs its AI functions from four

co-location facilities: two in Iceland, one in Sweden, and one in Finland. The

Icelandic data center uses 100% renewably generated geothermal and

hydroelectric power. The cold climate also eliminates 40% or more of the total

data center power needed to cool the servers because they open the windows

rather than use air conditioners, says DeepL’s director of engineering Guido

Simon. Cost is another major benefit, he says, with prices of five cents per

kWh compared to about 30 cents or more in Germany. The network latency

between the user and a sustainable data center can be an issue for

time-sensitive applications, says Stent, but only in the inference stage,

where the application provides answers to the user, rather than the

preliminary training phase. DeepL, with headquarters in Cologne, Germany,

found it could run both training and inference from its remote co-location

facilities. “We’re looking at roughly 20 milliseconds more latency compared to

a data center closer to us,” says Simon.

Can ChatGPT drive my car? The case for LLMs in autonomy

Autonomous driving is an especially challenging problem because certain edge

cases require complex, human-like reasoning that goes far beyond legacy

algorithms and models. LLMs have shown promise in going beyond pure

correlations to demonstrating a real “understanding of the world.” This new

level of understanding extends to the driving task, enabling planners to

navigate complex scenarios with safe and natural maneuvers without requiring

explicit training. ... Safety-critical driving decisions must be made in less

than one second. The latest LLMs running in data centers can take 10 seconds

or more. One solution to this problem is hybrid-cloud architectures that

supplement in-car compute with data center processing. Another is

purpose-built LLMs that compress large models into form factors small enough

and fast enough to fit in the car. Already we are seeing dramatic improvements

in optimizing large models. Mistral 7B and Llama 2 7B have demonstrated

performance rivaling GPT-3.5 with an order of magnitude fewer parameters (7

billion vs. 175 billion). Moore’s Law and continued optimizations should

rapidly shift more of these models to the edge.
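The hybrid idea above, in which in-car compute is supplemented by data center processing, can be sketched as a deadline-bound decision: ask the remote model, but fall back to the local plan if no answer arrives in time. This is only an illustrative sketch. The function names, the returned maneuvers and the simulated delays are assumptions, and the one-second default simply mirrors the figure quoted in the text.

```python
import concurrent.futures
import time

DEADLINE_S = 1.0  # the text says safety-critical decisions need under one second


def local_plan(scene: str) -> str:
    """Hypothetical fast in-car planner: always has a conservative answer."""
    return "slow_down"


def cloud_plan(scene: str, delay_s: float) -> str:
    """Hypothetical data center LLM planner; time.sleep stands in for network and inference time."""
    time.sleep(delay_s)
    return "change_lane"


def decide(scene: str, cloud_delay_s: float, deadline_s: float = DEADLINE_S) -> str:
    pool = concurrent.futures.ThreadPoolExecutor(max_workers=1)
    future = pool.submit(cloud_plan, scene, cloud_delay_s)
    fallback = local_plan(scene)  # computed right away, so it is ready if needed
    try:
        # Use the remote answer only if it arrives before the deadline.
        return future.result(timeout=deadline_s)
    except concurrent.futures.TimeoutError:
        return fallback
    finally:
        # Do not block the decision on a late remote answer.
        pool.shutdown(wait=False)


print(decide("merging truck ahead", cloud_delay_s=0.0, deadline_s=0.5))
print(decide("merging truck ahead", cloud_delay_s=0.5, deadline_s=0.05))
```

The design choice is that the car never waits past its deadline: the remote model can improve a decision but is never required for one.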

The Race to AI Implementation: 2024 and Beyond

The biggest problem is that the competitive and product landscape will be

undergoing massive flux, so picking a strategic solution will be increasingly

difficult. Younger companies that are less likely to be able to handle the

speed of these advancements should focus on openness so that if they fail,

someone else can pick up support, interoperability, and compatibility. If you

aren’t locked into a single vendor’s solution and can mix and match as needed,

you can move on or off a platform based on your needs. As with any new

technology, take advice about hardware selection from the platform supplier.

This means that if you are using ChatGPT, you want to ask OpenAI for advice

about new hardware. If you are working with Microsoft or Google or any other

AI developer, ask them what hardware they would recommend. ... You need a

vendor that embraces all the client platforms for hybrid AI and one with a

diverse, targeted solution set that individually focuses on the markets your

firm is in. Right now, only Lenovo seems to have all the parts necessary

thanks to its acquisition of Motorola.

Quote for the day:

"It's fine to celebrate success but it is more important to heed the lessons of failure." -- Bill Gates

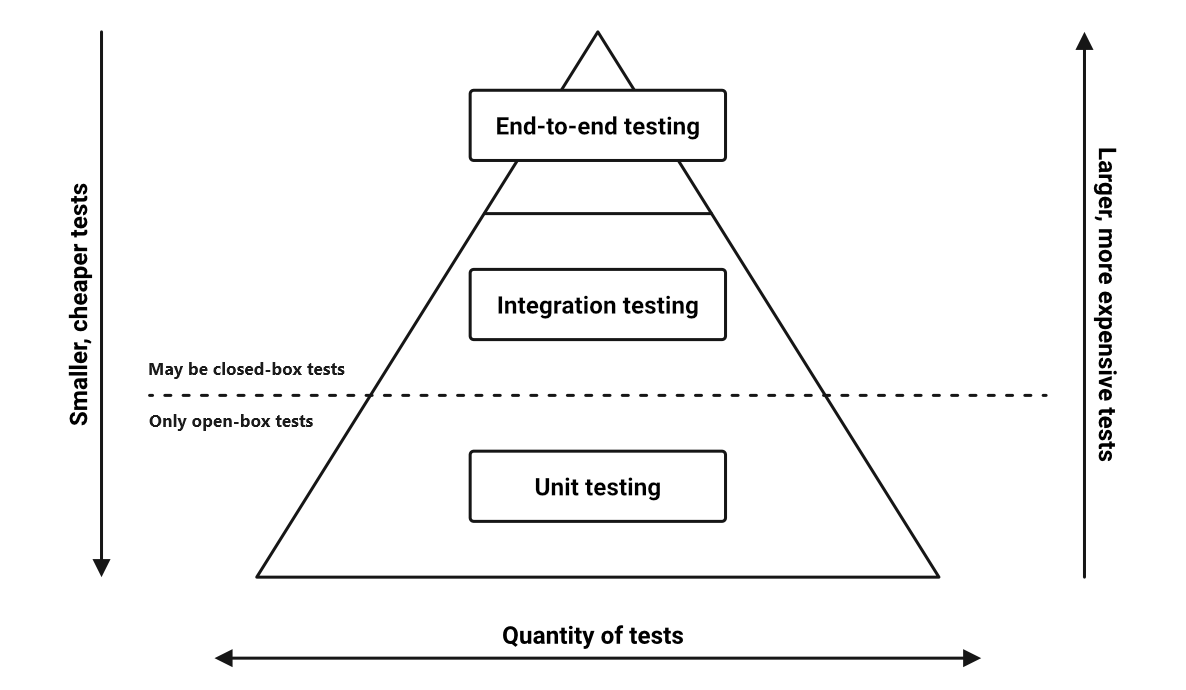
