AMD files teleportation patent to supercharge quantum computing

AMD has proposed a patent for 'teleportation,' meaning things could be about to

get much more efficient around here. With the incredible technological feats

humanity achieves on a daily basis, and Nvidia's Jensen going off on one last

year about GeForce holodecks and time machines, it's easy for us to slip into a

headspace that lets us believe genuine human teleportation is just around the

corner. "Finally," you sigh, mouthing the headline to yourself. "Goodbye work

commute, hello popping to Japan for authentic ramen on my lunch break." ...

Essentially, the 'out-of-order' execution method AMD is looking to lay claim to

ensures that some qubits that would otherwise sit idle, waiting for their

calculation step to come around, can execute independently of a prior result.

Where usually they would need to wait for previous qubits to provide

instructions, they can calculate simultaneously, with no need to wait in line. So, no, we're not going to be

zipping through wormholes just yet. But if AMD's designs come through, we could

be looking at much more efficient, scalable and stable quantum computing

architecture than we have now.
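
The scheduling idea can be sketched in ordinary Python. This is only a classical analogy for out-of-order execution, not AMD's patented method and not a quantum simulation: the operation names (`q0` to `q3`), the dependency table and the `schedule` helper are all hypothetical. It shows that an operation with no pending dependencies can share a time step with others instead of waiting its turn.

```python
from collections import defaultdict

def schedule(ops, deps):
    """Group operations into time steps.

    An operation is placed one step after the latest of its dependencies,
    so operations with no unmet dependencies share a step.
    Assumes `ops` is already listed in a valid dependency order.
    """
    step_of = {}
    for op in ops:
        step_of[op] = 1 + max((step_of[d] for d in deps.get(op, [])), default=-1)
    steps = defaultdict(list)
    for op, s in step_of.items():
        steps[s].append(op)
    return [steps[s] for s in sorted(steps)]

# Hypothetical program: q2 does not depend on q0 or q1.
ops = ["q0", "q1", "q2", "q3"]
deps = {"q1": ["q0"], "q3": ["q1", "q2"]}

in_order_steps = len(ops)   # strictly sequential: one operation per step
plan = schedule(ops, deps)
print(plan)                 # [['q0', 'q2'], ['q1'], ['q3']]
```

In this toy case the independent operation `q2` runs alongside `q0`, so the program finishes in three steps rather than four.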

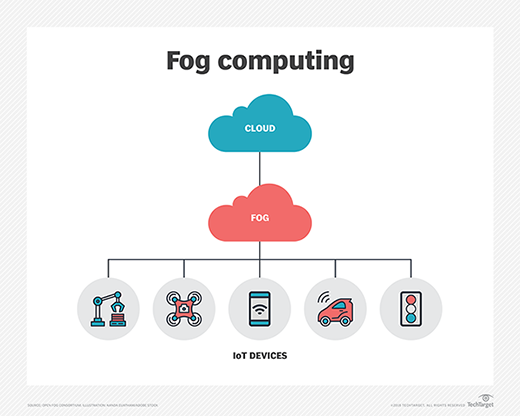

The Internet of Things Requires a Connected Data Infrastructure

Not long ago, a terabyte of information was an enormous amount and might be the

foundation for solid decision-making. These days, it won’t cut it. For example,

looking at a terabyte of data might yield a decision that’s 70% accurate. But

leaving 30% to chance is unacceptable when it comes to real-time vehicle safety.

On the other hand, having the ability to ingest and process 40 terabytes, from

all sources, edge to core, can result in an accuracy rate well above 90%.

Something jumps in front of your car: is it a person, a dog, a trash

bag, a child’s ball? Real-time systems need to determine the level of risk and

react within milliseconds. Real-time processing has to be done closer to where

the decisions are being made. In terms of IoT, a lot of questions can be

answered by using a digital twin. Digital twins create additional layers of insight,

provide a better understanding of what’s happening in any given situation, and

help decide on the most appropriate course of immediate action. They take

insight not just from the raw sensors (the edge compute nodes) but from a

combination of real-time data at the edge and historical data at the core.

Can Your Organization Benefit from Edge Data Centers?

Organizations considering a move to edge computing should begin their journey by

inventorying their applications and infrastructure. It's also a good idea to

assess current and future user requirements, focusing on where data is created

and what actions need to be performed on that data. "Generally speaking, the

more susceptible data is to latency, bandwidth, or security issues, the more

likely the business is to benefit from edge capabilities," said Vipin Jain, CTO

of edge computing startup Pensando. "Focus on a small number of pilot projects

and partner with integrators/ISVs with experience in similar deployments."

Fugate recommended examining business functions and processes and linking them

to the application and infrastructure services they depend on. "This will ensure

that there isn’t one key centralized service that could stop critical business

functions," he said. "The idea is to determine what functions must survive

regardless of an infrastructure or connectivity failure." Fugate also advised

determining how to effectively manage and secure distributed edge platforms.
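
One way to picture the mapping exercise Fugate describes is a small dependency check. The sketch below is illustrative only: the service names, their locations, the business functions and the `at_risk` helper are all made up for the example. It flags functions that must survive a connectivity failure but still depend on a centralized service.

```python
# Hypothetical inventory: where each service runs.
service_location = {
    "auth": "central",
    "inventory-db": "edge",
    "pos-api": "edge",
    "reporting": "central",
}

# Hypothetical mapping of business functions to the services they need.
function_deps = {
    "checkout": ["pos-api", "inventory-db", "auth"],
    "restock-alerts": ["inventory-db"],
    "monthly-report": ["reporting"],
}

# Functions that must keep working if the link to the core is lost.
must_survive = {"checkout", "restock-alerts"}

def at_risk(function_deps, service_location, must_survive):
    """Map each must-survive function to the central services it depends on."""
    risks = {}
    for fn in sorted(must_survive):
        central = [s for s in function_deps[fn] if service_location[s] == "central"]
        if central:
            risks[fn] = central
    return risks

risks = at_risk(function_deps, service_location, must_survive)
print(risks)  # {'checkout': ['auth']}
```

Here `checkout` would stop if the central `auth` service became unreachable, which makes `auth` a candidate for an edge replica or a cached fallback.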

How to Speed Up Your Digital Transformation

Complexity-in-use is often overlooked in digitalization projects because

those in charge think that accounting for task and system complexity

independently of one another is enough. In our case, at the beginning of the

transformation, tasks and processes were considered relatively stable and

independent from the new system. As a result, the loan-editing clerks were

unable to complete business-critical tasks for weeks, and management needed

to completely reinvent their change management approach to turn the project

around and overcome operational problems in the high complexity-in-use area.

They brought in more people to reduce the backlog, developed new training

materials, and even changed the newly implemented system — a problem-solving

technique organizations with smaller budgets wouldn’t find easy to deploy.

In the end, our study partner managed this herculean task, but it took them

months to get the struggling departments back on track.

Ecosystems at The Edge: Where the Data Center Becomes a Marketplace

Rapidly evolving edge computing architectures are often seen as a way for

businesses to enable new applications that require low latency and place

computing close to the origin of data. While those are important use cases,

what is less often discussed is the opportunity for businesses to leverage

the edge to spawn ecosystems that generate new revenue. To realize this

value, companies must think of the edge as more than just a collection point

for data from intelligent devices. They should broaden their vision to see

the edge as a new business hub. These small data centers can evolve into

full-fledged service providers that attract local businesses, generate

e-commerce transactions and enable interconnections that never touch the

central cloud. Edge computing is an expansion of cloud infrastructure that

moves data collection, processing and services closer to the point at which

data is created or used. It is the fastest-growing segment of the cloud

category with the total market expected to expand 37% annually through 2027,

according to Grand View Research.

NSA: We 'don't know when or even if' a quantum computer will ever be able to break today's public-key encryption

In the NSA's summary, a CRQC – should one ever exist – "would be capable of

undermining the widely deployed public key algorithms used for asymmetric

key exchanges and digital signatures" – and what a relief it is that no one

has one of these machines yet. The post-quantum encryption industry has long

sought to portray quantum computing as an immediate threat to today's encryption, as El

Reg detailed in 2019. "The current widely used cryptography and hashing

algorithms are based on certain mathematical calculations taking an

impractical amount of time to solve," explained Martin Lee, a technical lead

at Cisco's Talos infosec arm. "With the advent of quantum computers, we risk

that these calculations will become easy to perform, and that our

cryptographic software will no longer protect systems." Given that nations

and labs are working toward building crypto-busting quantum computers, the

NSA said it was working on "quantum-resistant public key" algorithms for

private suppliers to the US government to use, having had its Post-Quantum

Standardization Effort running since 2016.

There are multiple ways that AI could become a detriment to society. Machine

learning, a subfield of AI, learns from vast quantities of data and hence

carries the risk of perpetuating data bias. AI use cases including facial

recognition and predictive analytics could adversely impact protected classes

in areas such as loan rejection, criminal justice and racial bias, leading to

unfair outcomes for certain people. ... AI is only as good as the data that is

used to train it. From an industry perspective, this is problematic given

there is often a lack of training data for true failures in critical systems.

This becomes dangerous when a wrong prediction leads to potentially

life-threatening events such as manufacturing accidents or oil spills. This is

why a focus on hybrid AI and “explainable AI” is necessary. ... Unfortunately,

cybercriminals have historically been better and faster adopters of technology

than the rest of us. AI can become a detriment to society when deepfakes and

deep learning models are used as vehicles for social engineering by scammers

to steal money, sensitive data and confidential intellectual property by

pretending to be people and entities we trust.

Reviewing the Eight Fallacies of Distributed Computing

The challenges of distributed systems, and the broad science around the

techniques and mechanisms used to build them, are now well researched. The

thing you learn when addressing these challenges in the real world, however,

is that academic understanding only gets you so far. Building distributed

systems involves engineering pragmatism and trade-offs, and the best solutions

are the ones you discover by experience and experiment. ... However, the

engineering reality is that multiple kinds of failures can, and will, occur at

the same time. The ideal solution now depends on the statistical distribution

of failures; or on analysis of error budgets, and the specific service impact

of certain errors. The recovery mechanisms can themselves fail due to system

unreliability, and the probability of those failures might impact the

solution. And of course, you have the dangers of complexity: solutions that

are theoretically sound, but complex, might be far more complicated to manage

or understand whenever an incident takes place than simpler mechanisms that

are theoretically not as complete.
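
A back-of-the-envelope calculation shows why simultaneous failures and imperfect recovery matter. The sketch below assumes failure modes are independent, which real systems often violate, and every probability in it is invented for illustration. The helper names `p_any_failure` and `p_unrecovered` are hypothetical.

```python
from math import prod

def p_any_failure(probs):
    """Probability that at least one failure mode occurs, assuming independence."""
    return 1 - prod(1 - p for p in probs)

def p_unrecovered(p_fail, p_recovery_fails):
    """Probability that a failure occurs and its recovery mechanism also fails."""
    return p_fail * p_recovery_fails

# Made-up per-interval probabilities for three failure modes.
modes = [0.01, 0.02, 0.005]

p = p_any_failure(modes)
residual = p_unrecovered(p, 0.1)  # assumed 10% chance that recovery itself fails
print(f"any failure: {p:.4%}, unrecovered: {residual:.4%}")
```

Even with small individual probabilities, the chance that something goes wrong adds up, and an unreliable recovery path leaves a residual risk that has to be weighed against the cost of a more complex mechanism.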

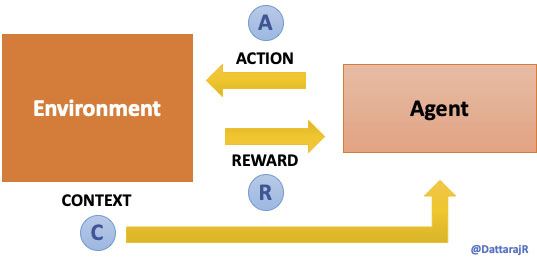

Machine Learning Algorithm Sidesteps the Scientific Method

We might be most familiar with machine learning algorithms as they are used in

recommendation engines, and facial recognition and natural language processing

applications. In the field of physics, however, machine learning algorithms

are typically used to model complex processes like plasma disruptions in

magnetic fusion devices, or the dynamic motions of fluids. In the

case of this work by the Princeton team, the algorithm skips the interim steps

of needing to be explicitly programmed with the conventions of physics. “The

algorithms developed are robust against variations of the governing laws of

physics because the method does not require any knowledge of the laws of

physics other than the fundamental assumption that the governing laws are

field theories,” said the team. “When the effects of special relativity or

general relativity are important, the algorithms are expected to be valid as

well.” The researchers’ approach was inspired in part by Oxford philosopher

Nick Bostrom’s philosophical thought experiment that the universe is actually

a computer simulation.

What's the Real Difference Between Leadership and Management?

Leaders, like entrepreneurs, are constantly looking for ways to add to their

world of expertise. They tend to enjoy reading, researching and connecting

with like-minded individuals; they constantly aim to grow. They are usually

open-minded and seek opportunities that challenge them to expand their level

of thinking, which in turn leads to developing more solutions to problems that

may arise. Managers, many times, rely on existing knowledge and skills by

repeating proven strategies or behaviors that may have worked in the past to

help maintain a steady track record within their field of success with

clients. ... Leaders create trust and bonds with their mentees that go

beyond expression or definition. Their mentees become raving fanatics willing

to go above and beyond the usual scope of supporting their leader in achieving

his or her mission. In the long run, the overwhelming support from his or her

fanatics helps increase the value and credibility of the leader. On the other

hand, managers direct, delegate, enforce and advise either an individual or

group that typically represents a brand or organization looking for direction.

Followers do as they are told and rarely ask questions.

Quote for the day:

"Most people don't know how AWESOME they are, until you tell them. Be sure to tell them." -- Kelvin Ringold
