Top 6 cybersecurity trends to watch for at Black Hat USA 2020

Tom Kellermann, head of cybersecurity strategy at VMware Carbon Black, said, "Black Hat USA 2020 will highlight the dramatic surge and increased sophistication of cyberattacks amid COVID-19. A recent VMware Carbon Black report found that from the beginning of February to the end of April 2020, attacks targeting the financial sector grew by 238%. Cybercriminals are also preying on the virtual workforce; the mass shift to remote work has sparked increasingly punitive attacks. Malicious actors have set their sights on commandeering digital transformation efforts to attack the customers of organizations. These burglaries have escalated to a home invasion, with destructive attacks increasing 102% through the use of NotPetya-style ransomware and wipers. Spear phishing is no longer the primary attack vector; rather, OS vulnerabilities, application exploitation, RDP open to the internet, and island hopping have risen to the top."

Code42 CISO and CIO Jadee Hanson said, "Top of mind for me is how the mental and emotional wellbeing of our workforce during the pandemic is impacting people's work and behavior and, as a result, their risk profiles. Businesses need to have a strong pulse on how their employees are doing."

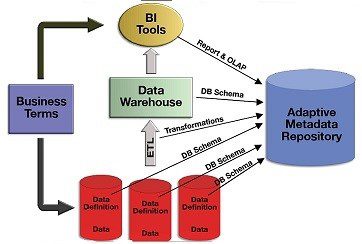

Metadata Repository Basics: From Database to Data Architecture

While knowledge graphs have shown potential for finding relationship patterns among large amounts of information in the metadata repository, some businesses want more from a metadata repository. Streaming data ingested into databases from social media and IoT sensors also needs to be described. According to a New Stack survey of 800 professional developers, real-time data use has increased significantly. What does this mean for the metadata repository? Enterprises want metadata to show the who, what, why, when, and how of their data. A centralized metadata repository database answers these questions but remains too slow and cumbersome to handle large volumes of fast-arriving metadata. Knowledge graphs have the advantage of handling lots of data quickly; however, they display only specific types of patterns in the metadata repository. Companies need another metadata repository tool. Enter the data catalog: a metadata repository that tells consumers what data lives in their data systems and the context of that data. Automation and discovery make the data catalog attractive by ensuring it keeps up with fast-moving data and its changes, and both business and technical users can easily query it.
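
To make the who/what/why/when/how idea concrete, here is a minimal sketch of a data catalog as an in-memory Python index. The `CatalogEntry` and `DataCatalog` names and their fields are hypothetical, chosen only for illustration; real catalog products populate entries through automated discovery rather than manual registration and offer far richer search.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class CatalogEntry:
    """Metadata for one dataset: the who, what, why, when, and how."""
    name: str          # what: the dataset's identifier
    owner: str         # who: the team or person responsible for it
    purpose: str       # why: the business use it serves
    ingestion: str     # how: how it arrives, e.g. "streaming" or "batch"
    updated: datetime  # when: the last time it changed
    tags: set[str] = field(default_factory=set)


class DataCatalog:
    """A toy in-memory catalog mapping dataset names to their metadata."""

    def __init__(self) -> None:
        self._entries: dict[str, CatalogEntry] = {}

    def register(self, entry: CatalogEntry) -> None:
        # Registering an existing name replaces the old entry, so the catalog
        # always reflects the most recent description of each dataset.
        self._entries[entry.name] = entry

    def search(self, term: str) -> list[CatalogEntry]:
        """Case-insensitive match against name, purpose, ingestion, and tags."""
        t = term.lower()
        return [
            e for e in self._entries.values()
            if t in e.name.lower()
            or t in e.purpose.lower()
            or t in e.ingestion.lower()
            or any(t in tag.lower() for tag in e.tags)
        ]


if __name__ == "__main__":
    catalog = DataCatalog()
    catalog.register(CatalogEntry(
        name="iot_sensor_readings",      # hypothetical example dataset
        owner="plant-ops",
        purpose="equipment health monitoring",
        ingestion="streaming",
        updated=datetime.now(timezone.utc),
        tags={"iot", "real-time"},
    ))
    print([e.name for e in catalog.search("iot")])
```

Keeping each answer in its own field is what lets a business user search by purpose while a technical user searches by ingestion path against the same catalog.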

Confidential Computing Will Revolutionize The Internet Of Things

Confidential computing is all about trust. Developers in this field are seeking to accelerate the adoption of what is known as “Trusted Execution Environment” (TEE) technologies. A TEE isolates code and data from applications running on the main operating system, keeping them out of reach of adversaries who may gain access to that operating system. To use an analogy from the article: if the main system is the White House, with its many layers of protection, a TEE is the bunker underneath it. Within such a bunker, only entities authorized by the actual data owner can view or alter the data. This enables all sorts of applications to operate efficiently without ever needing direct access to the data. It goes beyond the better-known technique of anonymizing data, which simply removes personal identifiers from a database. While anonymization protects privacy, it limits the usefulness of the data, whereas confidential computing secures data even while it is in use, allowing for wider application. Confidential computing protects encrypted software code and data from malicious administrators and hackers in public clouds, protects sensitive machine-learning models, and enables privacy-preserving data analytics.

Technical Challenges of IoT Cybersecurity in a Post-COVID-19 World

For manufacturers of connected IoT products, it is key to focus on the supply chain and to improve their ability to break their products down into individual components. Vulnerabilities can be managed effectively only when information about supply chain dependencies is accurate and up to date.
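
One way to picture breaking a product down into its components is a bill of materials checked against vulnerability advisories. The sketch below assumes a hypothetical in-memory component list and an advisory map from component name to first fixed version; it is not tied to any real SBOM format such as SPDX or CycloneDX, and its version comparison only handles purely numeric dotted versions of consistent length.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Component:
    name: str
    version: tuple[int, ...]  # numeric dotted version, e.g. (1, 1, 1)
    supplier: str


def parse_version(text: str) -> tuple[int, ...]:
    """Convert a purely numeric dotted version such as '2.4.1' to (2, 4, 1)."""
    return tuple(int(part) for part in text.split("."))


def affected_components(
    bom: list[Component],
    advisories: dict[str, tuple[int, ...]],
) -> list[Component]:
    """Return the components still below the first fixed version.

    `advisories` maps a component name to the first version that contains
    the fix; components with no advisory are treated as unaffected.
    """
    return [
        c for c in bom
        if c.name in advisories and c.version < advisories[c.name]
    ]


if __name__ == "__main__":
    # Hypothetical product breakdown and advisory data, for illustration only.
    bom = [
        Component("example-tls-lib", parse_version("1.1.1"), "vendor-a"),
        Component("example-rtos", parse_version("4.0.2"), "vendor-b"),
    ]
    advisories = {"example-tls-lib": parse_version("1.1.2")}
    for c in affected_components(bom, advisories):
        print(f"{c.name} {c.version} from {c.supplier} needs an update")
```

The check is only as good as the inventory: if the component list is stale, an affected library simply never appears in the result, which is the point the text makes about accurate, recent dependency information.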

A second side effect of the pandemic is a massively increased reliance on cloud-based communication systems. It is unthinkable to conduct business effectively, and in compliance with the current legal restrictions, without holding a videoconference, sharing a document, or presenting a slide set remotely. The systems used to perform those tasks, however, largely follow the same basic principles that typical client-server architectures have followed for roughly 20 years. While cryptographic transport protocols have improved significantly since SSLv2, there is still a disparity in the level of trust between client and server: clients are typically considered entirely untrusted, while servers hold all the secrets and relay data securely. While this is easiest for the implementers of backend infrastructure, such a design is fundamentally undesirable from a security point of view.

Industrial robots could 'eat metal' to power themselves

The researchers' vision for a "metal-air scavenger" could solve one of the quandaries of future IoT-enabled factories: how to power a device that moves without adding the mass and weight of bulky batteries. The answer, according to the University of Pennsylvania researchers, is to forage electrochemically for energy from the metal surfaces that a robot or IoT device traverses, converting the harvested metal into power through a chemical reaction. "Robots and electronics [would] extract energy from large volumes of energy dense material without having to carry the material on-board," the researchers say in a paper published in ACS Energy Letters. It would be like "eating metal, breaking down its chemical bonds for energy like humans do with food." Batteries work by repeatedly breaking and creating chemical bonds. The research points to the gap between computing and power storage: computing is well suited to miniaturization, and processors have been progressively reduced in size while their performance has increased, but battery storage has not shrunk in the same way.

IoT Automation Trend Rides Next Wave of Machine Learning, Big Data

In particular, automated discovery of IoT environments for cybersecurity purposes has been an ongoing driver of IoT automation, simply because there is too much machine information to track manually, according to Lerry Wilson, senior director for innovation and digital ecosystems at Splunk. The target is anomalies in data stream patterns. “Anomalous behavior starts to trickle into the environment, and there’s too much for humans to do,” Wilson said. While much of this still requires a human somewhere “in the loop,” the role of automation continues to grow. Wilson said Splunk, which focuses on integrating a breadth of machine data, has worked with partners so that incoming data can now kick off useful functions in real time. These kinds of efforts are central to emerging information technology/operations technology (IT/OT) integration, which, along with machine learning (ML), promises increased automation of business workflows. “Today, we and our partners are creating machine learning that will automatically set up a work order – people don’t have to [manually] enter that anymore,” he said.
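
The article does not say which detection method Splunk uses. As a generic illustration of flagging anomalies in a data stream, the sketch below applies a rolling z-score: each reading is compared with the mean and standard deviation of the readings just before it. The function name, window size, and threshold are assumptions chosen for illustration, not values from the source.

```python
from __future__ import annotations

import math
from collections import deque
from typing import Iterable, Iterator


def detect_anomalies(
    stream: Iterable[float],
    window: int = 20,
    threshold: float = 3.0,
) -> Iterator[tuple[int, float]]:
    """Yield (index, value) for readings that break the recent pattern.

    A reading is flagged when it lies more than `threshold` standard
    deviations from the mean of the previous `window` readings. No
    readings are flagged until the window has filled.
    """
    recent: deque[float] = deque(maxlen=window)
    for i, x in enumerate(stream):
        if len(recent) == window:
            mean = sum(recent) / window
            std = math.sqrt(sum((v - mean) ** 2 for v in recent) / window)
            # With a perfectly flat window, any change at all is unusual.
            if std == 0:
                is_anomaly = x != mean
            else:
                is_anomaly = abs(x - mean) > threshold * std
            if is_anomaly:
                yield i, x
        # Every reading, anomalous or not, joins the window; a production
        # system might exclude flagged readings so they don't skew the baseline.
        recent.append(x)


if __name__ == "__main__":
    readings = [10.0, 10.5, 9.5] * 10 + [50.0] + [10.0] * 5
    print(list(detect_anomalies(readings)))
```

Because the function is a generator, it can sit on an unbounded stream and emit alerts as they occur, which is the property that lets incoming data "kick off useful functions in real time" without a human watching every reading.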

Putting AI And Machine Learning To Work In Cloud-Based BI And Analytics

By leveraging modern technology, WANdisco automates data lake migration and replication to the cloud with its LiveData Cloud Services, built on its patented Distributed Coordination Engine platform. This innovation is founded on fundamental IP based around forming consensus in a distributed network. This is an extremely hard problem to solve, and to this day some people believe that it cannot be solved. So what is this problem at a high level? If you have a network of nodes distributed across the world, with little to no knowledge of the distance and bandwidth between them, how can you get the nodes to coordinate with each other without worrying about any failure scenarios? The solution is a consensus algorithm, and the gold standard in consensus is an algorithm called Paxos. Our chief scientist, Dr. Yeturu Aahlad, an expert in distributed systems, devised the first, and even now only, commercialised version of Paxos. By doing so, he solved a problem that had been puzzling computer scientists for years. WANdisco’s LiveData Cloud Services are based on this core IP, including our products focused on analytical data and on the challenge of migrating that data to the cloud while keeping it consistent in multiple locations.
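
For readers unfamiliar with Paxos, the sketch below simulates textbook single-decree Paxos in a single Python process: a proposer needs promises from a majority of acceptors, must adopt any value already accepted under the highest ballot it hears about, and a value is chosen once a majority accepts it. This is Lamport's basic algorithm, not WANdisco's Distributed Coordination Engine, whose internals the text does not describe. There is no networking; the `reachable` set is a hypothetical stand-in for failed or partitioned nodes, and ballot numbers are assumed to be unique across proposers.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Any, Optional


@dataclass
class Acceptor:
    promised: int = -1          # highest ballot this acceptor has promised
    accepted_ballot: int = -1   # ballot of the last accepted proposal (-1 = none)
    accepted_value: Any = None  # value of the last accepted proposal

    def prepare(self, ballot: int) -> Optional[tuple[int, Any]]:
        """Phase 1b: promise to ignore lower ballots and report any prior accept."""
        if ballot > self.promised:
            self.promised = ballot
            return (self.accepted_ballot, self.accepted_value)
        return None  # reject: an equal or higher ballot was already promised

    def accept(self, ballot: int, value: Any) -> bool:
        """Phase 2b: accept unless a higher ballot has been promised since."""
        if ballot >= self.promised:
            self.promised = ballot
            self.accepted_ballot = ballot
            self.accepted_value = value
            return True
        return False


def propose(acceptors: list[Acceptor], reachable: set[int],
            ballot: int, value: Any) -> Optional[Any]:
    """Run one proposer round; return the chosen value, or None on failure.

    `reachable` holds the indexes of acceptors that respond, a stand-in
    for crashed or partitioned nodes in this single-process sketch.
    """
    majority = len(acceptors) // 2 + 1

    # Phase 1a: ask every reachable acceptor to promise this ballot.
    promises = []
    for i in reachable:
        reply = acceptors[i].prepare(ballot)
        if reply is not None:
            promises.append(reply)
    if len(promises) < majority:
        return None

    # Safety rule: if any acceptor already accepted a value, re-propose the
    # value with the highest accepted ballot instead of our own.
    prior_ballot, prior_value = max(promises, key=lambda p: p[0])
    if prior_ballot >= 0:
        value = prior_value

    # Phase 2a: ask reachable acceptors to accept (ballot, value).
    accepted = sum(acceptors[i].accept(ballot, value) for i in reachable)
    return value if accepted >= majority else None
```

With three acceptors, a first proposer that reaches acceptors 0 and 1 gets its value chosen; a second proposer with a higher ballot that reaches acceptors 1 and 2 learns of that value through the overlapping acceptor and re-proposes it rather than its own. That overlap between any two majorities is what keeps replicas consistent even when some nodes are unreachable.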

Breach of high-profile Twitter accounts caused by phone spear phishing attack

Whatever specific spear phishing method was used in the breach, the attackers clearly relied on a combination of technical skills and social engineering know-how to convince employees to share their account credentials. Of course, that's the M.O. for many phishing attacks and other types of malicious campaigns. "This attack relied on a significant and concerted attempt to mislead certain employees and exploit human vulnerabilities to gain access to our internal systems," Twitter acknowledged. "This was a striking reminder of how important each person on our team is in protecting our service." Beyond training employees through phishing simulations and similar methods, correcting human behavior is always challenging, which is why socially engineered attacks are often successful.

"This incident demonstrates that social engineering is still a common method for attackers to gain access to internal systems," Ray Kelly, principal security engineer at WhiteHat Security, told TechRepublic. "The human is oftentimes the weakest link in any security chain."

Meeting the Demand: Containerizing Master Data Management in the Cloud

Containerizing MDM as a PaaS offering is essential to realizing the flexibility for which the cloud is renowned. Although this capability is amplified by Docker or Kubernetes orchestration platforms, containers themselves “reduce the disruption of the architecture of the platform and provide more portability and flexibility for customers,” Melcher remarked. “What a container really is is kind of a preconfigured application, if you will.” These lightweight packages include everything needed to deploy apps. Without them, offering MDM as a native PaaS increases the likelihood of vendor lock-in to each cloud provider and all but eliminates on-premises hybrid clouds. The speed and ease of containerizing MDM services lets customers “spin the platform up in a matter of minutes without downloading installation and configuration guides, spinning up a Windows server, or loading up a bunch of pre-requisites,” Melcher said. “All of that sort of tribal type knowledge that customers have had to historically take on when they buy an application goes away.”

The Role of Augmented Data Management in the Workplace

The COVID-19 situation and the associated economic downturn, negative as they are, have brought about a shift in the workplace that is driving more opportunities for growth, greater visibility into the B2B buying process, and a focus on ensuring quality customer experience throughout the buying cycle, according to Rashmi Vittal, CMO at Foster City, Calif.-based Conversica. “This change introduces intelligent automation into the workplace, something we refer to as the Augmented Workforce,” she said. An Augmented Workforce is a workplace where business professionals work alongside artificial intelligence to drive better business outcomes. One AI-driven technology making dramatic changes for customer-facing teams, including sales, marketing, and customer success, is the intelligent virtual assistant (IVA). IVAs accelerate revenue across the customer journey by autonomously engaging contacts, prospects, customers, or partners in human-like, two-way interactions at scale, driving toward the next best action.
