LockFile Ransomware Uses Never-Before-Seen Encryption to Avoid Detection

The ransomware first exploits unpatched ProxyShell flaws and then uses what’s

called a PetitPotam NTLM relay attack to seize control of a victim’s domain,

researchers explained. In this type of attack, a threat actor uses Microsoft’s

Encrypting File System Remote Protocol (MS-EFSRPC) to connect to a server,

hijack the authentication session, and manipulate the results such that the

server then believes the attacker has a legitimate right to access it, Sophos

researchers described in an earlier report. LockFile also shares some attributes

of previous ransomware as well as other tactics, such as forgoing the need to

connect to a command-and-control center to communicate, to hide its nefarious

activities, researchers found. “Like WastedLocker and Maze ransomware, LockFile

ransomware uses memory mapped input/output (I/O) to encrypt a file,” Loman wrote

in the report. “This technique allows the ransomware to transparently encrypt

cached documents in memory and causes the operating system to write the

encrypted documents, with minimal disk I/O that detection technologies would

spot.”

How To Prepare for SOC 2 Compliance: SOC 2 Types and Requirements

To be reliable in today’s data-driven world, SOC 2 compliance is essential for

all cloud-based businesses and technology services that collect and store their

clients’ information. This gold standard of information security certifications

helps to ensure your current data privacy levels and security infrastructure are adequate to

prevent data breaches. Data breaches are all too common nowadays among

companies of all sizes across the globe in all sectors. According to

PurpleSec, half of all data breaches will occur in the United States by 2023.

Experiencing such a breach causes customers to completely lose trust in the

targeted company and those who have been through one tend to move their business

elsewhere to protect their personal information in the future. SOC 2 compliance

can help avoid this outcome by improving customer trust in a company with

sound data privacy policies. Companies that adhere to the gold standard-level

principles of SOC 2 compliance can provide this audit as evidence of secure

data privacy practices.

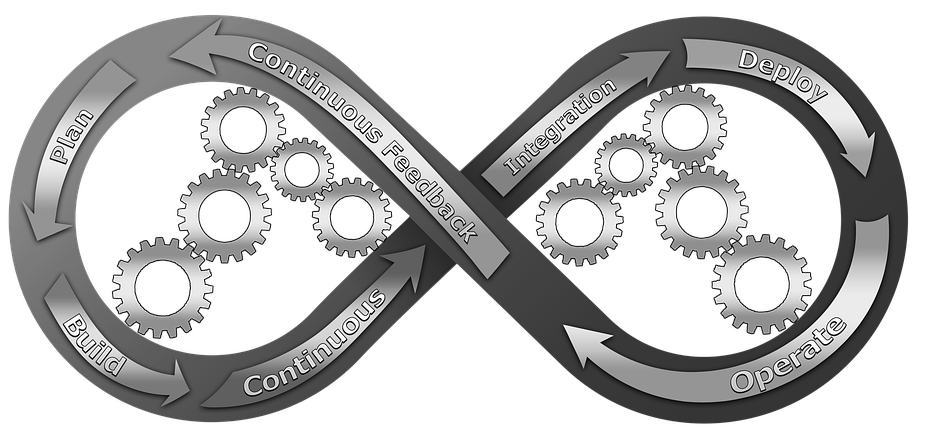

6 Reasons why you can’t have DevOps without Test Automation

Digital transformation is gaining traction every single day. The modern consumer

is more demanding of quality products and services. Adoption of technologies

helps companies stay ahead of the competition. They can achieve higher

efficiency and better decision-making. Further, there is room for innovation

that aims to meet the needs of customers. All these imply integration,

continuous development, innovation, and deployment. All this is achievable with

DevOps and the attendant test automation. But, can one exist without the other?

We believe not; test automation is a critical component of DevOps, and we will

tell you why. ... One of the biggest challenges with software is the need for

constant updates. That is the only way to avoid glitches while improving upon

what exists. But, the process of testing across many operating platforms and

devices is difficult. DevOps processes must execute testing, development, and

deployment in the right way. Improper testing can lead to low-quality products.

Customers have so many options in the competitive business landscape.

One Year Later, a Look Back at Zerologon

Netlogon is a protocol that serves as a channel between domain controllers and

machines joined to the domain, and it handles authenticating users and other

services to the domain. CVE-2020-1472 stems from a flaw in the cryptographic

authentication scheme used by the Netlogon Remote Protocol. An attacker who sent

Netlogon messages in which various fields are filled with zeroes could change

the computer password of the domain controller that is stored in Active

Directory, Tervoort explains in his white paper. This can be used to obtain

domain admin credentials and then restore the original password for the domain

controller, he adds. "This attack has a huge impact: it basically allows any

attacker on the local network (such as a malicious insider or someone who simply

plugged in a device to an on-premises network port) to completely compromise the

Windows domain," Tervoort wrote. "The attack is completely unauthenticated: the

attacker does not need any user credentials." Another reason Zerologon appeals

to attackers is it can be plugged into a variety of attack chains.

Forrester: Why APIs need zero-trust security

API governance needs zero trust to scale. Getting governance right sets the

foundation for balancing business leaders’ needs for a continual stream of new

innovative API and endpoint features with the need for compliance. Forrester’s

report says “API design too easily centers on innovation and business

benefits, overrunning critical considerations for security, privacy, and

compliance such as default settings that make all transactions accessible.”

The Forrester report says policies must ensure the right API-level trust is

enabled for attack protection. That isn’t easy to do with a perimeter-based

security framework. Primary goals need to be setting a security context for

each API type and ensuring security channel zero-trust methods can scale. APIs

need to be managed by least privileged access and microsegmentation in every

phase of the SDLC and continuous integration/continuous delivery (CI/CD)

process. The well-documented SolarWinds attack is a stark reminder of how

source code can be hacked and legitimate program executable files can be

modified undetected and then invoked months after being installed on customer

sites.
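
The least-privilege idea above, together with the report's warning about default settings that make all transactions accessible, can be illustrated with a deny-by-default check. This is only a minimal sketch: the `Caller` type, the `REQUIRED_SCOPE` table, the endpoint paths, and the scope names are all hypothetical and are not taken from the Forrester report or any particular API gateway.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Caller:
    """Hypothetical authenticated caller with the scopes it was granted."""
    name: str
    scopes: frozenset = field(default_factory=frozenset)


# Hypothetical policy table: each (method, path) pair names the one scope it needs.
REQUIRED_SCOPE = {
    ("GET", "/orders"): "orders:read",
    ("POST", "/orders"): "orders:write",
}


def is_allowed(caller: Caller, method: str, path: str) -> bool:
    """Return True only if the endpoint is listed and the caller holds its scope."""
    required = REQUIRED_SCOPE.get((method, path))
    if required is None:
        # Deny by default: an endpoint with no explicit policy is not reachable.
        return False
    return required in caller.scopes


# A read-only reporting job gets exactly one scope and nothing more.
reporting = Caller("reporting-job", frozenset({"orders:read"}))
print(is_allowed(reporting, "GET", "/orders"))
print(is_allowed(reporting, "POST", "/orders"))
print(is_allowed(reporting, "GET", "/admin"))
```

The design choice worth noting is the `None` branch: access is granted only when a policy entry exists, so a newly added endpoint stays closed until someone assigns it a scope.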

The consumerization of the Cybercrime-as-a-Service market

Many trends in the cybercrime market and shadow economy mirror those in the

legitimate world, and this is also the case with how cybercriminals are

profiling and targeting victims. The Colonial Pipeline breach triggered a

serious reaction from the US government, including some stark warnings to

criminal cyber operators, CCaaS vendors and any countries hosting them, that a

ransomware attack may lead to a kinetic response or even inadvertently trigger a war.

Not long after, the criminal gang suspected to be behind the attack resurfaced

under a new name, BlackMatter, and advertised that they are buying access from

brokers with very specific criteria. Seeking companies with revenue of at

least 100 million US dollars per year and 500 to 15,000 hosts, the gang

offered $100,000, but also provided a clear list of targets they wanted to

avoid, including critical infrastructure and hospitals. It’s a net positive if

the criminals actively avoid disrupting critical infrastructure and important

targets such as hospitals.

NGINX Commits to Open Source and Kubernetes Ingress

Regarding NGINX’s open source software moving forward, Whiteley said the

company’s executives have committed to a model where open source will be meant

for use in production and nothing less. Whiteley even said that, if they were

able to go back in time, certain features currently available only in NGINX

Plus would be available in the open source version. “One model is ‘open

source’ equals ‘test/dev,’ ‘commercial’ equals ‘production,’ so the second

you trip over into production, you kind of trip over a right-to-use issue,

where you then have to start licensing the technology ...” said Whiteley.

“What we want to do is focus on, as the application scales — it’s serving more

traffic, it’s generating more revenue, whatever its goal is as an app — that

the investment is done in lockstep with the success and growth of that.” This

first point, NGINX’s stated commitment to open source, serves partly as

background for the last point mentioned above, wherein NGINX says it will

devote additional resources to the Kubernetes community, a move partly driven

by the fact that Alejandro de Brito Fonte, the founder of the ingress-nginx

project, has decided to step aside.

How RPA Is Changing the Way People Work

Employees are struggling under the burden of routine, repetitive work but

notice consumers demanding better services and products. Employees expect

companies to improve the working environment in the same spirit as improving

customer satisfaction. The corporate response, in the form of automation, is

expanding the comfort zone of employees. But, there’s a flip side to the RPA

coin. With the rise of automation, people fear the consequences of RPA

solutions replacing human labor and marginalizing the human touch that was at

the core of services and product delivery. Such a threat could seem an

exaggeration. RPA removes the drudgery of routine work and sets the stage for

workers to play a more decisive role in areas where human touch, care, and

creativity are essential. ... With loads of time and better tools at their

disposal, employees are more caring and sensitive to the need for making a

difference in the lives of customers. More employees are actively unlocking

their reservoir of creativity.

Predicting the future of the cloud and open source

The open source community has also started to dedicate time and effort to

resolving some of the world’s most life-threatening challenges. When the

COVID-19 pandemic hit, the open source community quickly distributed data to

create apps and dashboards that could follow the evolution of the virus. Tech

leaders like Apple and Google came together to build upon this technology to

provide an open API that could facilitate the development of standards and

applications by health organisations around the world, and open hardware

designs for ventilators and other critical medical equipment that was in high

demand. During lockdown last year, the open source community also launched

projects to tackle the climate crisis, an increasingly important issue that

world leaders are under ever-more pressure to address. One of the most notable

developments was the launch of the Linux Foundation Climate Finance

Foundation, which aims to provide more funding for game-changing solutions

through open source applications.

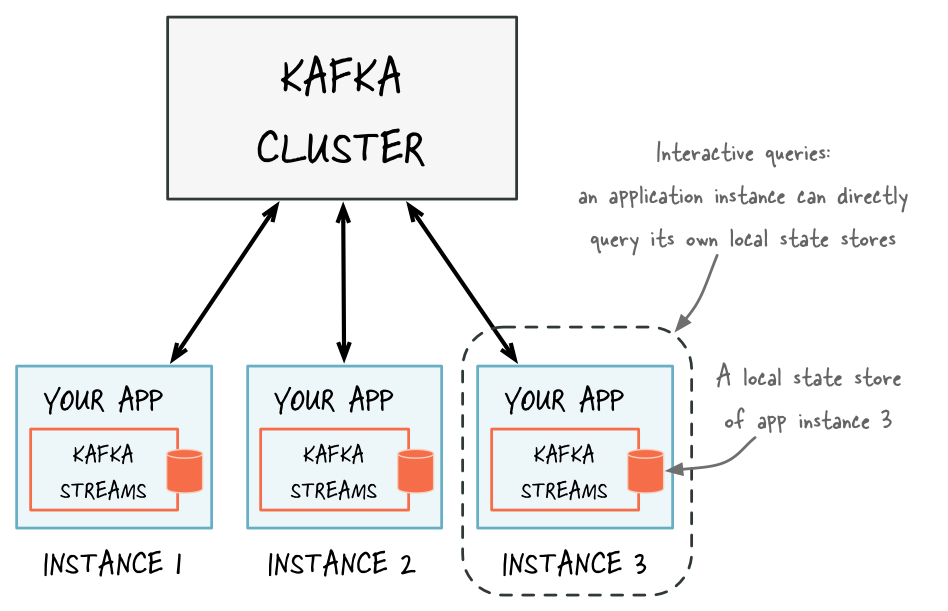

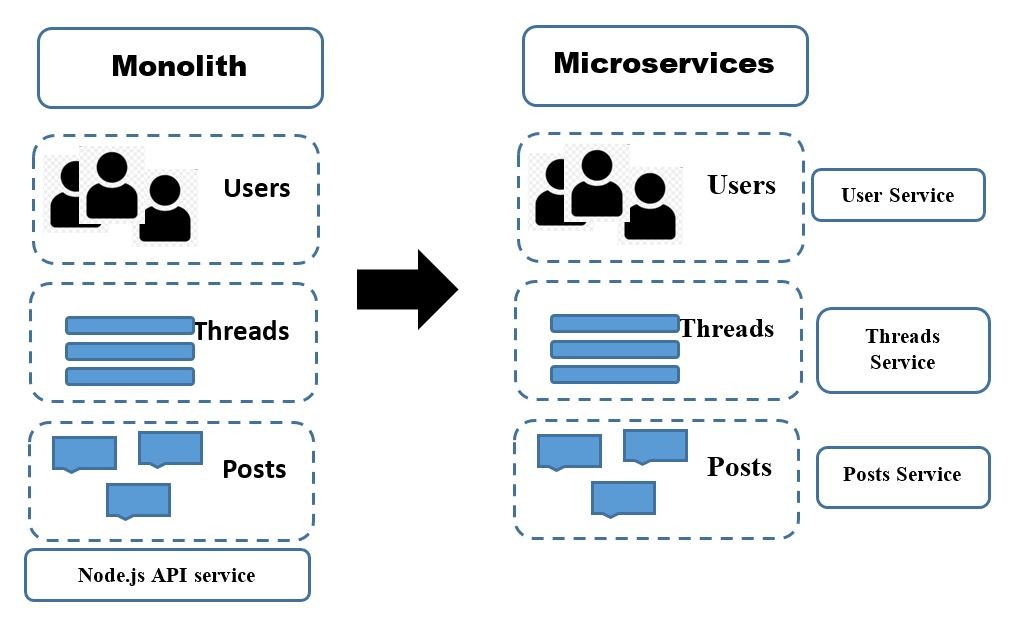

Pitfalls and Patterns in Microservice Dependency Management

Running a product in a microservice architecture provides a series of

benefits. Overall, the possibility of deploying loosely coupled binaries in

different locations allows product owners to choose among cost-effective and

high-availability deployment scenarios, hosting each service in the cloud or

in their own machines. It also allows for independent vertical or horizontal

scaling: increasing the hardware resources for each component, or replicating

the components, which has the benefit of allowing the use of different

independent regions. ... Despite all its advantages, having an architecture

based on microservices may also make it harder to deal with some processes. In

the following sections, I'll present the scenarios I mentioned before

(although I changed some real names involved). I will present each scenario in

detail, including some memorable pains related to managing microservices, such

as aligning traffic and resource growth between frontends and backends. I will

also talk about designing failure domains, and computing product SLOs based on

the combined SLOs of all microservices.
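
As a rough illustration of computing a product SLO from the combined SLOs of its microservices, the sketch below multiplies the availability targets of services that a request must pass through in series. It assumes failures are independent and that every listed service is required for a request to succeed; the service names and targets are hypothetical, and the article's own method may differ.

```python
from math import prod

MINUTES_PER_30_DAYS = 30 * 24 * 60


def serial_availability(slos):
    """Availability of a request path that needs every service to succeed.

    Assumes independent failures, so the availabilities multiply.
    """
    return prod(slos)


def downtime_budget_minutes(availability, window_minutes=MINUTES_PER_30_DAYS):
    """Allowed downtime in the window for a given availability target."""
    return (1.0 - availability) * window_minutes


# Hypothetical services on the critical path and their individual SLO targets.
service_slos = {"frontend": 0.999, "auth": 0.9995, "catalog": 0.999}

composite = serial_availability(service_slos.values())
print(f"composite availability: {composite:.4%}")
print(f"30-day downtime budget: {downtime_budget_minutes(composite):.1f} minutes")
```

Under these assumptions the composite figure is always lower than the weakest individual target, which is why a product SLO cannot simply be copied from any one of its dependencies.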

Quote for the day:

"Different times need different types

of leadership." -- Park Geun-hye
