Quote for the day:

"Winners are not afraid of losing. But losers are. Failure is part of the process of success. People who avoid failure also avoid success." -- Robert T. Kiyosaki

Reshaping Cloud strategy: the rise of sovereign Edge computing for AI and IoT

The article addresses a major shift in enterprise cloud strategy, detailing how businesses are increasingly migrating away from centralized public cloud systems toward hybrid, local, and regional alternatives. This corporate movement is heavily shaped by four critical drivers: cost efficiency, operational performance, legal compliance, and the emerging infrastructure demands of artificial intelligence (AI). To bypass the continuous uptime "cloud tax" and costly data egress fees, enterprises are repatriating predictable, steady-state workloads to owned or co-located hardware. Additionally, by moving data closer to the end-user via regional edge computing facilities, organizations significantly lower data transit distances, reducing costly "lag tax" issues while keeping latency under ten milliseconds. Data sovereignty and compliance also dictate this spending shift, as businesses rely on secure, sovereign private clouds to strictly retain local data control and meet evolving regulatory mandates like GDPR. Finally, while public cloud networks remain necessary for massive AI model training, localized edge infrastructure has become essential for supporting low-latency AI inference and real-time IoT networks. To successfully navigate this multi-environment transition without suffering severe operational disruption, the article advises tech leaders to build interoperable ecosystems featuring unified management platforms, high-performance private networks, and unified visibility portals.
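
As a rough illustration of why transit distance matters for the sub-ten-millisecond target, the sketch below estimates best-case round-trip propagation delay over fiber. It assumes signals travel through fiber at roughly 200,000 km/s (about two thirds of the speed of light in vacuum) and ignores routing, queuing, and processing delays, so real latency will be higher. The function name and the example distances are illustrative and do not come from the article.

```python
# Assumption: signal speed in optical fiber is ~200,000 km/s (about 2/3 of c).
FIBER_SPEED_KM_PER_MS = 200.0  # kilometres travelled per millisecond


def round_trip_ms(distance_km: float) -> float:
    """Best-case round-trip propagation delay for a one-way fiber distance."""
    if distance_km < 0:
        raise ValueError("distance_km must be non-negative")
    return 2 * distance_km / FIBER_SPEED_KM_PER_MS


if __name__ == "__main__":
    # Hypothetical distances: a nearby edge site versus a distant cloud region.
    for label, km in [("regional edge site", 100), ("distant cloud region", 3000)]:
        print(f"{label}: {round_trip_ms(km):.1f} ms round trip")
```

Under these assumptions, propagation alone uses up a ten-millisecond round-trip budget at about 1,000 km of one-way fiber distance, which is one reason regional facilities help.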

Your AI agents need a terminal, not just a vector database

The VentureBeat article introduces Direct Corpus Interaction, a novel retrieval technique that allows AI agents to bypass traditional vector databases and embedding models to interact directly with raw text data. While classic Retrieval-Augmented Generation workflows rely heavily on semantic similarity search, this strategy often creates an early information bottleneck because it fails to capture exact strings, specific version numbers, or rapidly updating workspace data. To address these limitations, Direct Corpus Interaction provides agents with a terminal-like execution environment. By utilizing standard command-line tools such as grep, find, and cat, agents can dynamically execute complex shell pipelines, perform localized file inspection, and implement exact lexical pattern testing. Researchers evaluated two specific versions: the budget-friendly DCI-Agent-Lite and the higher-performance DCI-Agent-CC. Across rigorous multi-hop reasoning benchmarks, this methodology significantly boosted execution accuracy and dramatically decreased overall API costs compared to traditional dense or sparse retrievers. However, because Direct Corpus Interaction intentionally trades broad document recall for high-resolution local precision, it can struggle with initial search breadth across massive document collections. Consequently, experts recommend a hybrid operational pattern where traditional semantic engines handle broad document discovery, while the terminal-based system functions as a subsequent precision verification layer.
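
To make the idea of exact lexical matching over raw text concrete, here is a minimal Python sketch that behaves roughly like a recursive `grep -n` over a folder of text files. It is not the DCI authors' implementation: the function names, the `.txt` filter, and the sample files are all hypothetical, chosen only to show how an exact version string can be located without embeddings.

```python
import re
import tempfile
from pathlib import Path


def grep_corpus(root: Path, pattern: str) -> list[tuple[str, int, str]]:
    """Return (relative path, line number, line) for every line matching pattern."""
    regex = re.compile(pattern)
    hits = []
    # Walk the corpus in a stable order so results are reproducible.
    for path in sorted(root.rglob("*.txt")):
        lines = path.read_text(encoding="utf-8").splitlines()
        for lineno, line in enumerate(lines, start=1):
            if regex.search(line):
                hits.append((path.relative_to(root).as_posix(), lineno, line))
    return hits


def demo() -> list[tuple[str, int, str]]:
    """Build a tiny throwaway corpus and search it for one exact version string."""
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        (root / "notes.txt").write_text(
            "Upgrade to v2.3.1 before release.\nNothing else.\n", encoding="utf-8"
        )
        (root / "changelog.txt").write_text(
            "v2.3.0 fixed login.\nv2.3.1 fixed export.\n", encoding="utf-8"
        )
        # Escaped dots and a word boundary keep v2.3.0 and v2.3.10 from matching.
        return grep_corpus(root, r"v2\.3\.1\b")


if __name__ == "__main__":
    for hit in demo():
        print(hit)
```

In the hybrid pattern described above, a function like this would run only over the candidate documents that a semantic search had already surfaced.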

The VentureBeat article introduces Direct Corpus Interaction, a novel

retrieval technique that allows AI agents to bypass traditional vector

databases and embedding models to interact directly with raw text data. While

classic Retrieval-Augmented Generation workflows rely heavily on semantic

similarity search, this strategy often creates an early information bottleneck

because it fails to capture exact strings, specific version numbers, or

rapidly updating workspace data. To address these limitations, Direct Corpus

Interaction provides agents with a terminal-like execution environment. By

utilizing standard command-line tools such as grep, find, and cat, agents can

dynamically execute complex shell pipelines, perform localized file

inspection, and implement exact lexical pattern testing. Researchers evaluated

two specific versions: the budget-friendly DCI-Agent-Lite and the

higher-performance DCI-Agent-CC. Across rigorous multi-hop reasoning

benchmarks, this methodology significantly boosted execution accuracy and

dramatically decreased overall API costs compared to traditional dense or

sparse retrievers. However, because Direct Corpus Interaction intentionally

trades broad document recall for high-resolution local precision, it can

struggle with initial search breadth across massive document collections.

Consequently, experts recommend a hybrid operational pattern where traditional

semantic engines handle broad document discovery, while the terminal-based

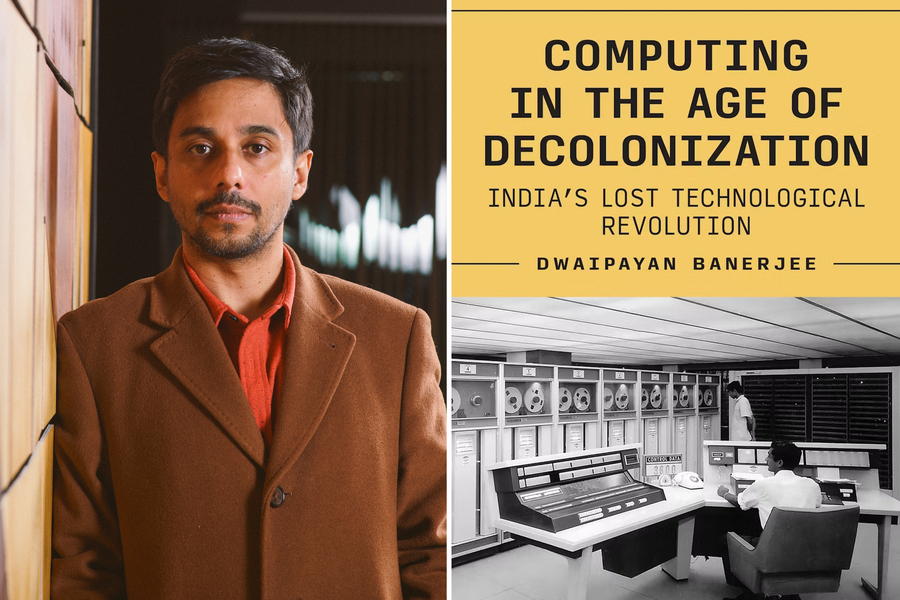

system functions as a subsequent precision verification layer.The Cloud Provider’s Blueprint: Navigating Data Localization and DPDP Compliance in India

This article outlines the architectural blueprint required for Cloud Service Providers to navigate India's stringent data localization laws and Digital Personal Data Protection Act compliance within the financial sector. As regulatory scrutiny intensifies from the Reserve Bank of India and the Data Protection Board, data governance has replaced traditional infrastructure metrics as the primary architectural driver. While the primary privacy act allows general international data transfers, stricter sectoral regulations override this permissiveness, enforcing absolute localized data residency for financial records, transaction histories, and localized disaster recovery setups. To safely host regulated entities like banks and fintech platforms, cloud vendors must operate as trusted data processor partners. This obligation demands executing strict data processing agreements that prohibit secondary usage for artificial intelligence training, enforce automated deletion mechanisms across all storage layers, and safely maintain localized system access logs for a full year. Furthermore, cloud platforms must implement advanced cryptographic isolation through local Hardware Security Modules and Hold Your Own Key frameworks, alongside localized sovereign support models to prevent accidental international engineering access. Ultimately, providing continuous forensic telemetry to meet the central bank’s aggressive six-hour incident notification window helps establish a compliant architecture, transforming regulatory compliance into a competitive advantage.
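
The two time limits mentioned above, the six-hour notification window and the one-year log retention, can be expressed as a small sketch. It assumes the clock starts at the moment an incident is detected and approximates "a full year" as 365 days. Both are simplifications, and the function names are hypothetical, so the exact rules should be taken from the regulations themselves.

```python
from datetime import datetime, timedelta, timezone

# Values taken from the summary; how each period is measured is an assumption.
NOTIFICATION_WINDOW = timedelta(hours=6)
LOG_RETENTION = timedelta(days=365)  # "a full year", approximated as 365 days


def notification_deadline(detected_at: datetime) -> datetime:
    """Latest time to notify, assuming the window starts at detection."""
    return detected_at + NOTIFICATION_WINDOW


def log_is_expired(logged_at: datetime, now: datetime) -> bool:
    """True once an access-log entry is older than the retention period."""
    return now - logged_at > LOG_RETENTION


if __name__ == "__main__":
    # Hypothetical detection time, expressed in UTC to avoid timezone ambiguity.
    detected = datetime(2026, 1, 15, 22, 30, tzinfo=timezone.utc)
    print("Notify by:", notification_deadline(detected).isoformat())
```

Using timezone-aware timestamps matters here, because a six-hour window can easily cross midnight.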

The Architecture Decisions Only CFOs Can Make

Zero Trust Is Not a Product You Buy. But It’s Not a War You Win Alone, Either

In this RTInsights article, Jamie Pugh explains that the primary obstacle to successful Zero Trust implementation is organizational rather than technological, driven by a deep structural conflict between Network Operations (NetOps) and Security Operations (SecOps). Historically, NetOps has prioritized system availability, speed, and uptime, while SecOps has focused on control, verification, and risk reduction. When Zero Trust emerged, commercial vendor marketing misleadingly framed it as an easily purchasable platform. This enabled security teams to mandate complex, uncoordinated frameworks onto existing network architectures without consulting their operational counterparts, resulting in severe cultural friction and project gridlock. Consequently, Gartner predicts that thirty percent of organizations will completely abandon their Zero Trust initiatives by 2028 due to these cultural integration failures. To counter this, the article highlights the philosophy of Zero Trust creator John Kindervag, who maintains that the framework is a strategy rather than a product. Achieving true security maturity requires corporate executives to shift away from isolated mandates and actively enforce unified governance. Both teams must establish a shared program charter to collectively define protect surfaces, map traffic dependencies, and share accountability, successfully harmonizing overall network infrastructure availability with continuous identity verification to withstand modern enterprise cyber threats.

We’re About to Drown in AI-Generated Technical Debt

From edge appliance to enterprise compromise: Multi-stage Linux intrusion via F5 and Confluence

This Microsoft Security report details a multi-stage Linux intrusion that highlights a growing trend of cybercriminals exploiting vulnerable, internet-facing edge appliances to systematically compromise enterprise networks. The threat actor initially gained access by exploiting an end-of-life, Azure-hosted F5 BIG-IP load balancer. Using this perimeter foothold, the attacker established an over-privileged SSH session with sudo rights on an internal Linux host and launched extensive automated reconnaissance using Nmap, gowitness, and custom malicious packages to map internal infrastructure. From there, the attacker moved laterally by exploiting remote code execution vulnerabilities in an unpatched, internally facing Atlassian Confluence server. After successfully compromising Confluence, the actor extracted stored application credentials and weaponized them to execute Kerberos and NTLM relay attacks against Windows infrastructure, specifically targeting Active Directory domain controllers to escalate privileges. Microsoft warns that internally deployed SaaS applications represent a critical attack surface even if they are not exposed to the public internet. To mitigate these identity-centric, cross-domain threats, organizations must treat edge appliances as Tier-0 assets with strict patch governance, harden internal web applications with equal urgency, disable NTLM where possible, and enforce robust security controls like SMB and LDAP signing to completely disrupt sophisticated relay techniques.

Tokenized assets surge puts always-on cross-border payment rails in demand

According to the TechJournal article, the surging market for tokenized real-world assets has reached a capitalization of $36 to $40 billion and is projected by McKinsey to reach $2 trillion by 2033. This growth is forcing major payment industry giants to develop always-on, cross-border payment infrastructure. The demand for continuous transaction settlement stems from remittances, corporate treasury operations, and blockchain-based financial assets. Experts from Mastercard, Visa, JPMorgan’s Kinexys, Aave Labs, and STBL discussed these structural shifts at the Digital Assets Forum 2026. While technology manages transaction speed, governance remains the central obstacle to scaling and achieving true interoperability due to competing private interests and a lack of shared rulebooks. In response, infrastructure companies like STBL are creating innovative models that separate a stablecoin's principal from its yield component. Simultaneously, traditional networks are executing distinct strategies: Visa is integrating stablecoins directly into its massive merchant network and offering round-the-clock USD Coin settlement, while Kinexys provides blockchain deposit accounts that mimic traditional banking setups. Regulatory milestones, like the GENIUS Act in the United States, are further advancing legal clarity for global institutions as they incrementally assemble the necessary infrastructure solutions.

They Built The Building But Not The Mirror, Cultural Blind Spots That Are Breaking Your Organization

The Medium article "They Built The Building But Not The Mirror" by M. examines how widespread cultural blind spots within corporate leadership inadvertently break organizations despite polished public declarations regarding inclusivity and psychological safety. Often, predominantly homogeneous leadership teams attempt to solve complex personnel issues by conflating shallow corporate representation with true cultural awareness, ultimately resulting in organizational assimilation rebranded as "culture fit." Marginalized employees, including Black, brown, immigrant, and queer staff, are frequently forced to downplay their authentic identities and lived perspectives, leading to forced code-switching, emotional exhaustion, and an ongoing quiet brain drain. To bridge this systemic gap, the author argues that leaders must treat cultural awareness as an operational skill rather than a superficial corporate slogan. This necessary shift requires transitioning from defending individual intent to analyzing structural flaws, and moving from performative representation to actual power redistribution. Practically, organizations can initiate immediate behavioral rewiring by implementing a tactical "culture gemba" to actively listen to frontline experiences without defensiveness. Additionally, intentionally restructuring repetitive meeting dynamics can successfully dismantle default assumptions and elevate historically silenced voices. Ultimately, prioritizing deep cultural awareness creates equitable professional environments where diverse individuals do not merely endure a workplace but genuinely breathe and belong.

Quantum ‘Jamming’ Could Help Unlock the Mysteries of Causality

The WIRED article explores the mind-bending concept of quantum jamming, a theoretical phenomenon rooted in a hypothetical super-quantum mechanics that could help physicists deeply refine their understanding of cause and effect. In standard quantum mechanics, the well-established principle of the monogamy of entanglement dictates that a subatomic particle can only be fully correlated with a single other particle at any given time. This fundamental rule underpins the security of quantum cryptography. However, theoretical physicists have proposed that a third-party adversary could subtly alter these delicate nonlocal correlations without leaving any detectable trace, causing the monogamy of entanglement to completely break down. Crucially, quantum jamming must still strictly respect the universal no-signaling principle, meaning it cannot be used to transmit information faster than light or send intentional signals back in time. Instead, it exclusively manipulates how measurements between distant particles relate. While some scientists view jamming as a profound cryptographic vulnerability, others treat it as an invaluable diagnostic tool to map out the boundaries of spacetime causality. Researchers are actively using this paradigm to classify complex causal relationships, showing that jamming might even permit limited, paradox-free causal loops, ultimately testing whether current quantum laws are absolute or merely approximations of reality.

