Quote for the day:

"People respect leaders who share power and despise those who hoard it." -- Gordon Tredgold

theCUBE Research 2026 predictions: The year of enterprise ROI

Fourteen years into the modern AI era, our research indicates AI is maturing rapidly. The data suggests we are entering the enterprise productivity phase, where we move beyond the novelty of chatbots built on retrieval-augmented generation (RAG) and agentic experimentation. In our view, 2026 will be remembered as the year that kicked off decades of enterprise AI value creation. ... Bob Laliberte agreed the prediction is plausible and argued OpenAI is clearly pushing into the enterprise developer segment. He said the consumerization pattern is repeating, with consumer adoption often driving faster enterprise adoption, and he viewed OpenAI’s Super Bowl presence, with Codex ads and meaningful spend behind them, as a flag in the ground. He said he is hearing from enterprises using Codex in meaningful ways, including cases where as much as three quarters of programming is done with Codex, and discussions of a first product developed 100% with Codex. He emphasized that driving broader adoption requires leaning on early adopters, surfacing use cases, and showing productivity gains so they can be replicated across environments. ... Paul Nashawaty said application development is bifurcating. Lines of business and citizen developers are taking on more responsibility for work that historically sat with professional developers. He said professional developers don’t go away; their work shifts toward “true professional development,” while line-of-business developers focus on immediate outcomes.

Snowflake CEO: Software risks becoming a “dumb data pipe” for AI

Ramaswamy argues that his company lives with the fear that organizations will stop using AI agents built by software vendors. These specialized agents must offer clear added value, for example by being more accurate, operating more securely, and being easier to use. For experienced users of existing platforms, this is already the case. Solutions such as NetSuite or Salesforce offer AI functionality as an extension of familiar systems, so adopting these features almost never requires a migration. Ramaswamy believes customers have the final say on this: if they want to consult a central AI and ignore traditional enterprise apps, they should be given that option. ... However, the tug-of-war over the center of AI is in full swing. It is not without reason that vendors claim their solution should be the central AI system, for example because it holds enormous amounts of data or because it is the most critical application for certain departments. So far, AI trends among these vendors have revolved around AI chatbots, easy-to-set-up or ready-made agentic workflows, and automatic document generation. During several IT events over the past year, attendees toyed with the idea that old interfaces may disappear because every employee will be talking to the data via AI.

Will LLMs Become Obsolete?

“We are at a unique time in history,” write Ashu Garg and Jaya Gupta at Foundation Capital, citing multimodal systems, multiagent systems, and more. “Every layer in the AI stack is improving exponentially, with no signs of a slowdown in sight. As a result, many founders feel that they are building on quicksand. On the flip side, this flywheel also presents a generational opportunity. Founders who focus on large and enduring problems have the opportunity to craft solutions so revolutionary that they border on magic.” ... “When we think about the future of how we can use agentic systems of AI to help scientific discovery,” Matias said, “what I envision is this: I think about the fact that every researcher, even grad students or postdocs, could have a virtual lab at their disposal ...” ... In closing, Matias described what makes him enthusiastic about the future. “I'm really excited about the opportunity to actually take problems that make a difference, that if we solve them, we can actually have new scientific discovery or have societal impact,” he said. “The ability to then do the research, and apply it back to solve those problems, what I call the ‘magic cycle’ of research, is accelerating with AI tools. We can actually accelerate the scientific side itself, and then we can accelerate the deployment of that, and what would take years before can now take months, and the ability to actually open it up for many more people, I think, is amazing.”

Deepfake business risks are growing – here's what leaders need to know

The risk of deepfake attacks appears to be growing as the technology becomes more accessible. The threat from deepfakes has escalated from a “niche concern” to a “mainstream cybersecurity priority” at “remarkable speed”, says Cooper. “The barrier to entry has lowered dramatically thanks to open source software and automated creation tools. Even low-skilled threat actors can launch highly convincing attacks.” The target pool is also expanding, says Cooper. “As larger corporations invest in advanced mitigation strategies, threat actors are turning their attention to small and medium-sized businesses, which often lack the resources and dedicated cybersecurity teams to combat these threats effectively.” The technology itself is also improving. Deepfakes have already improved “a staggering amount”, even in the past six months, says McClain. “The tech is internalising human mannerisms all the time. It is already widely accessible at a consumer level, even used as a form of entertainment via face swap apps.” ... Meanwhile, technology can also help mitigate deepfake attack risks. Cooper recommends deepfake detection tools that use AI to analyse facial movements, voice patterns and metadata in emails, calls and video conferences. “While not foolproof, these tools can flag suspicious content for human review.” With these risks in mind, it also makes sense to require multi-factor authentication for sensitive requests.
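For sensitive requests such as a payment or bank-detail change asked for on a call, one common second factor is a time-based one-time password (TOTP, RFC 6238) confirmed through a separate channel. The sketch below implements TOTP with only the Python standard library to show how such a check works. The function names and the one-interval drift window are illustrative choices, not taken from the article, and a production system should rely on a vetted authentication service or library rather than hand-rolled code.

```python
import hashlib
import hmac
import struct
import time
from typing import Optional


def hotp(secret: bytes, counter: int, digits: int = 6) -> str:
    """HMAC-based one-time password (RFC 4226) with HMAC-SHA1."""
    msg = struct.pack(">Q", counter)  # 8-byte big-endian counter
    digest = hmac.new(secret, msg, hashlib.sha1).digest()
    offset = digest[-1] & 0x0F  # dynamic truncation: low 4 bits pick a 4-byte window
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10**digits).zfill(digits)


def totp(secret: bytes, at: float, step: int = 30, digits: int = 6) -> str:
    """Time-based OTP (RFC 6238): HOTP over the number of elapsed `step`-second intervals."""
    return hotp(secret, int(at // step), digits)


def verify_sensitive_request(secret: bytes, submitted: str, now: Optional[float] = None,
                             window: int = 1, step: int = 30, digits: int = 6) -> bool:
    """Approve only if the code matches the current interval or +/- `window` intervals,
    tolerating small clock drift between the approver's device and the server."""
    now = time.time() if now is None else now
    for drift in range(-window, window + 1):
        expected = totp(secret, now + drift * step, step, digits)
        if hmac.compare_digest(expected, submitted):  # constant-time comparison
            return True
    return False
```

For example, with the RFC 6238 test secret `b"12345678901234567890"`, `totp(secret, 59, digits=8)` returns `"94287082"`, matching the specification's SHA-1 test vector. A real deployment would also refuse to accept the same code twice within its validity window.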

The Big Shift: From “More Qubits” to Better Qubits

As quantum systems grew, it became clear that more qubits do not always mean more computing power. Most physical qubits are too noisy, unstable, and short-lived to run useful algorithms. Errors pile up faster than useful results, and after a while the output stops making sense. Adding more fragile qubits often makes things worse, not better. This realization has shifted thinking across the field. Instead of asking how many qubits fit on a chip, researchers and engineers now ask a tougher question: how many of those qubits can actually be trusted? ... For businesses watching from the outside, this change matters. Claims are easier to judge when vendors talk about error rates, runtimes, and reliability instead of making vague promises. The shift also helps set realistic expectations. Logical qubits, which use error correction to encode one more reliable qubit across many physical qubits, show that early useful systems will be small but stable, solving specific problems well instead of trying to do everything. This new way of thinking also changes how we look at risk. The main risk is not that quantum computing will fail completely; it is that organizations will misunderstand early progress and invest either too much because of hype or too little because of outdated assumptions. Understanding the central role of error correction helps clear up this confusion. One of the clearest signs of maturity is how failure is handled. In early science, failure can be unclear.
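The point that errors pile up, and that more qubits only help once they are reliable enough, can be made concrete with a toy calculation. This is a minimal sketch, not a model of any real device: it assumes independent errors at a fixed rate `p`, and it uses a classical bit-flip repetition code with majority-vote decoding as a stand-in for real quantum error-correcting codes, which must also handle phase errors and faulty measurements. The function names are hypothetical.

```python
from math import comb


def circuit_success_probability(p_error: float, operations: int) -> float:
    """Chance that none of `operations` independent noisy steps fails (no error correction)."""
    return (1.0 - p_error) ** operations


def repetition_logical_error(p: float, d: int) -> float:
    """Logical error rate of a distance-d bit-flip repetition code decoded by majority vote.

    The encoded bit is wrong only if more than half of the d physical copies flip.
    Toy model: independent bit flips only, no phase errors, perfect measurement.
    """
    if d % 2 == 0:
        raise ValueError("use an odd distance so majority vote is well defined")
    return sum(comb(d, k) * p**k * (1 - p) ** (d - k) for k in range(d // 2 + 1, d + 1))


if __name__ == "__main__":
    # Errors compound: at a 0.1% error rate, 1,000 operations succeed only ~37% of the time.
    print(round(circuit_success_probability(0.001, 1000), 3))
    # Below threshold (p = 0.01) more copies help; above it (p = 0.6) they hurt.
    for p in (0.01, 0.6):
        rates = [repetition_logical_error(p, d) for d in (1, 3, 5)]
        print(p, [f"{r:.2e}" for r in rates])
```

With `p = 0.01`, each step up in code distance cuts the logical error rate (roughly 1e-2, 3e-4, then 1e-5 for d = 1, 3, 5), while at `p = 0.6` adding copies makes the encoded bit less reliable. That threshold behaviour is why the question shifts from how many qubits exist to how many can be trusted. For this toy code the threshold is 50%; practical quantum codes have far lower thresholds.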

Reimagining digital value creation at Inventia Healthcare

“The business strategy and IT strategy cannot be two different strategies altogether,” he explains. “Here at Inventia, IT strategy is absolutely coupled with the core mission of value-added oral solid formulations. The focus is not on deploying systems, it is on creating measurable business value.” Historically, the pharmaceutical industry has been perceived as a laggard in technology adoption, largely due to stringent regulatory requirements. However, this narrative has shifted significantly over the last five to six years. “Regulators and organisations realised that without digitalisation, it is impossible to reach the levels of efficiency and agility that other industries have achieved,” notes Nandavadekar. “Compliance is no longer a barrier, it is an enabler when implemented correctly.” ... “Digitalisation mandates streamlined and harmonised operations. Once all processes are digital, we can correlate data across functions and even correlate how different operations impact each other,” points out Nandavadekar. ... With expanding digital footprints across cloud, IoT, and global operations, cybersecurity has become a mission-critical priority for Inventia. Nandavadekar describes cybersecurity as an “iceberg,” where visible threats represent only a fraction of the risk landscape. “In the pharmaceutical world, cybersecurity is not just about hackers, it is often a national-level activity. India is emerging as a global pharma hub, and that makes us a strategic target.”

Scaling Agentic AI: When AI Takes Action, the Real Challenge Begins

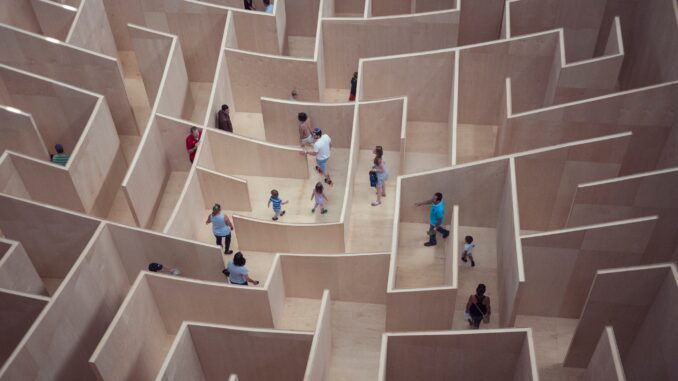

Organizations often underestimate tool risk. The model is only one part of the decision chain; the real exposure comes from the tools and APIs the agent can call. If those are loosely governed, the agent becomes privileged automation that moves faster than human oversight can keep up with. “Agentic AI does not just stress models. It stress-tests the enterprise control plane.” ... Agentic AI requires reliable data, secure access, and strong observability. If data quality is inconsistent and telemetry is incomplete, autonomy turns into uncertainty.
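One way to keep an agent's tools and APIs from becoming loosely governed privileged automation is to route every tool call through a policy check before it runs. The sketch below is illustrative only: `ToolGate`, `ToolPolicy`, the two risk classes, the per-run call limit, and the tool names are all hypothetical, and the `approver` callback stands in for whatever human-approval workflow an organization actually uses.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Callable, Dict, List


class Risk(Enum):
    LOW = "low"    # e.g. read-only lookups
    HIGH = "high"  # e.g. actions with side effects such as payments or deletions


@dataclass
class ToolPolicy:
    risk: Risk
    max_calls_per_run: int  # cap so a looping agent cannot hammer an API


@dataclass
class ToolGate:
    """Hypothetical policy gate that every agent tool call must pass before it executes."""
    policies: Dict[str, ToolPolicy]        # explicit allowlist: unknown tools are denied
    approver: Callable[[str, dict], bool]  # human-in-the-loop hook for high-risk calls
    call_counts: Dict[str, int] = field(default_factory=dict)
    decisions: List[str] = field(default_factory=list)  # audit trail of every decision

    def _decide(self, tool: str, allowed: bool, reason: str) -> bool:
        self.decisions.append(f"{tool}: {'allow' if allowed else 'deny'} ({reason})")
        return allowed

    def authorize(self, tool: str, args: dict) -> bool:
        policy = self.policies.get(tool)
        if policy is None:
            return self._decide(tool, False, "not on allowlist")
        used = self.call_counts.get(tool, 0)
        if used >= policy.max_calls_per_run:
            return self._decide(tool, False, "call limit reached")
        if policy.risk is Risk.HIGH and not self.approver(tool, args):
            return self._decide(tool, False, "human approval refused")
        self.call_counts[tool] = used + 1
        return self._decide(tool, True, f"risk={policy.risk.value}")


# Example wiring: the approver lambda stands in for a real approval workflow.
gate = ToolGate(
    policies={
        "lookup_order": ToolPolicy(Risk.LOW, max_calls_per_run=10),
        "issue_refund": ToolPolicy(Risk.HIGH, max_calls_per_run=1),
    },
    approver=lambda tool, args: args.get("amount", 0) <= 100,
)
```

Here `gate.authorize("issue_refund", {"amount": 500})` is denied because the approver refuses it, and any tool missing from `policies`, such as a hypothetical `delete_customer`, is denied outright. Every decision lands in `gate.decisions`, which gives observability tooling something concrete to collect.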

Leaders need a clear method to select use cases based on business value, feasibility, risk class, and time-to-impact. The operating model should enforce stage gates and stop low-value projects early. Governance should be built into delivery through reusable patterns, reference architectures, and pre-approved controls. When guardrails are standardized, teams move faster because they no longer have to debate the same risk questions repeatedly. ... Observability must cover the full chain, not just model performance. Teams should be able to trace prompts, context, tool calls, policy decisions, approvals, and downstream outcomes. ... Agentic AI introduces failure modes that can appear plausible on the surface. Without traceability and real-time signals, organizations are forced to guess, and guessing is not an operating strategy.
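A minimal sketch of what tracing the full chain could look like, assuming a simple in-process recorder rather than any particular observability product. `AgentTrace`, `TraceEvent`, and the stage names are hypothetical; the stages simply mirror the chain named above (prompts, context, tool calls, policy decisions, approvals, downstream outcomes). A real deployment would emit these events to a tracing or logging backend.

```python
import json
import uuid
from dataclasses import asdict, dataclass, field
from typing import Any, Dict, List

# Links in the decision chain that teams should be able to trace.
STAGES = ("prompt", "context", "tool_call", "policy_decision", "approval", "outcome")


@dataclass
class TraceEvent:
    trace_id: str
    seq: int                 # order of the event within one agent run
    stage: str
    detail: Dict[str, Any]


@dataclass
class AgentTrace:
    """Hypothetical recorder that ties every step of one agent run to a shared trace ID."""
    trace_id: str = field(default_factory=lambda: uuid.uuid4().hex)
    events: List[TraceEvent] = field(default_factory=list)

    def record(self, stage: str, **detail: Any) -> None:
        if stage not in STAGES:
            raise ValueError(f"unknown stage: {stage}")
        self.events.append(TraceEvent(self.trace_id, len(self.events), stage, detail))

    def missing_stages(self) -> List[str]:
        """Stages never recorded in this run. Not every run needs all of them (low-risk
        calls may skip approval), but a gap shows which links cannot be reconstructed."""
        seen = {event.stage for event in self.events}
        return [stage for stage in STAGES if stage not in seen]

    def to_jsonl(self) -> str:
        """One JSON object per line, a common shape for shipping events to a log store."""
        return "\n".join(json.dumps(asdict(event)) for event in self.events)


trace = AgentTrace()
trace.record("prompt", text="Refund order A1")
trace.record("tool_call", tool="lookup_order", args={"id": "A1"})
trace.record("policy_decision", tool="issue_refund", allowed=True)
trace.record("outcome", status="refund issued")
print(trace.missing_stages())  # ['context', 'approval']
```

In this example the run has no recorded context or approval, so if the refund turns out to be wrong there is no way to tell what the agent saw or who signed off. Flagging such gaps is one way to replace guessing with evidence.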

Security at AI speed: The new CISO reality

The biggest shift isn’t tooling (we’ve always had to choose our platforms carefully); it’s accountability. When an AI agent acts at scale, the CISO remains accountable for the outcome. That governance and operating model simply didn’t exist a decade ago. Equally, CISOs now carry accountability for inaction. Failing to adopt and govern AI-driven capabilities doesn’t preserve safety; it increases exposure by leaving the organization structurally behind. To meet this challenge, CISOs will need a fresh mindset and the skills to go with it. ... While quantification has value, seeking precision based on historical data before ensuring strong controls, ownership, and response capability creates a false sense of confidence. It anchors discussion in technical debt and past trends, rather than aligning leadership around emerging risks and sponsoring a bolder strategic leap through innovation. That forward-looking lens drives better strategy, faster decisions, and real organizational resilience. ... When a large incumbent vendor experiences an outage, breach, model drift, or regulatory intervention, the business doesn’t degrade gracefully; it fails hard. The illusion of safety disappears quickly when you realise you don’t own the kill switches, can’t constrain behaviour in real time, and don’t control the recovery path. Vendor scale does not equal operational resilience.

Why Borderless AI Is Coming to an End

Most countries are still wrestling with questions related to "sovereign AI" (the technical ambition to develop domestic compute, models and data capabilities) and "AI sovereignty" (the political and legal right to govern how AI operates within national boundaries), said Gaurav Gupta, vice president analyst at Gartner. Most national strategies today combine both. "There is no AI journey without thinking geopolitics in today's world," said Akhilesh Tuteja, partner, advisory services and former head of cybersecurity at KPMG. ... Smaller nations, Gupta said, are increasing their investment in domestic AI stacks, including computing power, data centers, infrastructure and models aligned with local laws, culture and region, as they look for alternatives to the closed U.S. model. "Organizations outside the U.S. and China are investing more in sovereign cloud IaaS to gain digital and technological independence," said Rene Buest, senior director analyst at Gartner. "The goal is to keep wealth generation within their own borders to strengthen the local economy." ... The practical barriers to AI sovereignty start with infrastructure. The level of investment is beyond the reach of most countries, creating a fundamental asymmetry in the global AI landscape. "One gigawatt new data centers cost north of $50 billion," Gupta said. "The biggest constraint today is availability of power … You are now competing for electricity with residential and other industrial use cases."
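Gupta's figures can be turned into a rough back-of-the-envelope estimate. This assumes exactly $50 billion for exactly 1 GW of capacity (the quote says "north of", so the cost figure is a lower bound) and continuous full-power operation for a year, which real facilities will not always sustain:

```latex
\text{capex per MW} \approx \frac{\$50 \times 10^{9}}{1000\ \text{MW}} = \$50 \times 10^{6}\ \text{per MW}

E_{\text{year}} = 1\ \text{GW} \times 8760\ \text{h} = 8760\ \text{GWh} \approx 8.76\ \text{TWh}
```

At roughly $50 million of capital per megawatt and on the order of 8.76 TWh of electricity a year at full load, a single such site competes for power at the scale of other large industrial and residential consumers.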
