Quote for the day:

"Always remember, your focus determines your reality." -- George Lucas

Leading In The Age Of AI: Five Human Competencies Every Modern Leader Needs

Leaders are surrounded by data, metrics and algorithmic recommendations, but decision quality depends on interpretation rather than volume. Insight is the ability to turn information and diverse perspectives into clarity. It requires curiosity, patience and the humility to question assumptions. Leaders who demonstrate this capability articulate complex issues clearly, invite dissent before deciding and translate analysis into meaningful direction. ... Integration is the capability to design environments where human creativity and machine intelligence reinforce one another. Leaders strong in this capability align technology with purpose and culture, encourage experimentation and ensure that tools enhance human capability rather than replacing reflection and judgment. The aim is capability at scale, not efficiency at any cost. ... Inspiration is the ability to energize people by helping them see what is possible and how their work contributes to a larger purpose. It is grounded optimism rather than polished enthusiasm. Leaders who inspire use story, clarity and authenticity to create shared commitment rather than simple compliance. When purpose becomes personal, contribution follows. ... It is not only about speed or quarterly numbers. It is about sustainable value for people, organizations and society. Leaders strong in this capability balance performance with well-being and growth, adapt strategy based on real feedback and design systems that strengthen capacity over time instead of exhausting it.

Big shifts that will reshape work in 2026

We’re moving into a new chapter where real skills and what people can actually do matter more than degrees or job titles. In 2026, this shift will become the standard across organisations in APAC. Instead of just looking for certificates, employers are now keen to find people who can show adaptability, pick up new things quickly, and prove their expertise through action. ... as helpful as AI can be, there’s a catch. Technology can make things faster and smarter, but it’s not a substitute for the human touch: creativity, empathy, and making the right call when it matters. The real test for leaders will be making sure AI helps people do their best work rather than stripping away what makes us human. That means setting clear rules for how AI is used, helping employees build digital skills, and keeping trust at the centre of it all. Organisations that succeed will strike a balance: leveraging AI’s analytical power to unlock efficiencies, while empowering people to focus on the relational, imaginative, and moral dimensions of work. ... Employee wellbeing is set to become the foundation of the future of work. No longer a peripheral benefit or a box to check, wellbeing will be woven into organisational culture, shaping every aspect of the employee experience. ... Purpose is emerging as the new currency of talent attraction and retention, particularly for Gen Z and millennials, who are steadfast in their desire to work for organisations that reflect their personal values.

How AI could close the education inequality gap - or widen it

On one side are those who say that AI tools will never be able to replace the teaching offered by humans. On the other side are those who insist that access to AI-powered tutoring is better than no access to tutoring at all. The one thing that can be agreed on across the board is that students can benefit from tutoring, and fair access remains a major challenge -- one that AI may be able to smooth over. "The best human tutors will remain ahead of AI for a long time yet to come, but do most people have access to tutors outside of class?" said Mollick. To evaluate educational tools, Mollick uses what he calls the "BAH" test, which measures whether a tool is better than the best available human a student can realistically access. ... AI tools that function like a tutor could also help students who don't have the resources to access a human tutor. A recent Brookings Institution report found that the largest barrier to scaling effective tutoring programs is cost, estimating a requirement of $1,000 to $3,000 per student annually for high-impact models. Because private tutoring often requires financial investment, it can drive disparities in educational achievement. Aly Murray experienced those disparities firsthand. Raised by a single mother who immigrated to the US from Cuba, Murray grew up as a low-income student and later recognized how transformative access to a human tutor could have been.

Shift-Left Strategies for Cloud-Native and Serverless Architectures

The whole architectural framework of shift-left security depends on moving critical security practices earlier in the development lifecycle. Security should not be an afterthought. Within this context, teams are empowered to identify and eliminate risks at design time, at build time, and during CI/CD, not after. These modern workloads are highly dynamic and interconnected, and a single mishap can cascade across the entire environment. ... Serverless functions can introduce issues if they run with excessive privileges. This can be addressed by embedding permission checks early in the development lifecycle. A baseline of minimum required identity and access management (IAM) privileges should be enforced to keep development tight. Wildcards or broad permissions should be avoided in this context. It also makes sense to use runtime permission boundary generation; otherwise, functions can be compromised without appropriate safeguards. ... In modern cloud environments, it is crucial that observability is treated as a major priority. Shifting left within the context of observability means logs, metrics, traces, and alerts are integrated directly into the application from day one. AWS CloudWatch or Datadog metrics can be integrated into the application code so that developers can keep an eye on the critical behaviors of the application.

Agentic AI and Autonomous Agents: The Dawn of Smarter Machines

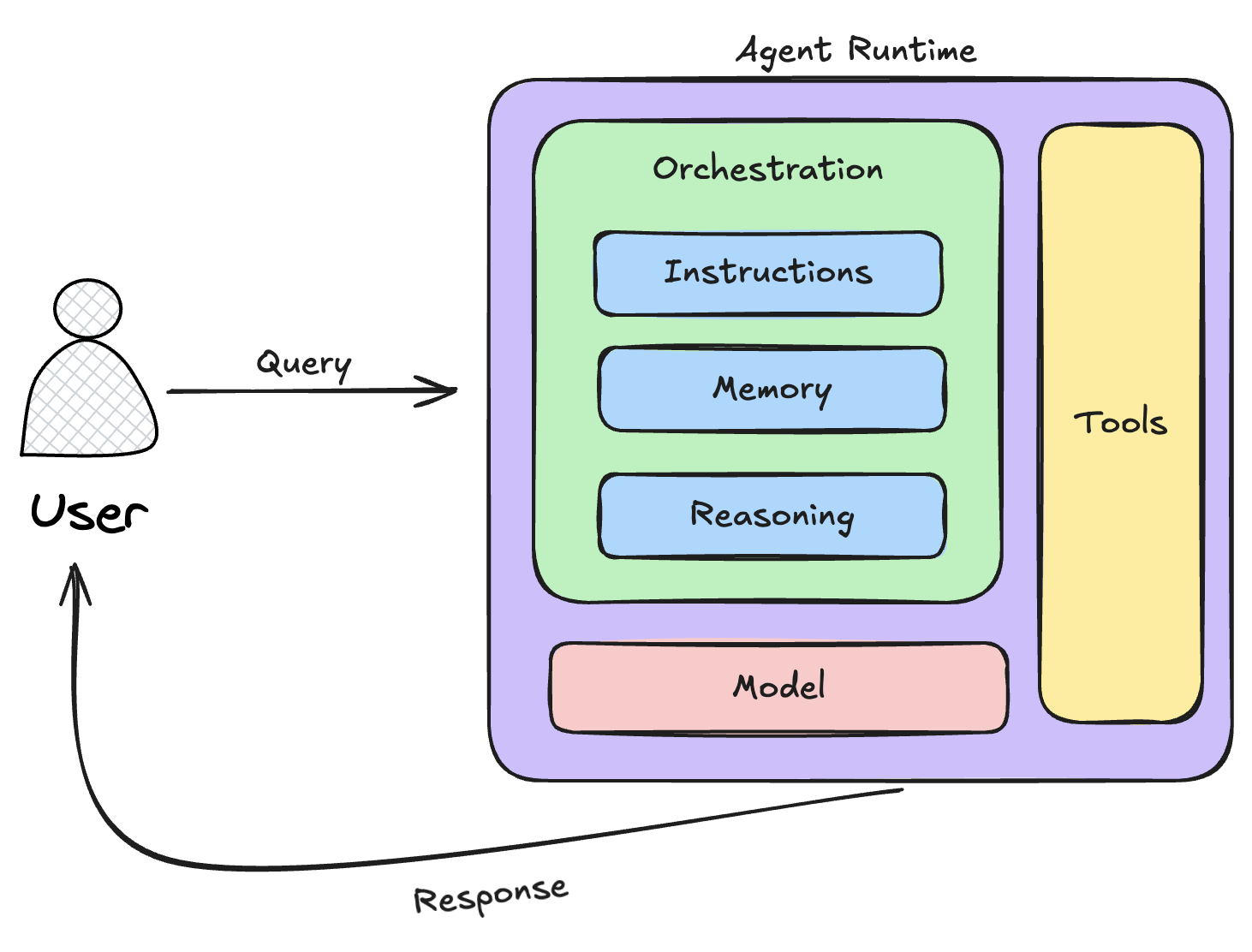

At their core, agentic AI and autonomous agents rely on a few powerhouse components: planning, reasoning, acting, and tool integration. Planning is the blueprint phase: the AI breaks a goal into subtasks, like mapping out a road trip with stops for gas and sights. Reasoning kicks in next, where it evaluates options using logic, past data, or even ethical guidelines (more on that later). Acting is the execution: interfacing with the real world via APIs, databases, or even physical robots. And tool integration? ... Diving deeper, it’s worth comparing agentic AI to other paradigms to see why it’s a game-changer. Standalone LLMs, like basic GPT models, are fantastic for generating text but falter on execution; they can’t “do” things without external help. Agentic systems bridge that by embedding action loops. Multi-agent setups take it further: imagine a team of specialized agents collaborating, one for research, another for analysis, like a virtual task force. ... Looking ahead, the future of agentic AI feels electric yet cautious. By 2030, I predict multi-agent collaborations becoming standard, with advancements in human-in-the-loop designs to mitigate ethical pitfalls, for example by ensuring transparency in decision-making or preventing job displacement. OpenAI’s push for standardized frameworks addresses this, but we must grapple with questions: Who owns the data agents learn from? How do we audit autonomous actions?
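
The plan, reason, act and tool-integration loop described above can be sketched in a few lines. This is only an illustrative toy, not how any particular agent framework works: the `Agent` class, the `search` and `summarize` tools, and the fixed two-step plan are all hypothetical stand-ins (a real system would typically ask an LLM to produce the plan and would call real APIs). The `log` list hints at one possible answer to the auditing question: record every action the agent takes.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple


# Hypothetical tools: plain functions standing in for real APIs or databases.
def search(query: str) -> str:
    return f"results for {query!r}"


def summarize(text: str) -> str:
    return f"summary of {text}"


TOOLS: Dict[str, Callable[[str], str]] = {"search": search, "summarize": summarize}


@dataclass
class Agent:
    tools: Dict[str, Callable[[str], str]]
    log: List[str] = field(default_factory=list)  # audit trail of every action

    def plan(self, goal: str) -> List[str]:
        # Planning: break the goal into ordered subtasks.
        # The plan is hard-coded here; an LLM would normally generate it.
        return [f"search:{goal}", "summarize:{previous}"]

    def reason(self, step: str, previous: str) -> Tuple[str, str]:
        # Reasoning: decide which tool to call and with what input.
        tool_name, arg = step.split(":", 1)
        return tool_name, arg.replace("{previous}", previous)

    def act(self, tool_name: str, arg: str) -> str:
        # Acting: call the chosen tool, refusing anything not registered.
        if tool_name not in self.tools:
            raise KeyError(f"unknown tool: {tool_name}")
        result = self.tools[tool_name](arg)
        self.log.append(f"{tool_name}({arg!r}) -> {result!r}")
        return result

    def run(self, goal: str) -> str:
        previous = ""
        for step in self.plan(goal):
            tool_name, arg = self.reason(step, previous)
            previous = self.act(tool_name, arg)
        return previous


if __name__ == "__main__":
    agent = Agent(TOOLS)
    print(agent.run("agentic AI"))
    print(agent.log)
```

A standalone LLM corresponds to calling `summarize` alone; the agentic part is the loop in `run`, which chains tool calls toward a goal.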

Operationalizing Data Strategy with OKRs: From Vision to Execution

For any business, some of the most critical data-driven initiatives and priorities include risk mitigation, revenue growth, and customer experience. To make these business functions more effective and accurate, it is important to find ways to connect technical output and performance data with tangible business outcomes. You must also proactively assess the shortcomings and errors in your data strategy to identify and correct any misaligned priorities. ... OKRs can empower data teams to leverage analytics and data sources to deliver highly actionable, timely insights. Set measurable and time-bound objectives to ensure focus and drive tangible progress toward your goals by leveraging an OKR platform, creating visually appealing dashboards, and assigning accountability to employees. ... If your high-level vision is “to become a data-driven organization,” the most effective way to work toward it is to break it into specific and measurable objectives. More importantly, consider segmenting your core strategy into multiple use cases, like operations optimization, customer analytics, and regulatory compliance. With these easily trackable segments, you can improve your focus and enable your teams to deliver incremental value. ... By tying OKRs to processes like governance and quality, you can ensure that they become measurable and visible priorities, resulting in fewer incidents and building confidence in analytics-based projects and processes.

This tiny chip could change the future of quantum computing

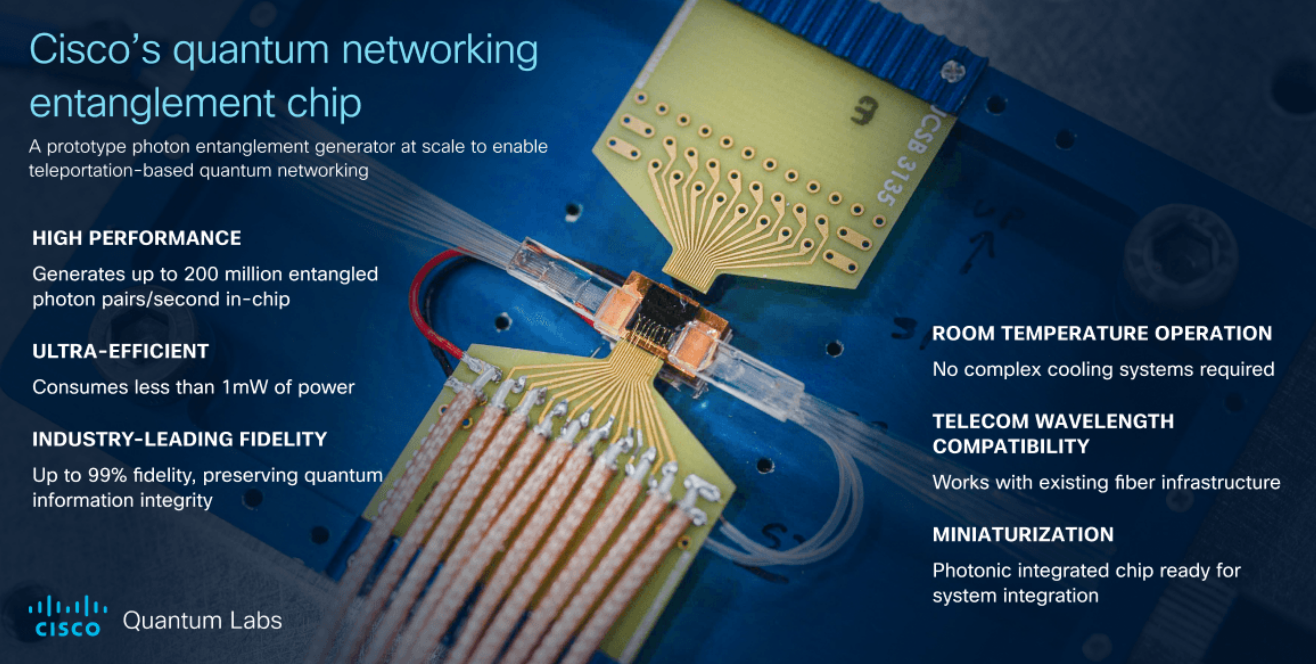

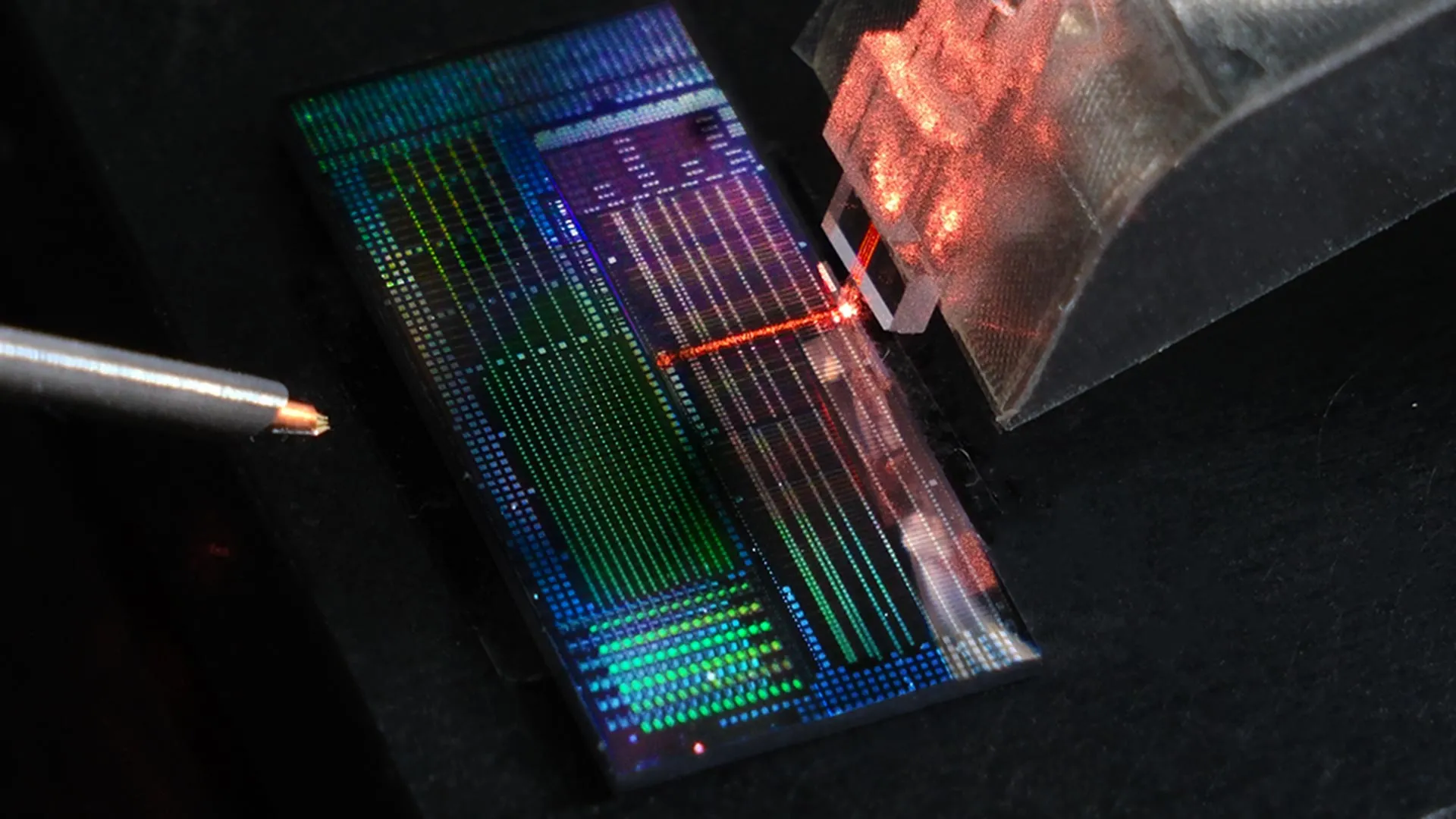

At the heart of the technology are microwave-frequency vibrations that oscillate billions of times per second. These vibrations allow the chip to manipulate laser light with remarkable precision. By directly controlling the phase of a laser beam, the device can generate new laser frequencies that are both stable and efficient. This level of control is a key requirement not only for quantum computing, but also for emerging fields such as quantum sensing and quantum networking. ... The new device generates laser frequency shifts through efficient phase modulation while using about 80 times less microwave power than many existing commercial modulators. Lower power consumption means less heat, which allows more channels to be packed closely together, even onto a single chip. Taken together, these advantages transform the chip into a scalable system capable of coordinating the precise interactions atoms need to perform quantum calculations. ... The researchers are now working on fully integrated photonic circuits that combine frequency generation, filtering, and pulse shaping on a single chip. This effort moves the field closer to a complete, operational quantum photonic platform. Next, the team plans to partner with quantum computing companies to test these chips inside advanced trapped-ion and trapped-neutral-atom quantum computers.
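
To see why modulating the phase of a laser produces new frequencies, it helps to write out the standard textbook case. The expression below is a sketch that assumes a purely sinusoidal phase modulation; the symbols $E_0$, $\omega_0$, $\Omega$ and $\beta$ are introduced here for illustration and do not come from the researchers' description of the device.

```latex
E(t) = E_0 \, e^{i\left(\omega_0 t + \beta \sin \Omega t\right)}
     = E_0 \, e^{i \omega_0 t} \sum_{n=-\infty}^{\infty} J_n(\beta)\, e^{i n \Omega t}
```

Here $\omega_0$ is the laser's optical frequency, $\Omega$ is the microwave drive frequency, $\beta$ is the modulation depth, and $J_n$ is the Bessel function of the first kind. Each term in the sum is a component at frequency $\omega_0 + n\Omega$, so a drive oscillating billions of times per second yields new laser lines offset from the original by multiples of that gigahertz-range frequency.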

The 5-Step Framework to Ensure AI Actually Frees Your Time Instead of Creating More Work

Success with AI isn’t measured by the number of automations you have deployed. True AI leverage is measured by the number of high-value tasks that can be executed without oversight from the business owner. ... Map what matters most: It’s critical to focus your energy where it matters the most. Look through your processes to identify bottlenecks and repetitive decisions or tasks that don’t need your input. ... Design roles before rules: Figure out where you need human ownership in your processes. These will be activities that require traits like empathy, creative thinking and high-level strategy. Once the roles are established, you can build automation that supports those roles. ... Document before you delegate: Both humans and machines need clear direction. Be sure to document any processes, procedures, and SOPs before delegating or automating them. ... Automate boring and elevate brilliant: Your primary goal with automation is to free up your time for creating, strategizing and building relationships. Of course, the reality is that not everything should be automated. ... Measure output, not inputs: Too many entrepreneurs spend their time focused on what their team and AI agents are doing and not what they are achieving. Intentional automation requires placing your focus on outputs to confirm that the processes you have in place are working effectively and to see where they can be improved.

The next big IT security battle is all about privileged access

As the space matures, privileged access workflows will increasingly depend on adaptive authentication policies that validate identity and device posture in real time. Vendors that offer flexible passwordless frameworks and integrations with existing IAM and PAM systems will see increased market traction. This will mark a shift toward the long-promised end of passwords, eliminating one of the most exploited attack vectors in privilege abuse and account takeovers. ... Instead of relying solely on human auditors or predefined rules, IAM/PAM solutions will use generative AI to summarize risky session activities, detect lateral movement indicators, and suggest remediations in real time. AI-assisted security will make privileged access oversight continuous and contextual, helping enterprises detect insider threats and compromised accounts faster than ever before. This will also move the industry toward autonomous access governance. ... Compromised privileged credentials will remain the single most direct path to data loss, and a sharp rise in targeted breaches, ransomware campaigns, and supply-chain intrusions involving administrative accounts will elevate IAM/PAM to a board-level concern in 2026. Enterprises will accelerate investments in vendor privileged access tools to mitigate risk from contractors, managed service providers, and external support staff.
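
An adaptive authentication policy of the kind described above can be pictured as a small decision function that weighs identity and device-posture signals for each request. The sketch below is purely illustrative: the `AccessRequest` fields, the `decide` function and the three outcomes are assumptions made for this example, not the behavior of any specific IAM or PAM product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AccessRequest:
    user: str
    identity_verified: bool  # e.g. a passwordless check succeeded (assumed signal)
    device_managed: bool     # device posture: enrolled in device management
    device_patched: bool     # device posture: security patches up to date
    privileged: bool         # request targets an administrative resource


def decide(req: AccessRequest) -> str:
    """Return 'allow', 'step_up' (ask for extra verification) or 'deny'."""
    # Without a verified identity nothing else matters.
    if not req.identity_verified:
        return "deny"

    # Ordinary access: a managed device is enough, otherwise ask for more proof.
    if not req.privileged:
        return "allow" if req.device_managed else "step_up"

    # Privileged access: require a managed AND patched device.
    if req.device_managed and req.device_patched:
        return "allow"
    # A managed but unpatched device gets a second chance via step-up;
    # an unmanaged device never reaches privileged resources.
    return "step_up" if req.device_managed else "deny"


if __name__ == "__main__":
    contractor = AccessRequest("vendor-01", True, False, True, privileged=True)
    admin = AccessRequest("admin-01", True, True, True, privileged=True)
    print(decide(contractor))  # deny
    print(decide(admin))       # allow
```

Because the decision is recomputed on every request, a device that falls out of compliance mid-session loses privileged access at its next request, which is the "real time" element of the policy.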
