Handing Over the (Digital) Keys: Should You Trust a Smart Lock?

Inherent security flaws that lead to hacks aren’t the only avenue third parties can use to eye your data. Sometimes, it hits a little closer to home. If you have access to the app that controls a smart lock, you can probably see when someone leaves and enters for the day, which can be beneficial in knowing your significant other made it home safely. But it could also inform someone of your whereabouts. Technically, if you don’t own the lock, the owner might be able to see your information, too. “If a lock is connected to the internet, then there is always the danger that it could be hacked,” Ray Walsh, digital privacy expert for ProPrivacy.com, said in an email to Reviews.com. “Of course, an internet-connected smart lock may be able to feed its owner additional information – such as an alert when someone unlocks it. This data certainly has its merits, but may only be so useful in the end,” Walsh said. For example, although the privacy policy has since changed, Gizmodo found in an archived link from May 8th that smart lock company Latch stated GPS information could be stored and shared with owners and any subsequent owners.

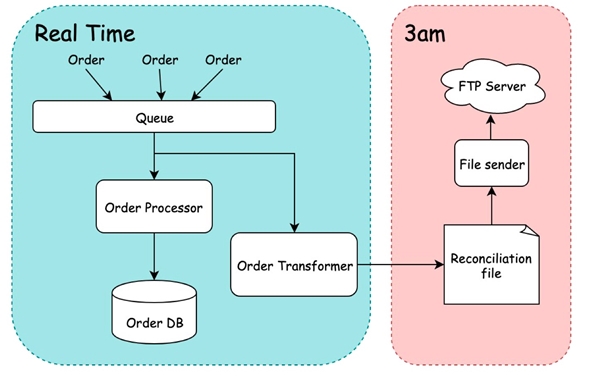

Don’t get woken up for something a computer can do for you; computers will do it better anyway. The best thing to come our way in terms of automation is all the cloud tooling and approaches we now have. Whether you love serverless or containers, both give you a scale of automation that we previously would have had to hand-roll. Kubernetes monitors the health checks of your services and restarts them on demand; it will also move your services when "compute" becomes unavailable. Serverless will retry requests and hook in seamlessly to your cloud provider’s alerting system. These platforms have come a long way, but they are still only as good as the applications we write. We need to code with an understanding of how they will be run, and how they can be automatically recovered. ... There are also techniques for dealing with situations when an outage is greater than one service, or if the scale of the outage is not yet known. One such technique is to have your platform running in more than one region, so if you see issues in one region, then you can fail over to another region.
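
As a rough illustration of the retry and multi-region failover ideas in this excerpt, here is a minimal Python sketch. It is not taken from the original article: the function name `call_with_failover`, the region names, and the retry and backoff parameters are all hypothetical, and a real client would catch narrower error types than `Exception`.

```python
import time
from typing import Callable, Optional, Sequence, TypeVar

T = TypeVar("T")


class AllRegionsFailed(Exception):
    """Raised when no region produced a successful response."""


def call_with_failover(
    regions: Sequence[str],
    call: Callable[[str], T],
    retries_per_region: int = 2,
    base_delay: float = 0.1,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Try each region in order, retrying with exponential backoff.

    `call` is a hypothetical function that performs the request against
    the given region and raises an exception on failure.
    """
    last_error: Optional[Exception] = None
    for region in regions:
        for attempt in range(retries_per_region):
            try:
                return call(region)
            except Exception as exc:  # assumption: any error counts as a failure
                last_error = exc
                # Back off before retrying the same region; move on to the
                # next region immediately once the retries are used up.
                if attempt < retries_per_region - 1:
                    sleep(base_delay * 2 ** attempt)
    raise AllRegionsFailed(f"all regions failed: {list(regions)}") from last_error
```

Calling `call_with_failover(["region-a", "region-b"], fetch)` with a hypothetical `fetch(region)` function would retry `region-a` before falling back to `region-b`. Passing `sleep` as a parameter keeps the sketch easy to exercise without real delays.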

In July, Reuters reported that as part of an effort to combat money laundering, Japan’s government is “leading a global push” to set up for cryptocurrency exchanges a system like SWIFT, the international messaging protocol that banks use for bank-to-bank payments. Last week, a report from Nikkei suggested that 15 governments are planning to create a system for collecting and sharing personal data on cryptocurrency users. But several people familiar with the FATF-led international discussions around cryptocurrency regulation told MIT Technology Review that these reports don’t have it quite right. There doesn’t appear to be a government-led global cryptocurrency surveillance system in the works—at least not yet. And it’s likely that whatever does eventually emerge won’t look much like SWIFT. Exchanges are still early in the process of figuring out what systems and technologies to use to securely handle sensitive data, Spiro says, and how to do it in a way that complies with a range of local privacy rules. “There are a lot of balls in the air,” he says.

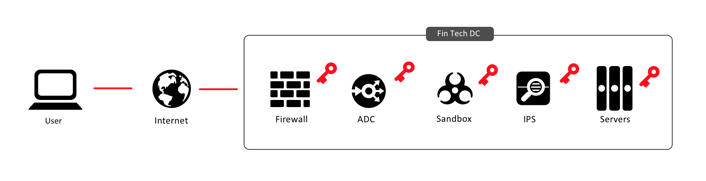

Security concerns blocking UK digital transformation

“Protection and prevention are still paramount yet, to stay ahead of these evolving trends, organisations need to start thinking differently about cyber security. Business leaders need to make the leap from seeing cyber security as only a protective measure, to it also being a strategic value driver,” he said. The report also shows that across many organisations, the views of chief information officers (CIOs) and wider board members on cyber security are not yet aligned. Business leaders such as the CEO, CFO and COO tend to be less confident about their organisation’s cyber security than those with direct responsibility for IT and technology such as the CIO and chief information security officer (CISO). In addition, technology leaders are more likely to believe it is important for competitive advantage to have a cyber-secure brand (82%), compared with only 68% of business leaders.

Use of Facial Recognition Stirs Controversy

Over the past several years, the use of facial recognition - along with other technologies such as machine learning, artificial intelligence and big data - has stoked global fears about invasion of privacy. In the U.S., the American Civil Liberties Union has taken aim at Amazon's Rekognition product, which uses a number of technologies to enable its users to rapidly run searches against facial databases. The ACLU's Nicole Ozer last year called for guarding against supercharged surveillance before it's used to track protesters, target immigrants and spy on entire neighborhoods. More recently, city officials in San Francisco and Oakland have banned police from using facial recognition technology. The debate over facial recognition technology has also been addressed by several U.S. presidential candidates. On Monday, Democratic hopeful Bernie Sanders became the first presidential candidate to call for a ban on the use of facial recognition by law enforcement. This is one part of a larger criminal justice reform package that the Vermont senator's campaign calls "Justice and Safety for All."

Extreme Programming in Agile – A Practical Guide for Project Managers

The XP lifecycle can be explained in terms of the Weekly Cycle and the Quarterly Cycle. To begin with, the customer defines the set of stories. The team estimates the size of each story, which, along with the relative benefit as estimated by the customer, indicates the relative value used to prioritize the stories. If some stories cannot be estimated by the team because the technical considerations involved are unclear, the team can introduce a Spike. Spikes are short time frames for research and may occur before regular iterations start or alongside ongoing iterations. Next comes the release plan, which covers the stories that will be delivered in a particular quarter or release. At this point, the weekly cycles begin. Each weekly cycle starts with the team and the customer meeting to decide the set of stories to be realized that week. Those stories are then broken into tasks to be completed within that week. The week ends with a review of the progress to date between the team and the customer. This leads to the decision whether the project should continue or whether sufficient value has been delivered.
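
To make the prioritization step concrete, here is a small Python sketch. It rests on assumptions the excerpt does not state: relative value is taken to be benefit divided by size, the weekly selection is done greedily against a fixed capacity, and the `Story` class, `plan_week` function, and example numbers are all hypothetical. In practice the team and customer decide the weekly set together, so this is only one way the ranking might feed that conversation.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Story:
    name: str
    size: float     # team's estimate (assumed unit: ideal days, must be > 0)
    benefit: float  # customer's relative benefit score (assumed scale)

    @property
    def relative_value(self) -> float:
        # Assumption: value is benefit per unit of estimated size.
        return self.benefit / self.size


def plan_week(stories: List[Story], capacity: float) -> List[Story]:
    """Greedily pick the highest-value stories that fit the weekly capacity."""
    chosen: List[Story] = []
    remaining = capacity
    for story in sorted(stories, key=lambda s: s.relative_value, reverse=True):
        if story.size <= remaining:
            chosen.append(story)
            remaining -= story.size
    return chosen


backlog = [
    Story("export", size=4, benefit=4),
    Story("login", size=2, benefit=8),
    Story("report", size=3, benefit=6),
]
week = plan_week(backlog, capacity=5)
print([story.name for story in week])
```

A story too large to fit in the remaining capacity is simply skipped here; a real team might instead split it or defer it to the next weekly cycle.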

Breakthroughs bring a quantum Internet closer

The TUM quantum-electronics breakthrough is just one announced in the last few weeks. Scientists at Osaka University say they’ve figured out a way to get information that’s encoded in a laser beam to translate to a spin state of an electron in a quantum dot. They explain, in their release, that they solve an issue where entangled states can be extremely fragile, in other words, petering out and not lasting for the required length of transmission. Roughly, they explain that their invention allows electron spins in distant, terminus computers to interact better with the quantum-data-carrying light signals. “The achievement represents a major step towards a ‘quantum internet,’” the university says. “There are those who think all computers, and other electronics, will eventually be run on light and forms of photons, and that we will see a shift to all-light,” I wrote earlier this year. That movement is not slowing. Unrelated to the aforementioned quantum-based light developments, we’re also seeing a light-based thrust that can be used in regular electronics too. Engineers may soon be designing with small photon diodes that would allow light to flow in one direction only, says Stanford University in a press release.

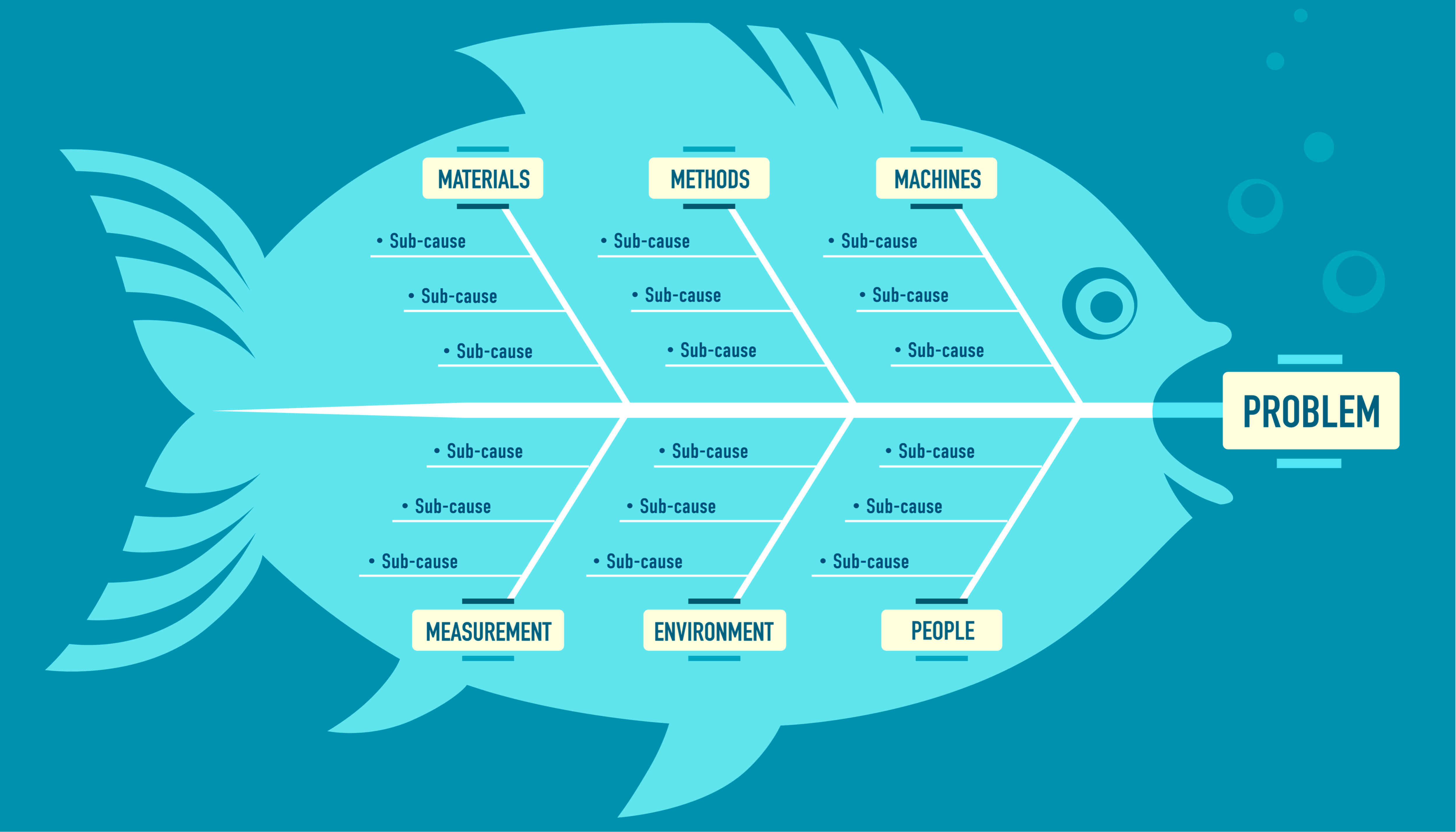

Automated machine learning or AutoML explained

Automated machine learning, or AutoML, aims to reduce or eliminate the need for skilled data scientists to build machine learning and deep learning models. Instead, an AutoML system allows you to provide the labeled training data as input and receive an optimized model as output. There are several ways of going about this. One approach is for the software to simply train every kind of model on the data and pick the one that works best. A refinement of this would be for it to build one or more ensemble models that combine the other models, which sometimes (but not always) gives better results. A second technique is to optimize the hyperparameters of the best model or models to train an even better model. Feature engineering is a valuable addition to any model training. One way of de-skilling deep learning is to use transfer learning, essentially customizing a well-trained general model for specific data. Transfer learning is sometimes called custom machine learning, and sometimes called AutoML (mostly by Google). Rather than starting from scratch when training models from your data, Google Cloud AutoML implements automatic deep transfer learning and neural architecture search for language pair translation, natural language classification, and image classification.
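
The first approach described here, training every kind of model and keeping the one that works best, can be sketched in a few lines of plain Python. This is a toy illustration and not how any particular AutoML product works: the three "model kinds" (a mean predictor, a one-variable linear fit, and a nearest-neighbor lookup), the `select_best` function, and the sample data are all made up for the example, and held-out mean squared error is an assumed choice of score.

```python
from statistics import mean
from typing import Callable, Dict, List, Tuple

Model = Callable[[float], float]
Trainer = Callable[[List[float], List[float]], Model]


def train_mean(xs: List[float], ys: List[float]) -> Model:
    """Baseline: always predict the average training target."""
    avg = mean(ys)
    return lambda x: avg


def train_linear(xs: List[float], ys: List[float]) -> Model:
    """Ordinary least squares for a single input feature."""
    x_bar, y_bar = mean(xs), mean(ys)
    var = sum((x - x_bar) ** 2 for x in xs)
    cov = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    slope = cov / var if var else 0.0
    intercept = y_bar - slope * x_bar
    return lambda x: slope * x + intercept


def train_nearest(xs: List[float], ys: List[float]) -> Model:
    """1-nearest-neighbor: return the target of the closest training point."""
    points = list(zip(xs, ys))
    return lambda x: min(points, key=lambda p: abs(p[0] - x))[1]


def mse(model: Model, xs: List[float], ys: List[float]) -> float:
    return mean((model(x) - y) ** 2 for x, y in zip(xs, ys))


def select_best(
    trainers: Dict[str, Trainer],
    train: Tuple[List[float], List[float]],
    valid: Tuple[List[float], List[float]],
) -> Tuple[str, Model, float]:
    """Train every candidate and keep the one with the lowest validation error."""
    models = {name: trainer(*train) for name, trainer in trainers.items()}
    scores = {name: mse(model, *valid) for name, model in models.items()}
    best = min(scores, key=scores.get)
    return best, models[best], scores[best]


# Made-up data following y = 2x + 1.
train_data = ([0.0, 1.0, 2.0, 3.0, 4.0, 5.0], [1.0, 3.0, 5.0, 7.0, 9.0, 11.0])
valid_data = ([6.0, 7.0], [13.0, 15.0])
candidates = {"mean": train_mean, "linear": train_linear, "nearest": train_nearest}
best_name, best_model, best_score = select_best(candidates, train_data, valid_data)
print(best_name, best_score)
```

Scoring on held-out data rather than the training set matters here: the nearest-neighbor model fits the training points perfectly, yet it would not be the right pick for data that keeps growing beyond the training range.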

Considerations for choosing enterprise mobility tools

One option is to use an open source enterprise mobility management (EMM) platform. If the organization is willing to invest in the resources, open source EMM offers the flexibility to customize and extend the source code to match specific needs. IT pros should be aware of challenges that can come with maintaining their own open source EMM, such as hidden costs of deployment and lack of support. A few options for open source EMM include WSO2 Enterprise Mobility Manager and Teclib's Flyve MDM. WSO2's offering includes enterprise mobility tools such as mobile application management and mobile identity management. It also includes open source support for IoT devices, such as enrollment and application management, through IoT Server. Organizations looking for more established enterprise mobility tools can look to UEM platforms including Citrix Workspace, VMware Workspace One, IBM MaaS360, BlackBerry Unified Endpoint Manager, MobileIron UEM or Microsoft Enterprise Mobility + Security, which includes Intune.

The Future Enterprise Architect

Archie II understands the needs of decision makers throughout the organization, including the need to provide timely, if not on-demand, decision support based on solid information and analysis. Archie II also understands that he must not only support the decision-making processes in the organization, but also enable those decisions by providing guidance. Archie II is proactive and is often ready with answers before questions arrive. Archie II uses or adapts existing architectures, and/or creates new architectural patterns and models, to support the analysis he performs in order to make the recommendations needed as value chains or value streams progress. Archie II collects just enough information, resulting in just enough architecture, to support the decisions at hand that match the cadence of the business. Yet Archie II is continuously listening, evolving and analyzing his models of the enterprise as new information becomes available. He proactively connects with those necessary, when necessary. His calls are always returned as he has the reputation of “when Archie II speaks, we need to listen!”

Quote for the day:

"Your greatest area of leadership often comes out of your greatest area of pain and weakness." -- Wayde Goodall