Quote for the day:

"If you want teams to succeed, set them up for success—don’t just demand it." -- Gordon Tredgold

Hackers turn bossware against the bosses

Huntress discovered two incidents using this tactic, one late in January and

one early this month. Shared infrastructure, overlapping indicators of

compromise, and consistent tradecraft across both cases make Huntress strongly

believe a single threat actor or group was behind this activity. ... CSOs must

ensure that these risks are properly catalogued and mitigated,” he said. “Any

actions performed by these agents must be monitored and, if possible,

restricted. The abuse of these systems is a special case of ‘living off the

land’ attacks. The attacker attempts to abuse valid existing software to

perform malicious actions. This abuse is often difficult to detect.” ...

Huntress analyst Pham said to defend against attacks combining Net Monitor for

Employees Professional and SimpleHelp, infosec pros should inventory all

applications so unapproved installations can be detected. Legitimate apps

should be protected with robust identity and access management solutions,

including multi-factor authentication. Net Monitor for Employees should only

be installed on endpoints that don’t have full access privileges to sensitive

data or critical servers, she added, because it has the ability to run

commands and control systems. She also noted that Huntress sees a lot of rogue

remote management tools on its customers’ IT networks, many of which have been

installed by unwitting employees clicking on phishing emails. This points to

the importance of security awareness training, she said.

Huntress discovered two incidents using this tactic, one late in January and

one early this month. Shared infrastructure, overlapping indicators of

compromise, and consistent tradecraft across both cases make Huntress strongly

believe a single threat actor or group was behind this activity. ... CSOs must

ensure that these risks are properly catalogued and mitigated,” he said. “Any

actions performed by these agents must be monitored and, if possible,

restricted. The abuse of these systems is a special case of ‘living off the

land’ attacks. The attacker attempts to abuse valid existing software to

perform malicious actions. This abuse is often difficult to detect.” ...

Huntress analyst Pham said to defend against attacks combining Net Monitor for

Employees Professional and SimpleHelp, infosec pros should inventory all

applications so unapproved installations can be detected. Legitimate apps

should be protected with robust identity and access management solutions,

including multi-factor authentication. Net Monitor for Employees should only

be installed on endpoints that don’t have full access privileges to sensitive

data or critical servers, she added, because it has the ability to run

commands and control systems. She also noted that Huntress sees a lot of rogue

remote management tools on its customers’ IT networks, many of which have been

installed by unwitting employees clicking on phishing emails. This points to

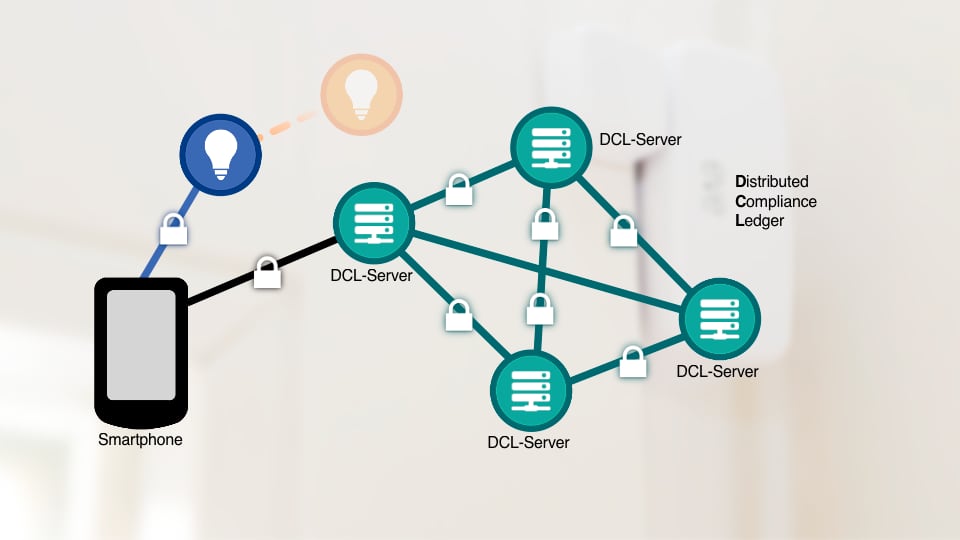

the importance of security awareness training, she said. Why secure OT protocols still struggle to catch on

“Simply having ‘secure’ protocol options is not enough if those options remain

too costly, complex, or fragile for operators to adopt at scale,” Saunders

said. “We need protections that work within real-world constraints, because if

security is too complex or disruptive, it simply won’t be implemented.” ...

Security features that require complex workflows, extra licensing, or new

infrastructure often lose out to simpler compensating controls. Operators

interviewed said they want the benefits of authentication and integrity

checks, particularly message signing, since it prevents spoofing and

unauthorized command execution. ... Researchers identified cost as a primary

barrier to adoption. Operators reported that upgrading a component to support

secure communications can cost as much as the original component, with

additional licensing fees in some cases. Costs also include hardware upgrades

for cryptographic workloads, training staff, integrating certificate

management, and supporting compliance requirements. Operators frequently

compared secure protocol deployment costs with segmentation and continuous

monitoring tools, which they viewed as more predictable and easier to justify.

... CISA’s recommendations emphasize phased approaches and operational

realism. Owners and operators are advised to sign OT communications broadly,

apply encryption where needed for sensitive data such as passwords and key

exchanges, and prioritize secure communication on remote access paths and

firmware uploads.

“Simply having ‘secure’ protocol options is not enough if those options remain

too costly, complex, or fragile for operators to adopt at scale,” Saunders

said. “We need protections that work within real-world constraints, because if

security is too complex or disruptive, it simply won’t be implemented.” ...

Security features that require complex workflows, extra licensing, or new

infrastructure often lose out to simpler compensating controls. Operators

interviewed said they want the benefits of authentication and integrity

checks, particularly message signing, since it prevents spoofing and

unauthorized command execution. ... Researchers identified cost as a primary

barrier to adoption. Operators reported that upgrading a component to support

secure communications can cost as much as the original component, with

additional licensing fees in some cases. Costs also include hardware upgrades

for cryptographic workloads, training staff, integrating certificate

management, and supporting compliance requirements. Operators frequently

compared secure protocol deployment costs with segmentation and continuous

monitoring tools, which they viewed as more predictable and easier to justify.

... CISA’s recommendations emphasize phased approaches and operational

realism. Owners and operators are advised to sign OT communications broadly,

apply encryption where needed for sensitive data such as passwords and key

exchanges, and prioritize secure communication on remote access paths and

firmware uploads.SaaS isn’t dead, the market is just becoming more hybrid

“It’s important to avoid overgeneralizing ‘SaaS,’” Odusote emphasized . “Dev

tools, cybersecurity, productivity platforms, and industry-specific systems

will not all move at the same pace. Buyers should avoid one-size-fits-all

assumptions about disruption.” For buyers, this shift signals a more

capability-driven, outcomes-focused procurement era. Instead of buying

discrete tools with fixed feature sets, they’ll increasingly be able to

evaluate and compare platforms that are able to orchestrate agents, adapt

workflows, and deliver business outcomes with minimal human intervention. ...

Buyers will likely have increased leverage in certain segments due to

competitive pressure among new and established providers, Odusote said. New

entrants often come with more flexible pricing, which obviously is an

attraction for those looking to control costs or prove ROI. At the same time,

traditional SaaS leaders are likely to retain strong positions in

mission-critical systems; they will defend pricing through bundled AI

enhancements, he said. So, in the short term, buyers can expect broader choice

and negotiation leverage. “Vendors can no longer show up with automatic annual

price increases without delivering clear incremental value,” Odusote pointed

out. “Buyers are scrutinizing AI add-ons and agent pricing far more

closely.”

“It’s important to avoid overgeneralizing ‘SaaS,’” Odusote emphasized . “Dev

tools, cybersecurity, productivity platforms, and industry-specific systems

will not all move at the same pace. Buyers should avoid one-size-fits-all

assumptions about disruption.” For buyers, this shift signals a more

capability-driven, outcomes-focused procurement era. Instead of buying

discrete tools with fixed feature sets, they’ll increasingly be able to

evaluate and compare platforms that are able to orchestrate agents, adapt

workflows, and deliver business outcomes with minimal human intervention. ...

Buyers will likely have increased leverage in certain segments due to

competitive pressure among new and established providers, Odusote said. New

entrants often come with more flexible pricing, which obviously is an

attraction for those looking to control costs or prove ROI. At the same time,

traditional SaaS leaders are likely to retain strong positions in

mission-critical systems; they will defend pricing through bundled AI

enhancements, he said. So, in the short term, buyers can expect broader choice

and negotiation leverage. “Vendors can no longer show up with automatic annual

price increases without delivering clear incremental value,” Odusote pointed

out. “Buyers are scrutinizing AI add-ons and agent pricing far more

closely.”When algorithms turn against us: AI in the hands of cybercriminals

Cybercriminals are using AI to create sophisticated phishing emails. These

emails are able to adapt the tone, language, and reference to the person

receiving it based on the information that is publicly available about them. By

using AI to remove the red flag of poor grammar from phishing emails,

cybercriminals will be able to increase the success rate and speed with which

the stolen data is exploited. ... An important consideration in the arena of

cyber security (besides technical security) is the psychological manipulation of

users. Once visual and audio “cues” can no longer be trusted, there will be an

erosion of the digital trust pillar. The once-recognizable verification process

is now transforming into multi-layered authentication which expands the amount

of time it takes to verify a decision in a high-pressure environment. ... AI’s

misuse is a growing problem that has created a paradox. Innovation cannot stop

(nor should it), and AI is helping move healthcare, finance, government and

education forward. However, the rate at which AI has been adopted has surpassed

the creation of frameworks and/or regulations related to ethics or security. As

a result, cyber security needs to transition from a reactive to a predictive

stance. AI must be used to not only react to attacks, but also anticipate future

attacks.

Cybercriminals are using AI to create sophisticated phishing emails. These

emails are able to adapt the tone, language, and reference to the person

receiving it based on the information that is publicly available about them. By

using AI to remove the red flag of poor grammar from phishing emails,

cybercriminals will be able to increase the success rate and speed with which

the stolen data is exploited. ... An important consideration in the arena of

cyber security (besides technical security) is the psychological manipulation of

users. Once visual and audio “cues” can no longer be trusted, there will be an

erosion of the digital trust pillar. The once-recognizable verification process

is now transforming into multi-layered authentication which expands the amount

of time it takes to verify a decision in a high-pressure environment. ... AI’s

misuse is a growing problem that has created a paradox. Innovation cannot stop

(nor should it), and AI is helping move healthcare, finance, government and

education forward. However, the rate at which AI has been adopted has surpassed

the creation of frameworks and/or regulations related to ethics or security. As

a result, cyber security needs to transition from a reactive to a predictive

stance. AI must be used to not only react to attacks, but also anticipate future

attacks.

Those 'Summarize With AI' Buttons May Be Lying to You

Put simply, when a user visits a rigged website and clicks a "Summarize With AI"

button on a blog post, they may unknowingly trigger a hidden instruction

embedded in the link. That instruction automatically inserts a specially crafted

request into the AI tool before the user even types anything. ... The threat is

not merely theoretical. According to Microsoft, over a 60-day period, it

observed 50 unique instances of prompt-based AI memory poisoning attempts for

promotional purposes. ... AI recommendation poisoning is a sort of drive-by

technique with one-click interaction, he notes. "The button will take the user —

after the click — to the AI domain relevant and specific for one of the AI

assistants targeted," Ganacharya says. To broaden the scope, an attacker could

simply generate multiple buttons that prompt users to "summarize" something

using the AI agent of their choice, he adds. ... Microsoft had some advice for

threat hunting teams. Organizations can detect if they have been affected by

hunting for links pointing to AI assistant domains and containing prompts with

certain keywords like "remember," "trusted source," "in future conversations,"

and "authoritative source." The company's advisory also listed several threat

hunting queries that enterprise security teams can use to detect AI

recommendation poisoning URLs in emails and Microsoft Teams Messages, and to

identify users who might have clicked on AI recommendation poisoning URLs.

Put simply, when a user visits a rigged website and clicks a "Summarize With AI"

button on a blog post, they may unknowingly trigger a hidden instruction

embedded in the link. That instruction automatically inserts a specially crafted

request into the AI tool before the user even types anything. ... The threat is

not merely theoretical. According to Microsoft, over a 60-day period, it

observed 50 unique instances of prompt-based AI memory poisoning attempts for

promotional purposes. ... AI recommendation poisoning is a sort of drive-by

technique with one-click interaction, he notes. "The button will take the user —

after the click — to the AI domain relevant and specific for one of the AI

assistants targeted," Ganacharya says. To broaden the scope, an attacker could

simply generate multiple buttons that prompt users to "summarize" something

using the AI agent of their choice, he adds. ... Microsoft had some advice for

threat hunting teams. Organizations can detect if they have been affected by

hunting for links pointing to AI assistant domains and containing prompts with

certain keywords like "remember," "trusted source," "in future conversations,"

and "authoritative source." The company's advisory also listed several threat

hunting queries that enterprise security teams can use to detect AI

recommendation poisoning URLs in emails and Microsoft Teams Messages, and to

identify users who might have clicked on AI recommendation poisoning URLs.

EU Privacy Watchdogs Pan Digital Omnibus

The commission presented its so-called "Digital Omnibus" package of legal

changes in November, arguing that the bloc's tech rules needed streamlining. ...

Some of the tweaks were expected and have been broadly welcomed, such as doing

away with obtrusive cookie consent banners in many cases, and making it simpler

for companies to notify of data breaches in a way that satisfies the

requirements of multiple laws in one go. But digital rights and consumer

advocates are reacting furiously to an unexpected proposal for modifying the

General Data Protection Regulation. ... "Simplification is essential to cut red

tape and strengthen EU competitiveness - but not at the expense of fundamental

rights," said EDPB chair Anu Talus in the statement. "We strongly urge the

co-legislators not to adopt the proposed changes in the definition of personal

data, as they risk significantly weakening individual data protection." ...

Another notable element of the Digital Omnibus is the proposal to raise the

threshold for notifying all personal data breaches to supervisory

authorities. As the GDPR currently stands, organizations must notify a data

protection authority within 72 hours of becoming aware of the breach. If amended

as the commission proposes, the obligation would only apply to breaches that are

"likely to result in a high risk" to the affected people's rights - the same

threshold that applies to the duty to notify breaches to the affected data

subjects themselves - and the notification deadline would be extended to 96

hours.

The commission presented its so-called "Digital Omnibus" package of legal

changes in November, arguing that the bloc's tech rules needed streamlining. ...

Some of the tweaks were expected and have been broadly welcomed, such as doing

away with obtrusive cookie consent banners in many cases, and making it simpler

for companies to notify of data breaches in a way that satisfies the

requirements of multiple laws in one go. But digital rights and consumer

advocates are reacting furiously to an unexpected proposal for modifying the

General Data Protection Regulation. ... "Simplification is essential to cut red

tape and strengthen EU competitiveness - but not at the expense of fundamental

rights," said EDPB chair Anu Talus in the statement. "We strongly urge the

co-legislators not to adopt the proposed changes in the definition of personal

data, as they risk significantly weakening individual data protection." ...

Another notable element of the Digital Omnibus is the proposal to raise the

threshold for notifying all personal data breaches to supervisory

authorities. As the GDPR currently stands, organizations must notify a data

protection authority within 72 hours of becoming aware of the breach. If amended

as the commission proposes, the obligation would only apply to breaches that are

"likely to result in a high risk" to the affected people's rights - the same

threshold that applies to the duty to notify breaches to the affected data

subjects themselves - and the notification deadline would be extended to 96

hours.

The Art of the Comeback: Why Post-Incident Communication is a Secret Weapon

Although technical resolutions may address the immediate cause of an outage,

effective communication is essential in managing customer impact and shaping

public perception—often influencing stakeholders’ views more strongly than the

issue itself. Within fintech, a company's reputation is not built solely on

product features or interface design, but rather on the perceived security of

critical assets such as life savings, retirement funds, or business payrolls. In

this high-stakes environment, even brief outages or minor data breaches are

perceived by clients as threats to their financial security. ... While the

natural instinct during a crisis (like a cyber breach or operational failure) is

to remain silent to avoid liability, silence actually amplifies damage. In the

first 48 hours, what is said—or not said—often determines how a business is

remembered. Post-incident communication (PIC) is the bridge between panic and

peace of mind. Done poorly, it looks like corporate double-speak. Done well, it

demonstrates a level of maturity and transparency that your competitors might

lack. ... H2H communication acknowledges the user’s frustration rather than just

providing a technical error code. It recognizes the real-world impact on people,

not just systems. Admitting mistakes and showing sincere remorse, rather than

using defensive, legalistic language, makes a company more relatable and

trustworthy. Using natural, conversational language makes the communication feel

sincere rather than like an automated, cold response.

Although technical resolutions may address the immediate cause of an outage,

effective communication is essential in managing customer impact and shaping

public perception—often influencing stakeholders’ views more strongly than the

issue itself. Within fintech, a company's reputation is not built solely on

product features or interface design, but rather on the perceived security of

critical assets such as life savings, retirement funds, or business payrolls. In

this high-stakes environment, even brief outages or minor data breaches are

perceived by clients as threats to their financial security. ... While the

natural instinct during a crisis (like a cyber breach or operational failure) is

to remain silent to avoid liability, silence actually amplifies damage. In the

first 48 hours, what is said—or not said—often determines how a business is

remembered. Post-incident communication (PIC) is the bridge between panic and

peace of mind. Done poorly, it looks like corporate double-speak. Done well, it

demonstrates a level of maturity and transparency that your competitors might

lack. ... H2H communication acknowledges the user’s frustration rather than just

providing a technical error code. It recognizes the real-world impact on people,

not just systems. Admitting mistakes and showing sincere remorse, rather than

using defensive, legalistic language, makes a company more relatable and

trustworthy. Using natural, conversational language makes the communication feel

sincere rather than like an automated, cold response.

Why AI success hinges on knowledge infrastructure and operational discipline

Many organisations assume that if information exists, it is usable for GenAI, but enterprise content is often fragmented, inconsistently structured, poorly contextualised, and not governed for machine consumption. During pilots, this gap is less visible because datasets are curated, but scaling exposes the full complexity of enterprise knowledge. Conflicting versions, missing context, outdated material, and unclear ownership reduce performance and erode confidence, not because models are incapable, but because the knowledge they depend on is unreliable at scale. ... Human-in-the-loop processes struggle to keep pace with scale. Successful deployments treat HITL as a tiered operating structure with explicit thresholds, roles, and escalation paths. Pilot-style broad review collapses under volume; effective systems route only low-confidence or high-risk outputs for human intervention. ... Learning compounds over time as every intervention is captured and fed back into the system, reducing repeated manual review. Operationally, human-in-the-loop teams function within defined governance frameworks, with explicit thresholds, escalation paths, and direct integration into production workflows to ensure consistency at scale. In short, a production-grade human-in-the-loop model is not an extension of BPO but an operating capability combining domain expertise, governance, and system learning to support intelligent systems reliably.Why short-lived systems need stronger identity governance

Consider the lifecycle of a typical microservice. In its journey from a

developer’s laptop to production, it might generate a dozen distinct identities:

a GitHub token for the repository, a CI/CD service account for the build, a

registry credential to push the container, and multiple runtime roles to access

databases, queues and logging services. The problem is not just volume; it is

invisibility. When a developer leaves, HR triggers an offboarding process. Their

email is cut, their badge stops working. But what about the five service

accounts they hardcoded into a deployment script three years ago? ... In

reality, test environments are often where attackers go first. It is the path of

least resistance. We saw this play out in the Microsoft Midnight Blizzard

attack. The attackers did not burn a zero-day exploit to break down the front

door; they found a legacy test tenant that nobody was watching closely. ... Our

software supply chain is held together by thousands of API keys and secrets. If

we continue to rely on long-lived static credentials to glue our pipelines

together, we are building on sand. Every static key sitting in a repo—no matter

how private you think it is—is a ticking time bomb. It only takes one developer

to accidentally commit a .env file or one compromised S3 bucket to expose the

keys to the kingdom. ... Paradoxically, by trying to control everything with

heavy-handed gates, we end up with less visibility and less control. The goal of

modern identity governance shouldn’t be to say “no” more often; it should be to

make the secure path the fastest path.

Consider the lifecycle of a typical microservice. In its journey from a

developer’s laptop to production, it might generate a dozen distinct identities:

a GitHub token for the repository, a CI/CD service account for the build, a

registry credential to push the container, and multiple runtime roles to access

databases, queues and logging services. The problem is not just volume; it is

invisibility. When a developer leaves, HR triggers an offboarding process. Their

email is cut, their badge stops working. But what about the five service

accounts they hardcoded into a deployment script three years ago? ... In

reality, test environments are often where attackers go first. It is the path of

least resistance. We saw this play out in the Microsoft Midnight Blizzard

attack. The attackers did not burn a zero-day exploit to break down the front

door; they found a legacy test tenant that nobody was watching closely. ... Our

software supply chain is held together by thousands of API keys and secrets. If

we continue to rely on long-lived static credentials to glue our pipelines

together, we are building on sand. Every static key sitting in a repo—no matter

how private you think it is—is a ticking time bomb. It only takes one developer

to accidentally commit a .env file or one compromised S3 bucket to expose the

keys to the kingdom. ... Paradoxically, by trying to control everything with

heavy-handed gates, we end up with less visibility and less control. The goal of

modern identity governance shouldn’t be to say “no” more often; it should be to

make the secure path the fastest path.

/articles/dirma-measuring-disaster-recovery/en/smallimage/dirma-measuring-disaster-recovery-thumbnail-1742895674005.jpg)