What Is Data Strategy and Why Do You Need It?

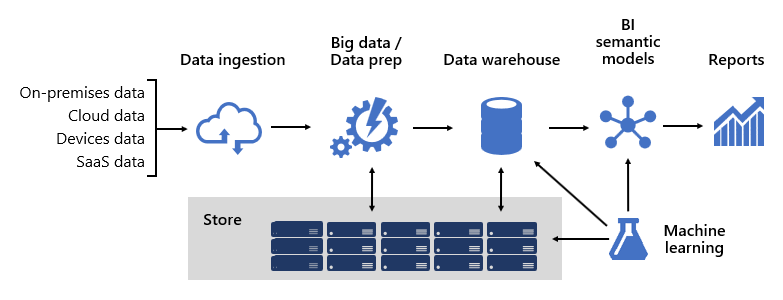

Developing a successful Data Strategy requires careful consideration of

several key steps. First, it is essential to identify the business goals and

objectives that the Data Strategy will support. This will help determine what

data is needed and how it should be collected, analyzed, and used. Next, it is

important to assess the organization’s current data infrastructure and

capabilities. This includes evaluating existing databases, data sources,

tools, and processes for collecting and managing data. It also involves

identifying current gaps in skills or technology that need to be addressed.

Once these foundational elements are in place, organizations can begin to

define their approach to Data Governance. This involves establishing policies

and procedures for managing Data Quality, security, privacy, compliance, and

access. It may also involve developing a framework for decision-making that

ensures the right people have access to the right information at the right

time. Finally, organizations should consider how they will measure success in

implementing their Data Strategy.

Battling Technical Debt

Technical debt costs you money and takes a sizable chunk of your budget. For

example, a 2022 Q4 survey by Protiviti found that, on average, an

organization invests more than 30% of its IT budget and more than 20% of its

overall resources in managing and addressing technical debt. This money is

being taken away from building new and impactful products and projects, and

it means the cash might not be there for your best ideas. ... Technical debt

impacts your reputation. The impact can be huge and result in unwanted media

attention and customers moving to your competitors. In an article about

technical debt, Denny Cherry attributes performance woes at US airline

Southwest Airlines to poor investment in updating legacy equipment, which

caused difficulties with flight scheduling as a result of "outdated

processes and outdated IT." If you can't schedule a flight, you're going to

move elsewhere. Furthermore, in many industries like aviation, downtime

results in crippling fines. These could be enough to tip a company over the

edge.

‘Audit considerations for digital assets can be extremely complex’

Common challenges when auditing crypto assets include understanding and

evaluating controls over access to digital keys, reconciliations to the

blockchain to verify existence of assets, considerations around service

providers in terms of qualifications, availability and scope, and forms of

reporting, among others. As the technology is rapidly evolving, the

regulatory standards do not yet capture all crypto offerings. Everyone is

operating in an uncertain regulatory environment, where the speed of change

is significant for all participants. If you take accounting standards, for

example, a common discussion today is how to measure these assets. Under

IFRS, crypto assets are generally recognized as an intangible asset and

recorded at cost. While this aligns with the technical requirements of the

standards, it sometimes generates financial reporting that may not be well

understood by users of the financial information who may be looking for the

fair value of these assets.
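
The reconciliation step mentioned above can be pictured as comparing the balances in a client's books against balances read independently from the chain. The following is only a minimal sketch: the wallet names, the balances and the `reconcile` helper are hypothetical, and the on-chain figures are hard-coded here where a real engagement would obtain them from a node or a block explorer.

```python
from decimal import Decimal

# Hypothetical balances per wallet, as recorded in the client's ledger.
BOOK_BALANCES = {
    "wallet-A": Decimal("12.50000000"),
    "wallet-B": Decimal("0.75000000"),
    "wallet-C": Decimal("3.00000000"),
}

# Hypothetical balances observed independently on the blockchain.
CHAIN_BALANCES = {
    "wallet-A": Decimal("12.50000000"),
    "wallet-B": Decimal("0.70000000"),
}


def reconcile(book, chain, tolerance=Decimal("0")):
    """Return {address: (book_balance, chain_balance)} for every mismatch.

    An address missing on either side is reported with None for that side.
    """
    exceptions = {}
    for address in sorted(set(book) | set(chain)):
        book_bal = book.get(address)
        chain_bal = chain.get(address)
        if book_bal is None or chain_bal is None:
            # Recorded in the books but not found on chain, or the reverse.
            exceptions[address] = (book_bal, chain_bal)
        elif abs(book_bal - chain_bal) > tolerance:
            # Both sides exist but the amounts disagree.
            exceptions[address] = (book_bal, chain_bal)
    return exceptions


if __name__ == "__main__":
    for address, (book_bal, chain_bal) in reconcile(BOOK_BALANCES, CHAIN_BALANCES).items():
        print(address, "book:", book_bal, "chain:", chain_bal)
```

Using `Decimal` rather than floats avoids rounding noise being reported as a difference. A check like this only supports existence; it says nothing about who controls the keys, which is a separate challenge the excerpt raises.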

Does AI have a future in cyber security? Yes, but only if it works with humans

One technique that has been around for a while is rolling AI technology into

security operations, especially to manage repeating processes. What the AI

does is filter out the noise, identify priority alerts and screen these

out. The other thing it is capable of is capturing this data, looking for

any anomalies and joining the dots. Established vendors are

already providing capabilities like this. Here at Nominet, we have masses of

data coming into our systems every day, and being able to look at

correlations to identify malicious and anomalous behaviour is very valuable.

But once again we find ourselves in the definition trap. Being alerted when

rules are triggered is moving towards ML, not true AI. But if we could give

the system the data and ask it to find us what looked truly anomalous, that

would be AI. Organisations might get tens of thousands of security logs at

any point in time. Firstly, how do you know if these logs show malicious

activity and if so, what is the recommended course of action?
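
The distinction drawn above, between being alerted when a rule fires and asking the system what looks anomalous, can be illustrated with a small sketch. Everything in it is an assumption made for illustration: the log counts, the fixed threshold of 100 and the function names are hypothetical, and the z-score is a deliberately simple stand-in statistic rather than a description of how any vendor's product works.

```python
from statistics import mean, stdev

# Hypothetical hourly counts of failed logins per host (illustrative data only).
FAILED_LOGINS = {
    "web-01": [3, 4, 2, 5, 3, 4, 3, 40],
    "web-02": [90, 95, 88, 92, 91, 94, 89, 93],
    "db-01": [0, 1, 0, 0, 1, 0, 0, 1],
}

RULE_THRESHOLD = 100  # assumed static rule: alert if the latest hour exceeds this


def rule_based_alerts(counts_by_host, threshold=RULE_THRESHOLD):
    """Return hosts whose latest count breaks a fixed, hand-written rule."""
    return [host for host, counts in counts_by_host.items() if counts[-1] > threshold]


def anomaly_alerts(counts_by_host, z_cutoff=3.0):
    """Return hosts whose latest count deviates strongly from their own history."""
    flagged = []
    for host, counts in counts_by_host.items():
        history, latest = counts[:-1], counts[-1]
        spread = stdev(history)
        if spread == 0:
            # Flat history: any change at all counts as a deviation.
            if latest != history[0]:
                flagged.append(host)
            continue
        z_score = (latest - mean(history)) / spread
        if abs(z_score) > z_cutoff:
            flagged.append(host)
    return flagged


if __name__ == "__main__":
    print("rule-based:", rule_based_alerts(FAILED_LOGINS))
    print("anomalous: ", anomaly_alerts(FAILED_LOGINS))
```

With this sample data the fixed rule stays silent, because no host exceeds 100, while the per-host baseline flags `web-01`, whose jump from single digits to 40 is unusual for that host even though it is far below the busier `web-02`. Neither approach answers the second question in the excerpt, namely what action to recommend once something is flagged.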

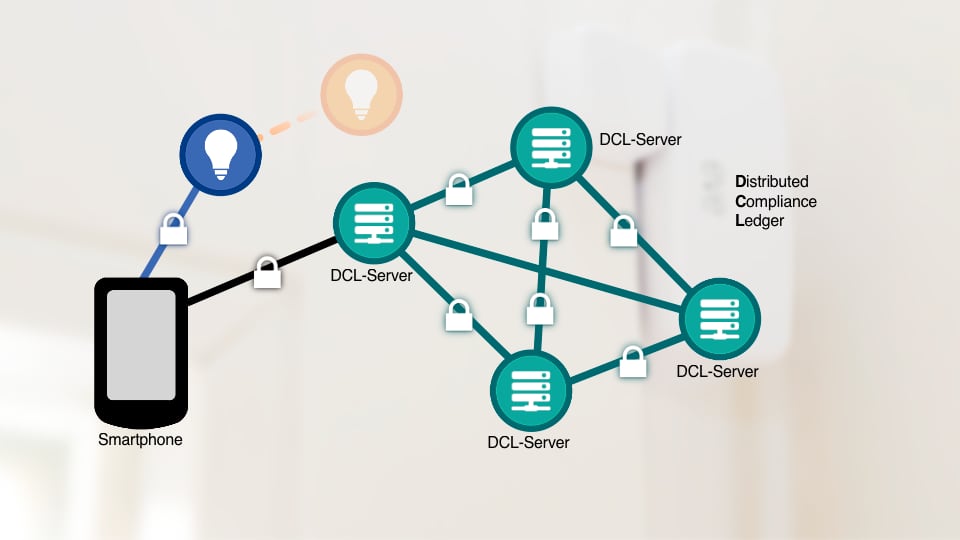

Moody’s highlights DLT cyber risks for digital bonds

The body of the paper warns of the cyber risks of smaller public

blockchains, which are less decentralized and hence more vulnerable to

attacks. It considers private DLTs more secure than similarly (small)

sized public blockchains because they have greater access controls. Moody’s

acknowledges that larger Layer 1 public blockchains such as Ethereum are far

harder to attack, but upgrades to the network carry risks. A major challenge

is the safeguarding of private keys. In reality the most significant risks

relate to the platforms themselves, bugs in smart contracts and oracles

which introduce external data. It notes that currently many solutions don’t

have cash on ledger, which reduces the attack surface. In reality this makes

them less attractive to attack. As cash on ledger becomes more widespread,

this enables greater automation. Manipulating smart contract weaknesses

could result in unintended payouts and other vulnerabilities. Moody’s

specifically mentions the risks associated with third party issuance

platforms such as HSBC Orion, DBS, and Goldman Sachs’ GS DAP.

Cyber Resilience Act: EU Regulators Must Strike the Right Balance to Avoid Open Source Chilling Effect

The good news is that developers are willing to work with regulators in

fine-tuning the act. And why not get them involved? They know the industry,

have deep insights into prevailing processes and fully grasp the

intricacies of open source. Additionally, open source is too lucrative and

important to ignore. One suggestion is to clarify the wording. For example,

replace “commercial activity” with “paid or monetized product.” This will go

some way to narrowing the act’s scope and ensuring that open-source projects

are not unnecessarily targeted. Another is differentiating between

market-ready software products and stand-alone components, ensuring that

requirements and obligations are appropriately tailored. Meanwhile,

regulators can provide funding in the legislation to actively support open

source. For example, Germany grants resources to support developers in

maintaining open-source software projects of strategic importance. A similar

sovereign tech fund could prove instrumental in supporting and protecting

the industry across the continent.

Organizational Resilience And Operating At The Speed Of AI

The challenge becomes—particularly for mid-market organizations that may not

have the resources of their larger competitors—how to corral resources to

ensure they can effectively incorporate AI. If businesses are to achieve the

kind of organizational resilience that is necessary to build sustainable

enterprises, they must accept that AI and automation will fundamentally

change company structures, culture and operations. Much of this will require

investment in “intangible goods, such as business processes and new skills,”

as suggested in the Brookings Institution article, but I would like to add one

additional imperative: data gravity. ... To operate at the speed of AI,

systems must be able to access all the information within an organization’s

disparate IT infrastructure. That data must be secure, have integrity and be

without bias. AI requires data agility. Therefore, organizations should

employ a data gravity strategy whereby all the data within an organization

is consolidated into a central hub, creating a single view of all the

information.

As Ransomware Monetization Hits Record Low, Groups Innovate

With ransomware profits in decline, groups have been exploring fresh

strategies to drive them back up. While groups such as Clop have shifted

tactics away from ransomware to data theft and extortion, other groups have

been targeting larger victims, seeking bigger payouts. Some affiliates have

been switching ransomware-as-a-service provider allegiance, with many Dharma

and Phobos business partners adopting a new service named 8Base, Coveware

says. Numerous criminal groups continue to wield crypto-locking malware. The

largest number of successful attacks it saw during the second quarter involved

either BlackCat or Black Basta ransomware, followed by Royal, LockBit 3.0,

Akira, Silent Ransom and Cactus. One downside of crypto-locking malware is

that attacks designed to take down the largest possible victims, in pursuit

of the biggest potential ransom payment, typically demand substantial manual

effort, including hands-on-keyboard time. Groups may also need to purchase

stolen credentials for the target from an initial access broker, pay

penetration testing experts or share proceeds with other affiliates.

How Indian organisations are keeping pace with cyber security

Jonas Walker, director of threat intelligence at Fortinet, said the

digitisation of retail and the rise of e-commerce make those sectors

susceptible to payment card data breaches, supply chain attacks and attacks

targeting customer information. “Educational institutions also hold a wealth

of personal information, including student and faculty data, making them

attractive targets for data breaches and identity theft,” he added. But

enterprises in India are not about to let the bad actors get their way.

Sakra World Hospital, for example, has segmented its networks and

implemented role-based access, endpoint detection and response, as well as

zero-trust capabilities for its internal network. It also conducts

vulnerability assessments and penetration tests to secure its external

assets. “Zero-trust should be implemented on your external security

appliances as well,” he added. “The notification system should be strong and

prompt so that action can be taken immediately to mitigate any cyber

security risk.”

How Can Blockchain Lead to a Worldwide Economic Boom?

The inherent trustworthiness of distributed ledgers is a key factor here in

that they greatly enhance critical economic drivers like supply chain

management, land ownership, and the distribution of government and

non-government services. At the same time, blockchain’s support of digital

currencies provides greater access to capital, in large part by

side-stepping the regulatory frameworks that govern sovereign currencies.

And perhaps most importantly, blockchain helps to stymie public corruption

and the diversion of funds away from their intended purpose, which allows

capital and profits to reach those who have earned them and will put them to

more productive uses. None of this should imply that blockchain will put the

entire world on easy street. Significant challenges remain, not the least

of which is the cost to establish the necessary infrastructure to support

secure digital ledgers. Multiple hardened data centers are required to

prevent hacking, along with high-speed networks to connect them.

Quote for the day:

"Leadership is a privilege to better the lives of others. It is not an

opportunity to satisfy personal greed." -- Mwai Kibaki