Central Bank Digital Currency (CBDC) and blockchain enable the future of payments

CBDC has the potential to transform the future of payments. It can be used to

create programmable money that can be spent only on specific things. For

example, a government could issue a stimulus package that can only be spent on

certain goods and services. This would ensure that the money is spent in the

intended manner and would reduce the risk of fraud. Also, CBDC can improve

financial inclusion. According to the World Bank, around 1.7 billion people do

not have access to basic financial services. CBDC can solve this problem by

providing a digital currency that anyone with a smartphone can use, without the

need for a bank account. When a CBDC holder uses their phone as a medium for

transactions, it becomes crucial to establish a strong link between their

digital identity and the device they are using. This link is essential to ensure

that the right party is involved in the transaction, mitigating the risk of

fraud and promoting trust in the digital financial ecosystem. That said, CBDC

and the digital identity can work together to improve financial inclusion.
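
The programmable-money idea described above can be illustrated with a minimal sketch. It assumes a hypothetical `RestrictedWallet` whose funds may only be spent in an allow-list of categories. The class, the category names, and the amounts are illustrative assumptions and do not describe any real CBDC design.

```python
from dataclasses import dataclass, field


@dataclass
class RestrictedWallet:
    """Hypothetical wallet whose funds can only be spent in allowed categories."""
    balance: int                        # smallest currency unit, e.g. cents
    allowed_categories: frozenset       # illustrative category labels
    history: list = field(default_factory=list)

    def spend(self, category: str, amount: int) -> bool:
        """Debit the wallet and return True only if the purchase is permitted."""
        if amount <= 0 or amount > self.balance:
            return False                # invalid amount or insufficient funds
        if category not in self.allowed_categories:
            return False                # spending rule rejects this category
        self.balance -= amount
        self.history.append((category, amount))
        return True


# Illustrative stimulus payment that may only be spent on two categories.
stimulus = RestrictedWallet(
    balance=50_000,
    allowed_categories=frozenset({"groceries", "utilities"}),
)
print(stimulus.spend("groceries", 12_000))    # permitted category
print(stimulus.spend("electronics", 5_000))   # rejected by the spending rule
```

In a real system the rule check would sit in the issuing infrastructure rather than in client code, and would need to be tied to the verified identity of the holder.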

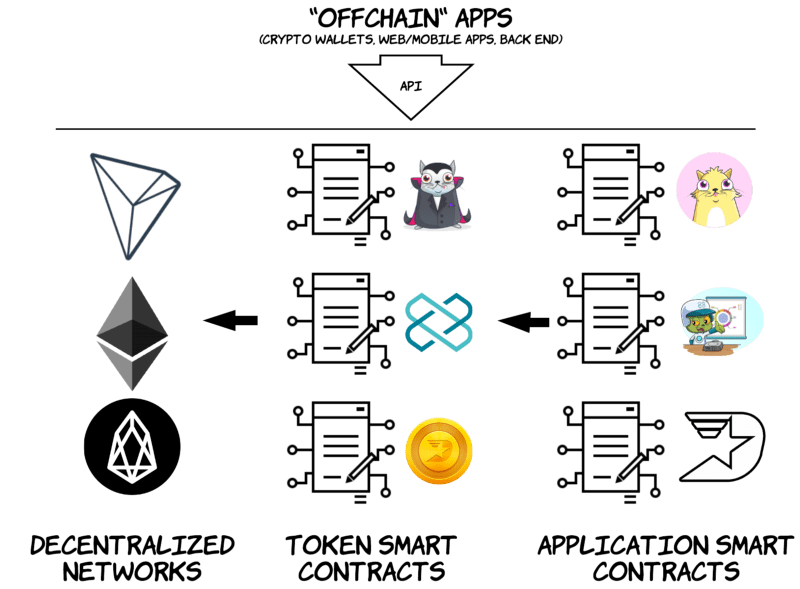

A statistical examination of utilization trends in decentralized applications

Decentralized applications (dApps) have proliferated in recent years, but their

long-term viability is a topic of debate. For dApps to be

sustainable and suitable for integration into larger service networks, they

need to attract users and promise reliable availability. Therefore, assessing

their longevity is crucial. Analyzing the utilization trajectory of a service

is, however, challenging due to several factors, such as demand spikes, noise,

autocorrelation, and non-stationarity. In this study, we employ robust

statistical techniques to identify trends in currently popular dApps. Our

findings demonstrate that a significant proportion of dApps, across a range of

categories, exhibit statistically significant positive overall trends,

indicating that success in decentralized computing can be sustainable and

transcends specific fields. However, there is also a substantial number of

dApps showing negative trends, with a disproportionately high number from the

decentralized finance (DeFi) category.
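
The excerpt does not name the statistical techniques used. As one illustration of a robust, non-parametric trend test, the sketch below implements the Mann-Kendall test together with Sen's slope estimator. It is not the study's method, it omits tie correction, and it does not adjust for autocorrelation, which would require an extra step such as pre-whitening. The sample usage series is made up.

```python
import math
import statistics


def mann_kendall(series):
    """Return (S, z, p) for the two-sided Mann-Kendall trend test (no tie correction)."""
    n = len(series)
    if n < 3:
        raise ValueError("need at least 3 observations")
    # S = number of increasing pairs minus number of decreasing pairs (i < j).
    s = 0
    for i in range(n - 1):
        for j in range(i + 1, n):
            diff = series[j] - series[i]
            s += (diff > 0) - (diff < 0)
    var_s = n * (n - 1) * (2 * n + 5) / 18.0
    # Continuity-corrected normal approximation.
    if s > 0:
        z = (s - 1) / math.sqrt(var_s)
    elif s < 0:
        z = (s + 1) / math.sqrt(var_s)
    else:
        z = 0.0
    p = math.erfc(abs(z) / math.sqrt(2))  # two-sided p-value
    return s, z, p


def sen_slope(series):
    """Median of all pairwise slopes: a trend estimate that is robust to spikes."""
    n = len(series)
    slopes = [(series[j] - series[i]) / (j - i)
              for i in range(n - 1) for j in range(i + 1, n)]
    return statistics.median(slopes)


# Made-up daily active-user counts with one demand spike.
daily_users = [100, 104, 103, 110, 400, 115, 118, 121, 125, 130]
s, z, p = mann_kendall(daily_users)
print(f"S={s}, z={z:.2f}, p={p:.4f}, slope={sen_slope(daily_users):.2f}")
```

Because both statistics depend only on the ordering of values or on a median, a single spike shifts them far less than it would shift a least-squares fit.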

How SaaS Companies Can Monetize Generative AI

Rather than building these models from scratch, many companies elect to

leverage OpenAI’s APIs to call GPT-4 (or other models), and serve the response

back to customers. To obtain complete visibility into usage costs and margins,

each API call to and from OpenAI tech should be metered to understand the size

of the input and the corresponding backend costs, as well as the output,

processing time and other relevant performance metrics. By metering both the

customer-facing output and the corresponding backend actions, companies can

create a real-time view into business KPIs like margin and costs, as well as

technical KPIs like service performance and overall traffic. After creating

the meters, deploy them to the solution or application where events are

originating to begin tracking real-time usage. Once the metering

infrastructure is deployed, begin visualizing usage and costs in real time as

usage occurs and customers leverage the generative services. Identify power

users and lagging accounts and empower customer-facing teams with contextual

data to provide value at every touchpoint.
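
The metering steps above can be sketched as a thin wrapper around each model call. Everything here is an assumption for illustration: the `Meter` and `UsageEvent` names, the per-token prices, and the `call_model` callable, which stands in for a real API client and is expected to return the reply text together with input and output token counts.

```python
import time
from dataclasses import dataclass

# Hypothetical prices in USD per 1,000 tokens; real prices vary by model and over time.
BACKEND_COST_PER_1K_INPUT = 0.03
BACKEND_COST_PER_1K_OUTPUT = 0.06
CUSTOMER_PRICE_PER_1K_OUTPUT = 0.20


@dataclass
class UsageEvent:
    customer_id: str
    input_tokens: int
    output_tokens: int
    latency_s: float

    @property
    def backend_cost(self) -> float:
        return (self.input_tokens / 1000 * BACKEND_COST_PER_1K_INPUT
                + self.output_tokens / 1000 * BACKEND_COST_PER_1K_OUTPUT)

    @property
    def revenue(self) -> float:
        return self.output_tokens / 1000 * CUSTOMER_PRICE_PER_1K_OUTPUT


class Meter:
    """Records one usage event per model call and aggregates margin per customer."""

    def __init__(self):
        self.events = []

    def record(self, customer_id, call_model, prompt):
        # call_model is a hypothetical callable: prompt -> (reply, input_tokens, output_tokens)
        start = time.perf_counter()
        reply, input_tokens, output_tokens = call_model(prompt)
        latency = time.perf_counter() - start
        self.events.append(UsageEvent(customer_id, input_tokens, output_tokens, latency))
        return reply

    def margin_by_customer(self):
        margins = {}
        for event in self.events:
            margins[event.customer_id] = (margins.get(event.customer_id, 0.0)
                                          + event.revenue - event.backend_cost)
        return margins


def fake_model(prompt):
    """Stand-in for a real API call; returns fixed token counts."""
    return "ok", 1000, 500


meter = Meter()
meter.record("acme", fake_model, "Summarize this ticket")
print(meter.margin_by_customer())
```

Keeping cost and revenue on the same event is what lets margin be computed per customer, which in turn makes power users and lagging accounts easy to spot.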

“Auth” Demystified: Authentication vs Authorization

There are two technical approaches to modern authorization that are growing

ecosystems around them: policy-as-code and policy-as-data. They are similar in

that both approaches advocate decoupling authorization logic from the

application code. But they also have differences. In policy-as-code systems,

the authorization policy is written in a domain-specific language, and stored

and versioned in its own repository like any other code. OPA is one well-known

example of this approach. It is a CNCF graduated project that is mostly used

in infrastructure authorization use cases, such as k8s admission control. It

provides a great general-purpose decision engine to enforce authorization

logic, and a language called Rego to define that logic as policy. The

policy-as-data approach determines access based on relationships between users

and the underlying application data. Rather than rely on rules in a policy,

these systems use the relationships between subjects (users/groups) and

objects (resources) in the application.
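
To make the policy-as-data idea concrete, here is a minimal sketch that answers access questions purely from stored relationship tuples. The tuple layout, the `member` relation used for group expansion, and all user, group, and document names are illustrative assumptions, not the API of OPA or of any particular authorization product.

```python
# Relationship tuples: (subject, relation, object). All names are illustrative.
RELATIONSHIPS = {
    ("user:alice", "member", "group:engineering"),
    ("group:engineering", "viewer", "doc:design-spec"),
    ("user:bob", "owner", "doc:design-spec"),
}


def subjects_for(user, relationships):
    """Return the user plus every group reachable through 'member' edges."""
    seen = {user}
    frontier = [user]
    while frontier:
        current = frontier.pop()
        for subject, relation, obj in relationships:
            if subject == current and relation == "member" and obj not in seen:
                seen.add(obj)
                frontier.append(obj)
    return seen


def check(user, relation, obj, relationships=RELATIONSHIPS):
    """True if the user, or a group the user belongs to, has the relation on obj."""
    return any((subject, relation, obj) in relationships
               for subject in subjects_for(user, relationships))


print(check("user:alice", "viewer", "doc:design-spec"))  # granted via group membership
print(check("user:alice", "owner", "doc:design-spec"))   # no such relationship stored
```

Granting or revoking access here means adding or removing a tuple, not editing a rule, which is the practical difference from the policy-as-code approach.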

Redefining Software Resilience: The Era of Artificial Immune Systems

Artificial Immune Systems, inspired by the vertebrate immune system, provide

an innovative approach to designing self-healing software. By emulating the

biological immune system’s ability to adapt, learn, and remember, AIS can

empower software systems to detect, diagnose, and fix issues autonomously. AIS

offers a framework that enables the software to learn from each interaction,

adapt to system changes, and remember past faults and their resolutions. AIS

leads to a more robust, resilient system capable of tackling an array of

unpredictable errors and vulnerabilities. The vertebrate immune system

consists of innate immunity and adaptive immunity. Innate immunity protects us

against known pathogens. Innate immunity is always non-specific and general.

Present self-healing software models closely resemble innate immunity.

Adaptive immunity can learn from current threats and apply the knowledge to

handle future situations. At their core, these systems mimic the vertebrate

immune system’s differentiation of self and non-self entities.
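
The self versus non-self distinction can be illustrated with negative selection, a classic AIS algorithm. Random detectors are generated, and any detector that matches known-normal ("self") behavior is discarded, so the survivors can only fire on unfamiliar patterns. The sketch below assumes system behavior has already been encoded as fixed-length bit tuples. That encoding, the matching rule, and all parameter values are illustrative assumptions.

```python
import random


def matches(detector, sample, threshold):
    """True if detector and sample agree in at least `threshold` positions."""
    return sum(d == s for d, s in zip(detector, sample)) >= threshold


def train_detectors(self_set, n_detectors, length, threshold, seed=0):
    """Keep only random candidates that match no 'self' sample (negative selection)."""
    rng = random.Random(seed)
    detectors = []
    attempts = 0
    while len(detectors) < n_detectors and attempts < 100_000:
        attempts += 1
        candidate = tuple(rng.randint(0, 1) for _ in range(length))
        if not any(matches(candidate, s, threshold) for s in self_set):
            detectors.append(candidate)
    return detectors


def is_anomalous(sample, detectors, threshold):
    """A sample is flagged as non-self if any surviving detector matches it."""
    return any(matches(d, sample, threshold) for d in detectors)


# Hypothetical encoding: 8 binary health indicators, all zero when the system is normal.
normal_behavior = {(0,) * 8}
detectors = train_detectors(normal_behavior, n_detectors=10, length=8, threshold=6)
print(is_anomalous((0,) * 8, detectors, threshold=6))  # False: self is never flagged
```

By construction no detector matches a known-normal sample, so false alarms on the training set are impossible. Coverage of abnormal patterns depends on how many detectors are generated and on the threshold.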

Europe’s Business Software Startups Prove Resilient: Why?

So what are the factors underpinning the resilience of Europe’s business

software sector? One key element of the picture is demand from other tech

companies. “Europe’s tech ecosystem is maturing,” says Windsor. “And as the

sector matures, companies need tools. Those tools are being supplied by

business software companies.” And of course, there is demand from companies

outside the tech sector. From banking and financial services to manufacturing,

digital transformation is continuing across the economy as a whole, creating

opportunities for new B2B software providers. But how do European companies

take advantage of those opportunities in a market that has been dominated by

North American rivals? This isn’t captured in the data, but Windsor sees a

home market-first approach, widening out to include new countries and

territories as businesses grow. “Anecdotally, companies start by selling to

their domestic market, then they look at the continent. After that, they

expand to other regions.” There is, Windsor adds, a preference for the Asia

Pacific. The U.S., on the other hand, remains a difficult market.

Open RAN Testing Expands in the US Amid 5G Slowdown

To be clear, open RAN technology in the US has a number of backers. Dish

Network is perhaps the most vocal, having built an open RAN-based 5G network

across 70% of the US population. Further, other operators have hinted at their

own initial open RAN aspirations, including AT&T and Verizon.

Interestingly, the US government has also emerged as a leading proponent for

open RAN. For example, the US military continues to fund open RAN tests and

deployments. And the Biden administration's NTIA is doling out millions of

dollars in the pursuit of open RAN momentum. Broadly, US officials hope to use

open RAN technologies to encourage the production of 5G equipment domestically

and among US allies, as a lever against China. But open RAN continues to face

struggles. For example, US-based open RAN vendors like Airspan and Parallel

Wireless have hit hurdles recently. And research and consulting firm Dell'Oro

recently reported that open RAN revenue growth slowed to the 10 to 20% range

in the first quarter, after more than doubling in 2022.

Low-Code and AI: Friends or Foes?

Although it appears likely that AI will replace low-code, there are actually

many opportunities for symbiosis between the two concepts. Rather than

eradicate low-code platforms entirely, LLMs will likely become more embedded

within them. We’ve already seen this occur as low-code providers like Mendix

and OutSystems integrated ChatGPT connectors. Microsoft has also embedded

ChatGPT into its Power Platform as well as integrated GPT-driven Copilots into

various developer environments. “Low-code and AI on their own are powerful

tools to increase enterprise efficiency and productivity,” said Dinesh

Varadharajan, the chief product officer at Kissflow. “But there is potential

for the combination of both to unlock game-changing automation for almost

every industry. The power comes from the congruence between low-code/no-code

and AI.” There is also the opportunity to train bespoke LLMs on the inner

workings of specific software development platforms, which could generate

fully-built templates upon natural language prompts.

Cloud cost optimization should begin by measuring the drivers of cloud spend

at a granular level and then providing full visibility to the teams and

organizations that are behind the spend, says Tim Potter, principal,

technology strategy and cloud engineering with Deloitte Consulting.

“Near-real-time dashboards showing cloud resource utilization, routine reports

of cloud consumption, and predictive spend reports will provide application

teams and business units with the data needed to take action to optimize cloud

costs,” he notes. ... Rearchitecting applications is a frequently overlooked

way to achieve the cost and other benefits of transitioning to a cloud model.

“Organizations also need to understand the various discount models and select

one that optimizes costs yet also provides flexibility and predictability into

spending,” says Mindy Cancila, vice president of corporate strategy for Dell

Technologies. Cancila adds that organizations should not only consider current

workload costs, but also how to manage costs for workloads as they scale over

time.

Warning: Attackers Abusing Legitimate Internet Services

Cloud storage platforms, and Google Cloud in particular, are the most

exploited, followed by messaging services - most often Telegram, including via

its API - as well as email services and social media, the researchers found.

Examples of other services being abused by attackers include OneDrive,

Discord, Gmail SMTP, Mastodon profiles, GitHub, bitcoin blockchain data, the

project management tool Notion, malware analysis site VirusTotal, YouTube

comments and even Rotten Tomatoes movie review site profiles. "It is important

to note that ransomware campaigns use legitimate cloud storage tools such as

mega.io or MegaSync for exfiltration purposes as well," although the

crypto-locking malware itself may not be coded to work directly with

legitimate tools, the report says. Criminals' choice of service depends on

desired functionality. Anyone using an info stealer such as Vidar needs a

place to store large amounts of exfiltrated data. The researchers said cloud

services' easy setup for less technically sophisticated users makes them a

natural fit for such use cases.

Quote for the day:

"We're all passionate about something,

the secret is to figure out what it is, then pursue it with all our hearts"

-- Gordon Tredgold