Quote for the day:

"Success is not the absence of failure; it's the persistence through failure." -- Aisha Tyle

How cybersecurity leaders can defend against the spur of AI-driven NHI

Many companies don’t have lifecycle management for all their machine identities,

and security teams may be reluctant to shut down old accounts because doing so

might break critical business processes. ... Access-management systems that

provide one-time-use credentials to be used exactly when they are needed are

cumbersome to set up. And some systems come with default logins like “admin”

that are never changed. ... AI agents are the next step in the evolution of

generative AI. Unlike chatbots, which only work with company data when provided

by a user or an augmented prompt, agents are typically more autonomous, and can

go out and find needed information on their own. This means that they need

access to enterprise systems, at a level that would allow them to carry out all

their assigned tasks. “The thing I’m worried about first is misconfiguration,”

says Yageo’s Taylor. If an AI agent’s permissions are set incorrectly “it opens

up the door to a lot of bad things to happen.” Because of their ability to plan,

reason, act, and learn, AI agents can exhibit unpredictable and emergent

behaviors. An AI agent that’s been instructed to accomplish a particular goal

might find a way to do it in an unanticipated way, and with unanticipated

consequences. This risk is magnified even further with agentic AI systems that

use multiple AI agents working together to complete bigger tasks, or even

automate entire business processes.
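
To make the idea of one-time-use, just-in-time credentials concrete, here is a minimal in-memory sketch. It is an illustration only, not any vendor's access-management product: the class `OneTimeCredentialBroker`, its method names, the 60-second lifetime, and the scope strings are all assumptions made for this example.

```python
import secrets
import time


class OneTimeCredentialBroker:
    """Hypothetical broker that issues short-lived, single-use tokens."""

    def __init__(self, ttl_seconds=60.0, clock=time.monotonic):
        self._ttl = ttl_seconds      # assumed lifetime of each token
        self._clock = clock          # injectable clock, useful for testing
        self._pending = {}           # token -> (identity, scope, expires_at)

    def issue(self, identity, scope):
        """Create a token bound to one machine identity and one scope."""
        token = secrets.token_urlsafe(32)
        self._pending[token] = (identity, scope, self._clock() + self._ttl)
        return token

    def redeem(self, token, requested_scope):
        """Return True only for an unused, unexpired token with a matching scope."""
        # pop() removes the token whether or not the checks pass,
        # so it can never be presented a second time.
        entry = self._pending.pop(token, None)
        if entry is None:
            return False
        _identity, scope, expires_at = entry
        return self._clock() <= expires_at and requested_scope == scope


broker = OneTimeCredentialBroker(ttl_seconds=60.0)
token = broker.issue("agent-1", "read:inventory")
first_use = broker.redeem(token, "read:inventory")    # accepted
second_use = broker.redeem(token, "read:inventory")   # rejected: already used
```

Because each token is tied to a single scope and disappears on first use, a misconfigured or compromised agent cannot keep reusing it, which is the property the excerpt describes as valuable but cumbersome to set up in practice.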

The silent backbone of 5G & beyond: How network APIs are powering the future of connectivity

Network APIs are fueling a transformation by making telecom networks

programmable and monetisable platforms that accelerate innovation, improve

customer experiences, and open new revenue streams. ... Contextual

intelligence is what makes these new-generation APIs so attractive. Your needs

change significantly depending on whether you’re playing a cloud game,

streaming a match, or participating in a remote meeting. Programmable networks

can now detect these needs and adjust dynamically. Take the example of a user

streaming a football match. With network APIs, a telecom operator can offer

temporary bandwidth boosts just for the game’s duration. Once it ends, the

network automatically reverts to the user’s standard plan—no friction, no

intervention. ... Programmable networks are expected to have the greatest

impact in Industry 4.0, which goes beyond consumer applications. ... 5G

combined with IoT and network APIs enables industrial systems to become truly

connected and intelligent. Remote monitoring of manufacturing equipment allows

for real-time maintenance schedule adjustments based on machine behavior. Over

a programmable, secure network, an API-triggered alert can coordinate a remote

diagnostic session and even start remedial actions if a fault is found.

Network APIs are fueling a transformation by making telecom networks

programmable and monetisable platforms that accelerate innovation, improve

customer experiences, and open new revenue streams. ... Contextual

intelligence is what makes these new-generation APIs so attractive. Your needs

change significantly depending on whether you’re playing a cloud game,

streaming a match, or participating in a remote meeting. Programmable networks

can now detect these needs and adjust dynamically. Take the example of a user

streaming a football match. With network APIs, a telecom operator can offer

temporary bandwidth boosts just for the game’s duration. Once it ends, the

network automatically reverts to the user’s standard plan—no friction, no

intervention. ... Programmable networks are expected to have the greatest

impact in Industry 4.0, which goes beyond consumer applications. ... 5G

combined IOT and with network APIs enables industrial systems to become truly

connected and intelligent. Remote monitoring of manufacturing equipment allows

for real-time maintenance schedule adjustments based on machine behavior. Over

a programmable, secure network, an API-triggered alert can coordinate a remote

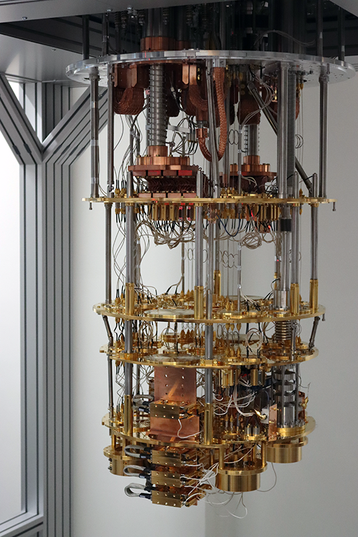

diagnostic session and even start remedial actions if a fault is found.Quantum Computers Just Reached the Holy Grail – No Assumptions, No Limits

A breakthrough led by Daniel Lidar, a professor of engineering at USC and an

expert in quantum error correction, has pushed quantum computing past a key

milestone. Working with researchers from USC and Johns Hopkins, Lidar’s team

demonstrated a powerful exponential speedup using two of IBM’s 127-qubit Eagle

quantum processors — all operated remotely through the cloud. Their results were

published in the prestigious journal Physical Review X. “There have previously

been demonstrations of more modest types of speedups like a polynomial speedup,”

says Lidar, who is also the cofounder of Quantum Elements, Inc. “But an

exponential speedup is the most dramatic type of speed up that we expect to see

from quantum computers.” ... What makes a speedup “unconditional,” Lidar

explains, is that it doesn’t rely on any unproven assumptions. Prior speedup

claims required the assumption that there is no better classical algorithm

against which to benchmark the quantum algorithm. Here, the team led by Lidar

used an algorithm they modified for the quantum computer to solve a variation of

“Simon’s problem,” an early example of quantum algorithms that can, in theory,

solve a task exponentially faster than any classical counterpart,

unconditionally.
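
For readers unfamiliar with Simon's problem, the sketch below shows the standard textbook formulation and a classical brute-force solver. It is not the modified variant the team ran on IBM hardware. The function names, the 4-bit size, and the secret `0b1011` are assumptions chosen for illustration.

```python
def make_simon_oracle(secret):
    """Build f with f(x) == f(x ^ secret); f is two-to-one for a nonzero secret."""
    def f(x):
        # x and x ^ secret form a pair; map both to the smaller member.
        return min(x, x ^ secret)
    return f


def find_secret_classically(f, n_bits):
    """Query f on 0, 1, 2, ... until two inputs collide; their XOR is the secret."""
    seen = {}  # output value -> input that produced it
    for queries, x in enumerate(range(2 ** n_bits), start=1):
        y = f(x)
        if y in seen:
            return seen[y] ^ x, queries
        seen[y] = x
    return 0, 2 ** n_bits  # no collision: f is one-to-one, secret is zero


oracle = make_simon_oracle(0b1011)
recovered, queries_used = find_secret_classically(oracle, n_bits=4)
```

This sequential scan can need up to 2^(n-1) + 1 queries, and even randomized classical strategies need a number of queries that grows exponentially with n. Simon's quantum algorithm needs only a number of queries linear in n, which is the gap the excerpt refers to.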

4 things that make an AI strategy work in the short and long term

Most AI gains came from embedding tools like Microsoft Copilot, GitHub Copilot,

and OpenAI APIs into existing workflows. Aviad Almagor, VP of technology

innovation at tech company Trimble, also notes that more than 90% of Trimble

engineers use GitHub Copilot. The ROI, he says, is evident in shorter

development cycles, and reduced friction in HR and customer service. Moreover,

Trimble has introduced AI into their transportation management system, where AI

agents optimize freight procurement by dynamically matching shippers and

carriers. ... While analysts often lament the difficulty of showing short-term

ROI for AI projects, these four organizations disagree — at least in part. Their

secret: flexible thinking and diverse metrics. They view ROI not only as dollars

saved or earned, but also as time saved, satisfaction increased, and strategic

flexibility gained. London says that Upwave listens for customer signals like

positive feedback, contract renewals, and increased engagement with AI-generated

content. Given the low cost of implementing prebuilt AI models, even modest wins

yield high returns. For example, if a customer cites an AI-generated feature as

a reason to renew or expand their contract, that’s taken as a strong ROI

indicator. Trimble uses lifecycle metrics in engineering and operations. For

instance, one customer used Trimble AI tools to reduce the time it took to

perform a tunnel safety analysis from 30 minutes to just three.

How IT Leaders Can Rise to a CIO or Other C-level Position

For any IT professional who aspires to become a CIO, the key is to start

thinking like a business leader, not just a technologist, says Antony Marceles,

a technology consultant and founder of software staffing firm Pumex. "This means

taking every opportunity to understand the why behind the technology, how it

impacts revenue, operations, and customer experience," he explained in an email.

The most successful tech leaders aren't necessarily great technical experts, but

they possess the ability to translate tech speak into business strategy,

Marceles says, adding that "Volunteering for cross-functional projects and

asking to sit in on executive discussions can give you that perspective." ...

CIOs rarely have solo success stories; they're built up by the teams around

them, Marceles says. "Colleagues can support a future CIO by giving honest

feedback, nominating them for opportunities, and looping them into strategic

conversations." Networking also plays a pivotal role in career advancement, not

just for exposure, but for learning how other organizations approach IT

leadership, he adds. Don't underestimate the power of having an executive

sponsor, someone who can speak to your capabilities when you’re not there to

speak for yourself, Eidem says. "The combination of delivering value and having

someone champion that value -- that's what creates real upward momentum."

SLMs vs. LLMs: Efficiency and adaptability take centre stage

Small language models (SLMs) are becoming central to Agentic AI systems due to their inherent efficiency and adaptability. Agentic AI systems typically involve multiple autonomous agents that collaborate on complex, multi-step tasks and interact with environments. Fine-tuning methods like Reinforcement Learning (RL) effectively imbue SLMs with task-specific knowledge and external tool-use capabilities, which are crucial for agentic operations. This enables SLMs to be efficiently deployed for real-time interactions and adaptive workflow automation, overcoming the prohibitive costs and latency often associated with larger models in agentic contexts. ... Operating entirely on-premises ensures that decisions are made instantly at the data source, eliminating network delays and safeguarding sensitive information. This enables timely interpretation of equipment alerts, detection of inventory issues, and real-time workflow adjustments, supporting faster and more secure enterprise operations. SLMs also enable real-time reasoning and decision-making through advanced fine-tuning, especially Reinforcement Learning. RL allows SLMs to learn from verifiable rewards, teaching them to reason through complex problems, choose optimal paths, and effectively use external tools.

Quantum’s quandary: racing toward reality or stuck in hyperbole?

One important reason is for researchers to demonstrate their advances and show

that they are adding value. Quantum computing research requires significant

expenditure, and the return on investment will be substantial if a quantum

computer can solve problems previously deemed unsolvable. However, this return

is not assured, nor is the timeframe for when a useful quantum computer might be

achievable. To continue to receive funding and backing for what ultimately is a

gamble, researchers need to show progress — to their bosses, investors, and

stakeholders. ... As soon as such announcements are made, scientists and

researchers scrutinize them for weaknesses and hyperbole. The benchmarks used

for these tests are subject to immense debate, with many critics arguing that

the computations are not practical problems or that success in one problem does

not imply broader applicability. In Microsoft’s case, a lack of peer-reviewed

data means there is uncertainty about whether the Majorana particle even exists

beyond theory. The scientific method encourages debate and repetition, with the

aim of reaching a consensus on what is true. However, in quantum computing,

marketing hype and the need to demonstrate advancement take priority over the

verification of claims, making it difficult to place these announcements in the

context of the bigger picture.

Ethical AI for Product Owners and Product Managers

Sharded vs. Distributed: The Math Behind Resilience and High Availability

In probability theory, independent events are events whose outcomes do not

affect each other. For example, when throwing four dice, the number displayed on

each die is independent of the other three dice. Similarly, the availability of

each server in a six-node application-sharded cluster is independent of the

others. This means that each server has an individual probability of being

available or unavailable, and the failure of one server is not affected by the

failure or otherwise of other servers in the cluster. In reality, there may be

shared resources or shared infrastructure that links the availability of one

server to another. In mathematical terms, this means that the events are

dependent. However, we consider the probability of these types of failures to be

low, and therefore, we do not take them into account in this analysis. ...

Traditional architectures are limited by single-node failure risk.

Application-level sharding compounds this problem because if any node goes down,

its shard, and therefore the total system, becomes unavailable. In contrast,

distributed databases with quorum-based consensus (like YugabyteDB) provide

fault tolerance and scalability, enabling higher resilience and improved

availability.
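
The independence assumption makes the arithmetic easy to sketch. The example below is a simplified model, not the article's full analysis: it assumes every node is up with the same probability `p` (0.99 is an arbitrary illustrative value), treats the sharded system as available only when all six nodes are up, and models the quorum case as a single group of three replicas that stays available while a majority is up. The function names are hypothetical.

```python
from math import comb


def sharded_availability(p, nodes):
    """All nodes must be up at once: multiply the independent probabilities."""
    return p ** nodes


def quorum_availability(p, replicas):
    """A majority of replicas must be up: sum the binomial terms for 0..f failures."""
    tolerated = (replicas - 1) // 2  # failures a majority quorum can absorb
    return sum(
        comb(replicas, failed) * (1 - p) ** failed * p ** (replicas - failed)
        for failed in range(tolerated + 1)
    )


p = 0.99  # assumed per-node availability
sharded = sharded_availability(p, nodes=6)
quorum = quorum_availability(p, replicas=3)
```

Under these assumptions the six-node sharded cluster is available about 94.1% of the time, while the three-replica quorum group is available about 99.97% of the time, because it survives any single node failure.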
