Quote for the day:

"Little minds are tamed and subdued by misfortune; but great minds rise above it." -- Washington Irving

The Architect Reborn

In "The Architect Reborn," Paul Preiss argues that the technology architecture profession is experiencing a significant resurgence after fifteen years of structural decline. He explains that the rise of Agile methodologies and the "three-in-a-box" delivery model (product owners, tech leads, and scrum masters) mistakenly cast the architect role as a redundant expense or a "tax" on speed. This industry shift led many senior developers to pivot toward "engineering" titles while neglecting essential cross-cutting concerns, resulting in massive technical debt and systemic instabilities, exemplified by high-profile failures like the 2024 CrowdStrike outage. However, the current explosion of AI-generated code has created a critical need for human oversight that automated tools cannot replicate. Organizations are rediscovering that they require skilled architects to manage complex quality attributes, such as security, reliability, and maintainability, and to bridge the gap between business strategy and technical execution. By leveraging the five pillars of the Business Technology Architecture Body of Knowledge (BTABoK), the reborn architect ensures that systems are designed with long-term viability and strategic purpose in mind. Ultimately, Preiss suggests that as AI disrupts traditional coding roles, the architect’s unique ability to provide business context and disciplined design is becoming the most vital asset in the modern technology landscape.

Supply-chain attacks take aim at your AI coding agents

The emergence of autonomous AI coding agents has introduced a sophisticated new frontier in software supply chain security, as evidenced by recent attacks targeting these systems. Security researchers from ReversingLabs have identified a campaign dubbed "PromptMink," attributed to the North Korean threat group "Famous Chollima." Unlike traditional social engineering that targets human developers, these adversaries use "LLM Optimization" (LLMO) and "knowledge injection" to manipulate AI agents. By crafting persuasive documentation and bait packages on registries like npm and PyPI, attackers increase the likelihood that an agent will autonomously select and integrate malicious dependencies into its projects. This threat is further exacerbated by "slopsquatting," where attackers register package names that AI agents frequently hallucinate. Once installed, these malicious components can grant attackers remote access through SSH keys or facilitate the exfiltration of sensitive codebases. Because AI agents often operate with high-level system privileges, the risk of rapid, automated compromise is significant. To mitigate these vulnerabilities, organizations must implement rigorous security controls, including mandatory developer reviews for all AI-suggested dependencies and the adoption of comprehensive Software Bill of Materials (SBOM) practices. Ultimately, while AI agents offer productivity gains, their integration into development pipelines requires a "trust but verify" approach to prevent large-scale supply chain poisoning.
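
The "mandatory developer review" control described above could be approximated by a simple gate that holds back any AI-suggested dependency not already on a reviewed list. The following is a minimal sketch, not something taken from the ReversingLabs research: the allowlist contents, the `gate_dependencies` function, and the `ReviewResult` type are all hypothetical names chosen for illustration, and a real setup would more likely load the approved set from an SBOM or a lockfile.

```python
from dataclasses import dataclass

# Hypothetical allowlist of packages a human has already reviewed.
# In practice this would come from an SBOM or a team-maintained file.
APPROVED_PACKAGES = frozenset({"requests", "numpy", "flask"})


@dataclass
class ReviewResult:
    approved: list       # names that match the reviewed allowlist
    needs_review: list   # names a developer must inspect before install


def normalize(name: str) -> str:
    """Lower-case and unify separators so 'Foo_Bar' and 'foo-bar' compare equal."""
    return name.strip().lower().replace("_", "-").replace(".", "-")


def gate_dependencies(suggested, approved=APPROVED_PACKAGES) -> ReviewResult:
    """Split agent-suggested package names into approved and needs-review."""
    approved_norm = {normalize(p) for p in approved}
    ok, flagged = [], []
    for name in suggested:
        if normalize(name) in approved_norm:
            ok.append(name)
        else:
            flagged.append(name)
    return ReviewResult(approved=ok, needs_review=flagged)


if __name__ == "__main__":
    # 'reqeusts-toolkit' stands in for a hallucinated or look-alike name.
    result = gate_dependencies(["Requests", "reqeusts-toolkit", "numpy"])
    print("approved:", result.approved)
    print("needs review:", result.needs_review)
```

A gate like this only enforces the "verify" half of "trust but verify": it does not judge whether a flagged package is malicious, it just ensures a person looks at it before an agent installs it.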

Why disaster recovery plans fail in geopolitical crises

In "Why Disaster Recovery Plans Fail in Geopolitical Crises," Lisa Morgan explains that traditional disaster recovery (DR) strategies are increasingly inadequate against the cascading disruptions of modern warfare and global instability. Historically, DR plans have relied on "known knowns" like localized hardware failures or natural disasters, but the blurring line between private enterprise and nation-state conflict has introduced unprecedented risks. Recent drone strikes on data centers in the Middle East demonstrate that physical infrastructure is no longer immune to military action. Furthermore, the rise of "techno-nationalism" and strict data sovereignty laws significantly complicates geographic failover, as moving data across borders can now lead to legal and regulatory violations. Modern resilience requires CIOs to shift from static IT playbooks to cross-functional business capabilities involving legal, risk, and compliance teams. The article also highlights how AI-driven resource constraints, particularly in energy and silicon, exacerbate these vulnerabilities. It is critical that organizations move beyond simple redundancy toward adaptive architectures that can withstand simultaneous infrastructure failures and prioritize employee safety in conflict zones. Ultimately, today’s CIOs must adopt the mindset of military strategists, conducting robust tabletop exercises that challenge existing assumptions and prepare for the total, non-linear disruptions characteristic of the current geopolitical climate.

The immutable mountain: Understanding distributed ledgers through the lens of alpine climbing

The article "The Immutable Mountain" uses the high-stakes environment of alpine climbing on Ecuador’s Cayambe volcano to explain the sophisticated mechanics of distributed ledgers. Moving away from traditional centralized command-and-control structures, which often represent single points of failure, the author illustrates how expedition rope teams function as autonomous nodes. Each team possesses the authority to make critical, real-time decisions, mirroring the decentralized nature of blockchain technology. This structure ensures that information is not merely passed down a hierarchy but is synchronized across a collective network, fostering operational resilience and organizational agility. Key technical concepts like consensus are framed through the lens of climbers reaching a shared agreement on route safety, while immutability is compared to the permanent, unalterable nature of a daily trip report. By adopting this "composable authoritative source," modern enterprises can achieve radical transparency and maintain a singular, verifiable version of the truth across disparate departments and external partners. Ultimately, the piece argues that the true power of a distributed ledger lies not in its complex code, but in a foundational philosophy of collective trust. This paradigm shift allows organizations to navigate volatile global markets with the same discipline and absolute reliability required to survive the "death zone" of a mountain summit.
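
The trip-report analogy for immutability can be made concrete with a hash chain, where each entry commits to the one before it. The sketch below is an illustration under stated assumptions rather than anything from the article: the function names (`entry_hash`, `append_report`, `verify`) and the report fields are hypothetical, and it shows only tamper evidence on a single machine, not consensus or replication across nodes.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def entry_hash(prev_hash: str, report: dict) -> str:
    """Hash a report together with the hash of the entry before it."""
    payload = json.dumps({"prev": prev_hash, "report": report}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_report(ledger: list, report: dict) -> None:
    """Append a trip report, chaining it to the current last entry."""
    prev_hash = ledger[-1]["hash"] if ledger else GENESIS
    ledger.append(
        {"report": report, "prev": prev_hash, "hash": entry_hash(prev_hash, report)}
    )


def verify(ledger: list) -> bool:
    """Recompute every hash; any edited report breaks the chain."""
    prev_hash = GENESIS
    for entry in ledger:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != entry_hash(prev_hash, entry["report"]):
            return False
        prev_hash = entry["hash"]
    return True


if __name__ == "__main__":
    ledger = []
    append_report(ledger, {"day": 1, "note": "reached high camp"})
    append_report(ledger, {"day": 2, "note": "route judged safe"})
    print(verify(ledger))   # chain is intact

    ledger[0]["report"]["note"] = "edited after the fact"
    print(verify(ledger))   # the edit is detected
```

In a real distributed ledger, many participants hold copies of such a chain and must agree on each new entry, which is the part the article frames as climbers reaching consensus on route safety.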

Train like you fight: Why cyber operations teams need no-notice drills

The article "Train like you fight: Why cyber operations teams need no-notice drills" argues that traditional, scheduled tabletop exercises fail to prepare cybersecurity teams for the intense psychological stress of a real-world incident. While planned exercises satisfy compliance, they lack the "threat stimulus" necessary to engage the sympathetic nervous system, which can suppress executive function when a genuine crisis occurs. Drawing on medical training at Level 1 trauma centers and research by psychologist Donald Meichenbaum, the author advocates for "no-notice" drills as a form of stress inoculation. This approach, rooted in the Yerkes-Dodson principle, shifts incident response from a document-heavy process to a conditioned physiological response by raising the threshold at which stress impairs performance. By surprising teams with realistic anomalies, organizations can uncover critical operational gaps (such as communication breakdowns, cross-functional latency, or outdated escalation contacts) that remain hidden during predictable tests. Furthermore, these drills foster psychological safety and trust, as teams learn to navigate ambiguity together without fear of blame through blameless post-mortems. Ultimately, the article maintains that the temporary discomfort of a surprise drill is a necessary investment, as failing during practice is far less damaging than failing during a real breach when the damage clock is already running.

The Art of Lean Governance: Developing the Nerve Center of Trust

Steve Zagoudis’s article, "The Art of Lean Governance: Developing the Nerve Center of Trust," explores the transformation of data governance from a static, policy-driven framework into a dynamic, continuous control system. He argues that the foundation of modern data integrity lies in data reconciliation, which should be elevated from a mere back-office correction mechanism to the primary control for enterprise data risk. By embedding reconciliation directly into data architecture, organizations can establish a "nerve center of trust" that operates at the same cadence as the data itself. This shift is particularly crucial for AI readiness, as the effectiveness of artificial intelligence is fundamentally defined by whether data can be trusted at the moment of use. Without this systemic trust, AI risks accelerating organizational errors rather than providing a competitive advantage. Zagoudis critiques traditional governance for being too episodic and manual, advocating instead for a lean approach that provides automated, evidence-based assurance. Ultimately, lean governance fosters a culture where data is a reliable asset for defensible decision-making. By operationalizing trust through disciplined execution and architectural integration, institutions can move beyond conceptual alignment to achieve genuine agility and accuracy in an increasingly data-driven landscape, ensuring that their technological investments yield meaningful results.

Narrative Architecture: Designing Stories That Survive Algorithms

The Forbes Business Council article, "Narrative Architecture: Designing Stories That Survive Algorithms," critiques the modern trend of platform-first storytelling, where brands prioritize distribution and algorithmic trends over substantive identity. This reactionary approach often leads to "identity erosion," as content becomes ephemeral and dependent on shifting digital environments. To combat this, the author introduces "narrative architecture" as a vital strategic asset. This framework acts as a brand's "home base," grounding all content in a coherent core story that defines the organization’s history, values, and fundamental purpose. Rather than letting algorithms dictate their messaging, brands should use them as tools to inform a pre-established narrative. By shifting focus from fleeting visibility to deep-rooted credibility, companies can build lasting trust with audiences, investors, and potential employees. The article argues that stories built on solid narrative architecture possess a unique longevity that extends far beyond digital platforms, manifesting in conference invitations, earned media coverage, and consistent internal brand alignment. Ultimately, while platform-optimized content might gain temporary engagement, a well-architected story ensures a brand remains relevant and respected even as algorithms evolve, securing long-term reputation and sustainable business success in an increasingly crowded digital landscape.

Zero Trust in OT: Why It's Been Hard and Why New CISA Guidance Changes Everything

The Nozomi Networks blog post titled "Zero Trust in OT: Why It’s Been Hard and Why New CISA Guidance Changes Everything" examines the historic friction and recent transformative shifts in applying Zero Trust (ZT) principles to operational technology. While ZT has matured within IT, extending it to industrial environments like SCADA systems and critical infrastructure has long been hindered by significant technical and cultural hurdles. Traditional IT security controls, such as active scanning, encryption, and aggressive network isolation, often disrupt real-time industrial processes, posing severe risks to safety, system uptime, and equipment integrity. However, the author emphasizes that the April 2026 release of CISA’s "Adapting Zero Trust Principles to Operational Technology" guide marks a pivotal turning point. This collaborative framework, developed alongside the DOE and FBI, validates unique industrial constraints by prioritizing physical safety and availability over mere data protection. By advocating for specialized, "OT-safe" strategies, including passive monitoring, protocol-aware visibility, and operationally aware segmentation, the guidance removes years of ambiguity for practitioners. Ultimately, the blog argues that Zero Trust has evolved from an IT concept forced onto the factory floor into a practical, resilient framework designed to protect the physical processes essential to modern society without sacrificing operational integrity.

The expensive habits we can't seem to break

The article "The Expensive Habits We Can't Seem to Break" explores critical management failures that continue to hinder organizational success, focusing on three persistent mistakes. First, it critiques the tendency to treat culture as a mere communications exercise. Instead of relying on glossy value statements, the author argues that culture is defined by lived experiences and managerial responses during crises. Second, the piece highlights the costly underinvestment in the middle manager layer. With research showing that a significant portion of voluntary turnover is preventable through better management, the author notes that managers are often overextended and undersupported, lacking the necessary tools for "people stewardship." Finally, the article addresses the confusion between flexibility and autonomy. The return-to-office debate often misses the mark by focusing on location rather than trust. Organizations that dictate mandates rather than co-creating norms risk losing critical talent who seek agency over their work. Ultimately, bridging these gaps requires a move away from superficial fixes toward deep-seated changes in leadership behavior and employee trust. By addressing these "expensive habits," HR leaders can foster psychologically safe environments that drive retention and long-term performance, ensuring that organizational values are authentically integrated into the daily reality of the workforce.

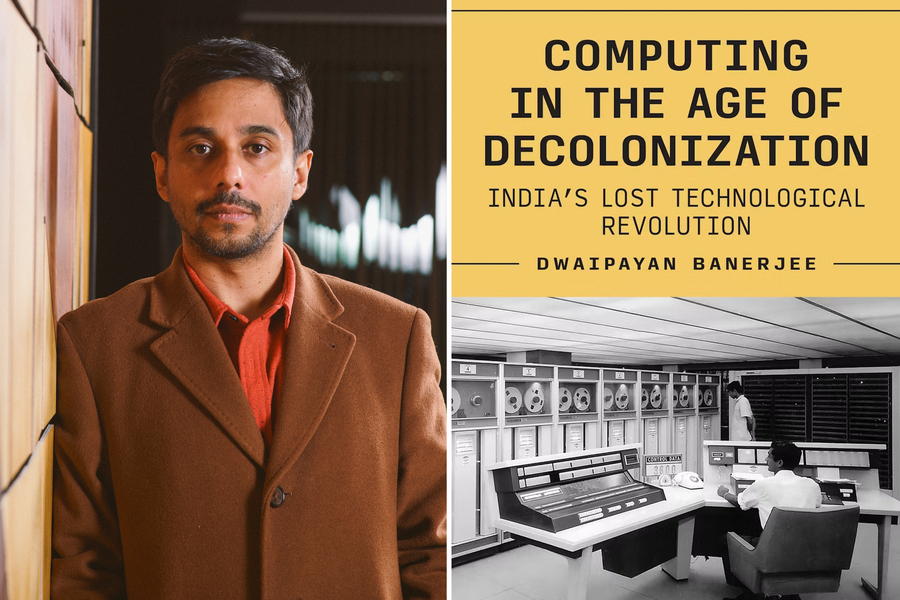

The tech revolution that wasn’t

The MIT News article "The tech revolution that wasn't" explores Associate Professor Dwai Banerjee’s book, Computing in the Age of Decolonization: India's Lost Technological Revolution. It details India’s early, ambitious attempts to achieve technological sovereignty following independence, exemplified by the 1960 creation of the TIFRAC computer at the Tata Institute of Fundamental Research. Despite being a state-of-the-art machine built with minimal resources, the TIFRAC never reached mass production. Banerjee examines how India’s vision of becoming a global hardware manufacturing powerhouse was derailed by geopolitical constraints, limited knowledge sharing from the U.S., and a pivotal domestic shift in the 1970s and 1980s toward the private software services sector. This transition favored quick profits through outsourcing over the long-term investment required for R&D and manufacturing. Consequently, India became a leader in offshoring talent rather than a primary innovator in computer hardware. Banerjee challenges the common "individual genius" narrative of tech history, emphasizing instead that large-scale global capital and institutional support are the true determinants of success. Ultimately, the book uses India’s experience to illustrate the enduring, unequal power structures that continue to shape technological advancement in post-colonial nations, where the promise of a sovereign digital revolution was traded for a role in the global services economy.
