We don’t need to go back to the office to be creative, we need AI

The solution to this dilemma will come from artificial intelligence (AI). The inherent trade-off between exploration and efficiency is well known to AI researchers. One question that those working in AI often have to grapple with is how often an algorithm should take actions it hasn’t tried, as opposed to actions it has already tried that will usually lead to some reward. Untried actions can yield spectacular results. For example, when DeepMind’s AlphaGo program beat Go world champion Lee Sedol in 2016, it did so by exploring moves most human players had never seen before. Before playing move 37 in the second game of its match against Lee, AlphaGo had calculated that there was a one-in-ten-thousand chance that a human player would make that same move. The adventurous gamble paid off. Human innovation involves a similar process of exploration, and to facilitate innovation, companies must get their employees to “collide”. Before the pandemic, this was achieved through open-plan architecture that encouraged “water-cooler” moments of unplanned encounters. But with many employees working from home, corporations will have to find different ways to facilitate these kinds of random interactions.
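
The exploration-versus-exploitation trade-off described above is usually introduced through the multi-armed bandit problem. The sketch below is a minimal epsilon-greedy agent in Python: with probability `epsilon` it tries a random action (exploration), otherwise it picks the action with the best average reward so far (exploitation). The reward probabilities and parameter values are hypothetical, and this is a textbook illustration of the trade-off, not how AlphaGo worked (AlphaGo combined deep neural networks with Monte Carlo tree search).

```python
import random


def epsilon_greedy(true_means, steps=10_000, epsilon=0.1, seed=0):
    """Epsilon-greedy agent on a Bernoulli multi-armed bandit.

    true_means: hypothetical reward probability of each action, hidden from the agent.
    Returns (estimated value of each action, number of times each was chosen).
    """
    rng = random.Random(seed)
    n = len(true_means)
    counts = [0] * n        # how often each action has been tried
    estimates = [0.0] * n   # running average reward per action

    for _ in range(steps):
        if rng.random() < epsilon:
            action = rng.randrange(n)                           # explore: any action
        else:
            action = max(range(n), key=lambda a: estimates[a])  # exploit: best so far
        reward = 1.0 if rng.random() < true_means[action] else 0.0
        counts[action] += 1
        # incremental update of the sample mean for the chosen action
        estimates[action] += (reward - estimates[action]) / counts[action]

    return estimates, counts


estimates, counts = epsilon_greedy([0.2, 0.5, 0.8])
print("pulls per action:", counts)
```

With `epsilon=0` the agent never explores and locks onto the first action it tries; a small positive `epsilon` costs some suboptimal choices but lets it discover the better action.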

Why Data Monitoring is Critical in a Hybrid Cloud Environment

Effective capacity management is not the only benefit of data monitoring, however. While cloud providers typically have strong security protocols, agencies and organizations must remain vigilant for cyberattacks. Data monitoring software provides an effective means to spot problems and mitigate issues before they can affect or damage the network and limit operations. When it comes to data, agencies and organizations must be able to “properly protect their data whether it is on-prem, in the cloud, or in transit,” Grunewald explained. While the path to the cloud has become clearer, Grunewald cautions that there must be an ordered approach to migrating software. That process can be roughly divided into six steps: 1) assessment, 2) prioritization, 3) roadmap, 4) optimization, 5) build, and 6) migration. From start to finish, the entire process is designed to encourage frank conversations about which material and processes are worth the transition and how best to utilize the available resources. There are incredible gains to be made by transitioning to the cloud.
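
As an illustration of how monitoring can catch problems before they affect operations, here is a minimal Python sketch of one common approach: compare each new reading against a rolling baseline and flag values more than a few standard deviations away. The metric, window size, and threshold are assumptions chosen for illustration; production monitoring tools use richer models, and nothing here reflects a specific product discussed in the article.

```python
from collections import deque
from statistics import mean, pstdev


def detect_anomalies(samples, window=20, threshold=3.0):
    """Flag readings that deviate sharply from the recent baseline.

    samples: numeric readings, e.g. a hypothetical storage-utilisation percentage.
    window: number of preceding readings used as the baseline.
    threshold: how many standard deviations from the baseline mean counts as anomalous.
    Returns the indices of flagged readings.
    """
    history = deque(maxlen=window)
    flagged = []
    for i, value in enumerate(samples):
        if len(history) == window:
            mu = mean(history)
            sigma = pstdev(history)
            deviation = abs(value - mu)
            # a perfectly flat baseline (sigma == 0) makes any change anomalous
            if (sigma == 0 and deviation > 0) or (sigma > 0 and deviation / sigma > threshold):
                flagged.append(i)
        history.append(value)
    return flagged
```

A reading is only judged once a full window of history exists, so the first `window` samples are never flagged; that keeps a cold start from producing false alarms at the cost of a short blind spot.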

Attack on Exchange Servers Gives Impetus to Move Email to the Cloud

Moving Exchange to the cloud began in 2005 but only became mainstream after the release of Office 365 in 2011. I spoke about the perils of moving to Exchange Online at the Exchange Conferences of 2012 and 2014. On-premises servers were still attractive in 2014, but the situation is very different now, both in terms of the threat to on-premises servers and our knowledge of what it’s like for companies to run email in the cloud. According to data shared at the TEC 2020 conference, Exchange Online supports 5.5 billion mailboxes. That number seems enormous in the context of 250-odd million monthly active Office 365 users, but it is more reasonable when you consider that the figure includes Outlook.com users (400 million switched over to the Exchange Online infrastructure in 2017), shared mailboxes, group mailboxes, resource mailboxes, and a very large number of system mailboxes used by the Microsoft 365 substrate. Exchange Online is a massive online service running on 275,000 mailbox servers. The attack penetrated none of these servers.
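
For a sense of scale, the two TEC 2020 figures give a back-of-the-envelope average. This is a simple division, not a description of how Microsoft actually places mailboxes; the 5.5 billion total mixes user, shared, group, resource, and system mailboxes, which differ enormously in size and load.

```python
# Figures quoted from TEC 2020 in the text.
mailboxes = 5_500_000_000  # all Exchange Online mailboxes, of every type
servers = 275_000          # Exchange Online mailbox servers

per_server = mailboxes / servers
print(f"about {per_server:,.0f} mailboxes per server")  # about 20,000 mailboxes per server
```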

Infrastructure as code: Create and configure infrastructure elements in seconds

The final part of the IaC topology is the one that will be most visible and familiar to IT Ops teams. These are the container orchestrators that control how containers are deployed and provisioned. The most widespread of these orchestrators is undoubtedly Kubernetes. However, the ubiquity of Kubernetes has created something of a problem: most IT Ops staff, and indeed many developers, think that IaC and Kubernetes are synonymous. I don’t mean this as a criticism of Kubernetes. The system is perhaps the purest expression of the IaC paradigm: eminently portable, but also capable of being adapted to run efficiently on a wide variety of hardware. However, Kubernetes is far from representing the full range of what can be achieved by a careful mix of containers, VMs, and creative use of container orchestrators. In other words, Kubernetes is the start of your IaC journey, not the end. While it’s a great place to begin exploring adaptive provisioning and continuous integration, many firms will need to develop bespoke containerization strategies to work with obscure systems: those that interact with legacy hardware.
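
The core idea behind Kubernetes and most IaC tools is declarative reconciliation: you describe the desired state, the tool compares it with the actual state, and it computes the changes needed to converge. The Python sketch below illustrates that loop with hypothetical resource names and specs; it is a toy model of the concept, not the Kubernetes API or any real tool’s planner.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional


@dataclass
class Change:
    action: str                  # "create", "update" or "delete"
    name: str                    # hypothetical resource name
    spec: Optional[dict] = None  # desired spec (None for deletions)


def plan(desired: dict[str, dict], actual: dict[str, dict]) -> list[Change]:
    """Diff desired state against actual state and list the changes needed."""
    changes = []
    for name, spec in sorted(desired.items()):
        if name not in actual:
            changes.append(Change("create", name, spec))
        elif actual[name] != spec:
            changes.append(Change("update", name, spec))
    for name in sorted(actual):
        if name not in desired:
            changes.append(Change("delete", name))
    return changes


def apply_changes(actual: dict[str, dict], changes: list[Change]) -> dict[str, dict]:
    """Return a new state with the changes applied (a stand-in for real provisioning)."""
    state = dict(actual)
    for change in changes:
        if change.action == "delete":
            del state[change.name]
        else:
            state[change.name] = change.spec
    return state
```

Running `plan` again after applying its changes returns an empty list; that idempotence is what makes declarative infrastructure safe to re-apply.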

Are Passwords Becoming A Thing Of The Past?

Compounding the problem of a weak password is the tendency of many users to use the same password across multiple sites and accounts, ranging from their social media to their financial accounts. One study revealed that more than half of respondents use an average of just five passwords across multiple services. One compromised password could spell disaster for a user, and even more so if they possess sensitive information about their company. Many users may already have their passwords out in the open: the website Have I Been Pwned has a database of over 613 million passwords that data breaches have exposed. Passwords acquired through these breaches are usually sold en masse to other hackers, who use automated attacks like credential stuffing to find a password that matches an account. One of the more common ways to strengthen authentication is to use a password manager, especially one that generates unique passwords and automatically changes them every few months. Changing passwords frequently greatly reduces the risk of user data being affected in the event of a password breach. Two-factor authentication is another common method of going semi, if not fully, passwordless.
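
Two of the defences mentioned above can be sketched in a few lines of Python. `generate_password` uses the standard-library `secrets` module to create a unique random password, as a password manager would. The remaining functions check a password against Have I Been Pwned’s Pwned Passwords range API, which uses k-anonymity: only the first five hex characters of the password’s SHA-1 hash are sent, and matching happens locally against the returned `SUFFIX:COUNT` lines. The endpoint and response format follow HIBP’s public documentation at the time of writing; the User-Agent string and function names are illustrative placeholders.

```python
from __future__ import annotations

import hashlib
import secrets
import string
import urllib.request

ALPHABET = string.ascii_letters + string.digits + string.punctuation


def generate_password(length: int = 20) -> str:
    """Random password from a cryptographically secure source."""
    return "".join(secrets.choice(ALPHABET) for _ in range(length))


def split_sha1(password: str) -> tuple[str, str]:
    """Split the uppercase SHA-1 hex digest into a 5-char prefix (sent) and 35-char suffix (kept local)."""
    digest = hashlib.sha1(password.encode("utf-8")).hexdigest().upper()
    return digest[:5], digest[5:]


def breach_count(suffix: str, range_body: str) -> int:
    """Find the suffix in a range response made of 'SUFFIX:COUNT' lines; 0 if absent."""
    for line in range_body.splitlines():
        candidate, _, count = line.strip().partition(":")
        if candidate.upper() == suffix:
            return int(count)
    return 0


def pwned_count(password: str) -> int:
    """Ask the Pwned Passwords range API how often a password appears in known breaches."""
    prefix, suffix = split_sha1(password)
    request = urllib.request.Request(
        f"https://api.pwnedpasswords.com/range/{prefix}",
        headers={"User-Agent": "password-check-sketch"},  # placeholder client name
    )
    with urllib.request.urlopen(request, timeout=10) as response:
        body = response.read().decode("utf-8")
    return breach_count(suffix, body)
```

Because the full hash never leaves the machine, the service cannot tell which of the hundreds of suffixes sharing that prefix was being checked.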

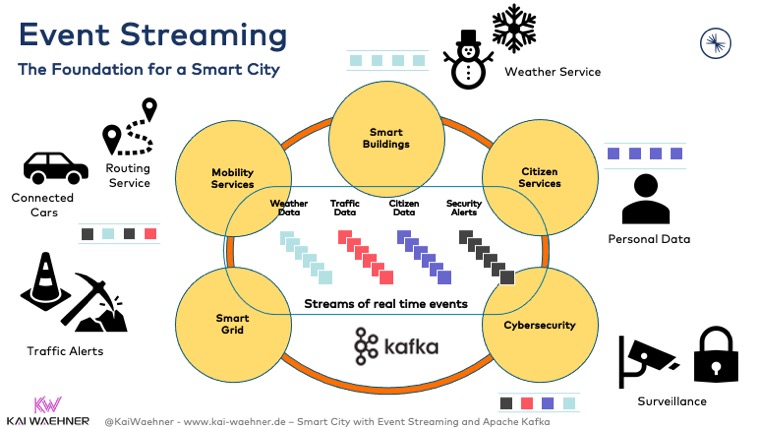

No More Wasted Data: Why More Companies Are Turning Data Into Action

Though the majority of data collected by businesses currently goes to waste, more tools are emerging to help companies unify the data they consume, automate insights, and apply machine learning to better leverage data to meet business goals. First, it's important to take a step back and evaluate the purpose and end goals here. Collecting data for the sake of having it won't get anyone very far. Companies need to identify the issues or opportunities associated with the data collection. In other words, they need to know what they're going to do with every single piece of data collected. To determine the end goals, start by analyzing and assessing the different types of data collected to determine whether each was beneficial to the desired outcome, or had the potential to be but wasn't leveraged. This will help identify any gaps where other data should be tracked. It will also help sharpen the focus on the more important data sets to integrate and normalize, ultimately making data analysis a more painless process that produces more usable information. Next, make sure the data is useful: that it's standardized, integrated across as few tech platforms as possible, and that the collection of specific data follows company rules and industry regulations.
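
Standardizing data usually means mapping each source’s field names and formats onto one common schema before analysis. The Python sketch below shows that step for two hypothetical sources; the source names, field mappings, and the rule that timestamps without a time zone are UTC are all assumptions for illustration.

```python
from datetime import datetime, timezone

# Hypothetical sources and their field names, mapped onto one common schema.
FIELD_MAPS = {
    "web_shop": {"cust_id": "customer_id", "total": "amount", "ts": "timestamp"},
    "pos_system": {"CustomerID": "customer_id", "Amount": "amount", "Time": "timestamp"},
}


def normalize(record: dict, source: str) -> dict:
    """Rename fields to the common schema and standardize their types and formats.

    Raises KeyError if the source is unknown or a required field is missing.
    """
    mapping = FIELD_MAPS[source]
    out = {common: record[raw] for raw, common in mapping.items()}

    out["customer_id"] = str(out["customer_id"]).strip()  # IDs as trimmed strings
    out["amount"] = round(float(out["amount"]), 2)         # amounts as floats, 2 decimals

    ts = out["timestamp"]
    if isinstance(ts, (int, float)):
        dt = datetime.fromtimestamp(ts, tz=timezone.utc)    # Unix epoch seconds
    else:
        dt = datetime.fromisoformat(ts)                     # ISO 8601 string
        if dt.tzinfo is None:
            dt = dt.replace(tzinfo=timezone.utc)            # assumption: naive means UTC
    out["timestamp"] = dt.astimezone(timezone.utc).isoformat()

    out["source"] = source  # keep lineage for auditing
    return out
```

Once both sources produce identical records for the same event, they can be integrated on one platform and analyzed together.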

Akash Network Launches Akash MAINNET 2, the First Decentralized Open-Source Cloud

As the first open-source cloud and the only viable decentralized cloud alternative to centralized cloud providers like Amazon Web Services, Google Cloud, and Microsoft Azure, Akash MAINNET 2 empowers developers to break free from the limitations of traditional cloud infrastructure. The platform accelerates growth and scale in the blockchain ecosystem by enabling developers and companies to decentralize their cloud infrastructure, deploying applications faster, more efficiently, and at lower cost. Through the platform, individuals, companies, and data centers with underutilized computing capacity will also be able to monetize it by leasing their cloud compute to those who need it, recouping the high costs of server maintenance and capital expenditure. Recently, Akash announced an integration with Equinix Metal, the bare-metal service of Equinix, the world's largest data center and colocation infrastructure provider with 220 data centers in 25 countries, to expand access to global, low-latency, and powerful cloud infrastructure. For the first time, developers will be able to launch applications such as DeFi apps, blogs, games, data visualizations, block explorers, blockchain nodes, and other blockchain network components on a decentralized cloud.

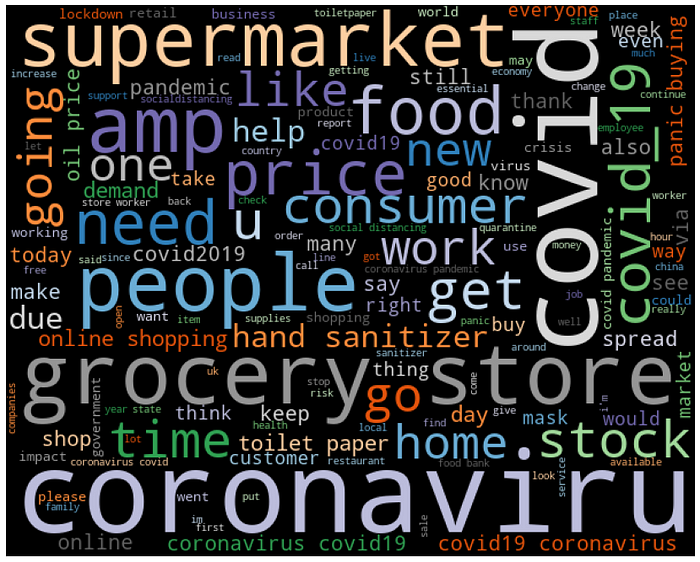

Number of ransomware attacks grew by more than 150%

On a technical level, public-facing RDP servers were the most common target for many ransomware gangs last year. Against the backdrop of the pandemic, which caused many people to work from home, the number of such servers grew exponentially. In 52% of all attacks analyzed by Group-IB, publicly accessible RDP servers were used to gain initial access, followed by phishing (29%) and exploitation of public-facing applications (17%). Big Game Hunting (targeted ransomware attacks against wealthy enterprises) continued to be one of the defining trends in 2020. In the hope of securing the biggest ransom possible, the adversaries went after large companies. Big businesses cannot afford downtime, which averaged 18 days in 2020. The operators were less concerned about the industry and more focused on scale. It’s no surprise that most of the ransomware attacks that Group-IB analyzed occurred in North America and Europe, where most of the Fortune 500 firms are located, followed by Latin America and the Asia-Pacific, respectively. The prospect of easy money prompted many gangs to join in Big Game Hunting. State-sponsored threat actors seen carrying out financially motivated attacks were not long in coming.
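
Since exposed RDP was the leading entry point, a basic first check is whether your own hosts accept TCP connections on RDP’s default port, 3389, from outside the network. The Python sketch below does only that; the host list you pass in is hypothetical, a successful connection shows only that something is listening (not that it is RDP), and you should scan only systems you own or are authorized to test.

```python
import socket


def is_port_open(host: str, port: int = 3389, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within the timeout.

    3389 is the default RDP port; a successful connect only proves that
    some service is listening there, not that it is an RDP server.
    """
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:  # refused, unreachable, or timed out
        return False


def exposed_hosts(hosts, port: int = 3389, timeout: float = 2.0) -> list:
    """Return the subset of hosts that accept connections on the given port."""
    return [host for host in hosts if is_port_open(host, port, timeout)]
```

Run from a machine outside the corporate network, any host this reports is a candidate for closing the port or placing RDP behind a VPN or gateway.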

NHS datacentre transformation projects continue apace during pandemic

Ratcliffe suggests a shift away from a top-down approach across NHS IT, recognising the beneficial modularity that enables individual working parts such as hospitals or trusts to become more nimble – a whole that is greater than the sum of its parts. He says NHS Digital, the NHS’s digital arm, has partly succeeded in moving away from a more traditional “command and control” approach, shifting the emphasis toward the empowerment of clinicians and the individual healthcare bodies for which they work. “It is amazing what a crisis will do,” says Ratcliffe, pointing to the fast-moving, NHS-driven coronavirus vaccination roll-out across England. “There weren’t really rules before, but habits that became rules by repetition. I genuinely don’t think we will all suddenly go, ‘oh wow, we’ve got to get back to normal’ either – ‘normal’ doesn’t exist any more.” The NHS has been able to move forward – for example, in its Covid-19 vaccine roll-out and, to a lesser degree, in contact tracing – in ways that NHS Digital chief Sarah Wilkinson admitted, in an IBM presentation, “no one would have thought possible”. Ratcliffe says more productive provision might involve slicing resources by disease or by discipline, horizontally or vertically, instead of NHS-wide or even trust-wide.

Look to Banking as a Model for Stopping Crime-as-a-Service

We have seen good examples of cybersecurity teams working more closely with other internal parties, especially in the banking sector. For over 10 years, some of the major UK and European banks have operated with an organizational structure in which financial crime and cybersecurity teams are part of the same business unit, driven by the natural synergy between these functions. This has driven significant progress. With the convergence of cyber and financial crime teams, the industry has seen the emergence of the fusion center, which can be thought of as an advanced version of the security operations center (SOC) management model that unifies several different teams within an organization, such as fraud, financial crime, and cyber. By bringing these units together, organizations can increase situational awareness, share analytics and threat intelligence more easily, become more attractive to talent, and have a standard framework for procedures. Organizations can then combat cybercrime and disrupt the illegal economy more effectively through more transparent management, an end-to-end operating model, and easier collaboration and consolidation on relevant threats and actions.

Quote for the day:

"Every leader needs to look back once in a while to make sure he has followers." -- Kouzes and Posner