Quote for the day:

"Effective leaders know that resources are never the problem; it's always a matter of resourfulness." -- Tony Robbins

AI web browsers are cool, helpful, and utterly untrustworthy

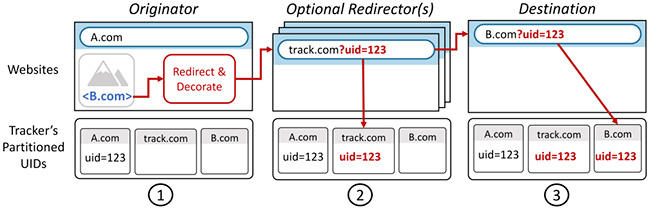

AI browsers can and do interact with everything on a web page: summarizing

content, reading emails, composing posts, looking at images, etc., etc. Every

element on the page, whether you can see it or not, can hide an attack. A

hacker can embed clipboard manipulations or other hacks that traditional

browsers would never, not ever, execute automatically. ... AI browser agents

can be tricked by hidden instructions embedded in websites via invisible text,

images, scripts, or, believe it or not, bad grammar. Your eyes might glaze

over at a long run-on sentence, but your AI web browser will read it all,

including instructions for an attack hidden in plain sight within it. Such

malicious commands are read and executed by the AI. This can lead to exposure

of sensitive data, such as emails, authentication tokens, and login details,

or triggering unwanted actions, including sending emails, posting to social

media, or giving your computer a bad case of malware. ... Privacy is pretty

much lost these days anyway, but with AI web browsers, we’ll have all the

privacy of a goldfish in a bowl. Since AI browsers monitor our every last

move, they process much more granular personal information than conventional

browsers. Worrying about cookies and privacy is so 1990s. AI browsers track

everything. This is then used to create highly detailed behavioral profiles.

What? You didn’t know that AI browsers have built-in memory functions that

retain your interactions, browser history, and content from other apps? How do

you think they do what they do? Intuition? ESP?

AI browsers can and do interact with everything on a web page: summarizing

content, reading emails, composing posts, looking at images, etc., etc. Every

element on the page, whether you can see it or not, can hide an attack. A

hacker can embed clipboard manipulations or other hacks that traditional

browsers would never, not ever, execute automatically. ... AI browser agents

can be tricked by hidden instructions embedded in websites via invisible text,

images, scripts, or, believe it or not, bad grammar. Your eyes might glaze

over at a long run-on sentence, but your AI web browser will read it all,

including instructions for an attack hidden in plain sight within it. Such

malicious commands are read and executed by the AI. This can lead to exposure

of sensitive data, such as emails, authentication tokens, and login details,

or triggering unwanted actions, including sending emails, posting to social

media, or giving your computer a bad case of malware. ... Privacy is pretty

much lost these days anyway, but with AI web browsers, we’ll have all the

privacy of a goldfish in a bowl. Since AI browsers monitor our every last

move, they process much more granular personal information than conventional

browsers. Worrying about cookies and privacy is so 1990s. AI browsers track

everything. This is then used to create highly detailed behavioral profiles.

What? You didn’t know that AI browsers have built-in memory functions that

retain your interactions, browser history, and content from other apps? How do

you think they do what they do? Intuition? ESP?AI can flag the risk, but only humans can close the loop

Companies embedding AI into vendor risk processes need governance structures that ensure transparency, accountability, and compliance. This includes maintaining an approved sources catalogue and requiring either the system or an analyst to validate findings and document the rationale behind them. Data minimization should be built into the design by defining what information is always in scope, such as sanctions or embargo lists, and what is contextually relevant, while excluding protected or sensitive attributes under GDPR and configuring AI to ignore them. Risk assessments should be tiered, calibrating the depth of checks to supplier criticality and geography to avoid unnecessary data collection for low-risk relationships while expanding scope for high-risk scenarios. Human accountability remains essential, with a named individual owning due diligence decisions while AI provides recommendations without replacing human judgment ... Regulators are likely to allow AI use if firms establish strong controls and demonstrate effective oversight, as required by frameworks like the EU AI Act. Responsibility remains with individuals or organizations; liability does not transfer to AI itself. While regulators may struggle to specify detailed technical rules, one clear shift is that “the data volume was too large to review” will no longer be an acceptable defense.10 top devops practices no one is talking about

“A key, yet overlooked, devops practice is building true shared ownership, which

means more than just putting teams in the same chat room,” says Chris Hendrich,

associate CTO of AppMod at SADA. “It requires making production reliability and

performance a primary success indicator for development, not solely an

operational concern. This shared accountability is what builds the

organizational competency of creating better, more resilient products.” ...

“Baking an integrated code quality and code security approach into your devops

workflow isn’t just good practice, it’s essential and a game-changer,” says

Donald Fischer, VP at Sonar. “Tackling security alongside quality from day one

isn’t merely about early bug detection; it’s about building fundamentally

stronger, more trustworthy, and resilient software that is secure by design.”

... “Open source is a no-brainer for developers, but as the ecosystem grows, so

do the risks of malware, unsafe AI models, license issues, outdated packages,

poor performance, and missing features,” says Mitchell Johnson, CPDO of

Sonatype. “Modern devops teams need visibility into what’s getting pulled in,

not just to stay secure and compliant, but to make sure they’re building with

high-quality components.” ... “Version-controlling database schemas and

configurations across development, QA, and production is a quietly powerful

devops practice,” says McMillan.

“A key, yet overlooked, devops practice is building true shared ownership, which

means more than just putting teams in the same chat room,” says Chris Hendrich,

associate CTO of AppMod at SADA. “It requires making production reliability and

performance a primary success indicator for development, not solely an

operational concern. This shared accountability is what builds the

organizational competency of creating better, more resilient products.” ...

“Baking an integrated code quality and code security approach into your devops

workflow isn’t just good practice, it’s essential and a game-changer,” says

Donald Fischer, VP at Sonar. “Tackling security alongside quality from day one

isn’t merely about early bug detection; it’s about building fundamentally

stronger, more trustworthy, and resilient software that is secure by design.”

... “Open source is a no-brainer for developers, but as the ecosystem grows, so

do the risks of malware, unsafe AI models, license issues, outdated packages,

poor performance, and missing features,” says Mitchell Johnson, CPDO of

Sonatype. “Modern devops teams need visibility into what’s getting pulled in,

not just to stay secure and compliant, but to make sure they’re building with

high-quality components.” ... “Version-controlling database schemas and

configurations across development, QA, and production is a quietly powerful

devops practice,” says McMillan.

Cloud Identity Exposure Is 'a Critical Point of Failure'

Attackers keep targeting cloud-based identities to help them bypass endpoint and

network defenses, says an August report from cybersecurity firm CrowdStrike.

That report counts a 136% increase in cloud intrusions over the preceding 12

months, plus a 40% year-on-year increase in cloud intrusions tied to threat

actors likely working for the Chinese government. "The cloud is a priority

target for both criminals and nation-state threat actors," said Adam Meyers,

head of counter adversary operations at CrowdStrike ... One challenge is that

enough cloud identities justify elevated permissions, putting organizations at

elevated risk when their credentials are exposed. Take security operations

centers and incident response teams. In general, while "the principle of least

privilege and minimal manual access" is a best practice, first responders often

need immediate and "necessary access," says an August report from Darktrace.

"Security teams need access to logs, snapshots and configuration data to

understand how an attack unfolded, but giving blanket access opens the door to

insider threats, misconfigurations and lateral movement." Rather than always

allowing such access, experts recommend using tools that only provide it when

needed, for example, through Amazon Web Services' Security Token Service.

"Leveraging temporary credentials, such as AWS STS tokens, allows for

just-in-time access during an investigation" that can be automatically revoked

after, which "reduces the window of opportunity for potential attackers to

exploit elevated permissions," Darktrace said.

Attackers keep targeting cloud-based identities to help them bypass endpoint and

network defenses, says an August report from cybersecurity firm CrowdStrike.

That report counts a 136% increase in cloud intrusions over the preceding 12

months, plus a 40% year-on-year increase in cloud intrusions tied to threat

actors likely working for the Chinese government. "The cloud is a priority

target for both criminals and nation-state threat actors," said Adam Meyers,

head of counter adversary operations at CrowdStrike ... One challenge is that

enough cloud identities justify elevated permissions, putting organizations at

elevated risk when their credentials are exposed. Take security operations

centers and incident response teams. In general, while "the principle of least

privilege and minimal manual access" is a best practice, first responders often

need immediate and "necessary access," says an August report from Darktrace.

"Security teams need access to logs, snapshots and configuration data to

understand how an attack unfolded, but giving blanket access opens the door to

insider threats, misconfigurations and lateral movement." Rather than always

allowing such access, experts recommend using tools that only provide it when

needed, for example, through Amazon Web Services' Security Token Service.

"Leveraging temporary credentials, such as AWS STS tokens, allows for

just-in-time access during an investigation" that can be automatically revoked

after, which "reduces the window of opportunity for potential attackers to

exploit elevated permissions," Darktrace said.

How Software Development Teams Can Securely and Ethically Deploy AI Tools

Clearly, there is a danger that teams will trust AI too much, as these tools

lack a command of the often nuanced context to recognize complex

vulnerabilities. They may not fully grasp an application’s authentication or

authorization framework, potentially leading to the omission of critical checks.

If developers reach a state of complacency in their vigilance, the potential for

such risks will only increase. ... Beyond security, team leaders and members

must focus more on ethical and even legal considerations: Nearly one-half of

software engineers are facing legal, compliance and ethical challenges in

deploying AI, according the The AI Impact Report 2025 from LeadDev. The

ethical/legal scenarios can take on a highly perplexing nature: A human engineer

can read, learn from and write original code from an open-source library. But if

an LLM does the same thing, it can be accused of engaging in derivative

practices. What’s more, the current legal picture is a murky work in progress.

Given the still-evolving judicial conclusions and guidelines, those using

third-party AI tools need to ensure they are properly indemnified from potential

copyright infringement liability, according to Ropes & Gray, a global law

firm that advises clients on intellectual property and data matters. “Risk

allocation in contracts concerning or contemplating AI models should be

approached very carefully,” according to the firm.

Clearly, there is a danger that teams will trust AI too much, as these tools

lack a command of the often nuanced context to recognize complex

vulnerabilities. They may not fully grasp an application’s authentication or

authorization framework, potentially leading to the omission of critical checks.

If developers reach a state of complacency in their vigilance, the potential for

such risks will only increase. ... Beyond security, team leaders and members

must focus more on ethical and even legal considerations: Nearly one-half of

software engineers are facing legal, compliance and ethical challenges in

deploying AI, according the The AI Impact Report 2025 from LeadDev. The

ethical/legal scenarios can take on a highly perplexing nature: A human engineer

can read, learn from and write original code from an open-source library. But if

an LLM does the same thing, it can be accused of engaging in derivative

practices. What’s more, the current legal picture is a murky work in progress.

Given the still-evolving judicial conclusions and guidelines, those using

third-party AI tools need to ensure they are properly indemnified from potential

copyright infringement liability, according to Ropes & Gray, a global law

firm that advises clients on intellectual property and data matters. “Risk

allocation in contracts concerning or contemplating AI models should be

approached very carefully,” according to the firm.

How AI is Revolutionising RegTech and Compliance

Traditional approaches are failing, overwhelmed by increasing regulatory

complexity and cross-border requirements. Enter RegTech: a technological

revolution transforming how institutions manage regulatory obligations. Advanced

artificial intelligence systems now predict compliance breaches weeks before

they occur, while blockchain platforms create tamper-proof audit trails that

streamline regulatory examinations. ... Natural language processing interprets

complex regulatory documents automatically, updating compliance procedures

within minutes of regulatory changes. Smart contracts execute compliance actions

without human intervention, ensuring consistent adherence to evolving

requirements. Leading institutions are achieving remarkable results. Barclays

reduced regulatory document processing time from days to minutes using

AI-powered analysis. JPMorgan's blockchain settlement system maintains

compliance across multiple jurisdictions simultaneously. ...

Regulatory-as-a-Service models are democratising access to sophisticated

compliance capabilities. Smaller institutions can now access enterprise-grade

RegTech through subscription services, reducing compliance costs by up to 50%

whilst improving regulatory coverage. Challenges remain significant. Data

privacy concerns intensify as compliance systems process vast quantities of

sensitive information. Regulatory fragmentation across jurisdictions complicates

platform development.

Traditional approaches are failing, overwhelmed by increasing regulatory

complexity and cross-border requirements. Enter RegTech: a technological

revolution transforming how institutions manage regulatory obligations. Advanced

artificial intelligence systems now predict compliance breaches weeks before

they occur, while blockchain platforms create tamper-proof audit trails that

streamline regulatory examinations. ... Natural language processing interprets

complex regulatory documents automatically, updating compliance procedures

within minutes of regulatory changes. Smart contracts execute compliance actions

without human intervention, ensuring consistent adherence to evolving

requirements. Leading institutions are achieving remarkable results. Barclays

reduced regulatory document processing time from days to minutes using

AI-powered analysis. JPMorgan's blockchain settlement system maintains

compliance across multiple jurisdictions simultaneously. ...

Regulatory-as-a-Service models are democratising access to sophisticated

compliance capabilities. Smaller institutions can now access enterprise-grade

RegTech through subscription services, reducing compliance costs by up to 50%

whilst improving regulatory coverage. Challenges remain significant. Data

privacy concerns intensify as compliance systems process vast quantities of

sensitive information. Regulatory fragmentation across jurisdictions complicates

platform development.

CEOs Go All-In on AI, But Talent Isn't Ready

Despite the enthusiasm for AI, workforce readiness is still a critical concern.

Approximately 74% of Indian CEOs see AI talent readiness as a determinant of

their company's future success, yet 34% admit to a widening skills gap. This

talent gap is multifaceted; it's not only technical proficiency that's in short

supply, but also expertise in blending data science with ethics, regulatory

understanding and business acumen. About 26% struggle to find candidates who

balance technical skill with collaboration capabilities. ... Regulatory

uncertainty still weighs heavily on CEOs' minds, with nearly half of Indian CEOs

awaiting clearer regulatory guidance before pushing bold innovation initiatives,

compared to only 39% globally. This cautious stance underlines a pragmatic

approach to integrating AI amid evolving governance landscapes. About 76% of

Indian CEOs worry that slow AI regulation progress could hinder organizational

success. Ethical concerns also loom large: 62% of Indian CEOs cite them as

significant barriers, slightly higher than the 59% global average, underscoring

the importance of embedding trust and governance frameworks alongside

technological investments. "This is why culture and leadership are very

important. The board of directors must have a degree of AI literacy. There must

be psychological safety in the organization. Employees must feel safe and if

there's clear governance, it means there is a proactive suggestion to use

sanctioned AI that meets security requirements," John Barker

Despite the enthusiasm for AI, workforce readiness is still a critical concern.

Approximately 74% of Indian CEOs see AI talent readiness as a determinant of

their company's future success, yet 34% admit to a widening skills gap. This

talent gap is multifaceted; it's not only technical proficiency that's in short

supply, but also expertise in blending data science with ethics, regulatory

understanding and business acumen. About 26% struggle to find candidates who

balance technical skill with collaboration capabilities. ... Regulatory

uncertainty still weighs heavily on CEOs' minds, with nearly half of Indian CEOs

awaiting clearer regulatory guidance before pushing bold innovation initiatives,

compared to only 39% globally. This cautious stance underlines a pragmatic

approach to integrating AI amid evolving governance landscapes. About 76% of

Indian CEOs worry that slow AI regulation progress could hinder organizational

success. Ethical concerns also loom large: 62% of Indian CEOs cite them as

significant barriers, slightly higher than the 59% global average, underscoring

the importance of embedding trust and governance frameworks alongside

technological investments. "This is why culture and leadership are very

important. The board of directors must have a degree of AI literacy. There must

be psychological safety in the organization. Employees must feel safe and if

there's clear governance, it means there is a proactive suggestion to use

sanctioned AI that meets security requirements," John Barker

Powering financial services innovation: The critical role of colocation

As AI continues to evolve, its impact on financial services is becoming both

broader and deeper – moving beyond high-level innovation into the operational

core of the enterprise. Today’s financial institutions face a dual mandate: to

accelerate AI adoption in pursuit of competitive advantage, and to do so within

the constraints of an increasingly complex digital and regulatory environment.

From risk modelling and fraud prevention to real-time analytics and customer

personalization, AI is being embedded into mission-critical functions. Realising

its full potential, however, isn't solely a matter of algorithms – it hinges on

having a data-first strategy, with the right infrastructure and governance in

place. ... With exponential data growth presenting challenges, customers gain

access to a secure, compliant, resilient, and performant foundation. This

foundation enables the implementation of new technologies and seamless

orchestration of data flows. Our goal is to simplify data management complexity

and serve as the single, trusted, global data center partner for our customers.

As organizations optimize their AI strategies, many are exploring cloud

repatriation – the process of moving certain workloads from the cloud back to

on-premises or colocation environments. This strategic move can be crucial for

AI success, as it allows for better control over sensitive data, reduced

latency, and improved performance for demanding AI workloads.

As AI continues to evolve, its impact on financial services is becoming both

broader and deeper – moving beyond high-level innovation into the operational

core of the enterprise. Today’s financial institutions face a dual mandate: to

accelerate AI adoption in pursuit of competitive advantage, and to do so within

the constraints of an increasingly complex digital and regulatory environment.

From risk modelling and fraud prevention to real-time analytics and customer

personalization, AI is being embedded into mission-critical functions. Realising

its full potential, however, isn't solely a matter of algorithms – it hinges on

having a data-first strategy, with the right infrastructure and governance in

place. ... With exponential data growth presenting challenges, customers gain

access to a secure, compliant, resilient, and performant foundation. This

foundation enables the implementation of new technologies and seamless

orchestration of data flows. Our goal is to simplify data management complexity

and serve as the single, trusted, global data center partner for our customers.

As organizations optimize their AI strategies, many are exploring cloud

repatriation – the process of moving certain workloads from the cloud back to

on-premises or colocation environments. This strategic move can be crucial for

AI success, as it allows for better control over sensitive data, reduced

latency, and improved performance for demanding AI workloads.Measuring, Reporting, and Improving: Making Resilience Tangible and Accountable

A continuity plan sitting on a shelf provides little assurance of resilience.

What matters is whether organizations can demonstrate their strategies work,

they are tested, and corrective actions are tracked. Measurement transforms

resilience from an abstract concept into quantifiable performance. ... Metrics

ensure resilience is not left to chance or anecdote. They provide boards and

regulators with evidence of progress, reinforcing accountability at the

executive and governance levels. A resilience strategy that cannot be measured

cannot be trusted. ... The first step in strengthening measurement is to define

resilience key performance indicators (KPIs) and key risk indicators (KRIs).

These metrics should evaluate outcomes rather than simply tracking activities,

ensuring performance reflects actual readiness. ... Measurement alone is not

enough without transparency. Organizations must establish reporting practices

that make resilience performance visible to boards, regulators, and, when

appropriate, customers. Sharing outcomes openly not only demonstrates

accountability but also builds trust and credibility. ... One challenge

organizations often encounter when measuring resilience is metric overload. In

the effort to capture every detail, leaders may track too many indicators,

creating complexity that dilutes focus and makes it difficult to interpret

results.

A continuity plan sitting on a shelf provides little assurance of resilience.

What matters is whether organizations can demonstrate their strategies work,

they are tested, and corrective actions are tracked. Measurement transforms

resilience from an abstract concept into quantifiable performance. ... Metrics

ensure resilience is not left to chance or anecdote. They provide boards and

regulators with evidence of progress, reinforcing accountability at the

executive and governance levels. A resilience strategy that cannot be measured

cannot be trusted. ... The first step in strengthening measurement is to define

resilience key performance indicators (KPIs) and key risk indicators (KRIs).

These metrics should evaluate outcomes rather than simply tracking activities,

ensuring performance reflects actual readiness. ... Measurement alone is not

enough without transparency. Organizations must establish reporting practices

that make resilience performance visible to boards, regulators, and, when

appropriate, customers. Sharing outcomes openly not only demonstrates

accountability but also builds trust and credibility. ... One challenge

organizations often encounter when measuring resilience is metric overload. In

the effort to capture every detail, leaders may track too many indicators,

creating complexity that dilutes focus and makes it difficult to interpret

results.

/pcq/media/media_files/HSHrfZcv7l4P6Ab0c0kS.png)