Web applications are the most visible front door to any enterprise and are often designed and built without strong security in mind. Stressing out over hardware vulnerabilities like Spectre or Meltdown is fun and trendy, but while you're digging a moat around your castle someone is prancing across the drawbridge using SQL injection (SQLi) or cross-site scripting (XSS). The OWASP Broken Web Applications Project comes bundled in a virtual machine (VM) that contains a large collection of deliberately broken web applications with tutorials to help students master the various attack vectors. From trivial to more difficult, the project is designed to lead the user to a better understanding of web application security. The OWASP Broken Web Applications Project includes the appropriately named Damn Vulnerable Web Application, deliberately broken for your pentesting enjoyment. For maximum lulz, download OWASP Zed Attack Proxy, configure a local browser to proxy traffic through ZAP, and get ready to attack some damn vulnerable web applications.
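
For readers who have not seen SQL injection up close, here is a minimal sketch of the idea, using Python's built-in `sqlite3` module and an in-memory database. The table, the function names, and the payload are illustrative assumptions and are not taken from the OWASP project or from Damn Vulnerable Web Application; the point is only to show why gluing user input into a query string is dangerous and how a parameterized query avoids it.

```python
import sqlite3


def build_demo_db():
    """Create a throwaway in-memory database with one hypothetical user."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (name TEXT, password TEXT)")
    conn.execute("INSERT INTO users VALUES ('alice', 's3cret')")
    conn.commit()
    return conn


def login_vulnerable(conn, name, password):
    """Build the query by string concatenation (the classic SQLi mistake)."""
    query = (
        "SELECT COUNT(*) FROM users WHERE name = '" + name
        + "' AND password = '" + password + "'"
    )
    # Attacker-controlled quotes in `password` can rewrite the WHERE clause.
    return conn.execute(query).fetchone()[0] > 0


def login_safe(conn, name, password):
    """Use placeholders so input is always treated as data, never as SQL."""
    query = "SELECT COUNT(*) FROM users WHERE name = ? AND password = ?"
    return conn.execute(query, (name, password)).fetchone()[0] > 0


if __name__ == "__main__":
    conn = build_demo_db()
    payload = "' OR '1'='1"  # illustrative injection string
    print("vulnerable login accepts payload:", login_vulnerable(conn, "alice", payload))
    print("safe login accepts payload:", login_safe(conn, "alice", payload))
```

With the concatenated query, the payload turns the condition into `... AND password = '' OR '1'='1'`, which is true for every row, so the login succeeds without the real password. The parameterized version compares the payload as a literal string and rejects it.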

Emotional skill is key to success

According to Susan David, emotional agility is about adaptability, facing emotions and moving on from them. It is also the ability to master the challenges life throws at us in an increasingly complex world. She added that while emotional intelligence is not values-focused, emotional agility is. "Women do have some advantages in the domain of emotional agility," she said. "When I go into organisations and look at hotspots or business units that are extremely high functioning, what we find is that the most important predictor of enabling these units is what I call 'individualised considerations'. That means leaders who are able to see the individual as an individual and this has diversity at its core." "These leaders do not stereotype or exclude," she added. "Of course, this doesn't work always in practice and there is a lot of work to be done in this regard in organisations and businesses."

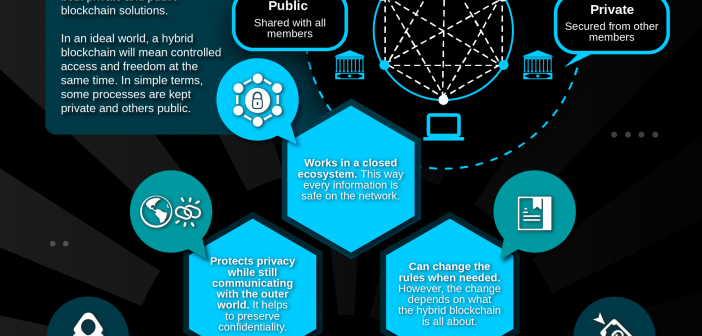

Hybrid Blockchain: The Best Of Both Worlds

A hybrid blockchain is best defined as a blockchain that attempts to combine the best parts of private and public blockchain solutions. In an ideal world, a hybrid blockchain means controlled access and freedom at the same time. Hybrid blockchains are distinguished by the fact that they are not open to everyone but still offer blockchain features such as integrity, transparency, and security. A hybrid blockchain is also entirely customizable: its members can decide who can participate in the blockchain and which transactions are made public. This brings the best of both worlds and ensures that a company can work with its stakeholders in the best possible way. We hope the hybrid blockchain definition gave you a clear view. For a fuller picture, we recommend checking out some hybrid blockchain projects.

How universities should teach blockchain

The core issue is that blockchain is really hard to teach correctly. There’s no established curriculum, few textbooks exist, and the field is rife with misinformation, making it hard to know what is credible. Protocols are evolving at a rapid pace, and it’s tough to tell the difference between a white paper and reality. Having so much attention around blockchain specifically frames it as a miraculous and novel development rather than an outgrowth of decades of computer science research. Matt Blaze, an associate professor at the University of Pennsylvania and a cyber-security researcher, points out that the push for degree programs in blockchain is part of a trend of overspecialization by some engineering schools. The concepts sound good on paper but don’t live up to their promise. Despite the best of intentions, trends change, and students get stuck in narrow career paths. In order to avoid these pitfalls, universities will have to take an approach they’re not used to.

Experience an RDP attack? It’s your fault, not Microsoft’s

![Windows security and protection [Windows logo/locks]](https://images.idgesg.net/images/article/2018/02/windows_security_safety_protection_encryption_locks_thinkstock_831741980-100749419-large.jpg)

If you are compromised because of RDP, the problem is you or your organization. It isn’t a problem with Microsoft or RDP. You don’t need to put a VPN around RDP to protect it. You don’t need to change default network ports or some other black magic. Just use the default security settings or implement the myriad other security defenses you should have already been using. If you’re getting hacked because of RDP, you’re not doing a bunch of things that any good computer security defender should be doing. There are many ransomware programs, like SamSam, and cryptominers, like CrySis, that attempt brute-force guessing attacks against accessible RDP services. So many companies have had their RDP services compromised that the FBI and Department of Homeland Security (DHS) have issued warnings. The warning should be, “Your security sucks!” It isn’t like the malware programs are conducting a zero-day attack against some unpatched vulnerability.

Data as a Driver of Economic Efficiency

The General Data Protection Regulation (GDPR) became enforceable on May 25, 2018. The regulation aims to protect data by ‘design and default,’ whereby firms must handle data according to a set of principles. GDPR mandates opt-in consent for data collection and assigns substantial liability risks and penalties for data flow and data processing violations. GDPR’s enactment is particularly likely to influence technology ventures, given an increasing need for the use of data as a core product input. Specifically, data has become a key factor in technology-driven innovation and production, spanning industry sectors from pharmaceuticals and healthcare to automotive, smart infrastructure, and broader decision making. This report presents economic analyses of the consequences of data regulation and opt-in consent requirements for investment in new technology ventures, for consumer prices, and for economic welfare.

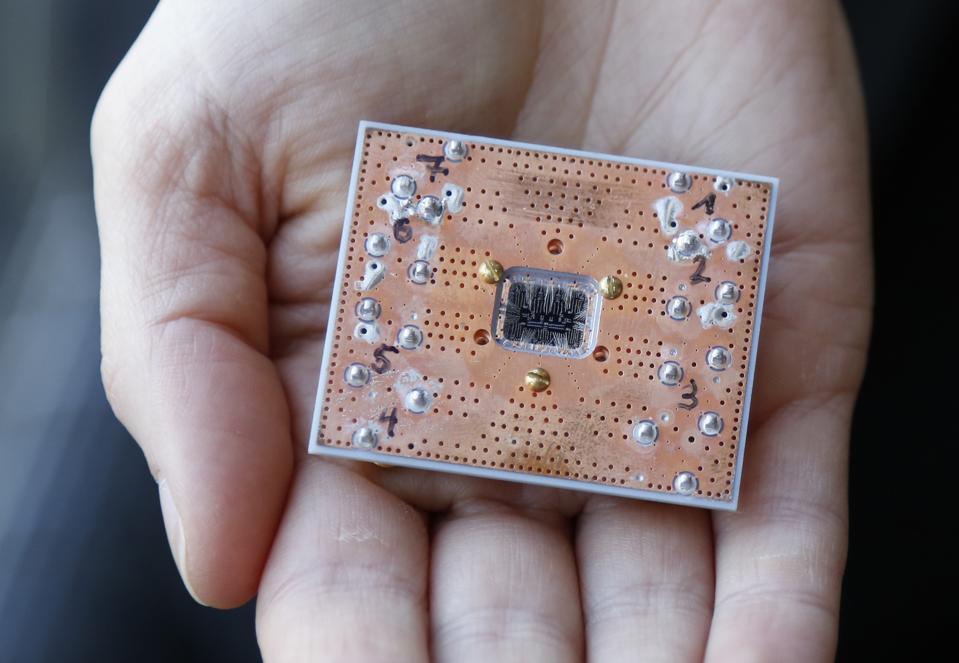

A Two-Minute Guide To Quantum Computing

Most of us aren't clued up on the art of harnessing elementary particles like electrons and photons, so to understand how quantum computing works, meet Scottish startup M Squared. The company’s bread and butter is making some of the most accurate lasers in the world, using pure light and precise wavelengths. Such lasers can be used like a scalpel, one atom wide, to carve out the transistors of a silicon chip. Typically the chip or brain in your smartphone is a centimeter square. It has a small section in the middle made up of around 300 million transistors, with connections spreading out like fingers to talk to the screen, the camera, the battery and more. But imagine a chip with no transistors at all, and instead a small chamber that’s controlling the processes and energy levels inside of atoms. This is quantum computing, the next frontier of machines that think not in bytes but in powerful qubits. It sounds cutting-edge, but scientists have been studying the theory of quantum computing for 30 years, and some say the first mainstream applications are just around the corner.

How Do Self-Driving Cars See? (And How Do They See Me?)

We’ll start with radar, which rides behind the car’s sheet metal. It’s a technology that has been going into production cars for 20 years now, and it underpins familiar tech like adaptive cruise control and automatic emergency braking. ... The cameras—sometimes a dozen to a car and often used in stereo setups—are what let robocars see lane lines and road signs. They only see what the sun or your headlights illuminate, though, and they have the same trouble in bad weather that you do. But they’ve got terrific resolution, seeing in enough detail to recognize your arm sticking out to signal that left turn. ... If you spot something spinning, that’ll be the lidar. This gal builds a map of the world around the car by shooting out millions of light pulses every second and measuring how long they take to come back. It doesn’t match the resolution of a camera, but it should bounce enough of those infrared lasers off you to get a general sense of your shape. It works in just about every lighting condition and delivers data in the computer’s native tongue: numbers.
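
The lidar description above boils down to a time-of-flight calculation: a pulse travels to the object and back, so the distance is half the round-trip time multiplied by the speed of light. The sketch below is only an illustration of that arithmetic; the function name and the sample timing are assumptions, not details of any particular sensor.

```python
# Speed of light in a vacuum, in meters per second (air is close enough
# for a rough illustration).
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def distance_from_round_trip(round_trip_seconds):
    """Return the one-way distance in meters for a pulse's round-trip time."""
    if round_trip_seconds < 0:
        raise ValueError("round-trip time cannot be negative")
    # The pulse covers the distance twice (out and back), hence the division by 2.
    return SPEED_OF_LIGHT_M_PER_S * round_trip_seconds / 2.0


if __name__ == "__main__":
    # Hypothetical reading: the pulse comes back after 200 nanoseconds.
    print(f"{distance_from_round_trip(200e-9):.2f} m")
```

A pulse that returns after 200 nanoseconds corresponds to an object roughly 30 meters away, which gives a sense of how precise the timing has to be.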

Facial recognition's failings: Coping with uncertainty in the age of machine learning

The shortcomings of publicly available facial-recognition systems were further highlighted in summer this year, when the American Civil Liberties Union (ACLU) tested the AWS Rekognition service. The test found that 28 members of the US Congress were falsely matched with mug shots from publicly available arrest photos. Professor Chris Bishop, director of Microsoft's Research Lab in Cambridge, said that as machine learning technologies were deployed in different real-world locales for the first time it was inevitable there would be complications. "When you apply something in the real world, the statistical distribution of the data probably isn't quite the same as you had in the laboratory," he said. "When you take data in the real world, point a camera down the street and so on, the lighting may be different, the environment may be different, so the performance can degrade for that reason." "When you're applying [these technologies] in the real world all these other things start to matter."

Robots Have a Diversity Problem

It is well-documented that A.I. programs of all stripes inherit the gender and racial biases of their creators on an algorithmic level, turning well-meaning machines into accidental agents of discrimination. But it turns out we also inflict our biases onto robots. A recent study led by Christoph Bartneck, a professor at the Human Interface Technology Lab at the University of Canterbury in New Zealand, found that not only are the majority of home robots designed with white plastic, but we also actually have a bias against the ones that are coated in black plastic. The findings were based on a shooter bias test, in which participants were asked to perceive threat level based on a split-second image of various black and white people, with robots thrown into the mix. Black robots that posed no threat were shot more than white ones. “The only thing that would motivate their bias [against the robots] would be that they would have transferred their already existing racial bias to, let’s say, African-Americans, onto the robots,” Bartneck told Medium. “That’s the only plausible explanation.”

Quote for the day:

"Remember this: Anticipation is the ultimate power. Losers react; leaders anticipate." -- Tony Robbins