Quote for the day:

“Without data, you’re just another person with an opinion.” -- W. Edwards Deming

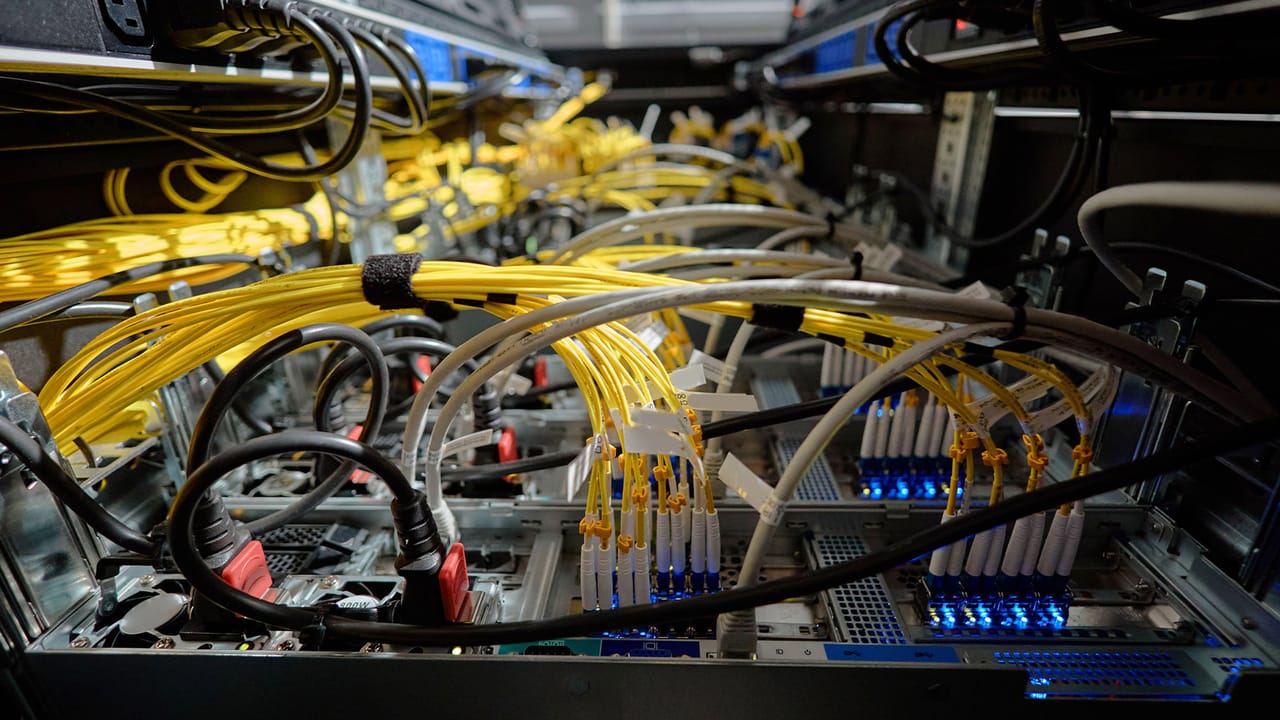

Industry 5.0’s Hidden Challenge: Managing Risk in the Hyperconnected Factory

As manufacturing transitions into Industry 5.0, the focus is shifting from simple automation to deep collaboration between human workers and advanced machinery. While these hyperconnected factories offer significant improvements in efficiency and customization, they also introduce serious, often overlooked vulnerabilities. The core issue lies in the merging of traditional physical equipment with modern internet-connected systems. This integration creates a massive target for cyber threats. When factory floors are wired directly to global networks, a single security breach can do more than steal data; it can halt physical production entirely. Furthermore, because these modern facilities rely on interconnected supply chains, a weakness in a smaller partner’s system can quickly spread to the main operation. Managing these risks requires a shift from reactive problem-solving to building long-term operational resilience. Manufacturers must implement strict security measures, such as dividing networks to contain potential breaches and ensuring constant monitoring of their equipment. More importantly, they need to invest in training their workforce to recognize and respond to these modern threats. Ultimately, as factories become more intelligent and connected, companies must treat security not as a separate IT problem, but as a fundamental part of the manufacturing process to keep operations running smoothly and safely.

Copilot Billing Shock Hits Developers

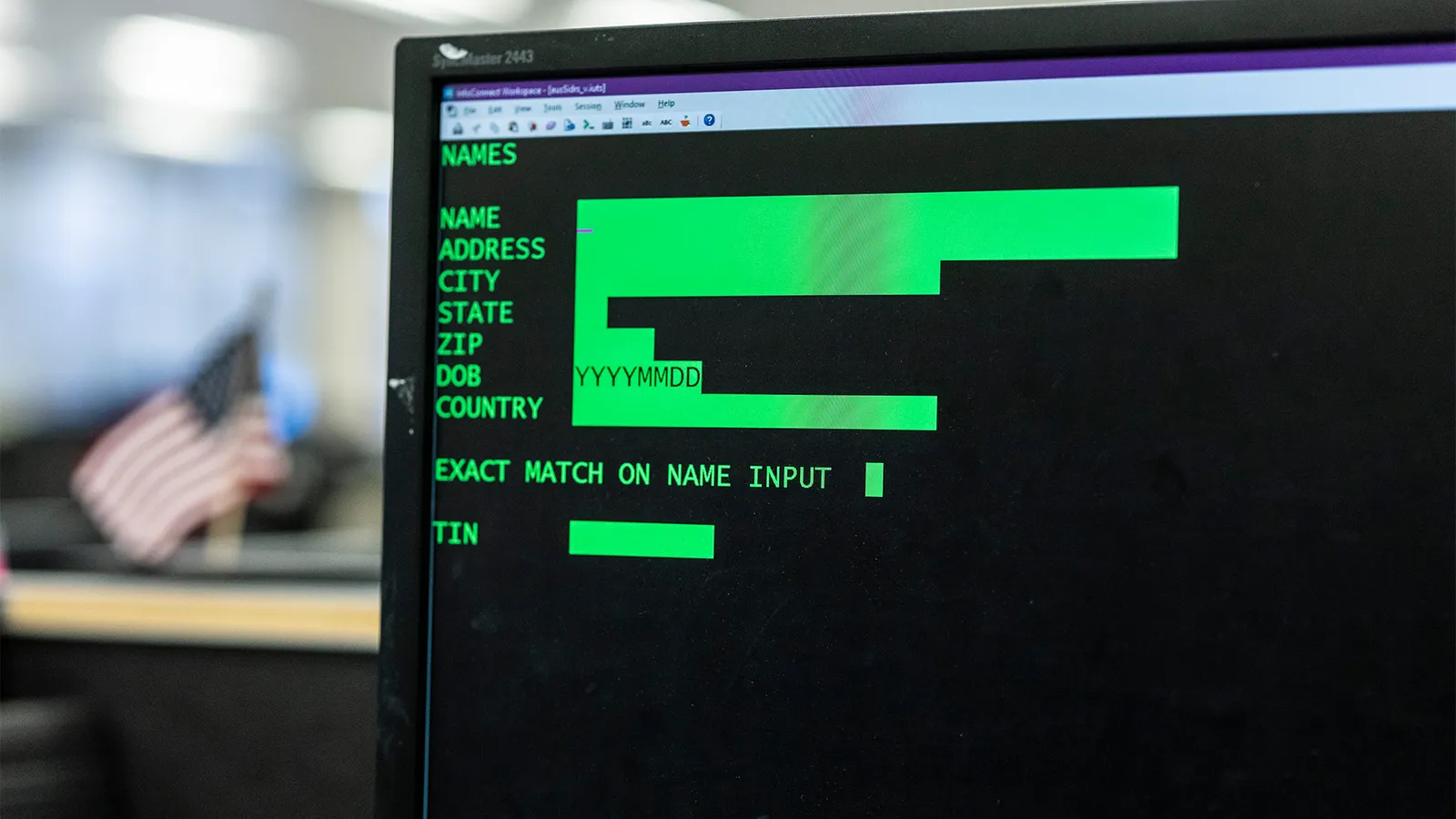

How Risk Management Frameworks Protect Organisations from Insider Threats

When dealing with cybersecurity, organizations frequently focus on external attacks and overlook the risks posed by their own employees, contractors, or vendors. Protecting against these insider threats requires more than just reactive measures; it demands a structured approach rooted in risk management frameworks. Standardized models like NIST or ISO 27001 provide a clear foundation to help organizations systematically identify, assess, and handle vulnerabilities before they result in serious damage. Rather than relying on guesswork, these frameworks encourage practical steps such as mapping user roles, reviewing asset inventories, and carefully analyzing data flow. A critical component is establishing strong governance that clearly defines who is accountable across departments, bridging the gap between IT, human resources, and legal teams. By integrating access controls, organizations can enforce strict permissions so individuals only access the information necessary for their specific roles. Furthermore, utilizing continuous monitoring and behavioral analytics allows security teams to detect unusual activities, such as irregular login times or massive data transfers, long before they escalate. Alongside technical defenses, effective frameworks outline clear incident response plans and emphasize the importance of cultivating a strong security culture. Ultimately, educating staff and fostering an environment where suspicious activity can be reported safely helps businesses maintain solid long-term resilience against internal security risks.
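
The monitoring idea above, flagging irregular login times and massive data transfers, can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `Event` record, the working-hours window, and the 500 MiB threshold are all hypothetical, and a real behavioral analytics tool would more likely learn per-user baselines than use fixed cutoffs.

```python
from dataclasses import dataclass
from datetime import datetime

# Hypothetical thresholds chosen for illustration only.
WORK_START_HOUR = 7
WORK_END_HOUR = 19
MAX_TRANSFER_BYTES = 500 * 1024 * 1024  # 500 MiB


@dataclass
class Event:
    """One observed user session (hypothetical record shape)."""
    user: str
    timestamp: datetime
    bytes_transferred: int


def flag_event(event: Event) -> list[str]:
    """Return the reasons, if any, that an event looks unusual."""
    reasons = []
    if not (WORK_START_HOUR <= event.timestamp.hour < WORK_END_HOUR):
        reasons.append("login outside usual hours")
    if event.bytes_transferred > MAX_TRANSFER_BYTES:
        reasons.append("unusually large data transfer")
    return reasons


events = [
    Event("alice", datetime(2024, 5, 6, 10, 15), 20 * 1024 * 1024),
    Event("bob", datetime(2024, 5, 7, 2, 40), 900 * 1024 * 1024),
]

# Keep only the users whose events triggered at least one rule.
flagged = {e.user: flag_event(e) for e in events if flag_event(e)}
print(flagged)
```

Fixed rules like these are easy to audit but noisy; they are best read as a starting point for the kind of review the frameworks call for, not as a detection system in their own right.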

Segment With Purpose: A Zero Trust Blueprint For OT Network Segmentation In Manufacturing

Is the data center industry ready to change for the coming of the 1MW rack?

The data center industry is debating a major infrastructure shift: moving to one-megawatt server racks powered by 800-volt direct current systems. Historically, facilities have relied on alternating current power and managed rack densities averaging around 15 kilowatts. However, as artificial intelligence applications demand increasingly powerful hardware, companies like Nvidia are projecting the need for one-megawatt racks by 2028. Because traditional power systems hit practical capacity limits near 400 kilowatts due to cable congestion and space constraints, achieving this extreme density requires a fundamental redesign toward high-voltage direct current distribution. In the near term, operators might adapt by installing separate power sidecars next to standard racks, but eventually, entire facilities could require ground-up direct current electrical architectures. Despite these projections, industry experts question whether the broader market should undergo such an expensive overhaul based primarily on one company's product roadmap. While top-tier tech firms training massive models will certainly require this capability, other hardware developers are already focusing on more energy-efficient specialist chips. Additionally, as artificial intelligence matures, everyday tasks like answering questions or generating text will likely run on less demanding equipment. Ultimately, building completely redesigned data centers may prove lucrative for early adopters, but over-engineering facilities for a niche scenario could be highly risky for most operators.
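
A rough calculation helps show why distribution voltage matters for the cable congestion mentioned above. For a DC load, current equals power divided by voltage, so raising the voltage lowers the current the conductors must carry. The sketch below uses the one-megawatt and 800-volt figures from the summary; the 48-volt comparison is an assumption added for contrast, not a figure from the article, and conversion losses are ignored.

```python
def dc_current_amps(power_watts: float, voltage_volts: float) -> float:
    """Current drawn by a DC load: I = P / V (losses ignored)."""
    return power_watts / voltage_volts


RACK_POWER_W = 1_000_000  # one-megawatt rack, from the summary
HVDC_VOLTS = 800          # 800-volt DC distribution, from the summary
LOW_VOLTS = 48            # assumed comparison voltage, not from the article

hvdc_amps = dc_current_amps(RACK_POWER_W, HVDC_VOLTS)
low_amps = dc_current_amps(RACK_POWER_W, LOW_VOLTS)

print(f"At {HVDC_VOLTS} V: {hvdc_amps:.0f} A")
print(f"At {LOW_VOLTS} V: {low_amps:.0f} A")
```

Under these assumptions the same rack draws 1,250 A at 800 V but roughly 20,800 A at 48 V, which gives a sense of how quickly conductor size and cabling volume grow at lower voltages.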

The cost of rebuilding talent now exceeds the cost of retaining it

Your outsourcing contract needs XLAs, not just SLAs

When outsourcing IT services, traditional service level agreements (SLAs) are no longer sufficient because they only measure technical processes rather than actual human outcomes. While SLAs ensure baseline operational standards, like system uptime or ticket resolution speed, they often fail to capture whether employees actually feel supported or can efficiently do their jobs. To bridge this gap, organizations must incorporate experience level agreements (XLAs) into their vendor contracts. XLAs shift the focus toward tangible user outcomes, tracking metrics such as employee satisfaction, lost productivity time, ease of accessing support, and overall confidence in IT services. Introducing XLAs does not mean abandoning SLAs. Instead, the two work together to provide a complete picture of IT performance. To implement XLAs successfully, companies and providers need a shared baseline of current employee experience data. Contracts can then require fixed satisfaction scores, continuous metric improvements, or the creation of an experience measurement infrastructure by the provider. For these agreements to work, total transparency is essential; hiding poor scores destroys the accountability the model relies upon. Ultimately, moving to an XLA model represents a significant shift in how companies define IT value. Unless you explicitly demand better employee experiences in your outsourcing contracts, service providers are unlikely to prioritize them over basic technical compliance.
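
Two of the contract styles described above, a fixed score and continuous improvement against a shared baseline, could be checked along the following lines. This is a minimal sketch with made-up metric names and numbers; the `XlaMetric` type and both functions are hypothetical and do not come from the article or from any XLA standard.

```python
from dataclasses import dataclass


@dataclass
class XlaMetric:
    """One experience metric tracked under the contract (hypothetical shape)."""
    name: str
    baseline: float               # shared baseline agreed before the contract starts
    current: float                # latest measured value
    higher_is_better: bool = True


def meets_fixed_target(metric: XlaMetric, target: float) -> bool:
    """Fixed-score clause: the metric must reach an agreed target."""
    if metric.higher_is_better:
        return metric.current >= target
    return metric.current <= target


def improved_over_baseline(metric: XlaMetric) -> bool:
    """Improvement clause: the metric must be better than the baseline."""
    if metric.higher_is_better:
        return metric.current > metric.baseline
    return metric.current < metric.baseline


# Illustrative values only.
satisfaction = XlaMetric("employee_satisfaction", baseline=3.6, current=4.1)
lost_minutes = XlaMetric(
    "lost_productivity_minutes", baseline=45.0, current=50.0, higher_is_better=False
)

for metric in (satisfaction, lost_minutes):
    print(metric.name, "improved:", improved_over_baseline(metric))
```

Storing the baseline next to each current value reflects the summary's point about transparency: both parties can see the same numbers, including the ones that got worse.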

Context as Code - Build-time governance in the era of infinite syntax

In his article on context as code, Artur Huk explores the hidden costs of relying on artificial intelligence to rapidly generate software. Today, automated tools produce working code at incredible speeds, optimizing for quick feature delivery rather than long-term maintainability. Because these systems are designed to always fulfill a user's immediate request, they often bypass established design rules. For instance, an AI might inappropriately force new features directly into critical systems instead of following careful organizational patterns, creating software that works today but becomes a tangled liability tomorrow. Huk points out that we are losing a crucial historical defense mechanism. In the past, compilers acted as rigid gatekeepers that prevented fundamental errors before a program could even run. Now, human language acts as our control system, blurring the line between safe instructions and unpredictable data. This shifts significant risk away from the building phase directly to the live environment. To regain control, Huk suggests we must enforce strict constraints before the code is ever generated. Rather than relying on massive, complex libraries that hide how systems actually work, teams should build clear, transparent structures. By setting firm boundaries and effectively teaching AI tools when to say no, organizations can safely use automated generation without sacrificing their future stability.

Think Inside The Box: How Constraints Can Unleash Your Creativity And Unlock Decision Making

Empowering employees with autonomy over how they execute their tasks is one of the most effective ways to build engagement, pride, and accountability. While leaders often assign specific responsibilities, dictating every step of the process can suppress independent problem solving and create a workforce that simply waits for instructions. On the other hand, many managers hesitate to offer complete freedom due to the genuine financial, reputational, or regulatory risks involved in their operations. To balance these competing needs, organizations should implement a sandbox approach to decision making. In this model, leaders establish clear constraints that represent the acceptable limits of risk, forming the boundaries of the sandbox. Once these rigid parameters are defined, employees are given the full authority to experiment and find the best solutions within that secure space. Building this environment requires three straightforward steps: clearly outlining the goals, communicating the strict boundaries, and stepping back to let employees determine their own methods. Because the parameters can be adjusted for different roles or projects, this structured autonomy protects the company while still fostering innovation at every level. Ultimately, when people understand their limits but have the freedom to navigate within them, they are far more likely to produce meaningful work and deliver better outcomes for the organization.
