Quote for the day:

“If you don’t have a competitive advantage, don’t compete.” -- Jack Welch

Security Is Blocking AI Adoption: Is BYOC the Answer?

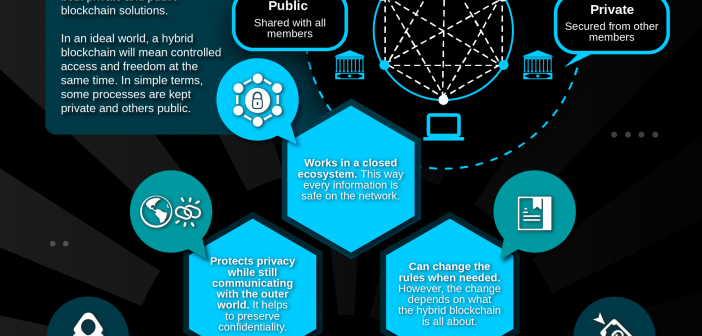

Enterprises face unique hurdles in adopting AI at scale. Sensitive data must

remain within secure, controlled environments, avoiding public networks or

shared infrastructures. Traditional SaaS models often fail to meet these

stringent data sovereignty and compliance demands. Beyond this, organizations

require granular control, comprehensive auditing and full transparency to trace

every AI decision and data access. This ensures vendors cannot interact with

sensitive data without explicit approval and documentation. These unmet needs

create a significant gap, preventing regulated industries from deploying AI

solutions while maintaining compliance and security. ... The concept of Bring

Your Own Cloud (BYOC) isn’t new. It emerged as a middle ground between

traditional SaaS and on-premises deployments, promising to combine the best of

both worlds: the convenience of managed services with the control and security

of on-premises infrastructure. However, its history in the industry has been

marked by both successes and cautionary tales. Early BYOC implementations often

failed to live up to their promises. Some vendors merely deployed their software

into customer cloud accounts without proper architectural planning, resulting in

what were essentially remotely managed on-premises environments.

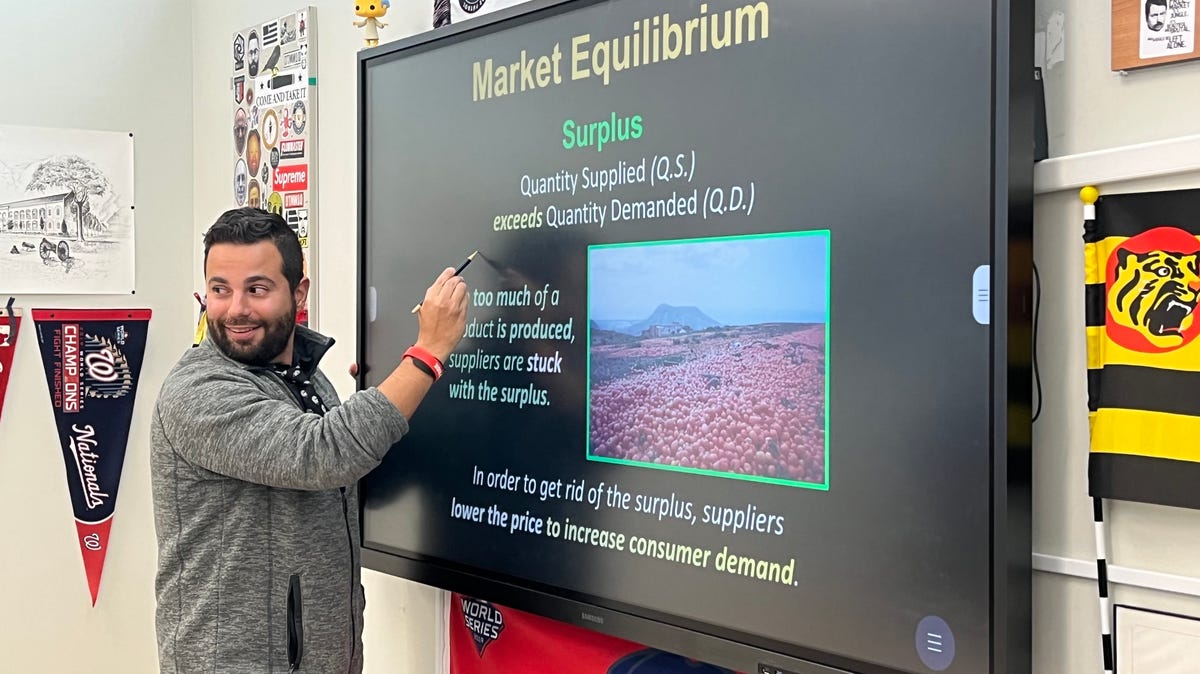

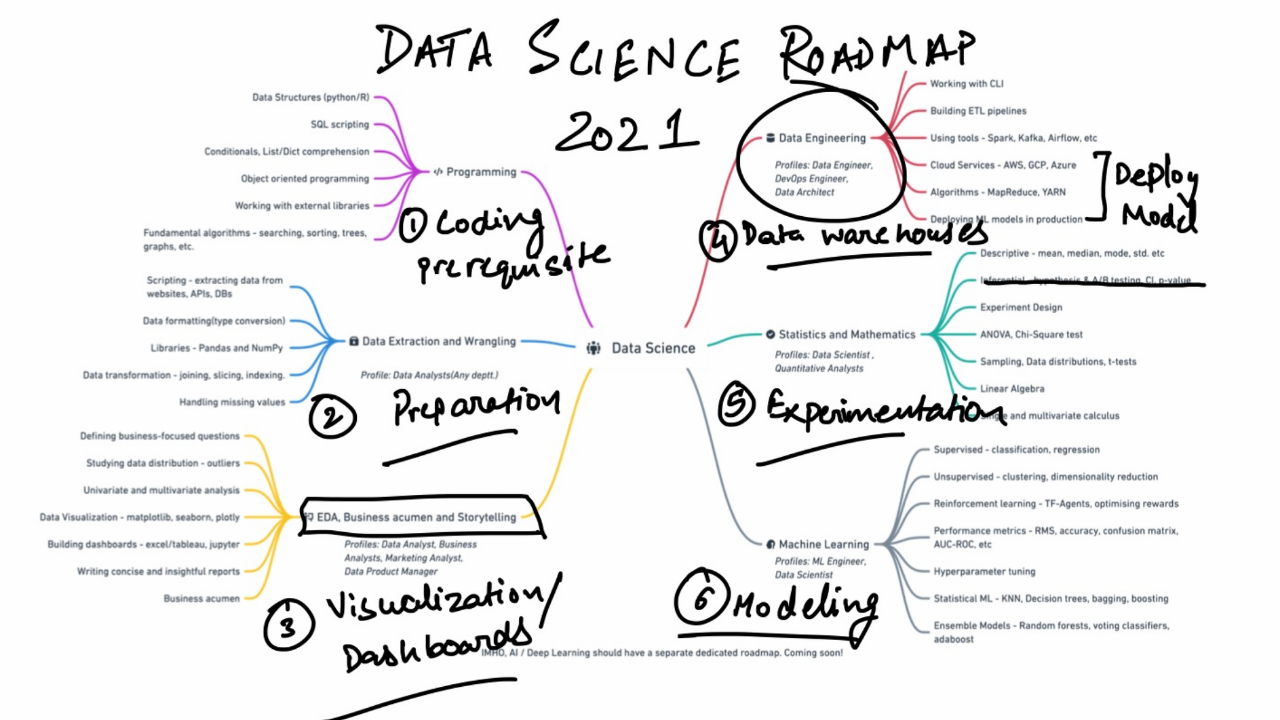

The Importance of Continuing Education in Data and Tech

Continuing education plays a vital role in workforce development and career

advancement within the tech industries, where rapid technological advancements

and evolving market demands necessitate a culture of lifelong learning. As

businesses increasingly rely on sophisticated data analytics, artificial

intelligence (AI), and cloud technologies, professionals in these fields must

continuously update their skills to remain competitive. Continuing education

offers a pathway for individuals to acquire new capabilities, adapt to emerging

technologies, and gain proficiency in specialized areas that are in high demand.

By engaging in ongoing learning opportunities, tech professionals can enhance

their expertise, making them more valuable to their current employers and more

attractive to potential future ones. ... Professional certifications and

competency-based education have become significant avenues for career

advancement in the data and tech field. As the landscape of technology rapidly

evolves, organizations increasingly seek professionals who possess validated

skills and up-to-date knowledge. Professional certifications serve as tangible

proof of one’s expertise in specific areas such as data governance, analytics,

cybersecurity, or cloud computing. These certifications, offered by leading

industry bodies and tech companies, are designed to align with current industry

standards and demands.

Agents, shadow AI and AI factories: Making sense of it all in 2025

“Agentic AI” promises “digital agents” that learn from us, and can perceive,

reason problems out in multiple steps and then make autonomous decisions on our

behalf. They can solve multilayered questions that require them to interact with

many other agents, formulate answers and take actions. Consider forecasting

agents in the supply chain predicting customer needs by engaging customer

service agents, and then proactively adjusting warehouse stock by engaging

inventory agents. Every knowledge worker will find themselves gaining these

superhuman capabilities backed by a team of domain-specific task agent workers

helping them tackle large complex jobs with less expended effort. ... However,

the proliferation of generative, and soon agentic, AI presents a growing problem

for IT teams. Maybe you’re familiar with “shadow IT,” where individual

departments or users procure their own resources, without IT knowing. In today’s

world we have “shadow AI,” and it’s hitting businesses on two fronts. ...

Today’s enterprises create value through insights and answers driven by

intelligence, setting them apart from their competitors. Just as past industrial

revolutions transformed industries — think about steam, electricity, internet

and later computer software — the age of AI heralds a new era where the

production of intelligence is the core engine of every business.

Is VMware really becoming the new mainframe?

“CIOs can start to unwind their dependence on VMware,” he says. “But they need

to know it may not have any material reduction in their spend with Broadcom over

multiple renewals. They’re going to have to get completely off Broadcom.” Still,

Warrilow recommends that CIOs running VMware consider alternatives over the long

term. They should also look for exit strategies for other market-dominant IT

products they use, given that Broadcom has seen early success with VMware, he

says. “The cautionary tale for CIOs is that this is just the beginning,” he

says. “Every tech investment firm is going to be saying, ‘I want what Broadcom

has with their share price.’” ... “The comparison works a bit, maybe from a

stickiness perspective, because customers have built their applications and

workload using virtualization technology on VMware,” he says. “When they have to

do a mass refactoring of applications, it’s very, very hard.” But the analogy

has its limitations because many users think of mainframes as a legacy

technology, while VMware’s cloud-based products address future challenges, he

adds. “The cloud is the future for running your AI workload,” Shenoy says.

“Customers have trusted us for the last 20 to 25 years to run their

business-critical applications, and the interesting part right now is we are

seeing a lot of growth of these AI workloads and container workloads running on

VMware.”

Deep Learning – a Necessity

It is essential in architecture that we realize that a skill set is not an

arbitrary thing. It isn’t learn one skill and you are done. It also isn’t learn

any skill from any background and you’re in. It is the application of all of the

identified and necessary skills combined that makes a distinguished architect.

It is also important to understand the purpose and context of mastery. Working

in a startup is very different from working in a large corporation. Industry can

change things significantly as well. Always remember that the profession’s

purpose has to be paramount in the learning. For example, both doctors and

lawyers have to deal with clients and need human interaction skills to be

successful. Yet, the nature and implementation of these differ drastically. We

will explore this point in a further article. However, do not underestimate the

impact of changing the meaning of the profession while claiming similar skills.

The current environment is rife with this kind of co-opting of the terminology

and tools to alter the whole purpose of architecture fundamentally. ... In

medicine and other professions, an individual studies and practices for 7+ years

to become fully independent, and they never stop learning. This learning is

tracked by both mentors and the profession. Because medicine is so essential to

humans, it is important that professionals are measured and constantly update and

hone their competencies.

Crawl, then walk, before you run with AI agents, experts recommend

The best bet for percolating AI agents throughout the organization is to keep

things as simple as possible. "Companies and employees that have already found

ways to operationalize intelligent agents for simple tasks are best placed to

exploit the next wave with agentic AI," said Benjamin Lee, professor of computer

and information science at the University of Pennsylvania. "These employees

would already be engaging generative AI for simple tasks and they would be

manually breaking complex tasks into simpler tasks for the AI. Such employees

would already be seeing productivity gains from using generative AI for these

simple tasks." Rowan agreed that enterprises should adopt a crawl, walk, run

approach: "Begin with a pilot program to explore the potential of multiagent

systems in a controlled, measurable environment." "Most people say AI is at the

toddler stage, whereas agentic AI is like a tween," said Ben Sapp, global

practice lead of intelligence at Digital.ai. "It's functional and knows how to

execute certain functions." Enterprises and their technology teams "should

socialize the use of generative AI for simple tasks within their organizations,"

Lee continued. "They should have strategies for breaking complex tasks into

simpler ones so that, when intelligent agents become a reality, the sources of

productivity gains are transparent, easily understood, and trusted."

Growth of digital wallet use shaking up payment regulations and benefits delivery

Australian banks are calling on the government to pass legislation that

accommodates payments with digital wallets within the country’s regulatory

framework. A release from the Australian Banking Association (ABA) argues that

with the country’s residents making $20 billion worth of payments across 500

million transactions each month with mobile wallets, all players within the

payment ecosystem should be under the remit of the Reserve Bank of Australia.

... Digital wallets are by far the most popular method of making cross-border

payments, according to a new report from Payments Cards & Mobile. The How

Digital Wallets Are Transforming Cross-Border Transactions report shows digital

wallets are chosen for international transactions by 42.1 percent of people. That makes

them more popular than the next two most popular methods, money transfer services

(16.8 percent) and bank accounts (14.8 percent) combined. Transactions with

digital wallets are much faster than wire transfers, are available to people who

don’t possess bank accounts, and have lower fees than bank transfers, the report

says. Interoperability remains a challenge, and regulations and infrastructure

limitations could pose barriers to adoption, but the report authors only expect

the dominance of digital wallets to increase in the years ahead.

My vision is to create a digital twin of our entire operations, from design and manufacturing to products and customers

We approach this transformation from three dimensions. First is empathy – truly

understanding not just who our customers are, but their emotions. This is where

the concept of creating a ‘digital twin’ of the customer comes in. Second is

innovation – not just adopting new technologies but ensuring that our processes

are lean, digitised, and seamless throughout the customer journey, from research

to purchase, service, and brand loyalty. The goal is to provide a consistent and

empathetic experience across all touchpoints. ... The first challenge is

identifying our customers. For example, if a distributor in one business also

buys from another or if a consumer connects with one of our industrial projects,

it’s hard to track. To address this, we launched a customer UID project, which

has been in progress for months. It helps us identify customers across channels

while keeping an eye on privacy and adhering to upcoming data protection

regulations. The second part involves gathering all customer-related data in one

place. Over the past three years, we unified all customer interactions into a

single platform with a “one CRM” strategy, which was complex but essential. Now,

with AI solutions like social listening combined with sentiment analysis, we can

understand what our customers are saying about us and where we need to improve,

both in India and globally.

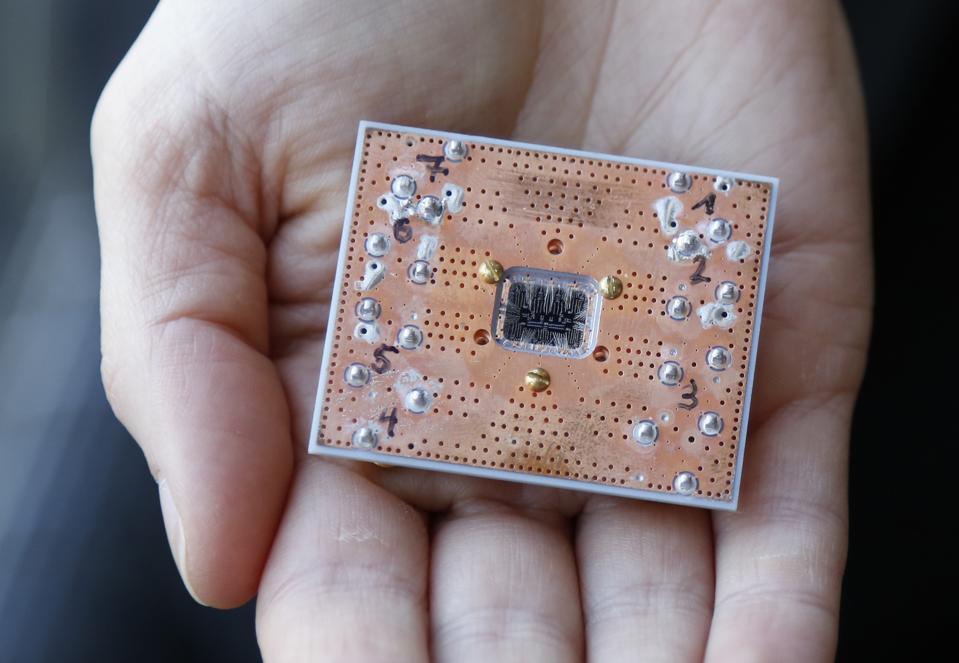

Will AI Chip Supply Dry Up and Turn Your Project Into a Costly Monster?

CIOs and other IT leaders face tremendous pressure to quickly develop GenAI

strategies in the face of a potential supply shortage. Given the high cost of

individual units, spending can easily reach into the multi-million-dollar range.

But it wouldn’t be the first time companies have dealt with semiconductor

shortages. During the COVID-19 pandemic, a spike in PC demand for remote work

met with global shipping disruptions to create a chip drought that impacted

everything from refrigerators to automobiles and PCs. “One thing we learned was

the importance of supply chain resiliency, not being overly dependent on any one

supplier and understanding what your alternatives are,” Hoecker says. “When we

work with clients to make sure they have a more resilient supply chain, we

consider a few things … One is making sure they rethink how much inventory do

they want to keep for their most critical components so they can survive any

potential shocks.” She adds, “Another is geographic resiliency, or understanding

where your components come from and do you feel like you’re overly exposed to

any one supplier or any one geography.” Nvidia’s GPUs, she notes, are harder to

find alternatives for -- but other chips do have alternatives. “There are other

places where you can dual-source or find more resiliency in your marketplace.”

WTF? Why the cybersecurity sector is overrun with acronyms

Imagine an organization is in the midst of a massive hack or security breach,

and employees or clients are having to Google frantically to translate company

emails, memos or crisis plans, slowing down the response. When these acronyms

inevitably migrate into a cybersecurity company’s external marketing or

communications efforts, they’re almost guaranteed to cause the general public to

tune out news about issues and innovations that could have a far-reaching impact

on how people live their lives and conduct their businesses. This is especially

true as artificial intelligence (AI!) and machine learning (ML!) technologies

expand and new acronyms emerge to keep pace with developments. Acronyms can also

have unfortunate real-life connotations — point of sale, to name just one

example. When shortened to POS, it can suggest something is… well, crappy. ...

So, what’s behind the tendency to shorten terms to a jumble of often

incomprehensible acronyms and abbreviations? “On the one hand, acronyms,

abbreviations and jargon are used to achieve brevity, standardization and

efficiency in communication, so if a profession is steeped in complex and

technical language, it will likely be flowing with acronyms,” says Ian P.

McCarthy, a professor of innovation and operations management at Simon Fraser

University in Burnaby, British Columbia.
