Quote for the day:

“The greatest leader is not necessarily the one who does the greatest things. He is the one that gets the people to do the greatest things.” -- Ronald Reagan

Where to begin a cloud career

Starting a career in cloud computing often seems daunting due to perceived

barriers like expensive boot camps and complex certifications, but David

Linthicum argues that the best entry point is actually through free

foundational courses. These no-cost resources allow beginners to gain

essential orientation, learning vital concepts such as infrastructure,

elasticity, and governance without financial risk. Major providers like AWS,

Microsoft Azure, and Google Cloud offer these learning paths to cultivate a

skilled ecosystem of future professionals. By utilizing these introductory

materials, learners can compare different platforms to see which best aligns

with their career goals — such as choosing Azure for enterprise Windows

environments or AWS for startup versatility — before committing to a specific

specialization. Linthicum emphasizes that these courses provide a structured

progression from broad terminology to mental models, which is more effective

than jumping straight into technical tools. Furthermore, he highlights that

cloud careers are accessible even to those without coding backgrounds,

including roles in security, project delivery, and business analysis. The

ultimate strategy is to treat free courses as a launchpad for momentum; by

finishing introductory training across multiple providers, aspiring

professionals can build the necessary breadth and confidence to pursue more

advanced hands-on labs and role-based certifications later.

Cybersecurity Risks Related to the Iran War

In the article "Cybersecurity Risks Related to the Iran War," authors Craig Horbus and Ryan Robinson explore how modern geopolitical tensions between Iran, the United States, and Israel have expanded into a parallel digital battlefield. As conventional military operations escalate, cybersecurity experts and regulators warn that financial institutions and critical infrastructure are facing heightened risks from state-sponsored actors and affiliated hacktivists. Groups like "Handala" have already demonstrated their disruptive capabilities by targeting energy companies and medical providers, using techniques such as DDoS attacks, data-wiping malware, and sophisticated phishing campaigns. These adversaries target the financial sector primarily to cause widespread economic instability, erode public confidence, and secure funding for hostile activities through fraudulent transfers or ransomware. Consequently, regulatory bodies like the New York Department of Financial Services are urging institutions to adopt more robust cyber resilience strategies. This includes intensifying network monitoring, enhancing authentication protocols, and strengthening third-party vendor risk management. The article emphasizes that cybersecurity is no longer merely a technical IT concern but a critical legal and strategic obligation. Ensuring that incident response plans can withstand nation-state level threats is essential for maintaining global economic stability in an increasingly volatile digital landscape where physical conflicts and cyber warfare are now inextricably linked.

Vector Database - A Deep Dive

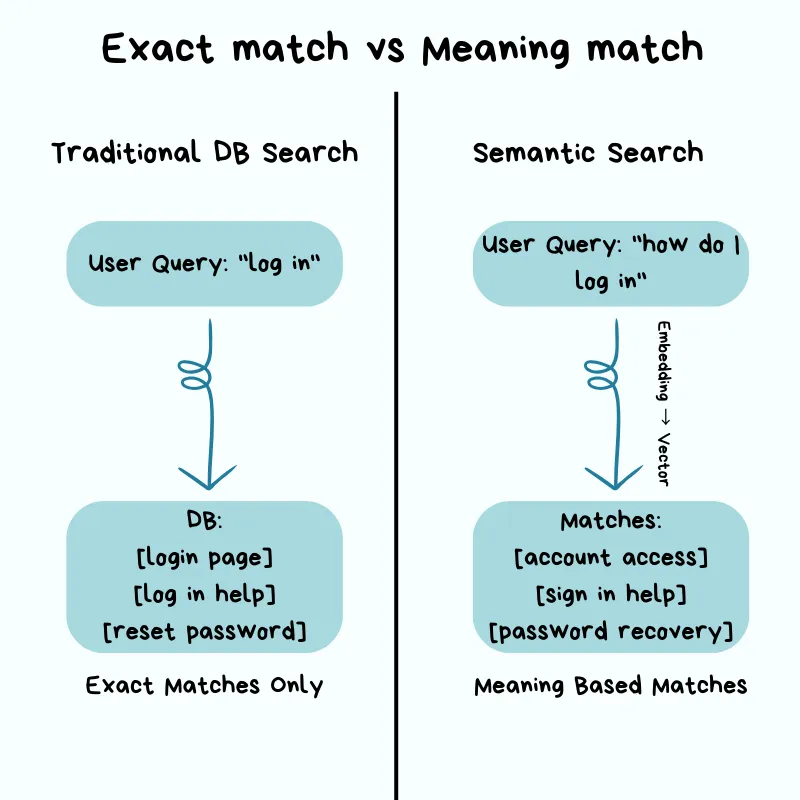

Vector databases represent a specialized class of data management systems

engineered to efficiently store, index, and retrieve high-dimensional vector

embeddings, which are numerical representations of unstructured data like

text, images, and audio. Unlike traditional relational databases that rely on

exact keyword matches and structured schemas, vector databases leverage the

"meaning" of data by measuring the mathematical distance between vectors in a

multi-dimensional space. This enables powerful semantic search capabilities

where the system identifies items with conceptual similarities rather than

just literal overlaps. At their core, these databases utilize embedding models

to transform raw information into dense vectors, which are then organized

using specialized indexing algorithms such as Hierarchical Navigable Small

World (HNSW) or Inverted File Index (IVF). These techniques facilitate

Approximate Nearest Neighbor (ANN) searches, allowing for rapid retrieval

across billions of data points with minimal latency. Consequently, vector

databases have become the foundational "long-term memory" for modern AI

applications, particularly in Retrieval-Augmented Generation (RAG) workflows

and recommendation engines. By bridging the gap between raw unstructured data

and machine-interpretable context, they empower developers to build

intelligent, scalable systems that can understand and process information at a

more human-like level of nuance and complexity, while handling massive

datasets through horizontal scaling and efficient sharding strategies.
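
The idea of ranking items by vector distance can be illustrated with a minimal sketch. It assumes tiny hand-written 3-dimensional "embeddings" (real embedding models produce hundreds or thousands of dimensions) and uses an exact brute-force scan with cosine similarity. Indexes such as HNSW or IVF exist to approximate this same result without scanning every vector. The names `cosine_similarity`, `nearest`, and `index` are illustrative, not taken from any particular product.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def nearest(query, index, k=2):
    """Exact top-k search by brute force; ANN indexes approximate this."""
    scored = [(cosine_similarity(query, vec), key) for key, vec in index.items()]
    scored.sort(reverse=True)  # highest similarity first
    return [key for _, key in scored[:k]]


# Hypothetical embeddings, made up for illustration only.
index = {
    "cat": [0.9, 0.1, 0.0],
    "kitten": [0.8, 0.2, 0.1],
    "invoice": [0.0, 0.1, 0.9],
}

# A query vector close to the "cat" region of the space.
print(nearest([0.85, 0.15, 0.05], index))
```

The query shares no keyword with any stored item, yet the two conceptually related entries rank first because their vectors point in nearly the same direction.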

Reimagining tech infrastructure for (and with) agentic AI

The rapid evolution of agentic AI is compelling chief technology officers to fundamentally reimagine IT infrastructure, moving beyond traditional support layers toward a modular, "mesh-like" backbone that orchestrates autonomous agents. As AI workloads expand, organizations face a critical dual challenge: infrastructure costs are projected to triple by 2030 while budgets remain stagnant, necessitating a shift where AI is used to manage the very systems it inhabits. Successfully scaling agentic AI requires building "agent-ready" foundations characterized by composability, secure APIs, and robust governance frameworks that ensure accountability. High-value impacts are already surfacing in areas like service desk operations, observability, and hosting, where agents can automate up to 80 percent of routine tasks, potentially reducing run-rate costs by 40 percent. This transition demands a significant cultural and operational pivot, shifting the role of IT professionals from manual ticket-based troubleshooting to the supervision and architectural design of intelligent systems. By integrating these autonomous entities into a coherent backbone, enterprises can bridge the gap between experimentation and enterprise-wide scale, transforming infrastructure from a reactive cost center into a dynamic platform for innovation. Those who embrace this agentic shift will secure a significant advantage in speed, resilience, and economic efficiency in the AI-driven era.

Quantum-Safe Security: How Enterprises Can Prepare for Q-Day

The article explores the critical necessity for enterprises to

transition toward quantum-safe security to mitigate the existential threats

posed by future quantum computers. Traditional encryption methods, such as RSA

and ECC, are increasingly vulnerable to advanced quantum algorithms, most

notably Shor’s algorithm, which can efficiently solve the complex mathematical

problems that currently protect digital infrastructure. A particularly urgent

concern highlighted is the "harvest now, decrypt later" strategy, where

adversaries collect encrypted sensitive data today with the intention of

deciphering it once powerful quantum technology becomes commercially

available. To defend against these emerging risks, the article outlines a

strategic preparation roadmap for organizations. This involves achieving

"crypto-agility"—the ability to rapidly switch cryptographic standards—and

conducting comprehensive inventories of current encryption usage across all

systems. Furthermore, enterprises are encouraged to align with evolving NIST

standards for post-quantum cryptography (PQC) and prioritize the protection of

high-value, long-term assets. By integrating these quantum-resistant

algorithms into their security architecture now, businesses can ensure

long-term data confidentiality, maintain regulatory compliance, and

future-proof their digital operations against the impending "quantum

apocalypse." This proactive shift is presented not merely as a technical

update, but as a fundamental requirement for maintaining trust and operational

continuity in a post-quantum world.
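
The inventory and prioritization steps described above can be sketched roughly as follows. This is an illustrative outline under stated assumptions, not the article's method: the system names and retention periods are invented, the "vulnerable" set lists public-key families that Shor's algorithm targets, and the "post-quantum" set lists the algorithm names from the NIST standards FIPS 203, 204, and 205. Anything in neither set is flagged for manual review rather than judged.

```python
# Assumption: public-key families that Shor's algorithm would break.
QUANTUM_VULNERABLE = {"RSA", "ECDSA", "ECDH", "DH", "DSA"}
# Algorithm names from NIST's post-quantum standards (FIPS 203, 204, 205).
POST_QUANTUM = {"ML-KEM", "ML-DSA", "SLH-DSA"}


def classify(algorithm):
    """Label an algorithm name as 'migrate', 'quantum-safe', or 'review'."""
    name = algorithm.upper()
    if name in QUANTUM_VULNERABLE:
        return "migrate"
    if name in POST_QUANTUM:
        return "quantum-safe"
    return "review"  # not in either list; needs manual assessment


def migration_plan(inventory):
    """Return systems needing migration, longest data retention first."""
    at_risk = [s for s in inventory if classify(s["algorithm"]) == "migrate"]
    return sorted(at_risk, key=lambda s: s["retention_years"], reverse=True)


# Hypothetical inventory entries, for illustration only.
inventory = [
    {"system": "vpn-gateway", "algorithm": "ECDH", "retention_years": 1},
    {"system": "records-archive", "algorithm": "RSA", "retention_years": 25},
    {"system": "pilot-service", "algorithm": "ML-KEM", "retention_years": 10},
    {"system": "backup-store", "algorithm": "AES-256", "retention_years": 7},
]

for entry in migration_plan(inventory):
    print(entry["system"], entry["algorithm"], entry["retention_years"])
```

Sorting by retention period reflects the "harvest now, decrypt later" concern: data that must stay confidential the longest is the most exposed to future decryption, so it is listed first.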

Your Disaster Recovery Plan Doesn’t Account for AI Agents. It Should

The article "Your Disaster Recovery Plan Doesn’t Account for AI Agents. It

Should" highlights a critical gap in contemporary business continuity

strategies as enterprise adoption of agentic AI accelerates. While Gartner

predicts a massive surge in AI agents embedded within applications by 2026,

many organizations still rely on legacy governance frameworks that operate at

human speeds. These traditional models are ill-equipped for autonomous agents

that execute thousands of data accesses instantly, often bypassing standard

security alerts. Unlike traditional technical failures with clear timestamps,

AI governance failures are often "silent," characterized by over-permissioned

agents accessing sensitive datasets over long periods. This leads to an

exponential increase in the "blast radius" of potential breaches across cloud

and on-premises environments. To mitigate these risks, the author advocates

for machine-speed governance that utilizes dynamic, context-aware access

controls and just-in-time permissions. By embedding governance directly into

the architecture, organizations can transform it from a deployment bottleneck

into a recovery accelerant. Such an approach provides the immutable audit

trails necessary to drastically reduce the 100-day recovery window typically

associated with AI-related incidents. Ultimately, robust governance is

presented not as a constraint, but as a prerequisite for sustaining resilient

AI innovation.
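
To make "just-in-time permissions" concrete, here is a minimal sketch of time-limited grants with an audit trail. It is an assumption-laden illustration rather than the approach of any specific product: the class `JitAccess`, the agent and resource names, and the 60-second lifetime are all hypothetical, and the audit log is a plain in-memory list, whereas a real system would need tamper-resistant storage to call it immutable.

```python
import time
from dataclasses import dataclass


@dataclass(frozen=True)
class Grant:
    agent: str
    resource: str
    expires_at: float


class JitAccess:
    """Hypothetical just-in-time access checker with an append-only log."""

    def __init__(self, clock=time.monotonic):
        self._clock = clock  # injectable so expiry can be demonstrated
        self._grants = []
        self.audit_log = []

    def grant(self, agent, resource, ttl_seconds):
        """Give an agent access to one resource for a limited time."""
        g = Grant(agent, resource, self._clock() + ttl_seconds)
        self._grants.append(g)
        self.audit_log.append(("grant", agent, resource))
        return g

    def is_allowed(self, agent, resource):
        """Allow only if an unexpired grant matches; record every decision."""
        now = self._clock()
        allowed = any(
            g.agent == agent and g.resource == resource and g.expires_at > now
            for g in self._grants
        )
        self.audit_log.append(("allow" if allowed else "deny", agent, resource))
        return allowed


# Demonstration with a fake clock so the example does not need to sleep.
fake_now = [0.0]
access = JitAccess(clock=lambda: fake_now[0])
access.grant("report-agent", "customer-db", ttl_seconds=60)

print(access.is_allowed("report-agent", "customer-db"))  # within the window
fake_now[0] = 61.0
print(access.is_allowed("report-agent", "customer-db"))  # after expiry
print(access.audit_log)
```

Because permissions lapse on their own, an over-permissioned agent cannot keep reading a dataset indefinitely, and the log records each decision at the moment it is made.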

Cloud Native Platforms Transforming Digital Banking

The financial services industry is undergoing a profound structural revolution as traditional banks transition from rigid, monolithic legacy systems to agile, cloud-native architectures. This shift is centered on the adoption of microservices and containerization, allowing institutions to break down complex applications into independent, modular components. Such an approach enables rapid deployment of updates and innovative fintech services without disrupting core operations, ensuring established banks can effectively compete with nimble startups. Beyond mere speed, cloud-native platforms offer superior security through "Zero Trust" models and immutable infrastructure, which mitigate risks like configuration errors and persistent malware. Furthermore, the integration of open banking APIs and real-time payment processing transforms banks into central hubs within a broader digital ecosystem, providing customers with instant, seamless financial experiences. The scalability of the cloud also provides a robust foundation for Artificial Intelligence, facilitating hyper-personalized "predictive banking" that anticipates user needs. Ultimately, by embracing cloud computing, financial institutions are not only automating compliance through "Policy as Code" but are also building a flexible, future-proof foundation capable of incorporating emerging technologies like blockchain and quantum computing to meet the demands of the modern global economy.

Turning security into a story: How managed service providers use reporting to drive retention and revenue

Managed Service Providers (MSPs) often struggle to prove their value because effective cybersecurity is inherently "invisible": when no breaches occur, customers may conclude that the service is unnecessary. To bridge this gap, MSPs must transition from providing raw technical data to crafting a compelling narrative through strategic reporting. As highlighted by the experiences of industry professionals using SonicWall tools, the core of a successful MSP practice relies on five pillars: monitoring, patch management, configuration oversight, alert response, and, most importantly, reporting. By utilizing automated platforms like Network Security Manager (NSM) and Capture Client, MSPs can produce detailed assessments and audit trails that make their backend efforts tangible to clients. Moving beyond monthly logs to implement Quarterly Business Reviews (QBRs) allows providers to transition from mere vendors to trusted strategic advisors. This shift significantly impacts business outcomes; for instance, MSPs employing regular QBRs often see renewal rates jump from 71% to 96%. Ultimately, by structuring services into clear tiers with documented deliverables, MSPs can use reporting to tell a story of protection. This strategy not only justifies current expenditures but also drives new revenue by fostering client trust and highlighting unmet security needs.

Cybersecurity in the AI age: speed and trust define resilience

In the rapidly evolving digital landscape, cybersecurity has transitioned from

a technical hurdle to a strategic imperative where speed and trust are the

cornerstones of resilience. According to insights from iqbusiness, the

"breakout time" for e-crime—the window an attacker has to move laterally

within a system—has plummeted from nearly ten hours in 2019 to just 29 minutes

today, necessitating near-instantaneous responses. This urgency is exacerbated

by artificial intelligence, which serves as a double-edged sword; while it

empowers attackers to craft sophisticated phishing campaigns and malicious

code, it also provides defenders with automated tools to filter noise and

prioritize threats. However, the rise of "shadow AI" and a lack of visibility

into unsanctioned tools pose significant risks to data integrity. To combat

these threats, the article advocates for a "Zero Trust" architecture—where

every interaction, whether by human or machine, is verified—and the adoption

of robust frameworks like the NIST Cybersecurity Framework 2.0. Ultimately,

modern cyber resilience depends on more than just defensive technology; it

requires a proactive organisational culture, strong leadership, and the

seamless integration of AI into security strategies. By prioritising

visibility and governance, businesses can navigate the complexities of the AI

age while maintaining the trust of their stakeholders and partners.

