“The artificial intelligence (AI) boom across all industries has fueled anxiety in the workforce, with employees fearing ethical usage, legal risks and job displacement,” EY said in its report. The future of work has shifted due to genAI in particular, enabling work to be done equally well and securely across remote, field, and office environments, according to EY. ... “We can say that the most common worry is that AI will impact an employee’s role – either making it obsolete entirely or changing it in a way which concerns the employee, for example, taking some of the challenge or excitement out of it,” Harris said. “And the point is, these perspectives are already having an impact – irrespective of what the future really holds.” Harris said that in another Gartner survey, employees indicated they were less likely to stay with an organization due to concerns about AI-driven job loss. ... Organizations can also overcome employee AI fears and build trust by offering training or development on a range of topics, such as how AI works, how to create prompts and effectively use AI, and even how to evaluate AI output for biases or inaccuracies. And employees want to learn.

Importance of security-by-design for IT and OT systems in building a security resilient framework

Regular security testing, vulnerability assessments, penetration testing, and

compliance audits are vital for identifying vulnerabilities and potential attack

vectors. This proactive approach allows organizations to rectify weaknesses,

enhance security posture, and protect their systems effectively. For OT systems,

specialized testing methods that support the unique requirements of industrial

environments are necessary. ... Developers should adopt secure coding practices,

including coding standards, input validation, secure data storage, secure

communication protocols, code reviews, and automated security testing. These

measures help identify and mitigate security issues during the development

phase, eliminating common vulnerabilities. Additionally, training developers in

secure coding techniques and fostering a security-centric culture within

development teams are equally crucial. ... Regular software updates and

effective patch management are essential to address newly identified security

vulnerabilities. Staying current with security patches and updates for all

software components is crucial.

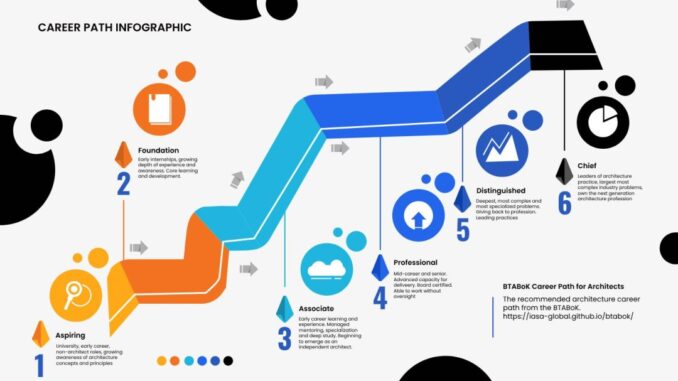

The Case For a Managed Career for Architects

The case for making architecture a managed profession stems from a few

critical factors: Rising Levels of Societal Impact: The impact of technology

is growing daily. The impact and difficulties of technology, not just threats

but the daily interaction of people with technology such as subscription models,

social media, passwords, and banking, are increasingly important to the average

person. ... Regulatory Pressure: Increasing pressure is coming to bear on all

aspects of technology as it relates to government and regulation. From things

like sustainability, privacy and accountability to impacts to purchasing,

monopolies, identity and security. The more prevalent technology becomes in

society, the more regulation must be met to ensure appropriate use.

... New Technology Opportunities/Threats: Avoiding catastrophes in both small

and large scopes is one function of modern professions. Non-professionals are

not allowed to play with dangerous research or deploy dangerous products in

most fields. ... Severe Demand/Quality Problems: The demand for high-quality

architecture professionals is growing daily. This demand can no longer be met

by the role-based education methods that were developed in the early rush of

the ’90s.

Is your bank’s architecture trapped in the past? It’s time to recompose it

The complexity of banking modernisation, particularly the cost and resource

intensiveness associated with a big-bang approach, is one of several reasons

many banks may still be stuck with a legacy technology platform. With

contemporary architectural techniques such as the “strangulation pattern”,

banks can achieve the desired modernisation in a streamlined manner. The

strangulation pattern is a software migration strategy involving forming a new

software layer, the “strangler”, around the legacy banking system. This

strangler interacts with the core system’s data and functionality through

well-defined APIs. Gradually, new functionalities are developed within the

strangler layer in parallel with the legacy systems, allowing the bank to

independently test and refine the new functionalities. Over time, more and

more functionalities, based on needs and complexity, are migrated from the

core system to the strangler layer. As a result, the core system becomes less

and less critical and can be retired entirely or kept as a backup system. Not

only does this approach minimise risk compared to a big-bang switchover, but

it also allows business operations to continue with minimal

disruption.
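The routing idea behind the strangler layer can be sketched in a few lines of Python. This is a minimal illustration, not a banking implementation: the `StranglerFacade` class, the `legacy_core` and `new_payments` handlers, and the operation names are all hypothetical, and a real system would route through well-defined APIs rather than in-process function calls.

```python
from typing import Callable, Dict

# A handler takes a request dict and returns a response dict (assumed shape).
Handler = Callable[[dict], dict]


class StranglerFacade:
    """Sends each operation to the new layer once migrated, else to the legacy core."""

    def __init__(self, legacy: Handler) -> None:
        self._legacy = legacy
        self._migrated: Dict[str, Handler] = {}

    def migrate(self, operation: str, handler: Handler) -> None:
        # Register a new implementation; the legacy path stays available for everything else.
        self._migrated[operation] = handler

    def handle(self, operation: str, request: dict) -> dict:
        handler = self._migrated.get(operation, self._legacy)
        return handler({"operation": operation, **request})


def legacy_core(request: dict) -> dict:
    # Stand-in for the legacy banking platform (hypothetical).
    return {"served_by": "legacy", "operation": request["operation"]}


def new_payments(request: dict) -> dict:
    # Stand-in for a functionality rebuilt in the strangler layer (hypothetical).
    return {"served_by": "strangler", "operation": request["operation"]}


facade = StranglerFacade(legacy_core)
before = facade.handle("payments", {"amount": 100})
facade.migrate("payments", new_payments)
after = facade.handle("payments", {"amount": 100})
untouched = facade.handle("statements", {})
```

Only the migrated operation changes hands; everything else keeps flowing to the legacy core, which is what lets the migration proceed one functionality at a time.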

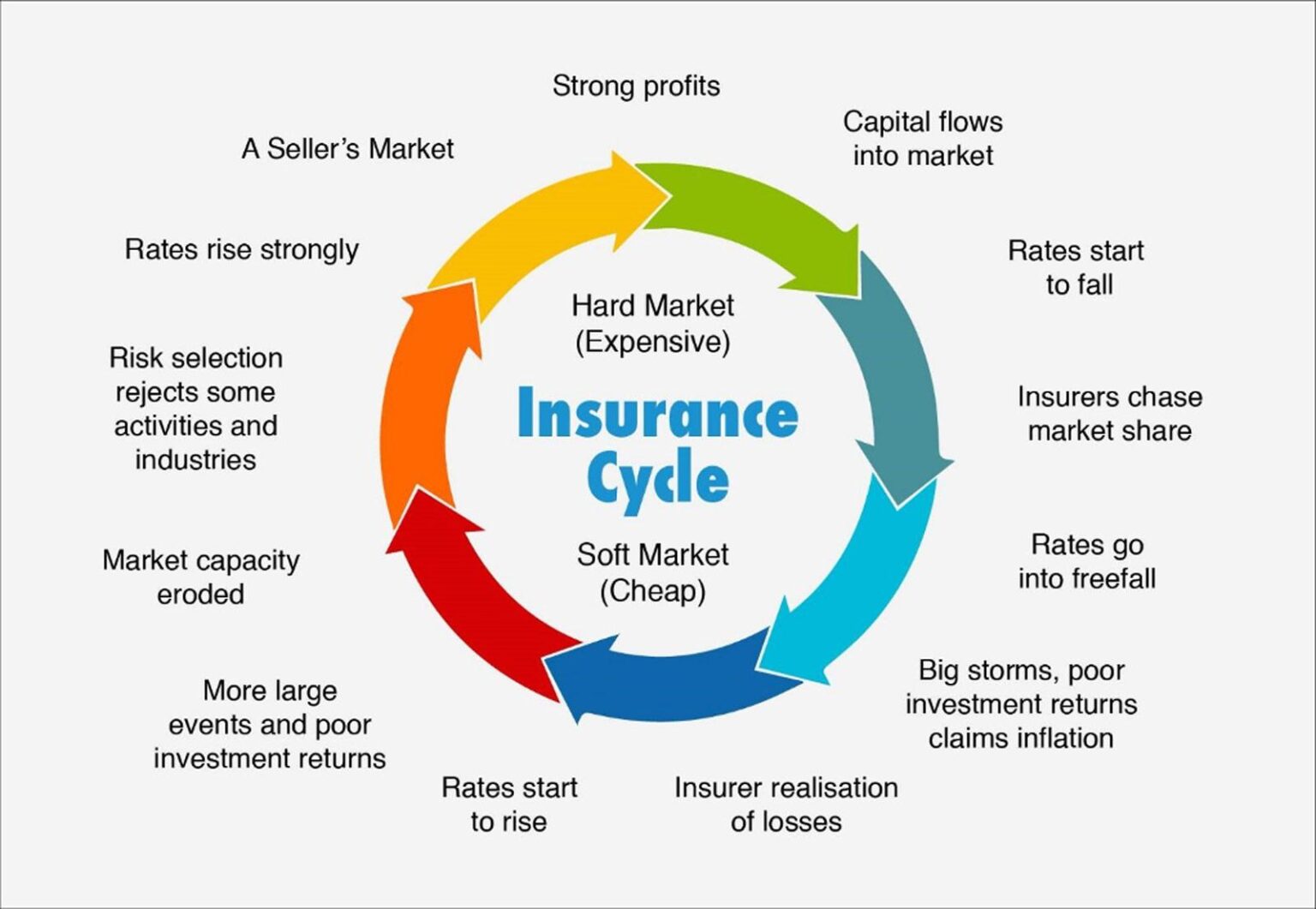

Cyberinsurance Premiums are Going Down: Here’s Why and What to Expect

The insurance cycle is described in Wikipedia as “a term describing the

tendency of the insurance industry to swing between profitable and

unprofitable periods over time…” Such swings are common to all businesses but

are particularly relevant to insurance. Within this insurance cycle, the swing

is between a ‘hard market’ and a ‘soft market’. Howden defines it thus: “In

simple terms, [a soft market] is when there is a lot of insurance capacity,

and rates are low. Conversely, a hard market is when insurance capacity is

reduced and premium rates are high.” Noticeably, the state of the insured does

not figure. “Insurance markets (cyber, property, D&O, etc) tend to run

through rating cycles,” explains George Mawdsley, head of risk solutions at

DeNexus. “What makes cyber unique is that there is material uncertainty around

how big the ‘Big Storms’ can get, which means capital allocators will make

conservative assumptions on max downside or will not invest. Given the strong

growth projections (demand) for the cyber insurance market, we expect this

dynamic to drive up prices over the long term.”

Productivity and patience: How GitHub Copilot is expanding development horizons

Copilot shines in "implementing straightforward, well-defined components in

terms of performance and other non-functional aspects. Its efficiency

diminishes when addressing complex bugs or tasks requiring deep domain

expertise." ... Copilot's greatest challenge is context, he pointed out. "Code

and code development has a lot to do with the context that you're dealing

with. Are you in a legacy code base or not? Are you in COBOL or in C++ or in

JavaScript or TypeScript? It's a lot of context that needs to happen for the

quality of that code to be high and for you to accept it." ... The impact on

software development from AI will be subtler: "What if a text box is all they

needed to be able to accomplish something that creates software and something

that they could then derive value from?" For example, said Rodriguez: "If I

could say very quickly in my phone, 'Hey, I am thinking of talking to my

daughter about these things. Can you give me the last three X, Y, and Z

articles and then just create a little program that we could play as a game?'

You could envision Copilot being able to help you with that in the future."

How Part-Time Senior Leaders Can Help Your Business

It’s not only CEOs that benefit. With their deep functional expertise,

fractionals often serve as advisors and mentors to other C-suite leaders.

Barry Hurd, a fractional chief marketing officer (CMO), describes his role as

providing expert counsel to full-time CMOs: “I’ve worked with a couple of CMOs

who have hired me to simply double-check their work. I act as the executive

coach, bringing my 30 years of wisdom and experience.” Similarly, Katie

Walter, another fractional CMO, shares an experience where she supported an

executive transitioning into a marketing leadership role: “She had never led

the marketing function before, so the expectation was that I would work

alongside her and help her to become more effective. In this case, I was

introduced to the team as her coach.” The benefits also extend to the

organization as a whole. Because fractional leaders often juggle multiple

roles, they gain access to a wide professional network and are exposed to

diverse working methods. This unique position allows them to introduce new

ideas and practices among the organizations they serve.

How the CISO Can Transform Into a True Cyber Hero

Operationalizing readiness, response, and recovery is where the rubber meets

the road for the CISO. Plans, processes, and technologies underpin operations,

but they each rely on people. Tabletop exercises that focus only on technical

response activities strengthen only one "muscle group" of the organization.

Consider a different kind of cyber exercise — a war game that involves the

entire organization. By exercising the incident management plan with a broader

constituency of stakeholders, organizations can build "muscle memory," test

communication channels, and identify decisions or risks based on a given

scenario. As part of the war game, the recovery team should run through the

sequential restoration. By socializing the order in which operations will

return after a disruption, the team can reduce the number of "Is it back

online yet?" queries received during a real incident. ... There's an old

joke that "CISO" stands for "career is seriously over." But today’s CISO has a

serious role to play as a hero for their organization. It is a simple matter

of evolving from a primarily technical role to a role that incorporates

empowering their human peers and stakeholders to become greater collaborators

in cyber-incident response, recovery, and readiness.

How CISOs can protect their personal liability

One of the most effective and methodical methods of documentation that a CISO

can maintain is a risk register that identifies existing cyber risk and

records risk acceptance by relevant business stakeholders. This can help bring

greater visibility into cyber risk to the board and it certainly helps CISOs

to protect themselves. “In order to run a security program, you have to have a

risk register. It’s like table stakes,” says Greg Notch, CISO of Expel, a

managed detection and response firm, and a longtime security veteran who

served as CISO for the National Hockey League prior to this job. ... Even with

rock solid policies, procedures, and documentation, CISOs should also seek to

establish legal protection through tools like indemnification agreements,

employment contractual terms, and the right level of insurance protection.

Kolochenko says CISOs who are unsure of their protections should proactively

reach out to their general counsel and ask them about all of their duties,

liabilities, and protections. If something sounds unfavorable, push back, he

says.
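As a rough illustration of what such a risk register might record, here is a minimal Python sketch. The field names, the `accept` method, and the sample entries are assumptions made for the example; the article does not prescribe a schema, and real registers usually carry more detail (likelihood, impact, treatment plans).

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass
class RiskEntry:
    """One identified cyber risk and, if given, its documented acceptance."""

    risk_id: str
    description: str
    owner: str
    accepted_by: Optional[str] = None
    accepted_on: Optional[date] = None

    def accept(self, stakeholder: str, when: date) -> None:
        # Record which business stakeholder accepted the risk, and on what date.
        self.accepted_by = stakeholder
        self.accepted_on = when


def unaccepted(register: List[RiskEntry]) -> List[RiskEntry]:
    """Return the risks that no stakeholder has formally accepted yet."""
    return [entry for entry in register if entry.accepted_by is None]


# Hypothetical sample data.
register = [
    RiskEntry("R-1", "Unpatched legacy VPN appliance", owner="IT Operations"),
    RiskEntry("R-2", "No MFA on vendor portal", owner="Procurement"),
]
register[0].accept("Chief Operating Officer", date(2024, 6, 1))
open_items = unaccepted(register)
```

Listing the unaccepted entries gives the CISO a concrete agenda for the next conversation with business stakeholders.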

How New Frameworks for Cyber Metrics are Reshaping Boardroom Conversations

Ideally, boards have one or more sitting executives with risk experience, but

the reality is that boards primarily consist of executives with a

non-technical understanding of risk management methods. Risk and cybersecurity

information must always be conveyed in easy-to-understand, business-oriented

language. Start by quantifying risk in monetary or dollar terms. Board members

may not understand the technical details of Monte Carlo simulations or

probabilistic risk assessments, but they do need to understand the potential

impact of risk on the business in the most efficient way. Quantification can

help anyone understand how the business anticipates risk, prioritizes risk

controls, and takes preventative action against risk. Tailor risk information

to board members, depending on their expertise and the board report’s purpose.

There is no one-size-fits-all approach to reporting. CISOs can segregate risk

metrics into categories, like security, financial, third-party, or employee

awareness risks. Grouping information together helps non-technical executives

understand how risks are interconnected and what’s being done to anticipate

these risks.
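To make the idea of quantifying risk in dollar terms concrete, here is a small Monte Carlo sketch in Python. Every number in it is hypothetical, and the model is deliberately crude: it assumes at most one loss event per year and a uniform loss range, which real probabilistic risk assessments would replace with calibrated distributions.

```python
import random
import statistics


def simulate_annual_loss(event_probability, loss_low, loss_high,
                         trials=10_000, seed=42):
    """Simulate yearly losses: one possible event per year, uniform loss if it occurs."""
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    losses = []
    for _ in range(trials):
        if rng.random() < event_probability:
            losses.append(rng.uniform(loss_low, loss_high))
        else:
            losses.append(0.0)
    losses.sort()
    return {
        # Average loss per simulated year.
        "expected_loss": statistics.fmean(losses),
        # Loss that 95% of simulated years stay at or below.
        "p95_loss": losses[int(0.95 * (trials - 1))],
    }


# Hypothetical scenario: 20% yearly chance of an incident costing $50k to $2M.
result = simulate_annual_loss(0.20, 50_000, 2_000_000)
print(f"Expected annual loss: ${result['expected_loss']:,.0f}")
print(f"95th percentile loss: ${result['p95_loss']:,.0f}")
```

The board does not need to see the simulation itself; the two dollar figures it produces are the kind of business-oriented summary the article recommends.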

Quote for the day:

“The more you lose yourself in something bigger than yourself, the more energy you will have.” -- Norman Vincent Peale
