Quote for the day:

“The only true wisdom is knowing that you know nothing.” -- Socrates

How AI is Becoming More Human-Like With Emotional Intelligence

Humanizing AI means designing systems that can understand,

interpret, and respond to human emotions in a way that feels more natural. It

means making the AI perceptive enough to pick up cues, read the room, and react

as a human would, but in a polished way. ... It is only natural that a

potential user will prefer to interact with someone who acknowledges the

queries and engages with them like a human. AI that sounds and responds like a

human helps build trust and rapport with users. ... AI that adapts based on

mood and tone. You cannot keep sending automated messages to your users,

especially to the ones who are irate. ... The humanization of AI makes AI

accessible and inclusive to all. Voice assistants and screen readers,

AI-powered speech-to-text, and text-to-speech tools are some great examples of

these feats. ... As AI becomes more aware and powerful, there are rising

concerns about its ethical usage. There have to be checks in place that ensure

AI doesn’t blatantly mimic human emotions to exploit users’ feelings. There

should be a clear disclosure so that users know they are dealing with

machine-generated content. Businesses must ensure ethical AI development,

prioritizing user trust and transparency. Systems should be programmed to

respect user privacy and not manipulate users into making purchases or

conversions.

Beyond Trends: A Practical Guide to Choosing the Right Message Broker

In distributed systems, messaging patterns define how services communicate and

process information. Each pattern comes with unique requirements, such as

ordering, scalability, error handling, or parallelism, which guide the

selection of an appropriate message broker. ... The Event-Carried State

Transfer (ECST) pattern is a design approach used in distributed systems to

enable data replication and decentralized processing. In this pattern, events

act as the primary mechanism for transferring state changes between services

or systems. Each event includes all the necessary information (state) required

for other components to update their local state without relying on

synchronous calls to the originating service. By decoupling services and

reducing the need for real-time communication, ECST enhances system

resilience, allowing components to operate independently even when parts of

the system are temporarily unavailable. ... The Event Notification Pattern

enables services to notify other services of significant events occurring

within a system. Notifications are lightweight and typically include just

enough information (e.g., an identifier) to describe the event. To process a

notification, consumers often need to fetch additional details from the source

(and/or other services) by making API calls.
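
The difference between the two patterns can be sketched in a few lines of Python. This is a minimal in-memory illustration, not a broker integration: the `ORDERS` store, the `fetch_order` helper, the event shapes, and both consumer classes are hypothetical names invented for the example. It shows that an ECST consumer updates its local replica from the event alone, while a notification consumer has to call back to the source.

```python
from dataclasses import dataclass, field

# Hypothetical in-memory "source of truth" owned by the originating service.
ORDERS = {"o-1": {"status": "PAID", "total": 42.0}}

api_calls = 0  # counts synchronous lookups back to the source service


def fetch_order(order_id):
    """Stand-in for an API call to the originating service (assumption)."""
    global api_calls
    api_calls += 1
    return dict(ORDERS[order_id])


@dataclass
class EcstConsumer:
    """Event-Carried State Transfer: the event carries the full state."""
    replica: dict = field(default_factory=dict)

    def handle(self, event):
        # No callback needed: copy the state embedded in the event.
        self.replica[event["order_id"]] = dict(event["state"])


@dataclass
class NotificationConsumer:
    """Event Notification: the event carries only an identifier."""
    cache: dict = field(default_factory=dict)

    def handle(self, event):
        # The consumer must fetch the details from the source before acting.
        self.cache[event["order_id"]] = fetch_order(event["order_id"])


ecst_event = {
    "type": "OrderPaid",
    "order_id": "o-1",
    "state": {"status": "PAID", "total": 42.0},
}
notify_event = {"type": "OrderPaid", "order_id": "o-1"}

ecst = EcstConsumer()
ecst.handle(ecst_event)
calls_after_ecst = api_calls  # still 0: the source was never contacted

notifier = NotificationConsumer()
notifier.handle(notify_event)
calls_after_notification = api_calls  # 1: one lookup per notification
```

The trade-off visible here is payload size versus coupling: the ECST event is larger but lets the consumer keep working if the source is down, while the notification stays small but depends on the source being reachable.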

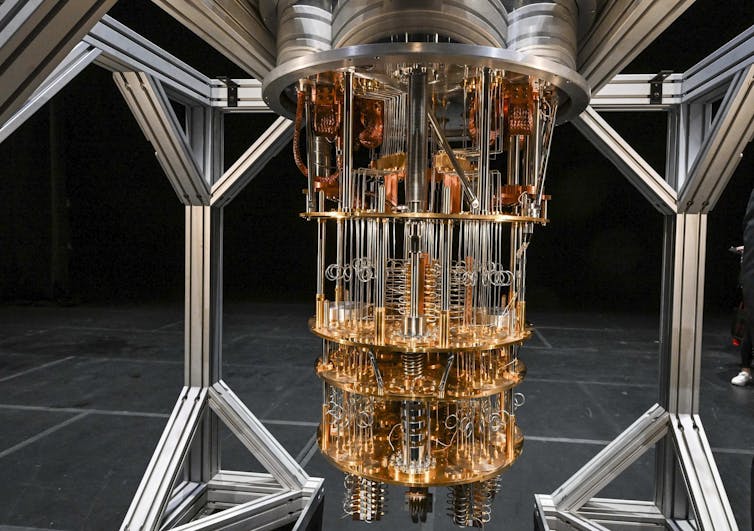

Successful AI adoption comes down to one thing: Smarter, right-size compute

A common perception in the enterprise is that AI solutions require a massive

investment right out of the gate, across the board, on hardware, software and

services. That has proven to be one of the most common barriers to adoption —

and an easy one to overcome, Balasubramanian says. The AI journey kicks off

with a look at existing tech and upgrades to the data center; from there, an

organization can start scaling for the future by choosing technology that can

be right-sized for today’s problems and tomorrow’s goals. “Rather than

spending everything on one specific type of product or solution, you can

now right-size the fit and solution for the organizations you have,”

Balasubramanian says. “AMD is unique in that we have a broad set of solutions

to meet bespoke requirements. We have solutions from cloud to data center,

edge solutions, client and network solutions and more.” ... While both hardware

and software are crucial for tackling today’s AI challenges, open-source

software will drive true innovation. “We believe there’s no one company in

this world that has the answers for every problem,” Balasubramanian says. “The

best way to solve the world’s problems with AI is to have a united front, and

to have a united front means having an open software stack that everyone can

collaborate on. ...”

CDOs: Your AI is smart, but your ESG is dumb. Here’s how to fix it

Embedding sustainability into a data strategy requires a deliberate shift in

how organizations manage, govern and leverage their data assets. CDOs must

ensure that sustainability considerations are integrated into every phase of

data decision-making rather than treating ESG as an afterthought or compliance

requirement. A well-designed strategy can help organizations balance business

growth with environmental, social and governance (ESG) responsibility while

improving operational efficiency. ... Advanced analytics and AI can unlock new

opportunities for sustainability. Predictive modeling can help companies

optimize energy consumption, while AI-driven insights can identify supply

chain inefficiencies that lead to excessive waste. For example, retailers are

leveraging AI-powered demand forecasting to reduce overproduction and excess

inventory, significantly cutting down carbon emissions and waste. ...

Creating a sustainability-focused data culture requires education and

engagement across all levels of the organization. CDOs can implement

ESG-focused data literacy programs to ensure that business leaders, data

scientists and engineers understand the impact of their work on

sustainability. Encouraging collaboration between data teams and

sustainability departments ensures ESG considerations remain a priority

throughout the data lifecycle.

Five Critical Shifts for Cloud Native at a Crossroads

General-purpose operating systems can become a Kubernetes bottleneck at scale.

Traditional OS environments are designed for a wide range of use cases, carry

unnecessary overhead and bring security risks when running cloud native

workloads. Enterprises are instead increasingly turning to specialized

operating systems that are purpose-built for Kubernetes environments, finding

that this shift has advantages across security, reliability and operational

efficiency. The security implications are particularly compelling. While

traditional operating systems leave many potential entry points exposed,

specialized cloud native operating systems take a radically different

approach. ... Cost-conscious organizations (Is there another kind?) are

discovering that running Kubernetes workloads solely in public clouds isn’t

always the best approach. Momentum has continued to grow toward pursuing

hybrid and on-premises strategies for greater control over both costs and

capabilities. This shift isn’t just about cost savings; it’s about building

infrastructure precisely tailored to specific workload requirements, whether

that’s ultra-low latency for real-time applications or specialized

configurations for AI/machine learning workloads.

Moving beyond checkbox security for true resilience

A threat-informed and risk-based approach is paramount in an era of

perpetually constrained cybersecurity budgets. Begin by assessing the

organization’s crown jewels – sensitive customer data, intellectual property,

financial records, or essential infrastructure. These assets represent the

core of the organization’s value and should demand the highest priority in

protection. ... Organizations frequently underestimate the risks from unmanaged

devices, also called shadow IT, and from their software supply chain. As

reliance on third-party software and libraries embedded within the

organization and in-house apps deepens, the attack surface becomes a

constantly shifting landscape with hidden vulnerabilities. Unmanaged devices

and unauthorized applications are equally problematic and can introduce

unexpected and substantial risks. To address these blind spots, organizations

must implement rigorous vendor risk management programs, track IT assets, and

enforce application control policies. These often-overlooked elements create

critical blind spots, allowing attackers to exploit vulnerabilities that

existing security measures might miss. ... Regardless of the trends, CISOs

should assess the specific threats relative to their organization and ensure

that foundational security measures are in place.

How to simplify app migration with generative AI tools

Reviewing existing documentation and interviewing subject matter experts is

often the best starting point to prepare for an application migration.

Understanding the existing system’s business purposes, workflows, and data

requirements is essential when seeking opportunities for improvement. This

outside-in review helps teams develop a checklist of which requirements are

essential to the migration, where changes are needed, and where unknowns

require further discovery. Furthermore, development teams should expect and

plan a change management program to support end users during the

migration. ... Technologists will also want to do an inside-out analysis,

including performing a code review, diagramming the runtime infrastructure,

conducting a data discovery, and analyzing log files or other observability

artifacts. Even more important may be capturing the dependencies, including

dependent APIs, third-party data sources, and data pipelines. This

architectural review can be time-consuming and often requires significant

technical expertise. Using genAI can simplify and accelerate the process.

“GenAI is impacting app migrations in several ways, including helping

developers and architects answer questions quickly regarding architectural and

deployment options for apps targeted for migration,” says Rob Skillington, CTO

& co-founder of Chronosphere.

How to Stop Expired Secrets from Disrupting Your Operations

Unlike those of human users, the credentials used by non-human identities (NHIs) often don’t receive expiration

reminders or password reset prompts. When a credential quietly reaches the end

of its validity period, the impact can be immediate and severe: application

failures, broken automation workflows, service downtime, and urgent security

escalations. And unlike the food in your fridge, there’s no nosy relative to

point out that your secrets have gone bad. ... While TLS/SSL certificate

expiration often gets the most attention due to its visible impact on websites,

many types of machine credentials have built-in expiration. API keys silently

time out in backend services, OAuth tokens reach their limits, IAM role sessions

terminate, Kubernetes service account tokens expire, and database connection

credentials become invalid. ... The primary consequence of an expired credential

is a failed authentication attempt. At first glance, this might seem like a

simple fix – just replace the credential and restart the service. But in

reality, identifying and resolving an expired credential issue is rarely

straightforward. Consider a cloud-native application that relies on multiple

APIs, internal microservices, and external integrations. If an API key or OAuth

token used by a backend service expires, the application might return unexpected

errors, time out, or degrade in ways that aren’t immediately obvious.
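
One way to catch these failures early is to keep an inventory of machine credentials with their expiry times and flag the ones that are close to lapsing. The sketch below is a minimal illustration using only the Python standard library. The inventory entries, the `classify_credentials` function, and the 14-day warning window are assumptions made for the example, not part of any particular secrets manager.

```python
from datetime import datetime, timedelta, timezone


def classify_credentials(credentials, now, warn_within=timedelta(days=14)):
    """Sort credentials into expired / expiring_soon / ok by expiry time."""
    report = {"expired": [], "expiring_soon": [], "ok": []}
    for cred in credentials:
        remaining = cred["expires_at"] - now
        if remaining <= timedelta(0):
            report["expired"].append(cred["name"])
        elif remaining <= warn_within:
            report["expiring_soon"].append(cred["name"])
        else:
            report["ok"].append(cred["name"])
    return report


# Fixed reference time so the example is deterministic.
now = datetime(2025, 3, 1, tzinfo=timezone.utc)

# Hypothetical inventory; real entries would come from a secrets manager.
inventory = [
    {"name": "payments-api-key", "expires_at": now - timedelta(days=1)},
    {"name": "web-tls-cert", "expires_at": now + timedelta(days=7)},
    {"name": "db-password", "expires_at": now + timedelta(days=90)},
]

report = classify_credentials(inventory, now)
```

Running a check like this on a schedule, and alerting on anything in `expiring_soon`, turns a silent expiry into the reminder that machine credentials otherwise never get.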

Role of Interconnects in GenAI

The emergence of High-Performance Computing (HPC) demanded a leap in

interconnect capabilities. InfiniBand entered the scene, offering significantly

higher throughput and lower latency compared to existing technologies. It became

the cornerstone of data centers and large-scale computing environments, enabling

the rapid exchange of massive datasets required for complex simulations and

scientific computations. Simultaneously, the introduction of Peripheral

Component Interconnect Express (PCIe) revolutionized off-chip communication. ...

The scalability of GenAI models, particularly large language models, relies

heavily on robust interconnects. These systems facilitate the distribution of

computational load across multiple processors and machines, enabling the

training and deployment of increasingly complex models. This scalability is

achieved through efficient network topologies that minimize communication

bottlenecks, allowing for both vertical and horizontal scaling. Parallel

processing, a cornerstone of GenAI training, is also dependent on effective

interconnects. Model and data parallelism require seamless communication and

synchronization between processors working on different segments of data or

model components. Interconnects ensure that these processors can exchange

information efficiently, maintaining consistency and accuracy throughout the

training process.
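
To make the synchronization step concrete, here is a toy simulation of data parallelism in plain Python. It is a sketch under strong simplifying assumptions: two "workers" are just list entries, the model is a single weight fitting `y = w * x`, and `all_reduce_mean` is a hypothetical stand-in for the collective operation that a real interconnect would carry between processors.

```python
def local_gradient(w, shard):
    """Gradient of mean squared error for the model y = w * x on one shard."""
    return sum(2 * (w * x - y) * x for x, y in shard) / len(shard)


def all_reduce_mean(values):
    """Stand-in for the interconnect: every worker receives the same average."""
    mean = sum(values) / len(values)
    return [mean] * len(values)


# Each worker holds a different shard of data generated by y = 2 * x.
shards = [
    [(1.0, 2.0), (2.0, 4.0)],
    [(3.0, 6.0), (4.0, 8.0)],
]

weights = [0.0, 0.0]  # one model replica per worker, same starting point
learning_rate = 0.01

for _ in range(200):
    # 1. Each worker computes a gradient on its own shard.
    grads = [local_gradient(w, shard) for w, shard in zip(weights, shards)]
    # 2. Gradients are exchanged and averaged across workers.
    synced = all_reduce_mean(grads)
    # 3. Every replica applies the same update, so the replicas stay identical.
    weights = [w - learning_rate * g for w, g in zip(weights, synced)]
```

Step 2 is the part that runs over the interconnect in a real cluster. If that exchange is slow, every worker waits on it each iteration, which is why communication bottlenecks limit how far training can scale.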

That breach cost HOW MUCH? How CISOs can talk effectively about a cyber incident’s toll

Many CISOs struggle to articulate the financial impact of cyber incidents. “The

role of a CISO is really interesting and uniquely challenging because they have

to have one foot in the technical world and one foot in the executive world,”

Amanda Draeger, principal cybersecurity consultant at Liberty Mutual Insurance,

tells CSO. “And that is a difficult challenge. Finding people who can balance

that is like finding a unicorn.” ... Quantifying the costs of an incident in

advance is an inexact art greatly aided by tabletop exercises. “The best way in

my mind to flush all of this out is by going through a regular incident response

tabletop exercise,” Gary Brickhouse, CISO at GuidePoint Security, tells CSO.

“People know their roles so that when it does happen, you’re prepared.” It also

helps to develop an incident response (IR) plan and practice it frequently. “I

highly recommend having an incident response plan that exists on paper,” Draeger

says. “I mean literal paper so that when your entire network explodes, you still

have a list of phone numbers and contacts and something to get you started.” Not

only does the incident response plan lead to better cost estimates, but it will

also lead to a quicker return of network functions. “Practice, practice,

practice,” Draeger says.
