AI: A Catalyst for Gender Equality in the Workplace

The Equality and Human Rights Commission reports that 77% of mothers have
encountered negative or possibly discriminatory experiences during pregnancy,
maternity leave, or upon returning to work. The joy of impending motherhood is
often tainted by bias, as expectant mothers face subtle exclusion from projects
and career advancement. Maternity leave, intended as a sacred period for
bonding, becomes tinged with anxiety as women grapple with the fear of being
sidelined professionally and the pressure to resume duties prematurely.
Returning to the workplace brings feelings of inadequacy and frustration, and
women often find insufficient support for balancing work and family
responsibilities. These experiences, rife with disappointment, make
re-establishing themselves professionally after maternity leave a daunting
struggle. Despite these challenges, women actively choose to re-enter the
workforce, embarking on the second phase of their careers after their leave.
Addressing these issues requires normative frameworks that ethically tackle the
consequences of AI usage.

How to Identify and Address the Challenges of Excessive Business Growth

In other words, when processes start breaking down and you find yourself
constantly in reactive, catch-up mode, it's a sign you need more capacity. The
tipping point will vary for each company, but if productivity and quality take a
nosedive, growth has become excessive for your present resources. Other red
flags include:

- Customer complaints spike.
- Employees seem stressed and burned out.
- You're always scrambling to meet deadlines.
- Infrastructure creaks under the weight (think cyberattacks, IT failures, supply chain issues).
- There's no time for strategy, only for tackling emergencies.
- Costs are rising faster than revenue.
- Profitability declines.

Essentially, if growth starts hurting rather than helping, it's time for a
change. ... Trying to manage a 100-person company like a 10-person startup will
lead to chaos. But running a 10-person shop like a rigid 100-person bureaucracy
will cause frustration. Align your leadership style, organizational structure,
systems, and talent with your current size and growth needs.

AI Pushes Universities to Modernize IT Infrastructure

The convenience and accessibility of those technologies have created new demands
for higher-quality, customizable learning experiences in higher education.
According to data from McKinsey, 60% of students report that classroom learning
technologies such as generative AI, machine learning and supercomputing have
improved their learning and grades since COVID-19 began. In addition to using AI
in classrooms, institutions can apply AI to their IT decision-making to build a
reliable, secure data infrastructure. As AI becomes more mainstream in higher
education operations, universities can better understand, invest in and apply
AI-specific solutions to their IT needs. By investing in AI and the technology
to support it, universities can improve operations, enabling faster innovation
and better experiences for students, faculty and researchers. ... With demand
for advanced technological offerings becoming commonplace at universities, IT
teams face new challenges on small budgets. Many need modern IT infrastructure
to support the increasingly large datasets that research teams rely on for
groundbreaking insights.

Future-proofing the digital rupee

Several factors contributed to the inception of India's CBDC. The global
competition for CBDC development, coupled with nations' enthusiasm for
embracing digital solutions, played a pivotal role. The introduction of India's
CBDC, the digital rupee, may also have been influenced, at least partially, by
the rising prevalence of cryptocurrencies, especially stablecoins. The Deputy
Governor of the Reserve Bank of India (RBI) emphasised the need for caution in
permitting such instruments. While stablecoins offer certain advantages, their
applicability is confined to a limited number of developed countries. The
success of UPI in India has raised questions about the need for a CBDC in the
country, perhaps making it look like a redundant addition to an already
well-developed payments landscape. The RBI Deputy Governor cited the rise of
cryptocurrencies and concerns about policy sovereignty as reasons for
considering CBDCs, along with improving digital transactions. However, India
presents a unique case, with the well-established UPI system already in place.

How to lock down backup infrastructure

The first thing to do is to protect the privileged accounts in your backup
system. Start by separating these accounts from any centralized login system
you use, such as Active Directory, because such systems are sometimes
compromised. Put as much of a firewall as possible between your production
systems and the backup system. And, of course, use a strong password, and do
not reuse for these accounts any password that is used anywhere else.
(Personally, I would use a password manager to support having a different
password everywhere.) Finally, make sure that any such logins are protected by
multi-factor authentication, and use the best option available. Avoid email or
SMS-based MFA, as it is easily foiled by an experienced hacker. Try to use an
OTP-based system of some kind, such as Google Authenticator, Symantec VIP, or
YubiKey. Also investigate whether your backup system offers enhanced
authentication for dangerous actions, such as deleting backups before their
scheduled expiration or restoring data anywhere other than where it was
originally created. Attackers use the first to easily delete backups from your
backup system without setting off any alarms, and the second to exfiltrate data
by restoring it to a system they control.
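Authenticator apps such as Google Authenticator generate time-based one-time passwords following RFC 6238 (TOTP). The Python sketch below shows how such a code is derived from a shared secret and how a backup tool might demand a fresh code before the two dangerous actions described above. It is a minimal illustration under stated assumptions, not any vendor's API: the action names and the `authorize_backup_action` gate are hypothetical, and a production system would also need replay protection, rate limiting, and secure storage of the secret.

```python
import base64
import hashlib
import hmac
import struct
import time
from typing import Optional

STEP = 30  # seconds per TOTP time step (the RFC 6238 default)


def totp(secret_b32: str, at: Optional[float] = None, digits: int = 6) -> str:
    """Return the RFC 6238 TOTP code (HMAC-SHA1 variant) for a Unix time."""
    padded = secret_b32.upper() + "=" * (-len(secret_b32) % 8)
    key = base64.b32decode(padded)
    now = time.time() if at is None else at
    counter = int(now // STEP)  # number of whole time steps since the epoch
    mac = hmac.new(key, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = mac[-1] & 0x0F  # dynamic truncation, as defined in RFC 4226
    value = struct.unpack(">I", mac[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(value % 10 ** digits).zfill(digits)


def verify_totp(secret_b32: str, code: str, at: Optional[float] = None,
                window: int = 1, digits: int = 6) -> bool:
    """Accept the current code or one from +/- `window` steps (clock drift)."""
    now = time.time() if at is None else at
    return any(
        hmac.compare_digest(totp(secret_b32, now + i * STEP, digits), code)
        for i in range(-window, window + 1)
    )


# Hypothetical names for the two dangerous operations discussed in the text.
DANGEROUS_ACTIONS = {"delete_before_expiry", "restore_to_other_location"}


def authorize_backup_action(action: str, secret_b32: str,
                            otp: Optional[str] = None,
                            at: Optional[float] = None) -> bool:
    """Require a fresh TOTP for dangerous actions (hypothetical gate).

    Routine actions are assumed to be covered by the MFA at login.
    """
    if action not in DANGEROUS_ACTIONS:
        return True
    return otp is not None and verify_totp(secret_b32, otp, at=at)
```

With the RFC 6238 test secret (the ASCII string `12345678901234567890`, Base32-encoded as `GEZDGNBVGY3TQOJQGEZDGNBVGY3TQOJQ`), `totp(secret, at=59)` returns `287082`. The drift window is a common design choice that trades a slightly larger guessing surface for tolerance of small clock differences between the authenticator and the server.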

Fortifying cyber defenses: A proactive approach to ransomware resilience

Rather than investing time in formulating non-binding pledges, the US
Government should work on actionable solutions and adopt a more proactive
stance by directly procuring advanced cybersecurity tools. These tools, which
have been developed to keep data safe and stop ransomware attacks, already
exist and are continually evolving. By spearheading their implementation
through investment and education, the government can set a powerful example
for the private sector to follow, thereby reinforcing the nation’s cyber
infrastructure. The effectiveness of such tools is not hypothetical: they have
been tested and proven on various cybersecurity battlegrounds. They range from
advanced threat detection systems that use artificial intelligence to identify
potential threats before they strike, to automated response solutions that can
protect data on infected systems and networks, preventing the lateral spread of
ransomware. Investing in these tools would not only enhance the government’s
defensive capabilities but also stimulate the cybersecurity industry,
encouraging innovation and the development of even more effective defenses.

Cloud squatting: How attackers can use deleted cloud assets against you

The risk from cloud squatting can even be inherited from third-party software
components. In June, researchers from Checkmarx warned that attackers are
scanning npm packages for references to S3 buckets. If they find a bucket that
no longer exists, they register it. In many cases, the developers of those
packages chose to use an S3 bucket to store pre-compiled binary files that are
downloaded and executed during the package’s installation. So, if attackers
re-register the abandoned buckets, they can host their own malicious binaries
and thereby achieve remote code execution on the systems of users who trust the
affected npm package. ... The attack surface is very large, but organizations
need to start somewhere, and the sooner the better. The IP reuse and DNS
scenario seems to be the most widespread and can be mitigated in several ways:
by using reserved IP addresses from the cloud provider, which are not released
back into the shared pool until the organization explicitly releases them; by
transferring their own IP addresses to the cloud; by using private (internal) IP
addresses between services when users don’t need to access those servers
directly; or by using IPv6 addresses, if the cloud provider offers them, because
the address space is so large that addresses are unlikely ever to be reused.
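Both risks described above come down to references that outlive the asset they point to, so they lend themselves to a simple audit. The Python sketch below is an illustration, not Checkmarx's tooling: it (1) extracts S3 bucket names referenced in installed package files so they can be checked for buckets that no longer exist, and (2) flags DNS records pointing at addresses outside the ranges an organization still holds. The URL patterns cover common S3 addressing styles but are assumed, not exhaustive; the function names, the `owned` inventory format, and the example addresses (from documentation ranges) are hypothetical. Confirming whether a flagged bucket still exists requires a separate request to S3, which is omitted here.

```python
import ipaddress
import re
from pathlib import Path
from typing import Dict, Iterable, List, Set, Tuple

# S3 bucket names are 3-63 characters: lowercase letters, digits, dots, hyphens.
_BUCKET = r"(?P<bucket>[a-z0-9][a-z0-9.\-]{1,61}[a-z0-9])"

# Common ways a package might reference a bucket (assumed, not exhaustive).
S3_PATTERNS = [
    re.compile(r"s3://" + _BUCKET, re.IGNORECASE),
    # Virtual-hosted style: https://<bucket>.s3[.<region>].amazonaws.com/...
    re.compile(r"https?://" + _BUCKET + r"\.s3[.\-](?:[a-z0-9\-]+\.)?amazonaws\.com",
               re.IGNORECASE),
    # Path style: https://s3[.<region>].amazonaws.com/<bucket>/...
    re.compile(r"https?://s3[.\-](?:[a-z0-9\-]+\.)?amazonaws\.com/" + _BUCKET,
               re.IGNORECASE),
]


def find_s3_buckets(text: str) -> Set[str]:
    """Return every bucket name referenced in `text`, lowercased."""
    return {m.group("bucket").lower() for p in S3_PATTERNS for m in p.finditer(text)}


def scan_tree(root: Path,
              suffixes: Tuple[str, ...] = (".js", ".cjs", ".mjs", ".json"),
              ) -> Dict[str, Set[Path]]:
    """Map each referenced bucket to the files under `root` that mention it."""
    hits: Dict[str, Set[Path]] = {}
    for path in root.rglob("*"):
        if path.is_file() and path.suffix in suffixes:
            text = path.read_text(encoding="utf-8", errors="ignore")
            for bucket in find_s3_buckets(text):
                hits.setdefault(bucket, set()).add(path)
    return hits


def dangling_records(records: Dict[str, str], owned: Iterable[str]) -> List[str]:
    """Return DNS names whose address is outside every range still held.

    `records` maps hostname -> IP string; `owned` lists CIDR ranges or single
    addresses (reserved cloud IPs, transferred ranges, and so on).
    """
    owned_nets = [ipaddress.ip_network(net) for net in owned]
    return [
        name
        for name, ip in sorted(records.items())
        # An IPv4 address is never "in" an IPv6 network, and vice versa.
        if not any(ipaddress.ip_address(ip) in net for net in owned_nets)
    ]
```

Pointing `scan_tree(Path("node_modules"))` at a project's dependencies lists each referenced bucket alongside the files that mention it. `dangling_records` assumes the DNS records and the inventory of reserved or owned ranges have been exported from wherever the organization keeps them; any name it returns points at an address that could be reassigned to someone else.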

Data Leaders Say ‘AI Paralysis’ Stifling Adoption: Study

While AI is not new in the data industry, the public’s fascination with
generative AI has fueled a veritable gold rush among industries to adopt the
emerging technologies for a competitive advantage. But the lack of safety
guidelines, organizational frameworks and training may be suffocating AI
adoption efforts, according to the report. ... “What happened is everybody got
ahold of the GenAI hammer, and now everything looks like a nail,” she says,
adding that CIOs and CDOs must do their best to articulate the technical needs
to non-technical members of the C-suite. “I do think there’s a disconnect
between the CIO and CDO and the chief executive. We should not, in the data
and technology space, expect people to understand the layer of complexity that
we have to deal with. What we should be doing is taking that complexity and
creating a story and a narrative, so it makes sense to the other people in our
organization and businesses we work with.” The report also showed that data
governance has stalled just as AI is being adopted across industries.

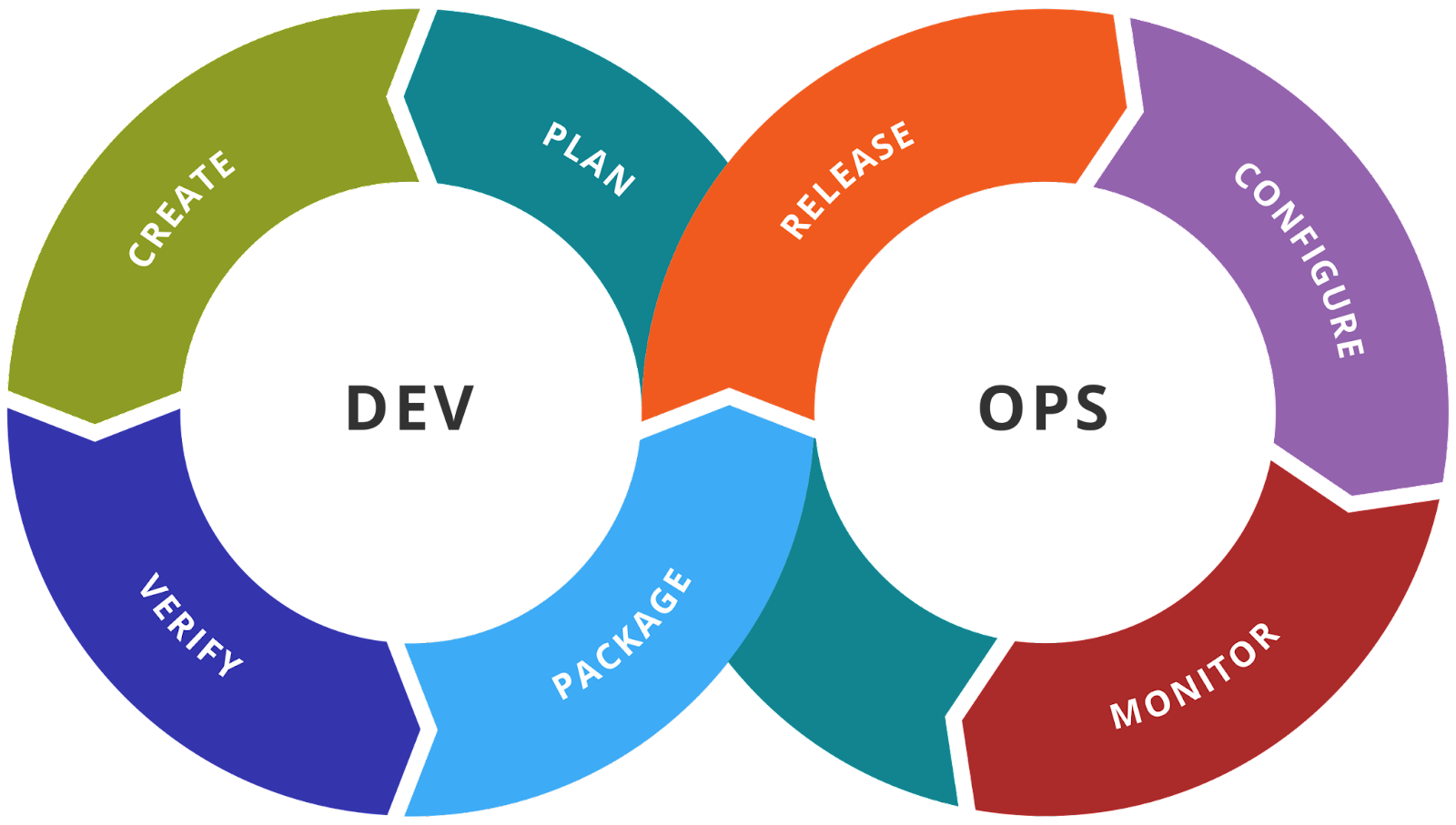

Artificial Intelligence Governance & Alignment with Enterprise Governance

The objectives of AI governance are to:

- Ensure the enterprise adopts pre-trained foundation models in a compliant manner;
- Guide the decision-making process to maintain AI solution coherence;
- Keep the enterprise relevant as requirements change.

... The AI Governance Framework helps the enterprise manage, govern, monitor,
and adopt AI activities, practices, and systems across the enterprise. The
framework also defines a set of metrics that can be used to measure the success
of its implementation. ...

- Establish an executive team to identify and oversee AI initiatives across the enterprise.
- Define a clear vision and strategy for AI implementation aligned with enterprise goals and business functions.
- Develop practical communications for employees and give them appropriate access.
- Set up AI governance across the enterprise.
- Define the roles and responsibilities of individuals involved in AI development, deployment, and monitoring.
- Foster collaboration between AI experts, domain experts, and business stakeholders.
- Establish a centralized, cross-functional team to review and update AI governance practices as technology, regulations, and enterprise needs evolve.

Role of digital in risk management and compliances

Embracing risk is crucial for survival, as risks are inherent in every aspect
of business, whether financial or non-financial. As Mark Zuckerberg says, “The
only strategy that is guaranteed to fail is not taking risks.” However, this
raises a fundamental question: should businesses take on risk solely in pursuit
of higher returns? Going beyond the pursuit of returns alone, businesses in
today’s context should focus on return of capital and not just return on
capital. Business is about taking calculated risks and managing them to achieve
business goals. Risk exposures must be strategically crafted, with a
comprehensive risk management framework in place. We piloted
technology-enabled compliance back in 2015, starting with an India-centric
compliance tool that has since been implemented across the global organisation.
The tool aids informed decision-making and swift response to emerging risks,
and it facilitates seamless communication and collaboration between dispersed
teams, ensuring a coordinated approach to risk management.

Quote for the day:

"Your job gives you authority. Your behavior gives you respect." -- Irwin Federman