Quote for the day:

"The best leaders build teams that don’t rely on them. That’s true excellence." -- Gordon Tredgold

Ransomware groups switch to stealthy attacks and long-term access

“Ransomware groups no longer treat vulnerabilities as isolated entry points,” says Aviral Verma, lead threat intelligence analyst at penetration testing and cybersecurity services firm Securin. “They assemble them into deliberate exploitation chains, selecting weaknesses not just for severity, but for how effectively they can collapse trust, persistence, and operational control across entire platforms.” AI is now widely accessible to threat actors, but it primarily functions as a force multiplier rather than a driving force in ransomware attacks. ... Vasileios Mourtzinos, a member of the threat team at managed detection and response firm Quorum Cyber, says that more groups are moving away from high-impact encryption towards extortion-led models that prioritize data theft and prolonged, low-noise access. “This approach, popularized by actors such as Cl0p through large-scale exploitation of third-party and supply chain vulnerabilities, is now being mirrored more widely, alongside increased abuse of valid accounts, legitimate administrative tools to blend into normal activity, and in some cases attempts to recruit or incentivize insiders to facilitate access,” Mourtzinos says. ... “For CISOs, the priority should be strengthening identity controls, closely monitoring trusted applications and third-party integrations, and ensuring detection strategies focus on persistence and data exfiltration activity,” Mourtzinos advises.

Expert Maps Identity Risk and Multi-Cloud Complexity to Evolving Cloud Threats

Cavalancia began by noting that cloud adoption has fundamentally altered traditional security boundaries. With 88 percent of organizations now operating in hybrid or multi-cloud environments, the hardened network edge is no longer the primary control point. Instead, identity and privilege determine access across distributed systems. ... Discussing identity risk specifically, he underscored how central privilege is to modern attacks, saying, "If you don't have identity, you don't have identity, you don't have privilege, you don't have privilege, you don't have a threat." Excessive permissions and credential abuse create privilege escalation paths once access is obtained. ... Reducing exploitable attack paths requires prioritizing risk based on business impact. Rather than attempting to address every vulnerability equally, organizations should identify which exposures would cause the greatest operational or financial harm and focus there first. ... Looking ahead, Cavalancia argued that security must be built around continuous monitoring and identity-first principles. "Continuous monitoring, continuous validation, continuous improvement, maybe we should just have the word continuous here," he said. He also cautioned that AI-assisted attacks are already influencing the threat landscape, noting that "90% of the decisions being made by that attack were done solely by AI, no human intervention whatsoever."

Data Centers in Space: Pi in the Sky or AI Hallucination?

Space is a great place for data centers because it solves one of the biggest problems with locating data centers on Earth: power, argues Google’s Senior Director of Paradigms of Intelligence, Travis Beals. ... SpaceX is also on board with the idea of data centers in space. Last month, it filed a request with the Federal Communications Commission to launch a constellation of up to one million solar-powered satellites that it said will serve as data centers for artificial intelligence. ... “Data centers in space can access solar power 24/7 in certain ‘sun-synchronous’ orbits, giving them all the power they need to operate without putting immense strain on power grids here on Earth,” Scherer told TechNewsWorld. “This would alleviate concerns about consumers having to bear the costs of higher energy use.” “There is also less risk of running out of real estate in space, no complex permitting requirements, and no community pushback to new data centers being built in people’s backyards,” he added. ... “By some estimates, energy and land costs are only around 25% of the total cost for a data center,” Yoon told TechNewsWorld. “AI hardware is the real cost driver, and shifting to space only makes that hardware more expensive.” “Hardware cannot be repaired or upgraded at scale in space,” he explained. “Maintaining satellites is extremely hard, especially if you have hundreds of thousands of them. Maintaining a traditional data center is extremely easy.”

Centralized Security Can't Scale. It's Time to Embrace Federation

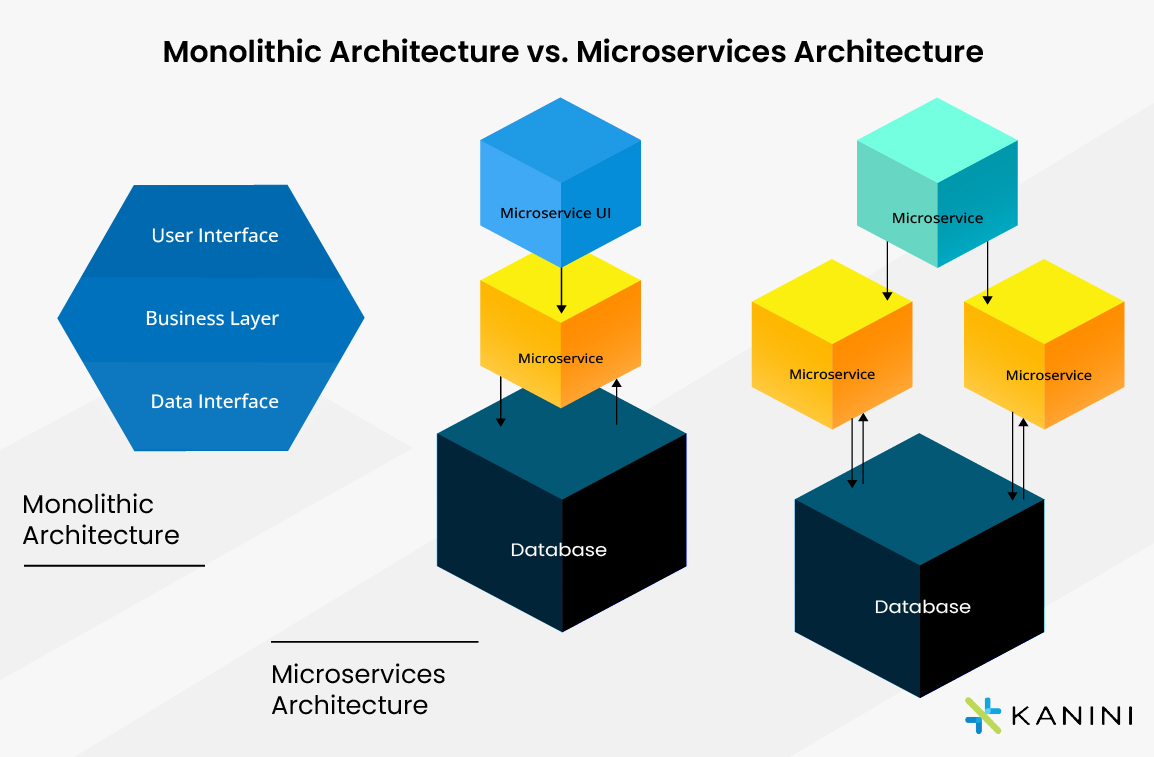

In a federated model, the organization recognizes that technology leaders, whether in security, IT, or engineering, have a deep understanding of the nuances of their assigned units. Their specialized knowledge helps them set strategies that match the goals, technologies, workflows, and risks of those units. That in turn leads to benefits that a centralized security authority can't touch. To start with, security decisions happen faster when the people making them are closer to the action. Service and application owners already have the context and expertise to make the right calls based on their scopes. Delegated authority allows companies to seize market opportunities faster, deploy new tools more easily, manage fewer escalations, and reduce friction and delays. ... In practice, that might look like a CISO setting data classification standards, while partner teams take responsibility for implementing these standards via low-friction policies and capabilities at the source of record for the data. Netflix's security team figured this out early. Their "Paved Roads" philosophy offers a collection of secure options that meet corporate guidelines while being the easiest for developers to use. In other words, less saying no, more offering a secure path forward. Outside of engineering, organization-wide standards also need to provide flexibility and avoid becoming overly specific or too narrow.

Linux explores new way of authenticating developers and their code - here's how it works

Today, kernel maintainers who want a kernel.org account must find someone already in the PGP web of trust, meet them face‑to‑face, show government ID, and get their key signed. ... the kernel maintainers are working to replace this fragile PGP key‑signing web of trust with a decentralized, privacy‑preserving identity layer that can vouch for both developers and the code they sign. ... Linux ID is meant to give the kernel community a more flexible way to prove who people are, and who they're not, without falling back on brittle key‑signing parties or ad‑hoc video calls. ... At the core of Linux ID is a set of cryptographic "proofs of personhood" built on modern digital identity standards rather than traditional PGP key signing. Instead of a single monolithic web of trust, the system issues and exchanges personhood credentials and verifiable credentials that assert things like "this person is a real individual," "this person is employed by company X," or "this Linux maintainer has met this person and recognized them as a kernel maintainer." ... Technically, Linux ID is built around decentralized identifiers (DIDs). This is a W3C‑style mechanism for creating globally unique IDs and attaching public keys and service endpoints to them. Developers create DIDs, potentially using existing Curve25519‑based keys from today's PGP world, and publish DID documents via secure channels such as HTTPS‑based "did:web" endpoints that expose their public key infrastructure and where to send encrypted messages.
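To make the "did:web" endpoints mentioned above a little more concrete, here is a minimal Python sketch of how a did:web identifier maps to the HTTPS location of its DID document, following the resolution rule published for the did:web method. It is an illustration only, not Linux ID's actual code: the function name `did_web_to_url`, the `example.org` domain, and the `maintainers:alice` path are hypothetical.

```python
from urllib.parse import unquote


def did_web_to_url(did: str) -> str:
    """Map a did:web identifier to the HTTPS URL of its DID document.

    Sketch of the did:web resolution rule; it does not fetch or
    validate the document itself.
    """
    prefix = "did:web:"
    if not did.startswith(prefix):
        raise ValueError(f"not a did:web identifier: {did!r}")

    # Colons separate the domain from any optional path segments.
    parts = did[len(prefix):].split(":")
    if not all(parts):
        raise ValueError(f"empty segment in identifier: {did!r}")

    # A port, if present, is percent-encoded in the domain part
    # (for example "example.org%3A8443").
    domain = unquote(parts[0])
    path_segments = parts[1:]

    if path_segments:
        # With a path, the document sits at <path>/did.json.
        return f"https://{domain}/{'/'.join(path_segments)}/did.json"
    # A bare domain resolves under the .well-known directory.
    return f"https://{domain}/.well-known/did.json"


# Hypothetical identifiers, for illustration only.
print(did_web_to_url("did:web:example.org"))
print(did_web_to_url("did:web:example.org:maintainers:alice"))
```

The JSON document served at that URL is where the public keys and service endpoints described in the excerpt would be listed, so trust in a did:web identifier rests on control of the domain and its HTTPS certificate.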

IT hiring is under relentless pressure. Here's how leaders are responding

The CIO's relationship with the chief human resources officer (CHRO) matters greatly, though historically, they've viewed recruitment through different lenses. HR professionals tend not to be technologists, so their approach to hiring tends to be generic. Conversely, IT leaders aren't HR professionals. Many of them were promoted to management or executive roles for their expert technical skills, not their managerial or people skills. ... The multigenerational workforce can be frustrating for everyone at times, simply because employees' lives and work experiences can be so different. While not all individuals in a demographic group are homogeneous, at a 30,000-foot view, Gen Z wants to work on interesting and innovative projects -- things that matter on a greater scale, such as climate change. They also expect more rapid advancement than previous generations, such as being promoted to a management role after a year or two versus five or seven years, for example. ... Most organizational leaders will tell you their companies have great cultures, but not all their employees would likely agree. Cultural decisions made behind closed doors by a few for the many tend to fail because too many assumptions are made, and not enough hypotheses tested. "Seeing how your job helps the company move forward has been a point of opacity for a long time, and after a certain point, it's like, 'Why am I still here?'" Skillsoft's Daly said.

Generative AI has ushered in a new era of fraud, say reports from Plaid, SEON

“Generative AI has lowered the barrier to creating fake personas, falsifying documents, and impersonating real people at scale,” says a new report from Plaid, “Rethinking fraud in the AI era.” “As a result, fraud losses are projected to reach $40 billion globally within the next few years, driven in large part by AI-enabled attacks.” The warning is familiar. What’s different about Plaid’s approach to the problem is “network insights” – “each person’s unique behavioral footprint across the broader financial and app ecosystem,” understood as a system of relationships and long-standing patterns. In these combined signals, the company says, can be found “a resilient, high-signal lens into intent, risk and legitimacy.” ... “The industry is overdue for its next wave of fraud-fighting innovation,” the report says. “The question is not whether change is needed, but what unique combination of data, insights, and analytics can meet this moment.” The AI era needs its weapon of choice, and it needs to work continuously. “AI driven fraud is exposing the limits of identity controls that were designed for point in time verification rather than continuous assurance,” says Sam Abadir, research director for risk, financial (crime & compliance) at IDC, as quoted in the Plaid report. ... The overarching message is that “AI is real, embedded and widely trusted, but it has not materially reduced the scope of fraud and AML operations.” Fraud continues to scale, enabled by the same AI boom.

The hidden cost of AI adoption: Why most companies overestimate readiness

Walk into enough leadership meetings and you’ll hear the same story told with different accents: “We need AI.” It shows up in board decks, annual strategy documents and that one slide with a hockey-stick curve that magically turns pilot into profit. ... When I talk about the hidden cost of AI adoption, I’m not talking about model pricing or vendor fees. Those are visible and negotiable. The real cost lives in the messy middle: data foundations, integration work, operating model changes, governance, security, compliance and the ongoing effort required to keep AI useful after the demo fades. ... If I had to summarize AI readiness in one sentence, it would be this: AI readiness is your organization’s ability to repeatedly take a business problem, turn it into a well-defined decision or workflow, feed it trustworthy data and ship a solution you can monitor, audit and improve. ... Having data is not the same as having usable data. AI systems amplify quality problems at scale. Until proven otherwise, “we already have the data” usually means duplicated records, inconsistent definitions, missing fields, sensitive data in the wrong places and unclear ownership. ... If it adds friction or produces unreliable outputs, adoption collapses fast. Vendor risk doesn’t disappear either. Pricing changes. Usage spikes. Workflows become coupled to tools you don’t fully control. Without internal ownership, you’re not building capability, you’re renting it.

Overcoming Security Challenges in Remote Energy Operations

The security landscape for remote facilities has shifted "dramatically," and energy providers can no longer rely on isolation for protection, said Nir Ayalon, founder and CEO of Cydome, a maritime and critical infrastructure cybersecurity firm. "These sites are just as exposed as a corporate office - but with far more complex operational challenges," Ayalon said. ... A recent PES Wind report by Cyber Energia found that only 1% of 11,000 wind assets worldwide have adequate cyber protection, while U.K.-based renewable assets face up to 1,000 attempted cyberattacks daily. Trustwave SpiderLabs also reported an 80% rise in ransomware attacks on energy and utilities in 2025, with average costs exceeding $5 million. Ransomware is the most common form of attack. ... Protecting offshore facilities is also costly and a major challenge. Sending a technician for on-site installation can run up to $200,000, including vessel rental. Ayalon said most sites lack specialized IT staff. The person managing the hardware is usually an operator or engineer and not necessarily a certified cybersecurity professional. Limited space for racks and equipment, as well as poor bandwidth, pose major challenges, said Rick Kaun, global director of cybersecurity services at Rockwell Automation. ... Designing secure offshore energy systems and shipping vessels is no longer a choice but a necessity. Cybersecurity can't be an afterthought, said Guy Platten, secretary general of the International Chamber of Shipping.

How the CISO’s Role is Evolving From Technologist to Chief Educator

Regardless of structure, modern CISOs are embedded in executive decision-making, legal strategy and supply chain oversight. Their responsibilities have expanded from managing technical defenses to maintaining dynamic risk portfolios, where trade-offs must be weighed across business functions. Stakeholders now include regulators, customers and strategic partners, not just internal IT teams. ... Effective leaders accumulate knowledge and know when to go deep and when to delegate, ensuring subject-matter experts are empowered while key decisions remain aligned to business outcomes. This blend of technical insight and strategic judgment defines the CISO’s value in complex environments. ... As security becomes more embedded in daily operations, cultural leadership plays a defining role in long-term resilience. A positive cybersecurity culture is proactive and free from blame, creating an environment where employees feel safe to speak up and suggest improvements without fear of repercussions. This shift leads to earlier detection, better mitigation and stronger overall security posture. Teams asking for security input during the design phase and employees self-reporting suspicious activity signal a mature culture that understands protection is everyone’s job. ... The modern CISO operates at the intersection of technology, risk, leadership and influence. Leaders must navigate shifting business priorities and complex stakeholder relationships while building a strong security culture across the enterprise.

