Quote for the day:

“You may be disappointed if you fail, but you are doomed if you don’t try.” -- Beverly Sills

How technical debt turns your IT infrastructure into a game you can’t win

The article compares technical debt to a high-stakes game of Jenga in which
every shortcut or deferred refactoring pulls a vital block from an
organization’s structural foundation. Quick fixes, driven by aggressive
deadlines and resource constraints, seem harmless at first; eventually they
create a "velocity trap" where development speed plummets because engineers
spend more time navigating fragile code than building new features. Beyond
slow shipping, this debt manifests as a silent budget killer through
architectural mismatches, such as using stateless frameworks for real-time
systems, resulting in exorbitant cloud costs and significant cybersecurity
vulnerabilities, as evidenced by massive data breaches at firms like Equifax.
While agile startups leverage modern, scalable architectures to outpace
incumbents, many established organizations suffer because their internal
culture discourages developers from addressing these structural issues and
treats refactoring as a distraction from value creation. To break this cycle,
businesses must stop pretending the trade-off doesn’t exist. Successful
companies explicitly measure their "technical debt ratio," tracking the
percentage of engineering time spent on maintenance versus innovation. By
acknowledging that high-quality code is a strategic asset rather than an
optional luxury, organizations can stop pulling the "safe blocks" of their
infrastructure and instead build the resilient, high-velocity systems required
to survive in an increasingly competitive global market.
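
The "technical debt ratio" mentioned above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `SprintHours` record, its two categories, and the sample numbers are hypothetical, and the article does not prescribe how hours should be classified.

```python
from dataclasses import dataclass


@dataclass
class SprintHours:
    """Engineering hours logged in one sprint (hypothetical categories)."""
    maintenance: float  # bug fixes, workarounds, untangling fragile code
    innovation: float   # new features and planned improvements


def technical_debt_ratio(sprints: list[SprintHours]) -> float:
    """Return the share of total engineering time spent on maintenance."""
    maintenance = sum(s.maintenance for s in sprints)
    total = maintenance + sum(s.innovation for s in sprints)
    if total == 0:
        raise ValueError("no engineering hours recorded")
    return maintenance / total


# Made-up sample data: maintenance grows sprint over sprint.
history = [SprintHours(120, 280), SprintHours(180, 220), SprintHours(240, 160)]
ratio = technical_debt_ratio(history)
print(f"Technical debt ratio: {ratio:.0%}")  # prints "Technical debt ratio: 45%"
```

Tracking the same figure per sprint, rather than only in aggregate, would show whether the "velocity trap" is tightening over time.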

The Compliance Blueprint: Handling Minors’ Data in the Post-DPDP Era

The blog post "The Compliance Blueprint: Handling Minors’ Data in the
Post-DPDP Era" explores the stringent regulatory landscape established by
India’s Digital Personal Data Protection (DPDP) Act for users under eighteen.
Under Section 9, organizations face significant mandates, including securing
verifiable parental consent, prohibiting behavioral tracking, and banning
targeted advertising to children. Failure to comply can result in penalties of
up to ₹200 Crore, making data protection a critical operational priority
rather than a mere policy update. The author outlines various verification
methods, such as government-backed tokens or linked family accounts, while
highlighting the "implementation paradox": verifying age often requires
collecting even more sensitive data. Operationally, businesses must redesign
user interfaces to "fork" into protective modes for minors, provide itemized
notices in multiple languages, and maintain detailed audit logs. Despite the
heavy compliance burden and challenges like the "death of personalization" for
EdTech and gaming firms, the Act serves as a vital safeguard for India’s 450
million children. Ultimately, the article advises companies to adopt a "Safety
First" mindset, viewing children’s data as a potential liability that
necessitates a fundamental shift in product design and data governance to
ensure long-term viability in the Indian digital ecosystem.
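
The idea of "forking" into a protective mode can be illustrated with a small Python sketch. It is a simplified reading of the summary above, not an interpretation of the Act itself: `ExperienceMode`, `mode_for_user`, and the rule that an account stays disabled until parental consent is verified are assumptions made for illustration, and the consent rules that apply to adults are not modeled.

```python
from dataclasses import dataclass

AGE_OF_MAJORITY = 18  # the summary describes protections for users under eighteen


@dataclass(frozen=True)
class ExperienceMode:
    """Hypothetical feature switches for one user session."""
    behavioral_tracking: bool
    targeted_ads: bool
    account_enabled: bool


def mode_for_user(age: int, parental_consent_verified: bool) -> ExperienceMode:
    """Fork into a protective mode for minors; adults keep the standard mode."""
    if age >= AGE_OF_MAJORITY:
        return ExperienceMode(True, True, True)
    # Minors: tracking and targeted ads stay off regardless of consent;
    # the account is only usable once a parent's consent has been verified
    # (an assumption of this sketch).
    return ExperienceMode(False, False, parental_consent_verified)


print(mode_for_user(age=15, parental_consent_verified=True))
```

Keeping the decision in one pure function makes it straightforward to record each result in the audit log the summary calls for.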

The need for a board-level definition of cyber resilience

The article emphasizes that the lack of a standardized definition for cyber
resilience creates significant systemic risks for organizational boards and
executive teams. Currently, conceptual fragmentation across various regulatory
frameworks makes it difficult for leadership to determine what to oversee or
how to measure success. To address this, the focus must shift from technical
metrics and security controls toward broader business outcomes, such as
maintaining operational continuity, preserving stakeholder confidence, and
ensuring financial stability during disruptions. Cyber resilience is
increasingly framed as a core leadership responsibility, with many
jurisdictions now legally requiring boards to oversee these outcomes. However,
a major point of contention remains regarding the scope of resilience,
specifically whether it includes proactive preparedness or is limited strictly
to response and recovery phases. Furthermore, resilience is no longer just
about defending against cybercrime; it encompasses all forms of digital
disruption, including unintentional outages. As global economies become more
interdependent, an individual organization’s ability to recover quickly is
essential not only for its own survival but also for overall economic
stability. Ultimately, establishing a clear, board-level definition is a
critical governance requirement that provides the foundation for navigating
the complexities of modern digital economies and ensuring long-term
institutional health.

2026 global semiconductor industry outlook: Deloitte

Deloitte’s 2026 global semiconductor industry outlook forecasts a
transformative year, with annual sales projected to reach a historic peak of
$975 billion. Driven primarily by an intensifying artificial intelligence
infrastructure boom, the sector expects a remarkable 26% growth rate following
a robust 2025. This surge is reflected in the staggering $9.5 trillion
combined market capitalization of the top ten global chip companies, though
that value remains highly concentrated among the top three. While AI chips
generate half of total revenue, they represent less than 0.2% of total unit
volume, creating a stark structural divergence. Personal computing and
smartphone markets may face declines as specialized AI demand causes consumer
memory prices to spike. Technological advancements will likely focus on
integrating high-bandwidth memory via 3D stacking and adopting co-packaged
optics to reduce power consumption by up to 50%. However, the outlook warns of
a "high-stakes paradox." While the immediate future appears solid due to
backlogged orders, 2027 and 2028 may face significant headwinds from power
grid constraints, with 92 gigawatts of additional power required, and from
potential return-on-investment concerns. Ultimately, long-term success hinges
on balancing aggressive AI investments with proactive risk mitigation against
infrastructure limits and geopolitical shifts, including India’s emergence as
a vital back-end assembly hub.

New Executive Leadership Challenges Emerging—And What’s Driving Them

In the article "New Executive Leadership Challenges Emerging—And What's
Driving Them," members of the Forbes Coaches Council highlight a significant
shift in the corporate landscape driven by hybrid work, AI integration, and
rapid systemic change. Today’s executives face a "leadership vortex," where
they must navigate role compression and overwhelming demands while maintaining
strategic clarity. A primary challenge is rebuilding connection in hybrid
environments, where communication gaps are more visible and psychological
safety is harder to cultivate. Leaders are moving beyond traditional
performance metrics to focus on their "being," cultivating a leadership
identity that prioritizes generative dialogue and mutual accountability over
mere individual contribution. The rise of AI has introduced systemic
ambiguity, requiring a pivot from "expert" to "explorer" to manage fears of
obsolescence. Furthermore, the modern era demands a heightened appetite for
change and a renewed focus on team cohesion, as previous playbooks rewarding
certainty and control become less effective. Ultimately, successful leadership
now hinges on expanding personal capacity and translating technical
uncertainty into a shared, meaningful vision. This evolution reflects a
broader trend where emotional intelligence and adaptive identity are as
critical as technical expertise in steering organizations through
unprecedented volatility and complexity.

New US Air Force Office Will Focus on OT Cybersecurity

The U.S. Air Force has pioneered a critical shift in military defense by
establishing the Cyber Resiliency Office for Control Systems (CROCS), the
first dedicated office within the American military services focused
specifically on operational technology (OT) cybersecurity. Launched to address
vulnerabilities in essential infrastructure like power grids, water supplies,
and HVAC systems, CROCS serves as a central "front door" for managing the
security of non-traditional IT assets that are vital for mission readiness.
While the office reached initial operating capability in 2024, its creation
followed years of bureaucratic effort to recognize OT systems as primary
targets for foreign adversaries seeking asymmetric advantages. A significant
milestone for the office was successfully integrating OT security costs into
the Department of Defense’s long-term budgeting process, ensuring that
assessments, training, and mitigations are formally funded rather than treated
as secondary mandates. Directed by Daryl Haegley, CROCS does not execute all
security tasks directly but instead coordinates contracts, personnel, and
prioritized strategies to bridge reporting gaps between engineering teams and
the CIO. By modeling itself after the Air Force’s existing weapon systems
resiliency office, CROCS aims to build a robust defense pipeline, ultimately
securing the foundational utilities that allow the military to function
globally.

Rethinking Business Processes for the Age of AI

The article "Rethinking Business Processes for the Age of AI" by Vasily
Yamaletdinov explores the fundamental evolution of business architecture as
organizations transition from human-centric automation to agentic AI systems.
Traditionally, business processes have relied on BPMN 2.0, a notation designed
for deterministic, repeatable, and rigid sequences. However, these classical
methods struggle with the non-deterministic nature of AI, which requires
dynamic planning and context-driven decision-making. The author argues that
modern AI-native processes must shift from "rigid conveyor belts" to flexible
systems that prioritize goals, guardrails, and autonomy over strict
algorithmic steps. To address the limitations of traditional BPMN (such as
poor exception handling and an inability to model uncertainty), the article
advocates for Goal-Oriented BPMN (GO-BPMN). This approach decomposes processes
into a tree of objectives and modular plans, allowing AI agents to dynamically
select the best path based on real-time context. By integrating a
"Human-in-the-loop" framework and supporting the "Reason-Act-Observe" cycle,
GO-BPMN enables a hybrid environment where deterministic operations and
intelligent agents coexist. Ultimately, while traditional modeling remains
valuable for highly regulated tasks, GO-BPMN provides the necessary framework
for building resilient, adaptive, and truly intelligent enterprise operations
in the burgeoning age of AI.
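
The "tree of objectives and modular plans" can be pictured with a toy Python model. This is a minimal sketch of the general idea, not GO-BPMN notation or any vendor's engine: the `Goal` and `Plan` classes, the guard functions, and the refund example are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Any, Callable

Context = dict[str, Any]


@dataclass
class Plan:
    name: str
    applies: Callable[[Context], bool]  # guard evaluated against live context


@dataclass
class Goal:
    name: str
    plans: list[Plan] = field(default_factory=list)
    subgoals: list["Goal"] = field(default_factory=list)


def select_plans(goal: Goal, ctx: Context) -> list[str]:
    """Walk the goal tree depth-first and pick one applicable plan per leaf goal."""
    if goal.subgoals:
        chosen: list[str] = []
        for sub in goal.subgoals:
            chosen.extend(select_plans(sub, ctx))
        return chosen
    for plan in goal.plans:
        if plan.applies(ctx):
            return [f"{goal.name}: {plan.name}"]
    # No guard matched: hand over to a person (human-in-the-loop fallback).
    return [f"{goal.name}: escalate to human"]


refund = Goal("Resolve refund request", subgoals=[
    Goal("Verify purchase", plans=[
        Plan("look up order ID", lambda c: "order_id" in c),
        Plan("match by email and date", lambda c: "email" in c),
    ]),
    Goal("Issue refund", plans=[
        Plan("auto-approve", lambda c: c.get("amount", 0) <= 100),
    ]),
])

print(select_plans(refund, {"email": "a@example.com", "amount": 250}))
```

With this context the sketch picks the email-based verification plan and escalates the refund itself, since the amount exceeds the auto-approve guard. The path is chosen from context at run time rather than fixed in a sequence flow.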

Runtime FinOps: Making Cloud Cost Observable

Shadow AI and the new visibility gap in software development

The rise of "shadow AI" in software development has introduced a significant
visibility gap, posing new challenges for organizations and managed service
providers. As developers increasingly turn to unapproved AI tools and agents
to boost productivity, they inadvertently create a "lethal trifecta" of risks
involving sensitive private data, external communications, and vulnerability
to malicious prompt injections. This unauthorized usage bypasses traditional
security monitoring like SaaS discovery platforms because AI agents often
operate within local engineering environments or through personal API keys. To
address this, the article suggests shifting from futile attempts to block AI
toward a governance-first infrastructure. By routing AI access through
centrally managed platforms and implementing process-level controls at
runtime, organizations can secure data flows and restrict agents to approved
services without stifling innovation. This approach allows developers to
maintain their preferred workflows while providing the oversight necessary to
prevent code leaks and compliance breaches. Ultimately, closing the visibility
gap requires building governance around fundamental development processes
rather than individual tools, enabling partners to guide businesses through a
secure evolution of AI integration that scales from initial modernization to
advanced agentic automation.
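
Restricting agents to approved services could, in its simplest form, be an egress check like the Python sketch below. The host name and the `is_request_allowed` helper are hypothetical, and the article does not describe a specific mechanism; a real deployment would more likely enforce this at a proxy or in process-level policy than in application code.

```python
from urllib.parse import urlparse

# Hypothetical centrally managed gateway; not a real endpoint.
APPROVED_AI_HOSTS = {"ai-gateway.internal.example"}


def is_request_allowed(url: str) -> bool:
    """Allow outbound AI calls only when they target an approved host."""
    host = urlparse(url).hostname or ""
    return host in APPROVED_AI_HOSTS


for target in (
    "https://ai-gateway.internal.example/v1/chat",
    "https://api.unapproved-llm.example/v1/chat",
):
    verdict = "allow" if is_request_allowed(target) else "block"
    print(f"{verdict}: {target}")
```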

The rise of "shadow AI" in software development has introduced a significant

visibility gap, posing new challenges for organizations and managed service

providers. As developers increasingly turn to unapproved AI tools and agents

to boost productivity, they inadvertently create a "lethal trifecta" of risks

involving sensitive private data, external communications, and vulnerability

to malicious prompt injections. This unauthorized usage bypasses traditional

security monitoring like SaaS discovery platforms because AI agents often

operate within local engineering environments or through personal API keys. To

address this, the article suggests shifting from futile attempts to block AI

toward a governance-first infrastructure. By routing AI access through

centrally managed platforms and implementing process-level controls at

runtime, organizations can secure data flows and restrict agents to approved

services without stifling innovation. This approach allows developers to

maintain their preferred workflows while providing the oversight necessary to

prevent code leaks and compliance breaches. Ultimately, closing the visibility

gap requires building governance around fundamental development processes

rather than individual tools, enabling partners to guide businesses through a

secure evolution of AI integration that scales from initial modernization to

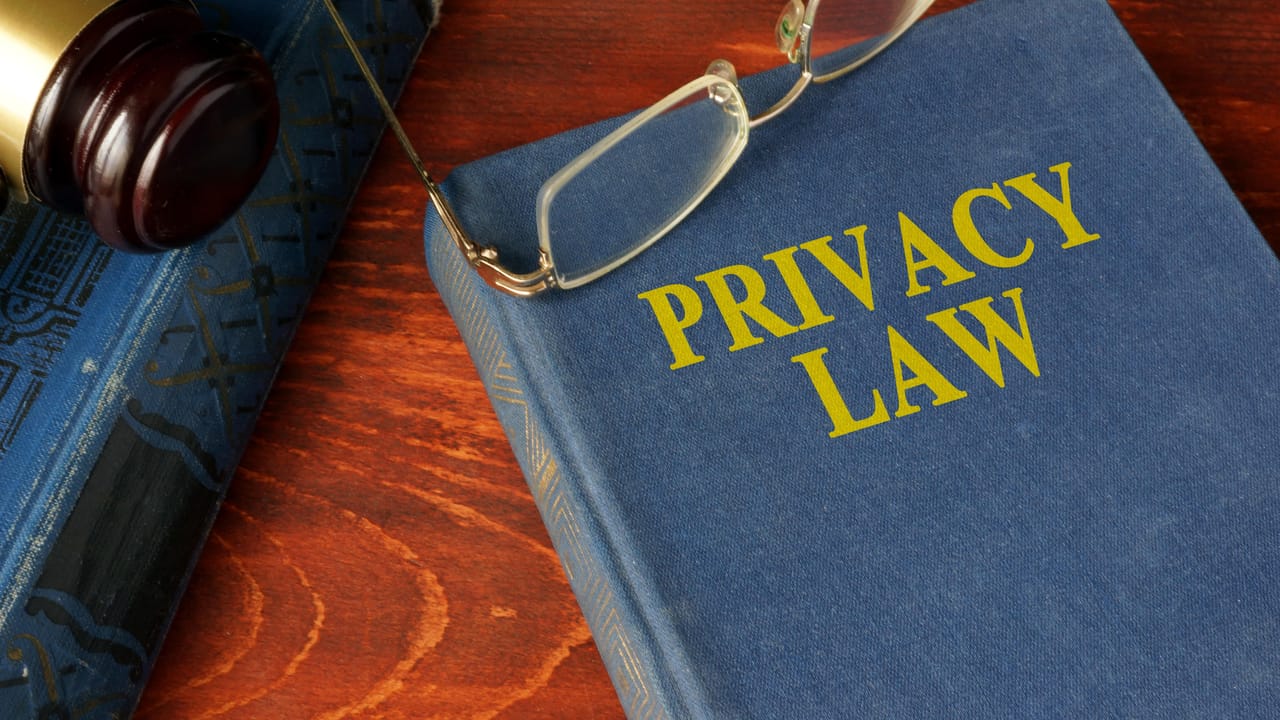

advanced agentic automation.Audit: Big Tech Often Ignores CA Privacy Law Opt-Out Requests

A recent independent audit conducted by privacy organization WebXray reveals
that major technology companies, specifically Google, Meta, and Microsoft,
frequently fail to honor legally mandated data collection opt-out requests in
California. Despite the California Consumer Privacy Act (CCPA) requiring
businesses to respect the Global Privacy Control (GPC) signal, a browser-based
mechanism allowing users to decline personal data sharing, the audit found
widespread non-compliance. Google emerged as the worst offender with an 86%
failure rate, followed by Meta at 69% and Microsoft at 50%. Researchers
observed that Google’s servers often respond to opt-out signals by explicitly
commanding the creation of advertising cookies, such as the “IDE” cookie,
effectively ignoring the user's preference in "plain sight." In response, Meta
dismissed the findings as a “marketing ploy,” while Microsoft claimed that
some cookies remain necessary for operational functions rather than
unauthorized tracking. This systemic disregard for privacy signals underscores
the ongoing tension between Big Tech and state regulations. To address these
gaps, the report recommends that security professionals treat privacy
telemetry with the same rigor as security data, conducting frequent audits of
third-party data flows and aligning runtime behavior with privacy controls to
ensure legitimate regulatory compliance.
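
An audit of the kind described above can be approximated in Python: given a request's headers and the cookie names set by the response, flag advertising cookies that appear even though the opt-out signal was sent. This is a rough sketch, not WebXray's methodology. It assumes the GPC signal arrives as the `Sec-GPC: 1` request header; the function names are hypothetical, and the cookie list contains only the "IDE" cookie named in the summary.

```python
AD_COOKIE_NAMES = {"IDE"}  # cookie named in the audit summary; extend as needed


def sent_gpc_opt_out(request_headers: dict[str, str]) -> bool:
    """True when the request carries the GPC header `Sec-GPC: 1`."""
    headers = {name.lower(): value.strip() for name, value in request_headers.items()}
    return headers.get("sec-gpc") == "1"


def ad_cookies_set_despite_opt_out(
    request_headers: dict[str, str], set_cookie_names: list[str]
) -> list[str]:
    """Return advertising cookies a response set even though the user opted out."""
    if not sent_gpc_opt_out(request_headers):
        return []
    return sorted(name for name in set_cookie_names if name in AD_COOKIE_NAMES)


print(ad_cookies_set_despite_opt_out({"Sec-GPC": "1"}, ["IDE", "session"]))
```

Running a check like this against recorded third-party traffic is one way to treat privacy telemetry with the same rigor as security data.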
