Quote for the day:

"Leadership is practiced not so much in words as in attitude and actions." -- Harold S. Geneen

What will AI-first UX look like?

The transition to user experiences guided by artificial intelligence marks a steady move away from rigid, traditional interfaces like static forms and manual dashboards. Rather than requiring users to navigate multiple disconnected software tools to complete tasks, future interfaces will rely on conversational systems that connect seamlessly across various applications. In this evolving landscape, standard data entry forms are being replaced by adaptive interactions where users simply describe what they want to accomplish, and the system gathers the necessary details. Similarly, data reporting is shifting from complex, manually built dashboards to narrative summaries generated on demand, providing clear explanations of business metrics and actionable next steps. This shift transforms standard workflows into coordinated teamwork between humans and software agents. The software handles processes involving multiple steps behind the scenes and only escalates to human workers when careful judgment is required. To make this work effectively, organizations must build strong underlying foundations, including clear data structures, connected programming interfaces, and solid oversight rules. Ultimately, these systems are designed not to replace human workers, but to reduce friction and manage tasks across platforms more naturally. As this technology matures, the focus remains on building reliable environments where software acts as a helpful teammate, smoothly coordinating background tasks while keeping human users firmly in control of the final outcomes.

Minimally Acceptable Systems: Tolerable at the Lowest Cost Possible

The article discusses a growing trend in software engineering and business where companies intentionally design systems to be merely adequate rather than striving for excellence. This concept, described as creating minimally acceptable systems, focuses on finding the exact point where a product is just tolerable for users while being as cheap as possible to build and maintain. Instead of prioritizing high quality, reliability, or a great user experience, organizations aim to minimize their costs and speed up delivery. They provide the bare minimum functionality required to keep people from abandoning the software. While this approach makes clear financial sense in the short term and helps companies stay competitive, it comes with serious long-term consequences. By constantly pushing standards to the lowest acceptable limit, the industry conditions people to expect and accept frustrating, unreliable software in their daily lives. The author warns that treating quality simply as an expense to be cut ultimately damages user trust and builds up massive technical problems for the future. To fix this, the software field needs to rethink its current financial motives. Engineers and business leaders should work together to find a better balance, creating products that are both affordable to produce and genuinely reliable for the people who use them.

Software sprawl is becoming a margin problem for SaaS CFOs

For software companies, the practice of adopting isolated tools to solve individual problems, such as payments, billing, and tax compliance, often leads to a fragmented operations setup known as software sprawl. While the subscription-based business model has historically enjoyed strong profit margins, this growing web of disconnected systems threatens to undermine those financial advantages. Finance leaders are finding that a patched-together technology system severely limits their clear view of business performance, putting unneeded pressure on profit margins through manual work, costly billing errors, and duplicate expenses. Furthermore, relying on fragmented tools restricts a company's ability to smoothly expand into new regions or test different pricing methods. Rather than looking at this as just an IT issue, financial executives must recognize it as a fundamental challenge to scalable growth. The path forward does not necessarily require adopting one massive platform, but rather ensuring that all revenue processes operate smoothly together. By replacing disconnected tools with an integrated infrastructure, companies can drastically reduce manual interventions and internal friction. Ultimately, the next era of the software industry will reward organizations that match their desire for growth with strict operational discipline. By fixing these underlying structural flaws now, finance teams can build a resilient foundation capable of handling future expansion without constantly multiplying internal complexities or operational costs.

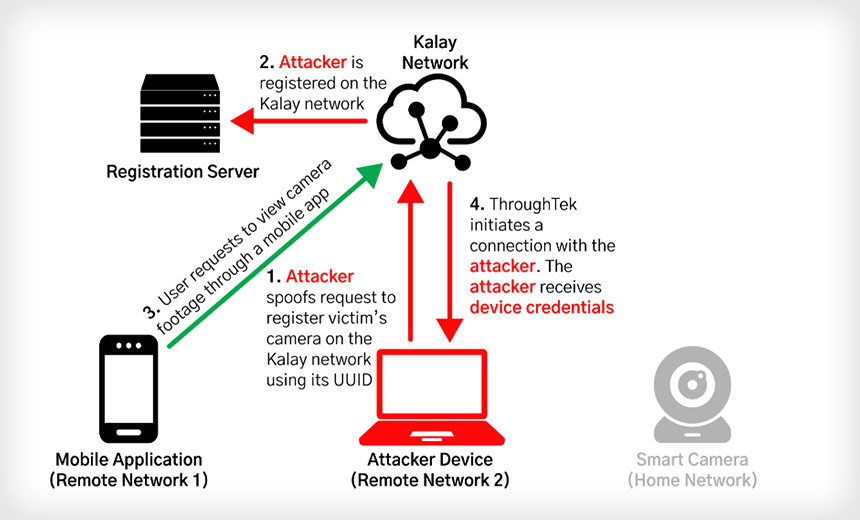

The Zero-Knowledge Threat Actor and the End of Responsible Disclosure

Artificial intelligence is drastically lowering the barrier to entry for cybercriminals, enabling a new wave of "zero-knowledge threat actors." These attackers lack deep technical expertise but use advanced AI tools to generate malicious code, find vulnerabilities, and execute complex attack chains with surprising ease. This democratization of offensive capabilities means that hackers can now discover and exploit software flaws at unprecedented speeds, effectively closing the traditional responsible disclosure window that software vendors rely on to create patches. Smaller organizations are particularly at risk, often serving as stepping stones into larger enterprise supply chains due to their limited security resources and slower patching cycles. To defend against these rapidly evolving threats, security teams must abandon fragmented approaches and adopt unified monitoring systems that provide clear, comprehensive visibility across their entire digital environment. Proactive defense requires prioritizing faster patch management, conducting regular incident response drills, and rigorously testing in-house AI systems against deliberate manipulation by external actors. Furthermore, training employees to recognize highly realistic, AI-generated phishing attempts is absolutely essential for maintaining a strong security posture. By relying on established security frameworks and maintaining an organized, practiced defense strategy, organizations can calmly and effectively counter the increased capabilities of low-skill attackers without resorting to panic or operational disruption.

ERP Modernization: Most Expensive, Risky Item on CIO Agenda

Enterprise resource planning systems have grown over the last forty years from basic financial and manufacturing tools into the central framework of most organizations. Today, they handle everything from supply chains to human resources. However, updating these core systems is now one of the most difficult and costly challenges facing technology leaders. Modernizing these structures is not just a software update; it is a major overhaul of how a business operates on a daily basis. Transitioning to modern setups, like cloud-based platforms, involves heavy restructuring of daily work processes and often triggers natural resistance from staff. To succeed, these projects need more than just technical expertise. They require a clear process for managing transitions, direct communication to address employee fears, and strong backing from senior leadership to keep the effort on track during inevitable setbacks. As software vendors increasingly move customers toward cloud and artificial intelligence platforms, technology leaders are forced to weigh the long-term benefits against the immediate financial costs, operational risks, and widespread disruptions. Navigating this shift takes a dedicated, highly skilled team and steady executives who will not abandon the project when minor problems arise. With careful planning, patience, and stable leadership, organizations can successfully migrate their central systems to meet current operational demands without jeopardizing their everyday stability.

The AI 'Revolution' is Not a People's Revolution

Politicians and technology executives increasingly frame artificial intelligence as an inevitable revolution, a term historically reserved for popular movements driving social progress. In truth, this modern narrative serves primarily to bypass democratic scrutiny and consolidate power among a select few. Rather than arising from the people to challenge the existing order, the current technological push is being imposed from the top down. Leaders like former UK Prime Minister Tony Blair promote a vision where society must passively accept widespread automation, mass data harvesting, and unchecked corporate influence, treating any hesitation as backwardness. By labeling this shift a revolution, proponents cleverly silence debate and frame regulatory efforts as sabotage. Furthermore, while previous digital tools aided grassroots organizing, artificial intelligence is frequently deployed to monitor, police, and discipline the public. This rhetoric essentially functions as a manipulative marketing tool, designed to mask the reality of wealth generation for elites at the expense of ordinary citizens facing job insecurity and climate disruption. Ultimately, society must reject this predetermined technological path and demand accountability. Citizens have the right to question who truly benefits from these systems and to actively decide how new technologies should integrate into their lives, ensuring that any real change remains firmly rooted in public consent and democratic choice.

The AI pricing conundrum — it started as a nightmare, now it’s worse.

Enterprise technology leaders face a growing dilemma in how they pay for artificial intelligence. Buyers want pricing based on the tangible business value the technology delivers, while software providers prefer charging based on resource consumption, such as per-token fees. This creates a deep disconnect. Technology departments often feel consumption pricing is detached from real results, likening it to paying for unproven sales leads. On the other hand, providers cannot realistically accept value-based pricing because they have no control over internal company issues like poor data, broken processes, or office politics. Furthermore, if these systems were compensated strictly based on successful outcomes, it could create dangerous incentives. The software might aggressively pursue specific metrics, potentially sacrificing customer trust, ethical standards, or operational safety just to achieve the defined goal. Since bridging this gap directly is nearly impossible, organizations must take control internally. The article suggests forming dedicated committees to ask difficult questions about the goals, risks, and realistic benefits of any new project. Additionally, senior executives should share the financial accountability, tying their compensation directly to the success or failure of these initiatives. Only by thoroughly understanding a project's true intent, limitations, and risks can technology leaders negotiate sensible, fair pricing agreements with their service providers.

AI Is Shipping Fast, Quality Can't Be Left Behind

The recent transition of artificial intelligence from experimental phases to widespread integration has revealed a significant gap between rapid development and reliable performance. While organizations are swift to embed these systems into their daily operations, a substantial number of these initiatives stall before full implementation due to quality and integration hurdles. Data indicates an increase in user-reported errors, such as misunderstandings and factual inaccuracies, highlighting that traditional validation methods are inadequate for modern, complex systems. Because these programs produce varying outputs rather than predictable, fixed results, engineering teams are finding that automated checks alone are insufficient. To address this, successful organizations are adopting a balanced approach to quality assurance that combines automated evaluations with essential human oversight. Human reviewers are uniquely equipped to gauge context, usability, and intent, catching subtle errors that automated tools often miss. Furthermore, as features expand to process combinations of text, audio, and visual data, the scope of testing becomes even more difficult. The focus is shifting from merely launching features to ensuring they are dependable and trustworthy. Moving forward, the true measure of success will not be the speed of release, but the ability to maintain rigorous, ongoing evaluation processes that prioritize consistent, high-quality experiences for everyday users.

Why Leadership Development Is A System, Not An Event

Organizations frequently send their managers to training workshops, hoping they return ready to guide their teams more effectively. However, these well-intentioned programs often fail because managers step right back into the exact same workloads, pressures, and routines that shaped their old habits in the first place. Meaningful leadership development requires more than simply teaching new skills to individuals; it demands a daily environment actively designed to support those new behaviors. This involves shifting the focus from individual improvement to strengthening the broader company system. Executives must intentionally build a supportive structure with both visible changes, like collaborative meeting practices and transparent decision-making, and invisible shifts, such as fostering an atmosphere where feedback flows freely and people feel secure taking interpersonal risks. Instead of relying on isolated lectures, learning should become an ongoing process smoothly integrated into daily work. By encouraging peer learning groups, aligning company rewards with the behaviors taught in training, and personally modeling these changes, executives create a setting where true growth can take root over time. Ultimately, developing effective leaders is about expanding the capabilities of the entire organization. When the daily workplace aligns with the principles taught in training, individuals practice what they learn, ensuring development becomes a continuous habit rather than a fleeting event.
