Quote for the day:

“When someone really hears you without passing judgment, it feels damn good.” -- Carl Rogers

EU AI Act – the high-risk classification guidelines explained

Rising hardware costs accelerate shift to private cloud adoption

Making sense of too much code

While artificial intelligence has notably accelerated software development, creating more applications does not automatically translate into more users. Recent data shows that although AI tools have sharply increased raw coding output, raising code commits by nearly two hundred percent, actual usage of these new applications remains flat. This discrepancy highlights a fundamental reality in the software industry: writing code is often the easiest part of the process. The true challenge lies in everything that happens after the code is written, including integrating systems, ensuring security, writing clear documentation, and earning user trust. In a market flooded with similar AI-generated software, human attention is the scarcest resource. As a result, technical superiority alone is rarely enough to guarantee success. Products that thrive are typically supported by essential but frequently undervalued efforts, such as community building, recognizable branding, and effective technical marketing. Developers often dismiss traditional advertising, but they value deep, hands-on guidance and comprehensive tutorials, which are simply different forms of marketing. Ultimately, while AI tools are useful for improving developer efficiency, they cannot replace the human effort required to connect a product with its audience. Earning market share still relies heavily on the steady, unglamorous work of helping people understand and apply your technology effectively.

How AI Agents Are Reshaping DataOps for the Always-On Enterprise

5 ways data centers endanger their local communities and the country as a whole

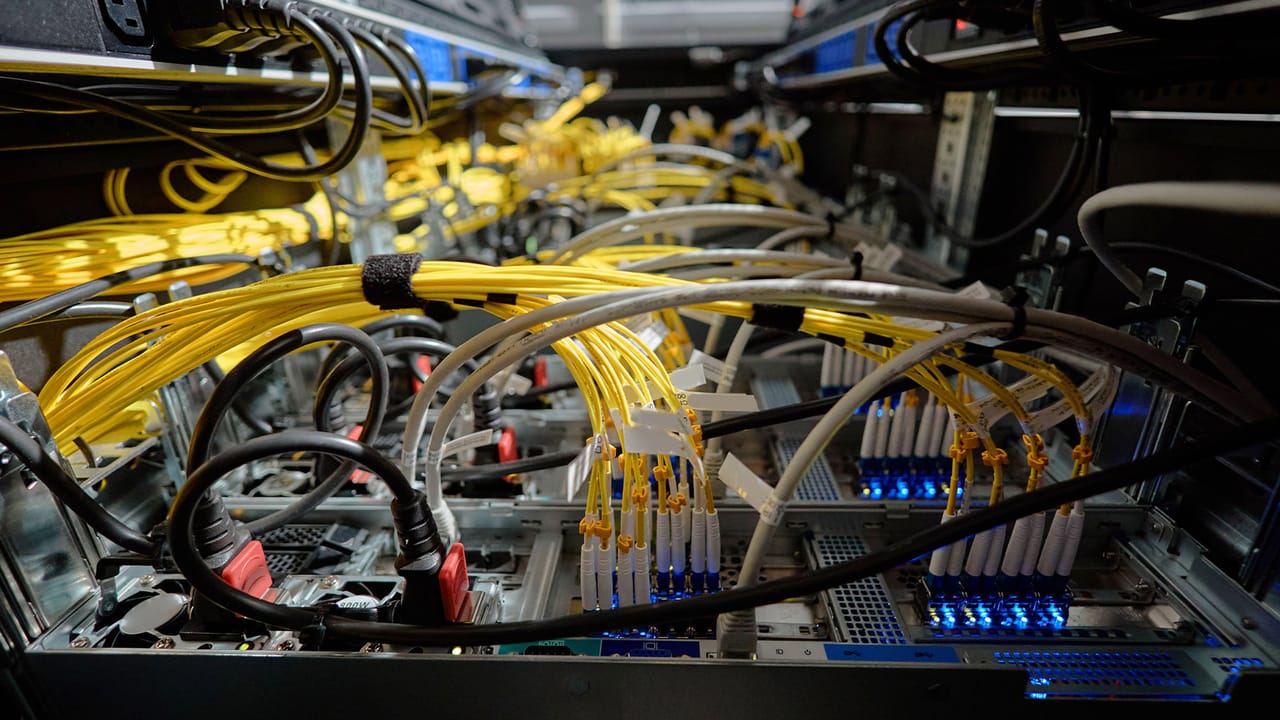

Data centers are the physical backbone of our digital world, but their rapid expansion poses significant risks to local communities and the broader public. According to a study focusing on facilities in Virginia, which hosts the highest concentration of data centers in the United States, these massive structures create five primary hazards. First, they demand enormous amounts of electricity, which, when generated by fossil fuels or backup diesel generators, releases harmful air pollutants and greenhouse gases. Second, servers require millions of gallons of water for cooling, placing severe strain on local rivers and municipal water supplies, even in areas not prone to drought. Third, the constant operation of air chillers and cooling fans produces a persistent, low-frequency hum that can disrupt residents' sleep and reduce their overall wellbeing. Fourth, developers frequently target affordable green spaces and agricultural land for new construction, replacing natural environments with heavy industrial zones and increasing diesel truck traffic. Finally, the massive electricity demand of data centers stresses the power grid, driving up energy costs for everyday consumers and disproportionately affecting lower-income families. While targeted solutions like transitioning to renewable energy, utilizing recycled water systems, reengineering fan mounts, and shifting grid costs to developers can mitigate these impacts, unchecked expansion remains a serious threat to public health and the environment.

AI in SDLC Right Now: What's Working and What Isn't

Artificial intelligence is steadily finding its place in the software development life cycle, but its current value is uneven across different stages. Right now, AI tools are highly effective at handling repetitive, well-defined tasks. Developers are seeing real benefits from code completion assistants, which reliably write boilerplate code and suggest basic functions, saving substantial time. AI is also proving useful in automated testing, where it can quickly generate test cases and identify simple bugs before human review. However, the technology still struggles with complex logic and broad system architecture. When asked to design entire applications or refactor massive legacy codebases, AI often introduces subtle errors or suggests inefficient patterns that require heavy human correction. It also lacks an understanding of business context, meaning it cannot determine if a correctly written feature actually solves the underlying user problem. Furthermore, security remains a concern, as AI-generated code can occasionally include vulnerabilities if the training data was flawed. The most practical approach today is to treat AI as a capable junior assistant rather than an independent expert. By assigning it routine coding chores and initial code reviews, engineering teams can free up their human developers to focus on high-level system design, complex problem solving, and ensuring the software genuinely meets user needs.

15 tough cybersecurity questions every CISO must answer

The article outlines the challenging questions Chief Information Security Officers (CISOs) must be prepared to answer when facing their board of directors or executive leadership. Rather than focusing on complex technical details, these questions target the broader business impact of security programs. Leaders want to know the plain truth about the organization’s current risk level, specifically asking what the most likely threats are and how those threats could affect daily operations. CISOs are expected to clearly explain how they measure success and whether the current security budget is actually reducing risk. Other crucial topics include the organization's overall readiness for a major breach, the exact steps planned for recovery, and how long it would realistically take to restore normal business functions. The questions also probe the security of external vendors and partners, acknowledging that vulnerabilities often originate outside the company’s direct control. Furthermore, executives need assurance that the security team has the right talent and that everyday employees are adequately trained to avoid common mistakes. Ultimately, the guide emphasizes that a modern security leader cannot just manage technology. They must translate complex challenges into straightforward business terms, proving that their strategies protect the company's critical assets and customer data without slowing down its financial growth or operational efficiency.

Why digital governance is quietly redefining modern trusteeship

Historically, the role of a trustee focused almost entirely on safeguarding physical property and managing financial wealth. Today, the rapid shift toward digital operations has fundamentally redefined what it actually means to be a modern trustee. As organizations and individuals accumulate vast amounts of digital assets, data records, and online infrastructure, the everyday responsibilities of a trustee have expanded far beyond their traditional boundaries. Good digital governance now requires these professionals to actively oversee cybersecurity measures, manage complex data privacy regulations, and protect sensitive information from constant external threats. Without strong digital policies, these vital assets are left completely vulnerable to theft and mismanagement. Instead of relying on slow, manual oversight, modern trustees must use automated compliance tools and secure digital platforms to monitor their operations in real time. This technological shift ensures that all managed assets remain secure while maintaining complete transparency for the beneficiaries involved. Furthermore, integrating solid digital governance into daily practices allows trustees to make much faster, more informed decisions based on accurate data. Adapting to this new reality is no longer an optional upgrade; it is a critical requirement for maintaining trust. By fully embracing these digital frameworks, modern fiduciaries can confidently protect long-term interests, prevent unnecessary risks, and ensure lasting stability in an increasingly complicated online world.

The architecture of subtraction: Why it’s time to erase the roads, not just map the traffic

As artificial intelligence drastically shortens the time it takes attackers to turn newly discovered vulnerabilities into active exploits, relying on software patching as a primary defense is no longer a practical strategy. Patching is inherently reactive; it forces security teams into a continuous cycle of applying temporary fixes without actually closing the underlying avenues that attackers use to move through a network. Furthermore, simply prioritizing which patches to apply first does not solve this fundamental structural flaw. Instead, organizations should adopt a subtractive approach to security, which focuses on permanently erasing unneeded attack paths rather than merely managing a backlog of flaws. This method centers on minimizing privileges and stripping away unnecessary system capabilities, such as disabling outdated protocols, restricting internet access for specific applications, or blocking tools like SSH for employees who do not genuinely need them. By taking the time to understand exactly what functionality is required for normal daily operations, engineering teams can safely disable the rest. This targeted strategy allows defenders to implement firm structural constraints that completely eliminate entire categories of attack techniques across their environments. Ultimately, taking away the very terrain that attackers rely upon provides a much stronger, more enduring defense than constantly racing to apply the latest security update.
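
One way to picture the subtractive approach is as a set difference between what is enabled and what is actually used. The Python sketch below is illustrative only: the host names, the capability labels, and the `plan_subtraction` helper are hypothetical and do not come from the article, and real usage data would need to be gathered over a representative period before anything is disabled.

```python
from typing import Dict, Set


def plan_subtraction(enabled: Dict[str, Set[str]],
                     observed: Dict[str, Set[str]]) -> Dict[str, Set[str]]:
    """Return, per host, the enabled capabilities that were never observed in use."""
    plan = {}
    for host, capabilities in enabled.items():
        # Anything enabled but not seen in normal operations is a removal candidate.
        unused = capabilities - observed.get(host, set())
        if unused:
            plan[host] = unused
    return plan


# Hypothetical inventory: what each host currently allows.
enabled = {
    "hr-laptop": {"ssh", "smbv1", "https"},
    "build-server": {"ssh", "https"},
}
# Hypothetical usage data gathered during normal daily operations.
observed = {
    "hr-laptop": {"https"},
    "build-server": {"ssh", "https"},
}

removal_plan = plan_subtraction(enabled, observed)
for host, capabilities in sorted(removal_plan.items()):
    print(host, "-> disable:", ", ".join(sorted(capabilities)))
```

In this toy inventory only the HR laptop has candidates for removal, since the build server uses everything it has enabled. The point of the sketch is the direction of the question: it asks what can be taken away, not which flaw to patch first.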

Quality as Business Technology Architecture: A New Model for Digital Enterprises

While many organizations invest heavily in digital upgrades, they often struggle to innovate safely because of how they handle quality control. Historically, quality management has functioned purely as a rigid compliance tool, relying on isolated processes, heavy paperwork, and reactive fixes to pass audits. However, as operations become more complex and data-driven, this traditional approach creates constant bottlenecks. To succeed today, companies must stop treating quality as a separate checkpoint and instead build it directly into their foundational business and technology structures. This means designing an integrated system across three main areas. First, core processes like tracking errors and managing suppliers must be connected into smooth, end-to-end workflows to spot root causes faster. Second, data must be standardized and shared across platforms so teams can actively use it to make informed decisions rather than just filing reports. Finally, the underlying technology must connect these workflows seamlessly rather than reinforcing old silos. This shift requires a major cultural change, moving quality teams away from simply policing mistakes toward helping design better processes from the start. Ultimately, advanced tools like artificial intelligence and automation will only work if they rest on a well-designed, integrated quality foundation. Leaders must coordinate across departments to build this architectural backbone, ensuring their organizations remain safe, compliant, and adaptable.
