Quote for the day:

“Every time you have to speak, you are auditioning for leadership.” -- James Humes

How To Navigate The New Economics Of Professionalized Cybercrime

The modern cybercrime landscape has evolved into a professionalized industry where attackers prioritize precision and severity over volume. According to recent data, while the frequency of material claims has decreased, the average cost per ransomware incident has surged, signaling a shift toward more efficient targeting. This new economic reality is defined by three primary trends: the rise of data-theft extortion, the prevalence of identity attacks, and the long-tail financial consequences that follow a breach. Because businesses have improved their backup and recovery systems, criminals have pivoted from simple encryption to threatening the exposure of sensitive data, often leveraging AI to analyze stolen information for maximum leverage. Furthermore, the professionalization of these threats extends to supply chain vulnerabilities, where a single vendor compromise can cause cascading losses across thousands of downstream clients. Consequently, cyber incidents are no longer isolated technical failures but material enterprise risks with financial repercussions lasting years. To navigate this environment, organizational leaders must shift their focus from mere operational recovery to robust data exfiltration prevention. CISOs, CFOs, and CROs must collaborate to integrate cyber risk into broader enterprise frameworks, ensuring that financial planning and security investments account for the multi-year legal, regulatory, and reputational exposures that now characterize the threat landscape.

How Agentic AI is transforming the future of Indian healthcare

Agentic AI represents a transformative shift in the Indian healthcare landscape, transitioning from passive data analysis to autonomous, goal-oriented systems that proactively manage patient care. Unlike traditional AI, which primarily focuses on reporting, agentic systems independently execute tasks such as triaging, scheduling, and continuous monitoring to address India’s strained doctor-to-patient ratio. By integrating these intelligent agents, medical facilities can streamline outpatient visits, from digital symptom recording to automated post-consultation follow-ups, significantly reducing the administrative burden on overworked clinicians. The technology is particularly vital for chronic disease management, where it provides timely nudges for medication adherence and identifies early warning signs before they escalate into emergencies. Furthermore, Agentic AI acts as a crucial support layer for frontline health workers in rural regions, bridging the clinical knowledge gap through real-time protocol guidance and decision support. While these advancements offer a scalable solution for public health, the article emphasizes that human empathy remains irreplaceable. Successful adoption requires robust frameworks for data privacy and ethical transparency, ensuring that physicians always retain final decision-making authority. Ultimately, by evolving from a mere tool into essential digital infrastructure, Agentic AI is poised to democratize access and foster a more responsive, patient-centric healthcare ecosystem across the diverse Indian population.

What a Post-Commercial Quantum World Could Look Like

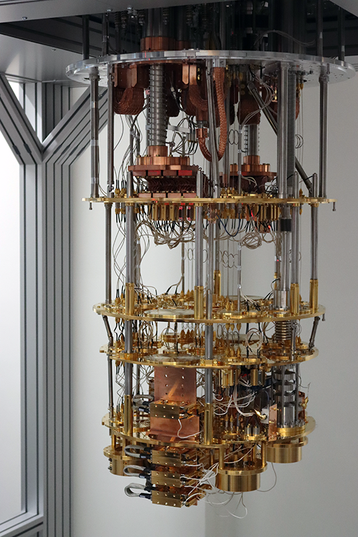

The article "What a Post-Commercial Quantum World Could Look Like," published by The Quantum Insider, explores a future where quantum computing has moved beyond its initial commercial hype into a phase of deep integration and stabilization. In this post-commercial era, the focus shifts from the race for "quantum supremacy" toward the practical, ubiquitous application of quantum technologies across global infrastructure. The piece suggests that once the technology matures, it will cease to be a standalone industry of speculative startups and instead become a foundational utility, much like the internet or electricity today. Key impacts include a complete transformation of cybersecurity through quantum-resistant encryption and the optimization of complex systems in logistics, materials science, and drug discovery that were previously unsolvable. This transition will likely lead to a "quantum divide," where geopolitical and economic power is concentrated among those who have successfully integrated these capabilities into their national security and industrial frameworks. Ultimately, the article paints a picture of a world where quantum mechanics no longer represents a frontier of experimental physics but serves as the silent, invisible engine driving high-performance global economies and ensuring long-term technological resilience.

Continuous AI biometric identification: Why manual patient verification is not enough!

The article explores the critical transition from manual patient verification to continuous AI-powered biometric identification in modern healthcare. Traditional methods, such as verbal confirmations and physical wristbands, are increasingly deemed insufficient due to their susceptibility to human error and data entry inconsistencies, which often lead to fragmented medical records and life-threatening mistakes. To address these vulnerabilities, the industry is shifting toward a model of constant identity assurance using advanced technologies like facial biometrics, behavioral signals, and passive authentication. This continuous approach ensures real-time validation across all clinical touchpoints, significantly reducing the risks associated with duplicate electronic health records, currently estimated at 8-12% of total files. Furthermore, the integration of agentic AI and multimodal systems (combining fingerprints, voice, and device data) creates a secure identity layer that streamlines clinical workflows and protects patients from misidentification. With the healthcare biometrics market projected to reach $42 billion by 2030, the article argues that automating identity verification is no longer optional. Ultimately, by replacing episodic manual checks with autonomous, intelligent monitoring, healthcare organizations can enhance data integrity, safeguard financial interests against identity fraud, and, most importantly, ensure the highest standards of safety for the individuals in their care.

The 4 disciplines of delivery — and why conflating them silently breaks your teams

In his article for CIO, Prasanna Kumar Ramachandran argues that enterprise success depends on maintaining four distinct delivery disciplines: product management, technical architecture, program management, and release management. Each domain addresses a fundamental question that the others are ill-equipped to answer. Product management defines the "what" and "why," establishing the strategic vision and priorities. Technical architecture translates this into the "how," determining structural feasibility and sequence. Program management orchestrates the delivery timeline by managing cross-team dependencies, while release management ensures safe, compliant deployment to production. Organizations frequently stumble by treating these roles as interchangeable or asking a single team to bridge all four. This conflation "silently breaks" teams because it forces experts into roles outside their core competencies. For instance, an architect focused on product decisions might prioritize technical elegance over market needs, while program managers might sequence work based on staff availability rather than strategic value. When these boundaries blur, the result is often wasted effort, missed dependencies, and a fundamental misalignment between technical output and business goals. By clearly delineating these responsibilities, leaders can prevent operational friction and ensure that every capability delivered actually reaches the customer safely and generates measurable impact.

Teaching AI models to say “I’m not sure”

Researchers at MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL) have developed a novel training technique called Reinforcement Learning with Calibration Rewards (RLCR) to address the issue of AI overconfidence. Modern large language models often deliver every response with the same level of certainty, regardless of whether they are correct or merely guessing. This dangerous trait stems from standard reinforcement learning methods that reward accuracy but fail to penalize misplaced confidence. RLCR fixes this flaw by teaching models to generate calibrated confidence scores alongside their answers. During training, the system is penalized for being confidently wrong or unnecessarily hesitant when correct. Experimental results demonstrate that RLCR can reduce calibration errors by up to 90 percent without sacrificing accuracy, even on entirely new tasks the models have never encountered. This advancement is particularly significant for high-stakes applications in medicine, law, and finance, where human users must rely on the AI’s self-assessment to determine when to seek a second opinion. By providing a reliable signal of uncertainty, RLCR transforms AI from an unshakable but potentially deceptive voice into a more trustworthy tool that explicitly communicates its own limitations, ultimately enhancing safety and reliability in complex decision-making environments.
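To make the idea of penalizing both confident errors and needless hesitation concrete, here is a minimal sketch of a calibration-aware reward. The summary above does not give the exact formula, so the Brier-style squared penalty used here is an assumption chosen for illustration, and the function name `calibration_reward` is hypothetical.

```python
def calibration_reward(is_correct: bool, confidence: float) -> float:
    """Correctness reward minus a Brier-style penalty on the stated confidence.

    Assumption for illustration: the penalty is the squared gap between the
    stated confidence and the actual outcome (1.0 if correct, 0.0 if wrong).
    """
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be in [0, 1]")
    outcome = 1.0 if is_correct else 0.0
    brier_penalty = (confidence - outcome) ** 2
    return outcome - brier_penalty


# Compare a few (correctness, confidence) combinations.
for is_correct, confidence in [(True, 1.0), (True, 0.5), (False, 0.5), (False, 1.0)]:
    reward = calibration_reward(is_correct, confidence)
    print(f"correct={is_correct!s:5} confidence={confidence:.1f} reward={reward:+.2f}")
```

Under this sketch, a wrong answer stated with full confidence scores lowest, and a correct answer stated hesitantly scores below a correct answer stated confidently, which matches the two failure modes described above.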

Are you paying an AI ‘swarm tax’? Why single agents often beat complex systems

The VentureBeat article discusses a "swarm tax" paid by enterprises that over-engineer AI systems with complex multi-agent architectures. Recent Stanford University research reveals that single-agent systems often match or even outperform multi-agent swarms when both are allocated an equivalent "thinking token budget." The perceived superiority of swarms frequently stems from higher total computation during testing rather than inherent structural advantages. This "tax" manifests as increased latency, higher costs, and greater technical complexity. A primary reason for this performance gap is the "Data Processing Inequality," where critical information is often lost or fragmented during the handoffs and summarizations required in multi-agent orchestration. In contrast, a single agent maintains a continuous context window, allowing for much more efficient information retention and reasoning. The study suggests that developers should prioritize optimizing single-agent models (using techniques like SAS-L to extend reasoning) before adopting multi-agent frameworks. Swarms remain useful only in specific scenarios, such as when a single agent’s context becomes corrupted by noisy data or when a task is naturally modular and requires parallel processing. Ultimately, the article advocates for a "single-agent first" approach, warning that unnecessary architectural bloat can lead to diminishing returns and inefficient resource utilization in enterprise AI deployments.
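The data processing inequality mentioned above has a compact formal statement. As an illustrative reading (the mapping onto agents is an assumption, not something taken from the article), let X be the full task context, Y the summary one agent hands off, and Z whatever the next agent derives from that summary alone, so that X, Y, Z form a Markov chain:

```latex
X \to Y \to Z \quad\Longrightarrow\quad I(X;Z) \le I(X;Y)
```

Here I(·;·) denotes mutual information. In words, no downstream processing of the summary can recover information about the original context that the handoff has already dropped, whereas a single agent that keeps X in its context window never passes through that bottleneck.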

Cloud tech outages: how the EU plans to bolster its digital infrastructure

The recent global outages involving Amazon Web Services in late 2025 and CrowdStrike in 2024 have underscored the extreme fragility of modern digital infrastructure, which remains heavily reliant on a small group of U.S.-based hyperscalers. These disruptions revealed that the perceived redundancy of cloud computing is often an illusion, as many organizations concentrate their primary and backup systems within the same provider's ecosystem. Consequently, the European Union is shifting its strategy from mere technical efficiency to a geopolitical pursuit of "digital sovereignty." To mitigate the risks of "digital colonialism" and the reach of the U.S. CLOUD Act, European leaders are championing the 2025 European Digital Sovereignty Declaration. This framework prioritizes the development of a federated cloud architecture, linking national nodes into a cohesive, secure network to reduce dependence on foreign monopolies. Furthermore, the EU is investing heavily in homegrown semiconductors, foundational AI models, and public digital infrastructure. By establishing a dedicated task force to monitor progress through 2026, the bloc aims to ensure that European data remains subject strictly to local jurisdiction. This comprehensive approach seeks to bolster resilience against future technical failures while securing the strategic autonomy necessary for Europe’s long-term digital and economic security.

When a Cloud Region Fails: Rethinking High Availability in a Geopolitically Unstable World

In the InfoQ article "When a Cloud Region Fails," Rohan Vardhan introduces the concept of sovereign fault domains (SFDs) to address cloud resilience within an increasingly unstable geopolitical landscape. While traditional high-availability strategies focus on technical abstractions like multi-availability zone (multi-AZ) deployments to mitigate hardware failures, Vardhan argues these are insufficient against sovereign-level disruptions. SFDs represent failure boundaries defined by legal, political, or physical jurisdictions. Recent events, such as sudden cloud provider withdrawals or infrastructure instability in conflict zones, demonstrate how geopolitical shifts can trigger correlated failures across entire regions, rendering standard multi-AZ setups ineffective. To combat these risks, architects must shift their baseline for high availability from multi-AZ to multi-region architectures. This transition requires a fundamental rethink of distributed systems, moving beyond technical redundancy to include legal and political considerations in data replication and traffic management. The article advocates for the adoption of explicit region evacuation playbooks, the definition of geopolitical recovery targets, and the expansion of chaos engineering to simulate sovereign-level losses. Ultimately, achieving true resilience in the modern world necessitates acknowledging that cloud regions are physical and political assets, not just virtualized resources, requiring intentional design to survive jurisdictional partitions.
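The difference between multi-AZ redundancy and resilience across sovereign fault domains can be sketched as a simple placement check. This is only an illustration under stated assumptions: the `Replica` type, the function names, and every region, zone, and jurisdiction label below are hypothetical and do not come from the article or from any cloud provider's API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Replica:
    """One deployed copy of a service (all field values used below are hypothetical)."""
    region: str
    zone: str
    jurisdiction: str  # legal or political boundary the region falls under


def sovereign_fault_domains(replicas: list[Replica]) -> set[str]:
    """Return the distinct jurisdictions the replicas are spread across."""
    return {r.jurisdiction for r in replicas}


def survives_jurisdiction_loss(replicas: list[Replica]) -> bool:
    """True if losing any single jurisdiction still leaves at least one replica."""
    return len(sovereign_fault_domains(replicas)) >= 2


# Three zones in one region: redundant against hardware failure only.
multi_az = [
    Replica("region-1", "a", "country-x"),
    Replica("region-1", "b", "country-x"),
    Replica("region-1", "c", "country-x"),
]

# Two regions under different jurisdictions.
multi_region = [
    Replica("region-1", "a", "country-x"),
    Replica("region-2", "a", "country-y"),
]

print(survives_jurisdiction_loss(multi_az))      # False
print(survives_jurisdiction_loss(multi_region))  # True
```

A check like this could run in a deployment pipeline as a coarse guardrail. Note that two regions inside the same jurisdiction would still fail it, which is the distinction the sovereign fault domain framing draws between multi-region and genuinely multi-jurisdiction designs.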

Inside Caller-as-a-Service Fraud: The Scam Economy Has a Hiring Process

The BleepingComputer article explores the emergence of "Caller-as-a-Service," a professionalized vishing ecosystem where cybercrime syndicates mirror the organizational structure of legitimate businesses. These industrialized fraud operations utilize a clear division of labor, employing specialized roles such as infrastructure operators, data analysts, and professional callers. Recruitment for these positions is surprisingly formal; underground job postings resemble professional LinkedIn ads, specifically seeking native English speakers with high emotional intelligence and persuasive social engineering skills. To establish credibility, recruiters often display verifiable "proof-of-profit" via large cryptocurrency balances to entice new talent. Once hired, callers are frequently subjected to real-time supervision through screen sharing to ensure strict adherence to malicious scripts and maximize victim conversion rates. Compensation models are equally sophisticated, ranging from fixed weekly salaries of $1,500 to success-based commissions of $1,000 per successful vishing hit. This service-driven model significantly lowers the barrier to entry for criminals, as it allows them to outsource the technical and interpersonal complexities of a cyberattack. Ultimately, the article emphasizes that the professionalization of the scam economy makes these threats more resilient and efficient, necessitating that defenders implement more robust identity verification and multi-factor authentication to protect individuals from these increasingly coordinated, data-driven vishing campaigns.
