Quote for the day:

"To accomplish great things, we must not only act, but also dream, not only plan, but also believe." -- Anatole France

AI agents aren’t failing. The coordination layer is failing

The article argues that the primary bottleneck in scaling AI is not the performance of individual agents but the absence of a sophisticated "coordination layer." As organizations transition to multi-agent environments, relying on direct agent-to-agent communication creates quadratic complexity that leads to race conditions, outdated context, and cascading failures. To solve these issues, the author introduces the "Event Spine" pattern, a centralized architectural foundation using ordered event streams. This approach lets agents maintain a shared state without querying one another directly, which reduces latency and redundant processing. Implementing this infrastructure reportedly cut end-to-end latency from 2.4 seconds to 180 milliseconds and reduced CPU utilization by 36 percent. The article concludes that multi-agent AI is effectively a distributed system requiring the same explicit coordination frameworks that the industry found essential for microservices. Enterprises must invest in this "spine" now to prevent agent proliferation from turning into unmanageable chaos. By focusing on the infrastructure connecting these agents, developers can ensure that their AI systems work as a cohesive unit rather than a collection of competing, inefficient silos that are prone to failure at scale.
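
To make the pattern concrete, here is a minimal in-memory sketch of an ordered event stream that agents read from instead of querying each other. It is an illustration only, not the article's implementation: the names `EventSpine`, `Agent`, `catch_up`, and `direct_links` are hypothetical, and a production system would presumably sit on a durable, partitioned log rather than a Python list.

```python
from dataclasses import dataclass, field
from typing import Any


@dataclass(frozen=True)
class Event:
    seq: int                  # position in the single global order
    topic: str
    payload: dict[str, Any]


@dataclass
class EventSpine:
    """Hypothetical append-only, ordered event log (in memory only)."""
    _log: list[Event] = field(default_factory=list)

    def publish(self, topic: str, payload: dict[str, Any]) -> Event:
        # The sequence number is the event's index, so order is total.
        event = Event(seq=len(self._log), topic=topic, payload=payload)
        self._log.append(event)
        return event

    def read_from(self, offset: int) -> list[Event]:
        # Return every event at or after the given sequence number.
        return self._log[offset:]


@dataclass
class Agent:
    name: str
    offset: int = 0           # next sequence number this agent will read
    state: dict[str, Any] = field(default_factory=dict)

    def catch_up(self, spine: EventSpine) -> None:
        # Rebuild the local view from the stream; no agent-to-agent calls.
        for event in spine.read_from(self.offset):
            self.state[event.topic] = event.payload
            self.offset = event.seq + 1


def direct_links(n: int) -> int:
    """Point-to-point links needed if n agents all talk to each other."""
    return n * (n - 1) // 2


if __name__ == "__main__":
    spine = EventSpine()
    spine.publish("order", {"id": 1, "status": "created"})
    spine.publish("order", {"id": 1, "status": "paid"})

    billing, shipping = Agent("billing"), Agent("shipping")
    billing.catch_up(spine)
    shipping.catch_up(spine)
    print(billing.state == shipping.state)
    print(direct_links(10), "direct links versus 10 spine connections")
```

Because every agent replays the same ordered log, two agents that have read up to the same offset hold the same view of the state. The `direct_links` helper shows where the quadratic growth comes from: each new agent adds a link to every existing one, whereas a spine adds only one connection per agent.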

Agents don’t know what good looks like. And that’s exactly the problem.

In this O’Reilly Radar article, Luca Mezzalira reflects on a discussion between Neal Ford and Sam Newman regarding the inherent limitations of agentic AI in software architecture. The central thesis is that while AI agents are exceptionally skilled at generating code and executing local tasks, they lack a fundamental understanding of what "good" looks like in a global architectural context. Agents typically optimize for immediate task completion, often neglecting long-term maintainability, systemic scalability, and the subtle trade-offs essential to sound design. This creates a significant risk where automated efficiency leads to architectural erosion and technical debt if left unchecked. Mezzalira argues that the solution lies not in making agents "smarter" in isolation, but in establishing robust human-led governance and automated guardrails that define and enforce quality standards. As agents handle more routine coding duties, the role of the human developer must evolve from a "T-shaped" specialist into a "Comb-shaped" professional who possesses both deep technical expertise and the broad systemic vision required to orchestrate these tools effectively. Ultimately, the article emphasizes that the true value of human engineers in the AI era is their unique ability to maintain architectural integrity and provide the contextual judgment that machines currently cannot replicate.

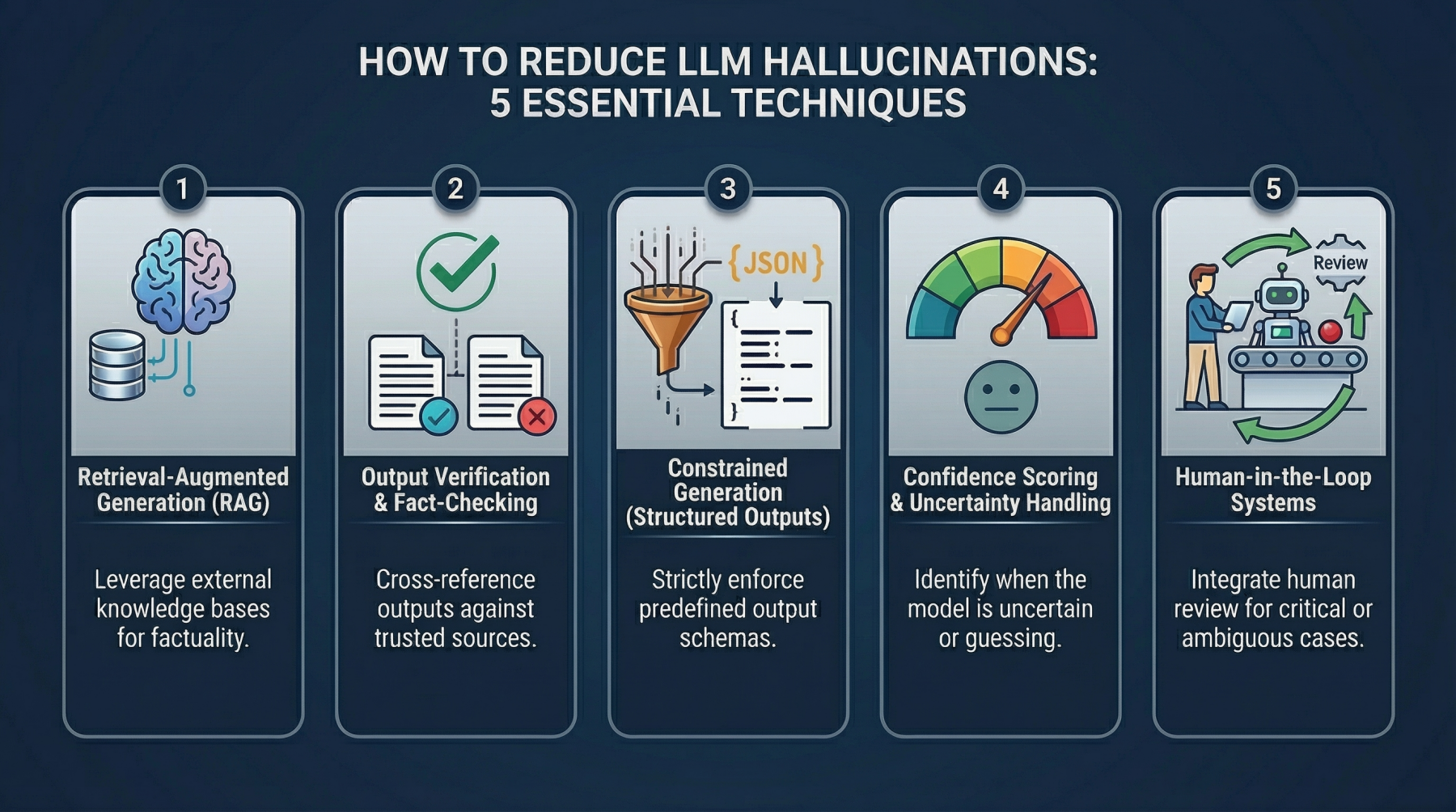

Understanding tokenization and consumption in LLMs

The article "Understanding Tokenization and Consumption in LLMs" explains the fundamental role of tokenization in how large language models (LLMs) interpret user input and calculate costs. Tokenization involves breaking text into smaller subunits, such as word fragments or punctuation, allowing models to process diverse languages and complex syntax efficiently. This granular approach is critical because LLMs generate responses iteratively, token by token, and billing is typically based on the total number of tokens in both the prompt and the resulting output. The author compares leading platforms like ChatGPT, Claude Cowork, and GitHub Copilot, noting that while they share core principles, their specific tokenization algorithms and pricing structures vary. For instance, ChatGPT uses byte pair encoding for general efficiency, whereas GitHub Copilot is optimized for programming syntax. To manage costs and improve performance, the article suggests best practices for prompt engineering, such as using concise language, avoiding redundancy, and breaking complex tasks into smaller segments. Ultimately, a deep understanding of token consumption enables professionals to optimize their AI workflows, predict expenses accurately, and select the most appropriate platform for their specific organizational needs, whether for general content generation or specialized software development.
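
The billing arithmetic can be sketched in a few lines. This is a rough illustration under stated assumptions: `rough_token_count` is a crude stand-in that counts words and punctuation marks, not a real tokenizer, and the per-million-token prices are placeholder numbers rather than any provider's actual rates. Separate input and output prices are also an assumption, not something the article specifies.

```python
import re


def rough_token_count(text: str) -> int:
    """Crude stand-in for a tokenizer: one token per word or punctuation mark.

    Real tokenizers split text into subword units, so actual counts differ.
    """
    return len(re.findall(r"\w+|[^\w\s]", text))


def estimate_cost(
    prompt_tokens: int,
    output_tokens: int,
    input_price_per_million: float,
    output_price_per_million: float,
) -> float:
    """Estimated charge for one request, billed on prompt plus output tokens."""
    total = (
        prompt_tokens * input_price_per_million
        + output_tokens * output_price_per_million
    )
    return total / 1_000_000


if __name__ == "__main__":
    verbose = "Could you please, if possible, kindly summarize this report?"
    concise = "Summarize this report."
    print(rough_token_count(verbose), rough_token_count(concise))

    # Placeholder prices: 2.00 per million input tokens, 8.00 per million output.
    print(estimate_cost(1_000, 500, 2.0, 8.0))
```

Comparing the two prompts shows why concise wording matters: the shorter request carries fewer tokens on every call, and that saving repeats across each request in a workflow.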

Data Centres Without the Compute

The article "Data Centres Without the Compute" explores a paradigm shift in data center architecture, moving away from traditional server-centric designs where compute, memory, and storage are tightly coupled. Stuart Dee argues that modern workloads, especially AI and real-time analytics, have exposed memory as a dominant constraint rather than compute. This shift is facilitated by advancements in photonics and the Innovative Optical and Wireless Network (IOWN), which dissolves physical boundaries through end-to-end optical paths. Replacing traditional electronic switching with all-optical networking significantly reduces latency and energy consumption, enabling memory disaggregation at scale. Consequently, data centers can evolve into specialized, software-defined environments where memory resides in dense, energy-efficient arrays that are accessed remotely by compute-heavy facilities. This "data-centric infrastructure" allows for dynamic resource composition across metropolitan distances, transforming the network into a memory backplane. Ultimately, the article suggests that the future of digital infrastructure lies in decoupling resources, allowing memory to be located where power and cooling are optimal while compute remains closer to users. This transition marks the end of the locality assumption, paving the way for a federated model where data centers serve as modular components within a broader optical system.

What Every Business Leader Needs to Understand About Sovereign AI

Sovereign AI is emerging as a critical strategic imperative for business leaders, transcending its role as a mere technical requirement to become a fundamental pillar of long-term resilience and competitive advantage. According to insights from Dataversity, sovereignty should be viewed as an offensive strategy rather than a defensive posture, enabling organizations to build robust compliance frameworks and mitigate significant risks such as reputational damage and legal fines. While many companies currently focus sovereignty efforts on data and infrastructure, a key shift involves extending this control to the intelligence layer, meaning the AI models themselves, where crucial decision-making occurs. A hybrid sovereignty approach is recommended, balancing internal control over sensitive assets with external partnerships to foster innovation while avoiding vendor lock-in. By 2030, the global market for sovereign AI is projected to reach $600 billion, highlighting its potential to unlock new market opportunities and scale. For leaders, treating sovereignty as a structural necessity rather than discretionary spend is essential for ensuring AI accuracy and reliability. This proactive "sovereignty-by-design" methodology ultimately transforms regulatory compliance into business superiority, allowing enterprises to navigate a complex, fragmented global landscape while maintaining absolute ownership of their most valuable digital intelligence and future innovation.

Turning Military Experience Into Cyber Advantage

The blog post "Turning Military Experience Into Cyber Advantage" by Chetan Anand explores how the discipline and operational expertise of veterans translate into a strategic asset for the cybersecurity industry. Anand argues that cybersecurity should be viewed not merely as a technical IT function, but as enterprise risk management conducted within a digital battlespace, a concept inherently familiar to military personnel. Key attributes such as risk assessment, situational awareness, and structured decision-making under pressure map directly onto roles in security operations, threat modeling, and incident response. Furthermore, the article highlights the growing demand for military leadership in Governance, Risk, and Compliance (GRC) roles, where integrity and accountability are paramount. Veterans are encouraged to overcome common misconceptions, such as the necessity of coding skills, and focus on articulating their experience in business terms rather than military jargon. By prioritizing a problem-solving mindset and leveraging mentorship programs like ISACA’s, transitioning service members can bridge the gap between their tactical background and civilian career requirements. Ultimately, the piece positions military service as a foundational training ground for the rigorous demands of modern cyber defense, provided veterans effectively translate their unique skills into organizational value and business outcomes.

The Hidden ROI of Visibility: Better Decisions, Better Behavior, Better Security

In his article for SecurityWeek, Joshua Goldfarb explores the "hidden ROI" of cybersecurity visibility, arguing that its fundamental value extends far beyond traditional compliance and auditing functions. Using a personal anecdote about how home security cameras deterred a hostile neighbor, Goldfarb illustrates that visibility serves as a powerful psychological deterrent. When users and technical teams know their actions are being recorded, they are significantly more likely to adhere to security policies and avoid risky behaviors like visiting restricted sites or installing unvetted software. Beyond behavioral changes, comprehensive visibility across network, endpoint, and application layers, including APIs and AI capabilities, fosters more collaborative, data-driven relationships between security departments and application owners. This objective approach effectively shifts internal discussions from subjective friction to actionable risk management. Furthermore, high-quality data enables more informed decision-making and precise risk assessments, both of which are critical in complex, modern hybrid-cloud environments. Although achieving total transparency is often resource-intensive, Goldfarb emphasizes that the resulting honesty, improved organizational culture, and strategic clarity provide a distinct competitive advantage. Ultimately, visibility transforms security from a reactive technical function into a proactive organizational catalyst that encourages integrity and operational excellence across the entire enterprise ecosystem.

Out of the Shadows: How CIOs Are Racing to Govern AI Tools

The rise of "shadow AI" (the unauthorized deployment of artificial intelligence tools by employees) presents a critical challenge for contemporary CIOs. Unlike traditional shadow IT, these autonomous systems frequently process sensitive data and make consequential decisions without oversight from legal or security departments. Research indicates that while over 90% of employees admit to entering corporate information into AI tools without approval, more than half of organizations still lack a formal governance framework. This gap leads to significant financial liabilities, with shadow AI breaches costing enterprises an average of $4.63 million. To combat this, CIOs are moving beyond restrictive measures to establish proactive governance playbooks. These strategies include forming cross-functional AI committees, implementing real-time discovery tools, and classifying applications into sanctioned, restricted, and forbidden categories. Furthermore, experts suggest that organizations must leverage AI to monitor AI, using automated assessment pipelines to keep pace with rapid innovation. Ultimately, the goal is to create a "frictionless" official path for AI adoption that renders the shadow path obsolete. By balancing the velocity of innovation with robust security controls, leadership can protect intellectual property while empowering the workforce to utilize these transformative technologies safely and effectively within a transparent, structured environment.
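
The three-tier classification described above could be expressed as a small policy lookup. The sketch below is purely illustrative: the tool names in `REGISTRY` are made up, and two behaviors are assumptions rather than anything the article states, namely that unreviewed tools default to forbidden and that "restricted" means usable only when no sensitive data is involved.

```python
from enum import Enum


class Tier(Enum):
    SANCTIONED = "sanctioned"
    RESTRICTED = "restricted"
    FORBIDDEN = "forbidden"


# Hypothetical registry maintained by a cross-functional AI committee.
REGISTRY: dict[str, Tier] = {
    "approved-assistant": Tier.SANCTIONED,
    "code-helper": Tier.RESTRICTED,
    "unvetted-chatbot": Tier.FORBIDDEN,
}


def classify(tool: str) -> Tier:
    # Assumption: tools nobody has reviewed yet are treated as forbidden.
    return REGISTRY.get(tool.lower(), Tier.FORBIDDEN)


def may_process(tool: str, contains_sensitive_data: bool) -> bool:
    """Decide whether a request to a tool should be allowed."""
    tier = classify(tool)
    if tier is Tier.SANCTIONED:
        return True
    if tier is Tier.RESTRICTED:
        # Assumption: restricted tools are allowed only for non-sensitive data.
        return not contains_sensitive_data
    return False


if __name__ == "__main__":
    print(may_process("approved-assistant", contains_sensitive_data=True))
    print(may_process("code-helper", contains_sensitive_data=True))
    print(may_process("brand-new-tool", contains_sensitive_data=False))
```

Keeping the registry as data rather than scattered conditionals means a discovery tool or assessment pipeline could update it without code changes.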

Smartphones as Micro Data Centers: A Creative Edge Solution?

The article "Smartphones as Micro Data Centers: A Creative Edge Solution?" by Christopher Tozzi explores the revolutionary potential of pooling the resources of billions of mobile devices to create decentralized, miniature data centers. By clustering the CPU, memory, and storage of smartphones, organizations can deploy flexible, low-cost infrastructure capable of hosting diverse workloads. This innovative approach is particularly well-suited for edge computing and AI inference, as it places processing power closer to end-users to minimize latency and enhance real-time analysis. Furthermore, repurposing discarded handsets offers significant sustainability benefits by reducing e-waste and avoiding the capital-intensive construction of traditional facilities. However, several technical hurdles remain, including software compatibility issues arising from the ARM-based architecture of mobile chips versus conventional x86 servers. Additionally, the lack of dedicated, high-capacity GPUs and the absence of mature clustering software currently limit the ability to handle heavy AI acceleration or large-scale enterprise tasks. Despite these limitations, smartphone-based micro-data centers represent a creative and efficient shift in digital infrastructure. As the demand for localized computing continues to surge, this crowdsourced model provides a viable, sustainable pathway for scaling the internet's edge while maximizing the utility of existing global hardware resources.

Why India’s AI future needs both sovereign control and heritage depth

Arun Subramaniyan, CEO of Articul8, outlines a strategic vision for India’s AI future that balances sovereign security with cultural heritage. He argues that India must develop sovereign models to safeguard critical infrastructure and national security while simultaneously building heritage models that utilize the nation’s vast linguistic and historical knowledge. This dual approach ensures both protection and global influence, serving billions across diverse markets. For enterprises, the focus must shift from generic foundation models, which often fail in high-stakes industrial contexts, to domain-specific AI trained on deep institutional knowledge. These specialized models provide the accuracy and security required for regulated sectors like energy, manufacturing, and banking. Subramaniyan identifies data fragmentation and the rapid pace of technological change as primary bottlenecks, suggesting that platform partners can help organizations absorb this complexity. Ultimately, India’s unique position, characterized by rapid infrastructure expansion and a wealth of untapped cultural data, offers a once-in-a-generation opportunity to lead in the global AI landscape. By encoding local regulatory and business contexts into AI frameworks, India can move beyond simple pilot projects to large-scale, production-ready deployments that drive real economic value while preserving its unique intellectual legacy and ensuring digital sovereignty.
