In response to current digital transformation demands, organizations are integrating emerging technologies at an unprecedented rate. Despite the technologies' numerous benefits, securing them is a challenge for technology leaders. The white paper identified more than 200 critical and emerging technologies reshaping the digital ecosystem. Beyond AI and IoT, technologies such as blockchain, biotechnology and quantum computing are rising on the hype cycle, introducing new cybersecurity risks. ... Quantum computing, while promising breakthrough computational power, presents grave cybersecurity risks. It threatens to break current encryption standards, and adversaries can collect encrypted data now in the expectation that future quantum computers will be able to decrypt it. "The threat of quantum computing underscores the need for quantum-resistant cryptographic solutions to secure our digital future," the white paper stated. ... The cybersecurity industry faces a critical shortage of skilled professionals capable of securing emerging technologies. Cybersecurity Ventures projected a shortfall of 3.5 million cybersecurity professionals by 2025, and Gartner predicted that this skills gap would cause more than 50% of significant incidents by 2025.

Do You Need a Solution or Enterprise Architect?

A Solution Architect is more like a surgeon who operates on someone to fix a problem, after which the patient returns to normal life in a short time. An Enterprise Architect is more like an internal medicine specialist who treats a patient with a chronic illness over a number of years to improve the person’s quality of life. ... Architects are most successful when they help projects succeed. Commonality of process and technology can benefit an organization. But once architects merely police projects and reject elements based on strict criteria, they lose the ability to positively influence the initiatives. Solution alignment is best achieved by working collaboratively with projects early to convince them of the advantages of various design choices. The first deliverable many architecture teams produce is what I call the “red/yellow/green list”. You’ve all seen these. Each technology classification is listed down the page, for example: server type, operating system, network software, database technology, and programming language. Three “colour” columns follow across the page. “Red” items are forbidden for new projects; although some legacy applications may still use them, they need to be phased out. “Yellow” items can be used under certain circumstances, but must be pre-approved by some kind of review committee.
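To make the red/yellow/green list concrete, here is a minimal sketch of how such a list might be encoded and checked against a project's proposed design. The classification categories come from the text; the specific technologies and their colours are hypothetical placeholders. Treating "green" as approved for general use, and treating technologies missing from the list as needing review, are assumptions, since the excerpt does not define either case.

```python
from enum import Enum


class Status(Enum):
    RED = "red"        # forbidden for new projects; legacy uses must be phased out
    YELLOW = "yellow"  # allowed only with review-committee pre-approval
    GREEN = "green"    # assumption: approved for general use


# Hypothetical entries; a real list would be maintained by the architecture team.
TECH_LIST: dict[str, dict[str, Status]] = {
    "operating system": {"Windows Server 2008": Status.RED, "RHEL 9": Status.GREEN},
    "database technology": {"PostgreSQL": Status.GREEN, "MongoDB": Status.YELLOW},
    "programming language": {"COBOL": Status.RED, "Java": Status.GREEN, "Go": Status.YELLOW},
}


def review_design(design: dict[str, str]) -> dict[str, list[str]]:
    """Sort a project's technology choices into forbidden / needs-approval / approved.

    Technologies (or whole categories) not on the list are routed to review,
    which is an assumption rather than something the list itself specifies.
    """
    findings: dict[str, list[str]] = {"forbidden": [], "needs_approval": [], "approved": []}
    for category, tech in design.items():
        status = TECH_LIST.get(category, {}).get(tech)
        if status is Status.RED:
            findings["forbidden"].append(f"{category}: {tech}")
        elif status is Status.GREEN:
            findings["approved"].append(f"{category}: {tech}")
        else:  # YELLOW or unlisted: send to the review committee
            findings["needs_approval"].append(f"{category}: {tech}")
    return findings


if __name__ == "__main__":
    proposal = {
        "database technology": "MongoDB",
        "programming language": "COBOL",
        "operating system": "RHEL 9",
    }
    print(review_design(proposal))
    # {'forbidden': ['programming language: COBOL'], 'needs_approval': ['database technology: MongoDB'], 'approved': ['operating system: RHEL 9']}
```

Routing yellow and unlisted items to a review queue, rather than rejecting them outright, leaves room for the early, collaborative conversation the excerpt favours over policing.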

DataRobot launches Enterprise AI Suite to bridge gap between AI development and business value

The agentic AI approach is designed to help organizations handle complex business queries and workflows. The system employs specialist agents that work together to solve multi-faceted business problems. This approach is particularly valuable for organizations dealing with complex data environments and multiple business systems. “You ask a question to your agentic workflow, it breaks up the questions into a set of more specific questions, and then it routes them to agents which are specialists in various different areas,” Saha explained. For instance, a business analyst’s question about revenue might be routed to multiple specialized agents, one handling SQL queries and another using Python, before the results are combined into a comprehensive response. ... “We have put together a lot of instrumentation which lets people visually understand, for example, if you have a lot of clustering of data in the vector database, you can get a spurious answer,” Saha said. “You would be able to see that, if you see your questions are landing in areas where you don’t have enough information.” This observability extends to the platform’s governance capabilities, with real-time monitoring and intervention features.
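The decompose, route and combine flow Saha describes can be illustrated with a minimal sketch. Everything in it is hypothetical: the agent functions, the keyword-based planner and the routing table are stand-ins, not DataRobot's API. In a production agentic system, the decomposition step and the specialist agents would typically be backed by LLMs and by real SQL or Python execution.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class SubQuestion:
    text: str
    specialty: str  # key of the specialist agent that should handle it


# Hypothetical specialist agents; real ones would wrap an LLM plus tools.
def sql_agent(q: str) -> str:
    return f"[SQL agent] would query the warehouse for: {q}"


def python_agent(q: str) -> str:
    return f"[Python agent] would run an analysis for: {q}"


AGENTS: dict[str, Callable[[str], str]] = {"sql": sql_agent, "python": python_agent}


def decompose(question: str) -> list[SubQuestion]:
    """Planner stand-in: split one broad question into narrower sub-questions.

    A simple keyword rule replaces what would usually be an LLM planning step.
    """
    subs = [SubQuestion(f"Fetch the figures needed to answer: {question}", "sql")]
    if any(word in question.lower() for word in ("trend", "forecast", "why")):
        subs.append(SubQuestion(f"Analyze the fetched figures for: {question}", "python"))
    return subs


def answer(question: str) -> str:
    """Route each sub-question to its specialist, then combine the partial results."""
    partials = [AGENTS[sub.specialty](sub.text) for sub in decompose(question)]
    return "\n".join(partials)


if __name__ == "__main__":
    print(answer("What is the revenue trend for Q3 by region?"))
```

Keeping the routing table separate from the planner means a new specialist (for example, one that searches documents) can be added by registering another entry in `AGENTS` and teaching the planner when to emit that specialty.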

Using AI for DevOps: What Developers and Ops Need To Know

“AI can be incredibly powerful in DevOps when it’s implemented with a clear framework that makes it easy for developers to do the right thing and hard for them to do the wrong thing,” says Durkin. “Making it easy to do the right thing starts with standardizing templates and policies to streamline workflows. Create templates and enforce policies that support easy, repeatable integration of AI tools. By establishing policies that automate security and compliance checks, AI tools can operate within these boundaries, providing valuable support without compromising standards. This approach simplifies adoption and makes it harder to skip essential steps, reinforcing best practices across teams.” ... While having a well-considered strategy in place before embracing AI and DevOps is a must, Durkin and Govrin both offered up some additional tips and advice for getting AI tools and technologies to integrate with DevOps ambitions more easily. “In enterprise environments, deploying AI applications locally can significantly improve adoption and integration,” said Govrin. “Unlike consumer apps, enterprise AI benefits greatly from self-hosted setups, where solutions like local inference, support for self-hosted models and edge inferencing play a key role. These methods keep data secure and mitigate risks associated with data transfer across public clouds.”
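Durkin's point about policies that "make it harder to skip essential steps" can be sketched as a small policy-as-code gate that rejects any pipeline definition missing a mandatory step. This is a minimal illustration under assumptions: the step names are hypothetical, and real teams would more likely express such rules in their CI system or a policy engine such as Open Policy Agent.

```python
# Hypothetical mandatory steps that every pipeline template must include.
REQUIRED_STEPS = {"secret_scan", "dependency_scan", "license_check", "unit_tests"}


def missing_required_steps(pipeline_steps: list[str]) -> set[str]:
    """Return the mandatory steps that a pipeline definition leaves out."""
    return REQUIRED_STEPS - set(pipeline_steps)


def enforce_policy(pipeline_steps: list[str]) -> None:
    """Fail fast if a pipeline, including one that adds an AI tool, skips a required step."""
    missing = missing_required_steps(pipeline_steps)
    if missing:
        raise ValueError(f"pipeline rejected; missing required steps: {sorted(missing)}")


if __name__ == "__main__":
    template = ["checkout", "secret_scan", "dependency_scan",
                "license_check", "unit_tests", "ai_code_review"]
    enforce_policy(template)  # passes: every required step is present
    try:
        enforce_policy(["checkout", "ai_code_review", "unit_tests"])
    except ValueError as err:
        print(err)
        # pipeline rejected; missing required steps: ['dependency_scan', 'license_check', 'secret_scan']
```

Because the AI step (`ai_code_review` here) is just another entry in a template that already carries the required checks, adopting it does not require anyone to remember the security and compliance steps separately.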

The CISO paradox: With great responsibility comes little or no power

The absence of command makes cybersecurity decision-making a tedious and often frustrating process for CISOs. They are expected to move fast and to anticipate and address security issues before they materialize. But without command, they’re stuck in a cycle of “selling” the importance of security investments, waiting for approvals, and relying on others to prioritize those investments. This constant need for buy-in slows response times and creates windows in which incidents can occur. In cybersecurity, where timing is everything, these delays can be costly. Beyond timing, the concept of command is critical for strategic alignment and empowerment. In organizations where the CISO lacks true command, they’re forced to operate reactively rather than proactively. ... If organizations want to truly protect themselves, they need to recognize that CISOs require true command. The most effective CISOs are those who can operate with full authority over their domain, free from constant internal roadblocks. As companies consider how best to secure their data, they should ask themselves whether they are genuinely setting their CISOs up for success. Are they empowering them with the resources, authority, and autonomy to act? Or are they merely assigning a high-stakes responsibility without the power to fulfill it?

Harnessing SaaS to elevate your digital transformation journey

While SaaS provides the infrastructure, AI is the catalyst that powers digital transformation at scale. Companies are increasingly adopting AI-driven SaaS platforms to streamline workflows, automate tasks, and make data-driven decisions. In the B2B SaaS sector, this combination is revolutionizing how businesses operate, helping them personalize customer interactions, predict outcomes, and optimize operations. ... In manufacturing, AI optimizes supply chain management, reducing waste and increasing productivity. In the finance sector, AI-driven SaaS automates risk assessment, improving decision-making and reducing operational costs. The benefits of adopting AI and SaaS are clear: enhanced customer experience, streamlined operations, and the ability to innovate faster than ever before. Companies that fail to integrate these technologies risk falling behind as competitors capitalize on these advancements to deliver superior products and services. As businesses continue to adopt SaaS and AI-driven solutions, the future of digital transformation looks promising. Companies are no longer just thinking about automating processes or improving efficiency; they are investing in technologies that will help them shape the future of their industries.

Tackling ransomware without banning ransom payments

Despite these somewhat muddied waters, the correct response to ransomware attacks is clear: paying demands should almost always be a last resort. The only exception should be where there is a risk to life. Paying because it’s easy, costs less and causes less disruption to the business is not a good enough reason, regardless of whether the business or an insurer is footing the bill. However, while a step in the right direction, totally banning ransom payments addresses only one form of attack and feels a bit like a ‘whack-a-mole’ strategy. It may slow the rise in attacks for a short while, but attackers will inevitably switch tactics, perhaps to business email compromise, or to something we’ve not even heard of yet. So, what else can be done to slow the rise in ransomware attacks? We can consider a few options, such as shutting down brokers that trade in vulnerabilities and regulating cryptocurrency transactions. To take the latter as an example, most cybercrime is monetized through cryptocurrency, so rather than simply banning payments, it could be a better option to regulate the crypto industry and the flow of money. Alongside this kind of regulatory change, governments could also consider moving the decision of whether or not to pay to an independent body.

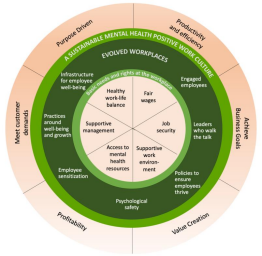

CISOs in 2025: Balancing security, compliance, and accountability

The scope of the CISO role has expanded significantly over the past 10 to 15 years, moving from mainly technical oversight to strategic leadership, risk management, and regulatory compliance. The constant pressure to prevent breaches and manage incidents can lead to high stress and burnout, making the role less appealing. The broader scope also means that modern CISOs must possess a blend of technical expertise, strategic thinking, and strong interpersonal skills. The requirement for such a diverse skill set can limit the pool of qualified candidates, as not all cybersecurity professionals have the necessary combination of skills. ... CISOs will need to be able to communicate complex cybersecurity issues effectively to non-technical board members and executives. This involves translating technical jargon into business language and clearly articulating the impact of cybersecurity risks on the organization’s overall business strategy. And as cybersecurity becomes integral to business strategy, CISOs must be able to think beyond immediate threats and focus on long-term strategic planning. This includes understanding how cybersecurity initiatives align with business goals and contribute to competitive advantage.

Emergence of Preemptive Cyber Defense: The Key to Defusing Sophisticated Attacks

The frequency of attacks is only part of the problem. Perhaps the biggest concern is the sophistication of incidents. Right now, cybercriminals are using everything from AI and machine learning to polymorphic malware, coupled with sophisticated psychological tactics that exploit breaking world events and geopolitical tension. ... The clear limitations of these reactive systems have many businesses looking to shift away from the “one-size-fits-all” approach to more dynamic options. ... With redundancy, security, and resiliency in mind, many companies are following the lead of government agencies and diversifying their cybersecurity investments across multiple providers. This includes the option of a preemptive cyber defense solution, which, rather than relying on a single offering, blends three into a triad that addresses the complexities of modern cybersecurity challenges. ... The preemptive cyber defense triad offers businesses the ultimate protection: a security ecosystem where the attack surface is constantly changing (automated moving target defense, AMTD), the security controls are always optimized (automated security control assessment, ASCA), and the overall threat exposure is continuously managed and minimized (continuous threat exposure management, CTEM).

Insurance Firm Introduces Liability Coverage for CISOs

“CISOs are the front line of defense against cyber threats, yet their role may leave them exposed to personal liabilities – particularly in light of the Securities and Exchange Commission’s (SEC) new cyber disclosure rules,” Nick Economidis, senior vice president of eRisk at Crum and Forster, said in a statement. “Our CISO Professional Liability Insurance is designed to bridge that gap, providing an essential safety net by offering CISOs the protection they need to perform their jobs with confidence.” ... The new insurance program from the Morristown, New Jersey-based insurer comes in the wake of a federal judge’s dismissal of most of the charges against software maker SolarWinds and its CISO, Tim Brown. The charges were brought in connection with the massive 2020 software supply chain attack by a threat group backed by Russia’s foreign intelligence services. ... “As personal liability risks for CISOs continue to evolve, the availability and scope of D&O insurance will remain a critical factor in recruiting and retaining top cybersecurity talent,” Fehling wrote. “Companies that offer robust insurance protection may gain a competitive advantage in the tight market for skilled security leaders.”

Quote for the day:

"If you want to achieve excellence, you can get there today. As of this second, quit doing less-than-excellent work." -- Thomas J. Watson