Quote for the day:

"Inspired leaders move a business beyond problems into opportunities." -- Dr. Abraham Zaleznik

🎧 Listen to this digest on YouTube Music

▶ Play Audio Digest

Duration: 23 mins • Perfect for listening on the go.

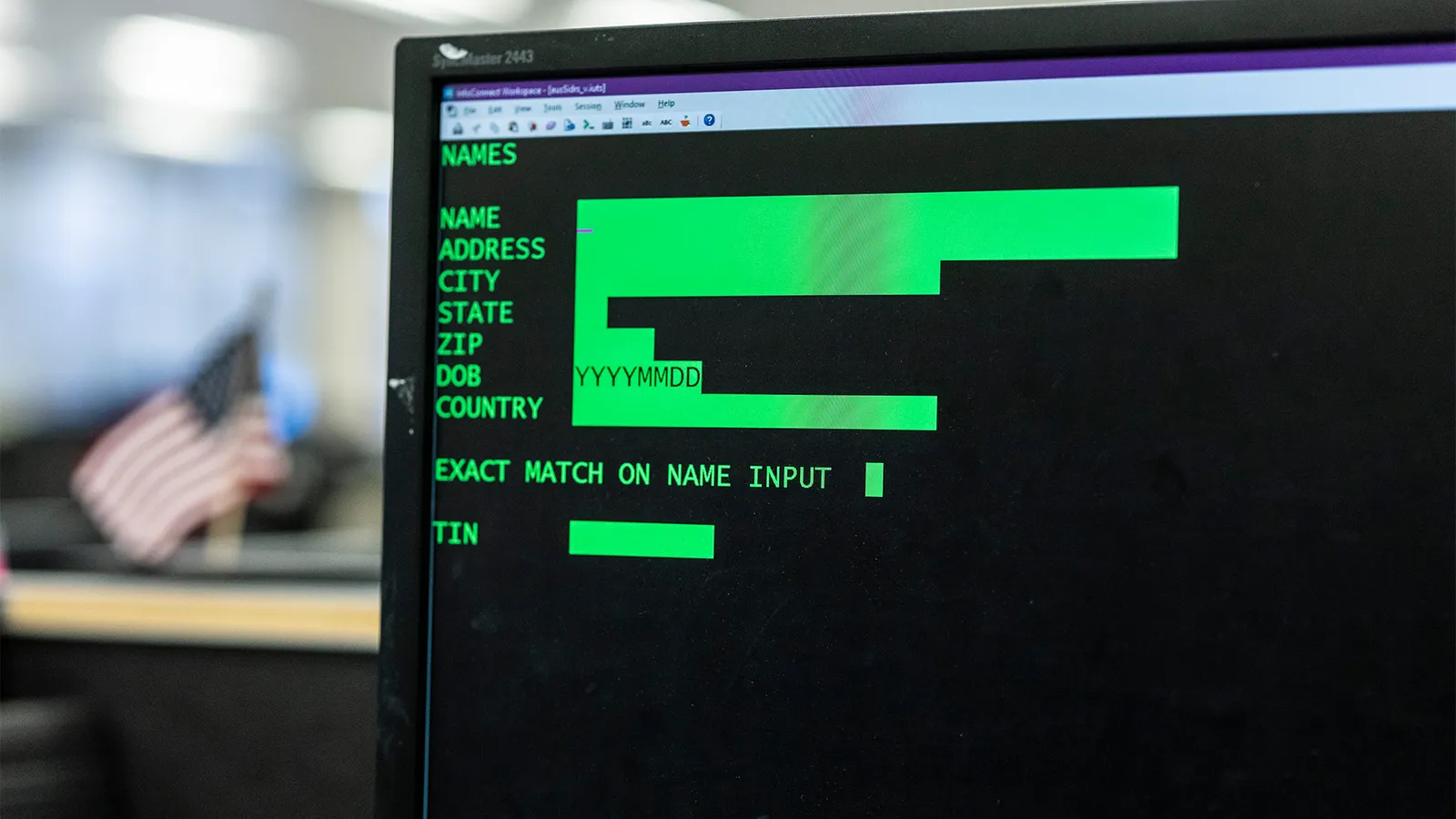

Why many enterprises struggle with outdated digital systems & how to fix them

The article on Express Computer, "Why many enterprises struggle with outdated digital systems & how to fix them," explores the pervasive issue of legacy technical debt. Many organizations remain tethered to aging infrastructure that stifles innovation and hampers agility. The struggle often stems from the prohibitive costs of replacement, the immense complexity of migrating mission-critical processes, and a fundamental fear of business disruption. Governance layers and siloed ownership further exacerbate these challenges, creating compounding "enterprise debt" across processes, data, and talent. To address these bottlenecks, the author advocates for a strategic shift toward a product mindset and incremental modernization instead of high-risk, wholesale replacements. Recommended fixes include mapping system dependencies, quantifying inefficiencies, and following a clear roadmap that progresses from stabilization to systematic optimization. By decoupling tightly integrated components and establishing clear ownership, enterprises can transform their brittle legacy systems into scalable, resilient assets. Fostering a culture of continuous improvement and aligning digital transformation with core business objectives are equally vital for survival. Ultimately, the piece emphasizes that overcoming outdated digital systems is a strategic necessity in a fast-paced market, requiring a balanced approach to technical remediation and organizational change to ensure long-term competitiveness.

COBOL developers will always be needed, even as AI takes the lead on modernization projects

The article from ITPro explores the enduring necessity of COBOL developers amidst the rise of artificial intelligence in legacy modernization projects. While AI is increasingly being marketed as a "silver bullet" for converting ancient COBOL codebases into modern languages like Java, industry experts argue that these digital transformations cannot succeed without human domain expertise. COBOL remains the backbone of global financial and administrative systems, housing decades of intricate business logic that AI often fails to interpret accurately. The piece emphasizes that while generative AI can significantly accelerate code translation and documentation, it lacks the contextual understanding required to define what a successful transformation actually looks like. Consequently, veteran developers are essential for overseeing AI-driven migrations, identifying potential risks, and ensuring that the logic preserved in the legacy system is correctly replicated in the new environment. Rather than replacing the workforce, AI acts as a collaborative tool that shifts the developer's role from manual coding to strategic orchestration. Ultimately, the survival of critical infrastructure depends on a hybrid approach that combines the speed of machine learning with the deep-seated knowledge of COBOL specialists, proving that legacy expertise is more valuable than ever in the modern era.

The CTO is dead. Long live the CTO

In the article "The CTO is dead. Long live the CTO" on CIO.com, Marios Fakiolas argues that the traditional role of the Chief Technology Officer as a technical gatekeeper and "human compiler" has become obsolete due to the rise of advanced AI. Modern Large Language Models can now design complex system architectures in minutes, outperforming humans in handling multidimensional constraints and technical interdependencies. Consequently, the new era demands a "multiplier" who shifts focus from providing technical answers to architecting systems that enable continuous organizational intelligence. Today’s CTO is measured not by architectural purity, but by tangible business outcomes such as gross margin, ROI, and operational velocity. This evolution requires leaders to move beyond their "AI comfort zone" of fancy demos and instead tackle difficult structural challenges like cost optimization and team restructuring. The author emphasizes that the modern leader must lead from the front, ruthlessly killing legacy "darlings" and designing for impermanence rather than static stability. Ultimately, the successful CTO must transition from being a bottleneck to becoming an orchestrator of AI agents and human expertise, ensuring that the entire organization can pivot rapidly without trauma. By embracing this proactive mindset, technology leaders can transcend the gatekeeping era and drive meaningful innovation in a fierce, AI-driven market.

When insider risk is a wellbeing issue, not just a disciplinary one

In the article "When insider risk is a wellbeing issue, not just a disciplinary one" on Security Boulevard, Katie Barnett argues for a paradigm shift in how organizations manage insider threats. Moving beyond traditional framing, which often focuses on malicious intent and punitive disciplinary measures, the author highlights that many security incidents are actually the byproduct of employee stress, fatigue, and disengagement. In a modern work environment characterized by digital isolation and economic uncertainty, personal strains such as financial pressure or burnout can erode professional judgment, making individuals more susceptible to manipulation or unintentional policy violations. The piece emphasizes that relying solely on technical controls and monitoring is insufficient; these tools do not address the underlying human factors that lead to risk. Instead, Barnett advocates for a proactive approach where wellbeing is treated as a core pillar of organizational resilience. This involves training managers to recognize early behavioral warning signs, fostering a supportive culture where staff feel safe raising concerns, and creating interdepartmental cooperation between HR and security teams. Ultimately, the article posits that by integrating support and psychological safety into the security strategy, organizations can prevent incidents before they escalate, strengthening their overall security posture through empathy rather than just compliance.

What it takes to win that CSO role

In the CSO Online article "What it takes to win that CSO role," David Weldon explores the transformation of the Chief Security Officer position into a high-stakes C-suite role requiring board-level accountability. No longer a back-office function, the modern CSO operates at the critical intersection of technology, regulatory exposure, revenue continuity, and brand trust. Achieving success in this position demands a shift from being a "cost center" to a "trust center," where security is positioned as a strategic business enabler that supports revenue growth rather than just a preventative measure. Key requirements include deep expertise in identity and access management and a sophisticated understanding of emerging threats like shadow AI, data poisoning, and model risk. Beyond technical prowess, financial acumen is non-negotiable; aspiring CSOs must translate security investments into business value, such as reduced insurance premiums or contractual leverage. Communication is paramount, as the role involves constant negotiation and the ability to translate complex risks for non-technical stakeholders. Ultimately, winning the role requires aligning accountability with authority and demonstrating the operating depth to maintain business resilience during sustained outages. By evolving from a "no" person to a "how" person, successful CSOs ensure that security becomes a foundational pillar of organizational success and customer confidence.

Human-Centered AI Is Becoming A Leadership Imperative

In his Forbes article, "Human-Centered AI Is Becoming A Leadership Imperative," Rhett Power argues that while artificial intelligence offers unprecedented industrial opportunities, its successful implementation depends entirely on a shift from technical obsession to human-centric leadership. Power contends that unchecked AI deployment often fails because it ignores the social and cognitive arrangements necessary for technology to thrive. To bridge the widening gap between technological promise and actual business value, leaders must adopt three foundational principles: prioritizing desired business outcomes over specific tools, evolving training to support role-specific enablement, and treating human-centered design as a core competitive advantage. Power identifies a new leadership paradigm where executives must serve as visionary guides who align AI with human values, ethical guardians who ensure transparency and bias mitigation, and human advocates who prioritize employee experience. By focusing on augmenting rather than replacing human expertise, organizations can transform AI into a seamless collaborative partner that drives long-term resilience and innovation. Ultimately, the article emphasizes that the true value of AI lies in its ability to extend the reach of human judgment, making the integration of empathy and ethical oversight a non-negotiable requirement for modern executive accountability in a rapidly evolving digital landscape.

Employee Experience 2.0: AI as the Performance Engine of the Work Operating System

In the article "Employee Experience 2.0: AI as the Performance Engine of the Work Operating System," Jeff Corbin outlines an essential evolution in workplace management. While the first version of the Employee Experience (EX 1.0) focused on cross-departmental alignment between HR, IT, and Communications, the author argues that human capacity alone is no longer sufficient to manage the modern digital workspace. EX 2.0 introduces artificial intelligence as a "performance layer" that transforms the work operating system from a static framework into a self-optimizing engine. AI addresses critical challenges such as "digital friction" (where employees waste nearly 30% of their day searching through disconnected systems like SharePoint and ServiceNow) by acting as an automated editor for content governance. Beyond cleaning up data, AI-driven EX 2.0 enables hyper-personalization of communications and provides predictive analytics that can identify turnover risks or workflow bottlenecks before they escalate. By integrating AI as a core architectural component, organizations can move beyond manual coordination to create a frictionless environment that boosts engagement and productivity. Ultimately, the piece calls for leaders to upgrade their governance models, positioning AI not just as a tool, but as a collaborative partner that ensures the employee experience remains agile and effective in a technology-driven era.

The Next Era of UX and Analytics, and Merging Conversational AI with Design-to-Code

The article "The Transformation of Software Development: Smarter UI Components, the Next Era of UX and Analytics" explores the profound shift from static, reactive user interfaces to proactive, intelligent systems. Modern software development is evolving beyond standard component libraries toward "smarter" UI elements that leverage embedded analytics and machine learning to adapt to user behavior in real-time. This transformation allows digital interfaces to anticipate user needs, personalize layouts dynamically, and optimize complex workflows without manual intervention. By integrating sophisticated telemetry directly into front-end components, developers gain granular, actionable insights into performance and engagement, effectively bridging the gap between user experience and technical execution. This evolution significantly impacts the modern DevOps lifecycle, as development teams move from building isolated features to orchestrating continuous learning environments. The article further highlights that these intelligent components reduce the cognitive load for end-users by surfacing relevant information and simplifying intricate navigations. Ultimately, the synergy between advanced data analytics and front-end engineering is setting a new industry standard for digital excellence, where personalization and efficiency are core to the process. Organizations that embrace this era of "smarter" components will deliver highly tailored experiences that drive superior retention and user satisfaction in an increasingly competitive market.

Certificate lifespans are shrinking and most organizations aren’t ready

The article "Certificate lifespans are shrinking and most organizations aren't ready," featured on Help Net Security, outlines the critical challenges businesses face as TLS certificate validity periods compress from one year down to 47 days. John Murray of GlobalSign emphasizes that this rapid shift, driven by browser requirements, necessitates a complete overhaul of traditional manual certificate management. To avoid operational disruptions and outages, organizations must prioritize "discovery" as the foundational step, utilizing tools like GlobalSign's Atlas or LifeCycle X to inventory every certificate and platform. This proactive approach is not only vital for managing shorter lifecycles but also serves as essential preparation for the eventual migration to post-quantum cryptography. Murray suggests that manual spreadsheets are no longer sustainable; instead, businesses should adopt automation protocols like ACME and shift toward flexible, SAN-based licensing models to remove procurement friction. While larger enterprises may have dedicated PKI teams, mid-market and smaller organizations are at a higher risk of being caught off guard. By establishing automated renewal pipelines and closing the specialized knowledge gap in PKI expertise, companies can build a resilient security posture. Ultimately, the window for preparation is closing, and integrating automated lifecycle management is now a strategic imperative rather than a future luxury.
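
As a rough illustration of why short lifespans push teams toward automated renewal, the sketch below flags inventory entries that have used up a chosen share of their validity period. Everything in it is an assumption made for illustration and does not come from the article or any GlobalSign product: the `CertRecord` type, the function names, the example hostnames, and the two-thirds renewal threshold are all hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class CertRecord:
    """One row of a hypothetical certificate inventory."""
    hostname: str
    not_before: datetime
    not_after: datetime


def renewal_due(cert: CertRecord, now: datetime, fraction: float = 2 / 3) -> bool:
    """Return True once `fraction` of the validity period has elapsed.

    The two-thirds default is an illustrative assumption, not a mandated value.
    """
    lifetime = cert.not_after - cert.not_before
    return now >= cert.not_before + lifetime * fraction


def due_for_renewal(inventory, now, fraction=2 / 3):
    """List the certificates that should be renewed, soonest expiry first."""
    due = [c for c in inventory if renewal_due(c, now, fraction)]
    return sorted(due, key=lambda c: c.not_after)


if __name__ == "__main__":
    issued = datetime(2030, 1, 1, tzinfo=timezone.utc)
    inventory = [
        CertRecord("app.example.test", issued, issued + timedelta(days=47)),
        CertRecord("api.example.test", issued, issued + timedelta(days=365)),
    ]
    today = issued + timedelta(days=35)
    for cert in due_for_renewal(inventory, today):
        print(f"renew {cert.hostname} (expires {cert.not_after:%Y-%m-%d})")
```

With a 47-day certificate the renewal point under this assumed threshold arrives roughly a month after issuance, so a check like this would need to run on a schedule and hand off to an automated issuance step rather than to a person.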

Agoda CTO on why AI still needs human oversight

In the Tech Wire Asia article, Agoda’s Chief Technology Officer, Idan Zalzberg, discusses the essential role of human oversight in an era dominated by artificial intelligence. While AI tools have significantly accelerated developer workflows and boosted productivity (with early experiments at Agoda showing a 27% uplift), Zalzberg emphasizes that these technologies remain supplementary. The primary challenge lies in the inherent unpredictability and non-deterministic nature of generative AI, which differs from traditional software by producing inconsistent outputs. Consequently, Agoda maintains a strict policy where human engineers remain fully accountable for all code, regardless of its origin. Quality control remains rigorous, utilizing the same static analysis and automated testing frameworks applied to human-written scripts. Zalzberg notes that the evolution of the engineering role shifts focus toward critical thinking, strategic decision-making, and "evaluation," a statistical method for assessing AI performance. Beyond technical management, the article highlights how cultural attitudes toward risk influence AI adoption rates across different regions. Ultimately, Zalzberg argues that AI maturity is defined by a balanced approach: leveraging the speed of automation while ensuring that sensitive decisions, such as pricing or critical architecture, are governed by human judgment and a centralized gateway to manage security and costs effectively.
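
One way to picture "evaluation" of a non-deterministic system is to score many sampled outputs against a check and report a pass rate with an uncertainty range instead of a single pass/fail. The sketch below is only an assumption about what such a measurement could look like, not Agoda's method: the function names, the choice of a Wilson score interval, and the toy check are hypothetical.

```python
import math
from typing import Callable, Iterable, Tuple


def pass_rate_interval(passes: int, trials: int, z: float = 1.96) -> Tuple[float, float]:
    """Wilson score interval for a pass rate (z = 1.96 gives roughly 95%)."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    p = passes / trials
    denom = 1 + z * z / trials
    centre = (p + z * z / (2 * trials)) / denom
    half = z * math.sqrt(p * (1 - p) / trials + z * z / (4 * trials * trials)) / denom
    return centre - half, centre + half


def evaluate(outputs: Iterable[str], check: Callable[[str], bool]) -> Tuple[int, int]:
    """Count how many sampled outputs satisfy `check`; returns (passes, trials)."""
    results = [bool(check(o)) for o in outputs]
    return sum(results), len(results)


if __name__ == "__main__":
    # Toy stand-in for repeated samples from a generative model.
    samples = ["return a + b", "return a - b", "return a + b", "return b + a"]
    passes, trials = evaluate(samples, lambda s: "+" in s)
    low, high = pass_rate_interval(passes, trials)
    print(f"{passes}/{trials} passed, interval {low:.2f} to {high:.2f}")
```

The interval widens when only a few samples are scored, which is a reminder that a handful of good-looking outputs says little about how the system behaves over many runs.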
