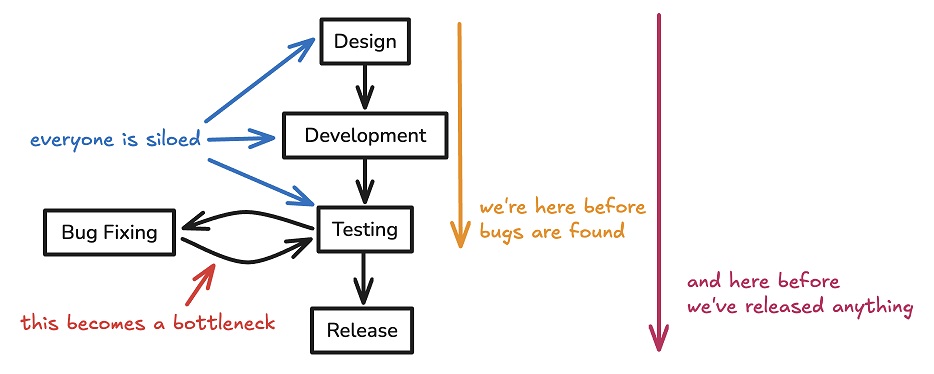

The hidden cost of speed

The software development engine within a company is like the power grid: it’s a given that it works, and there are no celebrations or accolades for keeping the lights on. When it fails or goes down, however, everyone’s upset, and what’s left is assigning blame and determining culpability. Unfortunately, in many industries, the responsible development and application of software is not considered until there’s a problem. In an ecosystem without insight into, or intuition about, how difficult the workload is for various projects or positions, there is no “working well” for a developer. The black-and-white reality is simply “Working” or “Not working, what the hell is going on, do we need to fire them, why is everything so slow lately?” This can be incredibly frustrating for developers. In my own experience, the person in the worst position is the developer brought in to clean up another developer’s mess. It’s now your responsibility not only to convince management that they need to slow down to give you time to fix things (which will stall sales), but also to architect everything, orchestrate the rollout, and coordinate with sales goals and marketing.

Tracing The Destructive Path of Ransomware's Evolution

Contemporary attackers carefully select high-value organizations and infrastructure to cripple until substantial ransoms are paid, frequently upwards of seven figures for large corporations, hospitals, pipelines, and municipalities. Present-day ransomware groups’ techniques reflect a chilling professionalization of tactics. They leverage military-grade encryption, identity-hiding cryptocurrencies, data-stealing side efforts, and penetration testing of victims before attacks to determine maximum tolerances. Hackers often gain initial entry by purchasing access to systems from underground brokers, then deploy multipart extortion schemes, including threats of distributed denial-of-service (DDoS) attacks if demands aren’t promptly met. Ransomware perpetrators also tap advances such as artificial intelligence (AI) to accelerate attacks through malicious code generation, underground dark web communities to coordinate schemes, and initial access markets to reduce overhead. ... Ransomware groups continue to innovate their attack methods. Supply chain attacks have become increasingly common: by compromising a single software supplier, attackers can access the networks of thousands of downstream customers.

Zero-Touch Provisioning Simplifies and Augments State and Local Networks

“With zero-touch provisioning unlocking greater time efficiencies, these agencies can more optimally serve the public,” he says. “For example, research shows that shaving mere seconds off emergency response calls yields more lives saved.” Government agencies can also reach wider audiences and increase constituent trust by delivering crucial food and mobile healthcare services faster. Even agencies with strong budgets can benefit from more efficient spending thanks to zero-trust networking, DePreta adds. “By eliminating the need for manual intervention, government agencies can optimize budgets to better serve their communities and become smarter in the way they deliver services. From public services such as mobile healthcare clinics to public safety activities such as emergency response and disaster relief, ZTP enables government agencies to do more with less,” he says. ... “You can take a couple of devices and ship them to a branch, and someone who is not necessarily a technical expert in that branch can unbox them and plug them in. You are then up and running right away,” DeBacker says.
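The unbox-and-plug-in workflow can be sketched as a device that, on first boot with no configuration, "calls home" with its serial number and applies whatever configuration the provisioning service returns for it. This is a minimal in-process simulation under stated assumptions: the `INVENTORY` table, `CONFIG_TEMPLATE`, `provision()` function, `Device` class, and serial numbers are all hypothetical, and real ZTP implementations differ by vendor in how the device discovers and authenticates to the provisioning server.

```python
"""Hypothetical zero-touch provisioning flow, simulated in one process.

INVENTORY, CONFIG_TEMPLATE, provision() and the serials are illustrative
assumptions, not any vendor's real ZTP protocol or API.
"""
from dataclasses import dataclass
from string import Template

# Provisioning-server side: devices are pre-registered by serial number
# before they ship, so nobody at the branch has to type a configuration.
INVENTORY = {
    "SN-1001": {"hostname": "branch-12-rtr", "site": "Branch-12", "vlan": "20"},
    "SN-1002": {"hostname": "branch-12-sw", "site": "Branch-12", "vlan": "20"},
}

CONFIG_TEMPLATE = Template(
    "hostname $hostname\n"
    "description $site\n"
    "vlan $vlan\n"
)


def provision(serial: str) -> str:
    """Return the rendered config for a registered device, or raise KeyError."""
    try:
        params = INVENTORY[serial]
    except KeyError:
        raise KeyError(f"unknown device {serial!r}; refusing to provision") from None
    return CONFIG_TEMPLATE.substitute(params)


@dataclass
class Device:
    serial: str
    running_config: str = ""
    online: bool = False

    def power_on(self) -> None:
        # With no local config, the device "calls home" using its serial
        # number and applies the configuration it receives.
        if not self.running_config:
            self.running_config = provision(self.serial)
        self.online = True


if __name__ == "__main__":
    router = Device("SN-1001")
    router.power_on()  # the only on-site step: unbox and plug in
    print(router.running_config)
```

Keying everything on a pre-registered serial number is what lets a non-expert do the on-site step; an unknown device is refused rather than given a default configuration.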

Why employee ‘will’ can make or break transformations

Leaders who focus on making work more meaningful and expressing their appreciation inspire and motivate employees. Previous McKinsey research shows that executives at organizations that invested time and effort in changing employee mindsets from the start were four times more likely than those at organizations that didn’t to say their change programs were successful. Indeed, employees notice when their bosses don’t change their own behaviors to adapt to the goals of transformation. ... The best ideas for how to implement transformation initiatives may come from frontline employees who are closest to the customer. Organizations that encourage employees to pursue innovation and continuous improvement see a higher share of employees who own initiatives or reach milestones during transformations. ... Once leaders have elevated a core group of employees to own initiatives or milestones, they should turn to empowering a broader group to serve as role models who can activate others. These change leaders (influencers, managers, and supervisors) play a visible role in shaping and amplifying the behaviors that enhance organizational performance while counteracting behaviors that get in the way of success.

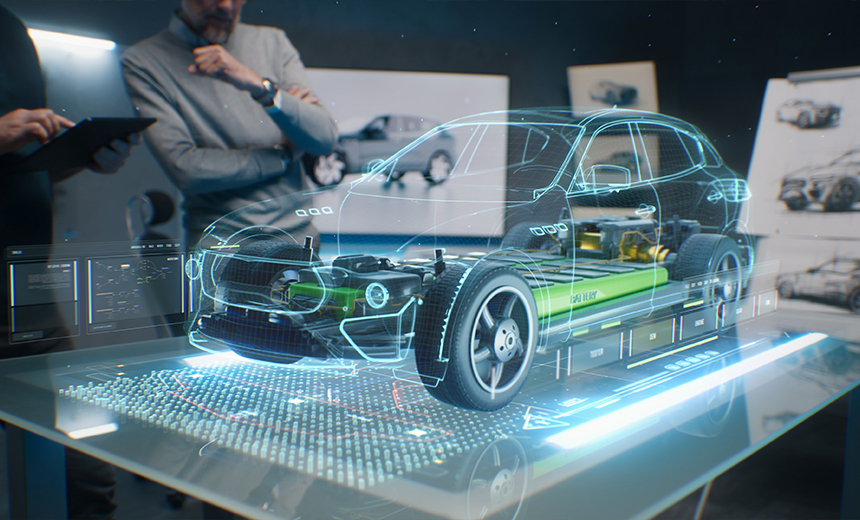

Deploying digital twins: 7 challenges businesses can face and how to navigate them

An organization adopting digital twins needs to be well-networked. "The biggest roadblock to digital systems is connectivity, at the network and human levels," Thierry Klein, president of Nokia Bell Labs Solutions Research, told ZDNET. "Digital twins are most effective when multiple digital twins are integrated, but this requires collaboration among stakeholders, a robust digital network, and systems that can be connected to the digital twin." ... The ability to represent physical environments in real time also presents challenges to digital twin environments. "With digital twins, you're generally relying on your model to run parallel with some real-life physical system so you can understand certain effects that might be impacting the system," Naveen Rao, vice president of AI for Databricks, told ZDNET. ... The lack of open, interoperable data standards presents another significant roadblock. "Antiquated technology, legacy proprietary data formats, and analog processes create silos of 'dark data,' or data that's inaccessible to teams across the asset lifecycle," Shelly Nooner, vice president of innovation and platform for Trimble, told ZDNET.
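The idea of a model that runs "parallel with some real-life physical system" can be sketched as a loop: feed the twin the same input the real system received, compare its prediction with the sensor reading, and flag divergence. This is a toy illustration only; the first-order cooling model, the `ThermalTwin` class, its parameters, and the readings are all assumptions, not taken from any of the companies quoted above.

```python
"""Minimal digital-twin sketch: a model that runs alongside a physical system.

The first-order cooling model, its parameters and the readings below are
illustrative assumptions.
"""
from dataclasses import dataclass


@dataclass
class ThermalTwin:
    temp: float             # twin's current estimate of the tank temperature (deg C)
    ambient: float = 20.0   # assumed ambient temperature (deg C)
    k: float = 0.1          # assumed cooling rate per time step
    tolerance: float = 2.0  # divergence (deg C) treated as an anomaly

    def step(self, heater_power: float) -> float:
        """Advance the model one time step using the input the real system got."""
        self.temp += -self.k * (self.temp - self.ambient) + heater_power
        return self.temp

    def compare(self, measured: float) -> bool:
        """Return True if the sensor diverges from the model, then resync to it."""
        diverged = abs(measured - self.temp) > self.tolerance
        self.temp = measured  # re-anchor the model to reality for the next step
        return diverged


if __name__ == "__main__":
    twin = ThermalTwin(temp=20.0)
    # (heater input, sensor reading) pairs; the last reading is deliberately off.
    readings = [(1.0, 21.0), (1.0, 21.9), (1.0, 27.5)]
    for power, measured in readings:
        predicted = twin.step(power)
        if twin.compare(measured):
            print(f"divergence: model {predicted:.1f} vs sensor {measured:.1f}")
```

Resyncing to each measurement keeps small model errors from accumulating; the real-time challenge the article raises is that this loop is only useful if readings arrive as fast as the physical system changes.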

Why CEOs and Corporate Boards Can’t Afford to Get AI Governance Wrong

The first step in preparing for safe and successful AI adoption is establishing the necessary C-suite governance structures. This needs to be a point of urgency, as far more advanced and powerful AI capabilities loom on the horizon, including artificial general intelligence (AGI), in which AI may be able to perform human cognitive tasks better than the smartest human being. BCG published a leadership report earlier this year entitled “Every C-Suite Member Is Now a Chief AI Officer.” ... Corporate leadership and boards must determine how best to manage the risks and opportunities presented by AI to serve their customers and protect their stakeholders. To begin with, they must identify where management responsibility should sit and how these responsibilities should be structured. BCG’s report states that from the CEO on down, there needs to be, at minimum, “a basic understanding of GenAI, particularly with respect to security and privacy risks,” adding that business leaders “must have confidence that all decisions strike the right balance between risk and business benefit.”

Get ready for a tumultuous era of GPU cost volatility

Demand is almost certain to increase as companies continue to build AI at a rapid pace. Investment firm Mizuho has said the total market for GPUs could grow tenfold over the next five years to more than $400 billion, as businesses rush to deploy new AI applications. Supply depends on several factors that are hard to predict. They include manufacturing capacity, which is costly to scale, as well as geopolitical considerations: many GPUs are manufactured in Taiwan, whose continued independence is threatened by China. Supplies have already been scarce, with some companies reportedly waiting six months to get their hands on Nvidia’s powerful H100 chips. As businesses become more dependent on GPUs to power AI applications, these dynamics mean that they will need to get to grips with managing variable costs. ... To lock in costs, more companies may choose to manage their own GPU servers rather than renting them from cloud providers. This creates additional overhead but provides greater control and can lead to lower costs in the longer term. Companies may also buy up GPUs defensively: even if they don’t know how they’ll use them yet, these defensive contracts can ensure they’ll have access to GPUs for future needs, and that their competitors won’t.
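As a rough back-of-envelope reading of Mizuho's projection, assuming the tenfold growth compounds evenly over the five years (an assumption; the forecast does not specify a growth path), let M_0 be today's GPU market, M_5 the market in five years, and g the annual growth rate:

```latex
\begin{aligned}
M_5 &= 10\,M_0 > \$400\ \text{billion} &&\Rightarrow\quad M_0 > \$40\ \text{billion} \\
(1+g)^5 &= 10 &&\Rightarrow\quad g = 10^{1/5} - 1 \approx 0.585
\end{aligned}
```

That corresponds to roughly 58.5% compound growth per year, sustained for five years.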

Optimizing Continuous Deployment at Uber: Automating Microservices in Large Monorepos


The newly designed system, named Up CD, was built to improve automation and safety. It is tightly integrated with Uber's internal cloud platform and observability tools, ensuring that deployments follow a standardized and repeatable process by default. The new system prioritized simplicity and transparency, especially in managing monorepos. One key improvement was optimizing deployments by determining which services were affected by each commit, rather than deploying every service with every code change. This reduced unnecessary builds and gave engineers more clarity over the changes affecting their services. ... Up introduced a unified commit flow for all services, ensuring that each service progressed through a series of deployment stages, each with its own safety checks. These conditions included time delays, deployment windows, and service alerts, ensuring deployments were triggered only when safe. Each stage operated independently, allowing flexibility in customizing deployment flows while maintaining safety. This new approach reduced manual errors and provided a more structured deployment experience.
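The two mechanisms described for Up CD, deploying only the services a commit affects and gating each stage on safety conditions, can be sketched as follows. This is not Uber's implementation: the `SERVICE_DEPS` map, service names, `affected_services()`, the `Stage` class, and the thresholds are hypothetical. Mapping services to path prefixes is a simplifying assumption; real monorepo tooling usually derives affected targets from the build graph.

```python
"""Sketch of affected-service detection and gated deployment stages.

SERVICE_DEPS, Stage and all thresholds are hypothetical illustrations.
"""
from dataclasses import dataclass
from datetime import datetime, timedelta

# Hypothetical monorepo layout: each service depends on these path prefixes.
SERVICE_DEPS = {
    "rides-api": ["services/rides/", "libs/geo/"],
    "payments": ["services/payments/", "libs/money/"],
    "eats-api": ["services/eats/", "libs/geo/"],
}


def affected_services(changed_files: list[str]) -> set[str]:
    """Return services whose dependency paths contain any changed file."""
    return {
        svc
        for svc, prefixes in SERVICE_DEPS.items()
        if any(f.startswith(p) for f in changed_files for p in prefixes)
    }


@dataclass
class Stage:
    name: str
    min_soak: timedelta      # time delay: how long the previous stage must have run
    window: tuple[int, int]  # deployment window: allowed hours [start, end)

    def can_proceed(self, now: datetime, prev_done_at: datetime,
                    active_alerts: int) -> bool:
        """All safety conditions must hold before this stage is triggered."""
        start, end = self.window
        return (
            now - prev_done_at >= self.min_soak
            and start <= now.hour < end
            and active_alerts == 0  # any firing service alert blocks the rollout
        )


if __name__ == "__main__":
    commit = ["libs/geo/distance.py", "README.md"]
    print(sorted(affected_services(commit)))  # geo change hits rides-api and eats-api

    canary = Stage("canary", min_soak=timedelta(minutes=30), window=(9, 17))
    done = datetime(2024, 9, 10, 10, 0)
    print(canary.can_proceed(datetime(2024, 9, 10, 10, 45), done, active_alerts=0))
```

Keeping each `Stage` self-contained mirrors the article's point that stages operate independently: a team could swap in a different soak time or window per stage without touching the others.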

Cybercriminals' use of legitimate software for attacks is increasing

The report underscores the growing trend of attackers adopting legitimate tools to evade security measures and deceive security personnel. These tools are used for various malicious activities, including spreading ransomware, scanning networks, moving laterally within networks, and establishing command-and-control (C2) operations. Among the tools identified in the report are PDQ Deploy, PsExec, Rclone, SoftPerfect, AnyDesk, ScreenConnect, and WMIC. A series of case studies detailed in the report highlights specific incidents involving these tools. Between September 2023 and August 2024, 22 posts on various criminal forums discussed or shared cracked versions of the SoftPerfect network scanner. ... Remote management and monitoring (RMM) tools like AnyDesk and ScreenConnect are also prominently featured in criminal discussions. An August 2024 post on the RAMP forum described using AnyDesk during a penetration test and recommended disabling secure logon for successful connections. Initial Access Brokers (IABs) frequently sell access to networks through these established RMM tool connections.

Principles of Modern Data Infrastructure

Designing a modern data infrastructure to fail fast means creating systems that can quickly detect and handle failures, improving reliability and resilience. When a system goes down, the problem usually lies with a data layer that cannot handle the stress, rather than with the application compute layer. While scaling, when one or more components within the data infrastructure fail, they should fail fast and recover fast. At the same time, because the data layer is stateful, the whole failure-and-recovery process should also minimize data inconsistency. ... By default, databases and data stores need to be able to respond quickly to user queries under heavy throughput. Users expect a real-time or near-real-time experience from all applications, and often even a few milliseconds is too slow. For instance, a web API request may translate to one or a few queries to the primary on-disk database and then anywhere from a few to tens of operations on the in-memory data store. For each in-memory data store operation, a sub-millisecond response time is a bare necessity for the expected user experience.
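A circuit breaker is one common way to get the fail-fast behavior described above: after repeated failures, the client stops sending requests to a struggling data store for a cool-down period and rejects calls immediately, then lets a single trial request through to probe for recovery. This is a generic, single-threaded sketch, not the article's method or any database driver's API; `CircuitBreaker`, `CircuitOpenError`, and the thresholds are illustrative assumptions.

```python
"""Minimal circuit breaker: one way to make a data-layer client fail fast.

Generic sketch (not thread-safe); names and thresholds are assumptions.
"""
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class CircuitOpenError(RuntimeError):
    """Raised immediately, without calling the store, while the breaker is open."""


class CircuitBreaker:
    def __init__(self, max_failures: int = 3, reset_after: float = 5.0,
                 clock: Callable[[], float] = time.monotonic):
        self.max_failures = max_failures  # consecutive failures before opening
        self.reset_after = reset_after    # seconds to wait before a trial call
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None

    def call(self, fn: Callable[[], T]) -> T:
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after:
                # Fail fast: don't pile more load onto a struggling data layer.
                raise CircuitOpenError("data store unavailable, failing fast")
            # Cool-down elapsed ("half-open"): let this call through as a probe.
        try:
            result = fn()
        except Exception:
            self.failures += 1
            # A failed probe, or too many consecutive failures, (re)opens the breaker.
            if self.opened_at is not None or self.failures >= self.max_failures:
                self.opened_at = self.clock()
            raise
        # Any success closes the breaker and clears the failure count.
        self.failures = 0
        self.opened_at = None
        return result


if __name__ == "__main__":
    now = [0.0]  # fake clock so the example is deterministic
    breaker = CircuitBreaker(max_failures=2, reset_after=5.0, clock=lambda: now[0])

    def flaky_store():
        raise TimeoutError("query exceeded deadline")

    for _ in range(3):
        try:
            breaker.call(flaky_store)
        except CircuitOpenError as e:
            print("rejected:", e)
        except TimeoutError:
            print("store timed out")
```

The design choice is to trade a fast, explicit error for a slow one: callers get an immediate `CircuitOpenError` they can handle (serve stale data, degrade a feature) instead of waiting on a timeout, and the data layer gets room to recover.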

Quote for the day:

Leaders must be good listeners. It's rule number one, and it's the most powerful thing they can do to build trusted relationships. - Lee Ellis