Quote for the day:

“We are what we pretend to be, so we must be careful about what we pretend to be.” -- Kurt Vonnegut

One-Time Passcodes Are Gateway for Financial Fraud Attacks

The article "One-Time Passcodes Are Gateway for Financial Fraud Attacks"

highlights the increasing vulnerability of SMS-based one-time passcodes (OTPs)

as a primary authentication method. Threat intelligence from Recorded Future

reveals that fraudsters are increasingly exploiting real-time communication

weaknesses through social engineering and impersonation to intercept these

codes, facilitating account takeovers and payment fraud. This shift indicates

a growing industrialization of fraud operations where attackers no longer need

to defeat complex technical security controls but instead manipulate user

behavior during live interactions. Security experts, including those from

Coalition, argue that OTPs represent "low-hanging fruit" for cybercriminals

and advocate for phishing-resistant alternatives like FIDO-based hardware

authentication. Consequently, global regulators are taking action to mitigate

these risks. For instance, Singapore and the United Arab Emirates have already

phased out SMS-based OTPs for banking logins, while India and the Philippines

are moving toward multifactor approaches involving biometrics and device-based

identification. Although U.S. regulators still recognize OTPs as part of

multifactor authentication, the rise of SIM-swapping and sophisticated social

engineering is pushing the financial industry toward more resilient,

multi-signal authentication models that integrate behavioral patterns and

device identity to better balance security with user experience.

Evaluating the ethics of autonomous systems

MIT researchers, led by Professor Chuchu Fan and graduate student Anjali

Parashar, have developed a pioneering evaluation framework titled SEED-SET to

assess the ethical alignment of autonomous systems before their deployment.

This innovative system addresses the challenge of balancing measurable

outcomes, such as cost and reliability, with subjective human values like

fairness. Designed to operate without pre-existing labeled data, SEED-SET

utilizes a hierarchical structure that separates objective technical

performance from subjective ethical criteria. By employing a Large Language

Model as a proxy for human stakeholders, the framework can consistently

evaluate thousands of complex scenarios without the fatigue often experienced

by human reviewers. In testing involving realistic models like power grids and

urban traffic routing, the system successfully pinpointed critical ethical

dilemmas, such as strategies that might inadvertently prioritize high-income

neighborhoods over disadvantaged ones. SEED-SET generated twice as many

optimal test cases as traditional methods, uncovering "unknown unknowns" that

static regulatory codes often miss. This research, presented at the

International Conference on Learning Representations, provides a systematic

way to ensure AI-driven decision-making remains well-aligned with diverse

human preferences, moving beyond simple technical optimization to foster more

equitable technological solutions for high-stakes societal challenges.

Blast Radius of TeamPCP Attacks Expands Amid Hacker Infighting

The article "Blast Radius of TeamPCP Attacks Expands Amid Hacker Infighting"

details the escalating impact of supply chain compromises targeting

open-source projects like LiteLLM and Trivy. Attributed to the threat group

TeamPCP, these attacks have victimized high-profile entities such as the

European Commission and AI startup Mercor by harvesting cloud credentials and

API keys. The situation has become increasingly volatile due to "infighting"

and a lack of clear collaboration between cybercriminal factions. While

TeamPCP initiates the intrusions, groups like ShinyHunters and Lapsus$ have

begun leaking and claiming credit for the stolen data, leading to a murky

ecosystem where multiple actors converge on the same access points. Further

complicating the threat landscape is TeamPCP's formal alliance with the Vect

ransomware gang, which utilizes a three-stage remote access Trojan to deepen

their foothold. Security experts emphasize that the speed of these

attacks—often moving from initial compromise to data exfiltration within

hours—necessitates a rapid response. Organizations are urged to move beyond

merely removing malicious packages; they must immediately revoke exposed

secrets, rotate cloud credentials, and audit CI/CD workflows to mitigate the

risk of follow-on extortion and ransomware deployment by this expanding

criminal network.
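
As a rough illustration of the "audit CI/CD workflows" step, the sketch below flags lines in a workflow file that look like hardcoded credentials. The pattern set, the function name `find_suspect_lines`, and the sample workflow are assumptions made for illustration; they are not taken from the article, and a real audit would rely on a dedicated secret scanner and on revoking anything that was exposed.

```python
import re

# Illustrative patterns only (an assumption, not an exhaustive rule set).
SECRET_PATTERNS = {
    # AWS access key IDs commonly start with "AKIA" followed by 16 characters.
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    # A key-like name assigned a long literal value rather than a secret reference.
    "inline_secret_assignment": re.compile(
        r"(?i)\b(api[_-]?key|token|secret|password)\b\s*[:=]\s*['\"]?[A-Za-z0-9_\-/+]{16,}"
    ),
}


def find_suspect_lines(text):
    """Return (line_number, pattern_name) pairs for lines that resemble hardcoded secrets."""
    hits = []
    for number, line in enumerate(text.splitlines(), start=1):
        for name, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                hits.append((number, name))
    return hits


# Hypothetical workflow: line 3 hardcodes a key, line 4 uses a secret reference.
sample_workflow = """\
name: build
env:
  AWS_ACCESS_KEY_ID: AKIAABCDEFGHIJKLMNOP
  API_KEY: ${{ secrets.API_KEY }}
steps:
  - run: make test
"""

if __name__ == "__main__":
    for line_number, pattern_name in find_suspect_lines(sample_workflow):
        print(f"line {line_number}: possible {pattern_name}")
```

A match only identifies a candidate for rotation; removing the string from the file does not invalidate a credential that has already leaked.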

Beyond RAG: Architecting Context-Aware AI Systems with Spring Boot

The article "Beyond RAG: Architecting Context-Aware AI Systems with Spring

Boot" introduces Context-Augmented Generation (CAG), an architectural

refinement designed to address the limitations of standard Retrieval-Augmented

Generation (RAG) in enterprise environments. While traditional RAG

successfully grounds AI responses in external data, it often ignores vital

runtime factors such as user identity, session history, and specific workflow

states. CAG solves this by introducing a dedicated context manager that

assembles and normalizes these contextual signals before they reach the core

RAG pipeline. This additional layer allows systems to provide answers that are

not only factually accurate but also contextually appropriate for the specific

user and situation. A key advantage of this design is its modularity; the

context manager operates independently of the retriever and large language

model, requiring no changes to the underlying infrastructure or model

retraining. By isolating contextual reasoning, enterprise teams can achieve

better traceability, consistency, and governance across their AI applications.

Specifically targeting Java developers, the piece demonstrates how to

implement this pattern using Spring Boot, moving AI beyond simple prototypes

toward production-ready systems that can handle complex, multi-departmental

constraints and dynamic organizational policies with much greater

precision.
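
The layering described above can be sketched in a few lines. This is a language-neutral illustration in Python, not the Spring Boot code from the article; `RequestContext`, `ContextManager`, `answer`, and the stub retriever and model are all hypothetical names. The point it tries to show is that the context manager runs before retrieval and that the retriever and model are passed in unchanged.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RequestContext:
    """Normalized runtime signals for one request (hypothetical shape)."""
    user_id: str
    role: str
    department: str
    workflow_state: str
    recent_turns: tuple = ()


class ContextManager:
    """Assembles and normalizes contextual signals before the RAG pipeline runs."""

    def __init__(self, max_turns=3):
        self.max_turns = max_turns

    def build(self, user, session_history, workflow_state):
        return RequestContext(
            user_id=str(user["id"]),
            role=user.get("role", "unknown").strip().lower(),
            department=user.get("department", "unknown").strip().lower(),
            workflow_state=workflow_state.strip().lower(),
            recent_turns=tuple(session_history[-self.max_turns:]),
        )


def answer(question, context, retriever, llm):
    """Run an unchanged retriever and model; context only shapes the filter and prompt."""
    documents = retriever(question, {"department": context.department})
    prompt = (
        f"Role: {context.role}\n"
        f"Workflow state: {context.workflow_state}\n"
        f"Recent turns: {list(context.recent_turns)}\n"
        f"Sources: {documents}\n"
        f"Question: {question}"
    )
    return llm(prompt)


# Stand-ins for a real retriever and model, used only to make the sketch runnable.
def fake_retriever(question, filters):
    return [f"policy doc for {filters['department']}"]


def fake_llm(prompt):
    return prompt  # echoes the prompt so the assembled context is visible


if __name__ == "__main__":
    ctx = ContextManager().build(
        {"id": 42, "role": " Manager ", "department": "Finance"},
        ["hi", "hello", "q1", "q2"],
        "Approval_Pending",
    )
    print(answer("Can I approve this invoice?", ctx, fake_retriever, fake_llm))
```

Because the context object is built in one place, it can also be logged per request, which is one way to get the traceability the article attributes to this design.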

Eliminating blind spots – nailing the IPv6 transition

The article "Eliminating blind spots – nailing the IPv6 transition" highlights

the critical shift from IPv4 to IPv6, noting that global adoption reached 45%

by 2026. Despite this growth, many IT teams remain overly reliant on legacy

dual-stack monitoring that prioritizes IPv4, leading to significant visibility

gaps. Because IPv6 operates differently—utilizing 128-bit addresses and

emphasizing ICMPv6 and AAAA records—traditional scanning and monitoring

methods often fail to detect degraded performance or security vulnerabilities.

These "blind spots" can result in service outages that teams only discover

through user complaints rather than proactive alerts. To navigate this

transition successfully, organizations must adopt monitoring solutions with

robust auto-discovery capabilities and real-time notifications tailored to

IPv6-specific behaviors. The article emphasizes that an effective transition

does not require a complete infrastructure rebuild; instead, it demands a

mindset shift where IPv6 is treated as a primary protocol rather than a

secondary concern. By integrating comprehensive visibility across cloud, data

centers, and OT environments, businesses can ensure network resilience and

security. Ultimately, proactively addressing these monitoring deficiencies

allows IT departments to manage the increasing complexity of modern internet

traffic while avoiding the pitfalls of reactive troubleshooting in a rapidly

evolving digital landscape.
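
One way to surface the visibility gap described above is to check which monitored services are probed only over IPv4. The sketch below assumes a simple mapping from service name to the addresses that monitoring checks actually probe; the function name `ipv4_only_checks` and the sample inventory (which uses documentation address ranges) are hypothetical and not from the article.

```python
import ipaddress


def ipv4_only_checks(checks):
    """Return the sorted names of services whose probed addresses include no IPv6 address."""
    blind = []
    for service, addresses in checks.items():
        versions = {ipaddress.ip_address(address).version for address in addresses}
        if 6 not in versions:
            blind.append(service)
    return sorted(blind)


# Hypothetical monitoring inventory.
monitoring_checks = {
    "web-1": ["192.0.2.10", "2001:db8::10"],  # probed over both protocols
    "db-1": ["192.0.2.20"],                   # probed over IPv4 only
    "sensor-7": ["2001:db8::77"],             # probed over IPv6 only
}

if __name__ == "__main__":
    for service in ipv4_only_checks(monitoring_checks):
        print(f"{service}: no IPv6 probe configured")
```

A service in this list may still be serving IPv6 traffic to users, so a degraded IPv6 path would not raise an alert.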

Post-Quantum Readiness Starts Long Before Q-Day

The Forbes article "Post-Quantum Readiness Starts Long Before Q-Day" by Etay

Maor highlights the urgent need for organizations to prepare for the

inevitable arrival of "Q-Day"—the moment quantum computers become capable of

shattering current public-key cryptography standards. While significant

quantum utility may be years away, the author warns of the "harvest now,

decrypt later" threat, where malicious actors collect encrypted sensitive data

today to decrypt it once quantum technology matures. Consequently,

post-quantum readiness must be viewed as a critical leadership and

business-risk issue rather than a distant technical concern. Maor argues that

the transition will be a multi-year journey, not a simple switch, requiring

deep visibility into an organization’s cryptographic sprawl to identify

vulnerabilities. He recommends a hybrid security approach, utilizing standards

like TLS 1.3 with post-quantum-ready cipher suites to protect high-priority

"crown jewel" data while the broader ecosystem catches up. By prioritizing

sensitive traffic and adopting a centralized operating model, such as a

quantum-aware Secure Access Service Edge (SASE), businesses can build

long-term resilience. Ultimately, proactive preparation is essential to

safeguarding data confidentiality against the future capabilities of quantum

computing, ensuring that security measures evolve alongside emerging

threats.
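
The "visibility first, crown jewels first" idea can be illustrated with a small triage over a cryptographic inventory. The inventory format, field names, and functions below are assumptions for illustration and are not from the article. The algorithm groupings reflect widely documented facts: RSA, Diffie-Hellman, and elliptic-curve schemes are breakable by a sufficiently large quantum computer running Shor's algorithm, while ML-KEM, ML-DSA, and SLH-DSA are NIST-standardized post-quantum algorithms.

```python
# Public-key algorithms vulnerable to Shor's algorithm.
QUANTUM_VULNERABLE = {"RSA", "DH", "ECDH", "ECDSA"}
# NIST-standardized post-quantum algorithms (FIPS 203, 204, 205).
POST_QUANTUM = {"ML-KEM", "ML-DSA", "SLH-DSA"}


def classify(algorithm):
    """Label an algorithm name; anything unrecognized is sent for manual review."""
    name = algorithm.strip().upper()
    if name in QUANTUM_VULNERABLE:
        return "quantum-vulnerable"
    if name in POST_QUANTUM:
        return "post-quantum"
    return "review"


def migrate_first(inventory):
    """Return systems that pair a quantum-vulnerable algorithm with high data sensitivity."""
    return sorted(
        entry["system"]
        for entry in inventory
        if classify(entry["algorithm"]) == "quantum-vulnerable"
        and entry["sensitivity"] == "high"
    )


# Hypothetical inventory entries.
crypto_inventory = [
    {"system": "payments-api", "algorithm": "ECDH", "sensitivity": "high"},
    {"system": "marketing-site", "algorithm": "RSA", "sensitivity": "low"},
    {"system": "records-vault", "algorithm": "rsa", "sensitivity": "high"},
    {"system": "pilot-gateway", "algorithm": "ML-KEM", "sensitivity": "high"},
]

if __name__ == "__main__":
    print(migrate_first(crypto_inventory))
```

Sorting by sensitivity first matches the "harvest now, decrypt later" concern: long-lived confidential data is the traffic most worth protecting before the rest of the estate is migrated.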

Confidential computing resurfaces as security priority for CIOs

Confidential computing has resurfaced as a critical security priority for

CIOs, addressing the long-standing industry gap of protecting data while it is

actively being processed. While traditional encryption safeguards data at rest

and in transit, confidential computing utilizes hardware-encrypted Trusted

Execution Environments (TEEs) to isolate sensitive information from the

surrounding infrastructure, cloud providers, and even privileged users. This

technology is gaining significant traction as organizations seek to protect

intellectual property and regulated analytics workloads, especially within the

context of generative AI. According to IDC, 75% of surveyed organizations are

already testing or adopting the technology in some form. Unlike earlier

versions that required deep technical expertise and application redesign,

modern confidential computing integrates seamlessly into existing virtual

machines and containers. This evolution allows developers to maintain current

workflows while gaining hardware-enforced security boundaries that software

controls alone cannot provide. Gartner has notably ranked confidential

computing as a top-three technology to watch for 2026, highlighting its

growing importance in sectors like finance and healthcare. By providing

hardware-rooted attestation and verifiable trust, it helps organizations

minimize risk exposure and maintain regulatory compliance. Ultimately, as

confidential computing converges with AI and data security management

platforms, it will become an essential component of a robust zero-trust

architecture.

Introducing the Agent Governance Toolkit: Open-source runtime security for AI agents

Microsoft has introduced the Agent Governance Toolkit, an open-source project

designed to provide critical runtime security for autonomous AI agents. As AI

evolves from simple chat interfaces to independent actors capable of executing

complex trades and managing infrastructure, the need for robust oversight has

become paramount. Released under the MIT license, this framework-agnostic

toolkit addresses the risks outlined in the OWASP Top 10 for Agentic

Applications through deterministic, sub-millisecond policy enforcement. The

suite comprises seven specialized packages, including "Agent OS" for stateless

policy execution and "Agent Mesh" for cryptographic identity and dynamic trust

scoring. Drawing inspiration from battle-tested operating system principles,

the toolkit incorporates features like execution rings, circuit breakers, and

emergency kill switches to ensure reliable and secure operations. It

seamlessly integrates with popular frameworks like LangChain and AutoGen,

allowing developers to implement governance without rewriting core code. By

mapping directly to regulatory requirements like the EU AI Act, the toolkit

empowers organizations to proactively manage goal hijacking, tool misuse, and

cascading failures. Ultimately, Microsoft’s initiative fosters a secure

ecosystem where autonomous agents can scale safely across diverse platforms,

including Azure Kubernetes Service, while remaining subject to transparent and

community-driven governance standards.
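
To make the runtime controls named above more concrete, here is a generic sketch of a deterministic allow-list check combined with a circuit breaker and a kill switch around an agent's tool calls. It does not use or reproduce the Agent Governance Toolkit's API; `GovernedToolRunner`, `CircuitOpen`, and the thresholds are hypothetical names and values chosen for illustration.

```python
class CircuitOpen(Exception):
    """Raised when too many consecutive tool failures have tripped the breaker."""


class GovernedToolRunner:
    """Wraps tool calls with an allow-list, a circuit breaker, and a kill switch."""

    def __init__(self, allowed_tools, max_failures=3):
        self.allowed_tools = frozenset(allowed_tools)
        self.max_failures = max_failures
        self.failures = 0
        self.killed = False

    def kill(self):
        """Emergency stop: every later call is refused."""
        self.killed = True

    def run(self, tool_name, tool, *args):
        # Policy checks are plain comparisons, so the decision is deterministic.
        if self.killed:
            raise PermissionError("kill switch engaged")
        if tool_name not in self.allowed_tools:
            raise PermissionError(f"tool not allowed: {tool_name}")
        if self.failures >= self.max_failures:
            raise CircuitOpen(f"{self.failures} consecutive failures")
        try:
            result = tool(*args)
        except Exception:
            self.failures += 1  # count the failure, then let the caller see it
            raise
        self.failures = 0  # a success resets the consecutive-failure count
        return result


if __name__ == "__main__":
    runner = GovernedToolRunner(allowed_tools={"add"}, max_failures=2)
    print(runner.run("add", lambda a, b: a + b, 2, 3))
    try:
        runner.run("delete_database", lambda: None)
    except PermissionError as error:
        print(error)
```

Keeping the checks outside the agent's own reasoning loop means a hijacked goal or a misbehaving tool is stopped by the wrapper rather than by the model deciding to stop.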

Twinning! Quantum ‘Digital Twins’ Tackle Error Correction Task to Speed Path to Reliable Quantum Computers

Researchers have introduced a groundbreaking classical simulation method that

utilizes "digital twins" to significantly accelerate the development of

reliable, fault-tolerant quantum computers. By creating highly detailed

virtual replicas of quantum hardware, scientists can now model quantum error

correction (QEC) processes for systems containing up to 97 physical qubits.

This approach addresses the massive overhead traditionally required to

stabilize fragile qubits, where multiple physical units are needed to form a

single, error-resistant logical qubit. Unlike traditional methods that require

building and debugging expensive physical prototypes, these digital twins

leverage Monte Carlo simulations to model error propagation and decoding

strategies on standard cloud computing nodes in roughly an hour. This shift

allows researchers to rapidly iterate and optimize hardware parameters and

error-fixing codes without the exorbitant costs and time constraints of

physical testing. Functioning essentially as a "virtual wind tunnel," this

innovation provides a critical, scalable framework for designing the complex

error-correction layers necessary for practical quantum computation. By

streamlining the path toward fault tolerance, this digital twin methodology

represents a profound, practical advancement that enables the quantum industry

to refine complex systems virtually, ultimately bringing the reality of

large-scale, dependable quantum computing closer than ever before.
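
The general idea of estimating error-correction performance by Monte Carlo sampling can be shown with a toy model. The sketch below is not the researchers' simulator: it assumes a classical bit-flip repetition code with independent errors of probability `p` and majority-vote decoding, far simpler than the hardware-level models described above. The function name and parameters are hypothetical.

```python
import random


def logical_error_rate(p, distance=3, trials=100_000, seed=0):
    """Estimate how often majority-vote decoding fails for a repetition code.

    Each of `distance` physical bits flips independently with probability `p`.
    Decoding fails when more than half of the bits have flipped.
    """
    rng = random.Random(seed)  # seeded so repeated runs give the same estimate
    failures = 0
    for _ in range(trials):
        flips = sum(rng.random() < p for _ in range(distance))
        if flips > distance // 2:
            failures += 1
    return failures / trials


if __name__ == "__main__":
    p = 0.05
    for d in (3, 5):
        print(f"distance {d}: estimated logical error rate {logical_error_rate(p, d):.5f}")
```

For distance 3 the estimate can be checked against the exact value 3p²(1 − p) + p³, which is 0.00725 at p = 0.05. The same sampling loop shows the overhead trade-off mentioned above: adding physical bits lowers the logical error rate when p is small.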

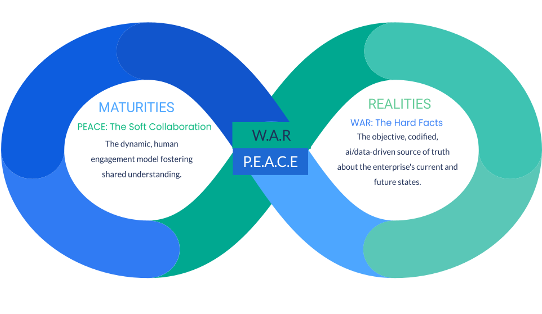

The end of the org chart: Leadership in an agentic enterprise

The traditional organizational chart is becoming obsolete as modern

enterprises transition toward an "agentic" model where AI agents and humans

collaborate as teammates. According to industry expert Steve Tout, the sheer

volume of digital information—now doubling every eight hours—has overwhelmed

human judgment, rendering legacy hierarchical structures and the

"people-process-technology" framework increasingly insufficient. In this

evolving landscape, AI agents handle repeatable cognitive tasks, synthesis,

and data-heavy "grunt work," while human professionals retain control over

high-level judgment, ethical accountability, and client trust. Organizations

like McKinsey are already pioneering this shift, deploying tens of thousands

of agents to streamline complex workflows. Leadership is consequently being

redefined; it is no longer about maintaining a strict span of control or

following predictable reporting lines. Instead, next-generation leaders must

become architects of integrated networks, managing both human talent and

agentic systems to foster deep organizational intelligence. By protecting

human decision-makers from information fatigue, agentic enterprises can

achieve greater clarity and faster strategic alignment. Ultimately, success in

this new era requires a fundamental shift from viewing technology as a

standalone tool to embracing it as a collaborative force that enhances the

unique human capacity for sensemaking in complex, fast-moving business

environments.
