The Digital Revolution and the Demand for Cyber Insurance

The problem with cyberattacks is this: it’s extremely unlikely that any individual or organization can bring the risk of an attack down to zero. At the same time, as technology advances, attacks and risks are only going to become more complex and sophisticated. Hence, even the best firewalls and cybersecurity practices might prove toothless in the face of a highly coordinated attack. The prognosis gets worse when one looks at the current state of preparedness. ... Cyber insurance policies have proven effective in helping businesses pay off liabilities arising from stolen customer data, compromised passwords, breached bank accounts, frozen databases and much more. Comprehensive and dynamic cyber insurance protection becomes necessary for almost every business when one considers the severe reputational damage, regulatory fines, legal charges and customer obligations that arise from a cyberattack. The best part about cyber insurance is that many insurers also help businesses manage an attack, including incident response, ransom negotiation, legal proceedings and further protection to prevent a repeat of such attacks in the future.

From PKI to PQC: Devising a strategy for the transition

“Independently of PQC as a topic, one of the challenges often voiced by our customers is that public key infrastructure (PKI) can exist in a company in a broad range of departments, making it difficult to centralize the responsibility for and ownership of it,” Jason Sabin, Chief Technology Officer at digital security company DigiCert, told Help Net Security. Companies are solving that problem in different ways. In some cases, they centralize their cryptographic activity under one department and one head. In other cases, they create an acting committee, with stakeholders across the company who influence the direction of their programs. “The companies that have already started to centralize management have an organizational method to request that budget and schedule the activity. But for the organizations that have not, there’s a little bit of an organizational design challenge present. And that’s where, I think, the technology leaders need to partner with the business leaders to come up with the best organizational path forward,” he remarked.

Cisco: Generative AI expectations outstrip enterprise readiness

At the heart of most AI networks will be Ethernet, since high-bandwidth Ethernet infrastructure is essential to facilitate quick data transfer between AI workloads, Cisco stated. “Implementing software controls like Priority Flow Control (PFC) and Explicit Congestion Notification (ECN) in the Ethernet network guarantees uninterrupted data delivery, especially for latency-sensitive AI workloads.” For AI readiness, the Cisco research recommends that enterprises build in automation tools for network configuration in order to optimize data transfer between AI workloads. “Automation reduces manual intervention, improves efficiency, and allows the infrastructure to dynamically adapt to the demands of AI workloads,” the researchers said. ... A core component of the data center AI blueprint is Cisco’s Nexus 9000 data center switches, which support up to 25.6 Tbps of bandwidth per ASIC and “have the hardware and software capabilities available today to provide the right latency, congestion management mechanisms, and telemetry to meet the requirements of AI/ML applications,” Cisco stated.
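ECN (RFC 3168) lets a switch mark packets instead of dropping them as its queue builds, so senders can slow down before buffers overflow. The sketch below shows one common way to make that marking decision, a RED/WRED-style probability ramp. It is a minimal illustration and not Cisco's implementation. It uses the instantaneous queue depth rather than a moving average. The thresholds (`min_threshold`, `max_threshold`, `max_prob`) are hypothetical placeholders. PFC, the per-priority pause mechanism of IEEE 802.1Qbb, is not modeled.

```python
import random
from dataclasses import dataclass


@dataclass
class Packet:
    ecn_capable: bool        # sender marked the packet ECN-capable (ECT) in the IP header
    ce_marked: bool = False  # set by the switch: "Congestion Experienced"


def marking_probability(queue_kb: float, min_threshold: float,
                        max_threshold: float, max_prob: float) -> float:
    """RED-style ramp: 0 up to min_threshold, rising linearly toward max_prob,
    then 1.0 at or above max_threshold."""
    if queue_kb <= min_threshold:
        return 0.0
    if queue_kb >= max_threshold:
        return 1.0
    return max_prob * (queue_kb - min_threshold) / (max_threshold - min_threshold)


def enqueue_decision(pkt: Packet, queue_kb: float, rng: random.Random,
                     min_threshold: float = 100.0, max_threshold: float = 400.0,
                     max_prob: float = 0.1) -> str:
    """Return 'forward', 'mark' or 'drop' for one arriving packet.
    Threshold defaults are illustrative placeholders, not vendor defaults."""
    p = marking_probability(queue_kb, min_threshold, max_threshold, max_prob)
    if rng.random() >= p:
        return "forward"      # no congestion signal for this packet
    if pkt.ecn_capable:
        pkt.ce_marked = True  # signal congestion without dropping
        return "mark"
    return "drop"             # traffic that is not ECN-capable is dropped instead


rng = random.Random(0)
print(enqueue_decision(Packet(ecn_capable=True), queue_kb=50, rng=rng))    # shallow queue
print(enqueue_decision(Packet(ecn_capable=True), queue_kb=500, rng=rng))   # deep queue, ECN sender
print(enqueue_decision(Packet(ecn_capable=False), queue_kb=500, rng=rng))  # deep queue, non-ECN sender
```

The three calls print `forward`, `mark` and `drop`. Below the lower threshold nothing is signaled. Above the upper threshold every packet is signaled, and only ECN-capable traffic avoids the drop.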

Security Is a Process, Not a Tool

A new approach is required: one that can continuously record and map myriad interactions and processes at enterprise scale. Enter process mining for cybersecurity. Process mining has existed in numerous industries for over a decade. From enterprise resource planning (ERP) systems to robotic process automation (RPA), where mapping a process is the first stage of deployment, capturing people’s interactions with technology as they go about their jobs is a familiar strategy. However, this approach has not been applied to cybersecurity, for a handful of reasons. First, analyzing and cataloging processes is tedious work that many cybersecurity and IT teams prefer to leave to auditors. Asking the cybersecurity, IT or networking teams to add this to their already heavy workloads of monitoring and securing infrastructure and software is unsustainable. Second, while cybersecurity and audit teams have long relied on data collected by agents, that data is largely tied to events and changes in security tools, not to processes.
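A basic building block of process mining is the directly-follows graph. It records which activity immediately follows which within each case of an event log, and that is how a process is "mapped" from recorded interactions. The sketch below is a minimal Python 3.9+ example and not a specific product's method. The access-request log and the choice of one request per "case" are hypothetical.

```python
from collections import Counter, defaultdict
from typing import Iterable

# One event = (case_id, timestamp, activity). Treating a single access request
# as a "case" is an illustrative assumption.
Event = tuple[str, int, str]


def directly_follows(events: Iterable[Event]) -> Counter:
    """Count how often activity a is immediately followed by activity b within the same case."""
    traces: dict[str, list[tuple[int, str]]] = defaultdict(list)
    for case_id, ts, activity in events:
        traces[case_id].append((ts, activity))
    dfg: Counter = Counter()
    for steps in traces.values():
        steps.sort()  # order each case's events by timestamp
        for (_, a), (_, b) in zip(steps, steps[1:]):
            dfg[(a, b)] += 1
    return dfg


log = [
    ("req-1", 1, "request access"), ("req-1", 2, "manager approval"), ("req-1", 3, "grant access"),
    ("req-2", 1, "request access"), ("req-2", 2, "grant access"),  # approval step skipped
]

for (a, b), n in sorted(directly_follows(log).items()):
    print(f"{a} -> {b}: {n}")
```

In this toy log, the `request access -> grant access` edge exposes a case where the approval step was bypassed. This is the kind of process deviation that event-centric security tool data does not surface directly.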

Info Stealers Thrive in Hot Market for Stolen Data

Despite fierce competition and the ever-present threat of takedowns, info-stealer innovation continues as newcomers debut constantly and existing players refresh their offerings regularly. ... Researchers said the info stealer is being spread through a variety of common distribution tactics, including malicious websites (with the malware often disguised as a legitimate installer), drive-by downloads and phishing campaigns. First discovered in 2020, the malware has previously been spread by attackers using search engine optimization (SEO) poisoning techniques. At the beginning of 2022, researchers at BlackBerry warned that the malware was often "being bundled with legitimate, signed software," a ploy that "makes it difficult to detect the threat before it has been deployed onto a victim system." Crypto wallets remain one of Jupyter's top targets. The malware searches directly for data files tied to 17 different types of wallets, including Atomic, Guarda, SimplEOS and NEON. It also searches for wildcard filenames based on the word "wallet," plus OpenVPN and remote desktop protocol (RDP) credentials, BlackBerry reported.
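Defenders can reproduce the wildcard search BlackBerry describes to see which files on a host an info stealer of this kind might pick up. The sketch below is a minimal audit under stated assumptions. It mirrors only a case-insensitive `*wallet*` filename match. It does not replicate the malware's list of 17 wallet types or its OpenVPN and RDP credential collection. The function names are hypothetical.

```python
import fnmatch
import os

# Hypothetical audit helper: mirrors only the wildcard "wallet" filename match,
# not the malware's list of specific wallet types or its credential theft.
WALLET_PATTERN = "*wallet*"


def is_wallet_like(filename: str, pattern: str = WALLET_PATTERN) -> bool:
    """Case-insensitive wildcard match on the file name alone."""
    return fnmatch.fnmatchcase(filename.lower(), pattern.lower())


def find_wallet_like_files(root: str) -> list[str]:
    """Walk root and return sorted paths of files whose names match the pattern.
    os.walk skips directories it cannot read instead of raising."""
    hits = []
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            if is_wallet_like(name):
                hits.append(os.path.join(dirpath, name))
    return sorted(hits)
```

For example, `find_wallet_like_files(os.path.expanduser("~"))` lists candidate files in the current user's home directory. Those files are worth moving, encrypting or monitoring.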

Microsoft launches Fabric, adds Copilot for the new platform

Microsoft Fabric is a new environment for data integration, data management and analytics, bringing together a set of capabilities that enable customers to model and analyze data in myriad ways. The suite includes Power BI, Microsoft's longstanding BI platform, on which users can develop and consume data products such as reports, dashboards, and AI and machine learning models. Fabric also includes Azure Synapse Analytics, a cloud-based service for data integration, data warehousing and big data analytics. Finally, Fabric includes Azure Data Factory, an extract, transform and load (ETL) service that enables customers to integrate and transform data at scale. In addition to the three formerly separate platforms, Fabric includes a multi-cloud data lake called OneLake that automatically connects to every data workload within Fabric. OneLake comes with shortcuts to data sources such as Azure Data Lake Storage Gen2 and Amazon S3. The combination of the previously disparate platforms in a single environment is designed to simplify data management and analysis, according to Microsoft.
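The three stages of an extract, transform and load service can be illustrated with a minimal, generic Python 3.9+ sketch that uses only the standard library (`csv` and an in-memory SQLite database). It does not use any Fabric or Data Factory API. The sample data, table and function names are hypothetical.

```python
import csv
import io
import sqlite3

# Hypothetical sample input; one row is missing its amount.
RAW_CSV = """order_id,amount,currency
1,19.99,usd
2,5.00,EUR
3,,usd
"""


def extract(text: str) -> list[dict[str, str]]:
    """Extract: parse raw CSV text into dictionaries keyed by header."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows: list[dict[str, str]]) -> list[tuple[int, float, str]]:
    """Transform: drop incomplete rows, convert types, normalize currency codes."""
    clean = []
    for row in rows:
        if not row["amount"]:
            continue
        clean.append((int(row["order_id"]), float(row["amount"]), row["currency"].upper()))
    return clean


def load(rows: list[tuple[int, float, str]], conn: sqlite3.Connection) -> None:
    """Load: write the cleaned rows into a target table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders "
        "(order_id INTEGER PRIMARY KEY, amount REAL, currency TEXT)"
    )
    conn.executemany("INSERT INTO orders VALUES (?, ?, ?)", rows)
    conn.commit()


conn = sqlite3.connect(":memory:")
load(transform(extract(RAW_CSV)), conn)
print(conn.execute(
    "SELECT currency, SUM(amount) FROM orders GROUP BY currency ORDER BY currency"
).fetchall())
```

A managed ETL service runs the same three stages at much larger scale, against real sources and sinks rather than an in-memory string and database.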

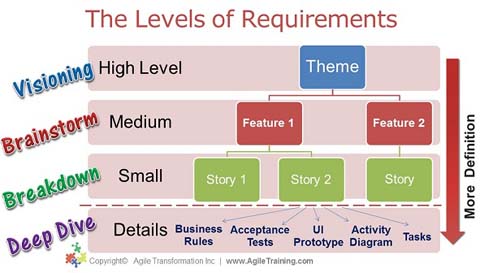

The Importance Of Continuous Testing In Agile And DevOps Environments

By embracing continuous testing, businesses can reduce test cycle times. This accelerated testing process integrates testing seamlessly throughout the development life cycle. As a result, organizations can deliver high-quality software faster, shortening time to market. This agility allows them to respond promptly to customer demands, stay ahead of the competition and seize valuable market opportunities. ... Implementing continuous testing can also increase collaboration among teams. By fostering effective collaboration and communication between development, testing, and operations teams, organizations can integrate testing seamlessly into their agile and DevOps processes. This alignment enables them to streamline workflows, enhance knowledge sharing and drive efficient cross-functional teamwork, ultimately resulting in better software quality and faster time to market. ... By prioritizing continuous testing, organizations can consistently deliver reliable, feature-rich products with fewer defects, meeting or exceeding customer expectations.

Five Ways for Digital Trust Professionals to Improve Soft Skills

“Emotional intelligence is the ability to recognize, understand, manage, and effectively respond to one’s own and others’ emotions. The reality is that soft skills and emotional intelligence are even more important than technical skills as predictors of success.” “Soft skills are important for auditors and cybersecurity professionals because they facilitate effective communication, build client confidence, foster trust, enhance teamwork and enable a nuanced understanding of organizational dynamics and human behavior, all of which are essential for identifying risks, conveying complex findings and ensuring the successful implementation of recommended security measures. ... Problem-solving: Problem-solving remains a constant need, particularly as AI and LLMs introduce new challenges. The stable importance of this skill indicates that while AI can provide significant data analysis, humans are still needed for ultimate decision-making, especially when ethical or complex considerations are involved.”

How US SEC legal actions put CISOs at risk and what to do about it

If CISOs find themselves in this awkward position, step one is to meet with the general counsel and listen to the attorney's reasoning for the changes, Rasch said. If the CISO is still not satisfied, the next step is to meet with the CEO and listen to the chief executive's rationale. If the CISO is still not satisfied, Rasch suggests hiring outside counsel to offer an ostensibly objective assessment of whether the filing constitutes fraud in the legal sense. ... After retaining counsel, all subsequent moves are fraught with danger. "If the CISO believes that there has been a fraud to the SEC, the CISO has an obligation to report it to the board. That may itself be corporate suicide," Rasch said, adding that the next move, going to the feds, is even more problematic. "Going to the SEC is crossing the Rubicon."

"The CISO is not an expert on SEC disclosures, but you have an officer who now knows that the company made materially false disclosures," Rasch said. "There is a legal obligation for the CISO to do so if the CISO is right. And only if the CISO is right."

Agile Coaching as a Path toward a Deeper Meaning of Work and Life

Agile coaching can only be learned in actual interactions with people, not by studying methodologies or concepts in classrooms. Of course, classroom training, books, and individual coaching can be of great help. However, the main purpose of all this is to build a social setting where people can develop new ways of participating in conversations about work. In practice, the best way to learn is to participate in various coaching situations alongside a more experienced coach. The key is to reflect on what kind of cooperative patterns emerged in those situations and how they were handled. It is also important to learn where agile coaching places its focus. Whereas an expert, such as a software designer, focuses on developing a product, an agile coach focuses on the social patterns that emerge around that product development. In practice, the coach learns to focus on the conversations and interactions between the software designers and helps to transform the cooperative patterns of product development.

Quote for the day:

"Don't wait for the perfect moment. Take the moment and make it perfect." -- Aryn Kyle
