Chaos Engineering: A Science-based Approach to System Reliability

While testing is standard practice in software development, it’s not always

easy to foresee issues that can happen in production, especially as systems

become increasingly complex to deliver maximum customer value. The adoption of

microservices enables faster release times and more possibilities than we’ve

ever seen before; however, they introduce challenges. According to the 2020 IDG

cloud computing survey, 92 percent of organizations’ IT environments are at

least somewhat in the cloud today. In 2020, we saw highly accelerated digital

transformation as organizations had to quickly adjust to the impact of a

global pandemic. With added complexity come more possible points of failure.

The trouble is that we humans managing these intricate systems cannot possibly

understand or foresee all of the issues because it’s impossible to understand

how each of the individual components of a loosely coupled architecture will

relate to each other. This is where Chaos Engineering steps in to proactively

create resilience. The major caveat of Chaos Engineering is that things are

broken in a very intentional and controlled manner while in production, unlike

regular QA practices, where this is done in safe development environments. It

is methodical and experimental and less ‘chaotic’ than the name implies.
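
The idea of an intentional, controlled failure can be illustrated with a minimal in-process sketch. It is not a production chaos tool: `FlakyDependency`, `fetch_price`, `get_price_with_fallback`, and `run_experiment` are hypothetical names invented for this example, and the "service" is a plain function. The experiment injects a known failure rate into a dependency and measures whether the caller's retry-and-fallback logic keeps the success rate near the expected steady state.

```python
import random


class FlakyDependency:
    """Wraps a callable and fails a configurable fraction of calls (the injected fault)."""

    def __init__(self, func, failure_rate, rng):
        self.func = func
        self.failure_rate = failure_rate
        self.rng = rng

    def __call__(self, *args, **kwargs):
        # Fail deliberately with probability `failure_rate`.
        if self.rng.random() < self.failure_rate:
            raise ConnectionError("injected fault")
        return self.func(*args, **kwargs)


def fetch_price(item):
    """Hypothetical downstream service call."""
    return {"item": item, "price": 10}


def get_price_with_fallback(dependency, item, retries=2):
    """Code under test: retry a few times, then return a degraded response."""
    for _ in range(retries + 1):
        try:
            return dependency(item)
        except ConnectionError:
            continue
    return {"item": item, "price": None}


def run_experiment(failure_rate, requests=1000, seed=42):
    """Return the fraction of requests that got a real (non-degraded) answer."""
    rng = random.Random(seed)  # seeded so the experiment is repeatable
    dependency = FlakyDependency(fetch_price, failure_rate, rng)
    succeeded = sum(
        1
        for i in range(requests)
        if get_price_with_fallback(dependency, f"item-{i}")["price"] is not None
    )
    return succeeded / requests


if __name__ == "__main__":
    for rate in (0.0, 0.3, 1.0):
        print(f"failure_rate={rate}: success fraction {run_experiment(rate):.3f}")
```

The fixed seed and the explicit failure rate are what make the experiment controlled: the hypothesis (for example, "with 30 percent of calls failing, retries keep most requests successful") is stated up front and then checked against the measured fraction.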

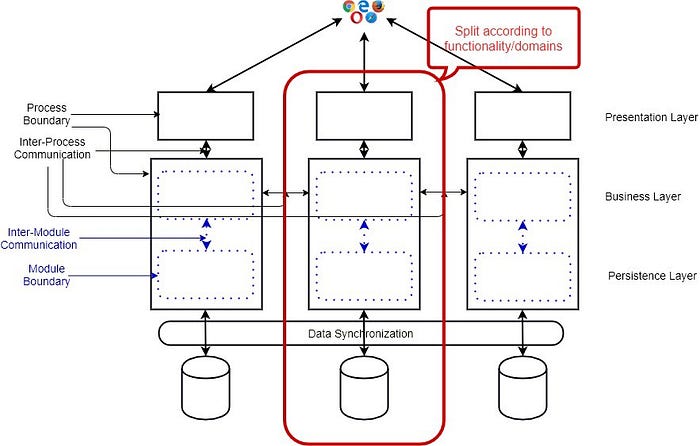

ECLASS presents the Distributed Ledger-based Infrastructure for Industrial Digital Twins

Advancing digitalization, increasing networking and horizontal integration in

the areas of purchasing, logistics and production, as well as in the

engineering, maintenance and operation of machines and products, are creating

new opportunities and business models that were unimaginable before. Classic

value chains are turning more and more into interconnected value networks in

which partners can seamlessly find and exchange the relevant information.

Machines, products and processes receive their Digital Twins, which represent

all relevant aspects of the physical world in the information world. The

combination of physical objects and their Digital Twins creates so-called

Cyber Physical Systems. Over the complete lifecycle, the relevant product

information and production data captured in the Digital Twin must be available

to the partners in the value chain at any time and in any place. The digital

representation of the real world in the information world, in the form of

Digital Twins, is therefore becoming increasingly important. However, the

desired horizontal and vertical integration and cooperation of all

participants in the value network across company boundaries, countries, and

continents can only succeed on the basis of common standards.

Data Protection Bill won’t get cleared in its current version

Pande from Omidyar Network India said stakeholders of the data privacy

regulations should consider making the concept of consent more effective and

simple. The National Institute of Public Finance and Policy (NIPFP)

administered a quiz in 2019 to test how well urban, English-speaking college

students understand privacy policies of Flipkart, Google, Paytm, Uber, and

WhatsApp. The students only scored an average of 5.3 out of 10. The privacy

policies were as complex as a Harvard Law Review paper, Pande said. Facebook’s

Claybaugh, however, said that “despite the challenges of communicating with

people about privacy, we do take pretty strong measures both in our data

policy which is interactive, in relatively easy-to-understand language

compared to, kind of, the terms of service we are used to seeing.” Lee, who

earlier worked with Singapore’s Personal Data Protection Commission, said the

challenges of a Data Protection Authority (DPA) are “manifold”. She said it must be ensured that the DPA

is “independent” and is given necessary powers especially when it must

regulate the government. The DPA must be staffed with the right people with

knowledge of technical and legal issues involved, she added.

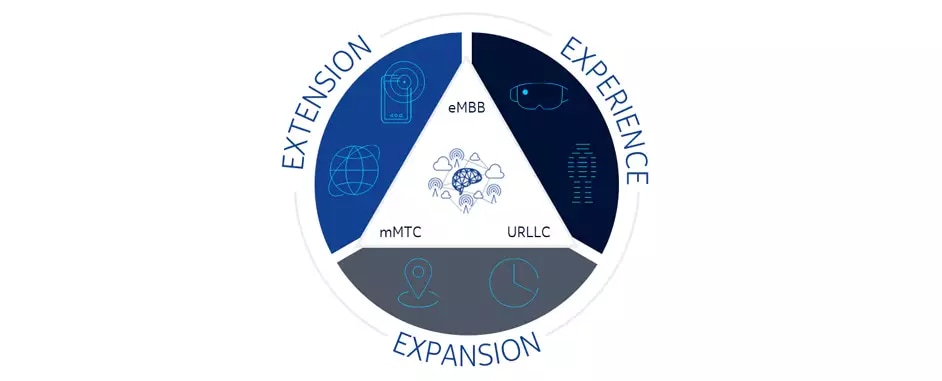

India approves game-changing framework against cyber threats

The office of National Security Advisor Ajit Doval, sources said, noted that

with the increasing use of Internet of Things (IoT) devices, the risk will

continue to increase manifold and the advent of 5G technology will further

increase the security concerns resulting from telecom networks. Maintaining

the integrity of the supply chain, including electronic components, is also

necessary for ensuring security against malware infections. Telecom is also

the critical underlying infrastructure for all other sectoral information

infrastructure of the country such as power, banking and finance, transport,

governance and the strategic sector. Security breaches resulting in compromise

of confidentiality and integrity of information or in disruption of the

infrastructure can have disastrous consequences. Sources said that in view of

these issues, the NSA office had recommended a framework -- 'National Security

Directive on Telecom Sector', which will address 5G and supply chain concerns.

Under the provisions of the directive, in order to maintain the integrity of

the supply chain security and in order to discourage insecure equipment in the

network, the government will declare a list of 'Trusted Sources/Trusted Products'

for the benefit of the Telecom Service Providers (TSPs).

The case for HPC in the enterprise

Essentially, HPC is an incredibly powerful computing infrastructure built

specifically to conduct intensive computational analysis. Examples include

physics experiments that identify and predict black holes, or modeling

genetic sequencing patterns against disease and patient profiles. In the

past year, the Amaro Lab at UC San Diego performed modeling on the COVID-19

coronavirus to an atomic level using one of the top supercomputers in the

world at the Texas Advanced Computing Center (TACC). I hosted a webinar with

folks from UCSD, TACC and Intel discussing their work. Those types of

compute-intensive workloads are still happening. However, enterprises are

also increasing their demand for compute-intensive workloads. Enterprises

are processing increasing amounts of data to better understand customers and

business operations. At the same time, edge computing is creating an

explosive number of new data sources. Due to the sheer amount of data,

enterprises are leveraging automation in the form of machine learning

and artificial intelligence to parse the data and gain insights while making

faster and more accurate business decisions. Traditional systems

architectures are simply not able to keep up with the data tsunami.

5 reasons IT should consider client virtualization

First is the ability to run different operating systems or different

versions of the same operating system. For example, many enterprise workers

are increasingly running applications that are cross-platform such as Linux

applications for developers, Android for healthcare or finance, and Windows

for productivity. Second is the potential to isolate workloads for better

security. Note that different types of virtualization models co-exist to

support the diverse needs of customers (and applications in general are

getting virtualized for better cloud and client compatibility). The focus of

this article is full client virtualization that enables businesses to take

complete advantage of the capabilities of rich commercial clients including

improved performance, security and resilience. Virtualization in the client is

different from virtualization in servers. It’s not just about CPU

virtualization, but also about creating a good end-user experience with, for

example, better graphics, responsiveness of I/O, network, optimized battery

life of mobile devices and more. A decade ago, the goal of client

virtualization was to use a virtual machine for a one-off scenario or

workload.

The top 6 use cases for a data fabric architecture

A data fabric architecture promises a way to deal with many of the security

and governance issues being raised by new privacy regulations and the rise

in security breach incidents. "By far the largest positive impact of a data

fabric for organizations is the focus on enterprise-wide data security and

governance as part of the deployment, establishing it as a fundamental,

ongoing process," said Wim Stoop, director of product marketing at Cloudera.

Data governance is often seen in isolation, tied to a use case like tackling

regulatory compliance needs or departmental requirements. With

a data fabric, organizations are required to take a step back and consider

data management holistically. This delivers the self-service access to data

and analytics businesses demand to experiment and quickly drive value from

data. Such a degree of management, governance and security of data then also

makes proving compliance -- both industry and regulatory -- more or less a

side effect of having implemented the fabric itself. Although this is not a

full solution, it greatly reduces the effort associated with adhering to

compliance requirements. Platz cautioned that there is a wide gulf between a

vision for a perfect data fabric and what is practical today. "In practice,

many first versions of data fabric architectures look more like just another

data lake," Platz said.

Malicious Browser Extensions for Social Media Infect Millions of Systems

"This could be used to gather credentials and other sensitive corporate data

from the websites visited by the victim," he says. "We are preparing a

technical blog post with more technical information and IoCs, but for now, we

can share the ... malicious domains." The malicious extensions are the latest

attempt by cybercriminals to hide code in add-ons for popular browsers. In

February, independent researcher Jamila Kaya and Duo Security announced they

had discovered more than 500 Chrome extensions that infected millions of

users' browsers to steal data. In June, Awake Security reported that more than 70

extensions in the Google Chrome Web store had been downloaded more than 32 million

times and collected browsing data and credentials for internal

websites. In its latest research, Avast found the third-party extensions

would collect information about users whenever they clicked on a link,

offering attackers the option to send users to an attacker-controlled URL

before forwarding them to their destinations. The extensions also collect the

users' birthdates, e-mail addresses, and information about the local system,

including name of the device, its operating system, and IP addresses.

How to use Agile swarming techniques to get features done

Teams that concentrate on individual skills and tasks end up with some

members far ahead and others grinding away at unfinished work. For example,

a back-end developer is still working on a feature, while the front-end

developer for that feature has finished coding. The front-end developer then

starts coding the next feature. The team can design hooks into the code to

let the front-end developers validate their work. However, a feature is not

done until a team completes the whole thing, fully integrates it and tests

it. Letting developers move asynchronously through the project might result

in good velocity metrics, but those measures don't always translate to the

team delivering the feature on time. If testers discover issues in a

delivered feature, the entire team must return to already completed tasks.

Let this scenario play out in a real software organization, and you end up

with partially completed work on many disparate tasks, and nothing finished.

The goal of Agile development is not to ensure the team is 100% busy, with

each person grabbing new product backlog items as soon as they complete

their prior task. This approach to development results in extensive

multitasking and ultimately slows the flow of completed items.
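
The effect of multitasking on the flow of completed items can be shown with a small arithmetic sketch. This is a deliberately simplified model, not something from the article: it assumes work is perfectly divisible, the team has a fixed daily capacity, and context switching costs nothing (so real multitasking would fare worse than shown). The function names and the example numbers are invented for illustration.

```python
def completion_days_swarming(work, capacity):
    """Whole team works on one feature at a time; returns the finish day of each feature."""
    days = []
    elapsed = 0.0
    for units in work:
        elapsed += units / capacity
        days.append(elapsed)
    return days


def completion_days_spread(work, capacity):
    """Team splits its daily capacity evenly across all unfinished features."""
    remaining = list(work)
    done = [None] * len(work)
    day = 0
    while any(d is None for d in done):
        day += 1
        active = [i for i, d in enumerate(done) if d is None]
        share = capacity / len(active)
        for i in active:
            remaining[i] -= share
            if remaining[i] <= 1e-9:  # tolerate floating-point residue
                done[i] = day
    return done


if __name__ == "__main__":
    work = [6, 6, 6]   # three features, six units of work each (assumed numbers)
    capacity = 3       # units of work the team completes per day (assumed)
    print("swarming:", completion_days_swarming(work, capacity))
    print("spread:  ", completion_days_spread(work, capacity))
```

In this model both approaches finish all work on the same day, but swarming delivers the first feature on day 2 and the second on day 4, while spreading the team across everything delivers nothing until day 6.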

Application Level Encryption for Software Architects

Unless well-defined, the task of application-level encryption is frequently

underestimated, poorly implemented, and results in haphazard architectural

compromises when developers find out that integrating a cryptographic library

or service is just the tip of the iceberg. Whoever is formally assigned

the job of implementing encryption-based data protection faces thousands of

pages of documentation on how to implement things better, but very little on

how to design things correctly. Design exercises turn out to be a bumpy ride

every time you don’t expect the need for design and end up making a sequence of ad-hoc

decisions because you anticipated getting things done quickly. First, you face

key model and cryptosystem choice challenges, which hide under “which

library/tool should I use for this?” Hopefully, you chose a tool that fits

your use-case security-wise, not the one with the most stars on GitHub.

Hopefully, it contains only secure and modern cryptographic decisions.

Hopefully, it will be compatible with other teams’ choices when the encryption

has to span several applications/platforms. Then you face key storage and

access challenges: where to store the encryption keys, how to separate them

from data, what are integration points where the components and data meet for

encryption/decryption, what is the trust/risk level toward these

components?

Quote for the day:

"Public opinion is no more than this: What people think that other people think." -- Alfred Austin