Quote for the day:

"Don't worry about being successful but work toward being significant and the success will naturally follow." -- Oprah Winfrey

Is it Possible to Fight AI and Win?

What’s the most important thing security teams need to figure out?

Organizations must stop talking about AI as if it were some kind of Death Star. AI is not a single, all-powerful, monolithic entity. It’s a stack of threats, behaviors, and operational surfaces, and each one has its own kill chain, controls, and business consequences. We need to break AI down into its parts and conduct a real campaign to defend ourselves. ... If AI is going to be operationalized inside your business, it should be treated like a business function: not a feature or an experiment, but a real operating capability. Seen that way, the approach becomes clearer, because businesses already know how to do this. There is always an equivalent of HR, finance, engineering, marketing, and operations, and AI has the same needs. ... Quick fixes aren’t enough in the AI era. Bad actors are innovating at machine speed, so humans must respond at machine speed, with appropriate human direction and ethical clarity. AI is a tool, and the side that uses it better will win. As if that weren’t enough, AI will force another reality that organizations need to prepare for: security and compliance will become an on-demand model. Customers will not wait for annual reports or scheduled reviews. They will click into a dashboard and see your posture in real time. Your controls, your gaps, and your response discipline will be visible when it matters, not when it is convenient.

Cybersecurity Budgets are Going Up, Pointing to a Boom

Nearly all security leaders (99%) in the 2025 KPMG Cybersecurity Survey plan to increase their cybersecurity budgets over the next two to three years, in preparation for what may be an upcoming boom in cybersecurity. More than half (54%) say the increases will fall between 6% and 10%. “The data doesn’t just point to steady growth; it signals a potential boom. We’re seeing a major market pivot where cybersecurity is now a fundamental driver of business strategy,” Michael Isensee, Cybersecurity & Tech Risk Leader, KPMG LLP, said in a release. “Leaders are moving beyond reactive defense and are actively investing to build a security posture that can withstand future shocks, especially from AI and other emerging technologies. This isn’t just about spending more; it’s about strategic investment in resilience.” ... The security leaders recognize that AI is gaining steam as a dual catalyst. On one side, 38% expect to be challenged by AI-powered attacks over the next three years, and 70% of organizations currently commit 10% of their budgets to combating such attacks. On the other, they say AI is their best weapon for proactively identifying and stopping threats in fraud prevention (57%), predictive analytics (56%), and enhanced detection (53%). But they need the talent to pull it off. As the boom takes off, 53% say they don’t have enough qualified candidates. As a result, 49% are increasing compensation and the same share are bolstering internal training, while 25% are increasingly turning to third parties such as managed security service providers (MSSPs) to fill the skills gap.

How Neuro-Symbolic AI Breaks the Limits of LLMs

While AI is transforming subjective work such as content creation and data summarization, executives rightly hesitate to use it for objective, high-stakes determinations that have clear right and wrong answers, such as contract interpretation, regulatory compliance, or logical workflow validation. But what if AI could show its reasoning and provide mathematical proof of its conclusions? That’s where neuro-symbolic AI offers a way forward. The “neuro” refers to neural networks, the technology behind today’s LLMs, which learn patterns from massive datasets. In a compliance system, for example, a neural model trained on thousands of past cases might infer that a certain policy doesn’t apply in a given scenario. Symbolic AI, on the other hand, represents knowledge through rules, constraints, and structure, and applies logic to make deductions. ... Neuro-symbolic AI introduces a structural advance in LLM training by embedding automated reasoning directly into the training loop. Automated reasoning uses formal logic and mathematical proof to mechanically verify whether a statement, program, or output used in the training data is correct. A tool such as Lean 4 is precise and deterministic, and gives provable assurance. The key advantage of automated reasoning is that it verifies each step of the reasoning process, not just the final answer.
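To illustrate what “verifying each step” looks like in practice, here is a small Lean 4 sketch. It assumes a hypothetical compliance rule: transactions above 10,000 are flagged for review. The threshold and the `flag` name are illustrative and do not come from the source. Lean checks the concrete cases by evaluation, and it accepts the general theorem only if every proof step type-checks. If either the rule or the proof were wrong, the file would fail to compile. The sketch uses only Lean 4 core and does not need Mathlib.

```lean
-- Hypothetical policy (illustrative only): an amount above 10,000 is flagged for review.
def flag (amount : Nat) : Bool := decide (amount > 10000)

-- Concrete cases, checked by evaluation.
example : flag 15000 = true := by decide
example : flag 500 = false := by decide

-- A general guarantee: raising an amount can never un-flag it.
theorem flag_monotone (a b : Nat) (hab : a ≤ b) (ha : flag a = true) :
    flag b = true := by
  -- Unfold the rule and turn `decide p = true` back into the proposition `p`.
  simp only [flag, decide_eq_true_eq] at ha ⊢
  -- Linear arithmetic over Nat closes the goal from `a ≤ b` and `a > 10000`.
  omega
```

The theorem is the kind of artifact a neural model cannot provide on its own. It holds for every possible amount, not just the cases seen in training data.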

Three things they’re not telling you about mobile app security

With the realities of “wilderness survival” in mind, effective mobile app security must be designed for specific environmental exposures. You may need some kind of jacket at your office job (a web app), but you’ll need a very different, purpose-built jacket, plus other clothing layers, tools, and safety checks, to climb Mount Everest (a mobile app). Similarly, mobile app development teams need to rigorously test their code for potential security issues and also build in multi-layered protections designed for some harsh realities. ... A proactive and comprehensive approach applies mobile application security at every stage of the software development lifecycle (SDLC). It includes the aforementioned testing during planning, design, and development, as well as those multi-layered protections to ensure application integrity after release. ... Whether it stems from overconfidence or simply kicking the can down the road, inadequate mobile app security presents an existential risk. A recent survey of developers and security professionals found that organizations experienced an average of nine mobile app security incidents over the previous year. The total cost of each incident isn’t just about downtime and raw dollars, but also “little things” like user experience, customer retention, and your reputation.
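One post-release integrity protection is a tamper check: recompute a cryptographic digest of a protected asset and compare it with a known-good value recorded at build time. The Python sketch below illustrates the idea under stated assumptions. The function names and file layout are hypothetical, and this is not how any particular mobile framework implements it. A check that lives inside the app can itself be patched out. For that reason, real mobile apps typically layer it with obfuscation and platform attestation services such as Google's Play Integrity API or Apple's App Attest.

```python
import hashlib
import hmac
from pathlib import Path


def sha256_of_file(path: Path, chunk_size: int = 65536) -> str:
    """Return the hex SHA-256 digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_integrity(path: Path, expected_hex: str) -> bool:
    """Hypothetical tamper check: compare the file's digest with a known-good value.

    hmac.compare_digest is designed to resist timing analysis, so an attacker
    cannot learn how many leading characters of the digest matched.
    """
    return hmac.compare_digest(sha256_of_file(path), expected_hex.lower())


if __name__ == "__main__":
    import tempfile

    with tempfile.TemporaryDirectory() as tmp:
        asset = Path(tmp) / "bundle.bin"  # stand-in for a protected app asset
        asset.write_bytes(b"original build")
        known_good = sha256_of_file(asset)  # in practice, recorded at build time

        print(verify_integrity(asset, known_good))  # True
        asset.write_bytes(b"patched build")  # simulate tampering
        print(verify_integrity(asset, known_good))  # False
```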

Cybersecurity in 2026: Fewer dashboards, sharper decisions, real accountability

One of the most important changes predicted for 2026 is in how organisations perceive risk. Security teams spent years concentrating on inventory: tracking vulnerabilities, chasing scores and counting assets. That model is beginning to break down. Attack-path modelling, on the other hand, is becoming far more useful and practical. These models are evolving from static diagrams into realistic environments where teams can simulate real attacks. Think of it as a cyberwar simulation in which defenders can test “what if” scenarios in real time, understand how a threat might propagate through systems and determine whether vulnerabilities actually cause harm to the organisation. This evolution is accompanied by growing disenchantment with abstract frameworks that failed to deliver concrete outcomes. The emphasis is shifting to risk-prioritized operations, where teams tackle the few problems that actually give attackers access rather than responding to clutter. Success in 2026 will be measured more by impact than by activity. ... Many companies continue to handle security incidents behind closed doors, as PR disasters. However, an alternative strategy is gaining momentum: communicate as soon as something goes wrong. Update frequently, share what you know and acknowledge your shortcomings. Publish indicators of compromise. Allow partners and clients to defend themselves. Especially in the middle of a crisis, this can feel dangerous.

AI and Latency: Why Milliseconds Decide Winners and Losers in the Data Center Race

Many traditional workloads can tolerate latency. Batch processing doesn’t care if it takes an extra second to move data. AI training, especially at hyperscale, can also be forgiving: you can load terabytes of data into a data center in Idaho and process it for days without caring whether it’s a few milliseconds slower. Inference is a different beast. Inference is where AI turns trained models into real-time answers. It’s what happens when ChatGPT finishes your sentence, when your bank’s AI flags a fraudulent transaction, or when a predictive maintenance system decides whether to shut down a turbine. ... If you think latency is just a technical metric, you’re missing the bigger picture. In AI-powered industries, shaving milliseconds off inference times directly affects conversion rates, customer retention, and operational safety. A stock trading platform with 10 ms faster AI-driven trade execution has a measurable financial advantage. A translation service that responds instantly feels more natural and wins user loyalty. A factory that catches a machine fault 200 ms earlier can prevent costly downtime. Latency isn’t a checkbox; it’s a competitive differentiator, and customers are willing to pay for it. That’s why AWS and others offer “latency-optimized” SKUs, and why every major hyperscaler is pushing inference nodes closer to urban centers.
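A back-of-the-envelope calculation shows why proximity matters. Light in optical fiber travels at roughly two-thirds of its speed in a vacuum, about 200,000 km/s, or about 5 µs per kilometer. Distance alone therefore sets a floor on round-trip time before any model even runs. The Python sketch below assumes that figure and idealized straight-line routes. Real networks add routing detours, switching, and queuing delay on top, and the distances are illustrative.

```python
# Approximate speed of light in optical fiber (refractive index ~1.47),
# expressed in kilometers per millisecond. Assumption: ~200,000 km/s.
FIBER_KM_PER_MS = 200.0


def min_round_trip_ms(distance_km: float) -> float:
    """Lower bound on network round-trip time from fiber propagation delay alone.

    Ignores routing detours, switching, queuing, and inference time,
    so real-world latency will always be higher.
    """
    return 2 * distance_km / FIBER_KM_PER_MS


if __name__ == "__main__":
    # Hypothetical distances between a user and an inference node.
    for label, km in [("same metro area", 50), ("regional", 500), ("cross-country", 3000)]:
        print(f"{label:>16}: at least {min_round_trip_ms(km):.1f} ms round trip")
```

At 3,000 km, the physical floor is already 30 ms per round trip, three times the 10 ms trading advantage mentioned above. A training job in a remote data center never notices that delay. An interactive inference request pays it on every call.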

Why developers need to sharpen their focus on documentation

“One of the bigger benefits of architectural documentation is how it functions as an onboarding resource for developers,” Kalinowski told ITPro. “It’s much easier for new joiners to grasp the system’s architecture and design principles, which means the burden’s not entirely on senior team members’ shoulders to do the training,” he added. “It also acts as a repository of institutional knowledge that preserves decision rationale, which might otherwise get lost when team members move to other projects or leave the company.” ... “Every day, developers lose time because of inefficiencies in their organization – they get bogged down in repetitive tasks and waste time navigating between different tools,” he said. “They also end up losing time trying to locate pertinent information – like that one piece of documentation that explains an architectural decision from a previous team member,” Peters added. “If software development were an F1 race, these inefficiencies are the pit stops that eat into lap time. Every unnecessary context switch or repetitive task equals more time lost when trying to reach the finish line.” ... “Documentation and deployments appear to either be not routine enough to warrant AI assistance or otherwise removed from existing workflows so that not much time is spent on it,” the company said. ... For developers of all experience levels, Stack Overflow highlighted a concerning divide in terms of documentation activities.

“One of the bigger benefits of architectural documentation is how it functions

as an onboarding resource for developers,” Kalinowski told ITPro. “It’s much

easier for new joiners to grasp the system’s architecture and design

principles, which means the burden’s not entirely on senior team members’

shoulders to do the training," he added. “It also acts as a repository of

institutional knowledge that preserves decision rationale, which might

otherwise get lost when team members move to other projects or leave the

company." ... “Every day, developers lose time because of inefficiencies in

their organization – they get bogged down in repetitive tasks and waste time

navigating between different tools,” he said. “They also end up losing time

trying to locate pertinent information – like that one piece of documentation

that explains an architectural decision from a previous team member,” Peters

added. “If software development were an F1 race, these inefficiencies are the

pit stops that eat into lap time. Every unnecessary context switch or

repetitive task equals more time lost when trying to reach the finish line.”

... “Documentation and deployments appear to either be not routine enough to

warrant AI assistance or otherwise removed from existing workflows so that not

much time is spent on it,” the company said. ... For developers of all

experience levels, Stack Overflow highlighted a concerning divide in terms of

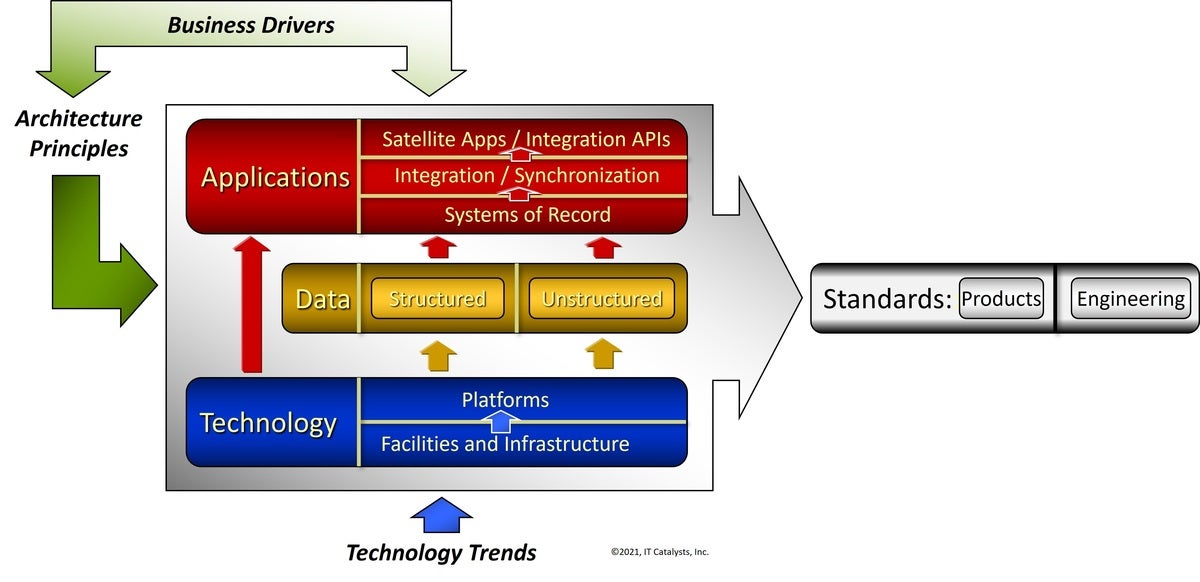

documentation activities.AI Pilots Are Easy. Business Use Cases Are Hard

Moving from pilot to purpose is where most AI journeys lose momentum. The gap often lies not in the model itself but in the ecosystem around it: fragmented data, unclear ROI frameworks and organizational silos slow down scaling. To avoid this breakdown, an AI pilot must be anchored to clear business outcomes, whether that’s cost optimization, data-led infrastructure or customer experience. Once the outcomes are defined, the organization can test the system with the specific data and processes that will support it. This focus sets the stage for the next 10 to 14 months of refinement needed to ready the tool for deeper integration. When implementation begins, workflows become self-optimizing, decisions accelerate and frontline teams gain real-time intelligence. As AI moves beyond pilots, systems begin spotting patterns before people do. Teams shift from retrospective analysis to live decision-making, and processes improve themselves through constant feedback loops. These capabilities unlock efficiency and insight across businesses, but highly regulated industries such as banking, insurance and healthcare face additional hurdles. Compliance, data privacy and explainability add layers of complexity, making it essential for AI integration to include process redesign, staff retraining and organization-wide AI literacy, not just within technical teams.

Why your next cloud bill could be a trap

“AI-ready” often means AI that is deeply embedded into your data, tools, and runtime environment. Your logs are now processed through their AI analytics. Your application telemetry routes through their AI-based observability. Your customer data is indexed for their vector search. This is convenient in the short term, but in the long term it shifts power. The more AI-native services you consume from a single hyperscaler, the more they shape your architecture and your economics. You become less likely to adopt open source models, alternative GPU clouds, or sovereign and private clouds that might be a better fit for specific workloads. You are more likely to accept rate changes, technical limits, and road maps that may not align with your interests, simply because unwinding that dependency is too painful. ... For companies not prepared to fully commit to AI-native services from a single hyperscaler, or looking for a backup option, these alternatives matter. They can host models under your control, support open ecosystems, or serve as a landing zone for workloads you might eventually relocate from a hyperscaler. However, preserving that flexibility means resisting the pull of deeply integrated, proprietary AI stacks from the start. ... The bottom line is simple: AI-native cloud is coming, and in many ways it’s already here. The question is not whether you will use AI in the cloud, but how much control you will retain over its cost, architecture, and strategic direction.
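One common way to retain that control is to have application code depend on a narrow, provider-neutral interface rather than on a specific vendor SDK. A workload can then move between a hyperscaler, an alternative GPU cloud, or a private deployment by swapping a single adapter. The Python sketch below illustrates this pattern. The `TextModel` protocol and both backend classes are hypothetical stand-ins and do not call any real cloud API.

```python
from typing import Protocol


class TextModel(Protocol):
    """Hypothetical provider-neutral interface that the application depends on."""

    def complete(self, prompt: str) -> str: ...


class HyperscalerModel:
    """Stand-in for an adapter around a hyperscaler's managed model service."""

    def complete(self, prompt: str) -> str:
        # A real adapter would call the vendor's SDK here.
        return f"[hyperscaler] {prompt}"


class SelfHostedModel:
    """Stand-in for an adapter around an open-source model you host yourself."""

    def complete(self, prompt: str) -> str:
        # A real adapter would call your own inference endpoint here.
        return f"[self-hosted] {prompt}"


def summarize_ticket(model: TextModel, ticket: str) -> str:
    """Business logic sees only the interface, never a vendor-specific client."""
    return model.complete(f"Summarize: {ticket}")


if __name__ == "__main__":
    for backend in (HyperscalerModel(), SelfHostedModel()):
        print(summarize_ticket(backend, "Login fails after password reset"))
```

The trade-off is that a lowest-common-denominator interface can hide vendor-specific features. The design choice is to accept that cost wherever relocating a workload is a realistic option.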
