Quote for the day:

"Leadership isn’t about watching people work. It’s about helping teams deliver results whether they’re in the office or working remotely." -- Gordon Tredgold


What enterprise devops teams should learn from SaaS

Enterprise DevOps teams can significantly enhance their software delivery by adopting the rigorous strategies utilized by successful SaaS providers. Unlike traditional IT projects with fixed end dates, SaaS companies treat software as a continuously evolving product, prioritizing a product-based mindset where end users are viewed as customers. This shift involves moving away from manual, reactive workflows toward automated, "Day 0" planning that integrates security, observability, and scalability directly into the initial architectural design. To minimize risks, teams should follow the "code less, test more" philosophy, leveraging advanced CI/CD pipelines, feature flagging, and synthetic test data to ensure frequent deployments remain seamless and reliable. Furthermore, shifting security left ensures that compliance and infrastructure hardening are foundational elements rather than late-stage additions. By standardizing observability through the lens of user workflows rather than simple system uptime, organizations can move from reactive troubleshooting to proactive reliability. Ultimately, the article emphasizes that treating internal development platforms as specialized SaaS products allows enterprise IT to transform from a corporate bottleneck into a powerful competitive advantage. This approach focuses on driving business value through incremental improvements, ensuring that every deployment enhances the user experience while maintaining high standards of security and operational excellence.
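The summary mentions feature flagging as one way to keep frequent deployments low-risk. As a rough illustration only, here is a minimal sketch of a percentage-based rollout check. The function names and the flag name are hypothetical and do not come from the article or from any particular feature-flag product; the sketch assumes a stable hash of the user ID is an acceptable way to pick who sees a new code path.

```python
import hashlib


def rollout_bucket(flag_name: str, user_id: str) -> int:
    """Map a (flag, user) pair to a stable bucket in the range 0..99."""
    # Hashing keeps the assignment deterministic across processes and restarts.
    digest = hashlib.sha256(f"{flag_name}:{user_id}".encode("utf-8")).hexdigest()
    return int(digest, 16) % 100


def is_enabled(flag_name: str, user_id: str, rollout_percent: int) -> bool:
    """Return True when the user falls inside the rollout percentage."""
    if rollout_percent <= 0:
        return False
    if rollout_percent >= 100:
        return True
    return rollout_bucket(flag_name, user_id) < rollout_percent
```

A call such as `is_enabled("new-checkout", "user-42", 10)` would gate a new code path to roughly a tenth of users. Because the bucket is derived from a hash rather than a random draw, a user who is enabled at 10 percent stays enabled when the percentage is raised.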

Quietly Effective Leadership for Busy DevOps Teams

The article "Quietly Effective Leadership for Busy DevOps Teams" explores a pragmatic approach to leading high-pressure technical teams by prioritizing clarity and calm over heroic intervention. It emphasizes that effective leadership begins with defining goals in plain language and strictly defending a small set of priorities to avoid team burnout. Central to this philosophy is making invisible labor visible, which prevents individual "heroics" from masking systemic inefficiencies. To maintain long-term operational stability, the author suggests using "decision notes" to document rationale and adopting trusted metrics, such as deploy frequency and change failure rates, as helpful guides rather than punitive tools. During incidents, the focus shifts to creating order through repeatable mechanics and clearly defined roles, such as the Incident Commander, to prevent panic and maintain stakeholder trust. Furthermore, the piece advocates for building cultural trust through "boring consistency" and predictable decision-making. By reserving sprint capacity for toil reduction and automating frequent, low-risk tasks, leaders can foster a sustainable environment where improvements compound significantly over time. Ultimately, the guide suggests that "quiet" leadership, characterized by supportive guardrails rather than rigid gatekeeping, empowers teams to ship faster while maintaining their mental well-being and operational sanity in an increasingly demanding DevOps landscape.
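The two metrics named above, deploy frequency and change failure rate, are simple ratios. The following is a minimal sketch of how a team might compute them from a deployment log. The `Deployment` record and its fields are assumptions made for illustration and are not taken from the article.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Deployment:
    day: date
    caused_failure: bool  # True if the change led to an incident or rollback


def deploy_frequency(deployments: list[Deployment], period_days: int) -> float:
    """Average number of deployments per day over the period."""
    if period_days <= 0:
        raise ValueError("period_days must be positive")
    return len(deployments) / period_days


def change_failure_rate(deployments: list[Deployment]) -> float:
    """Fraction of deployments that caused a failure (0.0 when there are none)."""
    if not deployments:
        return 0.0
    failures = sum(1 for d in deployments if d.caused_failure)
    return failures / len(deployments)
```

With four deployments in a window, one of which caused a rollback, `change_failure_rate` returns 0.25. In keeping with the article's point, numbers like these are probably most useful as a trend to discuss, not a target to enforce.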

Your brain for sale? The new frontier of neural data

"Your Brain for Sale: The New Frontier of Neural Data" explores the emerging landscape of consumer neurotechnology, where wearable headsets and focus-enhancing devices are increasingly harvesting electrical brain signals. Unlike medical implants, these non-invasive gadgets inhabit a rapidly expanding $55 billion market, aimed at everyday users seeking to optimize sleep or productivity. However, this technological leap has outpaced existing legal and ethical frameworks, creating a precarious "wild west" for mental privacy. The article highlights how companies often secure broad, irrevocable licenses over user data through complex terms of service, sometimes barring individuals from accessing their own neural records. Because neural data can reveal intimate cognitive patterns and emotional states that individuals may not consciously disclose, the stakes for privacy are exceptionally high. While jurisdictions like Chile and US states such as Colorado and California have begun enacting landmark protections, much of the world lacks specific regulations for brain data. As the industry attracts massive investment from tech giants, the proposed US Mind Act represents a critical attempt to bridge this regulatory gap. Ultimately, the piece warns that without robust governance, our most private inner thoughts could become the next frontier of corporate commodification, necessitating urgent global action to safeguard neural integrity.

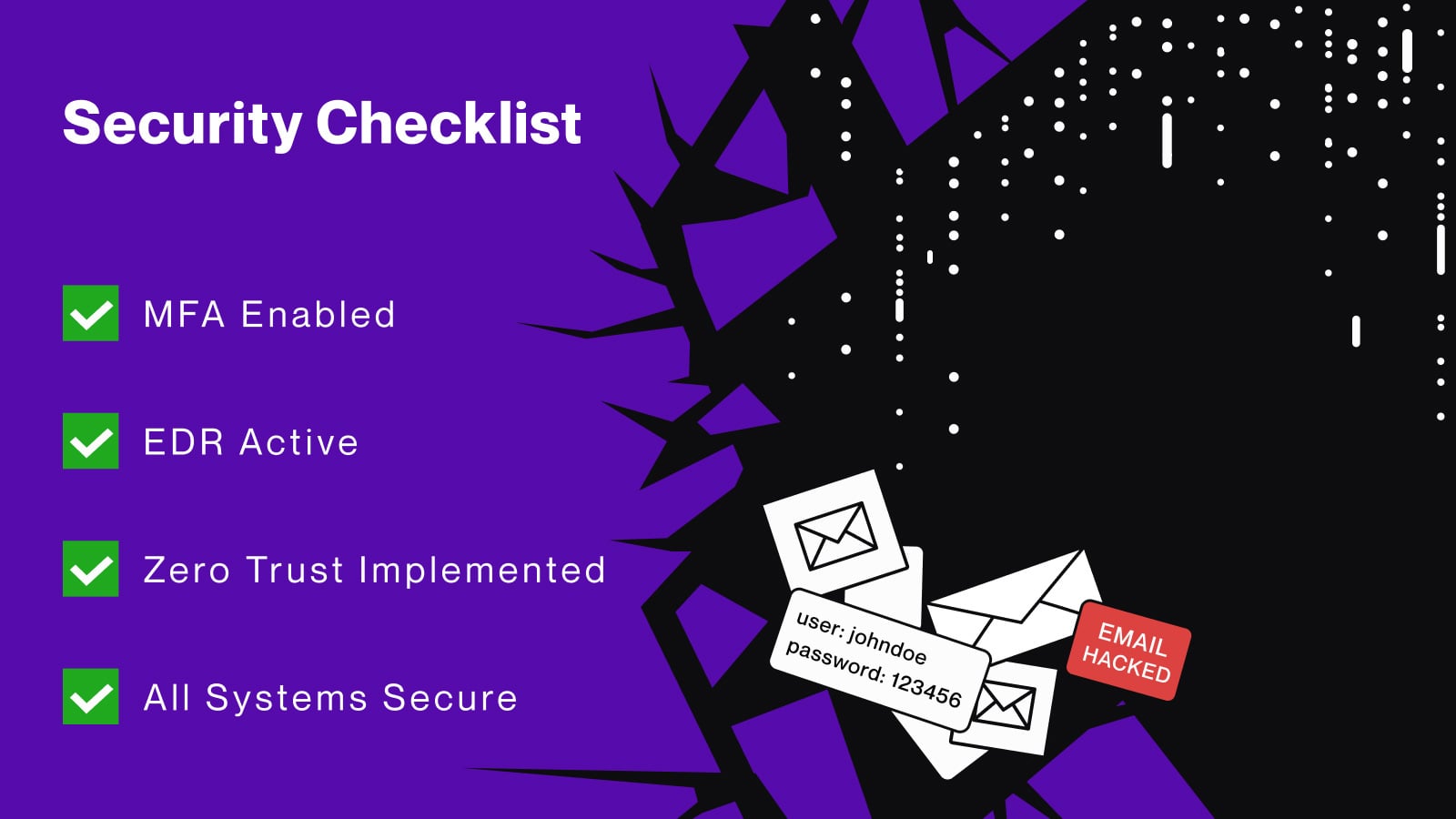

Cybercriminals move deeper into networks, hiding in edge infrastructure

The 2026 Threatscape Report from Lumen reveals a strategic shift in cybercriminal activity, with attackers increasingly targeting edge infrastructure like routers, VPN gateways, and firewalls to bypass traditional endpoint security. By lurking in these often-overlooked devices, adversaries can evade detection for months, complicating efforts to link disparate attack stages. The report highlights the massive scale of modern botnets, with Aisuru recording nearly three million IPs and emerging campaigns like Kimwolf demonstrating the ability to scale rapidly even after disruption. High-profile threats like Rhadamanthys and SystemBC exploit unpatched vulnerabilities and utilize stealthy command-and-control (C2) servers, many of which show zero detection on security platforms. Furthermore, the integration of Generative AI is accelerating the pace at which attackers assemble and retool their malware. Long-running operations such as Raptor Train exemplify the evolution of infrastructure-centric campaigns, where the network layer itself becomes the primary focus of the operation. This landscape underscores a critical need for advanced network intelligence, as defenders must identify threats closer to their origin to mitigate sophisticated, persistent campaigns. Ultimately, as cybercriminals move deeper into network blind spots, organizations must prioritize visibility across internet-exposed systems to maintain a robust and proactive security posture against these evolving global threats.

Hackers Exploit Kubernetes Misconfigurations to Move From Containers to Cloud Accounts

Recent cybersecurity findings reveal a significant 282% surge in threat operations targeting Kubernetes environments, as hackers increasingly exploit misconfigurations to escalate access from containerized applications to full cloud accounts. Malicious actors, such as the North Korean state-sponsored group Slow Pisces, utilize sophisticated tactics including service account token theft and the abuse of overly permissive access controls to pivot toward sensitive financial infrastructure. By gaining initial code execution within a container, adversaries can extract mounted JSON Web Tokens (JWTs) to authenticate with the Kubernetes API server, allowing them to list secrets, manipulate workloads, and eventually access broader cloud resources. Notable vulnerabilities like the React2Shell flaw (CVE-2025-55182) have also been weaponized to deploy backdoors and cryptominers within days of disclosure. To mitigate these risks, security experts emphasize the necessity of enforcing strict Role-Based Access Control (RBAC) policies, transitioning to short-lived projected tokens, and maintaining robust runtime monitoring. Additionally, enabling comprehensive Kubernetes audit logs remains essential for detecting early signs of API misuse or lateral movement. These proactive measures are critical for organizations seeking to secure their core cloud environments against calculated attacks that transform minor configuration oversights into devastating breaches involving substantial financial loss and operational disruption.
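To make "overly permissive access controls" a little more concrete, here is a minimal sketch of a check over a Role-shaped dictionary. It assumes the manifest has already been parsed into plain Python data with the usual `rules`, `resources`, and `verbs` keys. The function name and the three heuristics are illustrative choices, not a complete audit and not something described in the source article.

```python
def risky_rules(role: dict) -> list[str]:
    """Return human-readable findings for overly broad rules in a Role-like dict."""
    findings = []
    for index, rule in enumerate(role.get("rules", [])):
        verbs = set(rule.get("verbs", []))
        resources = set(rule.get("resources", []))
        if "*" in verbs:
            findings.append(f"rule {index}: wildcard verb")
        if "*" in resources:
            findings.append(f"rule {index}: wildcard resource")
        # Explicit read verbs on secrets are what a stolen token would use to list them.
        if "secrets" in resources and verbs & {"get", "list", "watch"}:
            findings.append(f"rule {index}: read access to secrets")
    return findings


# Hypothetical role granting a workload read access to every secret in its namespace.
example_role = {
    "kind": "Role",
    "rules": [
        {"apiGroups": [""], "resources": ["secrets"], "verbs": ["get", "list"]},
    ],
}
```

Running `risky_rules(example_role)` flags the single rule for read access to secrets. A check like this only inspects one role in isolation, so it would complement, not replace, the audit logging and runtime monitoring mentioned above.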

Resilience is a leadership decision, not a cloud feature

In the article "Resilience is a leadership decision, not a cloud feature," Vinay Chhabra argues that as India’s digital economy increasingly relies on cloud infrastructure, organizations must recognize that systemic resilience is a strategic mandate rather than a built-in technical capability. While cloud environments offer speed and scale, they also introduce architectural concentration risks where shared control layers can turn isolated disruptions into catastrophic, balance-sheet-impacting outages. Chhabra asserts that reliability cannot be outsourced, as complex internal updates and dependency conflicts often amplify failure domains. Consequently, true resilience requires deliberate leadership choices regarding diversification and containment. Boards must weigh the trade-offs between cost efficiency and operational survivability, moving beyond a mindset focused solely on quarterly optimization. Diversification is not merely about using multiple providers but about ensuring that single points of failure (such as identity layers or regions) do not cause cascading collapses across an enterprise. By treating resilience as strategic capital, leaders can implement independent recovery environments and verified failover protocols. Ultimately, the transition from being vulnerable to being robust depends on a cultural shift where executives prioritize long-term control and disciplined governance over the false comfort of centralized efficiency in an interconnected digital landscape.

Anthropic’s dispute with US government exposes deeper rifts over AI governance, risk and control

The escalating dispute between Anthropic PBC and the United States government underscores a profound rift in the governance, risk management, and control of artificial intelligence. Initially sparked by Anthropic’s refusal to permit its models for use in autonomous weaponry and mass surveillance, the conflict intensified when the Department of Defense designated the company as a “supply chain risk.” This move, compounded by a presidential order barring federal agencies from using Anthropic’s technology, is currently facing legal challenges through a preliminary injunction. The situation highlights a fundamental tension: whether private corporations should establish ethical boundaries for dual-use technologies or if the state should dictate use cases based on national security priorities. Industry analysts note that such policy shocks expose the vulnerabilities of enterprise systems deeply embedded with specific AI models, where forced transitions can lead to significant technical debt. While losing lucrative government contracts is a financial blow, experts suggest Anthropic’s firm stance on ethical restrictions might ultimately strengthen its brand reputation and long-term trust within the commercial enterprise sector. Ultimately, this rift illustrates that AI is no longer merely a productivity tool but a strategic asset requiring new, complex governance frameworks that balance corporate responsibility, state interests, and global societal impacts.

The rise of proactive cyber: Why defense is no longer enough

The cybersecurity landscape is undergoing a fundamental shift from a reactive model to a proactive, "active defense" strategy as traditional methods fail to keep pace with increasingly sophisticated threats. For decades, organizations focused on detecting intrusions and patching vulnerabilities, but the rapid acceleration of cyberattacks, where the time between initial access and secondary handoffs has collapsed from hours to mere seconds, has rendered this approach insufficient. Driven by government strategy and industry leaders like Google and Microsoft, this proactive movement seeks to disrupt adversaries "upstream" before they penetrate target networks. Rather than engaging in illegal "hacking back," these measures utilize legal authorities, civil litigation, and technical capabilities to dismantle attacker infrastructure and shift the economic balance against threat actors. While the private sector is central to these efforts due to its control over digital infrastructure, the strategy faces significant hurdles, including jurisdictional complexities and the concentration of capability among tech giants. For the average security leader, the rise of proactive cyber does not replace the need for fundamental hygiene; instead, it requires CISOs to foster operational readiness and participate in collaborative threat intelligence sharing. By degrading adversary capabilities before they reach the "castle walls," proactive cyber aims to buy critical time and enhance global resilience.

Delegating Decisions in Security Operations

The blog post "Delegating Decisions in Security Operations" explores the critical challenges and strategies involved in modern cybersecurity management, particularly focusing on the balance between human expertise and automated systems. As cyber threats grow in complexity and volume, Security Operations Centers (SOCs) are increasingly forced to delegate high-stakes decision-making to sophisticated software and artificial intelligence. This shift is necessary because the sheer velocity of incoming alerts often exceeds human cognitive limits. However, the author emphasizes that delegation is not merely about offloading tasks but requires a fundamental restructuring of trust and accountability within the organization. Effective delegation necessitates that automated tools are transparent and explainable, allowing human operators to intervene or refine logic when anomalies arise. Furthermore, the post highlights the importance of "human-in-the-loop" architectures, where automation handles repetitive, low-level data processing while human analysts focus on strategic threat hunting and nuanced risk assessment. Ultimately, the article argues that successful security operations depend on a symbiotic relationship where technology augments human intuition rather than replacing it. By establishing clear protocols for how and when decisions are delegated, organizations can improve their resilience against evolving digital threats while maintaining the essential oversight required for complex security environments.
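One way to picture "clear protocols for how and when decisions are delegated" is a routing rule that returns its reasoning alongside each decision, so an analyst can see why automation acted. The sketch below is purely illustrative: the `Alert` fields, the `Route` values, and the 0.1 and 0.9 thresholds are assumptions, not details from the blog post.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO_CLOSE = "auto_close"
    AUTO_CONTAIN = "auto_contain"
    HUMAN_REVIEW = "human_review"


@dataclass(frozen=True)
class Alert:
    name: str
    confidence: float     # model confidence that the alert is malicious, 0.0..1.0
    asset_critical: bool  # True for systems where mistakes are costly


def route_alert(alert: Alert, low: float = 0.1, high: float = 0.9) -> tuple[Route, str]:
    """Decide who handles the alert and return the reason alongside the decision."""
    if alert.asset_critical:
        return Route.HUMAN_REVIEW, "critical asset: always reviewed by an analyst"
    if alert.confidence >= high:
        return Route.AUTO_CONTAIN, f"confidence {alert.confidence:.2f} >= {high}"
    if alert.confidence <= low:
        return Route.AUTO_CLOSE, f"confidence {alert.confidence:.2f} <= {low}"
    return Route.HUMAN_REVIEW, "ambiguous confidence: needs analyst judgment"
```

In this arrangement automation only acts at the confident extremes, and anything touching a critical asset goes to a person regardless of score. Returning the reason string is a small nod to the explainability the post asks for.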

7 reasons IT always gets the blame — and how IT leaders can change that

The article "7 reasons IT always gets the blame — and how IT leaders can change that" explores why technology departments often serve as organizational scapegoats and provides actionable strategies for CIOs to reshape this perception. IT frequently faces criticism due to poor communication and a siloed "outsider" status, where technical jargon alienates non-experts. Additional causes include mismatched goals regarding ROI, chronic underinvestment in change management, and vague ownership boundaries as technology permeates every business function. Leadership often focuses on visible symptoms like outages rather than underlying root causes, while the legacy view of IT as a mere cost center further erodes trust. To counter these challenges, IT leaders must transition from reactive support roles to proactive business partners. This shift requires sharpening communication by translating technical risks into business language and ensuring transparency before crises occur. By aligning technological initiatives with long-term enterprise strategies, documenting trade-offs, and reporting on outcomes rather than just incidents, CIOs can build credibility. Ultimately, fostering a post-mortem culture that prioritizes process improvement over finger-pointing allows IT to move beyond its role as a convenient target, establishing itself as a strategic driver of organizational resilience and sustained business growth.
