Quote for the day:

"Success… seems to be connected with action. Successful people keep moving. They make mistakes, but they don’t quit." -- Conrad Hilton


The New Geography of Risk: Why Businesses Need a Real-Time Country Risk Dashboard

The Risk Awareness article describes a shift in the corporate landscape: geopolitical risk has moved from a peripheral strategic concern to a daily operational variable. The business environment is increasingly shaped by fast-moving disruptions such as tariffs, export controls, sanctions, and vulnerable maritime corridors, as recent supply chain shocks like the Red Sea shipping disruptions and the global semiconductor crisis have shown. Because reactive crisis management leaves organizations exposed, forward-thinking businesses are turning to continuous, real-time internal "country risk dashboards." Unlike traditional risk frameworks that look only at sovereign stability and macroeconomic indicators, these dashboards also track trade restrictions, shifting technology ecosystem policies, maritime dependencies, hidden vendor concentration within procurement networks, and currency volatility. This reflects a broader corporate transition from optimizing purely for cost efficiency to designing for long-term operational resilience through strategies like friend-shoring and regional diversification. Since predictive certainty is unrealistic, the article argues that competitive advantage will belong to organizations that build internal geopolitical literacy and translate political developments into actionable signals for procurement, logistics, and treasury faster than their peers.

Beyond Unit Tests: Using AI to Find Secret Failures in Distributed Systems

Inside a Crypto Drainer: How to Spot it Before it Empties Your Wallet

The BleepingComputer article details the increasing professionalization of cryptocurrency theft through Drainer-as-a-Service (DaaS) platforms. Drawing on Flare researchers' data on the Lucifer DaaS platform between January 2025 and early 2026, the report shows how these ecosystems mimic legitimate SaaS businesses. DaaS operators manage transaction logic, wallet interactions, and software updates while taking a twenty percent commission on successful thefts; recruited affiliates use social engineering to drive phishing traffic toward malicious websites. Rather than compromising devices, drainers exploit user confusion about Web3 permissions and approvals, abusing authorization mechanisms like Permit and Permit2 to siphon digital assets within seconds. Lucifer lowered technical barriers for its affiliates with automated utilities such as website cloning and Zero Config deployment workflows. The group also showed resilience against takedowns by moving suspended documentation onto the decentralized InterPlanetary File System (IPFS). Because these interactions deliberately mimic routine crypto operations, spotting a drainer requires user vigilance. Key warning signs include sites demanding immediate wallet connections, requests for unlimited token approvals, unexpected off-chain signature prompts, and artificial urgency. The article concludes that proactive monitoring of these underground networks lets security teams detect threat indicators before fraud reaches users.
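
One of the warning signs above, a request for an unlimited token approval, can be checked mechanically. The following is a minimal sketch rather than a method described in the article: it assumes the wallet prompt carries raw ERC-20 `approve(address,uint256)` calldata, and the helper name `inspect_approval` is hypothetical. It treats only the maximum `uint256` value as "unlimited", and it does not cover off-chain Permit or Permit2 signatures, which need separate handling.

```python
from typing import Optional

# First 4 bytes of keccak256("approve(address,uint256)"), the standard ERC-20 selector.
APPROVE_SELECTOR = "095ea7b3"
# Assumption: "unlimited" means the maximum uint256 value.
MAX_UINT256 = 2**256 - 1


def inspect_approval(calldata_hex: str) -> Optional[dict]:
    """Decode ERC-20 approve() calldata; return None if it is not an approve call."""
    data = calldata_hex.lower()
    if data.startswith("0x"):
        data = data[2:]
    # Selector (8 hex chars) + two 32-byte ABI words (64 hex chars each).
    if len(data) != 8 + 64 + 64 or data[:8] != APPROVE_SELECTOR:
        return None
    spender = "0x" + data[8 + 24:8 + 64]   # last 20 bytes of the first word
    amount = int(data[72:136], 16)         # second word is the allowance
    return {"spender": spender, "amount": amount, "unlimited": amount == MAX_UINT256}


if __name__ == "__main__":
    # Illustrative calldata: placeholder spender address, maximum allowance.
    example = "0x095ea7b3" + "00" * 12 + "ab" * 20 + "f" * 64
    print(inspect_approval(example))
```

A real check would also want to flag very large but not maximal allowances and unfamiliar spender addresses; both are left out here to keep the sketch short.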

Throughput vs Goodput: The Performance Metric You Are Probably Ignoring in LLM Testing
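
To make the two metrics in this item concrete, here is a minimal sketch of how they might be computed from per-request measurements. It is an illustration under stated assumptions, not the DZone article's code or the way any particular tool computes goodput: the `RequestResult` record, the function name, and the SLO thresholds are all hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable, Tuple


@dataclass
class RequestResult:
    ok: bool        # request completed successfully (for example, HTTP 200)
    ttft_ms: float  # Time to First Token, in milliseconds
    itl_ms: float   # mean Inter-Token Latency, in milliseconds


def throughput_and_goodput(
    results: Iterable[RequestResult],
    window_s: float,
    ttft_slo_ms: float,
    itl_slo_ms: float,
) -> Tuple[float, float]:
    """Return (throughput, goodput) in requests per second over the window."""
    if window_s <= 0:
        raise ValueError("window_s must be positive")
    completed = [r for r in results if r.ok]
    # Goodput counts only completed requests that also met every SLO.
    good = [
        r for r in completed
        if r.ttft_ms <= ttft_slo_ms and r.itl_ms <= itl_slo_ms
    ]
    return len(completed) / window_s, len(good) / window_s


if __name__ == "__main__":
    # Made-up measurements for illustration only.
    sample = [
        RequestResult(True, 180.0, 20.0),   # meets both SLOs
        RequestResult(True, 950.0, 25.0),   # slow first token
        RequestResult(True, 210.0, 90.0),   # slow token streaming
        RequestResult(False, 0.0, 0.0),     # failed request
    ]
    tp, gp = throughput_and_goodput(sample, window_s=2.0, ttft_slo_ms=500.0, itl_slo_ms=50.0)
    print(f"throughput={tp} req/s, goodput={gp} req/s")
```

In this made-up sample three requests complete but only one meets both thresholds, so throughput is 1.5 requests per second while goodput is 0.5.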

The DZone article contrasts throughput and goodput as performance metrics in the context of Large Language Model (LLM) testing. Throughput measures raw operational volume by tracking total request completions or transactions per second, but it overlooks latency and user experience quality. For instance, an LLM server might maintain stable, high throughput by returning HTTP 200 responses even as token processing time severely degrades. To address this blind spot, goodput incorporates Service Level Objectives (SLOs), counting only the requests that finish within acceptable thresholds for metrics like Time to First Token and Inter-Token Latency. As concurrent user load increases and saturates GPU resources, goodput diverges downward from throughput, serving as an early warning of performance deterioration. Featured in tools like NVIDIA’s AIPerf, goodput helps validate the production readiness of endpoints and map where systems begin to break under stress. The article advises reporting both metrics together: throughput shows whether an infrastructure configuration can handle the overall volume, while goodput shows whether the system is serving users effectively without silently breaching response-time thresholds.

AI at scale: What engineering teams are confronting

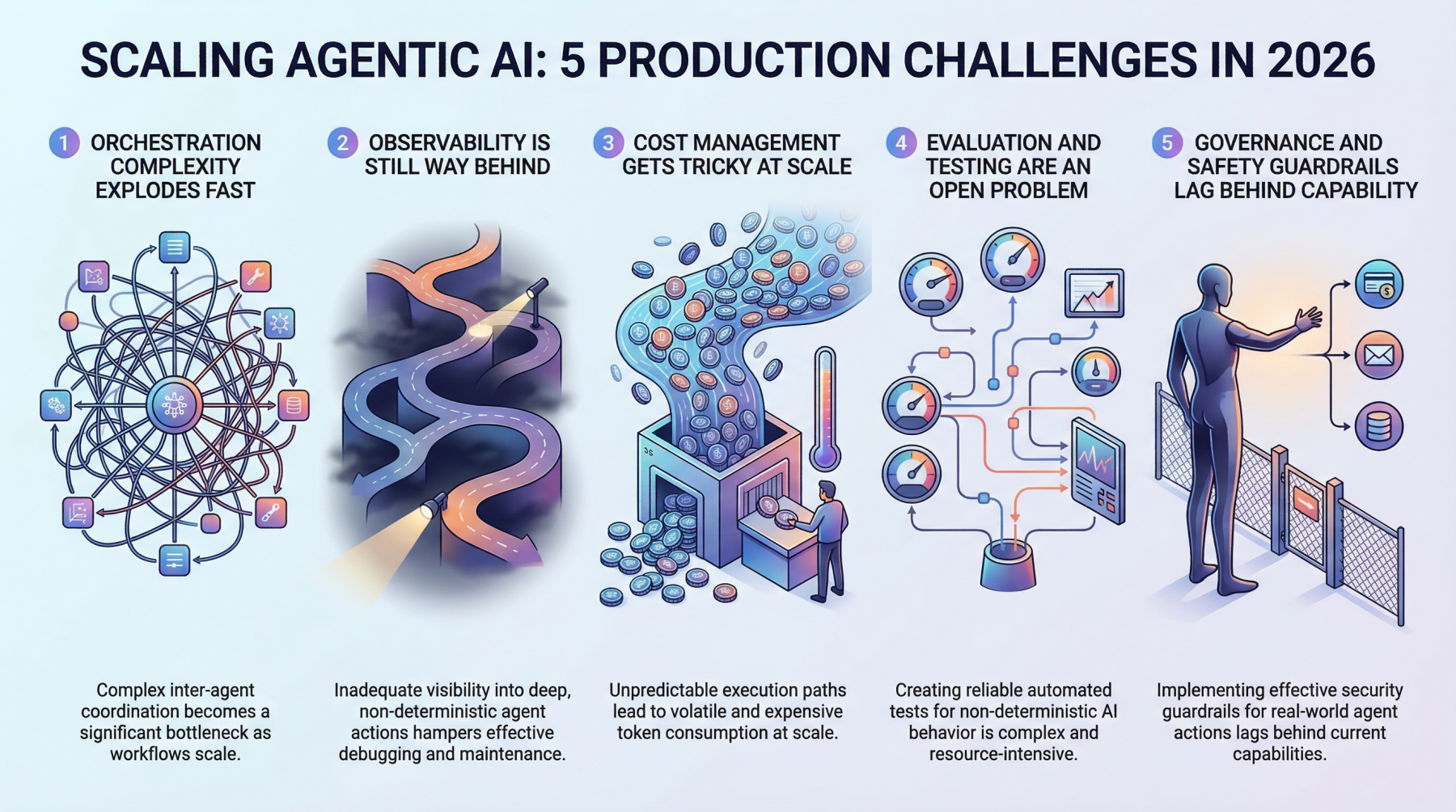

The InfoWorld article explores the shift enterprise engineering teams face when moving AI from exploratory experimentation to operational deployment at scale. While early enterprise discussions focused on model size and automated pilots, production demands secure, observable, and operationally durable environments. Recent research finds that although nearly seventy-five percent of organizations run production GPU workloads and invest heavily in agentic AI designed to execute tasks, severe infrastructure mismatches remain. Most cloud estates were built for application deployment rather than the governed, reproducible pipelines that execution-level AI requires; notably, most firms must migrate over a quarter of their data to adapt. This disconnect exposes governance gaps, especially when processing personally identifiable data under strict regulatory frameworks. Managing dozens of cloud accounts across multiple vendors, with diverse tools like Terraform and CloudFormation, compounds the complexity and makes uniform policy enforcement across teams difficult. Rather than treating adoption as a simple build-versus-buy decision, successful organizations prioritize sustainable architectural fit. They avoid isolated silos by embedding external delivery expertise directly into core networks and testing workloads against production-grade standards from day one. Ultimately, scaling success is determined not by algorithmic novelty but by the deliberate, AI-native design of the underlying cloud platform.

Why Enterprise Technology Is Becoming More About Stability Than Speed

The article explores a shift in enterprise technology: businesses are moving their focus from pure digital acceleration and speed toward operational stability, coordination, and resilience. For years, digital transformations prioritized rapid deployment, which inadvertently produced fragmented, layered digital environments burdened by overlapping software systems and constant employee notifications. Drawing on reports from PwC, McKinsey, and Deloitte, the article underscores that unchecked technical complexity reduces business visibility and slows operational coordination. The expansion of artificial intelligence does not automatically resolve organizational fragmentation; it often amplifies existing systemic weaknesses unless integrated into well-structured, cohesive workflows. Consequently, technology strategies are prioritizing invisible operational infrastructure, secure workflows, and foundational simplicity over superficial disruption. Enterprise cybersecurity is similarly evolving from an isolated IT defense mechanism into a foundational business driver supporting continuity and customer trust. As enterprise tools become more complex and automated, human judgment remains indispensable for interpreting context, guiding strategy, and navigating uncertainty. Ultimately, the next era of enterprise technology will value the ability to sustain reliable, unified, and stable operations within interconnected environments far above the urge to keep moving fast.

Deloitte survey: Gen Z and millennials are forcing HR to rethink leadership

The Deloitte Global 2026 Gen Z and Millennial Survey, which polled over 22,500 participants across 44 countries, reveals that younger professionals are reshaping traditional corporate frameworks. While they remain ambitious, they prioritize flexibility, psychological safety, and sustainable long-term progress over aggressive ladder-climbing. Only 6 percent identify becoming a corporate leader as their top professional goal, primarily because management roles are overwhelmingly associated with stress, burnout, and a compromised work-life balance. Beyond leadership structures, persistent financial anxieties, specifically the cost of living and housing affordability, are directly shaping where these employees choose to work and live. An "AI readiness gap" has also emerged: although nearly three-quarters of respondents use AI tools daily, one-third believe their employers are unprepared to manage this technological shift. While corporate recognition of mental health has marginally improved, digital fatigue and workload pressures continue to cause widespread exhaustion. Retention increasingly hinges on shared organizational values and workplace community, with roughly 40 percent of younger workers rejecting assignments that conflict with their personal ethics. HR departments must therefore shift from rigid enforcement toward dynamic, human-centered systems focused on genuine well-being, organizational trust, and workflow redesign.

Protecting Sensitive Training Data in the Age of AI

The CPO Magazine article highlights the re-emergence of modern tape technology as a cost-effective way to store and protect the massive volumes of data required to train large language models. As AI integration expands, organizations collect unprecedented amounts of raw information, leading to soaring cloud storage expenses and heightened cybersecurity threats. Unlike costly flash drives or traditional hard disk media, modern Linear Tape-Open (LTO) solutions offer an affordable way to house cold data lakes, streaming at continuous high throughput without performance bottlenecks or supply chain pressures. Beyond the financial advantages, tape storage serves as a cybersecurity asset. Because it is a physical, air-gapped medium, it provides an isolated offline repository that safeguards proprietary training data sets from remote cybercriminals. According to the article, this architecture mitigates traditional cloud platform vulnerabilities and thwarts data poisoning attacks designed to inject biased details, manipulate algorithms, or degrade model accuracy. Tape technology also incorporates Write-Once, Read-Many (WORM) functionality that ensures immutable, tamper-proof historical records, helping businesses satisfy strict compliance and evolving regulatory mandates. Ultimately, using tape alongside cloud frameworks in hybrid storage deployments enables enterprises to responsibly scale and secure their AI infrastructure.

20 Leadership Strategies For Continuous Learning And Skill Development

The Forbes Human Resources Council article outlines twenty strategies for leaders committed to continuous learning and skill development. The contributors emphasize that effective leadership is an ongoing journey requiring an open, curious mindset rather than a rigid posture of absolute expertise. Key tactics include building daily habits rooted in curiosity, seeking diverse perspectives, and integrating real-time self-reflection into everyday operational decisions. Rather than treating professional training as an isolated retreat, successful executives hardwire learning into their daily organizational rhythms through feedback loops, comprehensive reviews, and a personal board of directors that helps uncover blind spots. The panel also highlights modern development channels, such as two-way reverse mentoring with next-generation talent, personalized AI-powered coaching tools, and challenging stretch assignments outside their comfort zones. Sustainable growth also involves intentionally developing others, so that knowledge sharing, substantial educational assistance budgets, and collaborative operational reviews build a future-ready talent pipeline. By staying close to day-to-day operations and carefully analyzing failures, leaders can remain nimble, context-aware, and well equipped to navigate a rapidly changing business environment.
